```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",866668415,"Columns named ""link"" display in bold",

https://github.com/simonw/datasette/issues/1308#issuecomment-826040909,https://api.github.com/repos/simonw/datasette/issues/1308,826040909,MDEyOklzc3VlQ29tbWVudDgyNjA0MDkwOQ==,9599,simonw,2021-04-24T06:01:21Z,2021-04-24T06:01:21Z,OWNER,"Demo:

```

echo '{""link"": ""https://example.com/""}' | sqlite-utils insert link.db link -

```

","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",866668415,"Columns named ""link"" display in bold",

https://github.com/simonw/datasette/issues/1308#issuecomment-826040676,https://api.github.com/repos/simonw/datasette/issues/1308,826040676,MDEyOklzc3VlQ29tbWVudDgyNjA0MDY3Ng==,9599,simonw,2021-04-24T05:58:35Z,2021-04-24T05:58:35Z,OWNER,Here's why: https://github.com/simonw/datasette/blob/6ed9238178a56da5fb019f37fb1e1e15886be1d1/datasette/static/app.css#L435-L437,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",866668415,"Columns named ""link"" display in bold",

https://github.com/simonw/datasette/issues/1295#issuecomment-817301355,https://api.github.com/repos/simonw/datasette/issues/1295,817301355,MDEyOklzc3VlQ29tbWVudDgxNzMwMTM1NQ==,9599,simonw,2021-04-11T12:40:25Z,2021-04-11T12:41:06Z,OWNER,"I could have a page about error codes in the docs, then have `https://datasette.io/E123` style URLs for each error code, which are shown when that error occurs and redirect to the corresponding documentation section.

Can enforce these with a documentation unit test.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",855296937,Errors should have links to further information,

https://github.com/simonw/datasette/issues/1293#issuecomment-813438771,https://api.github.com/repos/simonw/datasette/issues/1293,813438771,MDEyOklzc3VlQ29tbWVudDgxMzQzODc3MQ==,9599,simonw,2021-04-05T14:58:48Z,2021-04-05T14:58:48Z,OWNER,I may need to do something special for rowid columns - there is a `Rowid` opcode that might come into play here.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813480043,https://api.github.com/repos/simonw/datasette/issues/1293,813480043,MDEyOklzc3VlQ29tbWVudDgxMzQ4MDA0Mw==,9599,simonw,2021-04-05T16:16:17Z,2021-04-05T16:16:17Z,OWNER,"https://latest.datasette.io/fixtures?sql=explain+select+*+from+paginated_view will be an interesting test query - because `paginated_view` is defined like this:

```sql

CREATE VIEW paginated_view AS

SELECT

content,

'- ' || content || ' -' AS content_extra

FROM no_primary_key;

```

So this will help test that the mechanism isn't confused by output columns that are created through a concatenation expression.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813445512,https://api.github.com/repos/simonw/datasette/issues/1293,813445512,MDEyOklzc3VlQ29tbWVudDgxMzQ0NTUxMg==,9599,simonw,2021-04-05T15:11:40Z,2021-04-05T15:11:40Z,OWNER,"Here's some older example code that works with opcodes from Python, in this case to output indexes used by a query: https://github.com/plasticityai/supersqlite/blob/master/supersqlite/idxchk.py","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813134637,https://api.github.com/repos/simonw/datasette/issues/1293,813134637,MDEyOklzc3VlQ29tbWVudDgxMzEzNDYzNw==,9599,simonw,2021-04-05T01:21:59Z,2021-04-05T01:21:59Z,OWNER,"http://www.sqlite.org/draft/lang_explain.html says:

> Applications should not use EXPLAIN or EXPLAIN QUERY PLAN since their exact behavior is variable and only partially documented.

I'm going to keep exploring this though.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813134386,https://api.github.com/repos/simonw/datasette/issues/1293,813134386,MDEyOklzc3VlQ29tbWVudDgxMzEzNDM4Ng==,9599,simonw,2021-04-05T01:20:28Z,2021-08-13T00:42:30Z,OWNER,"... that output might also provide a better way to extract variables than the current mechanism using a regular expression, by looking for the `Variable` opcodes.

[UPDATE: it did indeed do that, see #1421]","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

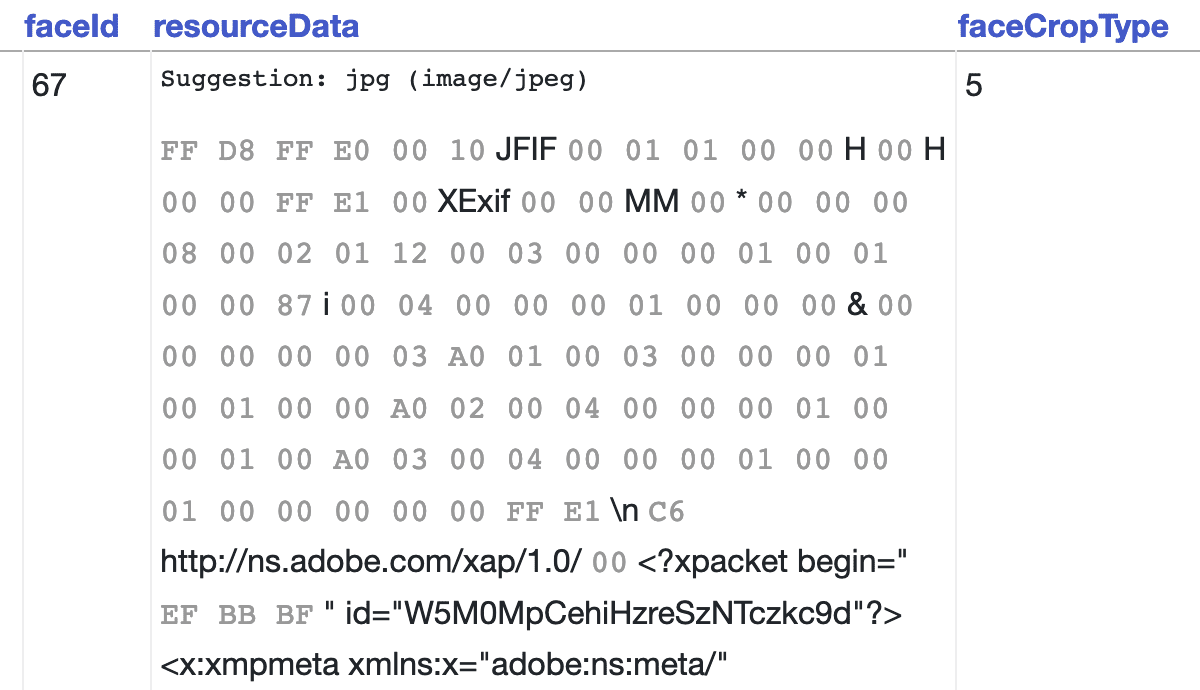

https://github.com/simonw/datasette/issues/1293#issuecomment-813134227,https://api.github.com/repos/simonw/datasette/issues/1293,813134227,MDEyOklzc3VlQ29tbWVudDgxMzEzNDIyNw==,9599,simonw,2021-04-05T01:19:31Z,2021-04-05T01:19:31Z,OWNER,"| addr | opcode | p1 | p2 | p3 | p4 | p5 | comment |

|--------|---------------|------|------|------|-----------------------|------|-----------|

| 0 | Init | 0 | 47 | 0 | | 00 | |

| 1 | OpenRead | 0 | 51 | 0 | 15 | 00 | |

| 2 | Integer | 15 | 2 | 0 | | 00 | |

| 3 | Once | 0 | 15 | 0 | | 00 | |

| 4 | OpenEphemeral | 2 | 1 | 0 | k(1,) | 00 | |

| 5 | VOpen | 1 | 0 | 0 | vtab:3E692C362158 | 00 | |

| 6 | String8 | 0 | 5 | 0 | CPAD_2020a_SuperUnits | 00 | |

| 7 | SCopy | 7 | 6 | 0 | | 00 | |

| 8 | Integer | 2 | 3 | 0 | | 00 | |

| 9 | Integer | 2 | 4 | 0 | | 00 | |

| 10 | VFilter | 1 | 15 | 3 | | 00 | |

| 11 | Rowid | 1 | 8 | 0 | | 00 | |

| 12 | MakeRecord | 8 | 1 | 9 | C | 00 | |

| 13 | IdxInsert | 2 | 9 | 8 | 1 | 00 | |

| 14 | VNext | 1 | 11 | 0 | | 00 | |

| 15 | Return | 2 | 0 | 0 | | 00 | |

| 16 | Rewind | 2 | 46 | 0 | | 00 | |

| 17 | Column | 2 | 0 | 1 | | 00 | |

| 18 | IsNull | 1 | 45 | 0 | | 00 | |

| 19 | SeekRowid | 0 | 45 | 1 | | 00 | |

| 20 | Column | 0 | 2 | 11 | | 00 | |

| 21 | Function0 | 1 | 10 | 9 | like(2) | 02 | |

| 22 | IfNot | 9 | 45 | 1 | | 00 | |

| 23 | Column | 0 | 14 | 13 | | 00 | |

| 24 | Function0 | 1 | 12 | 9 | intersects(2) | 02 | |

| 25 | Ne | 14 | 45 | 9 | | 51 | |

| 26 | Column | 0 | 14 | 9 | | 00 | |

| 27 | Function0 | 0 | 9 | 15 | asgeojson(1) | 01 | |

| 28 | Rowid | 0 | 16 | 0 | | 00 | |

| 29 | Column | 0 | 1 | 17 | | 00 | |

| 30 | Column | 0 | 2 | 18 | | 00 | |

| 31 | Column | 0 | 3 | 19 | | 00 | |

| 32 | Column | 0 | 4 | 20 | | 00 | |

| 33 | Column | 0 | 5 | 21 | | 00 | |

| 34 | Column | 0 | 6 | 22 | | 00 | |

| 35 | Column | 0 | 7 | 23 | | 00 | |

| 36 | Column | 0 | 8 | 24 | | 00 | |

| 37 | Column | 0 | 9 | 25 | | 00 | |

| 38 | Column | 0 | 10 | 26 | | 00 | |

| 39 | Column | 0 | 11 | 27 | | 00 | |

| 40 | RealAffinity | 27 | 0 | 0 | | 00 | |

| 41 | Column | 0 | 12 | 28 | | 00 | |

| 42 | Column | 0 | 13 | 29 | | 00 | |

| 43 | Column | 0 | 14 | 30 | | 00 | |

| 44 | ResultRow | 15 | 16 | 0 | | 00 | |

| 45 | Next | 2 | 17 | 0 | | 00 | |

| 46 | Halt | 0 | 0 | 0 | | 00 | |

| 47 | Transaction | 0 | 0 | 265 | 0 | 01 | |

| 48 | Variable | 1 | 31 | 0 | :freedraw | 00 | |

| 49 | Function0 | 1 | 31 | 7 | geomfromgeojson(1) | 01 | |

| 50 | String8 | 0 | 10 | 0 | %mini% | 00 | |

| 51 | Variable | 1 | 32 | 0 | :freedraw | 00 | |

| 52 | Function0 | 1 | 32 | 12 | geomfromgeojson(1) | 01 | |

| 53 | Integer | 1 | 14 | 0 | | 00 | |

| 54 | Goto | 0 | 1 | 0 | | 00 | |

Essential documentation for understanding that output: https://www.sqlite.org/opcode.html","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813134072,https://api.github.com/repos/simonw/datasette/issues/1293,813134072,MDEyOklzc3VlQ29tbWVudDgxMzEzNDA3Mg==,9599,simonw,2021-04-05T01:18:37Z,2021-04-05T01:18:37Z,OWNER,"Had a fantastic suggestion on the SQLite forum: it might be possible to get what I want by interpreting the opcodes output by `explain select ...`.

Copying the reply I posted to this thread:

That's really useful, thanks! It looks like it _might_ be possible for me to reconstruct where each column came from using the `explain select` output.

Here's a complex example:

It looks like the opcodes I need to inspect are `OpenRead`, `Column` and `ResultRow`.

`OpenRead` tells me which tables are being opened - the `p2` value (in this case 51) corresponds to the `rootpage` column in `sqlite_master`, and the opened table gets assigned to the cursor number in `p1`.

The `Column` opcodes tell me which columns are being read - `p1` is that table reference, and `p2` is the `cid` of the column within that table.

The `ResultRow` opcode then tells me which registers are used in the results. `15 16` means start at register 15 and read 16 registers (15 through 30).
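
A minimal sketch of reading those three opcodes from Python, assuming a hypothetical in-memory `dogs` table (not part of the fixtures database) and a deliberately simple query. The variable names are illustrative, and `explain` output is only partially documented, so it may differ between SQLite versions:

```python
import sqlite3

conn = sqlite3.connect(':memory:')
conn.row_factory = sqlite3.Row  # lets us read the explain columns by name
# Hypothetical table, used only for this illustration
conn.execute('create table dogs (id integer primary key, name text, age integer)')

rows = conn.execute('explain select name, age from dogs').fetchall()

# OpenRead: p1 is the cursor number, p2 is the root page of the opened table
rootpage_by_cursor = {r['p1']: r['p2'] for r in rows if r['opcode'] == 'OpenRead'}
name_by_rootpage = {
    r['rootpage']: r['name']
    for r in conn.execute('select rootpage, name from sqlite_master')
}
tables = [name_by_rootpage[rootpage] for rootpage in rootpage_by_cursor.values()]

# Column: p1 is the cursor, p2 is the column cid, p3 is the destination register
column_cids = [r['p2'] for r in rows if r['opcode'] == 'Column']

# ResultRow: registers p1 through p1 + p2 - 1 hold one row of output
result_row = next(r for r in rows if r['opcode'] == 'ResultRow')
output_registers = list(range(result_row['p1'], result_row['p1'] + result_row['p2']))

print(tables, column_cids, output_registers)
```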

I think this might work!","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813116177,https://api.github.com/repos/simonw/datasette/issues/1293,813116177,MDEyOklzc3VlQ29tbWVudDgxMzExNjE3Nw==,9599,simonw,2021-04-04T23:31:00Z,2021-04-04T23:31:00Z,OWNER,"Sadly it doesn't do what I need. This query should only return one column, but instead I get back every column that was consulted by the query:

","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813115607,https://api.github.com/repos/simonw/datasette/issues/1293,813115607,MDEyOklzc3VlQ29tbWVudDgxMzExNTYwNw==,9599,simonw,2021-04-04T23:25:15Z,2021-04-04T23:25:15Z,OWNER,"Oh wow, I just spotted https://github.com/macbre/sql-metadata

> Uses tokenized query returned by python-sqlparse and generates query metadata. Extracts column names and tables used by the query. Provides a helper for normalization of SQL queries and tables aliases resolving.

It's for MySQL, PostgreSQL and Hive right now but maybe getting it working with SQLite wouldn't be too hard?","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813115414,https://api.github.com/repos/simonw/datasette/issues/1293,813115414,MDEyOklzc3VlQ29tbWVudDgxMzExNTQxNA==,9599,simonw,2021-04-04T23:23:34Z,2021-04-04T23:23:34Z,OWNER,"The other approach I considered for this was to have my own SQL query parser running in Python, which could pick apart a complex query and figure out which column was sourced from which table. I dropped this idea because it felt that the moment `select *` came into play a pure parsing approach wouldn't work - I'd need knowledge of the schema in order to resolve the `*`.

A Python parser approach might be good enough to handle a subset of queries - those that don't use `select *` for example - and maybe that would be worth shipping? The feature doesn't have to be perfect for it to be useful.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813114933,https://api.github.com/repos/simonw/datasette/issues/1293,813114933,MDEyOklzc3VlQ29tbWVudDgxMzExNDkzMw==,9599,simonw,2021-04-04T23:19:22Z,2021-04-04T23:19:22Z,OWNER,I asked about this on the SQLite forum: https://sqlite.org/forum/forumpost/0180277fb7,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813113653,https://api.github.com/repos/simonw/datasette/issues/1293,813113653,MDEyOklzc3VlQ29tbWVudDgxMzExMzY1Mw==,9599,simonw,2021-04-04T23:10:49Z,2021-04-04T23:10:49Z,OWNER,"One option I've not fully explored yet: could I write my own custom SQLite C extension which exposes this functionality as a callable function?

Then I could load that extension and run a SQL query something like this:

```

select database, table, column from analyze_query(:sql_query)

```

Where `analyze_query(...)` would be a fancy virtual table function of some sort that uses the underlying `sqlite3_column_database_name()` C functions to analyze the SQL query and return details of what it would return.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813113403,https://api.github.com/repos/simonw/datasette/issues/1293,813113403,MDEyOklzc3VlQ29tbWVudDgxMzExMzQwMw==,9599,simonw,2021-04-04T23:08:48Z,2021-04-04T23:08:48Z,OWNER,"Worth noting that adding `limit 0` to the query still causes it to conduct the permission checks, hopefully while avoiding doing any of the actual work of executing the query:

```pycon

In [20]: db.execute('select * from compound_primary_key join facetable on facetable.rowid = compound_primary_key.rowid limit 0').fetchall()

...:

args (21, None, None, None, None) kwargs {}

args (20, 'compound_primary_key', 'pk1', 'main', None) kwargs {}

args (20, 'compound_primary_key', 'pk2', 'main', None) kwargs {}

args (20, 'compound_primary_key', 'content', 'main', None) kwargs {}

args (20, 'facetable', 'pk', 'main', None) kwargs {}

args (20, 'facetable', 'created', 'main', None) kwargs {}

args (20, 'facetable', 'planet_int', 'main', None) kwargs {}

args (20, 'facetable', 'on_earth', 'main', None) kwargs {}

args (20, 'facetable', 'state', 'main', None) kwargs {}

args (20, 'facetable', 'city_id', 'main', None) kwargs {}

args (20, 'facetable', 'neighborhood', 'main', None) kwargs {}

args (20, 'facetable', 'tags', 'main', None) kwargs {}

args (20, 'facetable', 'complex_array', 'main', None) kwargs {}

args (20, 'facetable', 'distinct_some_null', 'main', None) kwargs {}

args (20, 'facetable', 'pk', 'main', None) kwargs {}

args (20, 'compound_primary_key', 'ROWID', 'main', None) kwargs {}

Out[20]: []

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813113218,https://api.github.com/repos/simonw/datasette/issues/1293,813113218,MDEyOklzc3VlQ29tbWVudDgxMzExMzIxOA==,9599,simonw,2021-04-04T23:07:25Z,2021-04-04T23:07:25Z,OWNER,"Here are all of the available constants:

```pycon

In [3]: for k in dir(sqlite3):
   ...:     if k.startswith(""SQLITE_""):
   ...:         print(k, getattr(sqlite3, k))
   ...:

SQLITE_ALTER_TABLE 26

SQLITE_ANALYZE 28

SQLITE_ATTACH 24

SQLITE_CREATE_INDEX 1

SQLITE_CREATE_TABLE 2

SQLITE_CREATE_TEMP_INDEX 3

SQLITE_CREATE_TEMP_TABLE 4

SQLITE_CREATE_TEMP_TRIGGER 5

SQLITE_CREATE_TEMP_VIEW 6

SQLITE_CREATE_TRIGGER 7

SQLITE_CREATE_VIEW 8

SQLITE_CREATE_VTABLE 29

SQLITE_DELETE 9

SQLITE_DENY 1

SQLITE_DETACH 25

SQLITE_DONE 101

SQLITE_DROP_INDEX 10

SQLITE_DROP_TABLE 11

SQLITE_DROP_TEMP_INDEX 12

SQLITE_DROP_TEMP_TABLE 13

SQLITE_DROP_TEMP_TRIGGER 14

SQLITE_DROP_TEMP_VIEW 15

SQLITE_DROP_TRIGGER 16

SQLITE_DROP_VIEW 17

SQLITE_DROP_VTABLE 30

SQLITE_FUNCTION 31

SQLITE_IGNORE 2

SQLITE_INSERT 18

SQLITE_OK 0

SQLITE_PRAGMA 19

SQLITE_READ 20

SQLITE_RECURSIVE 33

SQLITE_REINDEX 27

SQLITE_SAVEPOINT 32

SQLITE_SELECT 21

SQLITE_TRANSACTION 22

SQLITE_UPDATE 23

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813113175,https://api.github.com/repos/simonw/datasette/issues/1293,813113175,MDEyOklzc3VlQ29tbWVudDgxMzExMzE3NQ==,9599,simonw,2021-04-04T23:07:01Z,2021-04-04T23:07:01Z,OWNER,"A more promising route I found involved the `db.set_authorizer` method. This can be used to log the permission checks that SQLite uses, including checks for permission to access specific columns of specific tables. For a while I thought this could work!

```pycon

>>> import sqlite3
>>> def print_args(*args, **kwargs):
...     print(""args"", args, ""kwargs"", kwargs)
...     return sqlite3.SQLITE_OK
...
>>> db = sqlite3.connect(""fixtures.db"")
>>> db.set_authorizer(print_args)
>>> db.execute('select * from compound_primary_key join facetable on rowid').fetchall()

args (21, None, None, None, None) kwargs {}

args (20, 'compound_primary_key', 'pk1', 'main', None) kwargs {}

args (20, 'compound_primary_key', 'pk2', 'main', None) kwargs {}

args (20, 'compound_primary_key', 'content', 'main', None) kwargs {}

args (20, 'facetable', 'pk', 'main', None) kwargs {}

args (20, 'facetable', 'created', 'main', None) kwargs {}

args (20, 'facetable', 'planet_int', 'main', None) kwargs {}

args (20, 'facetable', 'on_earth', 'main', None) kwargs {}

args (20, 'facetable', 'state', 'main', None) kwargs {}

args (20, 'facetable', 'city_id', 'main', None) kwargs {}

args (20, 'facetable', 'neighborhood', 'main', None) kwargs {}

args (20, 'facetable', 'tags', 'main', None) kwargs {}

args (20, 'facetable', 'complex_array', 'main', None) kwargs {}

args (20, 'facetable', 'distinct_some_null', 'main', None) kwargs {}

```
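
For reference, a self-contained sketch of the same authorizer logging, assuming a hypothetical in-memory table rather than `fixtures.db`. The callback and variable names here are illustrative:

```python
import sqlite3

# Hypothetical in-memory schema, used only for this illustration
db = sqlite3.connect(':memory:')
db.execute('create table facetable (pk integer primary key, state text)')

checks = []

def log_authorizer(action, arg1, arg2, db_name, source):
    # Record every permission check SQLite makes, then allow it
    checks.append((action, arg1, arg2, db_name, source))
    return sqlite3.SQLITE_OK

db.set_authorizer(log_authorizer)
db.execute('select state from facetable').fetchall()

# Keep only the column reads (action code 20 is sqlite3.SQLITE_READ)
reads = [
    (table, column)
    for action, table, column, _, _ in checks
    if action == sqlite3.SQLITE_READ
]
print(reads)
```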

Those `20` values (where 20 is `SQLITE_READ`) looked like they were checking permissions for the columns in the order they would be returned!

Then I found a snag:

```pycon

In [18]: db.execute('select 1 + 1 + (select max(rowid) from facetable)')

args (21, None, None, None, None) kwargs {}

args (31, None, 'max', None, None) kwargs {}

args (20, 'facetable', 'pk', 'main', None) kwargs {}

args (21, None, None, None, None) kwargs {}

args (20, 'facetable', '', None, None) kwargs {}

```

Once a subselect is involved the order of the `20` checks no longer matches the order in which the columns are returned from the query.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813112546,https://api.github.com/repos/simonw/datasette/issues/1293,813112546,MDEyOklzc3VlQ29tbWVudDgxMzExMjU0Ng==,9599,simonw,2021-04-04T23:02:45Z,2021-04-04T23:02:45Z,OWNER,"I've done various pieces of research into this over the past few years. Capturing what I've discovered in this ticket.

The SQLite C API has functions that can help with this: https://www.sqlite.org/c3ref/column_database_name.html details those. But they're not exposed in the Python SQLite library.

Maybe it would be possible to use them via `ctypes`? My hunch is that I would have to re-implement the full `sqlite3` module with `ctypes`, which sounds daunting.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1292#issuecomment-813109789,https://api.github.com/repos/simonw/datasette/issues/1292,813109789,MDEyOklzc3VlQ29tbWVudDgxMzEwOTc4OQ==,9599,simonw,2021-04-04T22:37:47Z,2021-04-04T22:37:47Z,OWNER,Could maybe replace this code: https://github.com/simonw/datasette/blob/0a7621f96f8ad14da17e7172e8a7bce24ef78966/datasette/utils/__init__.py#L1021-L1026,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849975810,Research ctypes.util.find_library('spatialite'),

https://github.com/simonw/datasette/issues/620#issuecomment-813167335,https://api.github.com/repos/simonw/datasette/issues/620,813167335,MDEyOklzc3VlQ29tbWVudDgxMzE2NzMzNQ==,9599,simonw,2021-04-05T03:57:22Z,2021-04-05T03:57:22Z,OWNER,This may be obsoleted by #1293 - it looks like I may be able to auto-detect these foreign keys for arbitrary queries after all.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",520667773,Mechanism for indicating foreign key relationships in the table and query page URLs,

https://github.com/simonw/datasette/issues/1293#issuecomment-813164282,https://api.github.com/repos/simonw/datasette/issues/1293,813164282,MDEyOklzc3VlQ29tbWVudDgxMzE2NDI4Mg==,9599,simonw,2021-04-05T03:42:26Z,2021-04-05T03:42:36Z,OWNER,"Extracting variables with this trick appears to work OK, but you have to pass the correct variables to the `explain select...` query. Using `defaultdict` seems to work there:

```pycon

>>> rows = conn.execute('explain select * from repos where id = :id', defaultdict(int))

>>> [dict(r) for r in rows if r['opcode'] == 'Variable']

[{'addr': 2,

'opcode': 'Variable',

'p1': 1,

'p2': 1,

'p3': 0,

'p4': ':id',

'p5': 0,

'comment': None}]

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1293#issuecomment-813162622,https://api.github.com/repos/simonw/datasette/issues/1293,813162622,MDEyOklzc3VlQ29tbWVudDgxMzE2MjYyMg==,9599,simonw,2021-04-05T03:34:24Z,2021-04-05T03:40:35Z,OWNER,"This almost works, but throws errors with some queries (anything with a `rowid` column for example) - it needs a bunch of test coverage.

```python

def columns_for_query(conn, sql):
    # Assumes conn.row_factory = sqlite3.Row so rows can be indexed by name
    rows = conn.execute('explain ' + sql).fetchall()
    # OpenRead: p1 is the cursor number, p2 is the root page of the table
    table_rootpage_by_register = {r['p1']: r['p2'] for r in rows if r['opcode'] == 'OpenRead'}
    names_by_rootpage = dict(
        conn.execute(
            'select rootpage, name from sqlite_master where rootpage in ({})'.format(
                ', '.join(map(str, table_rootpage_by_register.values()))
            )
        )
    )
    columns_by_column_register = {}
    for row in rows:
        if row['opcode'] == 'Column':
            addr, opcode, table_id, cid, column_register, p4, p5, comment = row
            table = names_by_rootpage[table_rootpage_by_register[table_id]]
            columns_by_column_register[column_register] = (table, cid)
    result_row = [dict(r) for r in rows if r['opcode'] == 'ResultRow'][0]
    # ResultRow outputs registers p1 through p1 + p2 - 1 inclusive
    registers = list(range(result_row[""p1""], result_row[""p1""] + result_row[""p2""]))
    all_column_names = {}
    for table in names_by_rootpage.values():
        table_xinfo = conn.execute('pragma table_xinfo({})'.format(table)).fetchall()
        for row in table_xinfo:
            all_column_names[(table, row[""cid""])] = row[""name""]
    final_output = []
    for r in registers:
        try:
            table, cid = columns_by_column_register[r]
            final_output.append((table, all_column_names[table, cid]))
        except KeyError:
            # Registers not filled by a Column opcode (expressions, rowid)
            final_output.append((None, None))
    return final_output

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849978964,Show column metadata plus links for foreign keys on arbitrary query results,

https://github.com/simonw/datasette/issues/1273#issuecomment-813061516,https://api.github.com/repos/simonw/datasette/issues/1273,813061516,MDEyOklzc3VlQ29tbWVudDgxMzA2MTUxNg==,9599,simonw,2021-04-04T16:32:40Z,2021-04-04T16:32:40Z,OWNER,Useful tutorial series from 2012: https://northredoubt.com/n/2012/01/20/spatialite-speed-test/,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",838382890,Refresh SpatiaLite documentation,

https://github.com/simonw/datasette/issues/1287#issuecomment-812935384,https://api.github.com/repos/simonw/datasette/issues/1287,812935384,MDEyOklzc3VlQ29tbWVudDgxMjkzNTM4NA==,9599,simonw,2021-04-03T22:38:33Z,2021-04-03T22:38:33Z,OWNER,"https://twitter.com/llanga/status/1378431719934681094 looks like I should wait for 3.9.4, out in a few days.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849396758,Upgrade to Python 3.9.4,

https://github.com/simonw/datasette/issues/916#issuecomment-812941818,https://api.github.com/repos/simonw/datasette/issues/916,812941818,MDEyOklzc3VlQ29tbWVudDgxMjk0MTgxOA==,9599,simonw,2021-04-03T23:43:11Z,2021-04-03T23:43:11Z,OWNER,"Relevant code is some of the most complex in all of Datasette.

https://github.com/simonw/datasette/blob/0a7621f96f8ad14da17e7172e8a7bce24ef78966/datasette/views/table.py#L530-L594

And

https://github.com/simonw/datasette/blob/0a7621f96f8ad14da17e7172e8a7bce24ef78966/datasette/views/table.py#L743-L771

I'll need to think hard about how to refactor this out into something more understandable before implementing previous links.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",672421411,"Support reverse pagination (previous page, has-previous-items)",

https://github.com/simonw/datasette/issues/916#issuecomment-812941340,https://api.github.com/repos/simonw/datasette/issues/916,812941340,MDEyOklzc3VlQ29tbWVudDgxMjk0MTM0MA==,9599,simonw,2021-04-03T23:38:37Z,2021-04-03T23:38:37Z,OWNER,"Same query again with `a, d, v` returns 0 results, which is also as we would want: it signifies that we are back to the very first page: https://latest.datasette.io/fixtures?sql=select+pk1%2C+pk2%2C+pk3%2C+content+from+compound_three_primary_keys+where+%28%28pk1+%3C+%3Ap0%29%0D%0A++or%0D%0A%28pk1+%3D+%3Ap0+and+pk2+%3C+%3Ap1%29%0D%0A++or%0D%0A%28pk1+%3D+%3Ap0+and+pk2+%3D+%3Ap1+and+pk3+%3C+%3Ap2%29%29+order+by+pk1+desc%2C+pk2+desc%2C+pk3+desc+limit+1+offset+99&p0=a&p1=d&p2=v","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",672421411,"Support reverse pagination (previous page, has-previous-items)",

https://github.com/simonw/datasette/issues/916#issuecomment-812941112,https://api.github.com/repos/simonw/datasette/issues/916,812941112,MDEyOklzc3VlQ29tbWVudDgxMjk0MTExMg==,9599,simonw,2021-04-03T23:35:55Z,2021-04-03T23:35:55Z,OWNER,"I tried flipping the direction of the sort and the comparison operators and got this: https://latest.datasette.io/fixtures?sql=select+pk1%2C+pk2%2C+pk3%2C+content+from+compound_three_primary_keys+where+%28%28pk1+%3C+%3Ap0%29%0D%0A++or%0D%0A%28pk1+%3D+%3Ap0+and+pk2+%3C+%3Ap1%29%0D%0A++or%0D%0A%28pk1+%3D+%3Ap0+and+pk2+%3D+%3Ap1+and+pk3+%3C+%3Ap2%29%29+order+by+pk1+desc%2C+pk2+desc%2C+pk3+desc+limit+1+offset+99&p0=a&p1=h&p2=r

```sql

select pk1, pk2, pk3, content from compound_three_primary_keys where ((pk1 < :p0)

or

(pk1 = :p0 and pk2 < :p1)

or

(pk1 = :p0 and pk2 = :p1 and pk3 < :p2)) order by pk1 desc, pk2 desc, pk3 desc limit 1 offset 99

```

Which returned `a-d-v` as desired. I messed around with it to find the `limit 1 offset 99` values.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",672421411,"Support reverse pagination (previous page, has-previous-items)",

https://github.com/simonw/datasette/issues/916#issuecomment-812940907,https://api.github.com/repos/simonw/datasette/issues/916,812940907,MDEyOklzc3VlQ29tbWVudDgxMjk0MDkwNw==,9599,simonw,2021-04-03T23:33:41Z,2021-04-03T23:33:41Z,OWNER,"Let's figure out the SQL for this. The most complex case is probably this one: https://latest.datasette.io/fixtures/compound_three_primary_keys?_next=a%2Ch%2Cr

Here's the SQL for that page: https://latest.datasette.io/fixtures?sql=select+pk1%2C+pk2%2C+pk3%2C+content+from+compound_three_primary_keys+where+%28%28pk1+%3E+%3Ap0%29%0A++or%0A%28pk1+%3D+%3Ap0+and+pk2+%3E+%3Ap1%29%0A++or%0A%28pk1+%3D+%3Ap0+and+pk2+%3D+%3Ap1+and+pk3+%3E+%3Ap2%29%29+order+by+pk1%2C+pk2%2C+pk3+limit+101&p0=a&p1=h&p2=r

```sql

select pk1, pk2, pk3, content from compound_three_primary_keys where ((pk1 > :p0)

or

(pk1 = :p0 and pk2 > :p1)

or

(pk1 = :p0 and pk2 = :p1 and pk3 > :p2)) order by pk1, pk2, pk3 limit 101

```

Where `p0` is `a`, `p1` is `h` and `p2` is `r`.

Given the above, how would I figure out the correct previous link? It should be https://latest.datasette.io/fixtures/compound_three_primary_keys?_next=a%2Cd%2Cv - `a`, `d`, `v`.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",672421411,"Support reverse pagination (previous page, has-previous-items)",

https://github.com/simonw/datasette/issues/916#issuecomment-812940457,https://api.github.com/repos/simonw/datasette/issues/916,812940457,MDEyOklzc3VlQ29tbWVudDgxMjk0MDQ1Nw==,9599,simonw,2021-04-03T23:28:40Z,2021-04-03T23:28:40Z,OWNER,"I think my ideal implementation for this would be to reverse the order, grab the previous page-size-plus-one items, then return a `?_next=x` token that would provide the previous page sorted back in the expected default order.
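
A minimal sketch of that idea, assuming a hypothetical `items` table with a two-column compound key and a page size of 3. The function name and schema are made up for illustration. It uses a strict `<` comparison with `limit 1 offset page_size - 1`, which finds the same row as taking the last of page-size-plus-one rows when the token row itself is included:

```python
import sqlite3

conn = sqlite3.connect(':memory:')
# Hypothetical table with a compound primary key, for illustration only
conn.execute('create table items (pk1 text, pk2 text, primary key (pk1, pk2))')
conn.executemany(
    'insert into items values (?, ?)',
    [(a, b) for a in 'abc' for b in 'wxyz'],  # 12 rows: a-w, a-x, ... c-z
)

def previous_token(conn, current_token, page_size):
    # current_token is the _next value that produced the current page,
    # so the current page holds the rows strictly after it.
    p0, p1 = current_token
    return conn.execute(
        '''
        select pk1, pk2 from items
        where (pk1 < :p0) or (pk1 = :p0 and pk2 < :p1)
        order by pk1 desc, pk2 desc
        limit 1 offset :offset
        ''',
        {'p0': p0, 'p1': p1, 'offset': page_size - 1},
    ).fetchone()  # None means the previous page is the very first page

print(previous_token(conn, ('b', 'x'), 3))
print(previous_token(conn, ('a', 'y'), 3))
```

The returned row can be used as an ordinary `?_next=` token, so the previous page is rendered in the default sort order.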

The alternative would be to have a `?_previous=x` token which can be used to paginate backwards in reverse order, but I think this would be confusing as it would result in ""hit next page, then hit previous page"" returning you to a new state which features rows in the reverse order.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",672421411,"Support reverse pagination (previous page, has-previous-items)",

https://github.com/simonw/datasette/issues/916#issuecomment-812804998,https://api.github.com/repos/simonw/datasette/issues/916,812804998,MDEyOklzc3VlQ29tbWVudDgxMjgwNDk5OA==,9599,simonw,2021-04-03T03:47:45Z,2021-04-03T03:47:45Z,OWNER,I found one example of an implementation of reversed keyset pagination here: https://github.com/tvainika/objection-keyset-pagination/blob/cb21a493c96daa6e63c302efae6718d09aa11661/index.js#L74-L79,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",672421411,"Support reverse pagination (previous page, has-previous-items)",

https://github.com/simonw/datasette/issues/1289#issuecomment-812803256,https://api.github.com/repos/simonw/datasette/issues/1289,812803256,MDEyOklzc3VlQ29tbWVudDgxMjgwMzI1Ng==,9599,simonw,2021-04-03T03:29:25Z,2021-04-03T03:29:25Z,OWNER,"https://github.com/simonw/datasette/actions/runs/713207828 ran with `pytest-xdist` in 4m22s:

Here's the test suite running on regular `pytest` in 5m13s:

Not a huge speed-up because there are only 2 available cores in the GitHub Actions environment, but still worthwhile - especially since this lets people run in parallel on their own laptops.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849543502,Speed up tests with pytest-xdist,

https://github.com/simonw/datasette/issues/1289#issuecomment-812768915,https://api.github.com/repos/simonw/datasette/issues/1289,812768915,MDEyOklzc3VlQ29tbWVudDgxMjc2ODkxNQ==,9599,simonw,2021-04-03T00:59:15Z,2021-04-03T00:59:26Z,OWNER,"Looks like `-n auto` only detected two cores on GitHub Actions: https://github.com/simonw/datasette/runs/2257597137?check_suite_focus=true

```

============================= test session starts ==============================

platform linux -- Python 3.7.10, pytest-6.2.2, py-1.10.0, pluggy-0.13.1

SQLite: 3.31.1

rootdir: /home/runner/work/datasette/datasette, configfile: pytest.ini

plugins: xdist-2.2.1, timeout-1.4.2, forked-1.3.0, asyncio-0.14.0

gw0 I / gw1 I

gw0 [878] / gw1 [878]

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849543502,Speed up tests with pytest-xdist,

https://github.com/simonw/datasette/issues/1289#issuecomment-812767460,https://api.github.com/repos/simonw/datasette/issues/1289,812767460,MDEyOklzc3VlQ29tbWVudDgxMjc2NzQ2MA==,9599,simonw,2021-04-03T00:48:26Z,2021-04-03T00:48:26Z,OWNER,"On my Mac `pytest-xdist` ran the test suite (minus two tests) in 59s, as opposed to 2m23s without xdist.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849543502,Speed up tests with pytest-xdist,

https://github.com/simonw/datasette/issues/1287#issuecomment-812665092,https://api.github.com/repos/simonw/datasette/issues/1287,812665092,MDEyOklzc3VlQ29tbWVudDgxMjY2NTA5Mg==,9599,simonw,2021-04-02T18:54:29Z,2021-04-02T18:54:29Z,OWNER,`python:3.9.3-slim-buster` isn't on Docker Hub yet either.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849396758,Upgrade to Python 3.9.4,

https://github.com/simonw/datasette/issues/1286#issuecomment-812664443,https://api.github.com/repos/simonw/datasette/issues/1286,812664443,MDEyOklzc3VlQ29tbWVudDgxMjY2NDQ0Mw==,9599,simonw,2021-04-02T18:52:45Z,2021-04-02T18:52:51Z,OWNER,"Idea: default to displaying single-dimension JSON arrays of strings as a comma-separated list but show the comma in a different colour - something like this:

I used this HTML for the prototype (re-using `.type-int` just to get the colour):

```html

tag1, tag2

```","{""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849220154,Better default display of arrays of items,

https://github.com/simonw/datasette/issues/1286#issuecomment-812663107,https://api.github.com/repos/simonw/datasette/issues/1286,812663107,MDEyOklzc3VlQ29tbWVudDgxMjY2MzEwNw==,9599,simonw,2021-04-02T18:49:22Z,2021-04-02T18:49:22Z,OWNER,"This makes sense - showing an array as `[""blah"", ""blah2"", ""blah3""]` isn't particularly human-friendly!","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849220154,Better default display of arrays of items,

https://github.com/simonw/datasette/issues/1287#issuecomment-812662583,https://api.github.com/repos/simonw/datasette/issues/1287,812662583,MDEyOklzc3VlQ29tbWVudDgxMjY2MjU4Mw==,9599,simonw,2021-04-02T18:47:59Z,2021-04-02T18:47:59Z,OWNER,Once again having tests for the Dockerfile as seen in #1272 would be useful here.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849396758,Upgrade to Python 3.9.4,

https://github.com/simonw/datasette/issues/1287#issuecomment-812662026,https://api.github.com/repos/simonw/datasette/issues/1287,812662026,MDEyOklzc3VlQ29tbWVudDgxMjY2MjAyNg==,9599,simonw,2021-04-02T18:46:20Z,2021-04-02T18:46:20Z,OWNER,https://devcenter.heroku.com/articles/python-support#supported-runtimes looks like Heroku still only have 3.9.2 for the moment.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849396758,Upgrade to Python 3.9.4,

https://github.com/simonw/datasette/issues/1287#issuecomment-812661269,https://api.github.com/repos/simonw/datasette/issues/1287,812661269,MDEyOklzc3VlQ29tbWVudDgxMjY2MTI2OQ==,9599,simonw,2021-04-02T18:45:08Z,2021-04-02T18:45:19Z,OWNER,"A few places:

https://github.com/simonw/datasette/blob/7b1a9a1999eb9326ce8ec830d75ac200e5279c46/Dockerfile#L1

https://github.com/simonw/datasette/blob/8e18c7943181f228ce5ebcea48deb59ce50bee1f/datasette/utils/__init__.py#L350

https://github.com/simonw/datasette/blob/8e18c7943181f228ce5ebcea48deb59ce50bee1f/tests/test_package.py#L14

https://github.com/simonw/datasette/blob/8e18c7943181f228ce5ebcea48deb59ce50bee1f/datasette/publish/heroku.py#L177-L178","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",849396758,Upgrade to Python 3.9.4,

https://github.com/simonw/datasette/issues/1284#issuecomment-810740486,https://api.github.com/repos/simonw/datasette/issues/1284,810740486,MDEyOklzc3VlQ29tbWVudDgxMDc0MDQ4Ng==,9599,simonw,2021-03-31T03:57:55Z,2021-03-31T03:57:55Z,OWNER,"You're right, doing this is really hard at the moment - I'm not sure I know how I would tackle this either, and it's something I've wanted in the past!

I'll have a think about this one.","{""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",845794436,Feature or Documentation Request: Individual table as home page template,

https://github.com/simonw/datasette/pull/1282#issuecomment-809670294,https://api.github.com/repos/simonw/datasette/issues/1282,809670294,MDEyOklzc3VlQ29tbWVudDgwOTY3MDI5NA==,9599,simonw,2021-03-29T19:57:29Z,2021-03-29T19:57:29Z,OWNER,Thanks!,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",843739658,Fix little typo,

https://github.com/simonw/datasette/issues/696#issuecomment-809548363,https://api.github.com/repos/simonw/datasette/issues/696,809548363,MDEyOklzc3VlQ29tbWVudDgwOTU0ODM2Mw==,9599,simonw,2021-03-29T17:04:19Z,2021-03-29T17:04:19Z,OWNER,I tried this just now against Datasette 0.56 with the new Dockerfile from #1249 (that uses SQLite and SpatiaLite installed with `apt-get install`) and the tests all passed.,"{""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",576722115,Single failing unit test when run inside the Docker image,

https://github.com/simonw/datasette/pull/1031#issuecomment-809010713,https://api.github.com/repos/simonw/datasette/issues/1031,809010713,MDEyOklzc3VlQ29tbWVudDgwOTAxMDcxMw==,9599,simonw,2021-03-29T01:46:45Z,2021-03-29T01:46:45Z,OWNER,Sorry I didn't get to this PR sooner. I've joint-credited you in the release notes for this fix: https://docs.datasette.io/en/stable/changelog.html#v0-56,"{""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",724369025,Fallback to databases in inspect-data.json when no -i options are passed,

https://github.com/simonw/datasette/issues/1281#issuecomment-809009580,https://api.github.com/repos/simonw/datasette/issues/1281,809009580,MDEyOklzc3VlQ29tbWVudDgwOTAwOTU4MA==,9599,simonw,2021-03-29T01:41:48Z,2021-03-29T01:41:48Z,OWNER,"https://github.com/simonw/datasette/runs/2214871602?check_suite_focus=true worked:

Here's the 0.56 image on Docker Hub: https://hub.docker.com/layers/datasetteproject/datasette/0.56/images/sha256-701fc0f299a0ea79434a4852c46dab351254b9ac25dbe3c5f36fd5360caf52f9?context=explore","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842881221,Latest Datasette tags missing from Docker Hub,

https://github.com/simonw/datasette/issues/1281#issuecomment-809008760,https://api.github.com/repos/simonw/datasette/issues/1281,809008760,MDEyOklzc3VlQ29tbWVudDgwOTAwODc2MA==,9599,simonw,2021-03-29T01:38:21Z,2021-03-29T01:38:21Z,OWNER,"Got this error:

```

""docker tag"" requires exactly 2 arguments.

See 'docker tag --help'.

Usage: docker tag SOURCE_IMAGE[:TAG] TARGET_IMAGE[:TAG]

Create a tag TARGET_IMAGE that refers to SOURCE_IMAGE

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842881221,Latest Datasette tags missing from Docker Hub,

https://github.com/simonw/datasette/issues/1281#issuecomment-809007255,https://api.github.com/repos/simonw/datasette/issues/1281,809007255,MDEyOklzc3VlQ29tbWVudDgwOTAwNzI1NQ==,9599,simonw,2021-03-29T01:32:18Z,2021-03-29T01:32:18Z,OWNER,I'm going to build a new GitHub Actions workflow for this that lets me manually specify a tag to build and push as a Docker image.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842881221,Latest Datasette tags missing from Docker Hub,

https://github.com/simonw/datasette/issues/1281#issuecomment-809001653,https://api.github.com/repos/simonw/datasette/issues/1281,809001653,MDEyOklzc3VlQ29tbWVudDgwOTAwMTY1Mw==,9599,simonw,2021-03-29T01:08:31Z,2021-03-29T01:08:31Z,OWNER,"I'm going to attempt to fix this manually for the 0.56 release, by building and tagging it by hand and then pushing the 0.56 tag to Docker Hub.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842881221,Latest Datasette tags missing from Docker Hub,

https://github.com/simonw/datasette/issues/1281#issuecomment-809001273,https://api.github.com/repos/simonw/datasette/issues/1281,809001273,MDEyOklzc3VlQ29tbWVudDgwOTAwMTI3Mw==,9599,simonw,2021-03-29T01:06:45Z,2021-03-29T01:06:45Z,OWNER,"https://docs.docker.com/engine/reference/commandline/push/#push-all-tags-of-an-image

> Use the `-a` (or `--all-tags`) option to push all tags of a local image.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842881221,Latest Datasette tags missing from Docker Hub,

https://github.com/simonw/datasette/issues/1281#issuecomment-809000903,https://api.github.com/repos/simonw/datasette/issues/1281,809000903,MDEyOklzc3VlQ29tbWVudDgwOTAwMDkwMw==,9599,simonw,2021-03-29T01:05:10Z,2021-03-29T01:05:10Z,OWNER,"https://github.com/simonw/datasette/runs/1763835467?check_suite_focus=true for Datasette 0.54 worked, and the output included this:

```

Successfully tagged ***/datasette:0.54

The push refers to repository [docker.io/***/datasette]

aedd33c6b161: Preparing

...

aedd33c6b161: Pushed

0.54: digest: sha256:65c7e579d1c29755dac5c1ca86b1e97fa88c48bd3d724ac3e02988d0da296140 size: 2005

aedd33c6b161: Preparing

...

5dacd731af1b: Layer already exists

latest: digest: sha256:65c7e579d1c29755dac5c1ca86b1e97fa88c48bd3d724ac3e02988d0da296140 size: 2005

```

Here's that same section of output from the 0.56 release:

```

Successfully tagged ***/datasette:0.56

Using default tag: latest

The push refers to repository [docker.io/***/datasette]

4d4a9976adcc: Preparing

...

9b2132a0d5cf: Pushed

latest: digest: sha256:2250d0fbe57b1d615a8d6df0c9d43deb9533532e00bac68854773d8ff8dcf00a size: 1793

```

The difference here is the ""Using default tag: latest"" bit.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842881221,Latest Datasette tags missing from Docker Hub,

https://github.com/simonw/datasette/issues/1281#issuecomment-808999525,https://api.github.com/repos/simonw/datasette/issues/1281,808999525,MDEyOklzc3VlQ29tbWVudDgwODk5OTUyNQ==,9599,simonw,2021-03-29T01:00:38Z,2021-03-29T01:00:38Z,OWNER,"Here's the diff between `Dockerfile` in 0.54.1 and 0.56: https://github.com/simonw/datasette/compare/0.54.1...0.56#diff-551d1fcf87f78cc3bc18a7b332a4dc5d8773a512062df881c5aba28a6f5c48d7

","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842881221,Latest Datasette tags missing from Docker Hub,

https://github.com/simonw/datasette/issues/1249#issuecomment-808998719,https://api.github.com/repos/simonw/datasette/issues/1249,808998719,MDEyOklzc3VlQ29tbWVudDgwODk5ODcxOQ==,9599,simonw,2021-03-29T00:57:13Z,2021-03-29T00:57:13Z,OWNER,"I just shipped Datasette 0.56 - here's the CI run: https://github.com/simonw/datasette/runs/2214701802?check_suite_focus=true

It pushed a new `latest` tag to https://hub.docker.com/r/datasetteproject/datasette/tags?page=1&ordering=last_updated

    docker pull datasetteproject/datasette:latest

And then:

    docker run datasetteproject/datasette:latest datasette \
        --load-extension=spatialite \
        --get /-/versions.json | jq .sqlite

Outputs:

```json

{

""version"": ""3.27.2"",

""fts_versions"": [

""FTS5"",

""FTS4"",

""FTS3""

],

""extensions"": {

""json1"": null,

""spatialite"": ""5.0.1""

},

""compile_options"": [

""COMPILER=gcc-8.3.0"",

""ENABLE_COLUMN_METADATA"",

""ENABLE_DBSTAT_VTAB"",

""ENABLE_FTS3"",

""ENABLE_FTS3_PARENTHESIS"",

""ENABLE_FTS3_TOKENIZER"",

""ENABLE_FTS4"",

""ENABLE_FTS5"",

""ENABLE_JSON1"",

""ENABLE_LOAD_EXTENSION"",

""ENABLE_PREUPDATE_HOOK"",

""ENABLE_RTREE"",

""ENABLE_SESSION"",

""ENABLE_STMTVTAB"",

""ENABLE_UNLOCK_NOTIFY"",

""ENABLE_UPDATE_DELETE_LIMIT"",

""HAVE_ISNAN"",

""LIKE_DOESNT_MATCH_BLOBS"",

""MAX_SCHEMA_RETRY=25"",

""MAX_VARIABLE_NUMBER=250000"",

""OMIT_LOOKASIDE"",

""SECURE_DELETE"",

""SOUNDEX"",

""TEMP_STORE=1"",

""THREADSAFE=1"",

""USE_URI""

]

}

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",824064069,Updated Dockerfile with SpatiaLite version 5.0,

https://github.com/simonw/datasette/pull/1031#issuecomment-808989067,https://api.github.com/repos/simonw/datasette/issues/1031,808989067,MDEyOklzc3VlQ29tbWVudDgwODk4OTA2Nw==,9599,simonw,2021-03-29T00:23:41Z,2021-03-29T00:23:41Z,OWNER,"This bug should have been fixed in #1229 - let me know if that's not the case!

Thanks","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",724369025,Fallback to databases in inspect-data.json when no -i options are passed,

https://github.com/simonw/datasette/pull/1260#issuecomment-808988697,https://api.github.com/repos/simonw/datasette/issues/1260,808988697,MDEyOklzc3VlQ29tbWVudDgwODk4ODY5Nw==,9599,simonw,2021-03-29T00:22:21Z,2021-03-29T00:22:21Z,OWNER,"This is interesting!

I've decided to apply a subset of these - the `if` and `elif` blocks are a deliberate style choice from me, because I find code clearer when it has if/else as opposed to relying on early termination. Likewise the iteration against `.keys()` on dictionaries.
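
To illustrate the distinction with a made-up example (the function names and data are hypothetical, not taken from the PR), both functions below return the same result and differ only in style:

```python
def label_if_else(columns):
    # Explicit if/elif/else, iterating over .keys()
    labels = []
    for name in columns.keys():
        if columns[name] is None:
            labels.append(name + ' (untyped)')
        elif columns[name] == 'integer':
            labels.append(name + ' (int)')
        else:
            labels.append(name)
    return labels

def label_early_exit(columns):
    # Early termination with continue, iterating over the dict directly
    labels = []
    for name in columns:
        if columns[name] is None:
            labels.append(name + ' (untyped)')
            continue
        if columns[name] == 'integer':
            labels.append(name + ' (int)')
            continue
        labels.append(name)
    return labels

columns = {'id': 'integer', 'name': 'text', 'extra': None}
print(label_if_else(columns))
print(label_early_exit(columns))
```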

I like the other fixes though, I'm about to land them in a separate commit that credits you.","{""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",831163537,Fix: code quality issues,

https://github.com/simonw/datasette/pull/1229#issuecomment-808987304,https://api.github.com/repos/simonw/datasette/issues/1229,808987304,MDEyOklzc3VlQ29tbWVudDgwODk4NzMwNA==,9599,simonw,2021-03-29T00:17:13Z,2021-03-29T00:17:13Z,OWNER,Thanks for figuring this out!,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",810507413,ensure immutable databses when starting in configuration directory mode with,

https://github.com/simonw/datasette/pull/1252#issuecomment-808986495,https://api.github.com/repos/simonw/datasette/issues/1252,808986495,MDEyOklzc3VlQ29tbWVudDgwODk4NjQ5NQ==,9599,simonw,2021-03-29T00:13:59Z,2021-03-29T00:13:59Z,OWNER,"Neat fix, thank you!","{""total_count"": 1, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 1, ""eyes"": 0}",825217564,Add back styling to lists within table cells (fixes #1141),

https://github.com/simonw/datasette/pull/1279#issuecomment-808986036,https://api.github.com/repos/simonw/datasette/issues/1279,808986036,MDEyOklzc3VlQ29tbWVudDgwODk4NjAzNg==,9599,simonw,2021-03-29T00:11:50Z,2021-03-29T00:11:50Z,OWNER,Thanks for the fix.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842556944,Minor Docs Update. Added `--app` to fly install command.,

https://github.com/simonw/datasette/issues/1280#issuecomment-808983160,https://api.github.com/repos/simonw/datasette/issues/1280,808983160,MDEyOklzc3VlQ29tbWVudDgwODk4MzE2MA==,9599,simonw,2021-03-28T23:59:28Z,2021-03-29T00:10:05Z,OWNER,"Might be easier to do this using https://github.com/coleifer/pysqlite3 rather than try to replace the system `sqlite3` on the Ubuntu GitHub Actions instances.
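
A minimal sketch of what that could look like on the Python side, assuming `pysqlite3` has been installed in the CI environment. The fallback import keeps the snippet working where it has not:

```python
try:
    # pysqlite3 can be compiled against a chosen SQLite version,
    # independently of the library the system Python links to.
    import pysqlite3 as sqlite3
except ImportError:
    # Fall back to the standard library module when pysqlite3 is missing.
    import sqlite3

conn = sqlite3.connect(':memory:')
version = conn.execute('select sqlite_version()').fetchone()[0]
print('Running against SQLite', version)
```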

These instructions should help: https://github.com/coleifer/pysqlite3#building-a-statically-linked-library","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842862708,Ability to run CI against multiple SQLite versions,

https://github.com/simonw/datasette/issues/1276#issuecomment-808981968,https://api.github.com/repos/simonw/datasette/issues/1276,808981968,MDEyOklzc3VlQ29tbWVudDgwODk4MTk2OA==,9599,simonw,2021-03-28T23:52:31Z,2021-03-28T23:52:31Z,OWNER,Testing this on Glitch by adding `https://github.com/simonw/datasette/archive/48d5e0e6ac8975cfd869d4e8c69c64ca0c65e29e.zip` as a dependency. That fixed it.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841456306,"Invalid SQL: ""no such table: pragma_database_list"" on database page",

https://github.com/simonw/datasette/issues/1276#issuecomment-808979608,https://api.github.com/repos/simonw/datasette/issues/1276,808979608,MDEyOklzc3VlQ29tbWVudDgwODk3OTYwOA==,9599,simonw,2021-03-28T23:38:34Z,2021-03-28T23:38:34Z,OWNER,"Aha! https://www.sqlite.org/pragma.html says:

> The table-valued functions for PRAGMA feature was added in SQLite version 3.16.0 (2017-01-02). Prior versions of SQLite cannot use this feature. ","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841456306,"Invalid SQL: ""no such table: pragma_database_list"" on database page",

https://github.com/simonw/datasette/issues/1276#issuecomment-808979218,https://api.github.com/repos/simonw/datasette/issues/1276,808979218,MDEyOklzc3VlQ29tbWVudDgwODk3OTIxOA==,9599,simonw,2021-03-28T23:35:53Z,2021-03-28T23:36:26Z,OWNER,"Here's where I run that: https://github.com/simonw/datasette/blob/6f41c8a2bef309a66588b2875c3e24d26adb4850/datasette/database.py#L249-L253

That's from when I added the `--crossdb` option in #1232: https://github.com/simonw/datasette/commit/6f41c8a2bef309a66588b2875c3e24d26adb4850#diff-4e20309c969326a0008dc9237f6807f48d55783315fbfc1e7dfa480b550e16f9R249","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841456306,"Invalid SQL: ""no such table: pragma_database_list"" on database page",

https://github.com/simonw/datasette/issues/1276#issuecomment-808979049,https://api.github.com/repos/simonw/datasette/issues/1276,808979049,MDEyOklzc3VlQ29tbWVudDgwODk3OTA0OQ==,9599,simonw,2021-03-28T23:34:38Z,2021-03-28T23:34:38Z,OWNER,"The Glitch server logs showed:

> `ERROR: conn=, sql = 'select seq, name, file from pragma_database_list() where seq > 0', params = None: no such table: pragma_database_list`
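
The SQLite PRAGMA documentation says the table-valued `pragma_*()` functions were added in version 3.16.0, so a version guard is one way to avoid this error. A minimal sketch, assuming a hypothetical `attached_databases()` helper (this is not necessarily how Datasette fixed it):

```python
import sqlite3

def attached_databases(conn):
    # Table-valued PRAGMA functions need SQLite 3.16.0 or later.
    if sqlite3.sqlite_version_info >= (3, 16, 0):
        sql = 'select seq, name, file from pragma_database_list() where seq > 0'
        return conn.execute(sql).fetchall()
    # Older SQLite: run the plain PRAGMA and filter the rows in Python.
    rows = conn.execute('PRAGMA database_list').fetchall()
    return [tuple(row) for row in rows if row[0] > 0]

conn = sqlite3.connect(':memory:')
conn.execute('attach database ? as extra', (':memory:',))
result = attached_databases(conn)
print([row[1] for row in result])
```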

","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841456306,"Invalid SQL: ""no such table: pragma_database_list"" on database page",

https://github.com/simonw/datasette/issues/1276#issuecomment-808978808,https://api.github.com/repos/simonw/datasette/issues/1276,808978808,MDEyOklzc3VlQ29tbWVudDgwODk3ODgwOA==,9599,simonw,2021-03-28T23:32:58Z,2021-03-28T23:33:58Z,OWNER,"I just managed to replicate this bug on Glitch: https://nicar-2020.glitch.me/data

> Invalid SQL

> no such table: pragma_database_list

https://nicar-2020.glitch.me/-/versions says:

```json

{

""python"": {

""version"": ""3.7.10"",

""full"": ""3.7.10 (default, Feb 20 2021, 21:21:24) \n[GCC 5.4.0 20160609]""

},

""datasette"": {

""version"": ""0.55""

},

""asgi"": ""3.0"",

""uvicorn"": ""0.13.4"",

""sqlite"": {

""version"": ""3.11.0"",

""fts_versions"": [

""FTS4"",

""FTS3""

],

""extensions"": {

""json1"": null

},

""compile_options"": [

""ENABLE_COLUMN_METADATA"",

""ENABLE_DBSTAT_VTAB"",

""ENABLE_FTS3"",

""ENABLE_FTS3_PARENTHESIS"",

""ENABLE_JSON1"",

""ENABLE_LOAD_EXTENSION"",

""ENABLE_RTREE"",

""ENABLE_UNLOCK_NOTIFY"",

""ENABLE_UPDATE_DELETE_LIMIT"",

""HAVE_ISNAN"",

""LIKE_DOESNT_MATCH_BLOBS"",

""MAX_SCHEMA_RETRY=25"",

""OMIT_LOOKASIDE"",

""SECURE_DELETE"",

""SOUNDEX"",

""SYSTEM_MALLOC"",

""TEMP_STORE=1"",

""THREADSAFE=1""

]

}

}

```

That's [SQLite 3.11.0 from 2016-02-15](https://www.sqlite.org/releaselog/3_11_0.html) with no FTS5.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841456306,"Invalid SQL: ""no such table: pragma_database_list"" on database page",

https://github.com/simonw/datasette/issues/1273#issuecomment-808759984,https://api.github.com/repos/simonw/datasette/issues/1273,808759984,MDEyOklzc3VlQ29tbWVudDgwODc1OTk4NA==,9599,simonw,2021-03-27T16:43:17Z,2021-03-27T16:43:17Z,OWNER,That rivers example in the tutorial would work a lot better with a live demo.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",838382890,Refresh SpatiaLite documentation,

https://github.com/simonw/datasette/issues/1273#issuecomment-808757721,https://api.github.com/repos/simonw/datasette/issues/1273,808757721,MDEyOklzc3VlQ29tbWVudDgwODc1NzcyMQ==,9599,simonw,2021-03-27T16:25:48Z,2021-03-27T16:25:48Z,OWNER,"> This will give you back an additional column of GeoJSON. You can copy and paste GeoJSON from this column into the debugging tool at geojson.io to visualize it on a map.

That should promote `datasette-leaflet-geojson` instead.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",838382890,Refresh SpatiaLite documentation,

https://github.com/simonw/datasette/issues/1090#issuecomment-808757659,https://api.github.com/repos/simonw/datasette/issues/1090,808757659,MDEyOklzc3VlQ29tbWVudDgwODc1NzY1OQ==,9599,simonw,2021-03-27T16:25:25Z,2021-03-27T16:25:25Z,OWNER,Related feature request: ability to set default values for canned queries: #1258,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",741862364,Custom widgets for canned query forms,

https://github.com/simonw/datasette/issues/1090#issuecomment-808757155,https://api.github.com/repos/simonw/datasette/issues/1090,808757155,MDEyOklzc3VlQ29tbWVudDgwODc1NzE1NQ==,9599,simonw,2021-03-27T16:21:43Z,2021-03-27T16:21:43Z,OWNER,"Idea for these: imitate https://django-sql-dashboard.readthedocs.io/en/latest/widgets.html#custom-widgets and drive them with templates.
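
A rough sketch of how such a lookup could work, assuming a hypothetical `widget_template_name()` helper that maps a widget type to a template path under `widgets/`. The name validation is an extra assumption, added so that a widget type cannot point outside that directory:

```python
import re

def widget_template_name(widget_type):
    # Only allow simple names so the path stays inside widgets/.
    if not re.fullmatch(r'[a-z0-9_-]+', widget_type):
        raise ValueError('Invalid widget type: {!r}'.format(widget_type))
    return 'widgets/{}.html'.format(widget_type)

print(widget_template_name('textarea'))
```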

So a custom widget type of `textarea` would look for a template called `widgets/textarea.html` - which means users could define brand new custom widgets just by creating their own template files.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",741862364,Custom widgets for canned query forms,

https://github.com/simonw/datasette/issues/1273#issuecomment-808756921,https://api.github.com/repos/simonw/datasette/issues/1273,808756921,MDEyOklzc3VlQ29tbWVudDgwODc1NjkyMQ==,9599,simonw,2021-03-27T16:19:45Z,2021-03-27T16:26:28Z,OWNER,"I have a better recipe for using spatial indexes now on https://simonwillison.net/2021/Jan/24/drawing-shapes-spatialite/

```sql

select
  AsGeoJSON(geometry), *
from
  CPAD_2020a_SuperUnits
where
  PARK_NAME like '%mini%' and
  Intersects(GeomFromGeoJSON(:freedraw), geometry) = 1
  and CPAD_2020a_SuperUnits.rowid in (
    select
      rowid
    from
      SpatialIndex
    where
      f_table_name = 'CPAD_2020a_SuperUnits'
      and search_frame = GeomFromGeoJSON(:freedraw)
  )

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",838382890,Refresh SpatiaLite documentation,

https://github.com/simonw/datasette/issues/1278#issuecomment-808756366,https://api.github.com/repos/simonw/datasette/issues/1278,808756366,MDEyOklzc3VlQ29tbWVudDgwODc1NjM2Ng==,9599,simonw,2021-03-27T16:15:47Z,2021-03-27T16:15:47Z,OWNER,https://timezones-api.datasette.io/ is now up and running on Cloud Run.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842416110,SpatiaLite timezones demo is broken,

https://github.com/simonw/datasette/issues/1278#issuecomment-808652008,https://api.github.com/repos/simonw/datasette/issues/1278,808652008,MDEyOklzc3VlQ29tbWVudDgwODY1MjAwOA==,9599,simonw,2021-03-27T04:47:17Z,2021-03-27T04:47:17Z,OWNER,"https://github.com/simonw/timezones-api is that project, it's pretty old now. I'll try to get it running on Cloud Run.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842416110,SpatiaLite timezones demo is broken,

https://github.com/simonw/datasette/issues/1258#issuecomment-808651088,https://api.github.com/repos/simonw/datasette/issues/1258,808651088,MDEyOklzc3VlQ29tbWVudDgwODY1MTA4OA==,9599,simonw,2021-03-27T04:41:52Z,2021-03-27T04:42:14Z,OWNER,"Right now they look like this:

```yaml

databases:
  fixtures:
    queries:
      neighborhood_search:
        params:
        - text

```

In addition to being able to specify defaults, I'd also like to add other things in the future - most significantly the ability to specify a different input widget (e.g. textarea vs. single-line input).

So maybe this looks like:

```yaml

params:
- name: text
  default: """"
- name: age
  widget: number

```","{""total_count"": 3, ""+1"": 3, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",828858421,Allow canned query params to specify default values,

https://github.com/simonw/datasette/issues/1258#issuecomment-808650266,https://api.github.com/repos/simonw/datasette/issues/1258,808650266,MDEyOklzc3VlQ29tbWVudDgwODY1MDI2Ng==,9599,simonw,2021-03-27T04:37:07Z,2021-03-27T04:37:07Z,OWNER,I like that idea.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",828858421,Allow canned query params to specify default values,

https://github.com/simonw/datasette/issues/1249#issuecomment-808649480,https://api.github.com/repos/simonw/datasette/issues/1249,808649480,MDEyOklzc3VlQ29tbWVudDgwODY0OTQ4MA==,9599,simonw,2021-03-27T04:32:10Z,2021-03-27T04:32:10Z,OWNER,I'll close this issue after I ship Datasette 0.56 and confirm that the Dockerfile was correctly built and published to Docker Hub.,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",824064069,Updated Dockerfile with SpatiaLite version 5.0,

https://github.com/simonw/datasette/issues/1249#issuecomment-808649322,https://api.github.com/repos/simonw/datasette/issues/1249,808649322,MDEyOklzc3VlQ29tbWVudDgwODY0OTMyMg==,9599,simonw,2021-03-27T04:31:28Z,2021-03-27T04:31:28Z,OWNER,"One last test of that Dockerfile:

```

(datasette) datasette % docker build -f Dockerfile -t datasetteproject/datasette:0.55a --build-arg VERSION=0.55 .

(datasette) datasette % docker run datasetteproject/datasette:0.55a datasette --get '/-/versions.json' | jq

{

""python"": {

""version"": ""3.9.2"",

""full"": ""3.9.2 (default, Feb 19 2021, 17:23:45) \n[GCC 8.3.0]""

},

""datasette"": {

""version"": ""0.55""

},

""asgi"": ""3.0"",

""uvicorn"": ""0.13.4"",

""sqlite"": {

""version"": ""3.27.2"",

""fts_versions"": [

""FTS5"",

""FTS4"",

""FTS3""

],

""extensions"": {

""json1"": null

},

""compile_options"": [

""COMPILER=gcc-8.3.0"",

""ENABLE_COLUMN_METADATA"",

""ENABLE_DBSTAT_VTAB"",

""ENABLE_FTS3"",

""ENABLE_FTS3_PARENTHESIS"",

""ENABLE_FTS3_TOKENIZER"",

""ENABLE_FTS4"",

""ENABLE_FTS5"",

""ENABLE_JSON1"",

""ENABLE_LOAD_EXTENSION"",

""ENABLE_PREUPDATE_HOOK"",

""ENABLE_RTREE"",

""ENABLE_SESSION"",

""ENABLE_STMTVTAB"",

""ENABLE_UNLOCK_NOTIFY"",

""ENABLE_UPDATE_DELETE_LIMIT"",

""HAVE_ISNAN"",

""LIKE_DOESNT_MATCH_BLOBS"",

""MAX_SCHEMA_RETRY=25"",

""MAX_VARIABLE_NUMBER=250000"",

""OMIT_LOOKASIDE"",

""SECURE_DELETE"",

""SOUNDEX"",

""TEMP_STORE=1"",

""THREADSAFE=1"",

""USE_URI""

]

}

}

(datasette) datasette % docker run datasetteproject/datasette:0.55a datasette --get '/-/versions.json' --load-extension=spatialite | jq

{

""python"": {

""version"": ""3.9.2"",

""full"": ""3.9.2 (default, Feb 19 2021, 17:23:45) \n[GCC 8.3.0]""

},

""datasette"": {

""version"": ""0.55""

},

""asgi"": ""3.0"",

""uvicorn"": ""0.13.4"",

""sqlite"": {

""version"": ""3.27.2"",

""fts_versions"": [

""FTS5"",

""FTS4"",

""FTS3""

],

""extensions"": {

""json1"": null,

""spatialite"": ""5.0.1""

},

""compile_options"": [

""COMPILER=gcc-8.3.0"",

""ENABLE_COLUMN_METADATA"",

""ENABLE_DBSTAT_VTAB"",

""ENABLE_FTS3"",

""ENABLE_FTS3_PARENTHESIS"",

""ENABLE_FTS3_TOKENIZER"",

""ENABLE_FTS4"",

""ENABLE_FTS5"",

""ENABLE_JSON1"",

""ENABLE_LOAD_EXTENSION"",

""ENABLE_PREUPDATE_HOOK"",

""ENABLE_RTREE"",

""ENABLE_SESSION"",

""ENABLE_STMTVTAB"",

""ENABLE_UNLOCK_NOTIFY"",

""ENABLE_UPDATE_DELETE_LIMIT"",

""HAVE_ISNAN"",

""LIKE_DOESNT_MATCH_BLOBS"",

""MAX_SCHEMA_RETRY=25"",

""MAX_VARIABLE_NUMBER=250000"",

""OMIT_LOOKASIDE"",

""SECURE_DELETE"",

""SOUNDEX"",

""TEMP_STORE=1"",

""THREADSAFE=1"",

""USE_URI""

]

}

}

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",824064069,Updated Dockerfile with SpatiaLite version 5.0,

https://github.com/simonw/datasette/issues/1272#issuecomment-808648974,https://api.github.com/repos/simonw/datasette/issues/1272,808648974,MDEyOklzc3VlQ29tbWVudDgwODY0ODk3NA==,9599,simonw,2021-03-27T04:29:42Z,2021-03-27T04:29:42Z,OWNER,"I'm skipping this for the moment because the new Dockerfile shape introduced in https://github.com/simonw/datasette/issues/1249#issuecomment-804404544 isn't compatible with this technique, since it installs Datasette from PyPI rather than directly from the repo.

Will need to change that if I want to do this unit tests thing.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",838245338,Unit tests for the Dockerfile,

https://github.com/simonw/datasette/issues/1272#issuecomment-808647937,https://api.github.com/repos/simonw/datasette/issues/1272,808647937,MDEyOklzc3VlQ29tbWVudDgwODY0NzkzNw==,9599,simonw,2021-03-27T04:23:19Z,2021-03-27T04:23:36Z,OWNER,"Part of the challenge here is only running if a Docker daemon is available. I think this pattern works, in `tests/test_dockerfile.py`:

```python

import httpx
import pathlib
import pytest
import subprocess

# Repository root, where the Dockerfile lives
root = pathlib.Path(__file__).parent.parent


def docker_is_available():
    # Probe the Docker daemon over its default Unix domain socket
    try:
        client = httpx.Client(
            transport=httpx.HTTPTransport(uds=""/var/run/docker.sock"")
        )
        client.get(""http://docker/info"")
        return True
    except httpx.ConnectError:
        return False


@pytest.fixture
def build_container():
    # Build the image from the Dockerfile in the repository root
    assert (root / ""Dockerfile"").exists()
    subprocess.check_call([
        ""docker"", ""build"", str(root), ""-t"", ""datasette-dockerfile-test""
    ])


@pytest.mark.skipif(not docker_is_available(),
    reason=""Docker is not available""
)
def test_dockerfile(build_container):
    output = subprocess.check_output([
        ""docker"", ""run"", ""datasette-dockerfile-test"", ""datasette"", ""--get"", ""/_memory?sql=select+1&shape=_array""
    ])
    # Deliberate placeholder: fails until a real assertion on output is written
    assert False, ""Implement better assertion here""

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",838245338,Unit tests for the Dockerfile,

https://github.com/simonw/datasette/issues/1276#issuecomment-808642405,https://api.github.com/repos/simonw/datasette/issues/1276,808642405,MDEyOklzc3VlQ29tbWVudDgwODY0MjQwNQ==,9599,simonw,2021-03-27T03:53:18Z,2021-03-27T03:53:18Z,OWNER,That's really odd. What version of SQLite are you using on the server? You can tell by visiting `https://your-site/-/versions`,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841456306,"Invalid SQL: ""no such table: pragma_database_list"" on database page",

https://github.com/simonw/datasette/issues/1277#issuecomment-808641846,https://api.github.com/repos/simonw/datasette/issues/1277,808641846,MDEyOklzc3VlQ29tbWVudDgwODY0MTg0Ng==,9599,simonw,2021-03-27T03:49:34Z,2021-03-27T03:49:34Z,OWNER,"I fixed this already, it's a duplicate of #1239","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842212586,Facet by array breaks if table name contains a space,

https://github.com/simonw/sqlite-utils/issues/252#issuecomment-808302971,https://api.github.com/repos/simonw/sqlite-utils/issues/252,808302971,MDEyOklzc3VlQ29tbWVudDgwODMwMjk3MQ==,9599,simonw,2021-03-26T15:21:38Z,2021-03-26T15:21:38Z,OWNER,Already got that! It's the `--nl` option - works for both importing and exporting data: https://sqlite-utils.datasette.io/en/stable/cli.html#inserting-json-data,"{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",842062949,Support json-line files,

https://github.com/simonw/sqlite-utils/issues/251#issuecomment-807647791,https://api.github.com/repos/simonw/sqlite-utils/issues/251,807647791,MDEyOklzc3VlQ29tbWVudDgwNzY0Nzc5MQ==,9599,simonw,2021-03-25T22:42:48Z,2021-03-25T22:44:31Z,OWNER,"Idea: enhance `lambda` to allow it to return a dictionary of values, which will then be used to populate new columns. Use a `--multicolumn` option to indicate this:

    sqlite-utils convert lambda mydb.db mytable mycolumn \
      --code '{""first_name"": value.split()[0], ""last_name"": value.split()[1]}' \
      --multicolumn --drop

The `--drop` means ""drop the `mycolumn` column after making this change"".

Maybe `--multi` is a better name than `--multicolumn` here, since either way it's going to need additional explanation somewhere.
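
A rough sketch of what the multi-column behaviour could look like, using only the standard library `sqlite3` module. The `convert_multi` name and its signature are hypothetical and not part of `sqlite-utils`, and the `--drop` step is left out:

```python
import sqlite3


def convert_multi(conn, table, column, fn):
    # Hypothetical helper: fn returns a dict, and each key becomes a new column.
    rows = conn.execute(
        'SELECT rowid, [{}] FROM [{}]'.format(column, table)
    ).fetchall()
    results = [(rowid, fn(value)) for rowid, value in rows]
    # Work out which columns need adding, in first-seen order.
    new_columns = []
    for _, result in results:
        for key in result:
            if key not in new_columns:
                new_columns.append(key)
    with conn:
        for name in new_columns:
            conn.execute('ALTER TABLE [{}] ADD COLUMN [{}]'.format(table, name))
        for rowid, result in results:
            for name, new_value in result.items():
                conn.execute(
                    'UPDATE [{}] SET [{}] = ? WHERE rowid = ?'.format(table, name),
                    (new_value, rowid),
                )


conn = sqlite3.connect(':memory:')
conn.execute('CREATE TABLE mytable (mycolumn TEXT)')
conn.execute('INSERT INTO mytable VALUES (?)', ('Ada Lovelace',))
convert_multi(
    conn, 'mytable', 'mycolumn',
    lambda value: {'first_name': value.split()[0], 'last_name': value.split()[1]},
)
row = conn.execute('SELECT first_name, last_name FROM mytable').fetchone()
```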

Would this overlap with #239 at all?","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841377702,"""sqlite-utils convert"" command to replace the separate ""sqlite-transform"" tool",

https://github.com/simonw/sqlite-utils/issues/251#issuecomment-807642041,https://api.github.com/repos/simonw/sqlite-utils/issues/251,807642041,MDEyOklzc3VlQ29tbWVudDgwNzY0MjA0MQ==,9599,simonw,2021-03-25T22:39:22Z,2021-03-25T22:39:22Z,OWNER,"Here's the full current implementation of that tool: https://github.com/simonw/sqlite-transform/blob/0.5/sqlite_transform/cli.py

My current plan is to make this functionality available as the following:

    sqlite-utils convert jsonsplit mydb.db mytable mycolumn
    sqlite-utils convert parsedatetime mydb.db mytable mycolumn
    sqlite-utils convert parsedate mydb.db mytable mycolumn
    sqlite-utils convert lambda mydb.db mytable mycolumn --code='str(value).upper()'
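
For illustration, a minimal sketch of how the `lambda` variant might work internally, assuming a plain `sqlite3` connection: the `--code` expression is compiled once, registered as a SQL function and applied with an `UPDATE`. The names `convert_column` and `convert_value` are hypothetical, not an existing API:

```python
import sqlite3


def convert_column(conn, table, column, code):
    # Compile the expression once; it can refer to the name 'value'.
    compiled = compile(code, '<convert>', 'eval')

    def convert_value(value):
        return eval(compiled, {}, {'value': value})

    # Register the Python function so SQLite can call it for every row.
    conn.create_function('convert_value', 1, convert_value)
    with conn:
        conn.execute(
            'UPDATE [{table}] SET [{column}] = convert_value([{column}])'.format(
                table=table, column=column
            )
        )


conn = sqlite3.connect(':memory:')
conn.execute('CREATE TABLE mytable (mycolumn TEXT)')
conn.executemany('INSERT INTO mytable VALUES (?)', [('hello',), ('world',)])
convert_column(conn, 'mytable', 'mycolumn', 'str(value).upper()')
rows = [r[0] for r in conn.execute('SELECT mycolumn FROM mytable ORDER BY rowid')]
```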

","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",841377702,"""sqlite-utils convert"" command to replace the separate ""sqlite-transform"" tool",

https://github.com/simonw/datasette/issues/741#issuecomment-806166575,https://api.github.com/repos/simonw/datasette/issues/741,806166575,MDEyOklzc3VlQ29tbWVudDgwNjE2NjU3NQ==,9599,simonw,2021-03-24T20:30:33Z,2021-03-24T20:30:33Z,OWNER,"`datasette package` is a mostly unmaintained feature at this point - it has a bit of test coverage but I've not made any improvements to it in a few years, and I don't use it for my own projects.

I'll make this change to `package` at the same time as I land it for `publish` though.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",607223136,"Replace ""datasette publish --extra-options"" with ""--setting""",

https://github.com/simonw/datasette/issues/1274#issuecomment-805216038,https://api.github.com/repos/simonw/datasette/issues/1274,805216038,MDEyOklzc3VlQ29tbWVudDgwNTIxNjAzOA==,9599,simonw,2021-03-23T20:14:53Z,2021-03-23T20:14:53Z,OWNER,"Yes this is one of the main reasons I'm planning to switch to encouraging YAML by default instead of JSON (while still supporting JSON) - YAML supports comments and multi-line strings.

See #1153 for YAML by default in the documentation.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",839008371,Might there be some way to comment metadata.json?,

https://github.com/simonw/datasette/issues/1153#issuecomment-805109341,https://api.github.com/repos/simonw/datasette/issues/1153,805109341,MDEyOklzc3VlQ29tbWVudDgwNTEwOTM0MQ==,9599,simonw,2021-03-23T17:55:48Z,2021-03-23T18:41:57Z,OWNER,"Beginnings of a UI element for switching between them:

```html

` has a padding of 12px, so using 12px padding on the tab links should get them to line up better.","{""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",771202454,"Use YAML examples in documentation by default, not JSON",

https://github.com/simonw/datasette/issues/1249#issuecomment-805033155,https://api.github.com/repos/simonw/datasette/issues/1249,805033155,MDEyOklzc3VlQ29tbWVudDgwNTAzMzE1NQ==,9599,simonw,2021-03-23T16:12:13Z,2021-03-23T16:12:13Z,OWNER,"Don't forget to update this bit of the docs: https://docs.datasette.io/en/0.55/spatialite.html#building-spatialite-from-source

> The packaged versions of SpatiaLite usually provide SpatiaLite 4.3.0a. For an example of how to build the most recent unstable version, 4.4.0-RC0 (which includes the powerful [VirtualKNN module](https://www.gaia-gis.it/fossil/libspatialite/wiki?name=KNN)), take a look at the [Datasette Dockerfile](https://github.com/simonw/datasette/blob/master/Dockerfile).

See also #1273","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",824064069,Updated Dockerfile with SpatiaLite version 5.0,

https://github.com/simonw/datasette/issues/1270#issuecomment-805058241,https://api.github.com/repos/simonw/datasette/issues/1270,805058241,MDEyOklzc3VlQ29tbWVudDgwNTA1ODI0MQ==,9599,simonw,2021-03-23T16:45:39Z,2021-03-23T16:45:39Z,OWNER,"I managed to build SpatiaLite such that this isn't necessary any more. I'm still interested in pursuing this further though - it feels like it could be a more robust way of implementing timeouts, but I need to prove to myself that it's better (maybe better performance, or handles more edge-cases?). Not sure how to prove that yet.","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",837350092,Try implementing SQLite timeouts using .interrupt() instead of using .set_progress_handler(),

https://github.com/simonw/datasette/issues/1153#issuecomment-805056806,https://api.github.com/repos/simonw/datasette/issues/1153,805056806,MDEyOklzc3VlQ29tbWVudDgwNTA1NjgwNg==,9599,simonw,2021-03-23T16:43:38Z,2021-03-23T16:43:38Z,OWNER,"I used this code to get that:

```javascript

var jsonVersion = JSON.stringify(window.jsyaml.load(document.querySelector('.highlight-yaml').textContent), null, 4);

div.querySelector('.highlight pre').innerText = jsonVersion;

div.querySelector('.highlight pre').style.whiteSpace = 'pre-wrap'

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",771202454,"Use YAML examples in documentation by default, not JSON",

https://github.com/simonw/datasette/issues/1153#issuecomment-805055291,https://api.github.com/repos/simonw/datasette/issues/1153,805055291,MDEyOklzc3VlQ29tbWVudDgwNTA1NTI5MQ==,9599,simonw,2021-03-23T16:41:31Z,2021-03-23T16:41:31Z,OWNER,"One downside of doing this conversion in JavaScript: it's much harder to get the same JSON syntax highlighting as that provided by Sphinx:

","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",771202454,"Use YAML examples in documentation by default, not JSON",

https://github.com/simonw/datasette/issues/1153#issuecomment-805050163,https://api.github.com/repos/simonw/datasette/issues/1153,805050163,MDEyOklzc3VlQ29tbWVudDgwNTA1MDE2Mw==,9599,simonw,2021-03-23T16:34:35Z,2021-03-23T16:35:32Z,OWNER,"https://docs.datasette.io/en/stable/metadata.html has this example:

```yaml

title: Demonstrating Metadata from YAML
description_html: |-
  This description includes a long HTML string
  YAML is better for embedding HTML strings than JSON!
license: ODbL
license_url: https://opendatacommons.org/licenses/odbl/
databases:
  fixtures:
    tables:
      no_primary_key:
        hidden: true
    queries:
      neighborhood_search:
        sql: |-
          select neighborhood, facet_cities.name, state
          from facetable join facet_cities on facetable.city_id = facet_cities.id
          where neighborhood like '%' || :text || '%' order by neighborhood;
        title: Search neighborhoods
        description_html: |-
          This demonstrates basic LIKE search

```

I ran this in the browser dev tools:

```javascript

var s = document.createElement('script')

s.src = 'https://cdnjs.cloudflare.com/ajax/libs/js-yaml/4.0.0/js-yaml.min.js'

document.head.appendChild(s)

var yamlExample = document.querySelector('.highlight-yaml').textContent;

console.log(JSON.stringify(window.jsyaml.load(yamlExample), null, 4))

```

And got:

```json

{

""title"": ""Demonstrating Metadata from YAML"",

""description_html"": ""

This description includes a long HTML string

\n

\n

YAML is better for embedding HTML strings than JSON!

This demonstrates basic LIKE search""

}

}

}

}

}

```","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",771202454,"Use YAML examples in documentation by default, not JSON",

https://github.com/simonw/datasette/issues/1153#issuecomment-805047117,https://api.github.com/repos/simonw/datasette/issues/1153,805047117,MDEyOklzc3VlQ29tbWVudDgwNTA0NzExNw==,9599,simonw,2021-03-23T16:30:15Z,2021-03-23T16:46:06Z,OWNER,"https://cdnjs.cloudflare.com/ajax/libs/js-yaml/4.0.0/js-yaml.min.js is only 12.5KB zipped, 38KB total - so that's not a bad option.

https://github.com/nodeca/js-yaml","{""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",771202454,"Use YAML examples in documentation by default, not JSON",