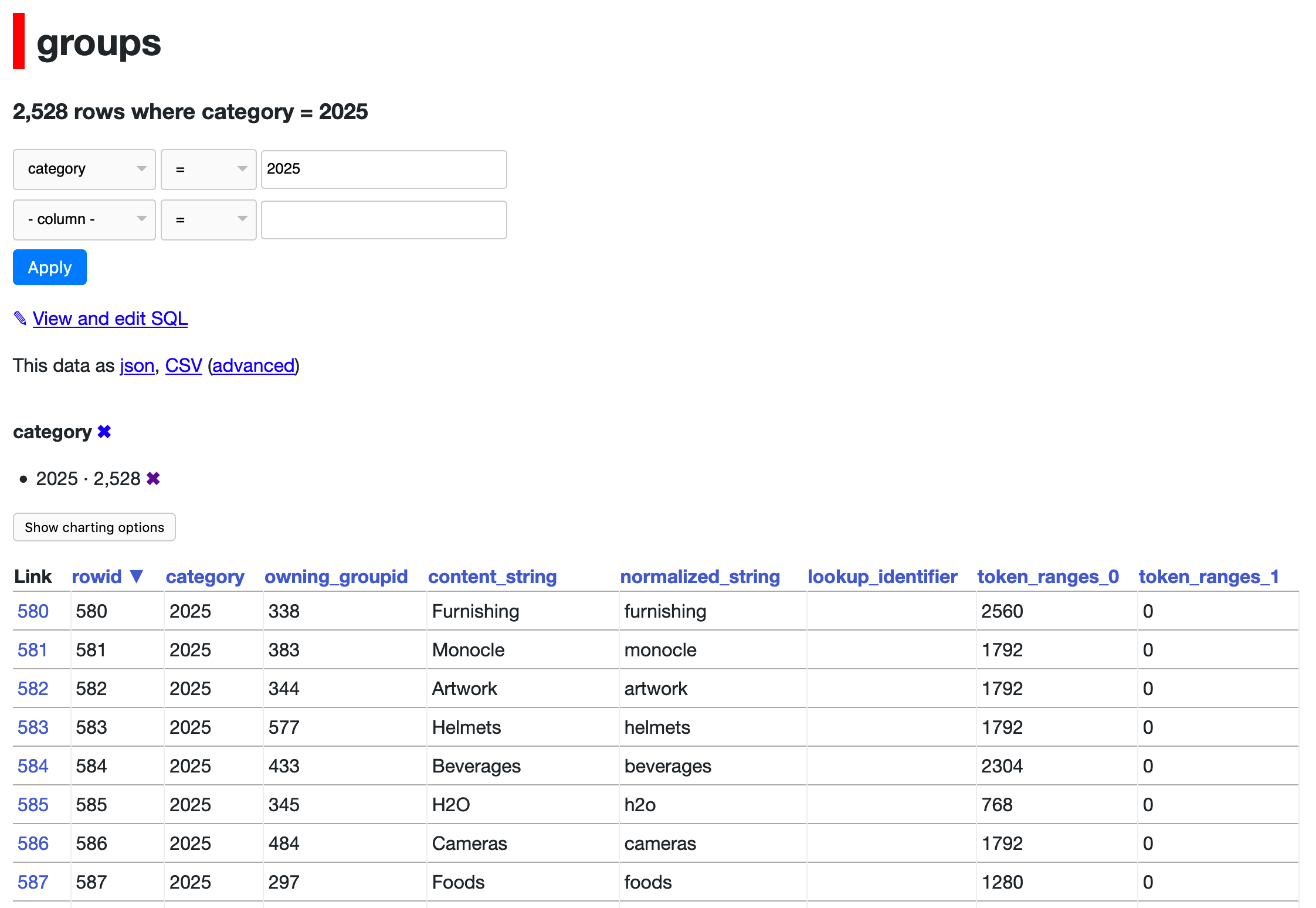

issue_comments

10,495 rows sorted by html_url

issue >1000

- Redesign default .json format 55

- Show column metadata plus links for foreign keys on arbitrary query results 51

- ?_extra= support (draft) 49

- Rethink how .ext formats (v.s. ?_format=) works before 1.0 48

- Upgrade to CodeMirror 6, add SQL autocomplete 48

- JavaScript plugin hooks mechanism similar to pluggy 47

- Updated Dockerfile with SpatiaLite version 5.0 45

- Complete refactor of TableView and table.html template 45

- Port Datasette to ASGI 42

- Authentication (and permissions) as a core concept 40

- invoke_startup() is not run in some conditions, e.g. gunicorn/uvicorn workers, breaking lots of things 36

- Deploy a live instance of demos/apache-proxy 34

- await datasette.client.get(path) mechanism for executing internal requests 33

- Maintain an in-memory SQLite table of connected databases and their tables 32

- Research: demonstrate if parallel SQL queries are worthwhile 32

- Ability to sort (and paginate) by column 31

- Server hang on parallel execution of queries to named in-memory databases 31

- Default API token authentication mechanism 30

- Port as many tests as possible to async def tests against ds_client 29

- link_or_copy_directory() error - Invalid cross-device link 28

- Add ?_extra= mechanism for requesting extra properties in JSON 27

- Export to CSV 27

- base_url configuration setting 27

- Documentation with recommendations on running Datasette in production without using Docker 27

- Optimize all those calls to index_list and foreign_key_list 27

- Support cross-database joins 26

- Ability for a canned query to write to the database 26

- table.transform() method for advanced alter table 26

- New pattern for views that return either JSON or HTML, available for plugins 26

- Proof of concept for Datasette on AWS Lambda with EFS 25

- WIP: Add Gmail takeout mbox import 25

- Make it easier to insert geometries, with documentation and maybe code 25

- DeprecationWarning: pkg_resources is deprecated as an API 25

- Redesign register_output_renderer callback 24

- API explorer tool 24

- De-tangling Metadata before Datasette 1.0 24

- Stream all results for arbitrary SQL and canned queries 23

- "datasette insert" command and plugin hook 23

- Option for importing CSV data using the SQLite .import mechanism 23

- If a row has a primary key of `null` various things break 23

- Datasette Plugins 22

- .json and .csv exports fail to apply base_url 22

- Use YAML examples in documentation by default, not JSON 22

- Idea: import CSV to memory, run SQL, export in a single command 22

- Plugin hook for dynamic metadata 22

- base_url is omitted in JSON and CSV views 22

- API to insert a single record into an existing table 22

- UI to create reduced scope tokens from the `/-/create-token` page 22

- Handle spatialite geometry columns better 21

- table.extract(...) method and "sqlite-utils extract" command 21

- Database page loads too slowly with many large tables (due to table counts) 21

- ?sort=colname~numeric to sort by by column cast to real 21

- Mechanism for storing metadata in _metadata tables 21

- Switch documentation theme to Furo 21

- "flash messages" mechanism 20

- Move CI to GitHub Issues 20

- load_template hook doesn't work for include/extends 20

- Introduce concept of a database `route`, separate from its name 20

- CSV files with too many values in a row cause errors 20

- register_permissions(datasette) plugin hook 20

- feat: Javascript Plugin API (Custom panels, column menu items with JS actions) 20

- API tokens with view-table but not view-database/view-instance cannot access the table 20

- Better way of representing binary data in .csv output 19

- Introspect if table is FTS4 or FTS5 19

- A proper favicon 19

- `datasette create-token` ability to create tokens with a reduced set of permissions 19

- Package as standalone binary 18

- Ability to ship alpha and beta releases 18

- Magic parameters for canned queries 18

- Support column descriptions in metadata.json 18

- datasette.client internal requests mechanism 18

- Extract columns cannot create foreign key relation: sqlite3.OperationalError: table sqlite_master may not be modified 18

- Figure out why SpatiaLite 5.0 hangs the database page on Linux 18

- Publish to Docker Hub failing with "libcrypt.so.1: cannot open shared object file" 18

- Update screenshots in documentation to match latest designs 18

- Consider using CSP to protect against future XSS 17

- Facets 16

- ?_col= and ?_nocol= support for toggling columns on table view 16

- Support "allow" block on root, databases and tables, not just queries 16

- Action menu for table columns 16

- Make it possible to download BLOB data from the Datasette UI 16

- sqlite-utils extract could handle nested objects 16

- `--batch-size 1` doesn't seem to commit for every item 16

- create-index should run analyze after creating index 16

- Add new spatialite helper methods 16

- Intermittent "Too many open files" error running tests 16

- Update a single record in an existing table 16

- Autocomplete text entry for filter values that correspond to facets 16

- Resolve the difference between `wrap_view()` and `BaseView` 16

- Bug: Sort by column with NULL in next_page URL 15

- Mechanism for customizing the SQL used to select specific columns in the table view 15

- The ".upsert()" method is misnamed 15

- Prototoype for Datasette on PostgreSQL 15

- --dirs option for scanning directories for SQLite databases 15

- Document (and reconsider design of) Database.execute() and Database.execute_against_connection_in_thread() 15

- latest.datasette.io is no longer updating 15

- "sqlite-utils convert" command to replace the separate "sqlite-transform" tool 15

- --lines and --text and --convert and --import 15

- Documentation should clarify /stable/ vs /latest/ 15

- Tests reliably failing on Python 3.7 15

- Transformation type `--type DATETIME` 15

- Ability to customize presentation of specific columns in HTML view 14

- Allow plugins to define additional URL routes and views 14

- Mechanism for turning nested JSON into foreign keys / many-to-many 14

- "Invalid SQL" page should let you edit the SQL 14

- .execute_write() and .execute_write_fn() methods on Database 14

- Upload all my photos to a secure S3 bucket 14

- Canned query permissions mechanism 14

- Incorrect URLs when served behind a proxy with base_url set 14

- "datasette -p 0 --root" gives the wrong URL 14

- Plugin hook for loading templates 14

- Support STRICT tables 14

- Advanced class-based `conversions=` mechanism 14

- "permissions" propery in metadata for configuring arbitrary permissions 14

- Design plugin hook for extras 14

- `handle_exception` plugin hook for custom error handling 14

- Refactor out the keyset pagination code 14

- Potential feature: special support for `?a=1&a=2` on the query page 14

- Dockerfile should build more recent SQLite with FTS5 and spatialite support 13

- Fix all the places that currently use .inspect() data 13

- Plugin hook: filters_from_request 13

- Get Datasette tests passing on Windows in GitHub Actions 13

- If you apply ?_facet_array=tags then &_facet=tags does nothing 13

- Mechanism for adding arbitrary pages like /about 13

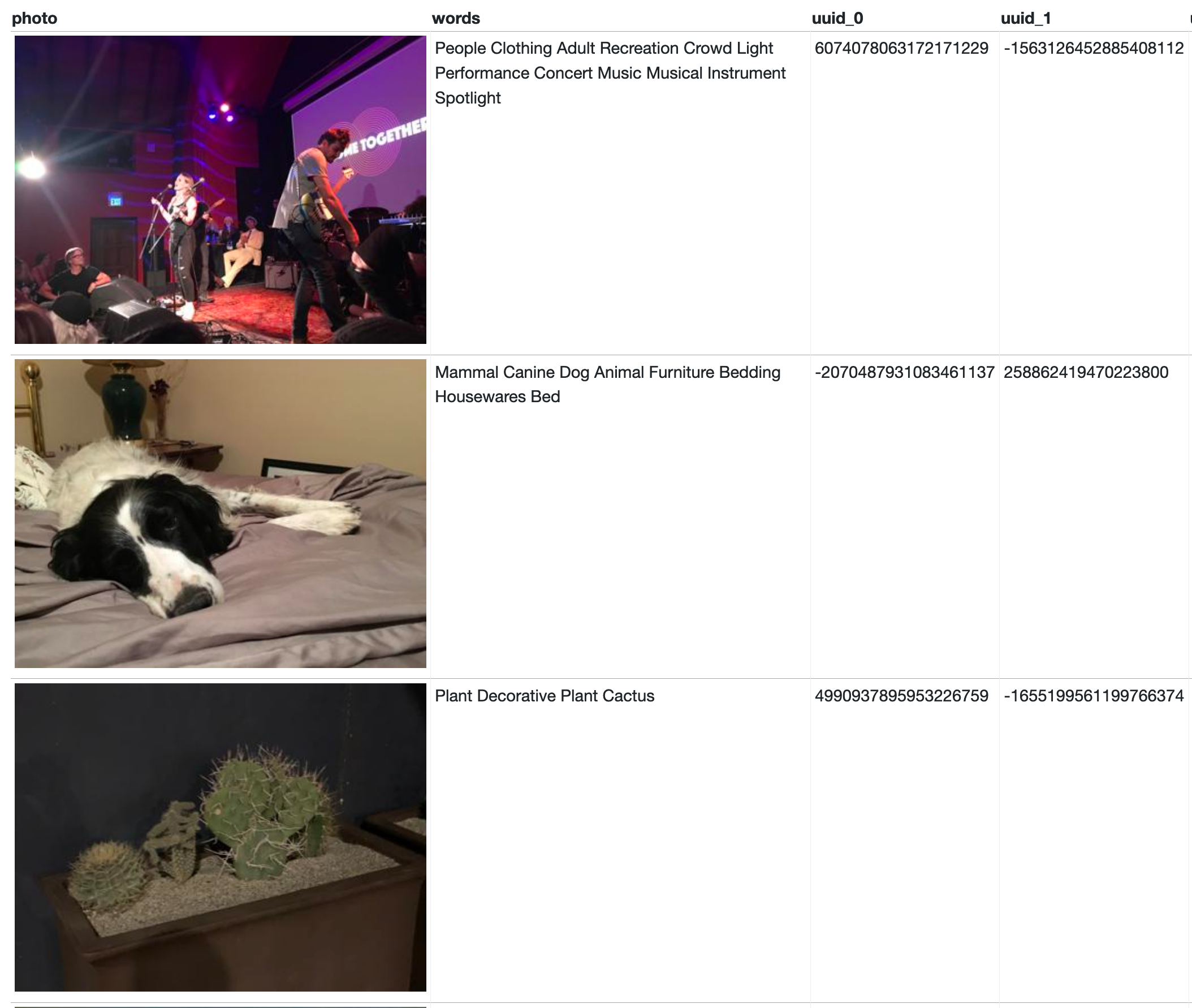

- Import machine-learning detected labels (dog, llama etc) from Apple Photos 13

- Mechanism for skipping CSRF checks on API posts 13

- table.transform() method 13

- register_output_renderer() should support streaming data 13

- Policy on documenting "public" datasette.utils functions 13

- Async support 13

- Serve using UNIX domain socket 13

- `register_commands()` plugin hook to register extra CLI commands 13

- Fix compatibility with Python 3.10 13

- Optional Pandas integration 13

- Refactor TableView to use asyncinject 13

- Writable canned queries fail with useless non-error against immutable databases 13

- google cloudrun updated their limits on maxscale based on memory and cpu count 13

- Mechanism for ensuring a table has all the columns 13

- docker image is duplicating db files somehow 13

- Write API in Datasette core 13

- Errors when using table filters behind a proxy 13

- Call for birthday presents: if you're using Datasette, let us know how you're using it here 13

- Make sure CORS works for write APIs 13

- Make detailed notes on how table, query and row views work right now 13

- .transform() instead of modifying sqlite_master for add_foreign_keys 13

- Add “updated” to metadata 12

- Metadata should be a nested arbitrary KV store 12

- Mechanism for ranking results from SQLite full-text search 12

- Sanely handle Infinity/-Infinity values in JSON using ?_json_infinity=1 12

- Package datasette for installation using homebrew 12

- Datasette Library 12

- _facet_array should work against views 12

- Full text search of all tables at once? 12

- Port Datasette from Sanic to ASGI + Uvicorn 12

- Populate "endpoint" key in ASGI scope 12

- --cp option for datasette publish and datasette package for shipping additional files and directories 12

- base_url doesn't entirely work for running Datasette inside Binder 12

- Having view-table permission but NOT view-database should still grant access to /db/table 12

- Efficiently calculate list of databases/tables a user can view 12

- Ability for plugins to collaborate when adding extra HTML to blocks in default templates 12

- Support creating descending order indexes 12

- Rethink approach to [ and ] in column names (currently throws error) 12

- Research: CTEs and union all to calculate facets AND query at the same time 12

- Traces should include SQL executed by subtasks created with `asyncio.gather` 12

- Ensure "pip install datasette" still works with Python 3.6 12

- Tilde encoding: use ~ instead of - for dash-encoding 12

- Document how to use a `--convert` function that runs initialization code first 12

- Code examples in the documentation should be formatted with Black 12

- Implement ?_extra and new API design for TableView 12

- Misleading progress bar against utf-16-le CSV input 12

- sqlite-utils query --functions mechanism for registering extra functions 12

- SQL query field can't begin by a comment 12

- API for bulk inserting records into a table 12

- `/db/-/create` API for creating tables 12

- WIP new JSON for queries 12

- Implement command-line tool interface 11

- Option to expose expanded foreign keys in JSON/CSV 11

- datasette publish lambda plugin 11

- Mechanism for checking if a SQLite database file is safe to open 11

- Expand plugins documentation to multiple pages 11

- Mechanism for plugins to add action menu items for various things 11

- --since feature can be confused by retweets 11

- bpylist.archiver.CircularReference: archive has a cycle with uid(13) 11

- Datasette secret mechanism - initially for signed cookies 11

- Writable canned queries live demo on Glitch 11

- base_url doesn't seem to work when adding criteria and clicking "apply" 11

- POST to /db/canned-query that returns JSON should be supported (for API clients) 11

- datasette.urls.table() / .instance() / .database() methods for constructing URLs, also exposed to templates 11

- Writable canned queries with magic parameters fail if POST body is empty 11

- Database class mechanism for cross-connection in-memory databases 11

- Race condition errors in new refresh_schemas() mechanism 11

- "Query parameters" form shows wrong input fields if query contains "03:31" style times 11

- render_cell() hook should support returning an awaitable 11

- sqlite-utils index-foreign-keys fails due to pre-existing index 11

- `sqlite-utils insert --convert` option 11

- Research how much of a difference analyze / sqlite_stat1 makes 11

- Table+query JSON and CSV links broken when using `base_url` setting 11

- Options for how `r.parsedate()` should handle invalid dates 11

- Research: how much overhead does the n=1 time limit have? 11

- Expose convert recipes to `sqlite-utils --functions` 11

- `prepare_jinja2_environment()` hook should take `datasette` argument 11

- Ensure insert API has good tests for rowid and compound primark key tables 11

- datasette package --spatialite throws error during build 11

- datasette --root running in Docker doesn't reliably show the magic URL 11

- New JSON design for query views 11

- Set up some example datasets on a Cloudflare-backed domain 10

- Filter UI on table page 10

- Support for units 10

- Build Dockerfile with recent Sqlite + Spatialite 10

- Table view should support filtering via many-to-many relationships 10

- Default to opening files in mutable mode, special option for immutable files 10

- New design for facet abstraction, including querystring and metadata.json 10

- Improvements to table label detection 10

- Syntactic sugar for creating m2m records 10

- Option to display binary data 10

- extracts= should support multiple-column extracts 10

- Documented internals API for use in plugins 10

- Mechanism for writing to database via a queue 10

- See if I can get Datasette working on Zeit Now v2 10

- Ability to serve thumbnailed Apple Photo from its place on disk 10

- Release Datasette 0.44 10

- Rename master branch to main 10

- Plugin hook for instance/database/table metadata 10

- Refactor default views to use register_routes 10

- CLI utility for inserting binary files into SQLite 10

- FTS table with 7 rows has _fts_docsize table with 9,141 rows 10

- Switch to .blob render extension for BLOB downloads 10

- Navigation menu plus plugin hook 10

- Adopt Prettier for JavaScript code formatting 10

- --no-headers option for CSV and TSV 10

- Add support for Jinja2 version 3.0 10

- Test Datasette Docker images built for different architectures 10

- Research: syntactic sugar for using --get with SQL queries, maybe "datasette query" 10

- `default_allow_sql` setting (a re-imagining of the old `allow_sql` setting) 10

- Add reference page to documentation using Sphinx autodoc 10

- [Enhancement] Please allow 'insert-files' to insert content as text. 10

- Docker configuration for exercising Datasette behind Apache mod_proxy 10

- Python library methods for calling ANALYZE 10

- Scripted exports 10

- Remove Hashed URL mode 10

- CLI eats my cursor 10

- If user can see table but NOT database/instance nav links should not display 10

- test_recreate failing on Windows Python 3.11 10

- `.json` errors should be returned as JSON 10

- Failing test: httpx.InvalidURL: URL too long 10

- Use sqlean if available in environment 10

- Config file with support for defining canned queries 9

- Datasette serve should accept paths/URLs to CSVs and other file formats 9

- Figure out some interesting example SQL queries 9

- bump uvicorn to 0.9.0 to be Python-3.8 friendly 9

- Handle really wide tables better 9

- Refactor TableView.data() method 9

- Set up a live demo Datasette instance 9

- Move hashed URL mode out to a plugin 9

- ?_searchmode=raw option for running FTS searches without escaping characters 9

- Option to automatically configure based on directory layout 9

- Replace "datasette publish --extra-options" with "--setting" 9

- New WIP writable canned queries 9

- Example permissions plugin 9

- Research feasibility of 100% test coverage 9

- canned_queries() plugin hook 9

- Consider dropping explicit CSRF protection entirely? 9

- Add insert --truncate option 9

- .delete_where() does not auto-commit (unlike .insert() or .upsert()) 9

- Improve performance of extract operations 9

- Figure out how to run an environment that exercises the base_url proxy setting 9

- sqlite-utils search command 9

- GENERATED column support 9

- Datasette on Amazon Linux on ARM returns 404 for static assets 9

- "Stream all rows" is not at all obvious 9

- Better internal database_name for _internal database 9

- Improve the display of facets information 9

- Mechanism for minifying JavaScript that ships with Datasette 9

- Mechanism for executing JavaScript unit tests 9

- Use _counts to speed up counts 9

- Use force_https_urls on when deploying with Cloud Run 9

- Ability to increase size of the SQL editor window 9

- Custom pages don't work with base_url setting 9

- CSV ?_stream=on redundantly calculates facets for every page 9

- "invalid reference format" publishing Docker image 9

- Show count of facet values if ?_facet_size=max 9

- Manage /robots.txt in Datasette core, block robots by default 9

- Exceeding Cloud Run memory limits when deploying a 4.8G database 9

- Test against pysqlite3 running SQLite 3.37 9

- Allow to set `facets_array` in metadata (like current `facets`) 9

- Add SpatiaLite helpers to CLI 9

- Get Datasette compatible with Pyodide 9

- Add --ignore option to more commands 9

- Ability to set a custom favicon 9

- i18n support 9

- Add new entrypoint option to `--load-extension` 9

- Ability to load JSON records held in a file with a single top level key that is a list of objects 9

- Tool for simulating permission checks against actors 9

- Serve schema JSON to the SQL editor to enable autocomplete 9

- Release Datasette 1.0a0 9

- Refactor test suite to use mostly `async def` tests 9

- 500 "attempt to write a readonly database" error caused by "PRAGMA schema_version" 9

- `table.upsert_all` fails to write rows when `not_null` is present 9

- Plugin system 9

- Get `add_foreign_keys()` to work without modifying `sqlite_master` 9

- [feature request]`datasette install plugins.json` options 9

- Bump sphinx, furo, blacken-docs dependencies 9

- `datasette publish` needs support for the new config/metadata split 9

- Make URLs immutable 8

- datasette publish heroku 8

- Ability to bundle and serve additional static files 8

- Add GraphQL endpoint 8

- prepare_context() plugin hook 8

- Wildcard support in query parameters 8

- URL hashing now optional: turn on with --config hash_urls:1 (#418) 8

- "datasette publish cloudrun" command to publish to Google Cloud Run 8

- Add register_output_renderer hook 8

- sqlite-utils create-table command 8

- Enforce import sort order with isort 8

- Add a universal navigation bar which can be modified by plugins 8

- Command to fetch stargazers for one or more repos 8

- allow leading comments in SQL input field 8

- Helper methods for working with SpatiaLite 8

- datasette publish cloudrun --memory option 8

- Commits in GitHub API can have null author 8

- extra_template_vars() sending wrong view_name for index 8

- Visually distinguish integer and text columns 8

- sqlite3.OperationalError: too many SQL variables in insert_all when using rows with varying numbers of columns 8

- Allow-list pragma_table_info(tablename) and similar 8

- Rename project to dogsheep-photos 8

- Consolidate request.raw_args and request.args 8

- Group permission checks by request on /-/permissions debug page 8

- Upgrade CodeMirror 8

- Wide tables should scroll horizontally within the page 8

- OPTIONS requests return a 500 error 8

- Bring date parsing into Datasette core 8

- Establish pattern for release branches to support bug fixes 8

- GitHub Actions workflow to build and sign macOS binary executables 8

- Make original path available to render hooks 8

- --sniff option for sniffing delimiters 8

- --crossdb option for joining across databases 8

- sqlite-utils memory command for directly querying CSV/JSON data 8

- absolute_url() behind a proxy assembles incorrect http://127.0.0.1:8001/ URLs 8

- Tests failing with FileNotFoundError in runner.isolated_filesystem 8

- Rename Datasette.__init__(config=) parameter to settings= 8

- Allow passing a file of code to "sqlite-utils convert" 8

- Documented JavaScript variables on different templates made available for plugins 8

- Support for generated columns 8

- Get rid of the no-longer necessary ?_format=json hack for tables called x.json 8

- query result page is using 400mb of browser memory 40x size of html page and 400x size of csv data 8

- Refactor and simplify Datasette routing and views 8

- Filters fail to work correctly against calculated numeric columns returned by SQL views because type affinity rules do not apply 8

- "Error: near "(": syntax error" when using sqlite-utils indexes CLI 8

- Table/database that is private due to inherited permissions does not show padlock 8

- /db/table/-/upsert API 8

- Some plugins show "home" breadcrumbs twice in the top left 8

- /db/table/-/upsert 8

- array facet: don't materialize unnecessary columns 8

- Datasette should serve Access-Control-Max-Age 8

- Proposal: Combine settings, metadata, static, etc. into a single `datasette.yaml` File 8

- Cascade for restricted token view-table/view-database/view-instance operations 8

- Deploy failing with "plugins/alternative_route.py: Not a directory" 8

- ?_group_count=country - return counts by specific column(s) 7

- Ship a Docker image of the whole thing 7

- add "format sql" button to query page, uses sql-formatter 7

- Windows installation error 7

- Keyset pagination doesn't work correctly for compound primary keys 7

- Validate metadata.json on startup 7

- inspect should record column types 7

- Travis should push tagged images to Docker Hub for each release 7

- Improve and document foreign_keys=... argument to insert/create/etc 7

- ?_where=sql-fragment parameter for table views 7

- Define mechanism for plugins to return structured data 7

- Rename metadata.json to config.json 7

- Utility mechanism for plugins to render templates 7

- Syntax for ?_through= that works as a form field 7

- Problem with square bracket in CSV column name 7

- Update SQLite bundled with Docker container 7

- index.html is not reliably loaded from a plugin 7

- .columns_dict doesn't work for all possible column types 7

- Only set .last_rowid and .last_pk for single update/inserts, not for .insert_all()/.upsert_all() with multiple records 7

- Expose scores from ZCOMPUTEDASSETATTRIBUTES 7

- publish heroku does not work on Windows 10 7

- Demo is failing to deploy 7

- Support reverse pagination (previous page, has-previous-items) 7

- Docker container is no longer being pushed (it's stuck on 0.45) 7

- insert_all(..., alter=True) should work for new columns introduced after the first 100 records 7

- Push to Docker Hub failed - but it shouldn't run for alpha releases anyway 7

- Simplify imports of common classes 7

- SQLITE_MAX_VARS maybe hard-coded too low 7

- Commands for making authenticated API calls 7

- Support the dbstat table 7

- Much, much faster extract() implementation 7

- Documented HTML hooks for JavaScript plugin authors 7

- Redesign application homepage 7

- "Edit SQL" button on canned queries 7

- Fix last remaining links to "/" that do not respect base_url 7

- .extract() shouldn't extract null values 7

- export.xml file name varies with different language settings 7

- Documentation and unit tests for urls.row() urls.row_blob() methods 7

- "View all" option for facets, to provide a (paginated) list of ALL of the facet counts plus a link to view them 7

- changes to allow for compound foreign keys 7

- Update for Big Sur 7

- table.pks_and_rows_where() method returning primary keys along with the rows 7

- Invalid SQL: "no such table: pragma_database_list" on database page 7

- Latest Datasette tags missing from Docker Hub 7

- "More" link for facets that shows _facet_size=max results 7

- ?_nocol= does not interact well with default facets 7

- sqlite-utils memory should handle TSV and JSON in addition to CSV 7

- Introspection property for telling if a table is a rowid table 7

- Add Gmail takeout mbox import (v2) 7

- Query page .csv and .json links are not correctly URL-encoded on Vercel under unknown specific conditions 7

- Win32 "used by another process" error with datasette publish 7

- New pattern for async view classes 7

- Extra options to `lookup()` which get passed to `insert()` 7

- Columns starting with an underscore behave poorly in filters 7

- Test failure in test_rebuild_fts 7

- Support for CHECK constraints 7

- `.execute_write(... block=True)` should be the default behaviour 7

- Maybe let plugins define custom serve options? 7

- Link to stable docs from older versions 7

- Add SpatiaLite helpers to CLI 7

- Use dash encoding for table names and row primary keys in URLs 7

- I forgot to include the changelog in the 3.25.1 release 7

- Remove hashed URL mode 7

- Extract out `check_permissions()` from `BaseView 7

- `--nolock` feature for opening locked databases 7

- truncate_cells_html does not work for links? 7

- progressbar for inserts/upserts of all fileformats, closes #485 7

- Ability to merge databases and tables 7

- Expose `sql` and `params` arguments to various plugin hooks 7

- Upgrade Datasette Docker to Python 3.11 7

- don't use immutable=1, only mode=ro 7

- Figure out design for JSON errors (consider RFC 7807) 7

- Hacker News Datasette write demo 7

- table.create(..., replace=True) 7

- CLI equivalents to `transform(add_foreign_keys=)` 7

- Detailed upgrade instructions for metadata.yaml -> datasette.yaml 7

- Addressable pages for every row in a table 6

- Default HTML/CSS needs to look reasonable and be responsive 6

- Support Django-style filters in querystring arguments 6

- Detect foreign keys and use them to link HTML pages together 6

- [WIP] Add publish to heroku support 6

- Nasty bug: last column not being correctly displayed 6

- Figure out how to bundle a more up-to-date SQLite 6

- Don't duplicate simple primary keys in the link column 6

- Load plugins from a `--plugins-dir=plugins/` directory 6

- Ability for plugins to define extra JavaScript and CSS 6

- inspect() should detect many-to-many relationships 6

- Deploy demo of Datasette on every commit that passes tests 6

- Plugin hook for loading metadata.json 6

- Faceted browse against a JSON list of tags 6

- CSV export in "Advanced export" pane doesn't respect query 6

- Additional Column Constraints? 6

- Easier way of creating custom row templates 6

- Experiment with type hints 6

- Command for running a search and saving tweets for that search 6

- Ways to improve fuzzy search speed on larger data sets? 6

- Improve UI of "datasette publish cloudrun" to reduce chances of accidentally over-writing a service 6

- Mechanism for indicating foreign key relationships in the table and query page URLs 6

- updating metadata.json without recreating the app 6

- Provide a cookiecutter template for creating new plugins 6

- upsert_all() throws issue when upserting to empty table 6

- "Templates considered" comment broken in >=0.35 6

- Documentation for the "request" object 6

- Support YAML in metadata - metadata.yaml 6

- Command for retrieving dependents for a repo 6

- Question: Access to immutable database-path 6

- Support decimal.Decimal type 6

- allow_by_query setting for configuring permissions with a SQL statement 6

- python tests/fixtures.py command has a bug 6

- Mechanism for specifying allow_sql permission in metadata.json 6

- Way to enable a default=False permission for anonymous users 6

- Ability to set ds_actor cookie such that it expires 6

- startup() plugin hook 6

- "Too many open files" error running tests 6

- datasette.add_message() doesn't work inside plugins 6

- Feature: pull request reviews and comments 6

- Datasette sdist is missing templates (hence broken when installing from Homebrew) 6

- End-user documentation 6

- extra_ plugin hooks should take the same arguments 6

- Private/secret databases: database files that are only visible to plugins 6

- Progress bar for sqlite-utils insert 6

- Rendering glitch with column headings on mobile 6

- Change "--config foo:bar" to "--setting foo bar" 6

- Add Link: pagination HTTP headers 6

- Figure out how to display images from <en-media> tags inline in Datasette 6

- Method for datasette.client() to forward on authentication 6

- Fallback to databases in inspect-data.json when no -i options are passed 6

- Better display of binary data on arbitrary query results page 6

- Table actions menu on view pages, not on query pages 6

- load_template() plugin hook 6

- PrefixedUrlString mechanism broke everything 6

- Support order by relevance against FTS4 6

- Support linking to compound foreign keys 6

- Support for generated columns 6

- sqlite-utils analyze-tables command and table.analyze_column() method 6

- More flexible CORS support in core, to encourage good security practices 6

- Share button for copying current URL 6

- Update Docker Spatialite version to 5.0.1 + add support for Spatialite topology functions 6

- `sqlite-utils indexes` command 6

- Error: Use either --since or --since_id, not both 6

- `db.query()` method (renamed `db.execute_returning_dicts()`) 6

- "searchmode": "raw" in table metadata 6

- `table.search(..., quote=True)` parameter and `sqlite-utils search --quote` option 6

- sqlite-utils insert errors should show SQL and parameters, if possible 6

- Mechanism to cause specific branches to deploy their own demos 6

- clean checkout & clean environment has test failures 6

- ReadTheDocs build failed for 0.59.2 release 6

- Command for creating an empty database 6

- Idea: hover to reveal details of linked row 6

- Writable canned queries fail to load custom templates 6

- filters_from_request plugin hook, now used in TableView 6

- Release Datasette 0.60 6

- introduce new option for datasette package to use a slim base image 6

- Drop support for Python 3.6 6

- Support mutating row in `--convert` without returning it 6

- Reconsider policy on blocking queries containing the string "pragma" 6

- datasette one.db one.db opens database twice, as one and one_2 6

- `deterministic=True` fails on versions of SQLite prior to 3.8.3 6

- Ship Datasette 0.61 6

- Proposal: datasette query 6

- .db downloads should be served with an ETag 6

- db[table].create(..., transform=True) and create-table --transform 6

- Upgrade `--load-extension` to accept entrypoints like Datasette 6

- Ability to set a custom facet_size per table 6

- Exclude virtual tables from datasette inspect 6

- Interactive demo of Datasette 1.0 write APIs 6

- register_permissions() plugin hook 6

- `datasette.create_token(...)` method for creating signed API tokens 6

- `publish cloudrun` reuses image tags, which can lead to very surprising deploy problems 6

- GitHub Action to lint Python code with ruff 6

- Try out Trogon for a tui interface 6

- Make as many examples in the CLI docs as possible copy-and-pastable 6

- Table renaming: db.rename_table() and sqlite-utils rename-table 6

- Plugin hook for database queries that are run 6

- DATASETTE_LOAD_PLUGINS environment variable for loading specific plugins 6

- Consider a request/response wrapping hook slightly higher level than asgi_wrapper() 6

- `table.transform()` should preserve `rowid` values 6

- Plugin hook: `actors_from_ids()` 6

- "Test DATASETTE_LOAD_PLUGINS" test shows errors but did not fail the CI run 6

- Experiment with patterns for concurrent long running queries 5

- Create neat example database 5

- Redesign JSON output, ditch jsono, offer variants controlled by parameter instead 5

- Option to open readonly but not immutable 5

- datasette publish can fail if /tmp is on a different device 5

- Refactor views 5

- Add links to example Datasette instances to appropiate places in docs 5

- Ability to enable/disable specific features via --config 5

- Custom URL routing with independent tests 5

- datasette inspect takes a very long time on large dbs 5

- Get Datasette working with Zeit Now v2's 100MB image size limit 5

- Hashed URLs should be optional 5

- Plugin for allowing CORS from specified hosts 5

- Design changes to homepage to support mutable files 5

- Option to facet by date using month or year 5

- extra_template_vars plugin hook 5

- Ability to list views, and to access db["view_name"].rows / rows_where / etc 5

- Rethink progress bars for various commands 5

- [enhancement] Method to delete a row in python 5

- Testing utilities should be available to plugins 5

- If you have databases called foo.db and foo-bar.db you cannot visit /foo-bar 5

- Don't auto-format SQL on page load 5

- stargazers command, refs #4 5

- Add this view for seeing new releases 5

- Escape_fts5_query-hookimplementation does not work with queries to standard tables 5

- on_create mechanism for after table creation 5

- Datasette.render_template() method 5

- Rethink how sanity checks work 5

- Release automation: automate the bit that posts the GitHub release 5

- table.disable_fts() method and "sqlite-utils disable-fts ..." command 5

- twitter-to-sqlite user-timeline [screen_names] --sql / --attach 5

- Option in metadata.json to set default sort order for a table 5

- Feature: record history of follower counts 5

- Custom CSS class on body for styling canned queries 5

- Repos have a big blob of JSON in the organization column 5

- Create a public demo 5

- Unit test that checks that all plugin hooks have corresponding unit tests 5

- Ability to sign in to Datasette as a root account 5

- CSRF protection 5

- Consider using enable_callback_tracebacks(True) 5

- Fix the demo - it breaks because of the tags table change 5

- Mechanism for passing additional options to `datasette my.db` that affect plugins 5

- sqlite-utils insert: options for column types 5

- Features for enabling and disabling WAL mode 5

- Add homebrew installation to documentation 5

- 'datasette --get' option, refs #926 5

- Path parameters for custom pages 5

- Try out CodeMirror SQL hints 5

- Handle case where subsequent records (after first batch) include extra columns 5

- Better handling of encodings other than utf-8 for "sqlite-utils insert" 5

- For 1.0 update trove classifier in setup.py 5

- How should datasette.client interact with base_url 5

- Add documentation on serving Datasette behind a proxy using base_url 5

- datasette.urls.static_plugins(...) method 5

- Refactor .csv to be an output renderer - and teach register_output_renderer to stream all rows 5

- Default menu links should check a real permission 5

- Rethink how table.search() method works 5

- Foreign key links break for compound foreign keys 5

- Rename datasette.config() method to datasette.setting() 5

- Show pysqlite3 version on /-/versions 5

- UNIQUE constraint failed: workouts.id 5

- Feature Request: Gmail 5

- Archive import appears to be broken on recent exports 5

- Release notes for Datasette 0.54 5

- 500 error caused by faceting if a column called `n` exists 5

- Allow canned query params to specify default values 5

- Research using CTEs for faster facet counts 5

- Better default display of arrays of items 5

- Upgrade to Python 3.9.4 5

- Make row available to `render_cell` plugin hook 5

- Add Docker multi-arch support with Buildx 5

- ?_facet_size=X to increase number of facets results on the page 5

- `table.xindexes` using `PRAGMA index_xinfo(table)` 5

- DRAFT: A new plugin hook for dynamic metadata 5

- feature: support "events" 5

- Serve all db files in a folder 5

- .transform(types=) turns rowid into a concrete column 5

- Stop using generated columns in fixtures.db 5

- `datasette publish cloudrun --cpu X` option 5

- Ability to search for text across all columns in a table 5

- Ability to insert file contents as text, in addition to blob 5

- Upgrade to httpx 0.20.0 (request() got an unexpected keyword argument 'allow_redirects') 5

- Allow routes to have extra options 5

- Way to test SQLite 3.37 (and potentially other versions) in CI 5

- Redesign CSV export to improve usability 5

- Add KNN and data_licenses to hidden tables list 5

- Move canned queries closer to the SQL input area 5

- Improvements to help make Datasette a better tool for learning SQL 5

- JSON link on row page is 404 if base_url setting is used 5

- Creating tables with custom datatypes 5

- Test failures with SQLite 3.37.0+ due to column affinity case 5

- Implement redirects from old % encoding to new dash encoding 5

- Adopt a code of conduct 5

- Display autodoc type information more legibly 5

- `sqlite3.NotSupportedError`: deterministic=True requires SQLite 3.8.3 or higher 5

- Datasette feature for publishing snapshots of query results 5

- Research running SQL in table view in parallel using `asyncio.gather()` 5

- Support `rows_where()`, `delete_where()` etc for attached alias databases 5

- CSV `extras_key=` and `ignore_extras=` equivalents for CLI tool 5

- Reading rows from a file => AttributeError: '_io.StringIO' object has no attribute 'readinto' 5

- Upgrade to 3.10.6-slim-bullseye Docker base image 5

- minor a11y: <select> has no visual indicator when tabbed to 5

- 500 error in github-to-sqlite demo 5

- feature request: pivot command 5

- Link from documentation to source code 5

- Move "datasette --get" from Getting Started to CLI Reference 5

- Database() constructor currently defaults is_mutable to False 5

- `sqlite-utils transform` should set empty strings to null when converting text columns to integer/float 5

- NoneType' object has no attribute 'actor' 5

- Create a new table from one or more records, `sqlite-utils` style 5

- Design URLs for the write API 5

- Make it easier to fix URL proxy problems 5

- upsert of new row with check constraints fails 5

- ignore:true/replace:true options for /db/-/create API 5

- More useful error message if enable_load_extension is not available 5

- `Table.convert()` skips falsey values 5

- Expand foreign key references in row view as well 5

- codespell test failure 5

- Plugin hook for adding new output formats 5

- Plan for getting the new JSON format query views working 5

- Build HTML version of /content?sql=... 5

- Add writable canned query demo to latest.datasette.io 5

- Datasette --get --actor option 5

- Bump sphinx, furo, blacken-docs dependencies 5

- Don't show foreign key links to tables the user cannot access 5

- Raise an exception if a "plugins" block exists in metadata.json 5

- Add spatialite arm64 linux path 5

- Protect against malicious SQL that causes damage even though our DB is immutable 4

- Homepage UI for editing metadata file 4

- Switch to ujson 4

- Pick a name 4

- datasette publish hyper 4

- Support for title/source/license metadata 4

- Enforce pagination (or at least limits) for arbitrary custom SQL 4

- Add NHS England Hospitals example to wiki 4

- Consider data-package as a format for metadata 4

- add support for ?field__isnull=1 4

- Plugin that adds an authentication layer of some sort 4

- ?_json=foo&_json=bar query string argument 4

- A primary key column that has foreign key restriction associated won't rendering label column 4

- Support WITH query 4

- 500 from missing table name 4

- Ability to bundle metadata and templates inside the SQLite file 4

- Ability to apply sort on mobile in portrait mode 4

- metadata.json support for plugin configuration options 4

- Explore "distinct values for column" in inspect() 4

- Escaping named parameters in canned queries 4

- Mechanism for automatically picking up changes when on-disk .db file changes 4

- Add version number support with Versioneer 4

- Support table names ending with .json or .csv 4

- Explore if SquashFS can be used to shrink size of packaged Docker containers 4

- Installation instructions, including how to use the docker image 4

- Limit text display in cells containing large amounts of text 4

- Datasette on Zeit Now returns http URLs for facet and next links 4

- Expose SANIC_RESPONSE_TIMEOUT config option in a sensible way 4

- Requesting support for query description 4

- render_cell(value) plugin hook 4

- Ability to display facet counts for many-to-many relationships 4

- Integration with JupyterLab 4

- add_column() should support REFERENCES {other_table}({other_column}) 4

- Figure out what to do about table counts in a mutable world 4

- Refactor facets to a class and new plugin, refs #427 4

- Tracing support for seeing what SQL queries were executed 4

- Paginate + search for databases/tables on the homepage 4

- Replace most of `.inspect()` (and `datasette inspect`) with table counting 4

- Decide what to do about /-/inspect 4

- Allow .insert(..., foreign_keys=()) to auto-detect table and primary key 4

- Facets not correctly persisted in hidden form fields 4

- Every datasette plugin on the ecosystem page should have a screenshot 4

- Support opening multiple databases with the same stem 4

- Decide what goes into Datasette 1.0 4

- Fix static mounts using relative paths and prevent traversal exploits 4

- Get tests running on Windows using Travis CI 4

- Support unicode in url 4

- Too many SQL variables 4

- "Too many SQL variables" on large inserts 4

- More advanced connection pooling 4

- Option to fetch only checkins more recent than the current max checkin 4

- Add triggers while enabling FTS 4

- --sql and --attach options for feeding commands from SQL queries 4

- Use better pagination (and implement progress bar) 4

- Command to import home-timeline 4

- retweets-of-me command 4

- `import` command fails on empty files 4

- Failed to import workout points 4

- Datasette should work with Python 3.8 (and drop compatibility with Python 3.5) 4

- Mechanism for register_output_renderer to suggest extension or not 4

- Assets table with downloads 4

- Exception running first command: IndexError: list index out of range 4

- Allow creation of virtual tables at startup 4

- order_by mechanism 4

- Remove .detect_column_types() from table, make it a documented API 4

- Cashe-header missing in http-response 4

- Add documentation on Database introspection methods to internals.rst 4

- Adding a "recreate" flag to the `Database` constructor 4

- Custom pages mechanism, refs #648 4

- escape_fts() does not correctly escape * wildcards 4

- Fall back to authentication via ENV 4

- Directory configuration mode should support metadata.yaml 4

- Cloud Run fails to serve database files larger than 32MB 4

- [Feature Request] Support Repo Name in Search 🥺 4

- Ability to set custom default _size on a per-table basis 4

- Try out ExifReader 4

- add_foreign_key(...., ignore=True) 4

- register_output_renderer can_render mechanism 4

- Error pages not correctly loading CSS 4

- Publish secrets 4

- Example authentication plugin 4

- /-/metadata and so on should respect view-instance permission 4

- Log out mechanism for clearing ds_actor cookie 4

- Take advantage of .coverage being a SQLite database 4

- Skip counting hidden tables 4

- Use white-space: pre-wrap on ALL table cell contents 4

- github-to-sqlite tags command for fetching tags 4

- Output binary columns in "sqlite-utils query" JSON 4

- Security issue: read-only canned queries leak CSRF token in URL 4

- Test failures caused by failed attempts to mock pip 4

- --load-extension option for sqlite-utils query 4

- github-to-sqlite should handle rate limits better 4

- request an "-o" option on "datasette server" to open the default browser at the running url 4

- Idea: conversions= could take Python functions 4

- sqlite-utils transform sub-command 4

- sqlite-utils transform/insert --detect-types 4

- from_json jinja2 filter 4

- column name links broken in 0.50.1 4

- extra_js_urls and extra_css_urls should respect base_url setting 4

- Some workout columns should be float, not text 4

- Include LICENSE in sdist 4

- Add template block prior to extra URL loaders 4

- .blob output renderer 4

- Table/database action menu cut off if too short 4

- Rebrand and redirect config.rst as settings.rst 4

- --load-extension=spatialite not working with datasetteproject/datasette docker image 4

- Fix footer not sticking to bottom in short pages 4

- "_searchmode=raw" throws an index out of range error when combined with "_search_COLUMN" 4

- sqlite-utils should suggest --csv if JSON parsing fails 4

- sqlite-utils analyze-tables command 4

- Modernize code to Python 3.6+ 4

- Prettier package not actually being cached 4

- reset_counts() method and command 4

- Use structlog for logging 4

- Certain database names results in 404: "Database not found: None" 4

- view_name = "query" for the query page 4

- Tests are very slow. 4

- Possible to deploy as a python app (for Rstudio connect server)? 4

- photo-to-sqlite: command not found 4

- Installing datasette via docker: Path 'fixtures.db' does not exist 4

- Support SSL/TLS directly 4

- Error reading csv files with large column data 4

- --port option should validate port is between 0 and 65535 4

- Escaping FTS search strings 4

- Refresh SpatiaLite documentation 4

- Feature or Documentation Request: Individual table as home page template 4

- Dockerfile: use Ubuntu 20.10 as base 4

- improve table horizontal scroll experience 4

- Document how to send multiple values for "Named parameters" 4

- Avoid error sorting by relationships if related tables are not allowed 4

- Can't use apt-get in Dockerfile when using datasetteproj/datasette as base 4

- Figure out how to publish alpha/beta releases to Docker Hub 4

- Intermittent CI failure: restore_working_directory FileNotFoundError 4

- row.update() or row.pk 4

- db.schema property and sqlite-utils schema command 4

- Cannot set type JSON 4

- Automatic type detection for CSV data 4

- Big performance boost on faceting: skip the inner order by 4

- Command for fetching Hacker News threads from the search API 4

- feature request: document minimum permissions for service account for cloudrun 4

- Ability to default to hiding the SQL for a canned query 4

- Document exceptions that can be raised by db.execute() and friends 4

- Add reference documentation generated from docstrings 4

- xml.etree.ElementTree.ParseError: not well-formed (invalid token) 4

- sqlite-utils memory can't deal with multiple files with the same name 4

- ?_sort=rowid with _next= returns error 4

- `table.lookup()` option to populate additional columns when creating a record 4

- Improve Apache proxy documentation, link to demo 4

- Provide function to generate hash_id from specified columns 4

- Use datasette-table Web Component to guide the design of the JSON API for 1.0 4

- Add `Link: rel="alternate"` header pointing to JSON for a table/query 4

- Maybe return JSON from HTML pages if `Accept: application/json` is sent 4

- `sqlite-utils insert --extract colname` 4

- Allow users to pass a full convert() function definition 4

- Update janus requirement from <0.8,>=0.6.2 to >=0.6.2,<1.1 4

- Confirm if documented nginx proxy config works for row pages with escaped characters in their primary key 4

- Better error message if `--convert` code fails to return a dict 4

- `--fmt` should imply `-t` 4

- Add documentation page with the output of `--help` 4

- Release notes for 0.60 4

- `sqlite-utils bulk --batch-size` option 4

- Document how to add a primary key to a rowid table using `sqlite-utils transform --pk` 4

- Update Dockerfile generated by `datasette publish` 4

- Sensible `cache-control` headers for static assets, including those served by plugins 4

- [feature] immutable mode for a directory, not just individual sqlite file 4

- Automated test for Pyodide compatibility 4

- ?_trace=1 fails with datasette-geojson for some reason 4

- Combining `rows_where()` and `search()` to limit which rows are searched 4

- Utilities for duplicating tables and creating a table with the results of a query 4

- 500 error if sorted by a column not in the ?_col= list 4

- Cross-link CLI to Python docs 4

- Adjust height of textarea for no JS case 4

- Research an upgrade to CodeMirror 6 4

- search_sql add include_rank option 4

- Parts of YAML file do not work when db name is "off" 4

- fails before generating views. ERR: table sqlite_master may not be modified 4

- Featured table(s) on the homepage 4

- Ability to insert multi-line files 4

- Setting to turn off table row counts entirely 4

- devrel/python api: Pylance type hinting 4

- Turn --flatten into a documented utility function 4

- Tests failing due to updated tabulate library 4

- `max_signed_tokens_ttl` setting for a maximum duration on API tokens 4

- Delete a single record from an existing table 4

- API to drop a table 4

- Datasette with many and large databases > Memory use 4

- 1.0a0 release notes 4

- Extract logic for resolving a URL to a database / table / row 4

- Clicking within the CodeMirror area below the SQL (i.e. when there's only a single line) doesn't cause the editor to get focused 4

- `publish heroku` failing due to old Python version 4

- Docs for replace:true and ignore:true options for insert API 4

- Incorrect link from the API explorer to the JSON API documentation 4

- Feature request: output number of ignored/replaced rows for insert command 4

- render_cell plugin hook's row object is not a sqlite.Row 4

- installpython3.com is now a spam website 4

- Reconsider pattern where plugins could break existing template context 4

- Datasette is not compatible with SQLite's strict quoting compilation option 4

- Repeated calls to `Table.convert()` fail 4

- How to redirect from "/" to a specific db/table 4

- Add paths for homebrew on Apple silicon 4

- Custom SQL queries should use new JSON ?_extra= format 4

- Datasette cannot be installed with Rye 4

- `--raw-lines` option, like `--raw` for multiple lines 4

- sphinx.builders.linkcheck build error 4

- feat: Implement a prepare_connection plugin hook 4

- Implement new /content.json?sql=... 4

- Query view shouldn't return `columns` 4

- form label { width: 15% } is a bad default 4

- datasette -s/--setting option for setting nested configuration options 4

- Add new `--internal internal.db` option, deprecate legacy `_internal` database 4

- `datasette.yaml` plugin support 4

- Move `permissions`, `allow` blocks, canned queries and more out of `metadata.yaml` and into `datasette.yaml` 4

- Add more STRICT table support 4

- Implement sensible query pagination 3

- Command line tool for uploading one or more DBs to Now 3

- Ability to plot a simple graph 3

- date, year, month and day querystring lookups 3

- Implement a better database index page 3

- Add more detailed API documentation to the README 3

- UI for editing named parameters 3

- Link to JSON for the list of tables 3

- UI support for running FTS searches 3

- If view is filtered, search should apply within those filtered rows 3

- ?_search=x should work if used directly against a FTS virtual table 3

- Show extra instructions with the interrupted 3

- apsw as alternative sqlite3 binding (for full text search) 3

- _group_count= feature improvements 3

- Datasette CSS should include content hash in the URL 3

- datasette skeleton command for kick-starting database and table metadata 3

- Custom template for named canned query 3

- proposal new option to disable user agents cache 3

- Cleaner mechanism for handling custom errors 3

- Run pks_for_table in inspect, executing once at build time rather than constantly 3

- Hide Spatialite system tables 3

- Support filtering with units and more 3

- Allow plugins to add new cli sub commands 3

- datasette publish --install=name-of-plugin 3

- label_column option in metadata.json 3

- External metadata.json 3

- Add new metadata key persistent_urls which removes the hash from all database urls 3

- Facets should not execute for ?shape=array|object 3

- Documentation for URL hashing, redirects and cache policy 3

- "config" section in metadata.json (root, database and table level) 3

- Build smallest possible Docker image with Datasette plus recent SQLite (with json1) plus Spatialite 4.4.0 3

- Support multiple filters of the same type 3

- ?_ttl= parameter to control caching 3

- Avoid plugins accidentally loading dependencies twice 3

- Per-database and per-table /-/ URL namespace 3

- Ability to configure SQLite cache_size 3

- Ensure --help examples in docs are always up to date 3

- Use pysqlite3 if available 3

- datasette publish digitalocean plugin 3

- Update official datasetteproject/datasette Docker container to SQLite 3.26.0 3

- Ensure downloading a 100+MB SQLite database file works 3

- How to pass configuration to plugins? 3

- Use SQLITE_DBCONFIG_DEFENSIVE plus other recommendations from SQLite security docs 3

- Experiment: run Jinja in async mode 3

- .insert_all() should accept a generator and process it efficiently 3

- Problems handling column names containing spaces or - 3

- Zeit API v1 does not work for new users - need to migrate to v2 3

- How to pass named parameter into spatialite MakePoint() function 3

- Utilities for adding indexes 3

- Add query parameter to hide SQL textarea 3

- Datasette doesn't reload when database file changes 3

- Installing installs the tests package 3

- Fix the "datasette now publish ... --alias=x" option 3

- Make it so Docker build doesn't delay PyPI release 3

- Option to ignore inserts if primary key exists already 3

- Accessibility for non-techie newsies? 3

- Test against Python 3.8-dev using Travis 3

- Exporting sqlite database(s)? 3

- "about" parameter in metadata does not appear when alone 3

- asgi_wrapper plugin hook 3

- Unable to use rank when fts-table generated with csvs-to-sqlite 3

- Mechanism for secrets in plugin configuration 3

- datasette publish option for setting plugin configuration secrets 3

- Potential improvements to facet-by-date 3

- CodeMirror fails to load on database page 3

- .add_column() doesn't match indentation of initial creation 3

- extracts= option for insert/update/etc 3

- Script uses a lot of RAM 3

- Datasette Edit 3

- "twitter-to-sqlite user-timeline" command for pulling tweets by a specific user 3

- Exposing Datasette via Jupyter-server-proxy 3

- Added support for multi arch builds 3

- Extract "source" into a separate lookup table 3

- Track and use the 'since' value 3

- Queries per DB table in metadata.json 3

- Handle spaces in DB names 3

- since_id support for home-timeline 3

- make uvicorn optional dependancy (because not ok on windows python yet) 3

- --since support for various commands for refresh-by-cron 3

- upgrade to uvicorn-0.9 to be Python-3.8 friendly 3

- Offer to format readonly SQL 3

- _where= parameter is not persisted in hidden form fields 3

- /-/plugins shows incorrect name for plugins 3

- Static assets no longer loading for installed plugins 3

- Add this repos_starred view 3

- Publish to Heroku is broken: "WARNING: You must pass the application as an import string to enable 'reload' or 'workers" 3

- rowid is not included in dropdown filter menus 3

- Custom queries with 0 results should say "0 results" 3

- Don't suggest column for faceting if all values are 1 3

- Command for importing events 3

- Make database level information from metadata.json available in the index.html template 3

- Feature request: enable extensions loading 3

- Add a glossary to the documentation 3

- fts5 syntax error when using punctuation 3

- Template debug mode that outputs template context 3

- Copy and paste doesn't work reliably on iPhone for SQL editor 3

- Tests are failing due to missing FTS5 3

- Test failures on openSUSE 15.1: AssertionError: Explicit other_table and other_column 3

- --port option to expose a port other than 8001 in "datasette package" 3

- Tutorial command no longer works 3

- Use inspect-file, if possible, for total row count 3

- prepare_connection() plugin hook should accept optional datasette argument 3

- Ability to customize columns used by extracts= feature 3

- Variables from extra_template_vars() not exposed in _context=1 3

- Search box CSS doesn't look great on OS X Safari 3

- Handle "User not found" error 3

- WIP implementation of writable canned queries 3

- --plugin-secret over-rides existing metadata.json plugin config 3

- Update aiofiles requirement from ~=0.4.0 to >=0.4,<0.6 3

- Pull repository contributors 3

- Mechanism for forcing column-type, over-riding auto-detection 3

- Issue and milestone should have foreign key to repo 3

- Issue comments don't appear to populate issues foreign key 3

- strange behavior using accented characters 3

- Configuration directory mode 3

- Annotate photos using the Google Cloud Vision API 3

- Create index on issue_comments(user) and other foreign keys 3

- Mechanism for creating views if they don't yet exist 3

- Add notlike table filter 3

- Question: Any fixed date for the release with the uft8-encoding fix? 3

- fts search on a column doesn't work anymore due to escape_fts 3

- Only install osxphotos if running on macOS 3

- Way of seeing full schema for a database 3

- Add PyPI project urls to setup.py 3

- …

user 391

- simonw 8,787

- codecov[bot] 240

- fgregg 82

- eyeseast 74

- russss 39

- dependabot[bot] 36

- psychemedia 35

- abdusco 26

- asg017 25

- bgrins 24

- cldellow 24

- mroswell 22

- chapmanjacobd 22

- aborruso 19

- chrismp 18

- brandonrobertz 15

- hydrosquall 15

- jacobian 14

- carlmjohnson 14

- RhetTbull 14

- tballison 13

- wragge 12

- tsibley 11

- rixx 11

- stonebig 11

- frafra 10

- maxhawkins 10

- terrycojones 10

- dracos 10

- rgieseke 10

- rayvoelker 10

- 20after4 9

- clausjuhl 9

- bobwhitelock 9

- UtahDave 8

- tomchristie 8

- bsilverm 8

- 4l1fe 8

- zaneselvans 7

- mhalle 7

- zeluspudding 7

- cobiadigital 7

- amjith 6

- jefftriplett 6

- simonwiles 6

- mcarpenter 6

- khimaros 6

- jaywgraves 6

- CharlesNepote 6

- ocdtrekkie 6

- davidbgk 5

- khusmann 5

- rdmurphy 5

- MarkusH 5

- lovasoa 5

- Mjboothaus 5

- dazzag24 5

- ar-jan 5

- xavdid 5

- davidhaley 5

- SteadBytes 5

- dependabot-preview[bot] 5

- jayvdb 4

- fs111 4

- bollwyvl 4

- ctb 4

- yozlet 4

- Btibert3 4

- dholth 4

- r4vi 4

- jsfenfen 4

- glasnt 4

- jungle-boogie 4

- ColinMaudry 4

- kbaikov 4

- JBPressac 4

- nitinpaultifr 4

- Kabouik 4

- dvizard 4

- henry501 4

- pjamargh 4

- benpickles 3

- frankieroberto 3

- obra 3

- janimo 3

- atomotic 3

- ghing 3

- briandorsey 3

- pkoppstein 3

- yschimke 3

- philroche 3

- macropin 3

- camallen 3

- coldclimate 3

- wsxiaoys 3

- johnfelipe 3

- mdrovdahl 3

- xrotwang 3

- robroc 3

- dmick 3

- betatim 3

- dufferzafar 3

- Florents-Tselai 3

- aki-k 3

- ashishdotme 3

- yejiyang 3

- henrikek 3

- swyxio 3

- Segerberg 3

- blairdrummond 3

- jsancho-gpl 3

- kevindkeogh 3

- gk7279 3

- daniel-butler 3

- learning4life 3

- mattmalcher 3

- FabianHertwig 3

- polyrand 3

- justmars 3

- garethr 2

- danp 2

- nelsonjchen 2

- dsisnero 2

- hubgit 2

- jackowayed 2

- ftrain 2

- chrishas35 2

- tannewt 2

- HaveF 2

- ingenieroariel 2

- pkulchenko 2

- coleifer 2

- gavinband 2

- aviflax 2

- iloveitaly 2

- tholo 2

- mungewell 2

- frankier 2

- lchski 2

- tmaier 2

- hcarter333 2

- gfrmin 2

- amitkoth 2

- mcint 2

- frosencrantz 2

- eads 2

- virtadpt 2

- leafgarland 2

- glyph 2

- rafguns 2

- strada 2

- adipasquale 2

- eelkevdbos 2

- ligurio 2

- n8henrie 2

- soobrosa 2

- nathancahill 2

- mustafa0x 2

- davidleejy 2

- bsmithgall 2

- noslouch 2

- willingc 2

- nattaylor 2

- durkie 2

- raynae 2

- cclauss 2

- wulfmann 2

- philshem 2

- bram2000 2

- zzeleznick 2

- chris48s 2

- plpxsk 2

- jeqo 2

- nickvazz 2

- aaronyih1 2

- luxint 2

- jussiarpalahti 2

- tkhattra 2

- sachaj 2

- lagolucas 2

- stevecrawshaw 2

- chekos 2

- ctsrc 2

- ad-si 2

- smithdc1 2

- gsajko 2

- jcmkk3 2

- null92 2

- publicmatt 2

- rachelmarconi 2

- tunguyenatwork 2

- LVerneyPEReN 2

- MichaelTiemannOSC 2

- tmcl-it 2

- anotherjesse 1

- jarib 1

- jokull 1

- fernand0 1

- precipice 1

- llimllib 1

- gijs 1

- blaine 1

- ashanan 1

- gravis 1

- nkirsch 1

- tomdyson 1

- mrchrisadams 1

- dkam 1

- harperreed 1

- nileshtrivedi 1

- chrismytton 1

- nedbat 1

- furilo 1

- kindly 1

- adamwolf 1

- prabhur 1

- palfrey 1

- dmd 1

- pquentin 1

- rubenv 1

- Uninen 1

- rtanglao 1

- carsonyl 1

- nryberg 1

- step21 1

- stefanocudini 1

- rcoup 1

- spookylukey 1

- scoates 1

- hpk42 1

- annapowellsmith 1

- cadeef 1

- aslakr 1

- thorn0 1

- yurivish 1

- pax 1

- lucapette 1

- jmelloy 1

- Krazybug 1

- dvhthomas 1

- dckc 1

- phubbard 1

- sethvincent 1

- andrewdotn 1

- meatcar 1

- aitoehigie 1

- julienma 1

- michaelmcandrew 1

- drewda 1

- stiles 1

- saulpw 1

- adamalton 1

- terinjokes 1

- thadk 1

- robintw 1

- astrojuanlu 1

- ipmb 1

- steren 1

- aidansteele 1

- mikepqr 1

- 0x1997 1

- jonafato 1

- gwk 1

- knutwannheden 1

- davidszotten 1

- chrislkeller 1

- kevboh 1

- eaubin 1

- yunzheng 1

- mhkeller 1

- lfdebrux 1

- karlcow 1

- heyarne 1

- ryanfox 1

- sopel 1

- cephillips 1

- ryascott 1

- simonrjones 1

- justinpinkney 1

- merwok 1

- mattkiefer 1

- snth 1

- adarshp 1

- joshmgrant 1

- bcongdon 1

- nickdirienzo 1

- adamjonas 1

- hannseman 1

- kaihendry 1

- urbas 1

- metamoof 1

- brimstone 1

- adamchainz 1

- PabloLerma 1

- heussd 1

- RayBB 1

- BryantD 1

- limar 1

- drkane 1

- Gagravarr 1

- radusuciu 1

- esagara 1

- agguser 1

- rclement 1

- dyllan-to-you 1

- justinallen 1

- jordaneremieff 1

- wdccdw 1

- wpears 1

- progpow 1

- DavidPratten 1

- ltrgoddard 1

- costrouc 1

- jratike80 1

- ment4list 1

- ccorcos 1

- choldgraf 1

- Olshansk 1

- qqilihq 1

- jdangerx 1

- fidiego 1

- OverkillGuy 1

- QAInsights 1

- secretGeek 1

- fkuhn 1

- jameslittle230 1

- Profpatsch 1

- dskrad 1

- kwladyka 1

- Carib0u 1

- fatihky 1

- phoenixjun 1

- JesperTreetop 1

- wenhoujx 1

- bapowell 1

- yairlenga 1

- louispotok 1

- ChristopherWilks 1

- Maltazar 1

- hueyy 1

- eumiro 1

- wuhland 1

- eric-burel 1

- foscoj 1

- dvot197007 1

- kokes 1

- RamiAwar 1

- csusanu 1

- rprimet 1

- metab0t 1

- spdkils 1

- sturzl 1

- jrdmb 1

- robmarkcole 1

- jfeiwell 1

- coisnepe 1

- chmaynard 1

- erlend-aasland 1

- tf13 1

- alecstein 1

- bendnorman 1

- noklam 1

- jakewilkins 1

- Thomascountz 1

- eigenfoo 1

- GmGniap 1

- rdtq 1

- AnkitKundariya 1

- LucasElArruda 1

- duarteocarmo 1

- mattiaborsoi 1

- sarcasticadmin 1

- yqlbu 1

- abeyerpath 1

- b0b5h4rp13 1

- Rik-de-Kort 1

- patricktrainer 1

- xmichele 1

- miuku 1

- philipp-heinrich 1

- jimmybutton 1

- thewchan 1

- izzues 1

- thisismyfuckingusername 1

- kirajano 1

- J450n-4-W 1

- mlaparie 1

- Dhyanesh97 1

- knowledgecamp12 1

- McEazy2700 1

- cycle-data 1

| id | html_url ▼ | issue_url | node_id | user | created_at | updated_at | author_association | body | reactions | issue | performed_via_github_app |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 620771698 | https://github.com/dogsheep/dogsheep-photos/issues/14#issuecomment-620771698 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/14 | MDEyOklzc3VlQ29tbWVudDYyMDc3MTY5OA== | simonw 9599 | 2020-04-28T18:13:48Z | 2020-04-28T18:13:48Z | MEMBER | For face detection: | {"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Annotate photos using the Google Cloud Vision API 608512747 | |
| 620772190 | https://github.com/dogsheep/dogsheep-photos/issues/14#issuecomment-620772190 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/14 | MDEyOklzc3VlQ29tbWVudDYyMDc3MjE5MA== | simonw 9599 | 2020-04-28T18:14:43Z | 2020-04-28T18:14:43Z | MEMBER | Database schema for this will require some thought. Just dumping the output into a JSON column isn't going to be flexible enough - I want to be able to FTS against labels and OCR text, and potentially query against other characteristics too. | {"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Annotate photos using the Google Cloud Vision API 608512747 | |
| 620774507 | https://github.com/dogsheep/dogsheep-photos/issues/14#issuecomment-620774507 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/14 | MDEyOklzc3VlQ29tbWVudDYyMDc3NDUwNw== | simonw 9599 | 2020-04-28T18:19:06Z | 2020-04-28T18:19:06Z | MEMBER | The default timeout is a bit aggressive and sometimes failed for me if my resizing proxy took too long to fetch and resize the image. | {"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Annotate photos using the Google Cloud Vision API 608512747 | |
| 623723026 | https://github.com/dogsheep/dogsheep-photos/issues/15#issuecomment-623723026 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/15 | MDEyOklzc3VlQ29tbWVudDYyMzcyMzAyNg== | simonw 9599 | 2020-05-04T21:41:30Z | 2020-05-04T21:41:30Z | MEMBER | I'm going to put these in a table called | {"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Expose scores from ZCOMPUTEDASSETATTRIBUTES 612151767 | |
| 623723687 | https://github.com/dogsheep/dogsheep-photos/issues/15#issuecomment-623723687 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/15 | MDEyOklzc3VlQ29tbWVudDYyMzcyMzY4Nw== | simonw 9599 | 2020-05-04T21:43:06Z | 2020-05-04T21:43:06Z | MEMBER | It looks like I can map the photos I'm importing to these tables using the | {"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Expose scores from ZCOMPUTEDASSETATTRIBUTES 612151767 | |
| 623730934 | https://github.com/dogsheep/dogsheep-photos/issues/15#issuecomment-623730934 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/15 | MDEyOklzc3VlQ29tbWVudDYyMzczMDkzNA== | simonw 9599 | 2020-05-04T22:00:38Z | 2020-05-04T22:00:48Z | MEMBER | Here's the query to create the new table: | {"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Expose scores from ZCOMPUTEDASSETATTRIBUTES 612151767 | |

| 623739934 | https://github.com/dogsheep/dogsheep-photos/issues/15#issuecomment-623739934 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/15 | MDEyOklzc3VlQ29tbWVudDYyMzczOTkzNA== | simonw 9599 | 2020-05-04T22:24:26Z | 2020-05-04T22:24:26Z | MEMBER | Twitter thread with some examples of photos that are coming up from queries against these scores: https://twitter.com/simonw/status/1257434670750408705 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Expose scores from ZCOMPUTEDASSETATTRIBUTES 612151767 | |

| 748436115 | https://github.com/dogsheep/dogsheep-photos/issues/15#issuecomment-748436115 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/15 | MDEyOklzc3VlQ29tbWVudDc0ODQzNjExNQ== | nickvazz 8573886 | 2020-12-19T07:43:38Z | 2020-12-19T07:47:36Z | NONE | Hey Simon! I really enjoy datasette so far, just started trying it out today following your iPhone photos example. I am not sure if you had run into this or not, but it seems like they might have changed one of the column names from

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Expose scores from ZCOMPUTEDASSETATTRIBUTES 612151767 | |

| 748436779 | https://github.com/dogsheep/dogsheep-photos/issues/15#issuecomment-748436779 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/15 | MDEyOklzc3VlQ29tbWVudDc0ODQzNjc3OQ== | RhetTbull 41546558 | 2020-12-19T07:49:00Z | 2020-12-19T07:49:00Z | CONTRIBUTOR | @nickvazz ZGENERICASSET changed to ZASSET in Big Sur. Here's a list of other changes to the schema in Big Sur: https://github.com/RhetTbull/osxphotos/wiki/Changes-in-Photos-6---Big-Sur |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Expose scores from ZCOMPUTEDASSETATTRIBUTES 612151767 | |

| 748562288 | https://github.com/dogsheep/dogsheep-photos/issues/15#issuecomment-748562288 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/15 | MDEyOklzc3VlQ29tbWVudDc0ODU2MjI4OA== | RhetTbull 41546558 | 2020-12-20T04:44:22Z | 2020-12-20T04:44:22Z | CONTRIBUTOR | @nickvazz @simonw I opened a PR that replaces the SQL for |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Expose scores from ZCOMPUTEDASSETATTRIBUTES 612151767 | |

| 623805823 | https://github.com/dogsheep/dogsheep-photos/issues/16#issuecomment-623805823 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/16 | MDEyOklzc3VlQ29tbWVudDYyMzgwNTgyMw== | simonw 9599 | 2020-05-05T02:45:56Z | 2020-05-05T02:45:56Z | MEMBER | I filed an issue with |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Import machine-learning detected labels (dog, llama etc) from Apple Photos 612287234 | |

| 623806085 | https://github.com/dogsheep/dogsheep-photos/issues/16#issuecomment-623806085 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/16 | MDEyOklzc3VlQ29tbWVudDYyMzgwNjA4NQ== | simonw 9599 | 2020-05-05T02:47:18Z | 2020-05-05T02:47:18Z | MEMBER | In https://github.com/RhetTbull/osxphotos/issues/121#issuecomment-623249263 Rhet Turnbull spotted a table called |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Import machine-learning detected labels (dog, llama etc) from Apple Photos 612287234 | |

| 623806533 | https://github.com/dogsheep/dogsheep-photos/issues/16#issuecomment-623806533 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/16 | MDEyOklzc3VlQ29tbWVudDYyMzgwNjUzMw== | simonw 9599 | 2020-05-05T02:50:16Z | 2020-05-05T02:50:16Z | MEMBER | I figured there must be a separate database that Photos uses to store the text of the identified labels. I used "Open Files and Ports" in Activity Monitor against the Photos app to try and spot candidates... and found

Here's the schema of that file:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Import machine-learning detected labels (dog, llama etc) from Apple Photos 612287234 | |

| 623806687 | https://github.com/dogsheep/dogsheep-photos/issues/16#issuecomment-623806687 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/16 | MDEyOklzc3VlQ29tbWVudDYyMzgwNjY4Nw== | simonw 9599 | 2020-05-05T02:51:16Z | 2020-05-05T02:51:16Z | MEMBER | Running datasette against it directly doesn't work: ``` simon@Simons-MacBook-Pro search % datasette psi.sqlite Serve! files=('psi.sqlite',) (immutables=()) on port 8001 Usage: datasette serve [OPTIONS] [FILES]... Error: Connection to psi.sqlite failed check: no such tokenizer: PSITokenizer ```

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Import machine-learning detected labels (dog, llama etc) from Apple Photos 612287234 | |

| 623807568 | https://github.com/dogsheep/dogsheep-photos/issues/16#issuecomment-623807568 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/16 | MDEyOklzc3VlQ29tbWVudDYyMzgwNzU2OA== | simonw 9599 | 2020-05-05T02:56:06Z | 2020-05-05T02:56:06Z | MEMBER | I'm pretty sure this is what I'm after. The

Then there's a

And an

One major challenge: these UUIDs are split into two integer numbers,