issue_comments

10,495 rows sorted by updated_at descending

This data as json, CSV (advanced)

issue >30

- Show column metadata plus links for foreign keys on arbitrary query results 50

- Redesign default .json format 48

- Rethink how .ext formats (v.s. ?_format=) works before 1.0 48

- JavaScript plugin hooks mechanism similar to pluggy 47

- Updated Dockerfile with SpatiaLite version 5.0 45

- Complete refactor of TableView and table.html template 45

- Port Datasette to ASGI 42

- Authentication (and permissions) as a core concept 40

- Deploy a live instance of demos/apache-proxy 34

- await datasette.client.get(path) mechanism for executing internal requests 33

- Maintain an in-memory SQLite table of connected databases and their tables 32

- Ability to sort (and paginate) by column 31

- link_or_copy_directory() error - Invalid cross-device link 28

- Export to CSV 27

- base_url configuration setting 27

- Documentation with recommendations on running Datasette in production without using Docker 27

- Optimize all those calls to index_list and foreign_key_list 27

- Support cross-database joins 26

- Ability for a canned query to write to the database 26

- table.transform() method for advanced alter table 26

- New pattern for views that return either JSON or HTML, available for plugins 26

- Proof of concept for Datasette on AWS Lambda with EFS 25

- WIP: Add Gmail takeout mbox import 25

- Redesign register_output_renderer callback 24

- Make it easier to insert geometries, with documentation and maybe code 24

- "datasette insert" command and plugin hook 23

- Datasette Plugins 22

- .json and .csv exports fail to apply base_url 22

- Idea: import CSV to memory, run SQL, export in a single command 22

- Plugin hook for dynamic metadata 22

- …

| id | html_url | issue_url | node_id | user | created_at | updated_at ▲ | author_association | body | reactions | issue | performed_via_github_app |

|---|---|---|---|---|---|---|---|---|---|---|---|

| 1111553029 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111553029 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CQPQF | simonw 9599 | 2022-04-27T22:48:21Z | 2022-04-27T22:48:21Z | OWNER | I wonder if it would be worth exploring multiprocessing here. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111551076 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111551076 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CQOxk | simonw 9599 | 2022-04-27T22:44:51Z | 2022-04-27T22:45:04Z | OWNER | Really wild idea: what if I created three copies of the SQLite database file - as three separate file names - and then balanced the parallel queries across all these? Any chance that could avoid any mysterious locking issues? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111535818 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111535818 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CQLDK | simonw 9599 | 2022-04-27T22:18:45Z | 2022-04-27T22:18:45Z | OWNER | Another avenue: https://twitter.com/weargoggles/status/1519426289920270337

Doesn't look like there's an obvious way to access that from Python via the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111506339 | https://github.com/simonw/sqlite-utils/issues/159#issuecomment-1111506339 | https://api.github.com/repos/simonw/sqlite-utils/issues/159 | IC_kwDOCGYnMM5CQD2j | dracos 154364 | 2022-04-27T21:35:13Z | 2022-04-27T21:35:13Z | NONE | Just stumbled across this, wondering why none of my deletes were working. |

{

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

.delete_where() does not auto-commit (unlike .insert() or .upsert()) 702386948 | |

| 1111485722 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111485722 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CP-0a | simonw 9599 | 2022-04-27T21:08:20Z | 2022-04-27T21:08:20Z | OWNER | Tried that and it didn't seem to make a difference either. I really need a much deeper view of what's going on here. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111462442 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111462442 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CP5Iq | simonw 9599 | 2022-04-27T20:40:59Z | 2022-04-27T20:42:49Z | OWNER | This looks VERY relevant: SQLite Shared-Cache Mode:

Enabled as part of the URI filename: Turns out I'm already using this for in-memory databases that have |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111460068 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111460068 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CP4jk | simonw 9599 | 2022-04-27T20:38:32Z | 2022-04-27T20:38:32Z | OWNER | WAL mode didn't seem to make a difference. I thought there was a chance it might help multiple read connections operate at the same time but it looks like it really does only matter for when writes are going on. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111456500 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111456500 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CP3r0 | simonw 9599 | 2022-04-27T20:36:01Z | 2022-04-27T20:36:01Z | OWNER | Yeah all of this is pretty much assuming read-only connections. Datasette has a separate mechanism for ensuring that writes are executed one at a time against a dedicated connection from an in-memory queue: - https://github.com/simonw/datasette/issues/682 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111451790 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111451790 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CP2iO | glyph 716529 | 2022-04-27T20:30:33Z | 2022-04-27T20:30:33Z | NONE |

I've only skimmed above but it looks like you're doing mainly read-only queries? WAL mode is about better interactions between writers & readers, primarily. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111448928 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111448928 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CP11g | glyph 716529 | 2022-04-27T20:27:05Z | 2022-04-27T20:27:05Z | NONE | You don't want to re-use an SQLite connection from multiple threads anyway: https://www.sqlite.org/threadsafe.html Multiple connections can operate on the file in parallel, but a single connection can't:

(emphasis mine) |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111442012 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111442012 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CP0Jc | simonw 9599 | 2022-04-27T20:19:00Z | 2022-04-27T20:19:00Z | OWNER | Something worth digging into: are these parallel queries running against the same SQLite connection or are they each rubbing against a separate SQLite connection? Just realized I know the answer: they're running against separate SQLite connections, because that's how the time limit mechanism works: it installs a progress handler for each connection which terminates it after a set time. This means that if SQLite benefits from multiple threads using the same connection (due to shared caches or similar) then Datasette will not be seeing those benefits. It also means that if there's some mechanism within SQLite that penalizes you for having multiple parallel connections to a single file (just guessing here, maybe there's some kind of locking going on?) then Datasette will suffer those penalties. I should try seeing what happens with WAL mode enabled. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111432375 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111432375 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CPxy3 | simonw 9599 | 2022-04-27T20:07:57Z | 2022-04-27T20:07:57Z | OWNER | Also useful: https://avi.im/blag/2021/fast-sqlite-inserts/ - from a tip on Twitter: https://twitter.com/ricardoanderegg/status/1519402047556235264 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111431785 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111431785 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CPxpp | simonw 9599 | 2022-04-27T20:07:16Z | 2022-04-27T20:07:16Z | OWNER | I think I need some much more in-depth tracing tricks for this. https://www.maartenbreddels.com/perf/jupyter/python/tracing/gil/2021/01/14/Tracing-the-Python-GIL.html looks relevant - uses the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111408273 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111408273 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CPr6R | simonw 9599 | 2022-04-27T19:40:51Z | 2022-04-27T19:42:17Z | OWNER | Relevant: here's the code that sets up a Datasette SQLite connection: https://github.com/simonw/datasette/blob/7a6654a253dee243518dc542ce4c06dbb0d0801d/datasette/database.py#L73-L96 It's using

This is why Datasette reserves a single connection for write queries and queues them up in memory, as described here. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111390433 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111390433 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CPnjh | simonw 9599 | 2022-04-27T19:21:02Z | 2022-04-27T19:21:02Z | OWNER | One weird thing: I noticed that in the parallel trace above the SQL query bars are wider. Mousover shows duration in ms, and I got 13ms for this query: But in the Given those numbers though I would expect the overall page time to be MUCH worse for the parallel version - but the page load times are instead very close to each other, with parallel often winning. This is super-weird. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111385875 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111385875 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CPmcT | simonw 9599 | 2022-04-27T19:16:57Z | 2022-04-27T19:16:57Z | OWNER | I just remembered the Would explain why the first trace never seems to show more than three SQL queries executing at once. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1111380282 | https://github.com/simonw/datasette/issues/1727#issuecomment-1111380282 | https://api.github.com/repos/simonw/datasette/issues/1727 | IC_kwDOBm6k_c5CPlE6 | simonw 9599 | 2022-04-27T19:10:27Z | 2022-04-27T19:10:27Z | OWNER | Wrote more about that here: https://simonwillison.net/2022/Apr/27/parallel-queries/ Compare https://latest-with-plugins.datasette.io/github/commits?_facet=repo&_facet=committer&_trace=1

With the same thing but with parallel execution disabled:

Those total page load time numbers are very similar. Is this parallel optimization worthwhile? Maybe it's only worth it on larger databases? Or maybe larger databases perform worse with this? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: demonstrate if parallel SQL queries are worthwhile 1217759117 | |

| 1110585475 | https://github.com/simonw/datasette/issues/1724#issuecomment-1110585475 | https://api.github.com/repos/simonw/datasette/issues/1724 | IC_kwDOBm6k_c5CMjCD | simonw 9599 | 2022-04-27T06:15:14Z | 2022-04-27T06:15:14Z | OWNER | Yeah, that page is 438K (but only 20K gzipped). |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

?_trace=1 doesn't work on Global Power Plants demo 1216619276 | |

| 1110370095 | https://github.com/simonw/datasette/issues/1724#issuecomment-1110370095 | https://api.github.com/repos/simonw/datasette/issues/1724 | IC_kwDOBm6k_c5CLucv | simonw 9599 | 2022-04-27T00:18:30Z | 2022-04-27T00:18:30Z | OWNER | So this isn't a bug here, it's working as intended. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

?_trace=1 doesn't work on Global Power Plants demo 1216619276 | |

| 1110369004 | https://github.com/simonw/datasette/issues/1724#issuecomment-1110369004 | https://api.github.com/repos/simonw/datasette/issues/1724 | IC_kwDOBm6k_c5CLuLs | simonw 9599 | 2022-04-27T00:16:35Z | 2022-04-27T00:17:04Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

?_trace=1 doesn't work on Global Power Plants demo 1216619276 | ||

| 1110330554 | https://github.com/simonw/datasette/issues/1723#issuecomment-1110330554 | https://api.github.com/repos/simonw/datasette/issues/1723 | IC_kwDOBm6k_c5CLky6 | simonw 9599 | 2022-04-26T23:06:20Z | 2022-04-26T23:06:20Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research running SQL in table view in parallel using `asyncio.gather()` 1216508080 | ||

| 1110305790 | https://github.com/simonw/datasette/issues/1723#issuecomment-1110305790 | https://api.github.com/repos/simonw/datasette/issues/1723 | IC_kwDOBm6k_c5CLev- | simonw 9599 | 2022-04-26T22:19:04Z | 2022-04-26T22:19:04Z | OWNER | I realized that seeing the total time in queries wasn't enough to understand this, because if the queries were executed in serial or parallel it should still sum up to the same amount of SQL time (roughly). Instead I need to know how long the page took to render. But that's hard to display on the page since you can't measure it until rendering has finished! So I built an ASGI plugin to handle that measurement: https://github.com/simonw/datasette-total-page-time And with that plugin installed,

While

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research running SQL in table view in parallel using `asyncio.gather()` 1216508080 | |

| 1110279869 | https://github.com/simonw/datasette/issues/1723#issuecomment-1110279869 | https://api.github.com/repos/simonw/datasette/issues/1723 | IC_kwDOBm6k_c5CLYa9 | simonw 9599 | 2022-04-26T21:45:39Z | 2022-04-26T21:45:39Z | OWNER | Getting some nice traces out of this:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research running SQL in table view in parallel using `asyncio.gather()` 1216508080 | |

| 1110278577 | https://github.com/simonw/datasette/issues/1723#issuecomment-1110278577 | https://api.github.com/repos/simonw/datasette/issues/1723 | IC_kwDOBm6k_c5CLYGx | simonw 9599 | 2022-04-26T21:44:04Z | 2022-04-26T21:44:04Z | OWNER | And some simple benchmarks with ```

~ % ab -n 100 'http://127.0.0.1:8001/global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2' Benchmarking 127.0.0.1 (be patient).....done Server Software: uvicorn Server Hostname: 127.0.0.1 Server Port: 8001 Document Path: /global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2 Document Length: 314187 bytes Concurrency Level: 1 Time taken for tests: 68.279 seconds Complete requests: 100 Failed requests: 13 (Connect: 0, Receive: 0, Length: 13, Exceptions: 0) Total transferred: 31454937 bytes HTML transferred: 31418437 bytes Requests per second: 1.46 [#/sec] (mean) Time per request: 682.787 [ms] (mean) Time per request: 682.787 [ms] (mean, across all concurrent requests) Transfer rate: 449.89 [Kbytes/sec] received Connection Times (ms) min mean[+/-sd] median max Connect: 0 0 0.0 0 0 Processing: 621 683 68.0 658 993 Waiting: 620 682 68.0 657 992 Total: 621 683 68.0 658 993 Percentage of the requests served within a certain time (ms) 50% 658 66% 678 75% 687 80% 711 90% 763 95% 879 98% 926 99% 993 100% 993 (longest request) In parallel: ~ % ab -n 100 'http://127.0.0.1:8001/global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=1' This is ApacheBench, Version 2.3 <$Revision: 1879490 $> Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/ Licensed to The Apache Software Foundation, http://www.apache.org/ Benchmarking 127.0.0.1 (be patient).....done Server Software: uvicorn Server Hostname: 127.0.0.1 Server Port: 8001 Document Path: /global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=1 Document Length: 315703 bytes Concurrency Level: 1 Time taken for tests: 34.763 seconds Complete requests: 100 Failed requests: 11 (Connect: 0, Receive: 0, Length: 11, Exceptions: 0) Total transferred: 31607988 bytes HTML transferred: 31570288 bytes Requests per second: 2.88 [#/sec] (mean) Time per request: 347.632 [ms] (mean) Time per request: 347.632 [ms] (mean, across all concurrent requests) Transfer rate: 887.93 [Kbytes/sec] received Connection Times (ms) min mean[+/-sd] median max Connect: 0 0 0.0 0 0 Processing: 311 347 28.0 338 450 Waiting: 311 347 28.0 338 450 Total: 312 348 28.0 338 451 Percentage of the requests served within a certain time (ms) 50% 338 66% 348 75% 361 80% 367 90% 396 95% 408 98% 436 99% 451 100% 451 (longest request) With concurrency 10, not parallel: ~ % ab -c 10 -n 100 'http://127.0.0.1:8001/global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=' This is ApacheBench, Version 2.3 <$Revision: 1879490 $> Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/ Licensed to The Apache Software Foundation, http://www.apache.org/ Benchmarking 127.0.0.1 (be patient).....done Server Software: uvicorn Server Hostname: 127.0.0.1 Server Port: 8001 Document Path: /global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel= Document Length: 314346 bytes Concurrency Level: 10 Time taken for tests: 38.408 seconds Complete requests: 100 Failed requests: 93 (Connect: 0, Receive: 0, Length: 93, Exceptions: 0) Total transferred: 31471333 bytes HTML transferred: 31433733 bytes Requests per second: 2.60 [#/sec] (mean) Time per request: 3840.829 [ms] (mean) Time per request: 384.083 [ms] (mean, across all concurrent requests) Transfer rate: 800.18 [Kbytes/sec] received Connection Times (ms) min mean[+/-sd] median max Connect: 0 0 0.1 0 1 Processing: 685 3719 354.0 3774 4096 Waiting: 684 3707 353.7 3750 4095 Total: 685 3719 354.0 3774 4096 Percentage of the requests served within a certain time (ms) 50% 3774 66% 3832 75% 3855 80% 3878 90% 3944 95% 4006 98% 4057 99% 4096 100% 4096 (longest request) Concurrency 10 parallel: ~ % ab -c 10 -n 100 'http://127.0.0.1:8001/global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=1' This is ApacheBench, Version 2.3 <$Revision: 1879490 $> Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/ Licensed to The Apache Software Foundation, http://www.apache.org/ Benchmarking 127.0.0.1 (be patient).....done Server Software: uvicorn Server Hostname: 127.0.0.1 Server Port: 8001 Document Path: /global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=1 Document Length: 315703 bytes Concurrency Level: 10 Time taken for tests: 36.762 seconds Complete requests: 100 Failed requests: 89 (Connect: 0, Receive: 0, Length: 89, Exceptions: 0) Total transferred: 31606516 bytes HTML transferred: 31568816 bytes Requests per second: 2.72 [#/sec] (mean) Time per request: 3676.182 [ms] (mean) Time per request: 367.618 [ms] (mean, across all concurrent requests) Transfer rate: 839.61 [Kbytes/sec] received Connection Times (ms) min mean[+/-sd] median max Connect: 0 0 0.1 0 0 Processing: 381 3602 419.6 3609 4458 Waiting: 381 3586 418.7 3607 4457 Total: 381 3603 419.6 3609 4458 Percentage of the requests served within a certain time (ms) 50% 3609 66% 3741 75% 3791 80% 3821 90% 3972 95% 4074 98% 4386 99% 4458 100% 4458 (longest request) Trying -c 3 instead. Non parallel: ~ % ab -c 3 -n 100 'http://127.0.0.1:8001/global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=' This is ApacheBench, Version 2.3 <$Revision: 1879490 $> Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/ Licensed to The Apache Software Foundation, http://www.apache.org/ Benchmarking 127.0.0.1 (be patient).....done Server Software: uvicorn Server Hostname: 127.0.0.1 Server Port: 8001 Document Path: /global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel= Document Length: 314346 bytes Concurrency Level: 3 Time taken for tests: 39.365 seconds Complete requests: 100 Failed requests: 83 (Connect: 0, Receive: 0, Length: 83, Exceptions: 0) Total transferred: 31470808 bytes HTML transferred: 31433208 bytes Requests per second: 2.54 [#/sec] (mean) Time per request: 1180.955 [ms] (mean) Time per request: 393.652 [ms] (mean, across all concurrent requests) Transfer rate: 780.72 [Kbytes/sec] received Connection Times (ms) min mean[+/-sd] median max Connect: 0 0 0.0 0 0 Processing: 731 1153 126.2 1189 1359 Waiting: 730 1151 125.9 1188 1358 Total: 731 1153 126.2 1189 1359 Percentage of the requests served within a certain time (ms) 50% 1189 66% 1221 75% 1234 80% 1247 90% 1296 95% 1309 98% 1343 99% 1359 100% 1359 (longest request) Parallel: ~ % ab -c 3 -n 100 'http://127.0.0.1:8001/global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=1' This is ApacheBench, Version 2.3 <$Revision: 1879490 $> Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/ Licensed to The Apache Software Foundation, http://www.apache.org/ Benchmarking 127.0.0.1 (be patient).....done Server Software: uvicorn Server Hostname: 127.0.0.1 Server Port: 8001 Document Path: /global-power-plants/global-power-plants?_facet=primary_fuel&_facet=other_fuel1&_facet=other_fuel3&_facet=other_fuel2&_parallel=1 Document Length: 315703 bytes Concurrency Level: 3 Time taken for tests: 34.530 seconds Complete requests: 100 Failed requests: 18 (Connect: 0, Receive: 0, Length: 18, Exceptions: 0) Total transferred: 31606179 bytes HTML transferred: 31568479 bytes Requests per second: 2.90 [#/sec] (mean) Time per request: 1035.902 [ms] (mean) Time per request: 345.301 [ms] (mean, across all concurrent requests) Transfer rate: 893.87 [Kbytes/sec] received Connection Times (ms) min mean[+/-sd] median max Connect: 0 0 0.0 0 0 Processing: 412 1020 104.4 1018 1280 Waiting: 411 1018 104.1 1014 1275 Total: 412 1021 104.4 1018 1280 Percentage of the requests served within a certain time (ms) 50% 1018 66% 1041 75% 1061 80% 1079 90% 1136 95% 1176 98% 1251 99% 1280 100% 1280 (longest request) ``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research running SQL in table view in parallel using `asyncio.gather()` 1216508080 | |

| 1110278182 | https://github.com/simonw/datasette/issues/1723#issuecomment-1110278182 | https://api.github.com/repos/simonw/datasette/issues/1723 | IC_kwDOBm6k_c5CLYAm | simonw 9599 | 2022-04-26T21:43:34Z | 2022-04-26T21:43:34Z | OWNER | Here's the diff I'm using: ```diff diff --git a/datasette/views/table.py b/datasette/views/table.py index d66adb8..f15ef1e 100644 --- a/datasette/views/table.py +++ b/datasette/views/table.py @@ -1,3 +1,4 @@ +import asyncio import itertools import json @@ -5,6 +6,7 @@ import markupsafe from datasette.plugins import pm from datasette.database import QueryInterrupted +from datasette import tracer from datasette.utils import ( await_me_maybe, CustomRow, @@ -150,6 +152,16 @@ class TableView(DataView): default_labels=False, _next=None, _size=None, + ): + with tracer.trace_child_tasks(): + return await self._data_traced(request, default_labels, _next, _size) + + async def _data_traced( + self, + request, + default_labels=False, + _next=None, + _size=None, ): database_route = tilde_decode(request.url_vars["database"]) table_name = tilde_decode(request.url_vars["table"]) @@ -159,6 +171,20 @@ class TableView(DataView): raise NotFound("Database not found: {}".format(database_route)) database_name = db.name

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research running SQL in table view in parallel using `asyncio.gather()` 1216508080 | |

| 1110265087 | https://github.com/simonw/datasette/issues/1715#issuecomment-1110265087 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CLUz_ | simonw 9599 | 2022-04-26T21:26:17Z | 2022-04-26T21:26:17Z | OWNER | Running facets and facet suggestions in parallel using

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1110246593 | https://github.com/simonw/datasette/issues/1715#issuecomment-1110246593 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CLQTB | simonw 9599 | 2022-04-26T21:03:56Z | 2022-04-26T21:03:56Z | OWNER | Well this is fun... I applied this change: ```diff diff --git a/datasette/views/table.py b/datasette/views/table.py index d66adb8..85f9e44 100644 --- a/datasette/views/table.py +++ b/datasette/views/table.py @@ -1,3 +1,4 @@ +import asyncio import itertools import json @@ -5,6 +6,7 @@ import markupsafe from datasette.plugins import pm from datasette.database import QueryInterrupted +from datasette import tracer from datasette.utils import ( await_me_maybe, CustomRow, @@ -174,8 +176,11 @@ class TableView(DataView): write=bool(canned_query.get("write")), )

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1110219185 | https://github.com/simonw/datasette/issues/1715#issuecomment-1110219185 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CLJmx | simonw 9599 | 2022-04-26T20:28:40Z | 2022-04-26T20:56:48Z | OWNER | The refactor I did in #1719 pretty much clashes with all of the changes in https://github.com/simonw/datasette/commit/5053f1ea83194ecb0a5693ad5dada5b25bf0f7e6 so I'll probably need to start my Using a new |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1110239536 | https://github.com/simonw/datasette/issues/1715#issuecomment-1110239536 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CLOkw | simonw 9599 | 2022-04-26T20:54:53Z | 2022-04-26T20:54:53Z | OWNER |

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1110238896 | https://github.com/simonw/datasette/issues/1715#issuecomment-1110238896 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CLOaw | simonw 9599 | 2022-04-26T20:53:59Z | 2022-04-26T20:53:59Z | OWNER | I'm going to rename |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1110229319 | https://github.com/simonw/datasette/issues/1715#issuecomment-1110229319 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CLMFH | simonw 9599 | 2022-04-26T20:41:32Z | 2022-04-26T20:44:38Z | OWNER | This time I'm not going to bother with the Most importantly: I want that |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1110212021 | https://github.com/simonw/datasette/issues/1720#issuecomment-1110212021 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CLH21 | simonw 9599 | 2022-04-26T20:20:27Z | 2022-04-26T20:20:27Z | OWNER | Closing this because I have a good enough idea of the design for now - the details of the parameters can be figured out when I implement this. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109309683 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109309683 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHrjz | simonw 9599 | 2022-04-26T04:12:39Z | 2022-04-26T04:12:39Z | OWNER | I think the rough shape of the three plugin hooks is right. The detailed decisions that are needed concern what the parameters should be, which I think will mainly happen as part of:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109306070 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109306070 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHqrW | simonw 9599 | 2022-04-26T04:05:20Z | 2022-04-26T04:05:20Z | OWNER | The proposed plugin for annotations - allowing users to attach comments to database tables, columns and rows - would be a great application for all three of those |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109305184 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109305184 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHqdg | simonw 9599 | 2022-04-26T04:03:35Z | 2022-04-26T04:03:35Z | OWNER | I bet there's all kinds of interesting potential extras that could be calculated by loading the results of the query into a Pandas DataFrame. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109200774 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109200774 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHQ-G | simonw 9599 | 2022-04-26T01:25:43Z | 2022-04-26T01:26:15Z | OWNER | Had a thought: if a custom HTML template is going to make use of stuff generated using these extras, it will need a way to tell Datasette to execute those extras even in the absence of the Is that necessary? Or should those kinds of plugins use the existing Or maybe the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109200335 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109200335 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHQ3P | simonw 9599 | 2022-04-26T01:24:47Z | 2022-04-26T01:24:47Z | OWNER | Sketching out a ```python from datasette import hookimpl @hookimpl def register_table_extras(datasette): return [statistics] async def statistics(datasette, query, columns, sql): # ... need to figure out which columns are integer/floats # then build and execute a SQL query that calculates sum/avg/etc for each column ``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109190401 | https://github.com/simonw/sqlite-utils/issues/428#issuecomment-1109190401 | https://api.github.com/repos/simonw/sqlite-utils/issues/428 | IC_kwDOCGYnMM5CHOcB | simonw 9599 | 2022-04-26T01:05:29Z | 2022-04-26T01:05:29Z | OWNER | Django makes extensive use of savepoints for nested transactions: https://docs.djangoproject.com/en/4.0/topics/db/transactions/#savepoints |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research adding support for savepoints 1215216249 | |

| 1109174715 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109174715 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHKm7 | simonw 9599 | 2022-04-26T00:40:13Z | 2022-04-26T00:43:33Z | OWNER | Some of the things I'd like to use

Looking at https://github-to-sqlite.dogsheep.net/github/commits.json?_labels=on&_shape=objects for inspiration. I think there's a separate potential mechanism in the future that lets you add custom columns to a table. This would affect |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109171871 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109171871 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHJ6f | simonw 9599 | 2022-04-26T00:34:48Z | 2022-04-26T00:34:48Z | OWNER | Let's try sketching out a The first idea I came up with suggests adding new fields to the individual row records that come back - my mental model for extras so far has been that they add new keys to the root object. So if a table result looked like this:

Here's a plugin idea I came up with that would probably justify adding to the individual row objects instead:

This could also work by adding a I think I need some better plugin concepts before committing to this new hook. There's overlap between this and how I want the enrichments mechanism (see here) to work. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109165411 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109165411 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHIVj | simonw 9599 | 2022-04-26T00:22:42Z | 2022-04-26T00:22:42Z | OWNER | Passing |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109164803 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109164803 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHIMD | simonw 9599 | 2022-04-26T00:21:40Z | 2022-04-26T00:21:40Z | OWNER | What would the existing https://latest.datasette.io/fixtures/simple_primary_key/1.json?_extras=foreign_key_tables feature look like if it was re-imagined as a Rough sketch, copying most of the code from https://github.com/simonw/datasette/blob/579f59dcec43a91dd7d404e00b87a00afd8515f2/datasette/views/row.py#L98 ```python from datasette import hookimpl @hookimpl def register_row_extras(datasette): return [foreign_key_tables] async def foreign_key_tables(datasette, database, table, pk_values): if len(pk_values) != 1: return [] db = datasette.get_database(database) all_foreign_keys = await db.get_all_foreign_keys() foreign_keys = all_foreign_keys[table]["incoming"] if len(foreign_keys) == 0: return [] ``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109162123 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109162123 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHHiL | simonw 9599 | 2022-04-26T00:16:42Z | 2022-04-26T00:16:51Z | OWNER | Actually I'm going to imitate the existing

So I'm going to call the new hooks:

They'll return a list of |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109160226 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109160226 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHHEi | simonw 9599 | 2022-04-26T00:14:11Z | 2022-04-26T00:14:11Z | OWNER | There are four existing plugin hooks that include the word "extra" but use it to mean something else - to mean additional CSS/JS/variables to be injected into the page:

I think |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109159307 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109159307 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHG2L | simonw 9599 | 2022-04-26T00:12:28Z | 2022-04-26T00:12:28Z | OWNER | I'm going to keep table and row separate. So I think I need to add three new plugin hooks:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1109158903 | https://github.com/simonw/datasette/issues/1720#issuecomment-1109158903 | https://api.github.com/repos/simonw/datasette/issues/1720 | IC_kwDOBm6k_c5CHGv3 | simonw 9599 | 2022-04-26T00:11:42Z | 2022-04-26T00:11:42Z | OWNER | Places this plugin hook (or hooks?) should be able to affect:

I'm going to combine those last two, which means there are three places. But maybe I can combine the table one and the row one as well? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Design plugin hook for extras 1215174094 | |

| 1108907238 | https://github.com/simonw/datasette/issues/1719#issuecomment-1108907238 | https://api.github.com/repos/simonw/datasette/issues/1719 | IC_kwDOBm6k_c5CGJTm | simonw 9599 | 2022-04-25T18:34:21Z | 2022-04-25T18:34:21Z | OWNER | Well this refactor turned out to be pretty quick and really does greatly simplify both the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor `RowView` and remove `RowTableShared` 1214859703 | |

| 1108890170 | https://github.com/simonw/datasette/issues/262#issuecomment-1108890170 | https://api.github.com/repos/simonw/datasette/issues/262 | IC_kwDOBm6k_c5CGFI6 | simonw 9599 | 2022-04-25T18:17:09Z | 2022-04-25T18:18:39Z | OWNER | I spotted in https://github.com/simonw/datasette/issues/1719#issuecomment-1108888494 that there's actually already an undocumented implementation of I added that feature all the way back in November 2017! https://github.com/simonw/datasette/commit/a30c5b220c15360d575e94b0e67f3255e120b916 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Add ?_extra= mechanism for requesting extra properties in JSON 323658641 | |

| 1108888494 | https://github.com/simonw/datasette/issues/1719#issuecomment-1108888494 | https://api.github.com/repos/simonw/datasette/issues/1719 | IC_kwDOBm6k_c5CGEuu | simonw 9599 | 2022-04-25T18:15:42Z | 2022-04-25T18:15:42Z | OWNER | Here's an undocumented feature I forgot existed: https://latest.datasette.io/fixtures/simple_primary_key/1.json?_extras=foreign_key_tables

It's even covered by the tests: |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor `RowView` and remove `RowTableShared` 1214859703 | |

| 1108884171 | https://github.com/simonw/datasette/issues/1719#issuecomment-1108884171 | https://api.github.com/repos/simonw/datasette/issues/1719 | IC_kwDOBm6k_c5CGDrL | simonw 9599 | 2022-04-25T18:10:46Z | 2022-04-25T18:12:45Z | OWNER | It looks like the only class method from that shared class needed by Which I've been wanting to refactor to provide to

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor `RowView` and remove `RowTableShared` 1214859703 | |

| 1108875068 | https://github.com/simonw/datasette/issues/1715#issuecomment-1108875068 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CGBc8 | simonw 9599 | 2022-04-25T18:03:13Z | 2022-04-25T18:06:33Z | OWNER | The I'm going to split the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1108877454 | https://github.com/simonw/datasette/issues/1715#issuecomment-1108877454 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5CGCCO | simonw 9599 | 2022-04-25T18:04:27Z | 2022-04-25T18:04:27Z | OWNER | Pushed my WIP on this to the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1107873311 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107873311 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCM4f | simonw 9599 | 2022-04-24T16:24:14Z | 2022-04-24T16:24:14Z | OWNER | Wrote up what I learned in a TIL: https://til.simonwillison.net/sphinx/blacken-docs |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107873271 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107873271 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCM33 | simonw 9599 | 2022-04-24T16:23:57Z | 2022-04-24T16:23:57Z | OWNER | Turns out I didn't need that Submitted a documentation PR to that project instead: |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107870788 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107870788 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCMRE | simonw 9599 | 2022-04-24T16:09:23Z | 2022-04-24T16:09:23Z | OWNER | One more attempt at testing the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107869884 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107869884 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCMC8 | simonw 9599 | 2022-04-24T16:04:03Z | 2022-04-24T16:04:03Z | OWNER | OK, I'm expecting this one to fail at the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107869556 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107869556 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCL90 | simonw 9599 | 2022-04-24T16:02:27Z | 2022-04-24T16:02:27Z | OWNER | Looking at that first error it appears to be a place where I had deliberately omitted the body of the function: I can use Fixing those warnings actually helped me spot a couple of bugs, so I'm glad this happened. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107868585 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107868585 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCLup | simonw 9599 | 2022-04-24T15:57:10Z | 2022-04-24T15:57:19Z | OWNER | The tests failed there because of what I thought were warnings but turn out to be treated as errors:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107867281 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107867281 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCLaR | simonw 9599 | 2022-04-24T15:49:23Z | 2022-04-24T15:49:23Z | OWNER | I'm going to push the first commit with a deliberate missing formatting to check that the tests fail. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107866013 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107866013 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCLGd | simonw 9599 | 2022-04-24T15:42:07Z | 2022-04-24T15:42:07Z | OWNER | In the absence of |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107865493 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107865493 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCK-V | simonw 9599 | 2022-04-24T15:39:02Z | 2022-04-24T15:39:02Z | OWNER | There's no |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107863924 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107863924 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCKl0 | simonw 9599 | 2022-04-24T15:30:03Z | 2022-04-24T15:30:03Z | OWNER | On the one hand, I'm not crazy about some of the indentation decisions Black made here - in particular this one, which I had indented deliberately for readability:

Also: I've been mentally trying to keep the line lengths a bit shorter to help them be more readable on mobile devices. I'll try a different line length using I like this more - here's the result for that example:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107863365 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107863365 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCKdF | simonw 9599 | 2022-04-24T15:26:41Z | 2022-04-24T15:26:41Z | OWNER | Tried this:

Note that you need to pass @@ -412,12 +408,16 @@ To include an expiry, add a

The resulting cookie will encode data that looks something like this: diff --git a/docs/spatialite.rst b/docs/spatialite.rst index d1b300b..556bad8 100644 --- a/docs/spatialite.rst +++ b/docs/spatialite.rst @@ -58,19 +58,22 @@ Here's a recipe for taking a table with existing latitude and longitude columns, .. code-block:: python

Querying polygons using within()

diff --git a/docs/writing_plugins.rst b/docs/writing_plugins.rst

index bd60a4b..5af01f6 100644

--- a/docs/writing_plugins.rst

+++ b/docs/writing_plugins.rst

@@ -18,9 +18,10 @@ The quickest way to start writing a plugin is to create a + @hookimpl def prepare_connection(conn): - conn.create_function('hello_world', 0, lambda: 'Hello world!') + conn.create_function("hello_world", 0, lambda: "Hello world!") If you save this in @@ -60,22 +61,18 @@ The example consists of two files: a

And a Python module file,

Having built a plugin in this way you can turn it into an installable package using the following command:: @@ -123,11 +120,13 @@ To bundle the static assets for a plugin in the package that you publish to PyPI .. code-block:: python

Where @@ -152,11 +151,13 @@ Templates should be bundled for distribution using the same .. code-block:: python

You can also use wildcards here such as |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | |

| 1107862882 | https://github.com/simonw/datasette/issues/1718#issuecomment-1107862882 | https://api.github.com/repos/simonw/datasette/issues/1718 | IC_kwDOBm6k_c5CCKVi | simonw 9599 | 2022-04-24T15:23:56Z | 2022-04-24T15:23:56Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Code examples in the documentation should be formatted with Black 1213683988 | ||

| 1107848097 | https://github.com/simonw/datasette/pull/1717#issuecomment-1107848097 | https://api.github.com/repos/simonw/datasette/issues/1717 | IC_kwDOBm6k_c5CCGuh | simonw 9599 | 2022-04-24T14:02:37Z | 2022-04-24T14:02:37Z | OWNER | This is a neat feature, thanks! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Add timeout option to Cloudrun build 1213281044 | |

| 1107459446 | https://github.com/simonw/datasette/pull/1717#issuecomment-1107459446 | https://api.github.com/repos/simonw/datasette/issues/1717 | IC_kwDOBm6k_c5CAn12 | codecov[bot] 22429695 | 2022-04-23T11:56:36Z | 2022-04-23T11:56:36Z | NONE | Codecov Report

```diff @@ Coverage Diff @@ main #1717 +/-=======================================

Coverage 91.75% 91.75% | Impacted Files | Coverage Δ | |

|---|---|---|

| datasette/publish/cloudrun.py | Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Add timeout option to Cloudrun build 1213281044 | |

| 1106989581 | https://github.com/simonw/datasette/issues/1715#issuecomment-1106989581 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5B-1IN | simonw 9599 | 2022-04-22T23:03:29Z | 2022-04-22T23:03:29Z | OWNER | I'm having second thoughts about injecting |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1106947168 | https://github.com/simonw/datasette/issues/1715#issuecomment-1106947168 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5B-qxg | simonw 9599 | 2022-04-22T22:25:57Z | 2022-04-22T22:26:06Z | OWNER | ```python async def database(request: Request, datasette: Datasette) -> Database: database_route = tilde_decode(request.url_vars["database"]) try: return datasette.get_database(route=database_route) except KeyError: raise NotFound("Database not found: {}".format(database_route)) async def table_name(request: Request) -> str: return tilde_decode(request.url_vars["table"]) ``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1106945876 | https://github.com/simonw/datasette/issues/1715#issuecomment-1106945876 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5B-qdU | simonw 9599 | 2022-04-22T22:24:29Z | 2022-04-22T22:24:29Z | OWNER | Looking at the start of I'm going to resolve |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1106923258 | https://github.com/simonw/datasette/issues/1716#issuecomment-1106923258 | https://api.github.com/repos/simonw/datasette/issues/1716 | IC_kwDOBm6k_c5B-k76 | simonw 9599 | 2022-04-22T22:02:07Z | 2022-04-22T22:02:07Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Configure git blame to ignore Black commit 1212838949 | ||

| 1106908642 | https://github.com/simonw/datasette/issues/1715#issuecomment-1106908642 | https://api.github.com/repos/simonw/datasette/issues/1715 | IC_kwDOBm6k_c5B-hXi | simonw 9599 | 2022-04-22T21:47:55Z | 2022-04-22T21:47:55Z | OWNER | I need a Something like this perhaps:

One thing I could do: break out is the code that turns a request into a list of pairs extracted from the request - this code here: https://github.com/simonw/datasette/blob/8338c66a57502ef27c3d7afb2527fbc0663b2570/datasette/views/table.py#L442-L449 I could turn that into a typed dependency injection function like this:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Refactor TableView to use asyncinject 1212823665 | |

| 1105642187 | https://github.com/simonw/datasette/issues/1101#issuecomment-1105642187 | https://api.github.com/repos/simonw/datasette/issues/1101 | IC_kwDOBm6k_c5B5sLL | eyeseast 25778 | 2022-04-21T18:59:08Z | 2022-04-21T18:59:08Z | CONTRIBUTOR | Ha! That was your idea (and a good one). But it's probably worth measuring to see what overhead it adds. It did require both passing in the database and making the whole thing Just timing the queries themselves:

Looking at the network panel:

I'm not sure how best to time the GeoJSON generation, but it would be interesting to check. Maybe I'll write a plugin to add query times to response headers. The other thing to consider with async streaming is that it might be well-suited for a slower response. When I have to get the whole result and send a response in a fixed amount of time, I need the most efficient query possible. If I can hang onto a connection and get things one chunk at a time, maybe it's ok if there's some overhead. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

register_output_renderer() should support streaming data 749283032 | |

| 1105615625 | https://github.com/simonw/datasette/issues/1101#issuecomment-1105615625 | https://api.github.com/repos/simonw/datasette/issues/1101 | IC_kwDOBm6k_c5B5lsJ | simonw 9599 | 2022-04-21T18:31:41Z | 2022-04-21T18:32:22Z | OWNER | The ```python

My PostgreSQL/MySQL engineering brain says that this would be better handled by doing a chunk of these (maybe 100) at once, to avoid the per-query-overhead - but with SQLite that might not be necessary. At any rate, this is one of the reasons I'm interested in "iterate over this sequence of chunks of 100 rows at a time" as a potential option here. Of course, a better solution would be for |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

register_output_renderer() should support streaming data 749283032 | |

| 1105608964 | https://github.com/simonw/datasette/issues/1101#issuecomment-1105608964 | https://api.github.com/repos/simonw/datasette/issues/1101 | IC_kwDOBm6k_c5B5kEE | simonw 9599 | 2022-04-21T18:26:29Z | 2022-04-21T18:26:29Z | OWNER | I'm questioning if the mechanisms should be separate at all now - a single response rendering is really just a case of a streaming response that only pulls the first N records from the iterator. It probably needs to be an This actually gets a fair bit more complicated due to the work I'm doing right now to improve the default JSON API:

I want to do things like make faceting results optionally available to custom renderers - which is a separate concern from streaming rows. I'm going to poke around with a bunch of prototypes and see what sticks. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

register_output_renderer() should support streaming data 749283032 | |

| 1105588651 | https://github.com/simonw/datasette/issues/1101#issuecomment-1105588651 | https://api.github.com/repos/simonw/datasette/issues/1101 | IC_kwDOBm6k_c5B5fGr | eyeseast 25778 | 2022-04-21T18:15:39Z | 2022-04-21T18:15:39Z | CONTRIBUTOR | What if you split rendering and streaming into two things:

That way current plugins still work, and streaming is purely additive. A |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

register_output_renderer() should support streaming data 749283032 | |

| 1105571003 | https://github.com/simonw/datasette/issues/1101#issuecomment-1105571003 | https://api.github.com/repos/simonw/datasette/issues/1101 | IC_kwDOBm6k_c5B5ay7 | simonw 9599 | 2022-04-21T18:10:38Z | 2022-04-21T18:10:46Z | OWNER | Maybe the simplest design for this is to add an optional

Or it could use the existing |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

register_output_renderer() should support streaming data 749283032 | |

| 1105474232 | https://github.com/dogsheep/github-to-sqlite/issues/72#issuecomment-1105474232 | https://api.github.com/repos/dogsheep/github-to-sqlite/issues/72 | IC_kwDODFdgUs5B5DK4 | simonw 9599 | 2022-04-21T17:02:15Z | 2022-04-21T17:02:15Z | MEMBER | That's interesting - yeah it looks like the number of pages can be derived from the https://docs.github.com/en/rest/guides/traversing-with-pagination |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

feature: display progress bar when downloading multi-page responses 1211283427 | |

| 1105464661 | https://github.com/simonw/datasette/pull/1574#issuecomment-1105464661 | https://api.github.com/repos/simonw/datasette/issues/1574 | IC_kwDOBm6k_c5B5A1V | dholth 208018 | 2022-04-21T16:51:24Z | 2022-04-21T16:51:24Z | NONE | tfw you have more ephemeral storage than upstream bandwidth ``` FROM python:3.10-slim AS base RUN apt update && apt -y install zstd ENV DATASETTE_SECRET 'sosecret' RUN --mount=type=cache,target=/root/.cache/pip pip install -U datasette datasette-pretty-json datasette-graphql ENV PORT 8080 EXPOSE 8080 FROM base AS pack COPY . /app WORKDIR /app RUN datasette inspect --inspect-file inspect-data.json RUN zstd --rm *.db FROM base AS unpack COPY --from=pack /app /app WORKDIR /app CMD ["/bin/bash", "-c", "shopt -s nullglob && zstd --rm -d .db.zst && datasette serve --host 0.0.0.0 --cors --inspect-file inspect-data.json --metadata metadata.json --create --port $PORT .db"] ``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

introduce new option for datasette package to use a slim base image 1084193403 | |

| 1103312860 | https://github.com/simonw/datasette/issues/1713#issuecomment-1103312860 | https://api.github.com/repos/simonw/datasette/issues/1713 | IC_kwDOBm6k_c5Bwzfc | fgregg 536941 | 2022-04-20T00:52:19Z | 2022-04-20T00:52:19Z | CONTRIBUTOR | feels related to #1402 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Datasette feature for publishing snapshots of query results 1203943272 | |

| 1101594549 | https://github.com/simonw/sqlite-utils/issues/425#issuecomment-1101594549 | https://api.github.com/repos/simonw/sqlite-utils/issues/425 | IC_kwDOCGYnMM5BqP-1 | simonw 9599 | 2022-04-18T17:36:14Z | 2022-04-18T17:36:14Z | OWNER | Releated: - #408 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

`sqlite3.NotSupportedError`: deterministic=True requires SQLite 3.8.3 or higher 1203842656 | |

| 1100243987 | https://github.com/simonw/datasette/pull/1159#issuecomment-1100243987 | https://api.github.com/repos/simonw/datasette/issues/1159 | IC_kwDOBm6k_c5BlGQT | lovasoa 552629 | 2022-04-15T17:24:43Z | 2022-04-15T17:24:43Z | NONE | @simonw : do you think this could be merged ? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Improve the display of facets information 774332247 | |

| 1099540225 | https://github.com/simonw/datasette/issues/1713#issuecomment-1099540225 | https://api.github.com/repos/simonw/datasette/issues/1713 | IC_kwDOBm6k_c5BiacB | eyeseast 25778 | 2022-04-14T19:09:57Z | 2022-04-14T19:09:57Z | CONTRIBUTOR | I wonder if this overlaps with what I outlined in #1605. You could run something like this:

And maybe that does what you need. Of course, that plugin isn't built yet. But that's the idea. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Datasette feature for publishing snapshots of query results 1203943272 | |

| 1099443468 | https://github.com/simonw/datasette/issues/1713#issuecomment-1099443468 | https://api.github.com/repos/simonw/datasette/issues/1713 | IC_kwDOBm6k_c5BiC0M | rayvoelker 9308268 | 2022-04-14T17:26:27Z | 2022-04-14T17:26:27Z | NONE | What would be an awesome feature as a plugin would be to be able to save a query (and possibly even results) to a github gist. Being able to share results that way would be super fantastic. Possibly even in Jupyter Notebook format (since github and github gists nicely render those)! I know there's the handy datasette-saved-queries plugin, but a button that could export stuff out and then even possibly import stuff back in (I'm sort of thinking the way that Google Colab allows you to save to github, and then pull the notebook back in is a really great workflow

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Datasette feature for publishing snapshots of query results 1203943272 | |

| 1098628334 | https://github.com/simonw/datasette/issues/1713#issuecomment-1098628334 | https://api.github.com/repos/simonw/datasette/issues/1713 | IC_kwDOBm6k_c5Be7zu | simonw 9599 | 2022-04-14T01:43:00Z | 2022-04-14T01:43:13Z | OWNER | Current workaround for fast publishing to S3: |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Datasette feature for publishing snapshots of query results 1203943272 | |

| 1098548931 | https://github.com/simonw/sqlite-utils/issues/421#issuecomment-1098548931 | https://api.github.com/repos/simonw/sqlite-utils/issues/421 | IC_kwDOCGYnMM5BeobD | simonw 9599 | 2022-04-13T22:41:59Z | 2022-04-13T22:41:59Z | OWNER | I'm going to close this ticket since it looks like this is a bug in the way the Dockerfile builds Python, but I'm going to ship a fix for that issue I found so the |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"Error: near "(": syntax error" when using sqlite-utils indexes CLI 1180427792 | |

| 1098548090 | https://github.com/simonw/sqlite-utils/issues/424#issuecomment-1098548090 | https://api.github.com/repos/simonw/sqlite-utils/issues/424 | IC_kwDOCGYnMM5BeoN6 | simonw 9599 | 2022-04-13T22:40:15Z | 2022-04-13T22:40:15Z | OWNER | New error: ```pycon

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Better error message if you try to create a table with no columns 1200866134 | |

| 1098545390 | https://github.com/simonw/sqlite-utils/issues/425#issuecomment-1098545390 | https://api.github.com/repos/simonw/sqlite-utils/issues/425 | IC_kwDOCGYnMM5Benju | simonw 9599 | 2022-04-13T22:34:52Z | 2022-04-13T22:34:52Z | OWNER | That broke Python 3.7 because it doesn't support

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

`sqlite3.NotSupportedError`: deterministic=True requires SQLite 3.8.3 or higher 1203842656 | |

| 1098537000 | https://github.com/simonw/sqlite-utils/issues/425#issuecomment-1098537000 | https://api.github.com/repos/simonw/sqlite-utils/issues/425 | IC_kwDOCGYnMM5Belgo | simonw 9599 | 2022-04-13T22:18:22Z | 2022-04-13T22:18:22Z | OWNER | I figured out a workaround in https://github.com/simonw/sqlite-utils/issues/421#issuecomment-1098535531 The current This alternative implementation worked in the environment where that failed:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

`sqlite3.NotSupportedError`: deterministic=True requires SQLite 3.8.3 or higher 1203842656 | |

| 1098535531 | https://github.com/simonw/sqlite-utils/issues/421#issuecomment-1098535531 | https://api.github.com/repos/simonw/sqlite-utils/issues/421 | IC_kwDOCGYnMM5BelJr | simonw 9599 | 2022-04-13T22:15:48Z | 2022-04-13T22:15:48Z | OWNER | Trying this alternative implementation of the

countries idx_countries_country_name 0 1 country 0 BINARY 1 countries idx_countries_country_name 1 2 name 0 BINARY 1 ``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"Error: near "(": syntax error" when using sqlite-utils indexes CLI 1180427792 | |

| 1098532220 | https://github.com/simonw/sqlite-utils/issues/421#issuecomment-1098532220 | https://api.github.com/repos/simonw/sqlite-utils/issues/421 | IC_kwDOCGYnMM5BekV8 | simonw 9599 | 2022-04-13T22:09:52Z | 2022-04-13T22:09:52Z | OWNER | That error is weird - it's not supposed to happen according to this code here: https://github.com/simonw/sqlite-utils/blob/95522ad919f96eb6cc8cd3cd30389b534680c717/sqlite_utils/db.py#L389-L400 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"Error: near "(": syntax error" when using sqlite-utils indexes CLI 1180427792 | |

| 1098531354 | https://github.com/simonw/sqlite-utils/issues/421#issuecomment-1098531354 | https://api.github.com/repos/simonw/sqlite-utils/issues/421 | IC_kwDOCGYnMM5BekIa | simonw 9599 | 2022-04-13T22:08:20Z | 2022-04-13T22:08:20Z | OWNER | OK I figured out what's going on here. First I added an extra Error: near "(": syntax error

Then I checked the version that So the problem here is that the Python in that Docker image is running a very old version of SQLite. I tried using the trick in https://til.simonwillison.net/sqlite/ld-preload as a workaround, and it almost worked:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"Error: near "(": syntax error" when using sqlite-utils indexes CLI 1180427792 | |

| 1098295517 | https://github.com/simonw/sqlite-utils/issues/421#issuecomment-1098295517 | https://api.github.com/repos/simonw/sqlite-utils/issues/421 | IC_kwDOCGYnMM5Bdqjd | simonw 9599 | 2022-04-13T17:16:20Z | 2022-04-13T17:16:20Z | OWNER | Aha! I was able to replicate the bug using your To build your |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"Error: near "(": syntax error" when using sqlite-utils indexes CLI 1180427792 | |

| 1098288158 | https://github.com/simonw/sqlite-utils/issues/421#issuecomment-1098288158 | https://api.github.com/repos/simonw/sqlite-utils/issues/421 | IC_kwDOCGYnMM5Bdowe | simonw 9599 | 2022-04-13T17:07:53Z | 2022-04-13T17:07:53Z | OWNER | I can't replicate the bug I'm afraid:

```

% wget "https://github.com/wri/global-power-plant-database/blob/232a6666/output_database/global_power_plant_database.csv?raw=true" % sqlite-utils extract global.db power_plants country country_long \

--table countries \

--fk-column country_id \

--rename country_long name

% sqlite-utils indexes global.db --table countries idx_countries_country_name 0 1 country 0 BINARY 1 countries idx_countries_country_name 1 2 name 0 BINARY 1 ``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"Error: near "(": syntax error" when using sqlite-utils indexes CLI 1180427792 | |

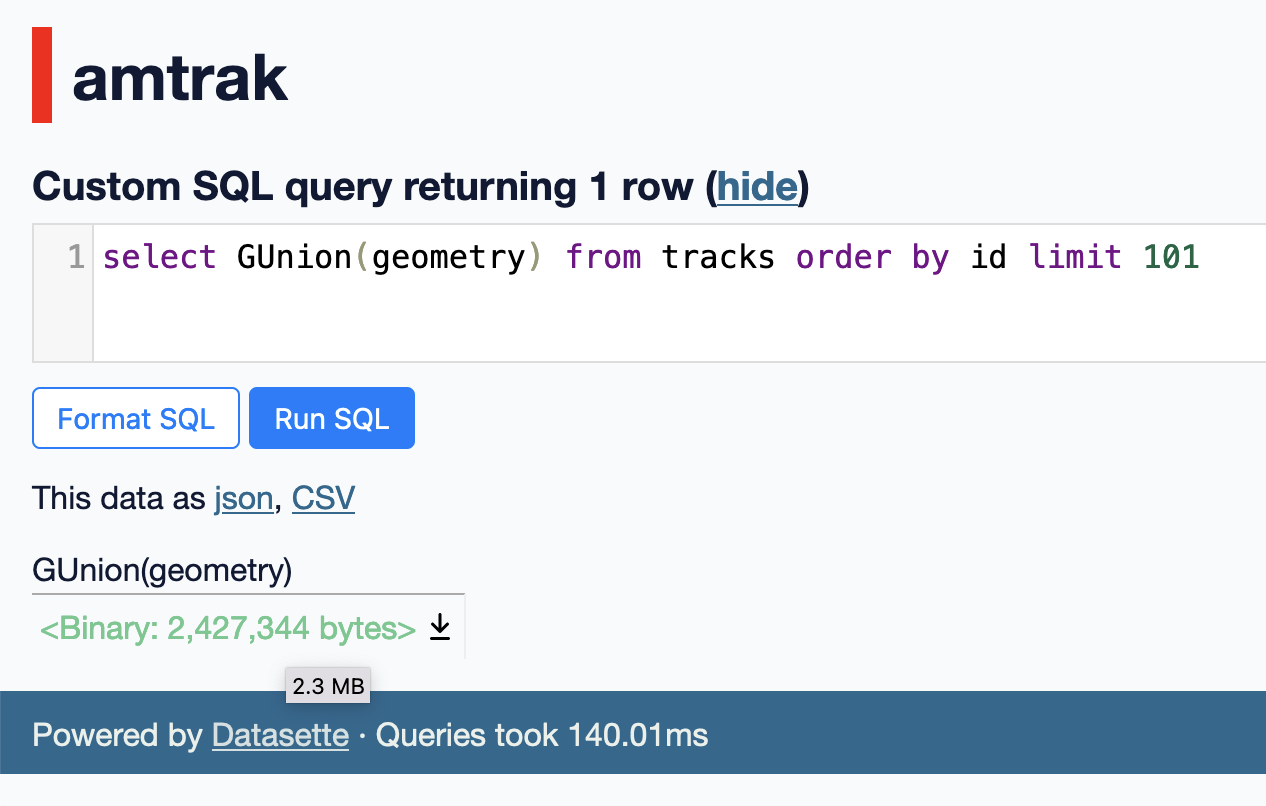

| 1097115034 | https://github.com/simonw/datasette/issues/1712#issuecomment-1097115034 | https://api.github.com/repos/simonw/datasette/issues/1712 | IC_kwDOBm6k_c5BZKWa | simonw 9599 | 2022-04-12T19:12:21Z | 2022-04-12T19:12:21Z | OWNER | Got a TIL out of this too: https://til.simonwillison.net/spatialite/gunion-to-combine-geometries |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Make "<Binary: 2427344 bytes>" easier to read 1202227104 | |

| 1097076622 | https://github.com/simonw/datasette/issues/1712#issuecomment-1097076622 | https://api.github.com/repos/simonw/datasette/issues/1712 | IC_kwDOBm6k_c5BZA-O | simonw 9599 | 2022-04-12T18:42:04Z | 2022-04-12T18:42:04Z | OWNER | I'm not going to show the tooltip if the formatted number is in bytes. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Make "<Binary: 2427344 bytes>" easier to read 1202227104 | |

| 1097068474 | https://github.com/simonw/datasette/issues/1712#issuecomment-1097068474 | https://api.github.com/repos/simonw/datasette/issues/1712 | IC_kwDOBm6k_c5BY--6 | simonw 9599 | 2022-04-12T18:38:18Z | 2022-04-12T18:38:18Z | OWNER |

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Make "<Binary: 2427344 bytes>" easier to read 1202227104 | |