```",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2139/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1802613340,PR_kwDOBm6k_c5VZhfw,2100,Make primary key view accessible to render_cell hook,1563881,meowcat,open,0,,,,,0,2023-07-13T09:30:36Z,2023-08-10T13:15:41Z,,FIRST_TIME_CONTRIBUTOR,simonw/datasette/pulls/2100,"

----

:books: Documentation preview :books:: https://datasette--2100.org.readthedocs.build/en/2100/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2100/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1838469176,I_kwDOBm6k_c5tlNA4,2127,Context base class to support documenting the context,9599,simonw,open,0,,,3268330,Datasette 1.0,3,2023-08-07T00:01:02Z,2023-08-10T01:30:25Z,,OWNER,,"This idea first came up here:

- https://github.com/simonw/datasette/issues/2112#issuecomment-1652751140

If `datasette.render_template(...)` takes an optional `Context` subclass as an alternative to a context dictionary, I could then use dataclasses to define the context made available to specific templates - which then gives me something I can use to help document what they are.

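A minimal sketch of how that could look, assuming hypothetical `Context`, `DatabaseContext` and `describe_context` names (none of these are existing Datasette APIs). The field metadata is what would feed the documentation:

```python
from dataclasses import dataclass, field, fields


@dataclass
class Context:
    # Hypothetical base class for template contexts; not part of Datasette.
    pass


@dataclass
class DatabaseContext(Context):
    # Hypothetical context for a database template.
    database: str = field(metadata={'help': 'Name of the database'})
    tables: list = field(
        default_factory=list, metadata={'help': 'List of table names'}
    )


def describe_context(context_class):
    # Build one documentation line per field from the dataclass metadata.
    return [
        '{} - {}'.format(f.name, f.metadata.get('help', ''))
        for f in fields(context_class)
    ]
```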
Also refs:

- https://github.com/simonw/datasette/issues/1510",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2127/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1843391585,I_kwDOBm6k_c5t3-xh,2134,Add writable canned query demo to latest.datasette.io,9599,simonw,closed,0,,,,,5,2023-08-09T14:31:30Z,2023-08-10T01:22:46Z,2023-08-10T01:05:56Z,OWNER,,"This would be useful while working on:

- #2114",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2134/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1844213115,I_kwDOBm6k_c5t7HV7,2138,on_success_message_sql option for writable canned queries,9599,simonw,closed,0,,,8755003,Datasette 1.0a-next,2,2023-08-10T00:20:14Z,2023-08-10T00:39:40Z,2023-08-10T00:34:26Z,OWNER,,"> Or... how about if the `on_success_message` option could define a SQL query to be executed to generate that message? Maybe `on_success_message_sql`.

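A rough sketch of that idea using the standard library `sqlite3` module. The `run_writable_query` helper and its fallback message are hypothetical, and this is not a description of how Datasette implements the option:

```python
import sqlite3


def run_writable_query(conn, sql, params, on_success_message_sql=None):
    # Hypothetical helper: run the write query inside a transaction.
    with conn:
        conn.execute(sql, params)
    if on_success_message_sql is None:
        return 'Query executed'
    # Run the message query with the same named parameters and use the
    # first column of the first row as the success message.
    row = conn.execute(on_success_message_sql, params).fetchone()
    return row[0] if row else 'Query executed'
```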
- https://github.com/simonw/datasette/issues/2134",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2138/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1841501975,I_kwDOBm6k_c5twxcX,2133,[feature request]`datasette install plugins.json` options,54462,HaveF,closed,0,,,,,9,2023-08-08T15:06:50Z,2023-08-10T00:31:24Z,2023-08-09T22:04:46Z,NONE,,"Hi, simon ❤️

`datasette plugins --all > plugins.json` can export information about all installed plugins. It would be great to be able to install all of those plugins on another machine with just `datasette install plugins.json`",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2133/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

627794879,MDU6SXNzdWU2Mjc3OTQ4Nzk=,782,Redesign default .json format,9599,simonw,closed,0,,,8755003,Datasette 1.0a-next,55,2020-05-30T18:47:07Z,2023-08-10T00:07:17Z,2023-08-10T00:07:17Z,OWNER,,The default JSON just isn't right. I find myself using `?_shape=array` for almost everything I build against the API.,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/782/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1843600087,I_kwDOBm6k_c5t4xrX,2135,Release notes for 1.0a3,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,3,2023-08-09T16:09:26Z,2023-08-09T19:17:07Z,2023-08-09T19:17:06Z,OWNER,,118 commits! https://github.com/simonw/datasette/compare/1.0a2...26be9f0445b753fb84c802c356b0791a72269f25,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2135/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1843710170,I_kwDOBm6k_c5t5Mja,2136,Query view shouldn't return `columns`,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,4,2023-08-09T17:23:57Z,2023-08-09T19:03:04Z,2023-08-09T19:03:04Z,OWNER,,"I just noticed that https://latest.datasette.io/fixtures/roadside_attraction_characteristics.json?_labels=on&_size=1 returns:

```json

{

""ok"": true,

""next"": ""1"",

""rows"": [

{

""rowid"": 1,

""attraction_id"": {

""value"": 1,

""label"": ""The Mystery Spot""

},

""characteristic_id"": {

""value"": 2,

""label"": ""Paranormal""

}

}

],

""truncated"": false

}

```

But https://latest.datasette.io/fixtures.json?sql=select+rowid%2C+attraction_id%2C+characteristic_id+from+roadside_attraction_characteristics+order+by+rowid+limit+1 returns:

```json

{

""rows"": [

{

""rowid"": 1,

""attraction_id"": 1,

""characteristic_id"": 2

}

],

""columns"": [

""rowid"",

""attraction_id"",

""characteristic_id""

],

""ok"": true,

""truncated"": false

}

```

The `columns` key in the query response is inconsistent with the table response.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2136/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1843821954,I_kwDOBm6k_c5t5n2C,2137,Redesign row default JSON,9599,simonw,open,0,,,8755003,Datasette 1.0a-next,1,2023-08-09T18:49:11Z,2023-08-09T19:02:47Z,,OWNER,,"This URL here:

https://latest.datasette.io/fixtures/simple_primary_key/1.json?_extras=foreign_key_tables

```json

{

""database"": ""fixtures"",

""table"": ""simple_primary_key"",

""rows"": [

{

""id"": ""1"",

""content"": ""hello""

}

],

""columns"": [

""id"",

""content""

],

""primary_keys"": [

""id""

],

""primary_key_values"": [

""1""

],

""units"": {},

""foreign_key_tables"": [

{

""other_table"": ""foreign_key_references"",

""column"": ""id"",

""other_column"": ""foreign_key_with_blank_label"",

""count"": 0,

""link"": ""/fixtures/foreign_key_references?foreign_key_with_blank_label=1""

},

{

""other_table"": ""foreign_key_references"",

""column"": ""id"",

""other_column"": ""foreign_key_with_label"",

""count"": 1,

""link"": ""/fixtures/foreign_key_references?foreign_key_with_label=1""

},

{

""other_table"": ""complex_foreign_keys"",

""column"": ""id"",

""other_column"": ""f3"",

""count"": 1,

""link"": ""/fixtures/complex_foreign_keys?f3=1""

},

{

""other_table"": ""complex_foreign_keys"",

""column"": ""id"",

""other_column"": ""f2"",

""count"": 0,

""link"": ""/fixtures/complex_foreign_keys?f2=1""

},

{

""other_table"": ""complex_foreign_keys"",

""column"": ""id"",

""other_column"": ""f1"",

""count"": 1,

""link"": ""/fixtures/complex_foreign_keys?f1=1""

}

],

""query_ms"": 4.226590999678592,

""source"": ""tests/fixtures.py"",

""source_url"": ""https://github.com/simonw/datasette/blob/main/tests/fixtures.py"",

""license"": ""Apache License 2.0"",

""license_url"": ""https://github.com/simonw/datasette/blob/main/LICENSE"",

""ok"": true,

""truncated"": false

}

```

That `?_extras=` should be `?_extra=` - plus the row JSON should be redesigned to fit the new default JSON representation.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2137/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1822939274,I_kwDOBm6k_c5sp9iK,2113,Implement and document extras for the new query view page,9599,simonw,open,0,,,8755003,Datasette 1.0a-next,3,2023-07-26T18:24:01Z,2023-08-09T17:35:22Z,,OWNER,,- #2109 ,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2113/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1560662739,I_kwDOBm6k_c5dBdLT,2007,`render_cell()` hook should take an optional `request` argument,9599,simonw,closed,0,,,,,1,2023-01-28T03:13:00Z,2023-08-09T17:15:03Z,2023-01-28T03:34:26Z,OWNER,,From Discord: https://discordapp.com/channels/823971286308356157/996877076982415491/1068227071156965486,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2007/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1840417903,I_kwDOBm6k_c5tsoxv,2131,Refactor code that supports templates_considered comment,9599,simonw,open,0,,,3268330,Datasette 1.0,1,2023-08-08T01:28:36Z,2023-08-09T15:27:41Z,,OWNER,,"I ended up duplicating it here: https://github.com/simonw/datasette/blob/7532feb424b1dce614351e21b2265c04f9669fe2/datasette/views/database.py#L164-L167

I think it should move to `datasette.render_template()` - and maybe have a renamed template variable too.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2131/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1822940263,I_kwDOBm6k_c5sp9xn,2114,Implement canned queries against new query JSON work,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,3,2023-07-26T18:24:50Z,2023-08-09T15:26:58Z,2023-08-09T15:26:57Z,OWNER,,- #2109 ,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2114/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

771511344,MDExOlB1bGxSZXF1ZXN0NTQzMDE1ODI1,31,Update for Big Sur,41546558,RhetTbull,open,0,,,,,7,2020-12-20T04:36:45Z,2023-08-08T15:52:52Z,,CONTRIBUTOR,dogsheep/dogsheep-photos/pulls/31,Refactored out the SQL for extracting aesthetic scores to use osxphotos -- adds compatibility for Big Sur via osxphotos which has been updated for new table names in Big Sur. Have not yet refactored the SQL for extracting labels which is still compatible with Big Sur.,256834907,dogsheep-photos,pull,,,"{""url"": ""https://api.github.com/repos/dogsheep/dogsheep-photos/issues/31/reactions"", ""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1841343173,I_kwDOBm6k_c5twKrF,2132,Get form fields on query page working again ,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,1,2023-08-08T13:39:05Z,2023-08-08T13:45:10Z,2023-08-08T13:45:09Z,OWNER,,"Caused by:

- #2112

https://latest.datasette.io/fixtures?sql=select+pk1%2C+pk2%2C+pk3%2C+content+from+compound_three_primary_keys+where+%22pk1%22+%3D+%3Ap0+order+by+pk1%2C+pk2%2C+pk3+limit+101&p0=b

![]()

The `:p0` form field is missing. Submitting the form results in this error:

![]()

",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2132/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1840324765,I_kwDOBm6k_c5tsSCd,2129,CSV ?sql= should indicate errors,9599,simonw,open,0,,,3268330,Datasette 1.0,1,2023-08-07T23:13:04Z,2023-08-08T02:02:21Z,,OWNER,,"> https://latest.datasette.io/_memory.csv?sql=select+blah is a blank page right now:

```bash

curl -I 'https://latest.datasette.io/_memory.csv?sql=select+blah'

```

```

HTTP/2 200

access-control-allow-origin: *

access-control-allow-headers: Authorization, Content-Type

access-control-expose-headers: Link

access-control-allow-methods: GET, POST, HEAD, OPTIONS

access-control-max-age: 3600

content-type: text/plain; charset=utf-8

x-databases: _memory, _internal, fixtures, fixtures2, extra_database, ephemeral

date: Mon, 07 Aug 2023 23:12:15 GMT

server: Google Frontend

```

_Originally posted by @simonw in https://github.com/simonw/datasette/issues/2118#issuecomment-1668688947_",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2129/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1822982933,I_kwDOBm6k_c5sqIMV,2117,Figure out what to do about `DatabaseView.name`,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,1,2023-07-26T18:58:06Z,2023-08-08T02:02:07Z,2023-08-08T02:02:07Z,OWNER,,"In the old code:

https://github.com/simonw/datasette/blob/08181823990a71ffa5a1b57b37259198eaa43e06/datasette/views/database.py#L34-L35

This `name` class attribute was later used by some of the plugin hooks, passed as `view_name`: https://github.com/simonw/datasette/blob/18dd88ee4d78fe9d760e9da96028ae06d938a85c/datasette/hookspecs.py#L50-L54

Figure out how that should work once I've refactored those classes to view functions instead.

Refs:

- #2109 ",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2117/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1822940964,I_kwDOBm6k_c5sp98k,2115,Ensure all tests pass against new query view JSON,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,0,2023-07-26T18:25:20Z,2023-08-08T02:01:39Z,2023-08-08T02:01:38Z,OWNER,,- #2109 ,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2115/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1822938661,I_kwDOBm6k_c5sp9Yl,2112,Build HTML version of /content?sql=...,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,5,2023-07-26T18:23:34Z,2023-08-08T02:01:09Z,2023-08-08T02:01:01Z,OWNER,,"This will help make the hook as robust as possible.

- #2109 ",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2112/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1822937426,I_kwDOBm6k_c5sp9FS,2111,Implement new /content.json?sql=...,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,4,2023-07-26T18:22:39Z,2023-08-08T02:00:37Z,2023-08-08T02:00:22Z,OWNER,,"This will be the base that the remaining work builds on top of. Refs:

- #2109 ",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2111/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1840329615,I_kwDOBm6k_c5tsTOP,2130,Render plugin mechanism needs `error` and `truncated` fields,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,2,2023-08-07T23:19:19Z,2023-08-08T01:51:54Z,2023-08-08T01:47:42Z,OWNER,,"While working on:

- https://github.com/simonw/datasette/pull/2118

It became clear that the `render` callback function documented here: https://docs.datasette.io/en/0.64.3/plugin_hooks.html#register-output-renderer-datasette

Needs to grow the ability to be told if an error occurred (an `error` string) and if the results were truncated (a `truncated` boolean).",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2130/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1823352380,PR_kwDOBm6k_c5Wfgd9,2118,New JSON design for query views,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,11,2023-07-26T23:29:21Z,2023-08-08T01:47:40Z,2023-08-08T01:47:39Z,OWNER,simonw/datasette/pulls/2118,"WIP. Refs:

- #2109

----

:books: Documentation preview :books:: https://datasette--2118.org.readthedocs.build/en/2118/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2118/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1833193570,PR_kwDOBm6k_c5XArm3,2125,Bump sphinx from 6.1.3 to 7.1.2,49699333,dependabot[bot],closed,0,,,,,2,2023-08-02T13:28:39Z,2023-08-07T16:20:30Z,2023-08-07T16:20:27Z,CONTRIBUTOR,simonw/datasette/pulls/2125,"Bumps [sphinx](https://github.com/sphinx-doc/sphinx) from 6.1.3 to 7.1.2.

Release notes

Sourced from sphinx's releases.

Sphinx 7.1.2

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Sphinx 7.1.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Sphinx 7.1.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0rc1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Changelog

Sourced from sphinx's changelog.

Release 7.1.2 (released Aug 02, 2023)

Bugs fixed

- #11542: linkcheck: Properly respect :confval:`linkcheck_anchors` and do not spuriously report failures to validate anchors. Patch by James Addison.

Release 7.1.1 (released Jul 27, 2023)

Bugs fixed

- #11514: Fix `SOURCE_DATE_EPOCH` in multi-line copyright footer. Patch by Bénédikt Tran.

Release 7.1.0 (released Jul 24, 2023)

Incompatible changes

Deprecated

- #11412: Emit warnings on using a deprecated Python-specific index entry type (namely, `module`, `keyword`, `operator`, `object`, `exception`, `statement`, and `builtin`) in the :rst:dir:`index` directive, and set the removal version to Sphinx 9. Patch by Adam Turner.

Features added

- #11415: Add a checksum to JavaScript and CSS asset URIs included within generated HTML, using the CRC32 algorithm.

- :meth:`~sphinx.application.Sphinx.require_sphinx` now allows the version requirement to be specified as `(major, minor)`.

- #11011: Allow configuring a line-length limit for object signatures, via :confval:`maximum_signature_line_length` and the domain-specific variants. If the length of the signature (in characters) is greater than the configured limit, each parameter in the signature will be split to its own logical line. This behaviour may also be controlled by options on object description directives, for example :rst:dir:`py:function:single-line-parameter-list`.

... (truncated)

Commits

Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting `@dependabot rebase`.

[//]: # (dependabot-automerge-start)

[//]: # (dependabot-automerge-end)

---

Dependabot commands and options

You can trigger Dependabot actions by commenting on this PR:

- `@dependabot rebase` will rebase this PR

- `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it

- `@dependabot merge` will merge this PR after your CI passes on it

- `@dependabot squash and merge` will squash and merge this PR after your CI passes on it

- `@dependabot cancel merge` will cancel a previously requested merge and block automerging

- `@dependabot reopen` will reopen this PR if it is closed

- `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually

- `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself)

----

:books: Documentation preview :books:: https://datasette--2125.org.readthedocs.build/en/2125/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2125/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1824399610,PR_kwDOBm6k_c5WjCS8,2121,Bump furo from 2023.3.27 to 2023.7.26,49699333,dependabot[bot],closed,0,,,,,2,2023-07-27T13:40:48Z,2023-08-07T16:20:23Z,2023-08-07T16:20:20Z,CONTRIBUTOR,simonw/datasette/pulls/2121,"Bumps [furo](https://github.com/pradyunsg/furo) from 2023.3.27 to 2023.7.26.

Changelog

Sourced from furo's changelog.

Changelog

2023.07.26 -- Vigilant Volt

- Fix compatibility with Sphinx 7.1.

- Improve how content overflow is handled.

- Improve how literal blocks containing inline code are handled.

2023.05.20 -- Unassuming Ultramarine

- ✨ Add support for Sphinx 7.

- Drop support for Sphinx 5.

- Improve the screen-reader label for sidebar collapse.

- Make it easier to create derived themes from Furo.

- Bump all JS dependencies (NodeJS and npm packages).

2023.03.27 -- Tasty Tangerine

- Regenerate with newer version of sphinx-theme-builder, to fix RECORD hashes.

- Add missing class to Font Awesome examples

2023.03.23 -- Sassy Saffron

- Update Python version classifiers.

- Increase the icon size in mobile header.

- Increase admonition title bg opacity.

- Change the default API background to transparent.

- Transition the API background change.

- Remove the "indent" of API entries which have a background.

- Break long inline code literals.

2022.12.07 -- Reverent Raspberry

- ✨ Add support for Sphinx 6.

- ✨ Improve footnote presentation with docutils 0.18+.

- Drop support for Sphinx 4.

- Improve documentation about what the edit button does.

- Improve handling of empty-flexboxes for better print experience on Chrome.

- Improve styling for inline signatures.

... (truncated)

Commits

- 35f5307 Prepare release: 2023.07.26

- 0a8bedc Update changelog

- a92dd0c Make _add_asset_hashes a no-op with Sphinx 7.1

- f8db95b Improve literals with inline code are handled

- 1680dbe Document the use of figclass with figure directive

- beebd7e Increase the specificity of the admonition title selector

- 834e951 Setup uploads to Percy

- 27bf2c0 [pre-commit.ci] pre-commit autoupdate (#672)

- c8b51d0 Fix how content overflow is handled

- 80afa27 [pre-commit.ci] pre-commit autoupdate (#652)

- Additional commits viewable in compare view

----

:books: Documentation preview :books:: https://datasette--2121.org.readthedocs.build/en/2121/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2121/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1796830110,PR_kwDOBm6k_c5VFw3j,2098,Bump blacken-docs from 1.14.0 to 1.15.0,49699333,dependabot[bot],closed,0,,,,,2,2023-07-10T13:49:12Z,2023-08-07T16:20:22Z,2023-08-07T16:20:20Z,CONTRIBUTOR,simonw/datasette/pulls/2098,"Bumps [blacken-docs](https://github.com/asottile/blacken-docs) from 1.14.0 to 1.15.0.

Changelog

Sourced from blacken-docs's changelog.

1.15.0 (2023-07-09)

Commits

----

:books: Documentation preview :books:: https://datasette--2098.org.readthedocs.build/en/2098/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2098/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1839766197,PR_kwDOBm6k_c5XWhWF,2128,"Bump blacken-docs, furo, blacken-docs",49699333,dependabot[bot],closed,0,,,,,1,2023-08-07T15:50:40Z,2023-08-07T16:19:25Z,2023-08-07T16:19:24Z,CONTRIBUTOR,simonw/datasette/pulls/2128,"Bumps the python-packages group with 3 updates: [sphinx](https://github.com/sphinx-doc/sphinx), [furo](https://github.com/pradyunsg/furo) and [blacken-docs](https://github.com/asottile/blacken-docs).

Updates `sphinx` from 6.1.3 to 7.1.2

Release notes

Sourced from sphinx's releases.

Sphinx 7.1.2

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Sphinx 7.1.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Sphinx 7.1.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0rc1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Changelog

Sourced from sphinx's changelog.

Release 7.1.2 (released Aug 02, 2023)

Bugs fixed

- #11542: linkcheck: Properly respect :confval:`linkcheck_anchors` and do not spuriously report failures to validate anchors. Patch by James Addison.

Release 7.1.1 (released Jul 27, 2023)

Bugs fixed

- #11514: Fix `SOURCE_DATE_EPOCH` in multi-line copyright footer. Patch by Bénédikt Tran.

Release 7.1.0 (released Jul 24, 2023)

Incompatible changes

Deprecated

- #11412: Emit warnings on using a deprecated Python-specific index entry type (namely, `module`, `keyword`, `operator`, `object`, `exception`, `statement`, and `builtin`) in the :rst:dir:`index` directive, and set the removal version to Sphinx 9. Patch by Adam Turner.

Features added

- #11415: Add a checksum to JavaScript and CSS asset URIs included within generated HTML, using the CRC32 algorithm.

- :meth:`~sphinx.application.Sphinx.require_sphinx` now allows the version requirement to be specified as `(major, minor)`.

- #11011: Allow configuring a line-length limit for object signatures, via :confval:`maximum_signature_line_length` and the domain-specific variants. If the length of the signature (in characters) is greater than the configured limit, each parameter in the signature will be split to its own logical line. This behaviour may also be controlled by options on object description directives, for example :rst:dir:`py:function:single-line-parameter-list`.

... (truncated)

Commits

Updates `furo` from 2023.3.27 to 2023.7.26

Changelog

Sourced from furo's changelog.

Changelog

2023.07.26 -- Vigilant Volt

- Fix compatibility with Sphinx 7.1.

- Improve how content overflow is handled.

- Improve how literal blocks containing inline code are handled.

2023.05.20 -- Unassuming Ultramarine

- ✨ Add support for Sphinx 7.

- Drop support for Sphinx 5.

- Improve the screen-reader label for sidebar collapse.

- Make it easier to create derived themes from Furo.

- Bump all JS dependencies (NodeJS and npm packages).

2023.03.27 -- Tasty Tangerine

- Regenerate with newer version of sphinx-theme-builder, to fix RECORD hashes.

- Add missing class to Font Awesome examples

2023.03.23 -- Sassy Saffron

- Update Python version classifiers.

- Increase the icon size in mobile header.

- Increase admonition title bg opacity.

- Change the default API background to transparent.

- Transition the API background change.

- Remove the "indent" of API entries which have a background.

- Break long inline code literals.

2022.12.07 -- Reverent Raspberry

- ✨ Add support for Sphinx 6.

- ✨ Improve footnote presentation with docutils 0.18+.

- Drop support for Sphinx 4.

- Improve documentation about what the edit button does.

- Improve handling of empty-flexboxes for better print experience on Chrome.

- Improve styling for inline signatures.

... (truncated)

Commits

- 35f5307 Prepare release: 2023.07.26

- 0a8bedc Update changelog

- a92dd0c Make _add_asset_hashes a no-op with Sphinx 7.1

- f8db95b Improve literals with inline code are handled

- 1680dbe Document the use of figclass with figure directive

- beebd7e Increase the specificity of the admonition title selector

- 834e951 Setup uploads to Percy

- 27bf2c0 [pre-commit.ci] pre-commit autoupdate (#672)

- c8b51d0 Fix how content overflow is handled

- 80afa27 [pre-commit.ci] pre-commit autoupdate (#652)

- Additional commits viewable in compare view

Updates `blacken-docs` from 1.14.0 to 1.15.0

Changelog

Sourced from blacken-docs's changelog.

1.15.0 (2023-07-09)

Commits

----

:books: Documentation preview :books:: https://datasette--2128.org.readthedocs.build/en/2128/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2128/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1818838294,I_kwDOCGYnMM5saUUW,578,Plugin hook for adding new output formats,9599,simonw,open,0,,,,,5,2023-07-24T17:29:18Z,2023-08-07T15:41:49Z,,OWNER,,"> What would it take to add a format hook? I'm still thinking about my GIS workflow, and being able to do `sqlite-utils query ... --geojson` would be nice. It's the one place my Datasette workflow is messy, having to do `datasette . --get /path/to/query.geojson --setting max_rows_returned 10000 --load-extension spatialite`.

> I know the current pattern is `--csv`, but maybe `--format geojson` is more future-proof.

https://discord.com/channels/823971286308356157/997738192360964156/1133076679011602432",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/578/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1839344979,I_kwDOCGYnMM5toi1T,582,Handling CSV/file input that contains NUL bytes,1448859,betatim,open,0,,,,,0,2023-08-07T12:24:14Z,2023-08-07T12:24:14Z,,NONE,,"I was using sqlite-utils to create a DB from a CSV and it turns out the CSV contains a NUL byte.

When the processing reaches the line that contains the NUL an exception is raised.

I'm wondering if there is something that can be done in `sqlite-utils` to say ""skip lines with encoding errors"" or some such. I think it isn't super straightforward though as the exception comes from inside the `csv` module that does all the parsing.
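One possible workaround, sketched here as an assumption rather than anything `sqlite-utils` does today: `csv.reader` and `csv.DictReader` accept any iterable of strings, so the NUL characters can be stripped from each line before the `csv` module sees them. The `strip_nul` helper and the in-memory sample are hypothetical stand-ins for the real file.

```python
import csv
import io


def strip_nul(lines):
    # Yield each input line with NUL characters removed, so the csv
    # module never receives them (hypothetical helper, not part of sqlite-utils)
    for line in lines:
        yield line.replace('\0', '')


# Small in-memory sample standing in for the real CSV file
sample = io.StringIO('id,title\n1,ok\n2,bad\0value\n')
rows = list(csv.DictReader(strip_nul(sample)))
```

This silently alters the affected values instead of skipping the line, which may or may not be the behaviour one wants.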

Concretely the file is the `KernelVersions.csv` from https://www.kaggle.com/datasets/kaggle/meta-kaggle

This is the command and output:

```

$ sqlite-utils insert --csv kaggle.db kaggle KernelVersions.csv

[------------------------------------] 0%

[#####################---------------] 60% 00:04:24Traceback (most recent call last):

File ""/home/foobar/miniconda/envs/meta-kaggle/bin/sqlite-utils"", line 10, in <module>

sys.exit(cli())

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/click/core.py"", line 1128, in __call__

return self.main(*args, **kwargs)

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/click/core.py"", line 1053, in main

rv = self.invoke(ctx)

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/click/core.py"", line 1659, in invoke

return _process_result(sub_ctx.command.invoke(sub_ctx))

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/click/core.py"", line 1395, in invoke

return ctx.invoke(self.callback, **ctx.params)

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/click/core.py"", line 754, in invoke

return __callback(*args, **kwargs)

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/sqlite_utils/cli.py"", line 1223, in insert

insert_upsert_implementation(

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/sqlite_utils/cli.py"", line 1085, in insert_upsert_implementation

db[table].insert_all(

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/sqlite_utils/db.py"", line 3198, in insert_all

chunk = list(chunk)

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/sqlite_utils/db.py"", line 3742, in fix_square_braces

for record in records:

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/sqlite_utils/cli.py"", line 1071, in <genexpr>

docs = (decode_base64_values(doc) for doc in docs)

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/sqlite_utils/cli.py"", line 1068, in <genexpr>

docs = (verify_is_dict(doc) for doc in docs)

File ""/home/foobar/miniconda/envs/meta-kaggle/lib/python3.10/site-packages/sqlite_utils/cli.py"", line 1003, in <genexpr>

docs = (dict(zip(headers, row)) for row in reader)

_csv.Error: line contains NUL

```",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/582/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1826424151,PR_kwDOBm6k_c5Wp6Hs,2124,Bump sphinx from 6.1.3 to 7.1.1,49699333,dependabot[bot],closed,0,,,,,2,2023-07-28T13:23:11Z,2023-08-02T13:28:47Z,2023-08-02T13:28:44Z,CONTRIBUTOR,simonw/datasette/pulls/2124,"Bumps [sphinx](https://github.com/sphinx-doc/sphinx) from 6.1.3 to 7.1.1.

Release notes

Sourced from sphinx's releases.

Sphinx 7.1.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Sphinx 7.1.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0rc1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Changelog

Sourced from sphinx's changelog.

Release 7.1.1 (released Jul 27, 2023)

Bugs fixed

- #11514: Fix

SOURCE_DATE_EPOCH in multi-line copyright footer.

Patch by Bénédikt Tran.

Release 7.1.0 (released Jul 24, 2023)

Incompatible changes

Deprecated

- #11412: Emit warnings on using a deprecated Python-specific index entry type

(namely,

module, keyword, operator, object, exception,

statement, and builtin) in the :rst:dir:index directive, and

set the removal version to Sphinx 9. Patch by Adam Turner.

Features added

- #11415: Add a checksum to JavaScript and CSS asset URIs included within

generated HTML, using the CRC32 algorithm.

- :meth:

~sphinx.application.Sphinx.require_sphinx now allows the version

requirement to be specified as (major, minor).

- #11011: Allow configuring a line-length limit for object signatures, via

:confval:

maximum_signature_line_length and the domain-specific variants.

If the length of the signature (in characters) is greater than the configured

limit, each parameter in the signature will be split to its own logical line.

This behaviour may also be controlled by options on object description

directives, for example :rst:dir:py:function:single-line-parameter-list.

Patch by Thomas Louf, Adam Turner, and Jean-François B.

- #10983: Support for multiline copyright statements in the footer block.

Patch by Stefanie Molin

- sphinx.util.display.status_iterator now clears the current line

with ANSI control codes, rather than overprinting with space characters.

- #11431: linkcheck: Treat SSL failures as broken links.

Patch by Bénédikt Tran

- #11157: Keep the

translated attribute on translated nodes.

- #11451: Improve the traceback displayed when using :option:

sphinx-build -T

in parallel builds. Patch by Bénédikt Tran

... (truncated)

Commits


Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting `@dependabot rebase`.

[//]: # (dependabot-automerge-start)

[//]: # (dependabot-automerge-end)

---

Dependabot commands and options

You can trigger Dependabot actions by commenting on this PR:

- `@dependabot rebase` will rebase this PR

- `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it

- `@dependabot merge` will merge this PR after your CI passes on it

- `@dependabot squash and merge` will squash and merge this PR after your CI passes on it

- `@dependabot cancel merge` will cancel a previously requested merge and block automerging

- `@dependabot reopen` will reopen this PR if it is closed

- `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually

- `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself)

----

:books: Documentation preview :books:: https://datasette--2124.org.readthedocs.build/en/2124/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2124/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

774332247,MDExOlB1bGxSZXF1ZXN0NTQ1MjY0NDM2,1159,Improve the display of facets information,552629,lovasoa,open,0,,,3268330,Datasette 1.0,9,2020-12-24T11:01:47Z,2023-07-31T18:57:59Z,,FIRST_TIME_CONTRIBUTOR,simonw/datasette/pulls/1159,"This PR changes the display of facets to hopefully make them more readable.

Before | After

---|---

|

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/1159/reactions"", ""total_count"": 4, ""+1"": 4, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1827436260,PR_kwDOD079W85WtVyk,39,Missing option in datasette instructions,319473,coldclimate,open,0,,,,,0,2023-07-29T10:34:48Z,2023-07-29T10:34:48Z,,FIRST_TIME_CONTRIBUTOR,dogsheep/dogsheep-photos/pulls/39,Gotta tell it where to look,256834907,dogsheep-photos,pull,,,"{""url"": ""https://api.github.com/repos/dogsheep/dogsheep-photos/issues/39/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1827427757,PR_kwDOD079W85WtUKG,38,photos-to-sql not found?,319473,coldclimate,closed,0,,,,,2,2023-07-29T09:59:42Z,2023-07-29T10:01:27Z,2023-07-29T10:01:23Z,FIRST_TIME_CONTRIBUTOR,dogsheep/dogsheep-photos/pulls/38,"I wonder if `photos-to-sql` is an old name for `dogsheep-photos`, because I can't find it anywhere.

I can't actually get this command to work (`sqlite3.OperationalError: no such table: attached.ZGENERICASSET` thrown) but I don't think that's related",256834907,dogsheep-photos,pull,,,"{""url"": ""https://api.github.com/repos/dogsheep/dogsheep-photos/issues/38/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1820346348,PR_kwDOBm6k_c5WVYor,2107,Bump sphinx from 6.1.3 to 7.1.0,49699333,dependabot[bot],closed,0,,,,,2,2023-07-25T13:28:30Z,2023-07-28T13:23:19Z,2023-07-28T13:23:17Z,CONTRIBUTOR,simonw/datasette/pulls/2107,"Bumps [sphinx](https://github.com/sphinx-doc/sphinx) from 6.1.3 to 7.1.0.

Release notes

Sourced from sphinx's releases.

Sphinx 7.1.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0rc1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Changelog

Sourced from sphinx's changelog.

Release 7.1.0 (released Jul 24, 2023)

Incompatible changes

Deprecated

- #11412: Emit warnings on using a deprecated Python-specific index entry type

(namely,

module, keyword, operator, object, exception,

statement, and builtin) in the :rst:dir:index directive, and

set the removal version to Sphinx 9. Patch by Adam Turner.

Features added

- #11415: Add a checksum to JavaScript and CSS asset URIs included within

generated HTML, using the CRC32 algorithm.

- :meth:

~sphinx.application.Sphinx.require_sphinx now allows the version

requirement to be specified as (major, minor).

- #11011: Allow configuring a line-length limit for object signatures, via

:confval:

maximum_signature_line_length and the domain-specific variants.

If the length of the signature (in characters) is greater than the configured

limit, each parameter in the signature will be split to its own logical line.

This behaviour may also be controlled by options on object description

directives, for example :rst:dir:py:function:single-line-parameter-list.

Patch by Thomas Louf, Adam Turner, and Jean-François B.

- #10983: Support for multiline copyright statements in the footer block.

Patch by Stefanie Molin

- sphinx.util.display.status_iterator now clears the current line

with ANSI control codes, rather than overprinting with space characters.

- #11431: linkcheck: Treat SSL failures as broken links.

Patch by Bénédikt Tran

- #11157: Keep the

translated attribute on translated nodes.

- #11451: Improve the traceback displayed when using :option:

sphinx-build -T

in parallel builds. Patch by Bénédikt Tran

- #11324: linkcheck: Use session-based HTTP requests.

- #11438: Add support for the :rst:dir:

py:class and :rst:dir:py:function

directives for PEP 695 (generic classes and functions declarations) and

PEP 696 (default type parameters). Multi-line support (#11011) is enabled

for type parameters list and can be locally controlled on object description

directives, e.g., :rst:dir:py:function:single-line-type-parameter-list.

Patch by Bénédikt Tran.

- #11484: linkcheck: Allow HTML anchors to be ignored on a per-URL basis

via :confval:

linkcheck_anchors_ignore_for_url while

... (truncated)

Commits

- e560f63 Bump to 7.1.0 final

- 066e0fa Add translation progress information (#11509)

- 0882914 Target PyPI in create-release.yml

- 21fbee5 Fix OIDC token payload

- 1a403e4 Add informational log messaging

- 258b0ea Revert "Switch to using github.request"

- f9c89e5 Switch to using github.request

- 52c7f66 Use the correct token minting URL for TestPyPI

- 6079f28 Install twine in PyPI publish workflow

- 3d43b9e Fix github-script syntax in create-release.yml

- Additional commits viewable in compare view


Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting `@dependabot rebase`.

[//]: # (dependabot-automerge-start)

[//]: # (dependabot-automerge-end)

---

Dependabot commands and options

You can trigger Dependabot actions by commenting on this PR:

- `@dependabot rebase` will rebase this PR

- `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it

- `@dependabot merge` will merge this PR after your CI passes on it

- `@dependabot squash and merge` will squash and merge this PR after your CI passes on it

- `@dependabot cancel merge` will cancel a previously requested merge and block automerging

- `@dependabot reopen` will reopen this PR if it is closed

- `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually

- `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself)

----

:books: Documentation preview :books:: https://datasette--2107.org.readthedocs.build/en/2107/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2107/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1824457306,I_kwDOBm6k_c5svwJa,2122,Parameters on canned queries: fixed or query-generated list?,1563881,meowcat,open,0,,,,,0,2023-07-27T14:07:07Z,2023-07-27T14:07:07Z,,NONE,,"Hi,

currently parameters in canned queries are just text fields. It would be cool to have one of the options below. Would you accept a PR doing something in this direction? (Possibly this could even work as a plugin.)

* adding facets, which would work like facets on tables or views, giving a list of selectable options (and leaving parameters as is)

* making it possible to provide a query which returns selectable values for a parameter, e.g.

```

calendar_entries_current_instrument:

  sql: |

    select * from calendar_entries

    where

      DTEND_UNIX > UNIXEPOCH() and

      DTSTART_UNIX < UNIXEPOCH() + :days *24*60*60 and

      current = 1 and

      MACHINE = :instrument

    order by

      DTSTART_UNIX

  params:

    days:

      sql: ""SELECT VALUE FROM generate_series(1, 30, 1)""

      # this obviously requires the corresponding sqlite extension

    instrument:

      sql: ""SELECT DISTINCT MACHINE FROM calendar_entries""

```

* making it possible to provide a fixed list of parameters

```

calendar_entries_current_instrument:

  sql: |

    select * from calendar_entries

    where

      DTEND_UNIX > UNIXEPOCH() and

      DTSTART_UNIX < UNIXEPOCH() + :days *24*60*60 and

      current = 1 and

      MACHINE = :instrument

    order by

      DTSTART_UNIX

  params:

    days:

      values: [1, 2, 3, 5, 10, 20, 30]

    instrument:

      values: [supermachine, crappymachine, boringmachine]

```",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2122/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1719759468,PR_kwDOBm6k_c5RBXH_,2077,Bump furo from 2023.3.27 to 2023.5.20,49699333,dependabot[bot],closed,0,,,,,3,2023-05-22T13:58:16Z,2023-07-27T13:40:55Z,2023-07-27T13:40:53Z,CONTRIBUTOR,simonw/datasette/pulls/2077,"Bumps [furo](https://github.com/pradyunsg/furo) from 2023.3.27 to 2023.5.20.

Changelog

Sourced from furo's changelog.

Changelog

2023.05.20 -- Unassuming Ultramarine

- ✨ Add support for Sphinx 7.

- Drop support for Sphinx 5.

- Improve the screen-reader label for sidebar collapse.

- Make it easier to create derived themes from Furo.

- Bump all JS dependencies (NodeJS and npm packages).

2023.03.27 -- Tasty Tangerine

- Regenerate with newer version of sphinx-theme-builder, to fix RECORD hashes.

- Add missing class to Font Awesome examples

2023.03.23 -- Sassy Saffron

- Update Python version classifiers.

- Increase the icon size in mobile header.

- Increase admonition title bg opacity.

- Change the default API background to transparent.

- Transition the API background change.

- Remove the "indent" of API entries which have a background.

- Break long inline code literals.

2022.12.07 -- Reverent Raspberry

- ✨ Add support for Sphinx 6.

- ✨ Improve footnote presentation with docutils 0.18+.

- Drop support for Sphinx 4.

- Improve documentation about what the edit button does.

- Improve handling of empty-flexboxes for better print experience on Chrome.

- Improve styling for inline signatures.

- Replace the

meta generator tag with a comment.

- Tweak labels with icons to prevent users selecting icons as text on touch.

2022.09.29 -- Quaint Quartz

- Add ability to set arbitrary URLs for edit button.

... (truncated)

Commits

- d2c9ca8 Prepare release: 2023.05.20

- 662d21b Update changelog

- 591780b Bump compatible Sphinx version

- c2e7837 Bump NodeJS and package versions

- dd85574 Use the reference HtmlFormatter class defined on PygmentsBridge. (#657)

- 6bff419 Fix broken link (#654)

- e7f732e Improve the screen-reader label for sidebar collapse

- 48c0bf2 Drop the check for the theme name

- 1b17d81 [pre-commit.ci] pre-commit autoupdate (#646)

- 4904fd5 Remove Python 3.8 constraint from Black pre-commit config (#647)

- Additional commits viewable in compare view


You can trigger a rebase of this PR by commenting `@dependabot rebase`.

[//]: # (dependabot-automerge-start)

[//]: # (dependabot-automerge-end)

---

Dependabot commands and options

You can trigger Dependabot actions by commenting on this PR:

- `@dependabot rebase` will rebase this PR

- `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it

- `@dependabot merge` will merge this PR after your CI passes on it

- `@dependabot squash and merge` will squash and merge this PR after your CI passes on it

- `@dependabot cancel merge` will cancel a previously requested merge and block automerging

- `@dependabot reopen` will reopen this PR if it is closed

- `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually

- `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself)

----

:books: Documentation preview :books:: https://datasette--2077.org.readthedocs.build/en/2077/

> **Note**

> Automatic rebases have been disabled on this pull request as it has been open for over 30 days.",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2077/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

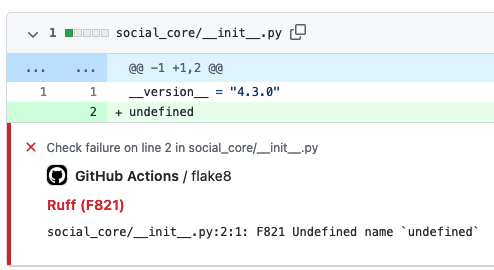

1823428714,I_kwDOBm6k_c5sr1Bq,2120,Add __all__ to datasette/__init__.py,9599,simonw,open,0,,,,,0,2023-07-27T01:07:10Z,2023-07-27T01:07:10Z,,OWNER,,"Currently looks like this: https://github.com/simonw/datasette/blob/08181823990a71ffa5a1b57b37259198eaa43e06/datasette/__init__.py#L1-L6

Adding `__all__ = [""Permission"", ""Forbidden""...]` would let me get rid of those `# noqa` comments.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2120/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1822934563,I_kwDOBm6k_c5sp8Yj,2109,Plan for getting the new JSON format query views working,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,5,2023-07-26T18:20:18Z,2023-07-27T00:24:47Z,2023-07-26T18:25:34Z,OWNER,,"I've been stuck on this for too long. I'm breaking it down into a full milestone:

https://github.com/simonw/datasette/milestone/29",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2109/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1823160748,I_kwDOCGYnMM5sqzms,581,`sqlite-utils convert --pdb` option,9599,simonw,closed,0,,,,,1,2023-07-26T21:02:50Z,2023-07-26T21:07:45Z,2023-07-26T21:06:10Z,OWNER,,While using `sqlite-utils convert` I realized it would be handy if you could pass `--pdb` to have it open the debugger at the first instance of a failed conversion.,140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/581/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1822936521,I_kwDOBm6k_c5sp83J,2110,Merge database index page and query view,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,1,2023-07-26T18:21:57Z,2023-07-26T19:53:25Z,2023-07-26T19:53:25Z,OWNER,,"Refs:

- #2109

The idea here is that hitting `/content` without a `?sql=` will show an empty result set AND default to including a bunch of extras about the list of tables in the database.

Then I won't have to think about `/content` and `/content?sql=` as separate pages any more.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2110/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1822949756,I_kwDOBm6k_c5sqAF8,2116,Turn DatabaseDownload into an async view function,9599,simonw,closed,0,,,9700784,Datasette 1.0a3,3,2023-07-26T18:31:59Z,2023-07-26T18:44:00Z,2023-07-26T18:44:00Z,OWNER,,"A minor refactor, but it is a good starting point for this new branch. Refs:

- #2109",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2116/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1656432059,PR_kwDOBm6k_c5NuBNG,2053,WIP new JSON for queries,9599,simonw,closed,0,,,,,12,2023-04-05T23:26:15Z,2023-07-26T18:28:59Z,2023-07-26T18:26:45Z,OWNER,simonw/datasette/pulls/2053,"Refs:

- #2049

TODO:

- [x] Read queries JSON

- Implement error display with `""ok"": false` and an errors key

- Read queries HTML

- Read queries other formats (plugins)

- Canned read queries (dispatched to from table)

- Write queries (a canned query thing)

- Implement different shapes, refactoring to share code with table

- Implement a sensible subset of extras, also refactoring to share code with table

- Get all tests passing

----

:books: Documentation preview :books:: https://datasette--2053.org.readthedocs.build/en/2053/

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2053/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",1,

1816857442,I_kwDOBm6k_c5sSwti,2106,`datasette install -e` option,9599,simonw,closed,0,,,,,3,2023-07-22T18:33:42Z,2023-07-26T18:28:33Z,2023-07-22T18:42:54Z,OWNER,,"As seen in LLM and now in `sqlite-utils` too:

- https://github.com/simonw/sqlite-utils/issues/570

Useful for developing plugins, see tutorial at https://llm.datasette.io/en/stable/plugins/tutorial-model-plugin.html",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2106/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1822918995,I_kwDOCGYnMM5sp4lT,580,Add way to export to a csv file using the Python library,44324811,kevinlinxc,open,0,,,,,0,2023-07-26T18:09:26Z,2023-07-26T18:09:26Z,,NONE,,"According to the documentation, we can make a csv output using the CLI tool, but not the Python library. Could we have the latter?",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/580/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1822813627,I_kwDOBm6k_c5spe27,2108,some (many?) SQL syntax errors are not throwing errors with a .csv endpoint,536941,fgregg,open,0,,,,,0,2023-07-26T16:57:45Z,2023-07-26T16:58:07Z,,CONTRIBUTOR,,"here's a CTE query that should always fail with a syntax error:

```sql

with foo as (nonsense)

select

*

from

foo;

```

when we make this query against the default endpoint, we do indeed get a 400 status code and the problem is reported to the user: https://global-power-plants.datasettes.com/global-power-plants?sql=with+foo+as+%28nonsense%29+select+*+from+foo%3B

but, if we use the csv endpoint, we get a 200 status code and no indication of a problem: https://global-power-plants.datasettes.com/global-power-plants.csv?sql=with+foo+as+%28nonsense%29+select+*+from+foo%3B

same with this bad sql

```sql

select

a,

from

foo;

```

https://global-power-plants.datasettes.com/global-power-plants?sql=select%0D%0A++a%2C%0D%0Afrom%0D%0A++foo%3B

vs

https://global-power-plants.datasettes.com/global-power-plants.csv?sql=select%0D%0A++a%2C%0D%0Afrom%0D%0A++foo%3B

but, datasette catches this bad sql at both endpoints:

```sql

slect

a

from

foo;

```

https://global-power-plants.datasettes.com/global-power-plants?sql=slect%0D%0A++a%0D%0Afrom%0D%0A++foo%3B

https://global-power-plants.datasettes.com/global-power-plants.csv?sql=slect%0D%0A++a%0D%0Afrom%0D%0A++foo%3B
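For comparison, here is a minimal sketch that uses only the standard-library `sqlite3` module rather than Datasette. It is an assumption about what makes a useful check, not a description of Datasette's internals. It shows that SQLite itself rejects all three statements before any table lookup happens. It does not explain why the `.csv` endpoint answers 200 for the first two.

```python
import sqlite3

# The three statements from this issue, collapsed onto single lines
queries = [
    'with foo as (nonsense) select * from foo;',
    'select a, from foo;',
    'slect a from foo;',
]

conn = sqlite3.connect(':memory:')
results = {}
for sql in queries:
    try:
        conn.execute(sql)
        results[sql] = None  # no error raised
    except sqlite3.OperationalError as ex:
        results[sql] = str(ex)  # error message reported by SQLite

for sql, error in results.items():
    print(sql, '->', error)
```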

",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2108/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1821108702,I_kwDOCGYnMM5si-ne,579,Special handling for SQLite column of type `JSON`,15178711,asg017,open,0,,,,,0,2023-07-25T20:37:23Z,2023-07-25T20:37:23Z,,CONTRIBUTOR,,"`sqlite-utils` should detect and have special handling for columns declared with a `JSON` type. For example:

```sql

CREATE TABLE ""dogs"" (

id INTEGER PRIMARY KEY,

name TEXT,

friends JSON

);

```

## Automatic Nesting

According to [""Nested JSON Values""](https://sqlite-utils.datasette.io/en/stable/cli.html#nested-json-values), sqlite-utils will only expand JSON if the `--json-cols` flag is passed. It looks like it'll try to `json.load` all text columns to test whether they contain JSON, which can get expensive on non-JSON columns.

Instead, `sqlite-utils` should by default (i.e. without the `--json-cols` flag) do the `maybe_json()` operation on columns with a declared `JSON` type. So the above table would expand the `""friends""` column as expected, without the `--json-cols` flag:

```bash

sqlite-utils dogs.db ""select * from dogs"" | python -mjson.tool

```

```

[

{

""id"": 1,

""name"": ""Cleo"",

""friends"": [

{

""name"": ""Pancakes""

},

{

""name"": ""Bailey""

}

]

}

]

```
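A rough sketch of the idea using only the standard library. This is not how `sqlite-utils` is implemented; the `json_columns` and `rows_with_json` helpers are hypothetical names. It reads the declared column types from `PRAGMA table_info` and attempts JSON decoding only on columns declared as `JSON`:

```python
import json
import sqlite3

conn = sqlite3.connect(':memory:')
conn.execute('create table dogs (id integer primary key, name text, friends JSON)')
conn.execute(
    'insert into dogs values (?, ?, ?)',
    (1, 'Cleo', json.dumps([{'name': 'Pancakes'}, {'name': 'Bailey'}])),
)


def json_columns(conn, table):
    # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk)
    return {
        row[1]
        for row in conn.execute(f'pragma table_info({table})')
        if row[2].upper() == 'JSON'
    }


def rows_with_json(conn, table):
    # Decode only the columns whose declared type is JSON
    cols = json_columns(conn, table)
    cursor = conn.execute(f'select * from {table}')
    names = [d[0] for d in cursor.description]
    for row in cursor:
        record = dict(zip(names, row))
        for col in cols:
            if isinstance(record[col], str):
                try:
                    record[col] = json.loads(record[col])
                except ValueError:
                    pass  # leave values that are not valid JSON untouched
        yield record


rows = list(rows_with_json(conn, 'dogs'))
```

Because the check is driven by the declared type, plain `TEXT` columns are never passed to the JSON parser.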

---

I'm sure there's other ways `sqlite-utils` can specially handle JSON columns, so keeping this open while I think of more",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/579/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

1710164693,PR_kwDOBm6k_c5QhIL2,2075,Bump sphinx from 6.1.3 to 7.0.1,49699333,dependabot[bot],closed,0,,,,,2,2023-05-15T13:59:31Z,2023-07-25T13:28:39Z,2023-07-25T13:28:36Z,CONTRIBUTOR,simonw/datasette/pulls/2075,"Bumps [sphinx](https://github.com/sphinx-doc/sphinx) from 6.1.3 to 7.0.1.

Release notes

Sourced from sphinx's releases.

v7.0.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v7.0.0rc1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.1

Changelog: https://www.sphinx-doc.org/en/master/changes.html

v6.2.0

Changelog: https://www.sphinx-doc.org/en/master/changes.html

Changelog

Sourced from sphinx's changelog.

Release 7.0.1 (released May 12, 2023)

Dependencies

- #11411: Support

Docutils 0.20_. Patch by Adam Turner.

.. _Docutils 0.20: https://docutils.sourceforge.io/RELEASE-NOTES.html#release-0-20-2023-05-04

Bugs fixed

- #11418: Clean up remaining references to

sphinx.setup_command

following the removal of support for setuptools.

Patch by Willem Mulder.

Release 7.0.0 (released Apr 29, 2023)

Incompatible changes

- #11359: Remove long-deprecated aliases for

MecabSplitter and

DefaultSplitter in sphinx.search.ja.

- #11360: Remove deprecated

make_old_id functions in domain object

description classes.

- #11363: Remove the Setuptools integration (

build_sphinx hook in

setup.py).

- #11364: Remove deprecated

sphinx.ext.napoleon.iterators module.

- #11365: Remove support for the

jsdump format in sphinx.search.

- #11366: Make

locale a required argument to

sphinx.util.i18n.format_date().

- #11370: Remove deprecated

sphinx.util.stemmer module.

- #11371: Remove deprecated

sphinx.pycode.ast.parse() function.

- #11372: Remove deprecated

sphinx.io.read_doc() function.

- #11373: Removed deprecated

sphinx.util.get_matching_files() function.

- #11378: Remove deprecated

sphinx.util.docutils.is_html5_writer_available()

function.

- #11379: Make the

env argument to Builder subclasses required.

- #11380: autosummary: Always emit grouped import exceptions.

- #11381: Remove deprecated

style key for HTML templates.

- #11382: Remove deprecated

sphinx.writers.latex.LaTeXTranslator.docclasses

attribute.

- #11383: Remove deprecated

sphinx.builders.html.html5_ready and

sphinx.builders.html.HTMLTranslator attributes.

- #11385: Remove support for HTML 4 output.

Release 6.2.1 (released Apr 25, 2023)

... (truncated)

Commits

[](https://docs.github.com/en/github/managing-security-vulnerabilities/about-dependabot-security-updates#about-compatibility-scores)

You can trigger a rebase of this PR by commenting `@dependabot rebase`.

[//]: # (dependabot-automerge-start)

[//]: # (dependabot-automerge-end)

---

Dependabot commands and options

You can trigger Dependabot actions by commenting on this PR:

- `@dependabot rebase` will rebase this PR

- `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it

- `@dependabot merge` will merge this PR after your CI passes on it

- `@dependabot squash and merge` will squash and merge this PR after your CI passes on it

- `@dependabot cancel merge` will cancel a previously requested merge and block automerging

- `@dependabot reopen` will reopen this PR if it is closed

- `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually

- `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself)

- `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself)

----

:books: Documentation preview :books:: https://datasette--2075.org.readthedocs.build/en/2075/

> **Note**

> Automatic rebases have been disabled on this pull request as it has been open for over 30 days.

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/2075/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1817281557,I_kwDOC8SPRc5sUYQV,37,cannot use jinja filters in display?,10352819,rprimet,closed,0,,,,,1,2023-07-23T20:09:54Z,2023-07-23T20:18:27Z,2023-07-23T20:18:26Z,NONE,,"Hi, I'm trying to have a display function in Dogsheep's `config.yml` that includes something like this:

```

{{ display.snippet|safe }}

```

Unfortunately, rendering fails with a message 'urls is undefined'.

The same happens if I'm trying to build a row URL manually, using filters like `quote_plus` (as my keys are URLs).

Any hints?

Thanks!",197431109,dogsheep-beta,issue,,,"{""url"": ""https://api.github.com/repos/dogsheep/dogsheep-beta/issues/37/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1816997390,I_kwDOCGYnMM5sTS4O,576,Backfill the release notes prior to 0.4,9599,simonw,closed,0,,,,,2,2023-07-23T05:41:42Z,2023-07-23T05:49:51Z,2023-07-23T05:48:21Z,OWNER,,"Currently the changelog starts at 0.4:

https://sqlite-utils.datasette.io/en/3.34/changelog.html#id115

I want the other releases - according to https://pypi.org/project/sqlite-utils/#history there are three missing:

![]() ",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/576/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1816919568,I_kwDOCGYnMM5sS_4Q,575,Python API ability to opt-out of connection plugins,9599,simonw,closed,0,,,,,2,2023-07-22T23:01:13Z,2023-07-22T23:17:22Z,2023-07-22T23:08:22Z,OWNER,,"Plugins affecting the CLI by default makes sense to me.

I'm less confident about them _always_ affecting users of the Python API.

I'm going to have them apply by default, but I'm going to add a mechanism to opt-out on an individual database basis. Basically this:

```python

from sqlite_utils import Database

db = Database(memory=True, execute_plugins=False)

# Anything using db from here on will not execute plugins

```

cc @asg017

Refs:

- #567

- #574 ",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/575/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1816918185,I_kwDOCGYnMM5sS_ip,574,`prepare_connection()` plugin hook,9599,simonw,closed,0,,,,,3,2023-07-22T22:52:47Z,2023-07-22T23:13:14Z,2023-07-22T22:59:10Z,OWNER,,"> Splitting off an issue for `prepare_connection()` since Alex got the PR in seconds before I shipped 3.34!

_Originally posted by @simonw in https://github.com/simonw/sqlite-utils/issues/567#issuecomment-1646686424_

",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/574/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1801394744,I_kwDOCGYnMM5rXxo4,567,Plugin system,15178711,asg017,closed,0,,,,,9,2023-07-12T17:02:14Z,2023-07-22T22:59:37Z,2023-07-22T22:59:36Z,CONTRIBUTOR,,"I'd like there to be a plugin system for sqlite-utils, similar to the datasette/llm plugins. I'd like to make plugins that would do things like:

- Register SQLite extensions for more SQL functions + virtual tables

- Register new subcommands

- Different input file formats for `sqlite-utils memory`

- Different output file formats (in addition to `--csv` `--tsv` `--nl` etc.)

A few real-world use-cases of plugins I'd like to see in sqlite-utils:

- Register many of my sqlite extensions in sqlite-utils (`sqlite-http`, `sqlite-lines`, `sqlite-regex`, etc.)

- New subcommands to work with `sqlite-vss` vector tables

- Input/output Parquet/Avro/Arrow IPC files with `sqlite-arrow`",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/567/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1816917522,PR_kwDOCGYnMM5WJ6Jm,573,feat: Implement a prepare_connection plugin hook,15178711,asg017,closed,0,,,,,4,2023-07-22T22:48:44Z,2023-07-22T22:59:09Z,2023-07-22T22:59:09Z,CONTRIBUTOR,simonw/sqlite-utils/pulls/573,"Just like the [Datasette prepare_connection hook](https://docs.datasette.io/en/stable/plugin_hooks.html#prepare-connection-conn-database-datasette), this PR adds a similar hook for the `sqlite-utils` plugin system.

The sole argument is `conn`, since I don't believe a `database` or `datasette` argument would be relevant here.

I want to do this so I can release `sqlite-utils` plugins for my [SQLite extensions](https://github.com/asg017/sqlite-ecosystem), similar to the Datasette plugins I've released for them.

An example plugin: https://gist.github.com/asg017/d7cdf0d56e2be87efda28cebee27fa3c

```bash

$ sqlite-utils install https://gist.github.com/asg017/d7cdf0d56e2be87efda28cebee27fa3c/archive/5f5ad549a40860787629c69ca120a08c32519e99.zip

$ sqlite-utils memory 'select hello(""alex"") as response'

[{""response"": ""Hello, alex!""}]

```
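
For illustration, a minimal stand-alone sketch of what a hook like this does to a connection, using only the standard library `sqlite3` module. It is not the contents of the gist above: the body of `hello` is assumed from the output shown, and in a real plugin the function would be registered through the `sqlite-utils` plugin system instead of being called by hand.

```python
import sqlite3


def prepare_connection(conn):
    # The hook receives the raw sqlite3 connection and can register
    # custom SQL functions, collations, extensions and so on.
    # Assumption: hello() simply formats a greeting.
    conn.create_function('hello', 1, lambda name: f'Hello, {name}!')


# Stand-in for what the plugin system would do for each new connection.
conn = sqlite3.connect(':memory:')
prepare_connection(conn)

response = conn.execute('select hello(?) as response', ('alex',)).fetchone()[0]
print(response)
```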

Refs:

- #574

----

:books: Documentation preview :books:: https://sqlite-utils--573.org.readthedocs.build/en/573/

",140912432,sqlite-utils,pull,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/573/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

1816876211,I_kwDOCGYnMM5sS1Sz,571,`.transform(keep_table=...)` option,9599,simonw,closed,0,,,,,1,2023-07-22T19:49:29Z,2023-07-22T22:32:18Z,2023-07-22T22:32:18Z,OWNER,,">> Also need a design for an option for the `.transform()` method to indicate that the new table should be created with a new name without dropping the old one.

>

> I think `keep_table=""name_of_table""` is good for this.

_Originally posted by @simonw in https://github.com/simonw/sqlite-utils/issues/565#issuecomment-1646657324_

",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/571/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1816877910,I_kwDOCGYnMM5sS1tW,572,Don't test Python 3.7 against textual,9599,simonw,closed,0,,,,,2,2023-07-22T19:57:03Z,2023-07-22T22:16:50Z,2023-07-22T22:16:50Z,OWNER,,"Spotted this in the GitHub Actions logs:

",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/572/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

1786243905,I_kwDOCGYnMM5qd-tB,564,Document that running `db.transform()` tidies up the schema indentation,9599,simonw,closed,0,,,,,0,2023-07-03T13:59:28Z,2023-07-22T22:15:34Z,2023-07-22T22:15:34Z,OWNER,,"> ... and it turns out running `.transform()` with no arguments still fixes the format of the schema!

```pycon

>>> db[""log""].add_column(""foo"", str)