for key in self.ds.renderers.keys()

File ""/home/zhe/miniconda3/lib/python3.7/site-packages/datasette/utils/__init__.py"", line 655, in path_with_format

path = request.path

File ""/home/zhe/miniconda3/lib/python3.7/site-packages/datasette/utils/asgi.py"", line 49, in path

self.scope.get(""raw_path"", self.scope[""path""].encode(""latin-1""))

UnicodeEncodeError: 'latin-1' codec can't encode characters in position 9-11: ordinal not in range(256)

```

This used to work when datasette was based on sanic.

Btw, thanks for the great work!",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/558/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

467623820,MDExOlB1bGxSZXF1ZXN0Mjk3MjQzMDcz,559,Bump to uvicorn 0.8.4,9599,simonw,closed,0,,,,,0,2019-07-12T22:30:29Z,2019-07-13T22:34:58Z,2019-07-13T22:34:58Z,OWNER,simonw/datasette/pulls/559,"https://github.com/encode/uvicorn/commits/0.8.4

Query strings will now be included in log files: https://github.com/encode/uvicorn/pull/384",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/559/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

465728430,MDExOlB1bGxSZXF1ZXN0Mjk1NzExNTA0,554,Fix static mounts using relative paths and prevent traversal exploits,3243482,abdusco,closed,0,,,,,4,2019-07-09T11:32:02Z,2019-07-11T16:29:26Z,2019-07-11T16:13:19Z,CONTRIBUTOR,simonw/datasette/pulls/554,"While debugging why my static mounts using a relative path (`--static mystatic:rel/path/to/dir`) were not working, I noticed that the requests fail no matter what, returning 404 errors.

The reason is that datasette tries to prevent traversal exploits by checking if the path is relative to its registered directory. This check fails when the mount is a relative directory, because `/abs/dir/file` is obviously not under `dir/file`.

https://github.com/simonw/datasette/blob/81fa8b6cdc5457b42a224779e5291952314e8d20/datasette/utils/asgi.py#L303-L306

This also has the consequence of returning any requested file, because when `/abs/dir/../../evil.file` is requested, `aiofiles` happily returns it to the client after resolving the path itself. The solution is to check whether paths are relative only after they're fully resolved.
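
A minimal sketch of a resolve-then-compare check, for illustration only. The helper name `is_safe_path` and its arguments are hypothetical and are not the code in this PR:

```python
from pathlib import Path


def is_safe_path(root, requested):
    # Hypothetical helper: fully resolve both paths before comparing them,
    # so that a relative mount directory and any '..' segments are normalised.
    root_path = Path(root).resolve()
    full_path = (root_path / requested).resolve()
    try:
        # relative_to raises ValueError when full_path is outside root_path.
        full_path.relative_to(root_path)
    except ValueError:
        return False
    return True
```

With this sketch, `is_safe_path('rel/path/to/dir', 'app.css')` is accepted while `is_safe_path('rel/path/to/dir', '../../evil.file')` is rejected, whether or not the mount was given as a relative path.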

I've implemented the mentioned changes and also updated the tests.",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/554/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

465731062,MDU6SXNzdWU0NjU3MzEwNjI=,555,Static mounts with relative paths not working,3243482,abdusco,closed,0,,,,,0,2019-07-09T11:38:35Z,2019-07-11T16:13:22Z,2019-07-11T16:13:22Z,CONTRIBUTOR,,"Datasette fails to serve files from static mounts that are created using relative paths `datasette --static mystatic:rel/path/to/static/dir`.

I've explained the problem and the solution in the pull request: https://github.com/simonw/datasette/pull/554",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/555/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

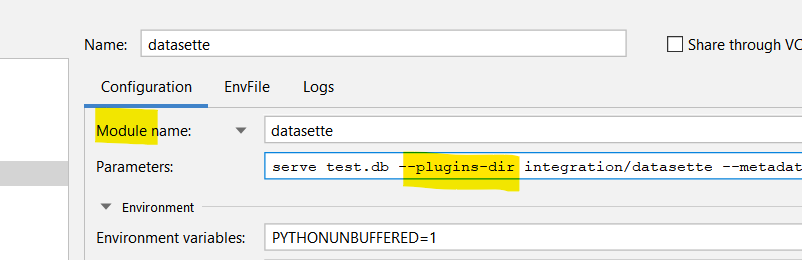

465773546,MDExOlB1bGxSZXF1ZXN0Mjk1NzQ4MjY4,556,Add support for running datasette as a module,3243482,abdusco,closed,0,,,,,1,2019-07-09T13:13:30Z,2019-07-11T16:07:45Z,2019-07-11T16:07:44Z,CONTRIBUTOR,simonw/datasette/pulls/556,"This PR allows running datasette using `python -m datasette` command in addition to just running the executable.

This function is quite useful when debugging a plugin in a project because IDEs like PyCharm can easily start a debug session when datasette is run as a module in contrast to trying to attach a debugger to a running process.

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/556/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

465003070,MDU6SXNzdWU0NjUwMDMwNzA=,551,Ship many-to-many faceting support (and facet-by-delimiter),9599,simonw,open,0,,,,,2,2019-07-07T23:11:45Z,2019-07-08T15:45:23Z,,OWNER,,,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/551/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

456569067,MDU6SXNzdWU0NTY1NjkwNjc=,510,Ability to facet by delimiter (e.g. comma separated fields),9599,simonw,open,0,9599,simonw,,,1,2019-06-15T19:34:41Z,2019-07-08T15:44:51Z,,OWNER,,"E.g. if a field contains ""Tags,With,Commas"" be able to facet them in the same way as `_facet_array=` lets you facet `[""Tags"", ""With"", ""Commas""]`",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/510/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

462117311,MDU6SXNzdWU0NjIxMTczMTE=,531,/database/-/inspect,9599,simonw,open,0,,,,,1,2019-06-28T16:33:41Z,2019-07-08T15:43:57Z,,OWNER,,"Build `/database/-/inspect` which shows tables, columns, column types and foreign keys

It won't show table counts. Or maybe it will include them optionally but only for `-i` databases, in a special area of the JSON reserved for immutable-only inspect details.

_Originally posted by @simonw in https://github.com/simonw/datasette/issues/465#issuecomment-506797086_",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/531/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

465327844,MDU6SXNzdWU0NjUzMjc4NDQ=,553,Potential improvements to facet-by-date,9599,simonw,open,0,,,,,3,2019-07-08T15:37:53Z,2019-07-08T15:41:55Z,,OWNER,,"In addition to #483 Tobias had some useful suggestions on Twitter:

https://twitter.com/rixxtr/status/1148253926476701696

> I think for date facets, it might be more meaningful to order them by date, rather than by size? Or offer both? I'm *definitely* often interested in size-over-time, so https://data.rixx.de/django_tickets/tickets?_facet_date=created#facet-created … isn't all that helpful!

Screenshot of that link:

![]() ",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/553/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

465001185,MDU6SXNzdWU0NjUwMDExODU=,549,Send pull request to the repo that the _table.html template will break,9599,simonw,closed,0,,,4471010,Datasette 0.29,1,2019-07-07T22:45:17Z,2019-07-08T03:36:46Z,2019-07-08T03:36:45Z,OWNER,,"Bump this to 0.29 https://github.com/simonw/salaries-datasette/blob/master/requirements/base.txt

And rename https://github.com/simonw/salaries-datasette/blob/master/templates/_rows_and_columns.html to _table.html",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/549/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464990184,MDU6SXNzdWU0NjQ5OTAxODQ=,547,Release notes for 0.29,9599,simonw,closed,0,,,4471010,Datasette 0.29,2,2019-07-07T20:30:28Z,2019-07-08T03:31:59Z,2019-07-08T03:31:59Z,OWNER,,There's a lot of stuff... https://github.com/simonw/datasette/compare/0.28...master,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/547/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

445868234,MDU6SXNzdWU0NDU4NjgyMzQ=,478,Make it so Docker build doesn't delay PyPI release,9599,simonw,closed,0,,,4471010,Datasette 0.29,3,2019-05-19T21:52:10Z,2019-07-08T03:30:41Z,2019-07-07T20:03:20Z,OWNER,,"Datasette automated releases currently include building a Docker image that has a full custom-compiled version of SQLite and SpatiaLite. This takes ages!

I still want to publish this Docker image (to https://hub.docker.com/r/datasetteproject/datasette/tags ) but I'd like it if this wasn't a blocker on pushing the new package to PyPI. Ideally PyPI publish would happen first.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/478/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464868844,MDU6SXNzdWU0NjQ4Njg4NDQ=,543,datasette publish option for setting plugin configuration secrets,9599,simonw,closed,0,,,4471010,Datasette 0.29,3,2019-07-06T16:21:23Z,2019-07-08T02:06:34Z,2019-07-08T02:06:34Z,OWNER,,Follow-on from #538 - the `datasette publish` command needs a way of passing secrets which will be made available to plugin configuration but will not be exposed in `/-/metadata.json`.,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/543/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464894812,MDExOlB1bGxSZXF1ZXN0Mjk1MDY1Nzk2,544,--plugin-secret option,9599,simonw,closed,0,,,4471010,Datasette 0.29,1,2019-07-06T22:18:20Z,2019-07-08T02:06:31Z,2019-07-08T02:06:31Z,OWNER,simonw/datasette/pulls/544,"Refs #543

- [x] Zeit Now v1 support

- [x] Solve escaping of ENV in Dockerfile

- [x] Heroku support

- [x] Unit tests

- [x] Cloud Run support

- [x] Documentation

",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/544/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

464994105,MDU6SXNzdWU0NjQ5OTQxMDU=,548,Add datasette-cors and datasette-auth-github plugins to Ecosystem page,9599,simonw,closed,0,,,4471010,Datasette 0.29,0,2019-07-07T21:14:14Z,2019-07-08T02:02:36Z,2019-07-08T02:02:36Z,OWNER,,,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/548/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

465019882,MDU6SXNzdWU0NjUwMTk4ODI=,552,"Add --plugin-secret support to ""datasette package""",9599,simonw,open,0,,,,,1,2019-07-08T01:46:47Z,2019-07-08T01:47:30Z,,OWNER,,"Split out from #544.

I think I should combine this with #347 (renaming `datasette package` to `datasette publish docker`).",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/552/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

465002978,MDU6SXNzdWU0NjUwMDI5Nzg=,550,Pull m2m faceting out of master so we can ship a release without it,9599,simonw,closed,0,,,4471010,Datasette 0.29,1,2019-07-07T23:10:48Z,2019-07-07T23:21:22Z,2019-07-07T23:21:22Z,OWNER,,After spending some time with #495 I believe I need to make some pretty major changes to how m2m faceting works. I don't want it to block the release of ASGI Datasette so I'm going to revert it back out of master for the moment and merge it back in after the release has gone out.,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/550/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

446433735,MDU6SXNzdWU0NDY0MzM3MzU=,482,Example of a custom facet plugin is incorrect,9599,simonw,closed,0,,,4471010,Datasette 0.29,0,2019-05-21T06:12:47Z,2019-07-07T23:19:10Z,2019-07-07T23:19:10Z,OWNER,,"The function signatures are wrong on https://datasette.readthedocs.io/en/0.28/plugins.html#register-facet-classes

The new signatures are: `async def suggest(self)` and `async def facet_results(self)` - the `sql` and `params` are now passed to the class constructor.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/482/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

453829910,MDU6SXNzdWU0NTM4Mjk5MTA=,505,Add white-space: pre-wrap to SQL create statement,9599,simonw,closed,0,9599,simonw,4471010,Datasette 0.29,0,2019-06-08T19:59:56Z,2019-07-07T20:26:55Z,2019-07-07T20:26:55Z,OWNER,,"Right now a super-long CREATE TABLE statement causes the table page to be even wider than the table itself:

![]()

Adding `white-space: pre-wrap` to that element is an easy fix:

![]() ",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/505/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464905894,MDU6SXNzdWU0NjQ5MDU4OTQ=,545,Fix header on 404 page,9599,simonw,closed,0,,,4471010,Datasette 0.29,1,2019-07-07T01:47:40Z,2019-07-07T20:26:55Z,2019-07-07T20:26:55Z,OWNER,,"![]() ",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/545/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

445875242,MDExOlB1bGxSZXF1ZXN0MjgwMjA1NTAy,480,Split pypi and docker travis tasks,813732,glasnt,closed,0,,,4471010,Datasette 0.29,1,2019-05-19T23:14:37Z,2019-07-07T20:03:20Z,2019-07-07T20:03:20Z,CONTRIBUTOR,simonw/datasette/pulls/480,"Resolves #478

This *should* work, but because this is a change that'll only really be testable on a) this repo, b) master branch, this might fail fast if I didn't get the configurations right.

Looking at #478 it should just be as simple as splitting out the docker and pypi processes into separate jobs, but it might end up being more complicated than that, depending on what pre-processes the pypi deployment needs, and how travisci treats deployment steps without scripts in general. ",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/480/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

464449570,MDU6SXNzdWU0NjQ0NDk1NzA=,540,Add a universal navigation bar which can be modified by plugins,9599,simonw,closed,0,,,,,8,2019-07-05T03:50:33Z,2019-07-06T23:13:29Z,2019-07-06T23:11:35Z,OWNER,,"Needed by https://github.com/simonw/datasette-auth-github/issues/5

We already have a navigation breadcrumbs header on some pages, I can extend that to be present on every page and make it easy to modify with custom templates.

![]() ",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/540/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464779810,MDU6SXNzdWU0NjQ3Nzk4MTA=,541,Plugin hook for adding extra template context variables,9599,simonw,closed,0,,,,,2,2019-07-05T21:37:05Z,2019-07-06T00:05:59Z,2019-07-06T00:05:59Z,OWNER,,"It turns out I need this for https://github.com/simonw/datasette-auth-github/issues/5

It can be modelled on the `extra_body_script` hook: https://datasette.readthedocs.io/en/stable/plugins.html#extra-body-script-template-database-table-view-name-datasette",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/541/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464786717,MDExOlB1bGxSZXF1ZXN0Mjk0OTkyNTc4,542,extra_template_vars plugin hook,9599,simonw,closed,0,,,,,5,2019-07-05T22:19:17Z,2019-07-06T00:05:57Z,2019-07-06T00:05:56Z,OWNER,simonw/datasette/pulls/542,Refs #541,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/542/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

463915863,MDU6SXNzdWU0NjM5MTU4NjM=,538,Mechanism for secrets in plugin configuration,9599,simonw,closed,0,,,,,3,2019-07-03T19:23:34Z,2019-07-04T05:47:54Z,2019-07-04T05:47:54Z,OWNER,,"See https://github.com/simonw/datasette-auth-github/issues/1

We need a mechanism whereby plugins can tap into ""secret"" config options without exposing them in the visible metadata.json (where plugin configs currently live, see https://datasette.readthedocs.io/en/stable/plugins.html#plugin-configuration )",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/538/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464040911,MDExOlB1bGxSZXF1ZXN0Mjk0NDAwNDQ2,539,Secret plugin configuration options,9599,simonw,closed,0,,,,,2,2019-07-04T03:21:20Z,2019-07-04T05:36:45Z,2019-07-04T05:36:45Z,OWNER,simonw/datasette/pulls/539,Refs #538 ,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/539/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

459598080,MDU6SXNzdWU0NTk1OTgwODA=,520,asgi_wrapper plugin hook,9599,simonw,closed,0,9599,simonw,,,3,2019-06-23T17:16:45Z,2019-07-03T04:40:34Z,2019-07-03T04:06:28Z,OWNER,,"After #272 we can finally add this hook. It will allow plugins to wrap their own ASGI middleware around Datasette. Potential use-cases include:

* adding authentication

* custom CORS headers (see #454)

* maybe gzip support?

* possibly defining entirely new routes, though that may be better handled by a separate hook",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/520/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

462928038,MDU6SXNzdWU0NjI5MjgwMzg=,532,Switch setup.py to using ~= for dependencies,9599,simonw,closed,0,,,,,0,2019-07-01T21:53:48Z,2019-07-03T04:32:58Z,2019-07-03T04:32:58Z,OWNER,,"`~=` means ""compatible release"" https://www.python.org/dev/peps/pep-0440/#compatible-release
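
A rough sketch of what a compatible release clause accepts, assuming plain dotted numeric versions only. The `compatible` function is a hypothetical illustration, not pip's implementation, and it ignores pre-releases and zero padding (so it treats `0.8` and `0.8.0` as different):

```python
def compatible(version, spec):
    # Simplified reading of PEP 440: ~=X.Y.Z means >= X.Y.Z and == X.Y.*
    v = [int(part) for part in version.split('.')]
    s = [int(part) for part in spec.split('.')]
    # Every segment of the spec except the last one must match exactly.
    prefix = s[:-1]
    return v[:len(prefix)] == prefix and v >= s
```

Under these assumptions `compatible('0.8.4', '0.8.2')` is true and `compatible('0.9.0', '0.8.2')` is false, so a `~=0.8.2` pin would pick up patch releases but not the next minor version.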

See also https://stackoverflow.com/questions/39590187/in-requirements-txt-what-does-tilde-equals-mean",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/532/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

463534974,MDExOlB1bGxSZXF1ZXN0MjkzOTk0NDQz,536,"Switch to ~= dependencies, closes #532",9599,simonw,closed,0,,,,,0,2019-07-03T04:12:16Z,2019-07-03T04:32:55Z,2019-07-03T04:32:55Z,OWNER,simonw/datasette/pulls/536,,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/536/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

463531894,MDExOlB1bGxSZXF1ZXN0MjkzOTkyMzgy,535,"Added asgi_wrapper plugin hook, closes #520",9599,simonw,closed,0,,,,,0,2019-07-03T03:58:00Z,2019-07-03T04:06:26Z,2019-07-03T04:06:26Z,OWNER,simonw/datasette/pulls/535,,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/535/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

459621683,MDU6SXNzdWU0NTk2MjE2ODM=,521,Easier way of creating custom row templates,9599,simonw,closed,0,,,,,6,2019-06-23T21:49:27Z,2019-07-03T03:23:56Z,2019-07-03T03:23:56Z,OWNER,,"I was messing around with a custom `_rows_and_columns.html` template and ended up with this:

```html

{% for row in display_rows %}

",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/540/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464779810,MDU6SXNzdWU0NjQ3Nzk4MTA=,541,Plugin hook for adding extra template context variables,9599,simonw,closed,0,,,,,2,2019-07-05T21:37:05Z,2019-07-06T00:05:59Z,2019-07-06T00:05:59Z,OWNER,,"It turns out I need this for https://github.com/simonw/datasette-auth-github/issues/5

It can be modelled on the `extra_body_script` hook: https://datasette.readthedocs.io/en/stable/plugins.html#extra-body-script-template-database-table-view-name-datasette",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/541/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464786717,MDExOlB1bGxSZXF1ZXN0Mjk0OTkyNTc4,542,extra_template_vars plugin hook,9599,simonw,closed,0,,,,,5,2019-07-05T22:19:17Z,2019-07-06T00:05:57Z,2019-07-06T00:05:56Z,OWNER,simonw/datasette/pulls/542,Refs #541,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/542/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

463915863,MDU6SXNzdWU0NjM5MTU4NjM=,538,Mechanism for secrets in plugin configuration,9599,simonw,closed,0,,,,,3,2019-07-03T19:23:34Z,2019-07-04T05:47:54Z,2019-07-04T05:47:54Z,OWNER,,"See https://github.com/simonw/datasette-auth-github/issues/1

We need a mechanism where by plugins can tap into ""secret"" config options without exposing them in the visible metadata.json (where plugin configs currently live, see https://datasette.readthedocs.io/en/stable/plugins.html#plugin-configuration )",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/538/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

464040911,MDExOlB1bGxSZXF1ZXN0Mjk0NDAwNDQ2,539,Secret plugin configuration options,9599,simonw,closed,0,,,,,2,2019-07-04T03:21:20Z,2019-07-04T05:36:45Z,2019-07-04T05:36:45Z,OWNER,simonw/datasette/pulls/539,Refs #538 ,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/539/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

459598080,MDU6SXNzdWU0NTk1OTgwODA=,520,asgi_wrapper plugin hook,9599,simonw,closed,0,9599,simonw,,,3,2019-06-23T17:16:45Z,2019-07-03T04:40:34Z,2019-07-03T04:06:28Z,OWNER,,"After #272 we can finally add this hook. It will allow plugins to wrap their own ASGI middleware around Datasette. Potential use-cases include:

* adding authentication

* custom CORS headers (see #454)

* maybe gzip support?

* possibly defining entirely new routes, though that may be better handled by a separate hook",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/520/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

462928038,MDU6SXNzdWU0NjI5MjgwMzg=,532,Switch setup.py to using ~= for dependencies,9599,simonw,closed,0,,,,,0,2019-07-01T21:53:48Z,2019-07-03T04:32:58Z,2019-07-03T04:32:58Z,OWNER,,"`~=` means ""compatible release"" https://www.python.org/dev/peps/pep-0440/#compatible-release
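
As a rough illustration of what a compatible release clause accepts, here is a minimal sketch of the PEP 440 rule that `~=X.Y` is equivalent to `>= X.Y, == X.*`. The helper names `parse_release` and `is_compatible` are hypothetical, and the sketch only handles plain numeric release segments (no pre-releases, post-releases or epochs), so it is not a replacement for a real version parser.

```python
def parse_release(version):
    # Turn a plain numeric version string such as '1.4.5' into (1, 4, 5).
    return tuple(int(part) for part in version.split('.'))


def is_compatible(candidate, spec):
    # Hypothetical helper: does `candidate` satisfy `~=spec`?
    wanted = parse_release(spec)
    found = parse_release(candidate)
    if len(wanted) < 2:
        raise ValueError('~= needs at least two release segments')
    # Pad the candidate with zeros so that 2.2 and 2.2.0 compare as equal.
    found = found + (0,) * (len(wanted) - len(found))
    # Everything except the last segment of the spec must match exactly.
    prefix = wanted[:-1]
    return found >= wanted and found[:len(prefix)] == prefix
```

Under these assumptions `~=2.2` accepts 2.5 but rejects 3.0, and `~=1.4.5` accepts 1.4.9 but rejects 1.5.0.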

See also https://stackoverflow.com/questions/39590187/in-requirements-txt-what-does-tilde-equals-mean",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/532/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

463534974,MDExOlB1bGxSZXF1ZXN0MjkzOTk0NDQz,536,"Switch to ~= dependencies, closes #532",9599,simonw,closed,0,,,,,0,2019-07-03T04:12:16Z,2019-07-03T04:32:55Z,2019-07-03T04:32:55Z,OWNER,simonw/datasette/pulls/536,,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/536/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

463531894,MDExOlB1bGxSZXF1ZXN0MjkzOTkyMzgy,535,"Added asgi_wrapper plugin hook, closes #520",9599,simonw,closed,0,,,,,0,2019-07-03T03:58:00Z,2019-07-03T04:06:26Z,2019-07-03T04:06:26Z,OWNER,simonw/datasette/pulls/535,,107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/535/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

459621683,MDU6SXNzdWU0NTk2MjE2ODM=,521,Easier way of creating custom row templates,9599,simonw,closed,0,,,,,6,2019-06-23T21:49:27Z,2019-07-03T03:23:56Z,2019-07-03T03:23:56Z,OWNER,,"I was messing around with a custom `_rows_and_columns.html` template and ended up with this:

```html

{% for row in display_rows %}

{% for cell in row %}

{% if cell.column == ""First_Name"" %}

{{ cell.value }}

{% elif cell.column == ""Last_Name"" %}

{{ cell.value }}

{% elif cell.column == ""Short_Description"" %}

{{ cell.column }}: {{ cell.value }}

{% else %}

{{ cell.column }}: {{ cell.value }}

{% endif %}

{% endfor %}

{% endfor %}

```

This is nasty. I'd like to be able to do something like this instead:

```

{% for row in display_rows %}

{{ row[""First_Name""] }} {{ row[""Last_Name""] }}

...

```",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/521/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

463492395,MDExOlB1bGxSZXF1ZXN0MjkzOTYyNDA1,533,"Support cleaner custom templates for rows and tables, closes #521",9599,simonw,closed,0,,,,,1,2019-07-03T00:40:18Z,2019-07-03T03:23:06Z,2019-07-03T03:23:06Z,OWNER,simonw/datasette/pulls/533,"- [x] Rename `_rows_and_columns.html` to `_table.html`

- [x] Unit test

- [x] Documentation",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/533/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

462423839,MDU6SXNzdWU0NjI0MjM4Mzk=,33,index_foreign_keys / index-foreign-keys utilities,9599,simonw,closed,0,,,,,2,2019-06-30T16:42:03Z,2019-06-30T23:54:11Z,2019-06-30T23:50:55Z,OWNER,,"Sometimes it's good to have indices on all columns that are foreign keys, to allow for efficient reverse lookups.

This would be a useful utility:

$ sqlite-utils index-foreign-keys database.db
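
A minimal sketch of what such a utility could do, using only the standard `sqlite3` module. This is an assumption about one possible approach, not the sqlite-utils implementation: the function name mirrors the proposed utility, but the `idx_<table>_<column>` naming scheme is hypothetical, and `IF NOT EXISTS` only guards against a name clash, not against a differently named index that already covers the column.

```python
import sqlite3


def index_foreign_keys(conn):
    # Create an index on every foreign key column and return the index names.
    tables = [
        row[0]
        for row in conn.execute(
            'SELECT name FROM sqlite_master WHERE type = ?', ('table',)
        ).fetchall()
    ]
    created = []
    for table in tables:
        # foreign_key_list rows: id, seq, table, from, to, on_update, ...
        fks = conn.execute(
            'PRAGMA foreign_key_list([{}])'.format(table)
        ).fetchall()
        for fk in fks:
            column = fk[3]
            name = 'idx_{}_{}'.format(table, column)
            conn.execute(
                'CREATE INDEX IF NOT EXISTS [{}] ON [{}] ([{}])'.format(
                    name, table, column
                )
            )
            created.append(name)
    return created
```

Calling `index_foreign_keys(sqlite3.connect('database.db'))` would then index every column that the schema declares as a foreign key.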

",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/33/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

462423972,MDExOlB1bGxSZXF1ZXN0MjkzMTE3MTgz,34,sqlite-utils index-foreign-keys / db.index_foreign_keys(),9599,simonw,closed,0,,,,,0,2019-06-30T16:43:40Z,2019-06-30T23:50:55Z,2019-06-30T23:50:55Z,OWNER,simonw/sqlite-utils/pulls/34,"Refs #33

- [x] `sqlite-utils index-foreign-keys` command

- [x] `db.index_foreign_keys()` method

- [x] unit tests

- [x] documentation",140912432,sqlite-utils,pull,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/34/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

461237618,MDU6SXNzdWU0NjEyMzc2MTg=,31,Mechanism for adding multiple foreign key constraints at once,9599,simonw,closed,0,,,,,0,2019-06-27T00:04:30Z,2019-06-29T06:27:40Z,2019-06-29T06:27:40Z,OWNER,,"Needed by [db-to-sqlite](https://github.com/simonw/db-to-sqlite). It currently works by collecting all of the foreign key relationships it can find and then applying them at the end of the process.

The problem is, the `add_foreign_key()` method looks like this:

https://github.com/simonw/sqlite-utils/blob/86bd2bba689e25f09551d611ccfbee1e069e5b66/sqlite_utils/db.py#L498-L516

That means it's doing a full `VACUUM` for every single relationship it sets up - and if you have hundreds of foreign key relationships in your database this can take hours.
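
The cost difference can be pictured with a small sketch built on the standard `sqlite3` module. Both helper names are hypothetical, and the statements passed in merely stand in for whatever schema change each relationship requires; the only point is how many times `VACUUM` runs.

```python
import sqlite3


def apply_with_vacuum_each(conn, statements):
    # One VACUUM per statement: cost grows with the number of changes.
    vacuums = 0
    for sql in statements:
        conn.execute(sql)
        conn.commit()
        conn.execute('VACUUM')
        vacuums += 1
    return vacuums


def apply_then_vacuum_once(conn, statements):
    # Apply every change first, then rebuild the database file a single time.
    for sql in statements:
        conn.execute(sql)
    conn.commit()
    conn.execute('VACUUM')
    return 1
```

Since `VACUUM` rewrites the whole database file, the first helper pays that price once per change while the second pays it once in total.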

I think the right solution is to have a `.add_foreign_keys(list_of_args)` method which does the bulk operation and then a single `VACUUM`. `.add_foreign_key(...)` can then call the bulk action with a single list item.",140912432,sqlite-utils,issue,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/31/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

462094937,MDExOlB1bGxSZXF1ZXN0MjkyODc5MjA0,32,db.add_foreign_keys() method,9599,simonw,closed,0,,,,,1,2019-06-28T15:40:33Z,2019-06-29T06:27:39Z,2019-06-29T06:27:39Z,OWNER,simonw/sqlite-utils/pulls/32,"Refs #31. Still TODO:

- [x] Unit tests

- [x] Documentation",140912432,sqlite-utils,pull,,,"{""url"": ""https://api.github.com/repos/simonw/sqlite-utils/issues/32/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

327395270,MDU6SXNzdWUzMjczOTUyNzA=,296,Per-database and per-table /-/ URL namespace,9599,simonw,open,0,,,,,3,2018-05-29T16:23:13Z,2019-06-28T16:46:34Z,,OWNER,,"Initially this will be for subsets of `/-/inspect` and `/-/metadata` but it will also give us a URL namespace for future features like `/-/facet` (expanded list of a specific facet, linked to from `...`) and `/-/graph`

To start:

* `/dbname/-/inspect`

* `/dbname/-/metadata`

* `/dbname/tablename/-/inspect`

* `/dbname/tablename/-/metadata`

This means we will no longer allow databases or tables to have the name `""-""` - I think that's OK

We will continue to support rows with a primary key of `""-""` at the following URL:

* `/dbname/tablename/-`",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/296/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

327365110,MDU6SXNzdWUzMjczNjUxMTA=,294,inspect should record column types,9599,simonw,open,0,,,,,7,2018-05-29T15:10:41Z,2019-06-28T16:45:28Z,,OWNER,,"For each table we want to know the columns, their order and what type they are.

I'm going to break with SQLite defaults a little on this one and allow datasette to define additional types - to start with just a `geometry` type for columns that are detected as SpatiaLite geometries.

Possible JSON design:

""columns"": [{

""name"": ""title"",

""type"": ""text""

}, ...]
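
A minimal sketch of how that list could be derived from SQLite itself, assuming the declared column type reported by `PRAGMA table_info` is good enough as a starting point. The helper name `table_columns` is hypothetical, and detecting SpatiaLite geometries is deliberately left out.

```python
import sqlite3


def table_columns(conn, table):
    # table_info rows: cid, name, type, notnull, dflt_value, pk
    rows = conn.execute('PRAGMA table_info([{}])'.format(table)).fetchall()
    # Rows come back in column order; types are lowercased to match the
    # JSON design sketched above.
    return [{'name': row[1], 'type': row[2].lower()} for row in rows]
```

Columns declared without a type would come back with an empty string here, so a real implementation would need to decide how to report those.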

Refs #276",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/294/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

443038584,MDU6SXNzdWU0NDMwMzg1ODQ=,465,Decide what to do about /-/inspect,9599,simonw,closed,0,,,,,4,2019-05-11T21:39:46Z,2019-06-28T16:34:33Z,2019-06-28T16:34:33Z,OWNER,,"It's not clear to me what this endpoint should do now as a result of #419 - it's still useful to be able to introspect databases for tools like datasette-registry, but since we aren't pre-calculating introspection data any more I need to rethink the approach.

For one thing, this endpoint may need to be paginated. Or maybe it should be split up into separate endpoints for each connected database? Those should probably be paginated too seeing as fivethirtyeight has 400+ tables.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/465/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

459622390,MDU6SXNzdWU0NTk2MjIzOTA=,522,Handle case-insensitive headers in a nicer way,9599,simonw,open,0,,,,,1,2019-06-23T21:56:34Z,2019-06-26T18:48:53Z,,OWNER,,Spun out from https://github.com/simonw/datasette/pull/518#discussion_r296486289,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/522/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

460396952,MDExOlB1bGxSZXF1ZXN0MjkxNTM0NTk2,529,Use keyed rows - fixes #521,1383872,nathancahill,closed,0,,,,,1,2019-06-25T12:33:48Z,2019-06-25T12:35:07Z,2019-06-25T12:35:07Z,NONE,simonw/datasette/pulls/529,"Supports template syntax like this:

```

{% for row in display_rows %}

{{ row[""First_Name""] }} {{ row[""Last_Name""] }}

...

```",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/529/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

460095928,MDU6SXNzdWU0NjAwOTU5Mjg=,528,Establish a pattern for Datasette plugins built on top of Pandas,9599,simonw,open,0,,,,,0,2019-06-24T21:05:52Z,2019-06-24T21:05:52Z,,OWNER,,"The Pandas ecosystem is huge, varied and full of tools that are really good at doing interesting analysis on top of tabular data.

Pandas should not be a dependency of Datasette core, but I think there is a lot of potential in having plugins which use Pandas to apply interesting analysis to data sucked out of Datasette's SQLite tables.

One example ([thanks, Tony](https://twitter.com/psychemedia/status/1143259809715752962)): https://github.com/ResidentMario/missingno could form the basis of a fantastic plugin for getting a high-level overview of how complete each column in a table is.

Some thought is needed here about what shape these kinds of plugins might take, and what plugin hooks they would use.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/528/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,

438048318,MDExOlB1bGxSZXF1ZXN0Mjc0MTc0NjE0,437,Add inspect and prepare_sanic hooks,45057,russss,closed,0,,,,,2,2019-04-28T11:53:34Z,2019-06-24T16:38:57Z,2019-06-24T16:38:56Z,CONTRIBUTOR,simonw/datasette/pulls/437,"This adds two new plugin hooks:

The `inspect` hook allows plugins to add data to the inspect dictionary.

The `prepare_sanic` hook allows plugins to hook into the web router. I've attached a warning to this hook in the docs in light of #272 but I want this hook now...

On quick inspection, I don't think it's worthwhile to try and make this hook independent of the web framework (but it looks like Starlette would make the hook implementation a bit nicer).

Ref #14",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/437/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

459714943,MDU6SXNzdWU0NTk3MTQ5NDM=,525,Add section on sqlite-utils enable-fts to the search documentation,9599,simonw,closed,0,9599,simonw,,,2,2019-06-24T06:39:16Z,2019-06-24T16:36:35Z,2019-06-24T16:29:43Z,OWNER,,"https://datasette.readthedocs.io/en/stable/full_text_search.html already has a section about csvs-to-sqlite; sqlite-utils is even more relevant.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/525/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

459936585,MDU6SXNzdWU0NTk5MzY1ODU=,527,Unable to use rank when fts-table generated with csvs-to-sqlite,2181410,clausjuhl,closed,0,,,,,3,2019-06-24T14:49:48Z,2019-06-24T15:21:18Z,2019-06-24T15:09:10Z,NONE,,"Hi Simon.

If I generate an FTS table with the csvs-to-sqlite `-f` option, I'm unable to use (in Datasette's GUI) the internal ranking of the table for sorting or viewing, but if I generate the FTS table with the enable-fts command from sqlite-utils, everything works OK. E.g.:

datasette, version 0.28

sqlite-utils, version 1.2.1

csvs-to-sqlite, version 0.9

No column named rank with these commands:

$ csvs-to-sqlite minutes.csv minutes.db -f text_data

$ datasette -i minutes.db

select rank, * from minutes_fts where minutes_fts match 'dog'

Everything ok with these commands:

$ csvs-to-sqlite minutes.csv minutes.db

$ sqlite-utils enable-fts minutes.db text_data

$ datasette -i minutes.db

select rank, * from minutes_fts where minutes_fts match 'dog'

Am I doing something wrong?

Thank you for a great application!",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/527/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

459587155,MDExOlB1bGxSZXF1ZXN0MjkwODk3MTA0,518,Port Datasette from Sanic to ASGI + Uvicorn,9599,simonw,closed,0,9599,simonw,3268330,Datasette 1.0,12,2019-06-23T15:18:42Z,2019-06-24T13:42:50Z,2019-06-24T03:13:09Z,OWNER,simonw/datasette/pulls/518,"Most of the code here was fleshed out in comments on #272 (Port Datasette to ASGI) - this pull request will track the final pieces:

- [x] Update test harness to more correctly simulate the `raw_path` issue

- [x] Use `raw_path` so table names containing `/` can work correctly

- [x] Bug: JSON not served with correct content-type

- [x] Get ?_trace=1 working again

- [x] Replacement for `@app.listener(""before_server_start"")`

- [x] Bug: `/fixtures/table%2Fwith%2Fslashes.csv?_format=json` downloads as CSV

- [x] Replace Sanic request and response objects with my own classes, so I can remove Sanic dependency

- [x] Final code tidy-up before merging to master",107914493,datasette,pull,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/518/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",0,

272391665,MDU6SXNzdWUyNzIzOTE2NjU=,48,Switch to ujson,9599,simonw,closed,0,,,,,4,2017-11-08T23:50:29Z,2019-06-24T06:57:54Z,2019-06-24T06:57:43Z,OWNER,,"ujson is already a dependency of Sanic, and should be quite a bit faster.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/48/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

317714268,MDU6SXNzdWUzMTc3MTQyNjg=,238,External metadata.json,9599,simonw,closed,0,,,,,3,2018-04-25T17:02:30Z,2019-06-24T06:52:55Z,2019-06-24T06:52:45Z,OWNER,,"A frustration I'm having with https://register-of-members-interests.datasettes.com/ is that I keep coming up with new canned queries but I don't want to redeploy the whole thing just to add them to `metadata.json`

Maybe Datasette could optionally take a `--metadata-url` option which causes it to load from a URL instead and occasionally check for updates.",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/238/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

340730961,MDU6SXNzdWUzNDA3MzA5NjE=,340,Embrace black,9599,simonw,closed,0,,,,,1,2018-07-12T17:32:29Z,2019-06-24T06:50:27Z,2019-06-24T06:50:26Z,OWNER,,"Run [black](https://github.com/ambv/black) against everything. Then set up CI to fail if code doesn't conform to black's style.

Here's how Starlette does this:

* https://github.com/encode/starlette/blob/e3d090b3597167f7b3a4f76e4bb3c0d3e94be61a/.travis.yml#L14

* https://github.com/encode/starlette/blob/e3d090b3597167f7b3a4f76e4bb3c0d3e94be61a/scripts/lint - essentially runs `black starlette tests --check`

And here's an example of a test run that failed: https://travis-ci.org/encode/starlette/jobs/403172478",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/340/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

276455748,MDU6SXNzdWUyNzY0NTU3NDg=,146,datasette publish gcloud,9599,simonw,closed,0,,,,,2,2017-11-23T18:55:03Z,2019-06-24T06:48:20Z,2019-06-24T06:48:20Z,OWNER,,"See also #103

It looks like you can start a Google Cloud VM with a ""docker container"" option - and the Google Cloud Registry is easy to push containers to. So it would be feasible to have `datasette publish gcloud ...` automatically build a container, push it to GCR, then start a new VM instance with it:

https://cloud.google.com/container-registry/docs/pushing-and-pulling

",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/146/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

291639118,MDU6SXNzdWUyOTE2MzkxMTg=,183,Custom Queries - escaping strings,82988,psychemedia,closed,0,,,,,2,2018-01-25T16:49:13Z,2019-06-24T06:45:07Z,2019-06-24T06:45:07Z,CONTRIBUTOR,,"If a SQLite table column name contains spaces, they are usually referred to in double quotes:

`SELECT * FROM mytable WHERE ""gappy column name""=""my value"";`

In the JSON metadata file, this is passed by escaping the double quotes:

`""queries"": {""my query"": ""SELECT * FROM mytable WHERE \""gappy column name\""=\""my value\"";""}`

When specifying a custom query in `metadata.json` using double quotes, these are then rendered in the *datasette* query box using single quotes:

`SELECT * FROM mytable WHERE 'gappy column name'='my value';`

which does not work.

Alternatively, a valid custom query can be passed using backticks (\`) to quote the column name and single (unescaped) quotes for the matched value:

``""queries"": {""my query"": ""SELECT * FROM mytable WHERE `gappy column name`='my value';""}``
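
The failure is likely down to how SQLite reads the rewritten query: in a `WHERE` clause a single-quoted token is a string literal, so the condition compares two different strings and matches nothing. A minimal sketch with the standard `sqlite3` module, using a throwaway in-memory table that exists only for this illustration:

```python
import sqlite3

conn = sqlite3.connect(':memory:')
conn.execute('CREATE TABLE mytable ([gappy column name] TEXT)')
conn.execute('INSERT INTO mytable VALUES (?)', ('my value',))

# Single quotes on both sides: two string literals are compared.
single_quoted = '''SELECT * FROM mytable WHERE 'gappy column name'='my value';'''
# Backticks quote the identifier, single quotes quote the value.
backticked = '''SELECT * FROM mytable WHERE `gappy column name`='my value';'''

rows_single = conn.execute(single_quoted).fetchall()
rows_backtick = conn.execute(backticked).fetchall()
```

Here `rows_single` is empty while `rows_backtick` contains the inserted row, which matches the behaviour described above.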

",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/183/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

275125805,MDU6SXNzdWUyNzUxMjU4MDU=,124,Option to open readonly but not immutable,9599,simonw,closed,0,,,,,5,2017-11-19T02:11:03Z,2019-06-24T06:43:46Z,2019-06-24T06:43:46Z,OWNER,,Immutable assumes no other process can modify the file. An option to open readonly instead would enable other processes to update the file in place.,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/124/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

274315193,MDU6SXNzdWUyNzQzMTUxOTM=,106,Document how pagination works,9599,simonw,closed,0,,,,,1,2017-11-15T21:44:32Z,2019-06-24T06:42:33Z,2019-06-24T06:42:33Z,OWNER,,I made a start at that in this comment: https://news.ycombinator.com/item?id=15691926,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/106/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

323716411,MDU6SXNzdWUzMjM3MTY0MTE=,267,"Documentation for URL hashing, redirects and cache policy",9599,simonw,closed,0,,,,,3,2018-05-16T17:29:01Z,2019-06-24T06:41:02Z,2019-06-24T06:41:02Z,OWNER,,See my comments on #258 for a starting point,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/267/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

329147284,MDU6SXNzdWUzMjkxNDcyODQ=,305,Add contributor guidelines to docs,9599,simonw,closed,0,,,,,2,2018-06-04T17:25:30Z,2019-06-24T06:40:19Z,2019-06-24T06:40:19Z,OWNER,,https://channels.readthedocs.io/en/latest/contributing.html is a nice example of this done well.,107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/305/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

324188953,MDU6SXNzdWUzMjQxODg5NTM=,272,Port Datasette to ASGI,9599,simonw,closed,0,9599,simonw,3268330,Datasette 1.0,42,2018-05-17T21:16:32Z,2019-06-24T04:54:15Z,2019-06-24T03:33:06Z,OWNER,,"Datasette doesn't take much advantage of Sanic, and I'm increasingly having to work around parts of it because of idiosyncrasies that are specific to Datasette - caring about the exact order of querystring arguments for example.

Since Datasette is GET-only our needs from a web framework are actually pretty slim.

This becomes more important as I expand the plugins framework (#14). Am I sure I want the plugin ecosystem to depend on Sanic if I might move away from it in the future?

If Datasette wasn't all about async/await I would use WSGI, but today it makes more sense to use ASGI. I'd like to be confident that switching to ASGI would still give me the excellent performance that Sanic provides.

https://github.com/django/asgiref/blob/master/specs/asgi.rst",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/272/reactions"", ""total_count"": 1, ""+1"": 1, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed

459627549,MDU6SXNzdWU0NTk2Mjc1NDk=,523,Show total/unfiltered row count when filtering,2657547,rixx,closed,0,,,,,2,2019-06-23T22:56:48Z,2019-06-24T01:38:14Z,2019-06-24T01:38:14Z,CONTRIBUTOR,,"When I'm seeing a filtered view of a table, I'd like to be able to see something like '2 rows where status != ""closed"" (of 1000 total)' to have a context for the data I'm seeing – e.g. currently my database is being filled by an importer, so this information would be super helpful.

Since this information would be a performance hit, maybe something like '12 rows where status != ""closed"" (of ??? total)' with lazy-loading on-click(?) could be applied (Or via a ""How many total?"" tooltip, or …)",107914493,datasette,issue,,,"{""url"": ""https://api.github.com/repos/simonw/datasette/issues/523/reactions"", ""total_count"": 0, ""+1"": 0, ""-1"": 0, ""laugh"": 0, ""hooray"": 0, ""confused"": 0, ""heart"": 0, ""rocket"": 0, ""eyes"": 0}",,completed