issues

572 rows where comments = 0 sorted by title
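
The page is a filtered, sorted view of a single SQLite table. Here is a rough sketch of the same filter and sort using Python's built-in `sqlite3` module. It assumes the table is named `issues` and has the `number`, `title` and `comments` columns listed in the table header below. The helper name `uncommented_issues` is hypothetical.

```python
import sqlite3


def uncommented_issues(conn: sqlite3.Connection) -> list:
    """Return (number, title) rows for issues with no comments, sorted by title.

    Hypothetical helper: assumes a table called `issues` with `number`,
    `title` and `comments` columns, as in the table header below.
    """
    sql = """
        select number, title
        from issues
        where comments = 0
        order by title
    """
    return conn.execute(sql).fetchall()
```

Unless a column specifies otherwise, SQLite compares text with its default `BINARY` collation, byte by byte. Titles that start with punctuation such as `"` or `--` therefore sort ahead of titles that start with capital letters. That is consistent with `"Powered by Datasette" ...` and `--get ...` appearing before `Ability to ...` in the listing.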

created_at (date) >30

- 2018-04-16 5

- 2020-09-17 5

- 2022-12-28 5

- 2017-10-23 4

- 2017-11-13 4

- 2020-03-23 4

- 2023-03-09 4

- 2017-11-11 3

- 2017-11-14 3

- 2018-04-09 3

- 2019-05-19 3

- 2019-09-03 3

- 2019-10-11 3

- 2020-04-08 3

- 2020-09-01 3

- 2020-09-22 3

- 2020-10-11 3

- 2021-06-20 3

- 2023-07-26 3

- 2017-10-24 2

- 2017-11-15 2

- 2017-11-16 2

- 2017-11-19 2

- 2018-04-14 2

- 2018-04-18 2

- 2018-05-16 2

- 2019-02-24 2

- 2019-05-02 2

- 2019-05-03 2

- 2019-07-01 2

- …
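
Each `created_at (date)` entry pairs a calendar day with the number of matching rows. The `>30` marker signals that there are more than 30 distinct days, and only the first 30 are shown before the `…`; 30 is the default value of Datasette's `default_facet_size` setting. A minimal sketch of a query that yields counts in this shape follows. It assumes the same `issues` table, and both the helper name `created_at_facet` and the tie-breaking order are assumptions.

```python
import sqlite3


def created_at_facet(conn: sqlite3.Connection, limit: int = 30) -> list:
    """Count comment-free issues per calendar day, most frequent days first.

    Hypothetical helper. SQLite's date() accepts ISO 8601 timestamps with a
    trailing "Z", so '2018-08-16T00:52:21Z' becomes '2018-08-16'.
    Ties are broken by ascending date (an assumption about display order).
    """
    sql = """
        select date(created_at) as day, count(*) as n
        from issues
        where comments = 0
        group by day
        order by n desc, day
        limit ?
    """
    return conn.execute(sql, (limit,)).fetchall()
```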

repo 16

- datasette 352

- sqlite-utils 98

- twitter-to-sqlite 26

- github-to-sqlite 23

- dogsheep-beta 16

- healthkit-to-sqlite 8

- dogsheep-photos 8

- google-takeout-to-sqlite 7

- dogsheep.github.io 7

- apple-notes-to-sqlite 7

- swarm-to-sqlite 6

- evernote-to-sqlite 6

- pocket-to-sqlite 3

- inaturalist-to-sqlite 2

- hacker-news-to-sqlite 2

- genome-to-sqlite 1
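
The `repo` facet (16 distinct values) counts the same rows per repository. Cells in the `repo` column below pair a name with a numeric ID, for example `datasette 107914493`. That suggests `issues.repo` is a foreign key shown through its label. The sketch below assumes a hypothetical `repos` table with `id` and `name` columns, and the helper name `repo_facet` is also hypothetical.

```python
import sqlite3


def repo_facet(conn: sqlite3.Connection, limit: int = 30) -> list:
    """Count comment-free issues per repository, largest first.

    Hypothetical helper: assumes issues.repo references repos.id and that
    repos.name is the label displayed next to the ID.
    """
    sql = """
        select repos.name, count(*) as n
        from issues
        join repos on repos.id = issues.repo
        where issues.comments = 0
        group by repos.id, repos.name
        order by n desc, repos.id
        limit ?
    """
    return conn.execute(sql, (limit,)).fetchall()
```

Grouping by `repos.id` rather than by name alone keeps two repositories that happen to share a name from being merged into one count.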

| id | node_id | number | title ▼ | user | state | locked | assignee | milestone | comments | created_at | updated_at | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 351017365 | MDExOlB1bGxSZXF1ZXN0MjA4NzE5MDQz | 361 | Import pysqlite3 if available, closes #360 | simonw 9599 | closed | 0 |  |  | 0 | 2018-08-16T00:52:21Z | 2018-08-16T00:58:57Z | 2018-08-16T00:58:57Z | OWNER | simonw/datasette/pulls/361 |  | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/361/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 1871935751 | I_kwDOD079W85vk3kH | 40 | ImportError: cannot import name 'formatargspec' from 'inspect' | hosslikw 36752421 | closed | 0 |  |  | 0 | 2023-08-29T15:36:31Z | 2023-08-31T03:18:07Z | 2023-08-31T03:18:06Z | NONE |  | I get the following error when running "pip3 install dogsheep-photos" " from inspect import ismethod, isclass, formatargspec ImportError: cannot import name 'formatargspec' from 'inspect' (/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/inspect.py). Did you mean: 'formatargvalues'?" Python 3.12.0rc1 sqlite 3.43.0 datasette, version 0.64.3 | dogsheep-photos 256834907 | issue |  |  | {"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/40/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 1943259395 | I_kwDOEhK-wc5z08kD | 16 | time data '2014-11-21T11:44:12.000Z' does not match format '%Y%m%dT%H%M%SZ' | linonetwo 3746270 | open | 0 |  |  | 0 | 2023-10-14T13:24:39Z | 2023-10-14T13:24:39Z |  | NONE |  | enex is exported by evernote mac client | evernote-to-sqlite 303218369 | issue |  |  | {"url": "https://api.github.com/repos/dogsheep/evernote-to-sqlite/issues/16/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 761713079 | MDU6SXNzdWU3NjE3MTMwNzk= | 1138 | "Powered by Datasette" should link to new datasette.io site | simonw 9599 | closed | 0 |  |  | 0 | 2020-12-10T23:33:41Z | 2020-12-15T02:28:10Z | 2020-12-10T23:37:14Z | OWNER |  |  | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1138/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 1084007781 | I_kwDOBm6k_c5AnKVl | 1572 | "Query took" should be "Queries took" | simonw 9599 | closed | 0 |  | Datasette 0.60 7571612 | 0 | 2021-12-19T04:03:00Z | 2022-01-13T22:27:43Z | 2021-12-19T04:03:24Z | OWNER |  | This is misleading, since usually there have been more than one query executed: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1572/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 275228834 | MDU6SXNzdWUyNzUyMjg4MzQ= | 136 | "Reformat SQL" button next to SQL editor textarea | simonw 9599 | closed | 0 |  |  | 0 | 2017-11-20T03:42:19Z | 2019-10-14T03:46:13Z | 2019-10-14T03:46:13Z | OWNER |  |  | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/136/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 853672224 | MDU6SXNzdWU4NTM2NzIyMjQ= | 1294 | "You can check out any time you like. But you can never leave!" | mroswell 192568 | open | 0 |  |  | 0 | 2021-04-08T17:02:15Z | 2021-04-08T18:35:50Z |  | CONTRIBUTOR |  | (Feel free to rename this one.) UPDATE: - It would be helpful to have a "Previous page" available for all but the first table page. | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1294/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 679637501 | MDU6SXNzdWU2Nzk2Mzc1MDE= | 934 | --get doesn't fully invoke the startup routine | simonw 9599 | closed | 0 |  |  | 0 | 2020-08-15T20:30:25Z | 2020-08-15T20:53:49Z | 2020-08-15T20:53:49Z | OWNER |  | Spotted this working on https://github.com/simonw/latest-datasette-with-all-plugins/issues/3 - I'd like to be able to use | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/934/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 1931794126 | I_kwDOBm6k_c5zJNbO | 2198 | --load-extension=spatialite not working with Windows | hcarter333 363004 | open | 0 |  |  | 0 | 2023-10-08T12:50:22Z | 2023-10-08T12:50:22Z |  | NONE |  | Using each of and and I got the error: ``` File "C:\Users\m3n7es\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.11_qbz5n2kfra8p0\LocalCache\local-packages\Python311\site-packages\datasette\database.py", line 209, in in_thread self.ds._prepare_connection(conn, self.name) File "C:\Users\m3n7es\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.11_qbz5n2kfra8p0\LocalCache\local-packages\Python311\site-packages\datasette\app.py", line 596, in _prepare_connection conn.execute("SELECT load_extension(?, ?)", [path, entrypoint]) sqlite3.OperationalError: The specified module could not be found. ``` I finally tried modifying the code in app.py to read: ``` def _prepare_connection(self, conn, database): conn.row_factory = sqlite3.Row conn.text_factory = lambda x: str(x, "utf-8", "replace") if self.sqlite_extensions: conn.enable_load_extension(True) for extension in self.sqlite_extensions: # "extension" is either a string path to the extension # or a 2-item tuple that specifies which entrypoint to load. #if isinstance(extension, tuple): # path, entrypoint = extension # conn.execute("SELECT load_extension(?, ?)", [path, entrypoint]) #else: conn.execute("SELECT load_extension('C:\Windows\System32\mod_spatialite.dll')") ``` At which point the counties example worked. Is there a correct way to install/use the extension on Windows? My method will cause issues if there's a second extension to be used. On an unrelated note, my next step is to figure out how to write a query across the two loaded databases supplied from the command line: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2198/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 665701216 | MDU6SXNzdWU2NjU3MDEyMTY= | 123 | --raw option for outputting binary content | simonw 9599 | closed | 0 |  |  | 0 | 2020-07-26T03:35:39Z | 2020-07-26T16:44:11Z | 2020-07-26T16:44:11Z | OWNER |  | Related to the One way to do that could be: The | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/123/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 758944006 | MDU6SXNzdWU3NTg5NDQwMDY= | 57 | --readme throws 404 error if README does not exist in repo | simonw 9599 | closed | 0 |  |  | 0 | 2020-12-07T23:58:49Z | 2020-12-16T18:17:54Z | 2020-12-16T18:17:54Z | MEMBER |  | It should fail silently (populate the column with a null) instead. | github-to-sqlite 207052882 | issue |  |  | {"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/57/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 610853393 | MDU6SXNzdWU2MTA4NTMzOTM= | 104 | --schema option to "sqlite-utils tables" | simonw 9599 | closed | 0 |  |  | 0 | 2020-05-01T16:55:49Z | 2020-05-01T17:12:37Z | 2020-05-01T17:12:37Z | OWNER |  | Adds output showing the table schema. | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/104/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 806861312 | MDExOlB1bGxSZXF1ZXN0NTcyMjA5MjQz | 1222 | --ssl-keyfile and --ssl-certfile, refs #1221 | simonw 9599 | closed | 0 |  |  | 0 | 2021-02-12T00:45:58Z | 2021-02-12T00:52:18Z | 2021-02-12T00:52:17Z | OWNER | simonw/datasette/pulls/1222 |  | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1222/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 735648209 | MDU6SXNzdWU3MzU2NDgyMDk= | 193 | --tsv output format option | simonw 9599 | closed | 0 |  | 3.0 6079500 | 0 | 2020-11-03T21:31:18Z | 2020-11-07T00:09:52Z | 2020-11-07T00:09:52Z | OWNER |  | We already support | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/193/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 783910901 | MDU6SXNzdWU3ODM5MTA5MDE= | 221 | .add_missing_columns() does not take case insensitivity into account | simonw 9599 | closed | 0 |  |  | 0 | 2021-01-12T05:01:00Z | 2021-01-12T23:17:33Z | 2021-01-12T23:17:33Z | OWNER |  | SQLite columns are case insensitive - but the | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/221/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 787900412 | MDU6SXNzdWU3ODc5MDA0MTI= | 222 | .m2m() should accept alter=True parameter | simonw 9599 | closed | 0 |  |  | 0 | 2021-01-18T04:15:43Z | 2021-01-18T04:26:10Z | 2021-01-18T04:26:10Z | OWNER |  |  | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/222/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 561460274 | MDU6SXNzdWU1NjE0NjAyNzQ= | 84 | .upsert() with hash_id throws error | simonw 9599 | closed | 0 |  |  | 0 | 2020-02-07T07:08:19Z | 2020-02-07T07:17:11Z | 2020-02-07T07:17:11Z | OWNER |  | The problem is, if you try this: | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/84/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 315548495 | MDU6SXNzdWUzMTU1NDg0OTU= | 225 | /-/(inspect\|metadata\|plugins)(.json)? introspection | simonw 9599 | closed | 0 |  |  | 0 | 2018-04-18T16:14:58Z | 2018-04-19T05:25:33Z | 2018-04-19T05:25:33Z | OWNER |  | 3 pages (and accompanying .json endpoints) for viewing: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/225/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 778530523 | MDU6SXNzdWU3Nzg1MzA1MjM= | 1172 | /-/static should be excluded from auth and permission checks | simonw 9599 | open | 0 |  |  | 0 | 2021-01-05T02:53:41Z | 2021-01-05T02:53:41Z |  | OWNER |  | I want to set far future / immutable cache headers on everything served from This has security implications since it will be possible to see what plugins are installed by checking for known static URLs. I'm fine with that - performance is more important here. | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1172/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 321624016 | MDU6SXNzdWUzMjE2MjQwMTY= | 252 | /-/versions should report the FTS version supported by SQLite | simonw 9599 | closed | 0 |  |  | 0 | 2018-05-09T15:43:47Z | 2018-05-11T13:19:52Z | 2018-05-11T13:19:52Z | OWNER |  | I can copy this function from | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/252/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 267886865 | MDU6SXNzdWUyNjc4ODY4NjU= | 28 | /database?sql= should redirect correctly | simonw 9599 | closed | 0 |  | Ship first public release 2857392 | 0 | 2017-10-24T03:38:44Z | 2017-10-24T23:54:30Z | 2017-10-24T23:54:30Z | OWNER |  | Needs to redirect to the location with the hash while retaining the query string. This should also work with the .json extension. | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/28/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 647095808 | MDU6SXNzdWU2NDcwOTU4MDg= | 874 | /favicon.ico 500 error | simonw 9599 | closed | 0 |  | Datasette 0.45 5533512 | 0 | 2020-06-29T04:04:22Z | 2020-06-29T04:27:18Z | 2020-06-29T04:27:18Z | OWNER |  |  | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/874/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 802583450 | MDU6SXNzdWU4MDI1ODM0NTA= | 226 | 3.4 release is broken - includes a rogue line | simonw 9599 | closed | 0 |  |  | 0 | 2021-02-06T02:08:01Z | 2021-02-06T02:10:26Z | 2021-02-06T02:10:26Z | OWNER |  | I started seeing weird errors, caused by this line: https://github.com/simonw/sqlite-utils/blob/f8010ca78fed8c5fca6cde19658ec09fdd468420/sqlite_utils/cli.py#L1-L3 That was added by accident in 1b666f9315d4ea6bb332b2e75e48480c26100199 I'm surprised the tests didn't catch this! | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/226/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 405801771 | MDExOlB1bGxSZXF1ZXN0MjQ5NjgwOTQ0 | 9 | :pencil: Updates my_database.py to my_database.db | jefftriplett 50527 | closed | 0 |  |  | 0 | 2019-02-01T17:35:43Z | 2019-02-24T03:55:04Z | 2019-02-24T03:55:04Z | CONTRIBUTOR | simonw/sqlite-utils/pulls/9 | I noticed that both | sqlite-utils 140912432 | pull |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/9/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 426722204 | MDU6SXNzdWU0MjY3MjIyMDQ= | 423 | ?_search_col=X not reflected correctly in the UI | simonw 9599 | open | 0 |  |  | 0 | 2019-03-28T21:48:19Z | 2020-11-03T19:01:59Z |  | OWNER |  |  | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/423/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 570327466 | MDExOlB1bGxSZXF1ZXN0Mzc5Mzc4Nzgw | 686 | ?_searchmode=raw option | simonw 9599 | closed | 0 |  |  | 0 | 2020-02-25T05:45:50Z | 2020-02-25T05:56:09Z | 2020-02-25T05:56:04Z | OWNER | simonw/datasette/pulls/686 | Closes #676 | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/686/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 610342575 | MDU6SXNzdWU2MTAzNDI1NzU= | 748 | ?_searchmode=raw should be documented on full-text search page | simonw 9599 | closed | 0 |  |  | 0 | 2020-04-30T19:50:06Z | 2020-04-30T21:06:12Z | 2020-04-30T21:06:12Z | OWNER |  | It's currently documented here: https://datasette.readthedocs.io/en/stable/json_api.html#special-table-arguments But it should also be described here: https://datasette.readthedocs.io/en/stable/full_text_search.html#the-table-view-api | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/748/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 319371036 | MDExOlB1bGxSZXF1ZXN0MTg1MzA3NDA3 | 246 | ?_shape=array and _timelimit= | simonw 9599 | closed | 0 |  |  | 0 | 2018-05-02T00:18:54Z | 2018-05-02T00:20:41Z | 2018-05-02T00:20:40Z | OWNER | simonw/datasette/pulls/246 |  | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/246/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 432792459 | MDExOlB1bGxSZXF1ZXN0MjcwMTkxMDg0 | 430 | ?_where= parameter on table views, closes #429 | simonw 9599 | closed | 0 |  |  | 0 | 2019-04-13T01:15:09Z | 2019-04-13T01:37:23Z | 2019-04-13T01:37:23Z | OWNER | simonw/datasette/pulls/430 |  | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/430/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 433297989 | MDU6SXNzdWU0MzMyOTc5ODk= | 433 | ?column__in=value1,value2,value3 filter | simonw 9599 | closed | 0 |  |  | 0 | 2019-04-15T13:58:24Z | 2019-04-15T23:00:20Z | 2019-04-15T23:00:20Z | OWNER |  | Support for the SQL If comma separation won't work (because the values themselves contain commas) you can do this instead: See also #288 | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/433/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 1345561209 | I_kwDOBm6k_c5QM6J5 | 1790 | A better HTML title for canned query pages | simonw 9599 | open | 0 |  |  | 0 | 2022-08-21T18:27:46Z | 2022-08-21T18:27:46Z |  | OWNER |  | https://scotrail.datasette.io/scotrail/assemble_sentence?terms=This+train+is+formed+of%2Cbomb+which Current title is: I think a better title would be: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1790/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 413857257 | MDU6SXNzdWU0MTM4NTcyNTc= | 15 | Ability to add columns to tables | simonw 9599 | closed | 0 |  |  | 0 | 2019-02-24T19:20:51Z | 2019-02-24T20:04:40Z | 2019-02-24T20:04:40Z | OWNER |  | Makes sense to do this before foreign keys in #2 Python: CLI: | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/15/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 906355849 | MDExOlB1bGxSZXF1ZXN0NjU3MzczNzI2 | 262 | Ability to add descending order indexes | simonw 9599 | closed | 0 |  |  | 0 | 2021-05-29T04:51:04Z | 2021-05-29T05:01:42Z | 2021-05-29T05:01:39Z | OWNER | simonw/sqlite-utils/pulls/262 | Refs #260 | sqlite-utils 140912432 | pull |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/262/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 1447465004 | I_kwDOBm6k_c5WRpAs | 1889 | Ability to create new tokens via the API | simonw 9599 | open | 0 |  | Datasette 1.0a-next 8755003 | 0 | 2022-11-14T06:21:36Z | 2022-12-13T05:29:08Z |  | OWNER |  | Refs: - #1850 Initially I decided that the API shouldn't be able to create new tokens at all - I don't like the idea of an API token holder creating themselves additional tokens. Then I realized that two of the API features are specifically more useful if you can generate fresh tokens via the API: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1889/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 1754174496 | I_kwDOCGYnMM5ojpQg | 558 | Ability to define unique columns when creating a table | aguinane 1910303 | open | 0 |  |  | 0 | 2023-06-13T06:56:19Z | 2023-08-18T01:06:03Z |  | NONE |  | When creating a new table, it would be good to have an option to set unique columns similar to how not_null is set. ```python from sqlite_utils import Database columns = {"mRID": str, "name": str} db = Database("example.db") db["ExampleTable"].create(columns, pk="mRID", not_null=["mRID"], if_not_exists=True) db["ExampleTable"].create_index(["mRID"], unique=True, if_not_exists=True) ``` So something like this would add the UNIQUE flag to the table definition. | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/558/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 975049826 | MDExOlB1bGxSZXF1ZXN0NzE2MjYyODI5 | 1444 | Ability to deploy demos of branches | simonw 9599 | closed | 0 |  |  | 0 | 2021-08-19T21:08:04Z | 2021-08-19T21:09:44Z | 2021-08-19T21:09:39Z | OWNER | simonw/datasette/pulls/1444 | See #1442. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1444/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 897212458 | MDU6SXNzdWU4OTcyMTI0NTg= | 63 | Ability to fetch commits from branches other than the default | simonw 9599 | open | 0 |  |  | 0 | 2021-05-20T17:58:08Z | 2021-05-20T17:58:08Z |  | MEMBER |  | This tool is currently almost entirely ignorant of the concept of branches. One example: you can't retrieve commits from any branch other than the default (usually main). | github-to-sqlite 207052882 | issue |  |  | {"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/63/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 508024032 | MDU6SXNzdWU1MDgwMjQwMzI= | 22 | Ability to import from uncompressed archive or from specific files | simonw 9599 | closed | 0 |  |  | 0 | 2019-10-16T18:31:57Z | 2019-10-16T18:53:36Z | 2019-10-16T18:53:36Z | MEMBER |  | Currently you can only import like this: It would be useful if you could import from a folder that was decompressed from that zip: AND from individual files within that folder - since that would allow you to e.g. selectively import certain files: | twitter-to-sqlite 206156866 | issue |  |  | {"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/22/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 488338965 | MDU6SXNzdWU0ODgzMzg5NjU= | 59 | Ability to introspect triggers | simonw 9599 | closed | 0 |  |  | 0 | 2019-09-02T23:47:16Z | 2019-09-03T01:52:36Z | 2019-09-03T00:09:42Z | OWNER |  | Now that we're creating triggers (thanks to @amjith in #57) it would be neat if we could introspect them too. I'm thinking: The underlying query for this is I'll return the trigger information in a new namedtuple, similar to how Indexes and ForeignKeys work. | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/59/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 312395790 | MDU6SXNzdWUzMTIzOTU3OTA= | 197 | Ability to sort by more than one column | simonw 9599 | open | 0 |  |  | 0 | 2018-04-09T05:13:30Z | 2018-07-10T17:45:37Z |  | OWNER |  | Split off from #189. I'd like to support "sort by X descending, then by Y ascending if there are dupes for X" as well. Suggested syntax for that: we currently only allow one argument to be sent. We should allow as many arguments as there are columns, for example: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/197/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 312396095 | MDU6SXNzdWUzMTIzOTYwOTU= | 198 | Ability to sort with nulls last | simonw 9599 | open | 0 |  |  | 0 | 2018-04-09T05:15:40Z | 2018-07-10T17:45:37Z |  | OWNER |  | Split off from #189 Here's how to do that in SQL: https://fivethirtyeight.datasettes.com/fivethirtyeight-2628db9?sql=select+rowid%2C+*+from+%5Bnfl-wide-receivers%2Fadvanced-historical%5D%0D%0Aorder+by+case+when+career_ranypa+is+null+then+1+else+0+end%2C+career_ranypa%2C+rowid | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/198/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 1399933513 | I_kwDOBm6k_c5TcUpJ | 1833 | Ability to submit long queries by POST | simonw 9599 | open | 0 |  |  | 0 | 2022-10-06T16:03:26Z | 2022-10-06T16:18:00Z |  | OWNER |  | Datasette doesn't limit URL lengths but some common web proxies do - the one in front of Google Cloud Run for example limits to 8KB total for incoming request headers: https://cloud.google.com/load-balancing/docs/quotas#https-lb-header-limits This means longer SQL queries can break! Need an optional mechanism for submitting queries by POST instead. | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1833/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 1674322631 | PR_kwDOBm6k_c5OpEz_ | 2061 | Add "Packaging a plugin using Poetry" section in docs | rclement 1238873 | open | 0 |  |  | 0 | 2023-04-19T07:23:28Z | 2023-04-19T07:27:18Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2061 | This PR adds a new section about packaging a plugin using :books: Documentation preview :books:: https://datasette--2061.org.readthedocs.build/en/2061/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2061/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 1243715381 | I_kwDOCGYnMM5KIZc1 | 436 | Add "copy to clipboard" button to code examples in documentation | simonw 9599 | closed | 0 |  |  | 0 | 2022-05-20T21:53:23Z | 2022-05-20T21:57:53Z | 2022-05-20T21:57:53Z | OWNER |  | Follows: - #435 Imitates: - https://github.com/simonw/datasette/issues/1748 I'll use https://github.com/executablebooks/sphinx-copybutton - here's the Datasette commit: https://github.com/simonw/datasette/commit/1465fea4798599eccfe7e8f012bd8d9adfac3039 | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/436/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 668064778 | MDU6SXNzdWU2NjgwNjQ3Nzg= | 912 | Add "publishing to Vercel" to the publish docs | simonw 9599 | closed | 0 |  |  | 0 | 2020-07-29T18:50:58Z | 2020-07-31T17:06:35Z | 2020-07-31T17:06:35Z | OWNER |  | https://datasette.readthedocs.io/en/0.45/publish.html#datasette-publish currently only lists Cloud Run, Heroku and Fly. It should list Vercel too. (I should probably rename | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/912/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 924991194 | MDU6SXNzdWU5MjQ5OTExOTQ= | 280 | Add --encoding option to sqlite-utils memory | simonw 9599 | closed | 0 |  |  | 0 | 2021-06-18T15:03:32Z | 2021-06-18T15:29:46Z | 2021-06-18T15:29:46Z | OWNER |  | Follow-on from #272 - this will work like | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/280/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 273895344 | MDU6SXNzdWUyNzM4OTUzNDQ= | 92 | Add --license --license_url --source --source_url --title arguments to datasette publish | simonw 9599 | closed | 0 |  |  | 0 | 2017-11-14T18:27:07Z | 2017-11-15T05:04:41Z | 2017-11-15T05:04:41Z | OWNER |  | I keep on using the https://gist.github.com/simonw/9f8bf23b37a42d7628c4dcc4bba10253 | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/92/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 1553425465 | I_kwDOCGYnMM5cl2Q5 | 522 | Add COLUMN_TYPE_MAPPING for timedelta | maport 81377 | closed | 0 |  |  | 0 | 2023-01-23T16:49:54Z | 2023-11-04T00:49:51Z | 2023-11-04T00:49:51Z | NONE |  | Currently trying to create a column with Python type The reason this would be useful is that So currently any attempt to convert a MySQL DB with a I was rather surprised that | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/522/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 635076066 | MDU6SXNzdWU2MzUwNzYwNjY= | 821 | Add Response class to internals documentation | simonw 9599 | closed | 0 |  | Datasette 0.44 5512395 | 0 | 2020-06-09T03:11:06Z | 2020-06-09T03:32:16Z | 2020-06-09T03:32:16Z | OWNER |  | Originally posted by @simonw in https://github.com/simonw/datasette/issues/215#issuecomment-640971470 | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/821/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 273537940 | MDU6SXNzdWUyNzM1Mzc5NDA= | 77 | Add Travis CI badge to README | simonw 9599 | closed | 0 |  | Ship first public release 2857392 | 0 | 2017-11-13T18:52:25Z | 2017-11-13T21:24:15Z | 2017-11-13T21:24:15Z | OWNER |  | Also fix this newline issue: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/77/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 1823428714 | I_kwDOBm6k_c5sr1Bq | 2120 | Add __all__ to datasette/__init__.py | simonw 9599 | open | 0 | 0 | 2023-07-27T01:07:10Z | 2023-07-27T01:07:10Z | OWNER | Currently looks like this: https://github.com/simonw/datasette/blob/08181823990a71ffa5a1b57b37259198eaa43e06/datasette/__init__.py#L1-L6 Adding |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2120/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 931752773 | MDU6SXNzdWU5MzE3NTI3NzM= | 294 | Add a `sqlite-utils memory` example to the README | simonw 9599 | closed | 0 | 0 | 2021-06-28T16:35:59Z | 2021-08-18T21:40:03Z | 2021-08-18T21:40:03Z | OWNER | sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/294/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 830901133 | MDExOlB1bGxSZXF1ZXN0NTkyMzY0MjU1 | 16 | Add a fallback ID, print if no ID found | n8henrie 1234956 | open | 0 | 0 | 2021-03-13T13:38:29Z | 2021-03-13T14:44:04Z | FIRST_TIME_CONTRIBUTOR | dogsheep/healthkit-to-sqlite/pulls/16 | healthkit-to-sqlite 197882382 | pull | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/16/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||||

| 439487648 | MDExOlB1bGxSZXF1ZXN0Mjc1MjgxMzA3 | 444 | Add a max-line-length setting for flake8 | russss 45057 | closed | 0 | 0 | 2019-05-02T08:58:57Z | 2019-05-04T09:44:48Z | 2019-05-03T13:11:28Z | CONTRIBUTOR | simonw/datasette/pulls/444 | This stops my automatic editor linting from flagging lines which are too long. It's been lingering in my checkout for ages. 160 is an arbitrary large number - we could alter it if we have any opinions (but I find the line length limit to be my least favourite part of PEP8). |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/444/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 689800307 | MDU6SXNzdWU2ODk4MDAzMDc= | 1 | Add an index on the timestamp column | simonw 9599 | closed | 0 | 0 | 2020-09-01T04:33:37Z | 2020-09-01T04:49:23Z | 2020-09-01T04:49:23Z | MEMBER | Since default view will likely be ordered by timestamp descending. |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/1/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1102966378 | I_kwDOBm6k_c5Bve5q | 1599 | Add architecture documentation | simonw 9599 | open | 0 | 0 | 2022-01-14T04:55:38Z | 2022-01-14T04:56:03Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1599/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 558715564 | MDExOlB1bGxSZXF1ZXN0MzcwMDI0Njk3 | 4 | Add beeminder-to-sqlite | bcongdon 706257 | closed | 0 | 0 | 2020-02-02T15:51:36Z | 2020-10-12T00:36:16Z | 2020-10-12T00:36:16Z | CONTRIBUTOR | dogsheep/dogsheep.github.io/pulls/4 | dogsheep.github.io 214746582 | pull | {

"url": "https://api.github.com/repos/dogsheep/dogsheep.github.io/issues/4/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 927789811 | MDU6SXNzdWU5Mjc3ODk4MTE= | 292 | Add contributing documentation | simonw 9599 | closed | 0 | 0 | 2021-06-23T02:13:05Z | 2021-06-25T17:53:51Z | 2021-06-25T17:53:51Z | OWNER | Like https://docs.datasette.io/en/latest/contributing.html (but simpler) - should cover how to run |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/292/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1581218043 | PR_kwDOBm6k_c5JyqPy | 2025 | Add database metadata to index.html template context | palewire 9993 | open | 0 | 0 | 2023-02-12T11:16:58Z | 2023-02-12T11:17:14Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2025 | Fixes #2016 :books: Documentation preview :books:: https://datasette--2025.org.readthedocs.build/en/2025/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2025/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 464994105 | MDU6SXNzdWU0NjQ5OTQxMDU= | 548 | Add datasette-cors and datasette-auth-github plugins to Ecosystem page | simonw 9599 | closed | 0 | Datasette 0.29 4471010 | 0 | 2019-07-07T21:14:14Z | 2019-07-08T02:02:36Z | 2019-07-08T02:02:36Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/548/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 836064851 | MDExOlB1bGxSZXF1ZXN0NTk2NjI3Nzgw | 18 | Add datetime parsing | n8henrie 1234956 | open | 0 | 0 | 2021-03-19T14:34:22Z | 2021-03-19T14:34:22Z | FIRST_TIME_CONTRIBUTOR | dogsheep/healthkit-to-sqlite/pulls/18 | Parses the datetime columns so they are subsequently properly recognized as datetime. Fixes https://github.com/dogsheep/healthkit-to-sqlite/issues/17 |

healthkit-to-sqlite 197882382 | pull | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/18/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 291451116 | MDExOlB1bGxSZXF1ZXN0MTY1MDI5ODA3 | 182 | Add db filesize next to download link | raynae 3433657 | closed | 0 | 0 | 2018-01-25T04:58:56Z | 2019-03-22T13:50:57Z | 2019-02-06T04:59:38Z | CONTRIBUTOR | simonw/datasette/pulls/182 | Took a stab at #172, will this do the trick? |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/182/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 1396977994 | I_kwDOBm6k_c5TRDFK | 1830 | Add documentation for writing tests with signed actor cookies | simonw 9599 | open | 0 | 0 | 2022-10-04T23:51:26Z | 2022-10-04T23:51:26Z | OWNER | I use this pattern in a lot of plugin tests, e.g. https://github.com/simonw/datasette-edit-templates/blob/087f6a6cabc20020f2b0524f11aa3a7836320848/tests/test_edit_templates.py#L55-L58

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1830/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1099897648 | I_kwDOCGYnMM5Bjxsw | 384 | Add examples to every `--help` | simonw 9599 | closed | 0 | 0 | 2022-01-12T05:31:25Z | 2022-01-26T03:15:02Z | 2022-01-26T03:15:02Z | OWNER | Everything on https://sqlite-utils.datasette.io/en/stable/cli-reference.html would benefit from an example. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/384/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 723499985 | MDExOlB1bGxSZXF1ZXN0NTA1MDc2NDE4 | 5 | Add fitbit-to-sqlite | mrphil007 4632208 | open | 0 | 0 | 2020-10-16T20:04:05Z | 2020-10-16T20:04:05Z | FIRST_TIME_CONTRIBUTOR | dogsheep/dogsheep.github.io/pulls/5 | dogsheep.github.io 214746582 | pull | {

"url": "https://api.github.com/repos/dogsheep/dogsheep.github.io/issues/5/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||||

| 1178456794 | I_kwDOCGYnMM5GPdLa | 418 | Add generated files to .gitignore | eyeseast 25778 | closed | 0 | 0 | 2022-03-23T17:48:12Z | 2022-03-24T21:01:44Z | 2022-03-24T21:01:44Z | CONTRIBUTOR | I end up with these in my local directory: Might as well gitignore them. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/418/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 925384329 | MDExOlB1bGxSZXF1ZXN0NjczODcyOTc0 | 7 | Add instagram-to-sqlite | gavindsouza 36654812 | open | 0 | 0 | 2021-06-19T12:26:16Z | 2021-07-28T07:58:59Z | FIRST_TIME_CONTRIBUTOR | dogsheep/dogsheep.github.io/pulls/7 | The tool covers only chat imports at the time of opening this PR but I'm planning to import everything else that I feel inquisitive about |

dogsheep.github.io 214746582 | pull | {

"url": "https://api.github.com/repos/dogsheep/dogsheep.github.io/issues/7/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 541274681 | MDU6SXNzdWU1NDEyNzQ2ODE= | 2 | Add linkedin-to-sqlite | mnp 881925 | open | 0 | 0 | 2019-12-21T03:13:40Z | 2019-12-21T03:13:40Z | NONE | There is an API available. https://developer.linkedin.com/docs/rest-api# At the minimum, I would think contact list and messages would be of interest. |

dogsheep.github.io 214746582 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep.github.io/issues/2/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 504720731 | MDU6SXNzdWU1MDQ3MjA3MzE= | 1 | Add more details on how to request data from google takeout correctly. | dazzag24 1055831 | open | 0 | 0 | 2019-10-09T15:17:34Z | 2019-10-09T15:17:34Z | NONE | The default is to download everything. This can result in an enormous amount of data when you only really need 2 types of data for now:

In addition unless you specify that "My Activity" is downloaded in JSON format the default is HTML. This then causes the

command to fail as it only contains html files not json files. Thanks |

google-takeout-to-sqlite 206649770 | issue | {

"url": "https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/1/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 697162939 | MDU6SXNzdWU2OTcxNjI5Mzk= | 20 | Add more tags so people can find your project. | ran88dom99 7902810 | open | 0 | 0 | 2020-09-09T21:14:09Z | 2020-09-09T21:14:09Z | NONE | quantified-self habit-tracking google-fit time-tracking wearables quantifiedself for example |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/20/reactions",

"total_count": 1,

"+1": 0,

"-1": 1,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1453134846 | I_kwDOCGYnMM5WnRP- | 513 | Add or document streamlined workflow for importing Datasette csv / json exports | henry501 19328961 | open | 0 | 0 | 2022-11-17T10:54:47Z | 2022-11-17T10:54:47Z | NONE | I'm working on some small front-end enhancements to the laion-aesthetic-datasette project, and I wanted to partially populate a database directly using exports from the existing Datasette instance instead of downloading the parquet files and creating my own multi-GB database. There have been a number of small issues that are certainly related to my relative lack of familiarity with the toolkit, but that are still surprising. For example: a CSV export of the images table (http://laion-aesthetic.datasette.io/laion-aesthetic-6pls.csv?sql=select+rowid%2C+url%2C+text%2C+domain_id%2C+width%2C+height%2C+similarity%2C+punsafe%2C+pwatermark%2C+aesthetic%2C+hash%2C+index_level_0+from+images+order+by+random%28%29+limit+100) has nested single quotes, double quotes, and commas that aren't handled by rows_from_file. Similarly, the json output has to be manually transformed to add the column names and remove extraneous information before sqlite_utils can import it. I was able to work through these issues, but as an enhancement it would be really helpful to create or document a clear workflow that avoids the friction of this data transformation. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/513/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 519979091 | MDExOlB1bGxSZXF1ZXN0MzM4NjQ3Mzc4 | 1 | Add parkrun-to-sqlite | mrw34 1101318 | closed | 0 | 0 | 2019-11-08T12:05:32Z | 2020-10-12T00:35:16Z | 2020-10-12T00:35:16Z | CONTRIBUTOR | dogsheep/dogsheep.github.io/pulls/1 | dogsheep.github.io 214746582 | pull | {

"url": "https://api.github.com/repos/dogsheep/dogsheep.github.io/issues/1/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 915488244 | MDU6SXNzdWU5MTU0ODgyNDQ= | 1372 | Add section to "writing plugins" about security, e.g. avoiding XSS | simonw 9599 | open | 0 | 0 | 2021-06-08T20:49:33Z | 2021-06-08T20:49:46Z | OWNER | https://docs.datasette.io/en/stable/writing_plugins.html should have tips on writing secure plugins. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1372/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1513237712 | PR_kwDODEm0Qs5GUoG_ | 67 | Add support for app-only bearer tokens | sometimes-i-send-pull-requests 26161409 | open | 0 | 0 | 2022-12-28T23:31:20Z | 2022-12-28T23:31:20Z | FIRST_TIME_CONTRIBUTOR | dogsheep/twitter-to-sqlite/pulls/67 | Previously, twitter-to-sqlite only supported OAuth1 authentication, and the token must be on behalf of a user. However, Twitter also supports application-only bearer tokens, documented here: https://developer.twitter.com/en/docs/authentication/oauth-2-0/bearer-tokens This PR adds support to twitter-to-sqlite for using application-only bearer tokens. To use, the auth.json file just needs to contain a "bearer_token" key instead of "api_key", "api_secret_key", etc. |

twitter-to-sqlite 206156866 | pull | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/67/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1013506559 | PR_kwDODFdgUs4skaNS | 68 | Add support for retrieving teams / members | philwills 68329 | open | 0 | 0 | 2021-10-01T15:55:02Z | 2021-10-01T15:59:53Z | FIRST_TIME_CONTRIBUTOR | dogsheep/github-to-sqlite/pulls/68 | Adds a method for retrieving all the teams within an organisation and all the members in those teams. The latter is stored as a join table |

github-to-sqlite 207052882 | pull | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/68/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 543717994 | MDExOlB1bGxSZXF1ZXN0MzU3OTc0MzI2 | 3 | Add todoist-to-sqlite | bcongdon 706257 | closed | 0 | 0 | 2019-12-30T04:02:59Z | 2020-10-12T00:35:58Z | 2020-10-12T00:35:57Z | CONTRIBUTOR | dogsheep/dogsheep.github.io/pulls/3 | Really enjoying getting into the dogsheep/datasette ecosystem. I made a downloader for Todoist, and I think/hope others might find this useful |

dogsheep.github.io 214746582 | pull | {

"url": "https://api.github.com/repos/dogsheep/dogsheep.github.io/issues/3/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 1822918995 | I_kwDOCGYnMM5sp4lT | 580 | Add way to export to a csv file using the Python library | kevinlinxc 44324811 | open | 0 | 0 | 2023-07-26T18:09:26Z | 2023-07-26T18:09:26Z | NONE | According to the documentation, we can make a csv output using the CLI tool, but not the Python library. Could we have the latter? |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/580/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

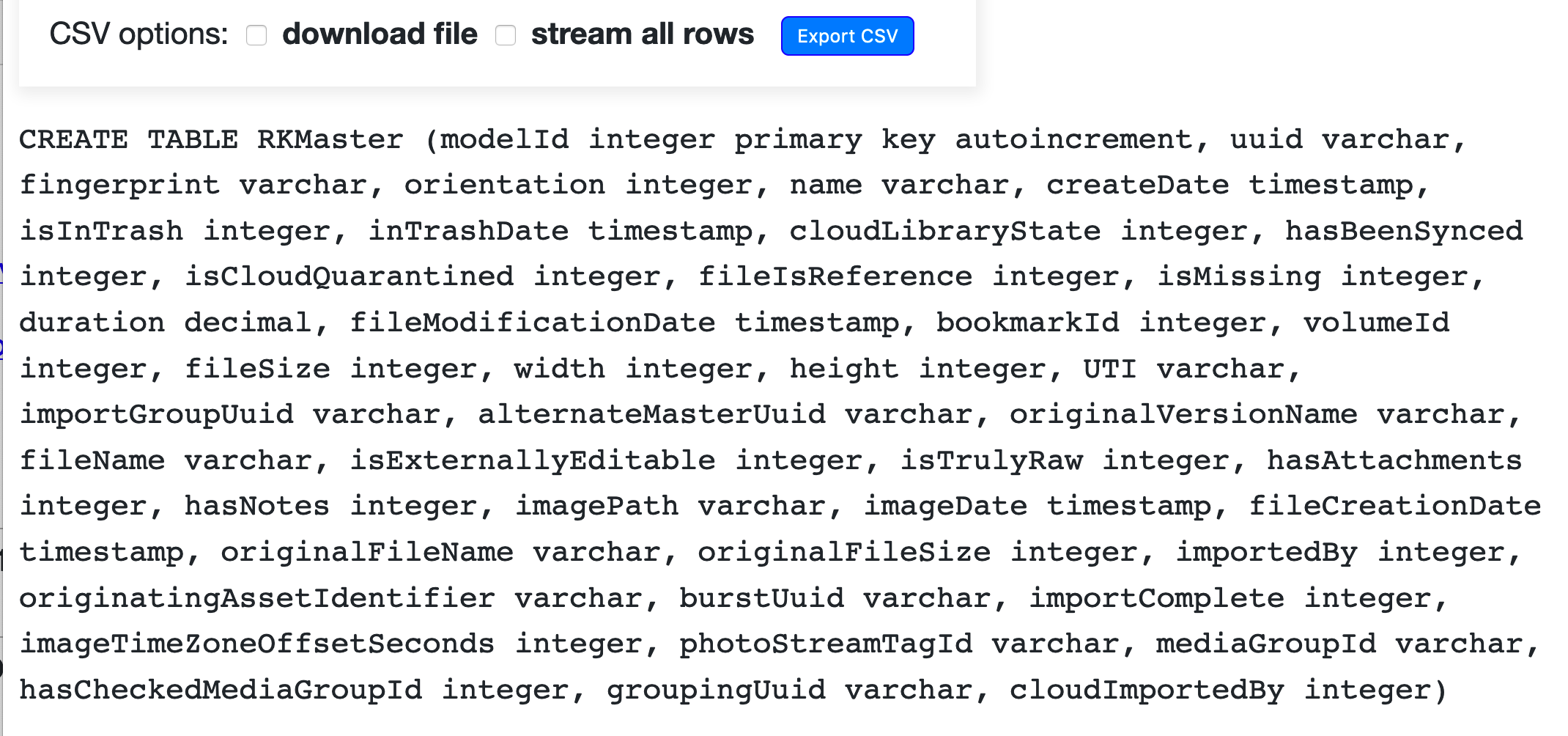

| 453829910 | MDU6SXNzdWU0NTM4Mjk5MTA= | 505 | Add white-space: pre-wrap to SQL create statement | simonw 9599 | closed | 0 | simonw 9599 | Datasette 0.29 4471010 | 0 | 2019-06-08T19:59:56Z | 2019-07-07T20:26:55Z | 2019-07-07T20:26:55Z | OWNER | Right now a super-long CREATE TABLE statement causes the table page to be even wider than the table itself:

Adding

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/505/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||

| 463531894 | MDExOlB1bGxSZXF1ZXN0MjkzOTkyMzgy | 535 | Added asgi_wrapper plugin hook, closes #520 | simonw 9599 | closed | 0 | 0 | 2019-07-03T03:58:00Z | 2019-07-03T04:06:26Z | 2019-07-03T04:06:26Z | OWNER | simonw/datasette/pulls/535 | datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/535/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1244082183 | PR_kwDODEm0Qs44PPLy | 66 | Ageinfo workaround | ashanan 11887 | open | 0 | 0 | 2022-05-21T21:08:29Z | 2022-05-21T21:09:16Z | FIRST_TIME_CONTRIBUTOR | dogsheep/twitter-to-sqlite/pulls/66 | I'm not sure if this is due to a new format or just because my ageinfo file is blank, but trying to import an archive would crash when it got to that file. This PR adds a guard clause in the Let me know if you want any changes! |

twitter-to-sqlite 206156866 | pull | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/66/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1251700382 | I_kwDOBm6k_c5Km26e | 1750 | Allow `label_column` to specify array of columns | knutwannheden 408765 | open | 0 | 0 | 2022-05-28T18:45:48Z | 2022-05-28T18:45:48Z | NONE | I think it would be great if the Datasette metadata would allow the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1750/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1504352503 | I_kwDOBm6k_c5Zqpj3 | 1968 | Allow to hide some queries in metadata.yml | CharlesNepote 562352 | open | 0 | 0 | 2022-12-20T10:45:41Z | 2022-12-20T10:45:41Z | NONE | By default all queries are displayed. But there are many cases where it would be interesting to hide the queries by default: * the website is targeting non-tech people * the query is veeeeeery long (eg.) * reading the query is not important for the users, they only want to see the result Of course, the user still could have the option to see the query. It could be an option in the metadata file:

The priority could be: * no option in the metadata and nothing in the URL: query displayed * hide_sql in the metadata and nothing in the URL: query displayed as asked in the metadata * hide_sql in the metadata and &_hide_sql= in the URL: query as asked in the URL See also: #1824 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1968/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 440237422 | MDExOlB1bGxSZXF1ZXN0Mjc1ODYxNTU5 | 449 | Apply black to everything | simonw 9599 | closed | 0 | 0 | 2019-05-03T21:57:26Z | 2019-05-04T02:17:14Z | 2019-05-04T02:15:15Z | OWNER | simonw/datasette/pulls/449 | I've been hesitating on this for literally months, because I'm not at all excited about the giant diff that will result. But I've been using black on many of my other projects (most actively sqlite-utils) and the productivity boost is undeniable: I don't have to spend a single second thinking about code formatting any more! So it's worth swallowing the one-off pain and moving on in a new, black-enabled world. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/449/reactions",

"total_count": 4,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 4,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 1513238455 | PR_kwDODEm0Qs5GUoPm | 71 | Archive: Fix "ni devices" typo in importer | sometimes-i-send-pull-requests 26161409 | open | 0 | 0 | 2022-12-28T23:33:31Z | 2022-12-28T23:33:31Z | FIRST_TIME_CONTRIBUTOR | dogsheep/twitter-to-sqlite/pulls/71 | twitter-to-sqlite 206156866 | pull | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/71/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||||

| 1513238314 | PR_kwDODEm0Qs5GUoN6 | 70 | Archive: Import Twitter Circle data | sometimes-i-send-pull-requests 26161409 | open | 0 | 0 | 2022-12-28T23:33:09Z | 2022-12-28T23:33:09Z | FIRST_TIME_CONTRIBUTOR | dogsheep/twitter-to-sqlite/pulls/70 | twitter-to-sqlite 206156866 | pull | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/70/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||||

| 1513237982 | PR_kwDODEm0Qs5GUoKL | 68 | Archive: Import mute table | sometimes-i-send-pull-requests 26161409 | open | 0 | 0 | 2022-12-28T23:32:06Z | 2022-12-28T23:32:06Z | FIRST_TIME_CONTRIBUTOR | dogsheep/twitter-to-sqlite/pulls/68 | twitter-to-sqlite 206156866 | pull | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/68/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||||

| 1513238152 | PR_kwDODEm0Qs5GUoMM | 69 | Archive: Import new tweets table name | sometimes-i-send-pull-requests 26161409 | open | 0 | 0 | 2022-12-28T23:32:44Z | 2022-12-28T23:32:44Z | FIRST_TIME_CONTRIBUTOR | dogsheep/twitter-to-sqlite/pulls/69 | Given the code here, it seems like in the past this file was named "tweet.js". In recent exports, it's named "tweets.js". The archive importer needs to be modified to take this into account. Existing logic is reused for importing this table. (However, the resulting table name will be different, matching the different file name -- archive_tweets, rather than archive_tweet). |

twitter-to-sqlite 206156866 | pull | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/69/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 627836898 | MDExOlB1bGxSZXF1ZXN0NDI1NTMxMjA1 | 783 | Authentication: plugin hooks plus default --root auth mechanism | simonw 9599 | closed | 0 | 0 | 2020-05-30T22:25:47Z | 2020-06-01T01:16:44Z | 2020-06-01T01:16:43Z | OWNER | simonw/datasette/pulls/783 | See #699 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/783/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 1586980089 | PR_kwDOBm6k_c5KF-by | 2026 | Avoid repeating primary key columns if included in _col args | runderwood 8513 | open | 0 | 0 | 2023-02-16T04:16:25Z | 2023-02-16T04:16:41Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2026 | ...while maintaining given order. Fixes #1975 (if I'm understanding correctly). :books: Documentation preview :books:: https://datasette--2026.org.readthedocs.build/en/2026/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2026/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1452360613 | I_kwDOBm6k_c5WkUOl | 1895 | Avoid using host name when building absolute URLs? | hubgit 14294 | open | 0 | 0 | 2022-11-16T22:21:27Z | 2022-11-16T22:21:27Z | NONE | When deploying Datasette to Cloud Run and rewriting certain routes from a Firebase app to the Cloud Run service, some of the URLs in the page start with I guess this is because a) the custom domain of the Firebase app isn't being passed through in the Would it be possible to not use the host name when building the absolute URLs, i.e. only include the path in the URL? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1895/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 625922239 | MDExOlB1bGxSZXF1ZXN0NDI0MDMyNDQ1 | 769 | Backport of Python 3.8 shutil.copytree | simonw 9599 | closed | 0 | Datasette 0.43 5471110 | 0 | 2020-05-27T18:17:15Z | 2020-05-27T20:21:56Z | 2020-05-27T18:17:44Z | OWNER | simonw/datasette/pulls/769 | Closes #744 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/769/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 1196327155 | I_kwDOBm6k_c5HToDz | 1702 | Be more consistent with column quoting | simonw 9599 | open | 0 | 0 | 2022-04-07T16:59:20Z | 2022-04-07T16:59:20Z | OWNER | This tutorial made me notice that Datasette is pretty inconsistent with how column quoting works: https://datasette.io/tutorials/learn-sql It has examples of each of Datasette should generate SQL as consistently as possible to support learners. That tutorial should also provide a tiny bit of extra information about what's going on here. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1702/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1107557831 | I_kwDOCGYnMM5CA_3H | 386 | Better "contributing" documentation | simonw 9599 | closed | 0 | 0 | 2022-01-19T02:11:48Z | 2022-01-19T02:15:21Z | 2022-01-19T02:15:21Z | OWNER | This page jumps straight into running the tests: https://sqlite-utils.datasette.io/en/latest/contributing.html It should add a little more about expected collaboration styles - opening an issue before filing a pull request - and probably link to https://simonwillison.net/2022/Jan/12/how-i-build-a-feature/ |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/386/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 267516329 | MDU6SXNzdWUyNjc1MTYzMjk= | 6 | Better JSON response options | simonw 9599 | closed | 0 | Ship first public release 2857392 | 0 | 2017-10-23T01:18:47Z | 2017-10-24T15:07:58Z | 2017-10-24T15:07:58Z | OWNER | Default returns this: .jsono instead returns a list of objects each duplicating the headers in its keys. They both probably share the same pagination mechanism so it might not be a jsono flat list. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 1182143895 | I_kwDOBm6k_c5GdhWX | 1691 | Bug in pytest-httpx example | simonw 9599 | closed | 0 | 0 | 2022-03-26T22:45:30Z | 2022-03-26T22:46:09Z | 2022-03-26T22:46:09Z | OWNER |

https://github.com/Colin-b/pytest_httpx/blob/v0.20.0/README.md#reply-with-custom-body |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1691/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 703962917 | MDU6SXNzdWU3MDM5NjI5MTc= | 22 | Bug: UI says sorted by relevance in timeline view | simonw 9599 | closed | 0 | 0 | 2020-09-17T23:02:07Z | 2020-09-17T23:13:14Z | 2020-09-17T23:13:14Z | MEMBER | In regular timeline view sort defaults to newest, not relevance - so this UI is incorrect:

|

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/22/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 591613579 | MDU6SXNzdWU1OTE2MTM1Nzk= | 41 | Bug: recorded a since_id for None, None | simonw 9599 | closed | 0 | 0 | 2020-04-01T04:29:43Z | 2020-04-01T04:31:11Z | 2020-04-01T04:31:11Z | MEMBER | This shouldn't happen in the

|

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/41/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 340733753 | MDExOlB1bGxSZXF1ZXN0MjAxMDc1NTMy | 341 | Bump aiohttp to fix compatibility with Python 3.7 | simonw 9599 | closed | 0 | 0 | 2018-07-12T17:41:24Z | 2018-07-12T18:07:38Z | 2018-07-12T18:07:38Z | OWNER | simonw/datasette/pulls/341 | Tests failed here: https://travis-ci.org/simonw/datasette/jobs/403223333 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/341/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 1491840863 | PR_kwDOBm6k_c5FMKSG | 1944 | Bump black from 22.10.0 to 22.12.0 | dependabot[bot] 49699333 | closed | 0 | 0 | 2022-12-12T13:05:11Z | 2022-12-13T05:23:31Z | 2022-12-13T05:23:30Z | CONTRIBUTOR | simonw/datasette/pulls/1944 | Bumps black from 22.10.0 to 22.12.0. Release notes: sourced from black's releases.

Changelog: sourced from black's changelog.

Commits

Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting Dependabot commands and options. You can trigger Dependabot actions by commenting on this PR: - `@dependabot rebase` will rebase this PR - `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it - `@dependabot merge` will merge this PR after your CI passes on it - `@dependabot squash and merge` will squash and merge this PR after your CI passes on it - `@dependabot cancel merge` will cancel a previously requested merge and block automerging - `@dependabot reopen` will reopen this PR if it is closed - `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually - `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself) - `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself) - `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself) |

datasette 107914493 | pull | {"url": "https://api.github.com/repos/simonw/datasette/issues/1944/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |

0 | |||||

| 1645098678 | PR_kwDOBm6k_c5NIQri | 2047 | Bump black from 22.12.0 to 23.3.0 | dependabot[bot] 49699333 | closed | 0 | 0 | 2023-03-29T06:09:06Z | 2023-03-29T06:12:21Z | 2023-03-29T06:12:05Z | CONTRIBUTOR | simonw/datasette/pulls/2047 | Bumps black from 22.12.0 to 23.3.0. Release notes. Sourced from black's releases.

... (truncated) Changelog. Sourced from black's changelog.

... (truncated) Commits

Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting `@dependabot rebase`. Dependabot commands and options. You can trigger Dependabot actions by commenting on this PR: - `@dependabot rebase` will rebase this PR - `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it - `@dependabot merge` will merge this PR after your CI passes on it - `@dependabot squash and merge` will squash and merge this PR after your CI passes on it - `@dependabot cancel merge` will cancel a previously requested merge and block automerging - `@dependabot reopen` will reopen this PR if it is closed - `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually - `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself) - `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself) - `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself) :books: Documentation preview :books:: https://datasette--2047.org.readthedocs.build/en/2047/ |

datasette 107914493 | pull | {"url": "https://api.github.com/repos/simonw/datasette/issues/2047/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |

0 |


CREATE TABLE [issues] (
   [id] INTEGER PRIMARY KEY,
   [node_id] TEXT,
   [number] INTEGER,
   [title] TEXT,
   [user] INTEGER REFERENCES [users]([id]),
   [state] TEXT,
   [locked] INTEGER,
   [assignee] INTEGER REFERENCES [users]([id]),
   [milestone] INTEGER REFERENCES [milestones]([id]),
   [comments] INTEGER,
   [created_at] TEXT,
   [updated_at] TEXT,
   [closed_at] TEXT,
   [author_association] TEXT,
   [pull_request] TEXT,
   [body] TEXT,
   [repo] INTEGER REFERENCES [repos]([id]),
   [type] TEXT
, [active_lock_reason] TEXT, [performed_via_github_app] TEXT, [reactions] TEXT, [draft] INTEGER, [state_reason] TEXT);

CREATE INDEX [idx_issues_repo]
    ON [issues] ([repo]);
CREATE INDEX [idx_issues_milestone]
    ON [issues] ([milestone]);
CREATE INDEX [idx_issues_assignee]
    ON [issues] ([assignee]);
CREATE INDEX [idx_issues_user]
    ON [issues] ([user]);
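The filter this page applies (rows where comments = 0, sorted by title) can be reproduced against the schema above with Python's standard `sqlite3` module. This is a minimal sketch, assuming a local in-memory copy of the table: `build_db`, `reactions_json`, `comment_free_issues`, `total_reactions` and `query_plan` are hypothetical helper names, and only the three rows shown above are loaded (with just a subset of their columns). The referenced `users`, `milestones` and `repos` tables are not created, which SQLite tolerates because foreign keys are not enforced unless `PRAGMA foreign_keys=ON`.

```python
import json
import sqlite3

# Schema as shown above; REFERENCES targets are deliberately not created.
SCHEMA = """
CREATE TABLE [issues] (
   [id] INTEGER PRIMARY KEY, [node_id] TEXT, [number] INTEGER, [title] TEXT,
   [user] INTEGER REFERENCES [users]([id]), [state] TEXT, [locked] INTEGER,
   [assignee] INTEGER REFERENCES [users]([id]),
   [milestone] INTEGER REFERENCES [milestones]([id]),
   [comments] INTEGER, [created_at] TEXT, [updated_at] TEXT, [closed_at] TEXT,
   [author_association] TEXT, [pull_request] TEXT, [body] TEXT,
   [repo] INTEGER REFERENCES [repos]([id]), [type] TEXT
, [active_lock_reason] TEXT, [performed_via_github_app] TEXT, [reactions] TEXT, [draft] INTEGER, [state_reason] TEXT);
CREATE INDEX [idx_issues_repo] ON [issues] ([repo]);
CREATE INDEX [idx_issues_milestone] ON [issues] ([milestone]);
CREATE INDEX [idx_issues_assignee] ON [issues] ([assignee]);
CREATE INDEX [idx_issues_user] ON [issues] ([user]);
"""

# The three rows shown above, inserted out of title order on purpose:
# (id, number, title, user, created_at).
ROWS = [
    (1645098678, 2047, "Bump black from 22.12.0 to 23.3.0", 49699333, "2023-03-29T06:09:06Z"),
    (340733753, 341, "Bump aiohttp to fix compatibility with Python 3.7", 9599, "2018-07-12T17:41:24Z"),
    (1491840863, 1944, "Bump black from 22.10.0 to 22.12.0", 49699333, "2022-12-12T13:05:11Z"),
]

REACTION_KEYS = ["total_count", "+1", "-1", "laugh", "hooray", "confused", "heart", "rocket", "eyes"]


def reactions_json(number):
    # Every reaction counter is 0 in the rows above.
    payload = {"url": f"https://api.github.com/repos/simonw/datasette/issues/{number}/reactions"}
    payload.update({key: 0 for key in REACTION_KEYS})
    return json.dumps(payload)


def build_db():
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO issues (id, number, title, user, state, locked, comments,"
        " created_at, repo, type, reactions)"
        " VALUES (?, ?, ?, ?, 'closed', 0, 0, ?, 107914493, 'pull', ?)",
        [(i, n, t, u, c, reactions_json(n)) for i, n, t, u, c in ROWS],
    )
    return conn


def comment_free_issues(conn):
    # Same filter and ordering as this page: comments = 0, sorted by title.
    return conn.execute(
        "SELECT number, title FROM issues WHERE comments = 0 ORDER BY title"
    ).fetchall()


def total_reactions(conn):
    # reactions is stored as TEXT, so decode the JSON in Python.
    return sum(
        json.loads(text)["total_count"]
        for (text,) in conn.execute("SELECT reactions FROM issues")
    )


def query_plan(conn, sql, params=()):
    # The last column of each EXPLAIN QUERY PLAN row is the readable detail.
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


conn = build_db()
for number, title in comment_free_issues(conn):
    print(number, title)
print(query_plan(conn, "SELECT number FROM issues WHERE repo = ?", (107914493,)))
```

Run as a script, it prints the three pull requests in title order (341, 1944, 2047), then a query plan for a `repo` lookup that should name `idx_issues_repo`. Decoding `reactions` in Python rather than with SQL JSON functions avoids depending on how the local SQLite build was compiled.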
