issues

374 rows where comments = 2 and type = "issue" sorted by title


| id | node_id | number | title ▼ | user | state | locked | assignee | milestone | comments | created_at | updated_at | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 628121234 | MDU6SXNzdWU2MjgxMjEyMzQ= | 788 | /-/permissions debugging tool | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-01T03:13:47Z | 2020-06-06T00:43:40Z | 2020-06-01T05:01:01Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/699#issuecomment-636576603 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/788/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 1524867951 | I_kwDOBm6k_c5a46Nv | 1980 | "Cannot sort table by id" when sortable_columns is used | simonw 9599 | open | 0 | 2 | 2023-01-09T03:21:33Z | 2023-01-09T03:23:53Z | OWNER | I had an instance with this in

It sent me to

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1980/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1223527226 | I_kwDOBm6k_c5I7Ys6 | 1738 | "Cannot use _sort and _sort_desc at the same time" | simonw 9599 | closed | 0 | Datasette 0.62 8303187 | 2 | 2022-05-03T01:06:24Z | 2022-08-14T16:13:55Z | 2022-08-14T16:13:55Z | OWNER | Triggered this error while playing with the sort desc checkbox and the apply button that are only visible on this page at mobile screen width: https://latest.datasette.io/fixtures/compound_three_primary_keys?_sort_desc=pk1 Navigate to that page (with the browser narrow enough to show the box), un-check the box and click Apply:

Also notable: I managed to get to a page with |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1738/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 520508502 | MDU6SXNzdWU1MjA1MDg1MDI= | 31 | "friends" command (similar to "followers") | simonw 9599 | closed | 0 | 2 | 2019-11-09T20:20:20Z | 2022-09-20T05:05:03Z | 2020-02-07T07:03:28Z | MEMBER | Current list of commands:

|

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/31/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 668308777 | MDU6SXNzdWU2NjgzMDg3Nzc= | 129 | "insert-files --sqlar" for creating SQLite archives | simonw 9599 | closed | 0 | 2 | 2020-07-30T02:28:29Z | 2020-07-30T22:41:01Z | 2020-07-30T22:40:55Z | OWNER | A https://www.sqlite.org/sqlar.html and https://sqlite.org/sqlar/doc/trunk/README.md |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/129/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

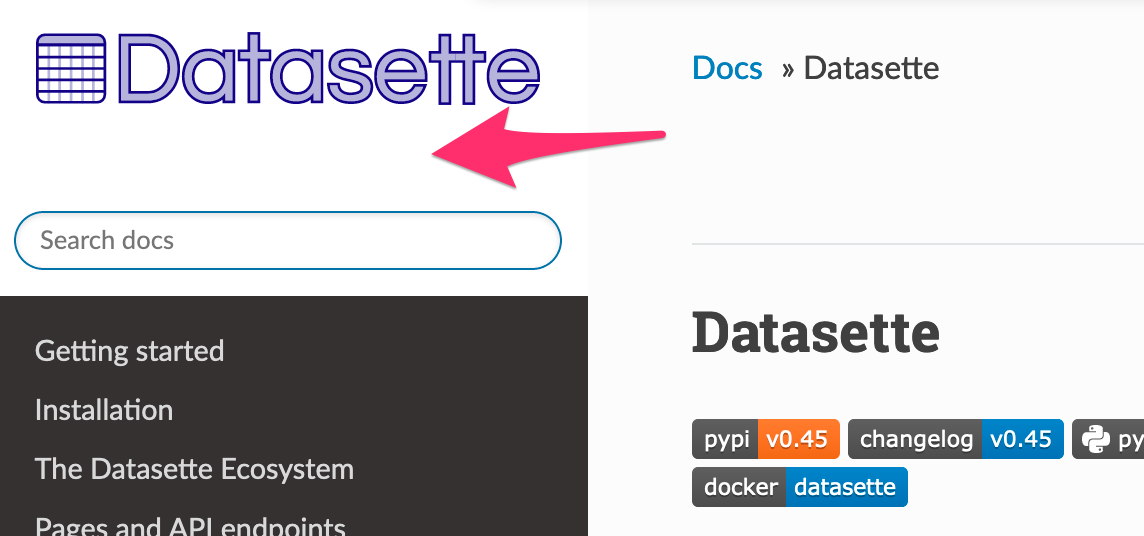

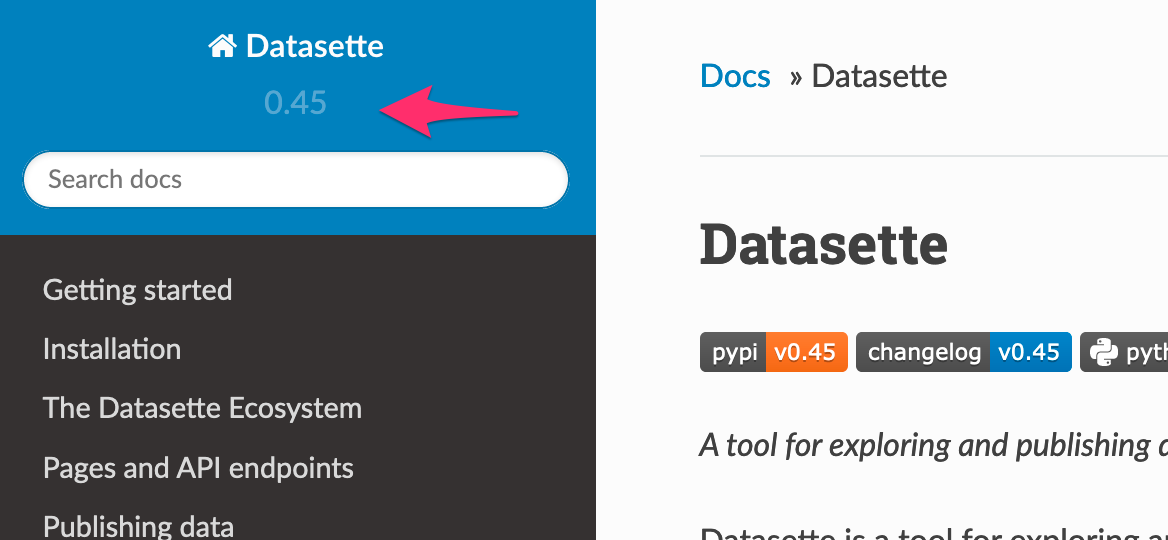

| 655465863 | MDU6SXNzdWU2NTU0NjU4NjM= | 892 | "latest" in new documentation navbar is invisible | simonw 9599 | closed | 0 | 2 | 2020-07-12T19:57:21Z | 2020-07-12T20:02:35Z | 2020-07-12T20:02:17Z | OWNER | On https://datasette.readthedocs.io/en/latest/

Compare with https://datasette.readthedocs.io/en/0.45/

Some custom CSS should fix it. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/892/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 706486323 | MDU6SXNzdWU3MDY0ODYzMjM= | 973 | 'bool' object is not callable error | simonw 9599 | closed | 0 | Datasette 0.50 5971510 | 2 | 2020-09-22T15:30:54Z | 2020-10-08T23:54:32Z | 2020-09-22T15:40:35Z | OWNER | I'm getting this when latest is deployed to Cloud Run:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/973/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 788527932 | MDU6SXNzdWU3ODg1Mjc5MzI= | 223 | --delimiter option for CSV import | simonw 9599 | closed | 0 | 2 | 2021-01-18T20:25:03Z | 2021-02-06T01:39:47Z | 2021-02-06T01:34:54Z | OWNER |

Would be useful to be able to do this:

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/223/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 318737808 | MDU6SXNzdWUzMTg3Mzc4MDg= | 243 | --spatialite option for datasette publish commands | simonw 9599 | closed | 0 | 2 | 2018-04-29T18:19:32Z | 2018-05-31T14:17:53Z | 2018-05-31T14:17:53Z | OWNER | Performs the necessary incantations to install Spatialite on Zeit Now or Heroku and sets the corresponding environment variable to ensure the module is correctly loaded by datasette serve. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/243/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 413871266 | MDU6SXNzdWU0MTM4NzEyNjY= | 18 | .insert/.upsert/.insert_all/.upsert_all should add missing columns | simonw 9599 | closed | 0 | 1.0 4348046 | 2 | 2019-02-24T21:36:11Z | 2019-05-25T00:42:11Z | 2019-05-25T00:42:11Z | OWNER | This is a larger change, but it would be incredibly useful: if you attempt to insert or update a document with a field that does not currently exist in the underlying table, sqlite-utils should add the appropriate column for you. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/18/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 403617881 | MDU6SXNzdWU0MDM2MTc4ODE= | 405 | .json?_nl=on option for exporting newline-delimited JSON | simonw 9599 | closed | 0 | 2 | 2019-01-28T01:10:45Z | 2019-01-28T01:49:00Z | 2019-01-28T01:48:37Z | OWNER | The neat thing about newline-delimited JSON is that you don't have to read an entire array (of potentially thousands of objects) into memory in order to parse it - you can parse things a line at a time instead. It will look like this:

I added this as part of the It can be offered alongside |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/405/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 598640234 | MDU6SXNzdWU1OTg2NDAyMzQ= | 99 | .upsert_all() should maybe error if dictionaries passed to it do not have the same keys | simonw 9599 | closed | 0 | 2 | 2020-04-13T03:02:25Z | 2020-04-13T03:05:20Z | 2020-04-13T03:05:04Z | OWNER | While investigating #98 I stumbled across this:

```
def test_upsert_compound_primary_key(fresh_db):
    table = fresh_db["table"]
    table.upsert_all(
        [
            {"species": "dog", "id": 1, "name": "Cleo", "age": 4},
            {"species": "cat", "id": 1, "name": "Catbag"},
        ],
        pk=("species", "id"),
    )
    table.upsert_all(
        [
            {"species": "dog", "id": 1, "age": 5},
            {"species": "dog", "id": 2, "name": "New Dog", "age": 1},
        ],
        pk=("species", "id"),
    )
```

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/99/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1122416919 | I_kwDOBm6k_c5C5rkX | 1623 | /-/patterns returns link: alternate JSON header to 404 | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 2 | 2022-02-02T21:42:49Z | 2022-03-19T04:04:49Z | 2022-02-02T21:48:56Z | OWNER | Bug from: - #1620

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1623/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 319954545 | MDU6SXNzdWUzMTk5NTQ1NDU= | 248 | /-/plugins should show version of each installed plugin | simonw 9599 | closed | 0 | 2 | 2018-05-03T14:50:45Z | 2018-05-04T18:25:40Z | 2018-05-04T18:05:04Z | OWNER | Refs #244 https://stackoverflow.com/questions/20180543/how-to-check-version-of-python-modules ```

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/248/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 398089089 | MDU6SXNzdWUzOTgwODkwODk= | 399 | /-/versions for official Docker image returns wrong Datasette version | simonw 9599 | closed | 0 | 2 | 2019-01-11T01:19:58Z | 2019-01-13T23:31:59Z | 2019-01-13T23:10:45Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/399/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 663317875 | MDU6SXNzdWU2NjMzMTc4NzU= | 905 | /database.db download should include content-length header | simonw 9599 | closed | 0 | 2 | 2020-07-21T21:23:48Z | 2020-07-22T04:59:46Z | 2020-07-22T04:52:45Z | OWNER | I can do this by modifying this function: https://github.com/simonw/datasette/blob/02dc6298bdbfb1d63e0d2a39ff597b5fcc60e06b/datasette/utils/asgi.py#L248-L270 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/905/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1483320357 | I_kwDOBm6k_c5Yaawl | 1937 | /db/-/create API should require insert-rows permission to use row: or rows: option | simonw 9599 | closed | 0 | Datasette 1.0a2 8711695 | 2 | 2022-12-08T01:33:09Z | 2022-12-14T20:21:26Z | 2022-12-14T20:21:26Z | OWNER | Otherwise someone with |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1937/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 1501713288 | I_kwDOBm6k_c5ZglOI | 1963 | 0.63.3 bugfix release | simonw 9599 | closed | 0 | 2 | 2022-12-18T02:48:15Z | 2022-12-18T03:26:55Z | 2022-12-18T03:26:55Z | OWNER | I'm going to ship a release which back-ports these two fixes: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1963/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 908446997 | MDU6SXNzdWU5MDg0NDY5OTc= | 1353 | ?_nocount=1 for opting out of table counts | simonw 9599 | closed | 0 | 2 | 2021-06-01T15:53:27Z | 2021-06-01T16:18:54Z | 2021-06-01T16:17:04Z | OWNER | Running a trace against a CSV streaming export with the new This is inefficient - a new |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1353/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 906977719 | MDU6SXNzdWU5MDY5Nzc3MTk= | 1350 | ?_nofacets=1 query string argument for disabling facets and suggested facets | simonw 9599 | closed | 0 | 2 | 2021-05-31T02:22:29Z | 2021-06-01T16:19:38Z | 2021-05-31T02:39:18Z | OWNER | This is needed as an internal option for #1349. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1350/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 792890765 | MDU6SXNzdWU3OTI4OTA3NjU= | 1200 | ?_size=10 option for the arbitrary query page would be useful | simonw 9599 | open | 0 | 2 | 2021-01-24T20:55:35Z | 2021-02-11T03:13:59Z | OWNER | https://latest.datasette.io/fixtures?sql=select+*+from+compound_three_primary_keys&_size=10 - Would also be good if it persisted in a hidden form field. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1200/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 705995722 | MDU6SXNzdWU3MDU5OTU3MjI= | 162 | A decorator for registering custom SQL functions | simonw 9599 | closed | 0 | 2 | 2020-09-22T00:18:32Z | 2020-09-22T00:40:44Z | 2020-09-22T00:32:17Z | OWNER | Syntactic sugar for

```python
import random

import sqlite_utils

db = sqlite_utils.Database("mydb.db")

@db.register_function
def scramble(text):
    # Return the characters of text in a random order
    chars = list(text)
    random.shuffle(chars)
    return "".join(chars)
```

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/162/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 794554881 | MDU6SXNzdWU3OTQ1NTQ4ODE= | 1208 | A lot of open(file) functions are used without a context manager thus producing ResourceWarning: unclosed file <_io.TextIOWrapper | kbaikov 4488943 | closed | 0 | 2 | 2021-01-26T20:56:28Z | 2021-03-11T16:15:49Z | 2021-03-11T16:15:49Z | CONTRIBUTOR | Your code is full of open files that are never closed, especially when you deal with reading/writing json/yaml files. If you run python with warnings enabled this problem becomes evident. This probably contributes to some memory leaks in long running datasettes if the GC will not 'collect' those resources properly. This is easily fixed by using a context manager instead of just using open:

In some newer parts of the code you use Path objects' 'read_text' and 'write_text' functions, which close the file properly and are preferred in some cases. If you want, I can create a PR for all the places where I found this pattern. Below is a fraction of the places where I found a ResourceWarning:

```python
update-docs-help.py:
20  actual = actual.replace("Usage: cli ", "Usage: datasette ")
21: open(docs_path / filename, "w").write(actual)
22

datasette\app.py:
210  ):
211: inspect_data = json.load((config_dir / "inspect-data.json").open())
212  if immutables is None:
266  if config_dir and (config_dir / "settings.json").exists() and not config:
267: config = json.load((config_dir / "settings.json").open())
268  self._settings = dict(DEFAULT_SETTINGS, **(config or {}))
445  self._app_css_hash = hashlib.sha1(
446: open(os.path.join(str(app_root), "datasette/static/app.css"))
447  .read()

datasette\cli.py:
130  else:
131: out = open(inspect_file, "w")
132  loop = asyncio.get_event_loop()
459  if inspect_file:
460: inspect_data = json.load(open(inspect_file))
461
```

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1208/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 442330564 | MDU6SXNzdWU0NDIzMzA1NjQ= | 457 | Ability to "publish cloudrun" with no user input | simonw 9599 | closed | 0 | 2 | 2019-05-09T16:42:51Z | 2019-05-09T19:41:31Z | 2019-05-09T16:45:08Z | OWNER | If you attempt to deploy a new version of a cloudrun deployment, the script currently pauses and asks for user input for the service name like this:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/457/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 448189298 | MDU6SXNzdWU0NDgxODkyOTg= | 486 | Ability to add extra routes and related templates | clausjuhl 2181410 | closed | 0 | 2 | 2019-05-24T14:04:25Z | 2019-05-24T14:43:28Z | 2019-05-24T14:43:09Z | NONE | Hi Simon. Thanks for an excellent job! Datasette is such an obviously good idea (once you have that idea!) and so well done. The only thing that I miss is the ability to add extra routes (with associated jinja2 templates). For most of the datasets that I would like to publish, I would also like at least a page that describes the data (semantics, provenance, biases...) and a page explaining our cookie and privacy policies (which would allow us to use something like Google Analytics). |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/486/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 665819048 | MDU6SXNzdWU2NjU4MTkwNDg= | 126 | Ability to insert binary data on the CLI using JSON | simonw 9599 | closed | 0 | 2 | 2020-07-26T16:54:14Z | 2020-07-27T04:00:33Z | 2020-07-27T03:59:45Z | OWNER |

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/126/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 557825032 | MDU6SXNzdWU1NTc4MjUwMzI= | 77 | Ability to insert data that is transformed by a SQL function | simonw 9599 | closed | 0 | 2 | 2020-01-30T23:45:55Z | 2022-02-05T00:04:25Z | 2020-01-31T00:24:32Z | OWNER | I want to be able to run the equivalent of this SQL insert:

```python
# Convert to "Well Known Text" format
wkt = shape(geojson['geometry']).wkt
# Insert and commit the record
conn.execute("INSERT INTO places (id, name, geom) VALUES(null, ?, GeomFromText(?, 4326))", (
    "Wales", wkt
))
conn.commit()
```

From the Datasette SpatiaLite docs: https://datasette.readthedocs.io/en/stable/spatialite.html To do this, I need a way of telling |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/77/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 842862708 | MDU6SXNzdWU4NDI4NjI3MDg= | 1280 | Ability to run CI against multiple SQLite versions | simonw 9599 | open | 0 | 2 | 2021-03-28T23:54:50Z | 2021-05-10T19:07:46Z | OWNER | Issue #1276 happened because I didn't run tests against a SQLite version prior to 3.16.0 (released 2017-01-02). Glitch is a deployment target and runs SQLite 3.11.0 from 2016-02-15. If CI ran against that version of SQLite this bug could have been avoided. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1280/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 268453968 | MDU6SXNzdWUyNjg0NTM5Njg= | 37 | Ability to serialize massive JSON without blocking event loop | simonw 9599 | closed | 0 | 2 | 2017-10-25T15:58:03Z | 2020-05-30T17:29:20Z | 2020-05-30T17:29:20Z | OWNER | We run the risk of someone attempting a select statement that returns thousands of rows and hence takes several seconds just to JSON encode the response, effectively blocking the event loop and pausing all other traffic. The Twisted community have a solution for this, can we adapt that in some way? http://as.ynchrono.us/2010/06/asynchronous-json_18.html?m=1 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/37/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 791237799 | MDU6SXNzdWU3OTEyMzc3OTk= | 1196 | Access Denied Error in Windows | QAInsights 2826376 | open | 0 | 2 | 2021-01-21T15:40:40Z | 2021-04-14T19:28:38Z | NONE | I am trying to publish a db to vercel. But while issuing the below command throwing I am using PyCharm and Python 3.9. I have reinstalled both and launched PyCharm as Admin in Windows 10. But still the issue persists. Issued command PS: localhost is working fine. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1196/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 493671014 | MDU6SXNzdWU0OTM2NzEwMTQ= | 5 | Add "incomplete" boolean to users table for incomplete profiles | simonw 9599 | closed | 0 | 2 | 2019-09-14T22:01:50Z | 2020-03-23T19:23:31Z | 2020-03-23T19:23:30Z | MEMBER | User profiles that are fetched from e.g. stargazers (#4) are incomplete - they have a login but they don't have name, company etc. Add a |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/5/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 274578142 | MDU6SXNzdWUyNzQ1NzgxNDI= | 110 | Add --load-extension option to datasette for loading extra SQLite extensions | simonw 9599 | closed | 0 | 2 | 2017-11-16T16:26:19Z | 2017-11-16T18:38:30Z | 2017-11-16T16:58:50Z | OWNER | This would allow users with extra SQLite extensions installed (like spatialite) to load them at runtime. Inspired by this comment: https://github.com/simonw/datasette/issues/46#issuecomment-344810525 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/110/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 991467558 | MDU6SXNzdWU5OTE0Njc1NTg= | 1466 | Add Datasette Desktop to installation documentation | simonw 9599 | closed | 0 | Datasette 0.60 7571612 | 2 | 2021-09-08T19:41:27Z | 2022-01-13T22:28:28Z | 2022-01-13T21:55:18Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1466/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1097040427 | I_kwDOBm6k_c5BY4Ir | 1587 | Add `sqlite_stat1`(-4) tables to hidden table list | simonw 9599 | closed | 0 | 2 | 2022-01-08T21:28:20Z | 2022-01-20T04:12:59Z | 2022-01-20T04:12:59Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1587/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1447388809 | I_kwDOBm6k_c5WRWaJ | 1887 | Add a confirm step to the drop table API | simonw 9599 | closed | 0 | Datasette 1.0a0 8658075 | 2 | 2022-11-14T04:59:53Z | 2022-11-15T19:59:59Z | 2022-11-14T05:18:51Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1871#issuecomment-1313097057 Added drop table API in: - #1874 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1887/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 961008507 | MDU6SXNzdWU5NjEwMDg1MDc= | 308 | Add an interactive tutorial as a Jupyter notebook | simonw 9599 | open | 0 | 2 | 2021-08-04T20:34:22Z | 2021-08-04T21:30:59Z | OWNER | Can show people how to open this up in Binder. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/308/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 329147284 | MDU6SXNzdWUzMjkxNDcyODQ= | 305 | Add contributor guidelines to docs | simonw 9599 | closed | 0 | 2 | 2018-06-04T17:25:30Z | 2019-06-24T06:40:19Z | 2019-06-24T06:40:19Z | OWNER | https://channels.readthedocs.io/en/latest/contributing.html is a nice example of this done well. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/305/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1243517592 | I_kwDOBm6k_c5KHpKY | 1748 | Add copy buttons next to code examples in the documentation | simonw 9599 | closed | 0 | 2 | 2022-05-20T19:09:00Z | 2022-05-20T19:15:00Z | 2022-05-20T19:11:32Z | OWNER | Similar to the ones in |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1748/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1128120451 | I_kwDOCGYnMM5DPcCD | 404 | Add example of `--convert` to the help for `sqlite-utils insert` | simonw 9599 | closed | 0 | 2 | 2022-02-09T06:49:09Z | 2022-02-09T06:56:35Z | 2022-02-09T06:55:16Z | OWNER | https://sqlite-utils.datasette.io/en/3.23/cli-reference.html#insert would be more useful if it included an example of I can maybe use an example from https://simonwillison.net/2022/Jan/11/sqlite-utils/ |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/404/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 975166271 | MDU6SXNzdWU5NzUxNjYyNzE= | 20 | Add index on workout_points.date | simonw 9599 | open | 0 | 2 | 2021-08-20T01:08:04Z | 2021-08-20T01:12:48Z | MEMBER | Sorting that by date makes sense for seeing most recent points, and my DB has 2.5m points in so it's an expensive sort! |

healthkit-to-sqlite 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/20/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 642127307 | MDU6SXNzdWU2NDIxMjczMDc= | 855 | Add instructions for using cookiecutter plugin template to plugin docs | simonw 9599 | closed | 0 | Datasette 0.45 5533512 | 2 | 2020-06-19T17:33:25Z | 2020-06-22T02:51:38Z | 2020-06-22T02:51:38Z | OWNER | Once I ship the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/855/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 316621102 | MDU6SXNzdWUzMTY2MjExMDI= | 235 | Add limit on the size in KB of data returned from a single query | simonw 9599 | open | 0 | 2 | 2018-04-22T23:01:15Z | 2018-04-24T00:30:02Z | OWNER | Datasette limits the number of rows returned to 1,000 and limits the time spent executing a SQL query to 1000ms - and both of these limits can be customized. It does not have a limit on the size of the response returned. It's possible to compose maliciously large SQL responses in a small number of rows using mechanisms like the I think the easiest place to implement that is here: Currently we use The bigger challenge here is understanding how well this approach works and what impact it will have on overall Datasette performance. I think I need #33 for this. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/235/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 612089949 | MDU6SXNzdWU2MTIwODk5NDk= | 756 | Add pipx to installation documentation | simonw 9599 | closed | 0 | 2 | 2020-05-04T18:49:01Z | 2020-05-04T19:19:06Z | 2020-05-04T19:10:33Z | OWNER | Add to this page: https://datasette.readthedocs.io/en/stable/installation.html Here's how to install plugins: https://twitter.com/simonw/status/1257348687979778050

```
$ datasette plugins
[]
$ pipx inject datasette datasette-json-html
$ datasette plugins
[
    {
        "name": "datasette-json-html",
        "static": false,
        "templates": false,
        "version": "0.6"
    }
]
```

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/756/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 470691622 | MDU6SXNzdWU0NzA2OTE2MjI= | 5 | Add progress bar | simonw 9599 | closed | 0 | 2 | 2019-07-20T16:29:07Z | 2019-07-22T03:30:13Z | 2019-07-22T02:49:22Z | MEMBER | Showing a progress bar would be nice, using Click. The easiest way to do this would probably be to hook it up to the length of the compressed content, and update it as this code pushes more XML bytes through the parser: |

healthkit-to-sqlite 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/5/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 602575575 | MDU6SXNzdWU2MDI1NzU1NzU= | 6 | Add progress bar to upload command | simonw 9599 | closed | 0 | 2 | 2020-04-18T23:32:41Z | 2020-04-19T00:15:24Z | 2020-04-19T00:15:24Z | MEMBER | Upload was added in #4 |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 459714943 | MDU6SXNzdWU0NTk3MTQ5NDM= | 525 | Add section on sqite-utils enable-fts to the search documentation | simonw 9599 | closed | 0 | simonw 9599 | 2 | 2019-06-24T06:39:16Z | 2019-06-24T16:36:35Z | 2019-06-24T16:29:43Z | OWNER | https://datasette.readthedocs.io/en/stable/full_text_search.html already has a section about csvs-to-sqlite, sqlite-utils is even more relevant. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/525/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 1059549523 | I_kwDOBm6k_c4_J3FT | 1526 | Add to vercel.json, rather than overwriting it. | mroswell 192568 | closed | 0 | 2 | 2021-11-22T00:47:12Z | 2021-11-22T04:49:45Z | 2021-11-22T04:13:47Z | CONTRIBUTOR | I'd like to be able to add to vercel.json. But Datasette overwrites whatever I put in that file. I originally reported this here: https://github.com/simonw/datasette-publish-vercel/issues/51 In that case, I wanted to do a rewrite... and now I need to do 301 redirects (because we had to rename our site). Can this be addressed? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1526/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 610842926 | MDU6SXNzdWU2MTA4NDI5MjY= | 36 | Add view for better display of dependent repos | simonw 9599 | closed | 0 | 2 | 2020-05-01T16:33:44Z | 2020-05-02T16:50:31Z | 2020-05-02T16:30:11Z | MEMBER |

|

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/36/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 927766296 | MDU6SXNzdWU5Mjc3NjYyOTY= | 291 | Adopt flake8 | simonw 9599 | closed | 0 | 2 | 2021-06-23T01:19:37Z | 2021-06-24T17:50:27Z | 2021-06-24T17:50:27Z | OWNER | sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/291/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 1781005740 | I_kwDOBm6k_c5qJ_2s | 2090 | Adopt ruff for linting | simonw 9599 | open | 0 | 2 | 2023-06-29T14:56:43Z | 2023-06-29T15:05:04Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2090/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 736365306 | MDU6SXNzdWU3MzYzNjUzMDY= | 1083 | Advanced CSV export for arbitrary queries | simonw 9599 | open | 0 | 2 | 2020-11-04T19:23:05Z | 2021-06-17T18:12:31Z | OWNER | There's no link to download the CSV file - the table page has that as an advanced export option, but this is missing from the query page. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1083/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1740150327 | I_kwDOCGYnMM5nuJY3 | 557 | Aliased ROWID option for tables created from alter=True commands | chapmanjacobd 7908073 | closed | 0 | 2 | 2023-06-04T05:29:28Z | 2023-06-14T06:09:21Z | 2023-06-05T19:26:26Z | CONTRIBUTOR |

ROWID should never be used with foreign keys but the simple act of aliasing rowid to id (which is what happens when one does It would be convenient if there were more options to use a string column (eg. filepath) as the PK, and be able to use it during upserts, but when creating a foreign key, to create an integer column which aliases rowid I made an attempt to switch to integer primary keys here but it is not going well... In my usecase the path column is a business key. Yes, it should be as simple as including the https://github.com/chapmanjacobd/library/actions/runs/5173602136/jobs/9319024777 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/557/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 626001501 | MDU6SXNzdWU2MjYwMDE1MDE= | 773 | All plugin hooks should have unit tests | simonw 9599 | closed | 0 | Datasette 0.43 5471110 | 2 | 2020-05-27T20:17:41Z | 2020-05-28T04:12:11Z | 2020-05-28T04:09:25Z | OWNER | Four hooks currently missing tests:

``` $ pytest -k test_plugin_hooks_have_tests -vv ====================================== test session starts ====================================== platform darwin -- Python 3.7.7, pytest-5.2.4, py-1.8.1, pluggy-0.13.1 -- /Users/simon/.local/share/virtualenvs/datasette-AWNrQs95/bin/python cachedir: .pytest_cache rootdir: /Users/simon/Dropbox/Development/datasette, inifile: pytest.ini plugins: asyncio-0.10.0 collected 486 items / 475 deselected / 11 selected tests/test_plugins.py::test_plugin_hooks_have_tests[asgi_wrapper] XPASS [ 9%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_body_script] XPASS [ 18%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_css_urls] XPASS [ 27%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_js_urls] XPASS [ 36%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_template_vars] XPASS [ 45%] tests/test_plugins.py::test_plugin_hooks_have_tests[prepare_connection] XPASS [ 54%] tests/test_plugins.py::test_plugin_hooks_have_tests[prepare_jinja2_environment] XFAIL [ 63%] tests/test_plugins.py::test_plugin_hooks_have_tests[publish_subcommand] XFAIL [ 72%] tests/test_plugins.py::test_plugin_hooks_have_tests[register_facet_classes] XFAIL [ 81%] tests/test_plugins.py::test_plugin_hooks_have_tests[register_output_renderer] XFAIL [ 90%] tests/test_plugins.py::test_plugin_hooks_have_tests[render_cell] XPASS [100%] ========================= 475 deselected, 4 xfailed, 7 xpassed in 1.70s ========================= ``` Originally posted by @simonw in https://github.com/simonw/datasette/issues/771#issuecomment-634915104 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/773/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 665403403 | MDU6SXNzdWU2NjU0MDM0MDM= | 907 | Allow documentation doesn't explain what happens with multiple allow keys | simonw 9599 | closed | 0 | Datasette 0.46 5607421 | 2 | 2020-07-24T20:34:40Z | 2020-07-24T22:53:07Z | 2020-07-24T22:53:07Z | OWNER | Documentation here: https://datasette.readthedocs.io/en/0.45/authentication.html#defining-permissions-with-allow-blocks Doesn't explain that with the following "allow" block:

The tests are missing this case too: https://github.com/simonw/datasette/blob/028f193dd6233fa116262ab4b07b13df7dcec9be/tests/test_utils.py#L504 Related to #906 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/907/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 562787785 | MDU6SXNzdWU1NjI3ODc3ODU= | 667 | Allow injecting configuration data from plugins | xrotwang 870184 | closed | 0 | 2 | 2020-02-10T19:50:15Z | 2020-02-12T16:18:22Z | 2020-02-12T09:21:22Z | NONE | I'm trying to customize datasette as explorer for CLDF datasets. Such datasets can be converted automatically to SQLite, which then can be fed to datasette, (e.g. https://github.com/cldf/cookbook/blob/master/recipes/datasette/README.md). Part of this customization would be support for the "special" data types described in the CLDF ontology. But while rendering of the values can be customized via the It would be nice to be able to programmatically inject config data from plugins as well. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/667/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 620969465 | MDU6SXNzdWU2MjA5Njk0NjU= | 767 | Allow to specify a URL fragment for canned queries | rixx 2657547 | closed | 0 | Datasette 0.43 5471110 | 2 | 2020-05-19T13:17:42Z | 2020-05-27T21:52:25Z | 2020-05-27T21:52:25Z | CONTRIBUTOR | Canned queries are very useful to direct users to prepared data and views. I like to use them with charts using datasette-vega a lot, because people get a direct impression at first glance. datasette-vega doesn't show up by default though, and users have to click through to it. Also, datasette-vega does not always guess the best way to render columns correctly, so it would be nice if I could specify a URL fragment in my canned queries to make sure people see what I want them to see. My current workaround is to include a fragment link in |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/767/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 607888367 | MDU6SXNzdWU2MDc4ODgzNjc= | 13 | Also upload movie files | simonw 9599 | open | 0 | 2 | 2020-04-27T22:11:25Z | 2020-04-28T00:39:45Z | MEMBER | The Need to cover movies taken by my phone and DSLR too. |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/13/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 710819020 | MDU6SXNzdWU3MTA4MTkwMjA= | 980 | Another rendering glitch with column headers on mobile | simonw 9599 | closed | 0 | Datasette 0.50 5971510 | 2 | 2020-09-29T06:53:13Z | 2020-10-08T23:54:49Z | 2020-09-29T19:21:50Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/980/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 689809225 | MDU6SXNzdWU2ODk4MDkyMjU= | 2 | Apply porter stemming | simonw 9599 | closed | 0 | 2 | 2020-09-01T04:57:55Z | 2020-09-01T20:42:00Z | 2020-09-01T20:40:24Z | MEMBER | This can be on by default. You can turn it off for a table in the config file using |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/2/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 667467128 | MDU6SXNzdWU2Njc0NjcxMjg= | 909 | AsgiFileDownload: filename not correctly passed | simonw 9599 | closed | 0 | 2 | 2020-07-29T00:41:43Z | 2020-07-30T00:56:17Z | 2020-07-29T21:34:48Z | OWNER | https://github.com/simonw/datasette/blob/3c33b421320c0be81a625ca7307b2e4416a9ed5b/datasette/utils/asgi.py#L396-L405

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/909/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 706167456 | MDU6SXNzdWU3MDYxNjc0NTY= | 168 | Automate (as much as possible) updates published to Homebrew | simonw 9599 | closed | 0 | 2 | 2020-09-22T08:08:37Z | 2020-11-09T07:43:30Z | 2020-11-09T07:43:30Z | OWNER | I'd like to get new |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/168/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1816997390 | I_kwDOCGYnMM5sTS4O | 576 | Backfill the release notes prior to 0.4 | simonw 9599 | closed | 0 | 2 | 2023-07-23T05:41:42Z | 2023-07-23T05:49:51Z | 2023-07-23T05:48:21Z | OWNER | Currently the changelog starts at 0.4: https://sqlite-utils.datasette.io/en/3.34/changelog.html#id115 I want the other releases - according to https://pypi.org/project/sqlite-utils/#history there are three missing: |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/576/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1495241162 | I_kwDOBm6k_c5ZH5HK | 1950 | Bad ?_sort returns a 500 error, should be a 400 | simonw 9599 | closed | 0 | 2 | 2022-12-13T22:08:16Z | 2022-12-13T22:23:22Z | 2022-12-13T22:23:22Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1950/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 1339444565 | I_kwDOBm6k_c5P1k1V | 1783 | Better guidance as to what to do after you've installed Datasette | simonw 9599 | open | 0 | 2 | 2022-08-15T20:11:06Z | 2022-08-15T20:14:01Z | OWNER | Feedback from Discord:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1783/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 718949182 | MDU6SXNzdWU3MTg5NDkxODI= | 6 | Better handling of OCR data | simonw 9599 | closed | 0 | 2 | 2020-10-11T23:20:52Z | 2020-10-12T00:04:10Z | 2020-10-12T00:04:10Z | MEMBER |

Originally posted by @simonw in https://github.com/dogsheep/evernote-to-sqlite/issues/4#issuecomment-706784028 |

evernote-to-sqlite 303218369 | issue | {

"url": "https://api.github.com/repos/dogsheep/evernote-to-sqlite/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1420055377 | I_kwDOBm6k_c5UpFNR | 1847 | Both _local_metadata and _metadata_local? | simonw 9599 | closed | 0 | 2 | 2022-10-24T01:43:08Z | 2022-10-24T01:53:13Z | 2022-10-24T01:53:13Z | OWNER | Spotted this in the debugger against the

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1847/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 688659182 | MDU6SXNzdWU2ODg2NTkxODI= | 145 | Bug when first record contains fewer columns than subsequent records | simonwiles 96218 | closed | 0 | 2 | 2020-08-30T05:44:44Z | 2020-09-08T23:21:23Z | 2020-09-08T23:21:23Z | CONTRIBUTOR |

I suspect that this bug is masked somewhat by the fact that while:

it is common that it is increased at compile time. Debian-based systems, for example, seem to ship with a version of sqlite compiled with A test for this issue might look like this:

The best solution, I think, is simply to process all the records when determining columns, column types, and the batch size. In my tests this doesn't seem to be particularly costly at all, and cuts out a lot of complications (including obviating my implementation of #139 at #142). I'll raise a PR for your consideration. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/145/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 275493851 | MDU6SXNzdWUyNzU0OTM4NTE= | 139 | Build a visualization plugin for Vega | simonw 9599 | closed | 0 | 2 | 2017-11-20T20:47:41Z | 2018-07-10T17:48:18Z | 2018-07-10T17:48:18Z | OWNER | https://vega.github.io/vega/examples/population-pyramid/ for example looks pretty easy to hook up to Datasette. Depends on #14 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/139/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 516748849 | MDU6SXNzdWU1MTY3NDg4NDk= | 612 | CSV export is broken for tables with null foreign keys | simonw 9599 | closed | 0 | 2 | 2019-11-02T22:52:47Z | 2021-06-17T18:13:20Z | 2019-11-02T23:12:53Z | OWNER | Following on from #406 - this CSV export appears to be broken: https://14da705.datasette.io/fixtures/foreign_key_references.csv?_labels=on&_size=max

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/612/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1617602868 | I_kwDOJHON9s5gaqk0 | 6 | Character encoding problem | simonw 9599 | open | 0 | 2 | 2023-03-09T16:44:34Z | 2023-04-14T15:22:09Z | MEMBER | I ran against a recent note with this in it:

And got back:

Pasting that into https://ftfy.vercel.app/?s=Actions+%E2%80%9A%C3%B6%C3%B4%C3%94%E2%88%8F%C3%A8+ gives this:

|

apple-notes-to-sqlite 611552758 | issue | {

"url": "https://api.github.com/repos/dogsheep/apple-notes-to-sqlite/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 611997130 | MDU6SXNzdWU2MTE5OTcxMzA= | 754 | Clean up aiofiles warnings on 3.8 | simonw 9599 | closed | 0 | 2 | 2020-05-04T16:14:59Z | 2020-05-04T16:22:30Z | 2020-05-04T16:22:30Z | OWNER | https://travis-ci.org/github/simonw/datasette/jobs/682624476 Lots of warnings like this: ``` /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/utils.py:33 /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/utils.py:33 /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/utils.py:33: DeprecationWarning: "@coroutine" decorator is deprecated since Python 3.8, use "async def" instead /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/__init__.py:27 /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/__init__.py:27: DeprecationWarning: "@coroutine" decorator is deprecated since Python 3.8, use "async def" instead ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/754/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1073712378 | I_kwDOBm6k_c4__4z6 | 1544 | Code that detects the label column for a table is case-sensitive | simonw 9599 | closed | 0 | 2 | 2021-12-07T20:01:25Z | 2021-12-07T20:03:43Z | 2021-12-07T20:03:43Z | OWNER | I just noticed that a column called |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1544/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 503190241 | MDU6SXNzdWU1MDMxOTAyNDE= | 584 | Codec error in some CSV exports | simonw 9599 | closed | 0 | 2 | 2019-10-07T01:15:34Z | 2021-06-17T18:13:20Z | 2019-10-18T05:23:16Z | OWNER | Got this exploring my Swarm checkins:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/584/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 715072935 | MDU6SXNzdWU3MTUwNzI5MzU= | 993 | Column action menu should show column type | simonw 9599 | closed | 0 | Datasette 0.50 5971510 | 2 | 2020-10-05T18:40:49Z | 2020-10-08T23:55:19Z | 2020-10-06T00:33:15Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/993/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 994450961 | MDU6SXNzdWU5OTQ0NTA5NjE= | 1469 | Column cog shows "facet by this" when already default faceted | simonw 9599 | closed | 0 | 2 | 2021-09-13T04:51:26Z | 2021-10-13T21:20:07Z | 2021-10-13T21:20:07Z | OWNER | e.g. on https://covid-19.datasettes.com/covid/economist_excess_deaths

But if you add The logic that decides if the "Facet by this" item is shown does not take default |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1469/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 634783573 | MDU6SXNzdWU2MzQ3ODM1NzM= | 816 | Come up with a new example for extra_template_vars plugin | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-08T16:57:59Z | 2020-06-08T19:06:44Z | 2020-06-08T19:06:11Z | OWNER | This example is obsolete, it's from a time before |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/816/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 578883725 | MDU6SXNzdWU1Nzg4ODM3MjU= | 17 | Command for importing commits | simonw 9599 | closed | 0 | 2 | 2020-03-10T21:55:12Z | 2020-03-11T02:47:37Z | 2020-03-11T02:47:37Z | MEMBER | Using this API: https://api.github.com/repos/dogsheep/github-to-sqlite/commits |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/17/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 488835586 | MDU6SXNzdWU0ODg4MzU1ODY= | 4 | Command for importing data from a Twitter Export file | simonw 9599 | closed | 0 | 2 | 2019-09-03T21:34:13Z | 2019-10-11T06:45:02Z | 2019-10-11T06:45:02Z | MEMBER | Twitter lets you export all of your data as an archive file: https://twitter.com/settings/your_twitter_data A command for importing this data into SQLite would be extremely useful. |

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/4/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 273192789 | MDU6SXNzdWUyNzMxOTI3ODk= | 67 | Command that builds a local docker container | simonw 9599 | closed | 0 | Ship first public release 2857392 | 2 | 2017-11-12T02:13:29Z | 2017-11-13T16:17:52Z | 2017-11-13T16:17:52Z | OWNER | Be nice to indicate that this isn't just for Now. Shouldn't be too hard either. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/67/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 493670426 | MDU6SXNzdWU0OTM2NzA0MjY= | 3 | Command to fetch all repos belonging to a user or organization | simonw 9599 | closed | 0 | 2 | 2019-09-14T21:54:21Z | 2019-09-17T00:17:53Z | 2019-09-17T00:17:53Z | MEMBER | How about this: |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/3/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1353441389 | I_kwDOCGYnMM5Qq-Bt | 477 | Conda Forge | thewchan 49702524 | closed | 0 | 2 | 2022-08-28T19:03:08Z | 2022-09-07T03:46:55Z | 2022-09-07T03:46:55Z | NONE | Hello! I have successfully put this package onto Conda Forge, and I have extended an invitation to the owner/maintainers of this package to be maintainers on Conda Forge as well. Let me know if you are interested! Thanks. https://github.com/conda-forge/sqlite-utils-feedstock |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/477/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 718938508 | MDU6SXNzdWU3MTg5Mzg1MDg= | 4 | Configure FTS + add an index on the date columns | simonw 9599 | closed | 0 | 2 | 2020-10-11T22:14:40Z | 2020-10-11T23:41:29Z | 2020-10-11T23:41:29Z | MEMBER | Sort by date descending is likely the most common way of sorting, so that column should be indexed. Also add FTS configuration for both notes and the OCR column on resources. |

evernote-to-sqlite 303218369 | issue | {

"url": "https://api.github.com/repos/dogsheep/evernote-to-sqlite/issues/4/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 638229448 | MDU6SXNzdWU2MzgyMjk0NDg= | 843 | Configure codecov.io | simonw 9599 | closed | 0 | 2 | 2020-06-13T20:45:00Z | 2020-06-13T21:36:52Z | 2020-06-13T21:36:52Z | OWNER | Originally posted by @simonw in https://github.com/simonw/datasette/issues/841#issuecomment-643660757 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/843/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 958516743 | MDU6SXNzdWU5NTg1MTY3NDM= | 306 | Configure sphinx.ext.extlinks for issues | simonw 9599 | closed | 0 | 2 | 2021-08-02T21:19:19Z | 2021-08-02T21:39:34Z | 2021-08-02T21:29:22Z | OWNER | As seen in Datasette: https://github.com/simonw/datasette/issues/1227 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/306/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1618249044 | I_kwDOBm6k_c5gdIVU | 2038 | Consider a `strict_templates` setting | simonw 9599 | open | 0 | 2 | 2023-03-10T02:09:13Z | 2023-03-10T02:11:06Z | OWNER | A setting which turns on Jinja strict mode, so any templates that access undefined variables raise a hard error. Prototype here:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2038/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1171599874 | I_kwDOCGYnMM5F1TIC | 415 | Convert with `--multi` and `--dry-run` flag does not work | dotcs 3976183 | closed | 0 | 2 | 2022-03-16T21:59:46Z | 2022-03-21T04:18:24Z | 2022-03-21T04:18:24Z | NONE | It's not possible to combine Let's first create a simple database from JSON string

and then try to convert the "foo" column with a static value "bar" (see docs Converting a column into multiple columns)

But without the

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/415/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 291639118 | MDU6SXNzdWUyOTE2MzkxMTg= | 183 | Custom Queries - escaping strings | psychemedia 82988 | closed | 0 | 2 | 2018-01-25T16:49:13Z | 2019-06-24T06:45:07Z | 2019-06-24T06:45:07Z | CONTRIBUTOR | If a SQLite table column name contains spaces, they are usually referred to in double quotes:

In the JSON metadata file, this is passed by escaping the double quotes:

When specifying a custom query in

which does not work. Alternatively, a valid custom query can be passed using backticks (`) to quote the column name and single (unescaped) quotes for the matched value:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/183/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1221849746 | I_kwDOBm6k_c5I0_KS | 1732 | Custom page variables aren't decoded | tannewt 52649 | open | 0 | 2 | 2022-04-30T14:55:46Z | 2022-05-03T01:50:45Z | NONE | I have a page Datasette should unescape the url component before passing them into the template. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1732/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1515883470 | I_kwDOC8tyDs5aWovO | 24 | DOC: xml.etree.ElementTree.ParseError due to healthkit version 12 | mmngreco 6231413 | open | 0 | 2 | 2023-01-01T23:00:38Z | 2023-03-30T10:17:31Z | NONE | Hi @simonw, I hope this issue is OK. The idea is to provide some documentation for other users like me about how to solve this problem and save some time. Following the instructions from the

So, after debugging and searching on the internet, I found this useful link: https://discussions.apple.com/thread/254202523 (etresoft, the real hero). It basically says that the XML produced by the Health app (healthkit version 12) has some bugs but, fortunately, they can be solved with a couple of commands:

|

healthkit-to-sqlite 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/24/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 326599525 | MDU6SXNzdWUzMjY1OTk1MjU= | 286 | Database hash should include current datasette version | simonw 9599 | open | 0 | 2 | 2018-05-25T17:03:42Z | 2018-05-25T17:07:36Z | OWNER | Right now deploying a new version of datasette doesn't invalidate existing URLs, so users may still see a cached copy of the old templates. We can fix this by including the current datasette version in the input to the hash function (which is currently just the database file contents). |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/286/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1200649124 | I_kwDOBm6k_c5HkHOk | 1708 | Datasette 1.0 alpha upcoming release notes | simonw 9599 | open | 0 | Datasette 1.0a-next 8755003 | 2 | 2022-04-11T22:57:12Z | 2022-12-13T05:29:06Z | OWNER | I'm going to try writing the release notes first, to see if that helps unblock me. ⚠️ Any release notes in this issue are a draft, and should not be treated as the real thing ⚠️ |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1708/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 518506242 | MDU6SXNzdWU1MTg1MDYyNDI= | 616 | Datasette FTS detection bug | null92 49656826 | closed | 0 | 2 | 2019-11-06T14:25:47Z | 2019-11-08T15:31:33Z | 2019-11-08T02:06:56Z | NONE | I'm having trouble with datasette. I deployed EXACTLY the same project on two different apps on Heroku. Both have databases (not all of them) with FTS activated, but only one app detects it and works correctly. You can take a look here:

With search: http://teste-templates.herokuapp.com/amazonia_protege/car

Without search: http://bases.vortex.media/amazonia_protege/car

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/616/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 336936010 | MDU6SXNzdWUzMzY5MzYwMTA= | 331 | Datasette throws error when loading spatialite db without extension loaded | psychemedia 82988 | closed | 0 | 2 | 2018-06-29T09:51:14Z | 2022-01-20T21:29:40Z | 2018-07-10T15:13:36Z | CONTRIBUTOR | When starting datasette on a SpatiaLite database without loading the SpatiaLite extension (using e.g.

It would be nice to trap this and return a message saying something like: ``` It looks like you're trying to load a SpatiaLite database? Make sure you load in the SpatiaLite extension when starting datasette. Read more: https://datasette.readthedocs.io/en/latest/spatialite.html ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/331/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1083573206 | I_kwDOBm6k_c5AlgPW | 1563 | Datasette(... files=) should not be a required argument | simonw 9599 | closed | 0 | Datasette 0.60 7571612 | 2 | 2021-12-17T19:54:18Z | 2022-01-13T22:27:18Z | 2021-12-18T02:19:40Z | OWNER | ```pycon

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1563/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 369716228 | MDU6SXNzdWUzNjk3MTYyMjg= | 366 | Default built image size over Zeit Now 100MiB limit | gfrmin 416374 | closed | 0 | 2 | 2018-10-12T21:27:17Z | 2018-11-05T06:23:32Z | 2018-11-05T06:23:32Z | CONTRIBUTOR | Using |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/366/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 695553522 | MDU6SXNzdWU2OTU1NTM1MjI= | 18 | Deleted records stay in the search index | simonw 9599 | open | 0 | 2 | 2020-09-08T05:14:23Z | 2020-09-08T05:15:51Z | MEMBER | That should probably do |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/18/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 637899539 | MDU6SXNzdWU2Mzc4OTk1Mzk= | 40 | Demo deploy is broken | simonw 9599 | closed | 0 | 2 | 2020-06-12T17:20:17Z | 2020-06-12T18:06:48Z | 2020-06-12T18:06:48Z | MEMBER | https://github.com/dogsheep/github-to-sqlite/runs/766180404?check_suite_focus=true ``` The following NEW packages will be installed: sqlite3 0 upgraded, 1 newly installed, 0 to remove and 11 not upgraded. Need to get 752 kB of archives. After this operation, 2482 kB of additional disk space will be used. Ign:1 http://azure.archive.ubuntu.com/ubuntu bionic-updates/main amd64 sqlite3 amd64 3.22.0-1ubuntu0.3 Err:1 http://security.ubuntu.com/ubuntu bionic-updates/main amd64 sqlite3 amd64 3.22.0-1ubuntu0.3 404 Not Found [IP: 52.177.174.250 80] E: Failed to fetch http://security.ubuntu.com/ubuntu/pool/main/s/sqlite3/sqlite3_3.22.0-1ubuntu0.3_amd64.deb 404 Not Found [IP: 52.177.174.250 80] E: Unable to fetch some archives, maybe run apt-get update or try with --fix-missing? [error]Process completed with exit code 100.``` |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/40/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 1570375808 | I_kwDODFdgUs5dmgiA | 79 | Deploy demo job is failing due to rate limit | simonw 9599 | open | 0 | 2 | 2023-02-03T20:05:01Z | 2023-12-08T14:50:15Z | MEMBER | github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/79/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 273569477 | MDU6SXNzdWUyNzM1Njk0Nzc= | 80 | Deploy final versions of fivethirtyeight and parlgov datasets (with view pagination) | simonw 9599 | closed | 0 | Ship first public release 2857392 | 2 | 2017-11-13T20:37:46Z | 2017-11-13T22:09:46Z | 2017-11-13T22:09:46Z | OWNER | Final versions should be deployed using the first released version of datasette. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/80/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 413740684 | MDU6SXNzdWU0MTM3NDA2ODQ= | 11 | Detect numpy types when creating tables | simonw 9599 | closed | 0 | 2 | 2019-02-23T21:09:35Z | 2019-02-24T04:02:20Z | 2019-02-24T04:02:20Z | OWNER | Inspired by #8 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/11/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed |

CREATE TABLE [issues] (
   [id] INTEGER PRIMARY KEY,
   [node_id] TEXT,
   [number] INTEGER,
   [title] TEXT,
   [user] INTEGER REFERENCES [users]([id]),
   [state] TEXT,
   [locked] INTEGER,
   [assignee] INTEGER REFERENCES [users]([id]),
   [milestone] INTEGER REFERENCES [milestones]([id]),
   [comments] INTEGER,
   [created_at] TEXT,
   [updated_at] TEXT,
   [closed_at] TEXT,
   [author_association] TEXT,
   [pull_request] TEXT,
   [body] TEXT,
   [repo] INTEGER REFERENCES [repos]([id]),
   [type] TEXT,
   [active_lock_reason] TEXT,
   [performed_via_github_app] TEXT,
   [reactions] TEXT,
   [draft] INTEGER,
   [state_reason] TEXT
);
CREATE INDEX [idx_issues_repo]
    ON [issues] ([repo]);
CREATE INDEX [idx_issues_milestone]
    ON [issues] ([milestone]);
CREATE INDEX [idx_issues_assignee]
    ON [issues] ([assignee]);
CREATE INDEX [idx_issues_user]
    ON [issues] ([user]);
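
The filter this page applies (comments = 2, type = "issue", sorted by title) can be reproduced against the schema above with an ordinary parameterised query. The following is a minimal sketch using Python's standard `sqlite3` module. It assumes a throwaway in-memory table holding only a subset of the columns, and the helper name `issues_with_comment_count` is hypothetical. Three sample rows are copied from the table above; the fourth is made up to show the filter excluding a row.

```python
import sqlite3

# In-memory stand-in for the real database (an assumption for illustration);
# only a subset of the columns from the schema above is created.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE issues (
        id INTEGER PRIMARY KEY,
        number INTEGER,
        title TEXT,
        state TEXT,
        comments INTEGER,
        repo INTEGER,
        type TEXT
    )
    """
)
conn.executemany(
    "INSERT INTO issues VALUES (?, ?, ?, ?, ?, ?, ?)",
    [
        # Rows taken from the table above.
        (637899539, 40, "Demo deploy is broken", "closed", 2, 207052882, "issue"),
        (413740684, 11, "Detect numpy types when creating tables", "closed", 2, 140912432, "issue"),
        (695553522, 18, "Deleted records stay in the search index", "open", 2, 197431109, "issue"),
        # Made-up row that the filter should exclude (comments != 2).
        (1, 1, "Hypothetical issue with five comments", "open", 5, 107914493, "issue"),
    ],
)


def issues_with_comment_count(conn, comments, type_="issue"):
    """Return (number, title, state) rows matching the page's filter, sorted by title."""
    cursor = conn.execute(
        "SELECT number, title, state FROM issues "
        "WHERE comments = ? AND type = ? ORDER BY title",
        (comments, type_),
    )
    return cursor.fetchall()


for number, title, state in issues_with_comment_count(conn, 2):
    print(number, title, state)
```

Binding the values as parameters rather than formatting them into the SQL string keeps the query safe if the comment count or type ever comes from user input.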
