issues

21 rows where assignee = 9599 sorted by updated_at descending

| id | node_id | number | title | user | state | locked | assignee | milestone | comments | created_at | updated_at ▲ | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1651082214 | PR_kwDOBm6k_c5NcFCf | 2052 | feat: Javascript Plugin API (Custom panels, column menu items with JS actions) | hydrosquall 9020979 | closed | 0 | simonw 9599 |  | 20 | 2023-04-02T20:23:44Z | 2023-10-14T17:49:03Z | 2023-10-13T00:00:27Z | CONTRIBUTOR | simonw/datasette/pulls/2052 | Motivation. Changes. Summary: Provide 2 new surface areas for Datasette JS plugin developers. See alpha documentation. User Facing Changes. (plugin) Developer Facing Changes. See this file for example plugin usage. Core Developer Facing Changes. Testing. For Datasette plugin developers, please see the alpha-level documentation. To run the examples: Open local server: Open to all feedback on this PR, from API design to variable naming, to what additional hooks might be useful for the future. My focus was more on the general shape of the API for developers, rather than on the UX of the test plugins. Design notes. Research Notes. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2052/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 1805076818 | I_kwDOBm6k_c5rl0lS | 2102 | API tokens with view-table but not view-database/view-instance cannot access the table | simonw 9599 | closed | 0 | simonw 9599 |  | 20 | 2023-07-14T15:34:27Z | 2023-08-29T16:32:36Z | 2023-08-29T16:32:35Z | OWNER |  | Originally posted by @simonw in https://github.com/simonw/datasette-auth-tokens/issues/7#issuecomment-1636031702 | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2102/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 1205687423 | I_kwDOCGYnMM5H3VR_ | 426 | CLI docs should link to Python docs and vice versa | simonw 9599 | closed | 0 | simonw 9599 |  | 1 | 2022-04-15T16:05:15Z | 2023-07-22T22:13:22Z | 2023-07-22T22:13:22Z | OWNER |  | For every command/API method there should be a link to the equivalent in the other form factor. Maybe also link to the API and CLI reference pages too. | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/426/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 447469253 | MDU6SXNzdWU0NDc0NjkyNTM= | 485 | Improvements to table label detection | simonw 9599 | open | 0 | simonw 9599 |  | 10 | 2019-05-23T06:19:49Z | 2022-10-03T00:04:42Z |  | OWNER |  | Label detection doesn't work if the primary key is called pk rather than id, so this page doesn't work: https://latest.datasette.io/fixtures/roadside_attraction_characteristics Code is here: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/485/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 957529248 | MDU6SXNzdWU5NTc1MjkyNDg= | 302 | Python library version of `sqlite-utils convert` | simonw 9599 | closed | 0 | simonw 9599 |  | 1 | 2021-08-01T16:11:02Z | 2021-08-02T04:47:40Z | 2021-08-02T04:47:40Z | OWNER |  | Spin off from #251. The ability to execute Python functions to convert and split columns should be part of the library too, not just the CLI. | sqlite-utils 140912432 | issue |  |  | {"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/302/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 456568880 | MDU6SXNzdWU0NTY1Njg4ODA= | 509 | Support opening multiple databases with the same stem | simonw 9599 | closed | 0 | simonw 9599 | Datasette 1.0 3268330 | 4 | 2019-06-15T19:32:00Z | 2020-12-22T20:04:35Z | 2020-12-22T20:04:35Z | OWNER |  | e.g. I should be able to do this: This currently errors because you can't have two databases taking the Instead, how about in this particular case assigning the second database | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/509/reactions", "total_count": 2, "+1": 2, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 717746043 | MDExOlB1bGxSZXF1ZXN0NTAwMjU2NDg1 | 1000 | datasette.client internal requests mechanism | simonw 9599 | closed | 0 | simonw 9599 | Datasette 0.50 5971510 | 18 | 2020-10-08T23:58:25Z | 2020-10-09T16:11:26Z | 2020-10-09T16:11:25Z | OWNER | simonw/datasette/pulls/1000 | Refs #943 | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1000/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 455965174 | MDU6SXNzdWU0NTU5NjUxNzQ= | 508 | Ability to set default sort order for a table or view in metadata.json | simonw 9599 | closed | 0 | simonw 9599 |  | 1 | 2019-06-13T21:40:51Z | 2020-05-28T18:53:03Z | 2020-05-28T18:53:02Z | OWNER |  | It can go here in the documentation: https://datasette.readthedocs.io/en/stable/metadata.html#setting-which-columns-can-be-used-for-sorting Also need to fix this sentence which is no longer true: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/508/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 449931899 | MDU6SXNzdWU0NDk5MzE4OTk= | 494 | --reload should only trigger for -i databases | simonw 9599 | closed | 0 | simonw 9599 |  | 1 | 2019-05-29T17:28:43Z | 2020-02-24T19:45:05Z | 2020-02-24T19:45:05Z | OWNER |  | Right now it's triggering any time a mutable database changes. | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/494/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 453639196 | MDU6SXNzdWU0NTM2MzkxOTY= | 504 | Remove TableView ?_group_count= feature | simonw 9599 | closed | 0 | simonw 9599 |  | 0 | 2019-06-07T18:25:18Z | 2019-11-06T05:13:10Z | 2019-11-06T05:13:10Z | OWNER |  | This feature really doesn't warrant continuing to exist. For reference: #150 and #44 Don't forget to remove it from the docs: https://github.com/simonw/datasette/blob/172da009d890aa029cff7138b4dcfd4f60948525/docs/json_api.rst#L322-L324 | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/504/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 453243459 | MDU6SXNzdWU0NTMyNDM0NTk= | 503 | Handle SQLite databases with spaces in their names? | chrismp 7936571 | closed | 0 | simonw 9599 |  | 1 | 2019-06-06T21:20:59Z | 2019-11-04T23:16:30Z | 2019-11-04T23:16:30Z | NONE |  | I named my SQLite database "Government workers" and published it to Heroku. When I clicked the "Government workers" database online it lead to a 404 page: I believe this is because the database name has a space. | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/503/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 406055201 | MDU6SXNzdWU0MDYwNTUyMDE= | 406 | Support nullable foreign keys in _labels mode | simonw 9599 | closed | 0 | simonw 9599 |  | 2 | 2019-02-03T05:34:20Z | 2019-11-02T22:39:28Z | 2019-11-02T22:30:27Z | OWNER |  | Currently if there's a null in a foreign key we get "None" displayed in the inflated view: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/406/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 440437037 | MDU6SXNzdWU0NDA0MzcwMzc= | 454 | Plugin for allowing CORS from specified hosts | simonw 9599 | closed | 0 | simonw 9599 |  | 5 | 2019-05-05T12:05:02Z | 2019-10-03T23:59:57Z | 2019-10-03T23:59:56Z | OWNER |  | It would be useful if Datasette could be configured to allow CORS requests from one or more origins, as opposed to only allowing either none or This is slightly tricky because the This means the application code needs to have a whitelist of allowed hosts and code that dynamically changes the outgoing | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/454/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 456569067 | MDU6SXNzdWU0NTY1NjkwNjc= | 510 | Ability to facet by delimiter (e.g. comma separated fields) | simonw 9599 | open | 0 | simonw 9599 |  | 1 | 2019-06-15T19:34:41Z | 2019-07-08T15:44:51Z |  | OWNER |  | E.g. if a field contains "Tags,With,Commas" be able to facet them in the same way as | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/510/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  |  |
| 453829910 | MDU6SXNzdWU0NTM4Mjk5MTA= | 505 | Add white-space: pre-wrap to SQL create statement | simonw 9599 | closed | 0 | simonw 9599 | Datasette 0.29 4471010 | 0 | 2019-06-08T19:59:56Z | 2019-07-07T20:26:55Z | 2019-07-07T20:26:55Z | OWNER |  | Right now a super-long CREATE TABLE statement causes the table page to be even wider than the table itself: Adding | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/505/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 459598080 | MDU6SXNzdWU0NTk1OTgwODA= | 520 | asgi_wrapper plugin hook | simonw 9599 | closed | 0 | simonw 9599 |  | 3 | 2019-06-23T17:16:45Z | 2019-07-03T04:40:34Z | 2019-07-03T04:06:28Z | OWNER |  | After #272 we can finally add this hook. It will allow plugins to wrap their own ASGI middleware around Datasette. Potential use-cases include: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/520/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 459714943 | MDU6SXNzdWU0NTk3MTQ5NDM= | 525 | Add section on sqite-utils enable-fts to the search documentation | simonw 9599 | closed | 0 | simonw 9599 |  | 2 | 2019-06-24T06:39:16Z | 2019-06-24T16:36:35Z | 2019-06-24T16:29:43Z | OWNER |  | https://datasette.readthedocs.io/en/stable/full_text_search.html already has a section about csvs-to-sqlite, sqlite-utils is even more relevant. | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/525/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 459587155 | MDExOlB1bGxSZXF1ZXN0MjkwODk3MTA0 | 518 | Port Datasette from Sanic to ASGI + Uvicorn | simonw 9599 | closed | 0 | simonw 9599 | Datasette 1.0 3268330 | 12 | 2019-06-23T15:18:42Z | 2019-06-24T13:42:50Z | 2019-06-24T03:13:09Z | OWNER | simonw/datasette/pulls/518 | Most of the code here was fleshed out in comments on #272 (Port Datasette to ASGI) - this pull request will track the final pieces: | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/518/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
| 324188953 | MDU6SXNzdWUzMjQxODg5NTM= | 272 | Port Datasette to ASGI | simonw 9599 | closed | 0 | simonw 9599 | Datasette 1.0 3268330 | 42 | 2018-05-17T21:16:32Z | 2019-06-24T04:54:15Z | 2019-06-24T03:33:06Z | OWNER |  | Datasette doesn't take much advantage of Sanic, and I'm increasingly having to work around parts of it because of idiosyncrasies that are specific to Datasette - caring about the exact order of querystring arguments for example. Since Datasette is GET-only our needs from a web framework are actually pretty slim. This becomes more important as I expand the plugins #14 framework. Am I sure I want the plugin ecosystem to depend on a Sanic if I might move away from it in the future? If Datasette wasn't all about async/await I would use WSGI, but today it makes more sense to use ASGI. I'd like to be confident that switching to ASGI would still give me the excellent performance that Sanic provides. https://github.com/django/asgiref/blob/master/specs/asgi.rst | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/272/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 309471814 | MDU6SXNzdWUzMDk0NzE4MTQ= | 189 | Ability to sort (and paginate) by column | simonw 9599 | closed | 0 | simonw 9599 |  | 31 | 2018-03-28T18:04:51Z | 2018-04-15T18:54:22Z | 2018-04-09T05:16:02Z | OWNER |  | As requested in https://github.com/simonw/datasette/issues/185#issuecomment-376614973 I've previously avoided this for performance reasons: sort-by-column on a column without an index is likely to perform badly for hundreds of thousands of rows. That's not a good enough reason to avoid the feature entirely though. A few options: We already have the mechanism in place to cut off SQL queries that take more than X seconds, so if someone DOES try to sort by a column that's too expensive it won't actually hurt anything - but it would be nice to not show people a "sort" option which is guaranteed to throw a timeout error. The vast majority of datasette usage that I've seen so far is on smaller datasets where the performance penalties of sort-by-column are extremely unlikely to show up. Still left to do: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/189/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
| 267759136 | MDU6SXNzdWUyNjc3NTkxMzY= | 20 | Config file with support for defining canned queries | simonw 9599 | closed | 0 | simonw 9599 | Custom templates edition 2949431 | 9 | 2017-10-23T17:53:06Z | 2017-12-05T19:05:35Z | 2017-12-05T17:44:09Z | OWNER |  | Probably using YAML because then we get support for multiline strings: | datasette 107914493 | issue |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/20/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} |  | completed |
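
The `reactions` column in the table above holds a JSON object stored as text. As a minimal sketch, the value could be decoded with Python's standard `json` module to keep only the non-zero reaction counts. The helper name `reaction_counts` is hypothetical, and the sample string is the value shown for issue 509.

```python
import json

# Sample value copied from the `reactions` cell of issue 509 in the table.
reactions_cell = (
    '{"url": "https://api.github.com/repos/simonw/datasette/issues/509/reactions", '
    '"total_count": 2, "+1": 2, "-1": 0, "laugh": 0, "hooray": 0, '
    '"confused": 0, "heart": 0, "rocket": 0, "eyes": 0}'
)


def reaction_counts(cell: str) -> dict:
    """Return only the reaction types with a non-zero count.

    Hypothetical helper: drops the metadata keys `url` and `total_count`
    and any reaction whose count is zero.
    """
    data = json.loads(cell)
    return {
        name: count
        for name, count in data.items()
        if name not in ("url", "total_count") and count
    }


counts = reaction_counts(reactions_cell)
print(counts)
```

For most rows in this listing every count is zero, so the helper would return an empty dictionary.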

Advanced export

JSON shape: default, array, newline-delimited, object

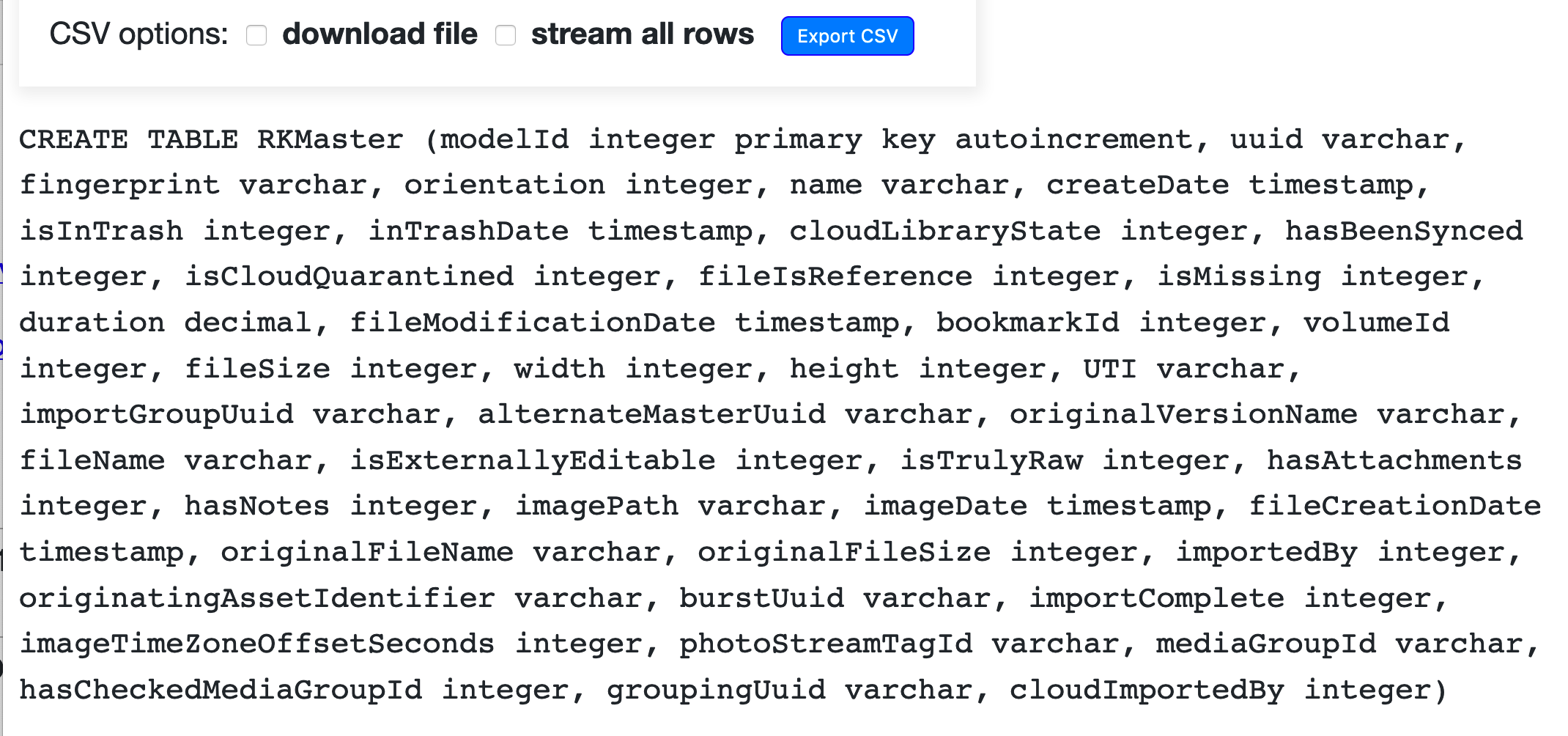

CREATE TABLE [issues] (
   [id] INTEGER PRIMARY KEY,
   [node_id] TEXT,
   [number] INTEGER,
   [title] TEXT,
   [user] INTEGER REFERENCES [users]([id]),
   [state] TEXT,
   [locked] INTEGER,
   [assignee] INTEGER REFERENCES [users]([id]),
   [milestone] INTEGER REFERENCES [milestones]([id]),
   [comments] INTEGER,
   [created_at] TEXT,
   [updated_at] TEXT,
   [closed_at] TEXT,
   [author_association] TEXT,
   [pull_request] TEXT,
   [body] TEXT,
   [repo] INTEGER REFERENCES [repos]([id]),
   [type] TEXT,
   [active_lock_reason] TEXT,
   [performed_via_github_app] TEXT,
   [reactions] TEXT,
   [draft] INTEGER,
   [state_reason] TEXT
);
CREATE INDEX [idx_issues_repo]
    ON [issues] ([repo]);
CREATE INDEX [idx_issues_milestone]
    ON [issues] ([milestone]);
CREATE INDEX [idx_issues_assignee]
    ON [issues] ([assignee]);
CREATE INDEX [idx_issues_user]
    ON [issues] ([user]);