issues

555 rows where repo = 107914493 and state = "open" sorted by type descending

| id | node_id | number | title | user | state | locked | assignee | milestone | comments | created_at | updated_at | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 355299310 | MDExOlB1bGxSZXF1ZXN0MjExODYwNzA2 | 363 | Search all apps during heroku publish | kevboh 436032 | open | 0 |  |  | 1 | 2018-08-29T19:25:10Z | 2018-08-31T14:39:45Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/363 | Adds the | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/363/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 359075028 | MDExOlB1bGxSZXF1ZXN0MjE0NjUzNjQx | 364 | Support for other types of databases using external connectors | jsancho-gpl 11912854 | open | 0 |  |  | 0 | 2018-09-11T14:31:47Z | 2018-09-11T14:31:47Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/364 | This PR is related to #293, but now all commits have been merged. The purpose is to support other file formats that aren't SQLite, like files with PyTables format. I've tried to accomplish that using external connectors published with entry points. The modifications in the original datasette code are minimal and many are in a separate file. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/364/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 440325850 | MDExOlB1bGxSZXF1ZXN0Mjc1OTIzMDY2 | 452 | SQL builder utility classes | russss 45057 | open | 0 |  |  | 0 | 2019-05-04T13:57:47Z | 2019-05-04T14:03:04Z |  | CONTRIBUTOR | simonw/datasette/pulls/452 | This adds a straightforward set of classes to aid in the construction of SQL queries. My plan for this was to allow plugins to manipulate the Datasette-generated SQL in a more structured way. I'm not sure that's going to work, but I feel like this is still a step forward - it reduces the number of intermediate variables in There are a fair number of minor structure changes in here too as I've tried to make the ordering of | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/452/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 464987783 | MDExOlB1bGxSZXF1ZXN0Mjk1MTI3MjEz | 546 | Facet by delimiter | simonw 9599 | open | 0 |  |  | 2 | 2019-07-07T20:06:05Z | 2019-11-18T23:46:01Z |  | OWNER | simonw/datasette/pulls/546 | Refs #510 | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/546/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 473288428 | MDExOlB1bGxSZXF1ZXN0MzAxNDgzNjEz | 564 | First proof-of-concept of Datasette Library | simonw 9599 | open | 0 |  |  | 1 | 2019-07-26T10:22:26Z | 2023-02-07T15:14:11Z |  | OWNER | simonw/datasette/pulls/564 | Refs #417. Run it like this: Uses a new plugin hook - available_databases() | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/564/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 1 |  |

| 501773982 | MDExOlB1bGxSZXF1ZXN0MzIzOTgzNzMy | 579 | New connection pooling | simonw 9599 | open | 0 |  |  | 1 | 2019-10-02T23:22:19Z | 2019-11-15T22:57:21Z |  | OWNER | simonw/datasette/pulls/579 | See #569 | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/579/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 565064079 | MDExOlB1bGxSZXF1ZXN0Mzc1MTgwODMy | 672 | --dirs option for scanning directories for SQLite databases | simonw 9599 | open | 0 |  |  | 15 | 2020-02-14T02:25:52Z | 2020-03-27T01:03:53Z |  | OWNER | simonw/datasette/pulls/672 | Refs #417. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/672/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 646448486 | MDExOlB1bGxSZXF1ZXN0NDQwNzM1ODE0 | 868 | initial windows ci setup | joshmgrant 702729 | open | 0 |  |  | 3 | 2020-06-26T18:49:13Z | 2021-07-10T23:41:43Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/868 | Picking up the work done on #557 with a new PR. Seeing if I can get this working. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/868/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 648749062 | MDExOlB1bGxSZXF1ZXN0NDQyNTA1MDg4 | 883 | Skip counting hidden tables | abdusco 3243482 | open | 0 |  |  | 4 | 2020-07-01T07:38:08Z | 2020-07-02T00:25:44Z |  | CONTRIBUTOR | simonw/datasette/pulls/883 | Potential fix for https://github.com/simonw/datasette/issues/859. Disabling table counts for hidden tables speeds up the database page quite a bit. In my setup it reduced load time by about 2/3 (~300ms -> ~90ms). | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/883/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 718395987 | MDExOlB1bGxSZXF1ZXN0NTAwNzk4MDkx | 1008 | Add json_loads and json_dumps jinja2 filters | mhalle 649467 | open | 0 |  |  | 1 | 2020-10-09T20:11:34Z | 2020-12-15T02:30:28Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1008 |  | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1008/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 723982480 | MDExOlB1bGxSZXF1ZXN0NTA1NDUzOTAw | 1030 | Make `package` command deal with a configuration directory argument | frankier 299380 | open | 0 |  |  | 1 | 2020-10-18T11:07:02Z | 2020-10-19T08:01:51Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1030 | Currently if we run | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1030/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 756876238 | MDExOlB1bGxSZXF1ZXN0NTMyMzQ4OTE5 | 1130 | Fix footer not sticking to bottom in short pages | abdusco 3243482 | open | 0 |  |  | 4 | 2020-12-04T07:29:01Z | 2021-06-15T13:27:48Z |  | CONTRIBUTOR | simonw/datasette/pulls/1130 |  | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1130/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 774332247 | MDExOlB1bGxSZXF1ZXN0NTQ1MjY0NDM2 | 1159 | Improve the display of facets information | lovasoa 552629 | open | 0 |  | Datasette 1.0 3268330 | 9 | 2020-12-24T11:01:47Z | 2023-07-31T18:57:59Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1159 | This PR changes the display of facets to hopefully make them more readable. (Before/After screenshots not preserved.) | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1159/reactions", "total_count": 4, "+1": 4, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 793002853 | MDExOlB1bGxSZXF1ZXN0NTYwNzYwMTQ1 | 1204 | WIP: Plugin includes | simonw 9599 | open | 0 |  |  | 3 | 2021-01-25T03:59:06Z | 2021-12-17T07:10:49Z |  | OWNER | simonw/datasette/pulls/1204 | Refs #1191 Next steps: | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1204/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 1 |  |

| 837956424 | MDExOlB1bGxSZXF1ZXN0NTk4MjEzNTY1 | 1271 | Use SQLite conn.interrupt() instead of sqlite_timelimit() | simonw 9599 | open | 0 |  |  | 3 | 2021-03-22T17:34:20Z | 2021-03-22T21:49:27Z |  | OWNER | simonw/datasette/pulls/1271 | Refs #1270, #1268, #1249 Before merging this I need to do some more testing (to make sure that expensive queries really are properly cancelled). I also need to delete a bunch of code relating to the old mechanism of cancelling queries. [See comment below: this doesn't actually cancel the query due to a thread-local confusion] | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1271/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 1 |  |
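PR #1271 proposes cancelling long-running queries with SQLite's interrupt mechanism rather than Datasette's `sqlite_timelimit()`. The sketch below uses only Python's standard-library `sqlite3` module, not Datasette's internals: a watchdog thread calls `Connection.interrupt()` on the connection once a deadline passes, and the running statement fails with `sqlite3.OperationalError`. The helper name `run_with_time_limit` is hypothetical. It also illustrates the PR's caveat: `interrupt()` only affects the connection object it is called on, so the watchdog must hold a reference to the very connection that is executing the query.

```python
import sqlite3
import threading
import time


def run_with_time_limit(conn, sql, seconds):
    """Hypothetical helper: run `sql` on `conn`, interrupting it after `seconds`."""
    done = threading.Event()

    def watchdog():
        # Return quietly if the query finishes before the deadline.
        if done.wait(seconds):
            return
        # Keep interrupting until the main thread reports completion, in case a
        # single interrupt lands just before the statement starts stepping.
        while not done.is_set():
            conn.interrupt()
            time.sleep(0.01)

    thread = threading.Thread(target=watchdog, daemon=True)
    thread.start()
    try:
        return conn.execute(sql).fetchall()
    finally:
        done.set()
        thread.join()


# An unbounded recursive CTE that never finishes on its own.
endless = """
with recursive counter(x) as (select 1 union all select x + 1 from counter)
select count(*) from counter
"""

conn = sqlite3.connect(":memory:", check_same_thread=False)
try:
    run_with_time_limit(conn, endless, 0.1)
except sqlite3.OperationalError as exc:
    print("cancelled:", exc)

# The same connection is still usable after the interrupted query.
print(run_with_time_limit(conn, "select 1 + 1", 1.0))
```

Python's `sqlite3` releases the GIL while a statement is stepping, which is what lets the watchdog thread run and call `interrupt()` while `execute()` is still blocked.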

| 855446829 | MDExOlB1bGxSZXF1ZXN0NjEzMTc4OTY4 | 1296 | Dockerfile: use Ubuntu 20.10 as base | tmcl-it 82332573 | open | 0 |  |  | 4 | 2021-04-12T00:23:32Z | 2021-07-20T08:52:13Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1296 | This PR changes the main Dockerfile to use ubuntu:20.10 as the base image instead of python:3.9.2-slim-buster (itself based on debian:buster-slim). The Dockerfile is essentially the one from https://github.com/simonw/datasette/issues/1249#issuecomment-803698983 with some additional cleanups to slim it down. This fixes a couple of issues:
1. The SQLite version in Debian Buster (2.6.0) doesn't support generated columns.
2. Installing SpatiaLite from the Debian sid repositories has the side effect of also installing updates to libc and libstdc++ from sid.
As a bonus, the Docker image becomes smaller:
Reproduction of the first issue: `$ curl -O https://latest.datasette.io/fixtures.db` `$ docker run -v` Here is the SQLite version:
Reproduction of the second issue
Both libc and libstdc++ are backwards compatible, so the image still works, but it will result in a combination of libraries and Python versions that exists only in the Datasette image, so it's likely untested. In addition, since Debian sid is an always-changing rolling release, the versions of libc, libstdc++, SpatiaLite, and their dependencies change frequently, so the library versions in the Datasette image will depend on the day when it was built. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1296/reactions", "total_count": 1, "+1": 1, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
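Generated columns were added in SQLite 3.31.0, so one way to check whether an image is affected by the first issue in PR #1296 is to compare the linked SQLite library version with that minimum, or simply try to create a table with a generated column. A small sketch using Python's standard `sqlite3` module; both helper names are hypothetical. Note that `sqlite3.sqlite_version` reports the SQLite library, whereas `sqlite3.version` reports the Python DB-API module itself ("2.6.0" on the Python 3 releases that still expose it), so the "2.6.0" quoted in the PR is most likely the module version rather than Buster's SQLite.

```python
import sqlite3

# Generated columns first appeared in SQLite 3.31.0.
GENERATED_COLUMNS_MIN = (3, 31, 0)


def supports_generated_columns(version_info=sqlite3.sqlite_version_info):
    """Hypothetical helper: True if this SQLite version has generated columns."""
    return tuple(version_info) >= GENERATED_COLUMNS_MIN


def probe_generated_columns():
    """Hypothetical helper: detect support by actually trying the syntax."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "create table t (a integer, b integer generated always as (a * 2))"
        )
        return True
    except sqlite3.OperationalError:
        return False
    finally:
        conn.close()


print("SQLite library version:", sqlite3.sqlite_version)
print("by version number:", supports_generated_columns())
print("by probing:", probe_generated_columns())
```

The probe is the more reliable check, since it also reflects compile-time options that a version comparison cannot see.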

| 904598267 | MDExOlB1bGxSZXF1ZXN0NjU1NzQxNDI4 | 1348 | DRAFT: add test and scan for docker images | blairdrummond 10801138 | open | 0 |  |  | 2 | 2021-05-28T03:02:12Z | 2021-05-28T03:06:16Z |  | CONTRIBUTOR | simonw/datasette/pulls/1348 | NOTE: I don't think this PR is ready, since the arm/v6 and arm/v7 images are failing pytest due to missing dependencies (gcc and friends). But it's pretty close. Closes https://github.com/simonw/datasette/issues/1344. Using a build matrix for the platforms and this test, we test all the platforms in parallel. I also threw in container scanning. Switch | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1348/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 947596222 | MDExOlB1bGxSZXF1ZXN0NjkyNTU3Mzgx | 1399 | Multiple sort | jgryko5 87192257 | open | 0 |  |  | 0 | 2021-07-19T12:20:14Z | 2021-07-19T12:20:14Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1399 | Closes #197. I have added support for sorting by multiple parameters as mentioned in the issue above, and together with that, a suggestion on how to implement such sorting in the user interface. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1399/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 970463436 | MDExOlB1bGxSZXF1ZXN0NzEyNDEyODgz | 1434 | Enrich arbitrary query results with foreign key links and column descriptions | simonw 9599 | open | 0 |  |  | 1 | 2021-08-13T14:43:01Z | 2021-08-19T21:18:58Z |  | OWNER | simonw/datasette/pulls/1434 | Refs #1293, follows #942. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1434/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 991206402 | MDExOlB1bGxSZXF1ZXN0NzI5NzA0NTM3 | 1465 | add support for -o --get /path | ctb 51016 | open | 0 |  |  | 0 | 2021-09-08T14:30:42Z | 2021-09-08T14:31:45Z |  | CONTRIBUTOR | simonw/datasette/pulls/1465 | Fixes https://github.com/simonw/datasette/issues/1459 Adds support for If TODO items:
- [ ] update documentation
- [ ] print out error message when note, '@CTB' is used in this PR to flag code that needs revisiting. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1465/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 1 |  |

| 1001104942 | PR_kwDOBm6k_c4r-EVH | 1475 | feat: allow joins using _through in both directions | bram2000 5268174 | open | 0 |  |  | 0 | 2021-09-20T15:28:20Z | 2021-09-20T15:28:20Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1475 | Currently the This is an admittedly hacky change to implement bidirectional joins using | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1475/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1033678984 | PR_kwDOBm6k_c4tjgJ8 | 1495 | Allow routes to have extra options | fgregg 536941 | open | 0 |  |  | 5 | 2021-10-22T15:00:45Z | 2021-11-19T15:36:27Z |  | CONTRIBUTOR | simonw/datasette/pulls/1495 | Right now, datasette routes can only be a 2-tuple of If it were possible for datasette to handle extra options, like standard Django does, it would add flexibility for plugin authors. For example, if extra options were enabled, then it would be easy to make a single table the home page (#1284). This plugin would accomplish it:
```python
from datasette import hookimpl
from datasette.views.table import TableView


@hookimpl
def register_routes(datasette):
    # The third element is the proposed "extra options" dict, handed to
    # the view alongside the URL match (similar to Django's extra kwargs).
    return [
        (r"^/$", TableView.as_view(datasette), {'db_name': 'DB_NAME', 'table': 'TABLE_NAME'})
    ]
```
| datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1495/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1122451096 | PR_kwDOBm6k_c4x_mXy | 1626 | Try test suite against macOS and Windows | simonw 9599 | open | 0 |  |  | 3 | 2022-02-02T22:26:51Z | 2022-02-03T01:22:44Z |  | OWNER | simonw/datasette/pulls/1626 | Refs #1625 | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1626/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1268121674 | PR_kwDOBm6k_c45fz-O | 1757 | feat: add a wildcard for _json columns | ytjohn 163156 | open | 0 |  |  | 1 | 2022-06-11T01:01:17Z | 2022-09-06T00:51:21Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1757 | This allows `_json` to accept a wildcard for when there are many JSON columns that the user wants to convert. I hope this is useful. I've tested it on our datasette and haven't run into any issues. I imagine on a large set of results there could be some performance issues, but they will probably be negligible for most use cases. On a side note, I ran into an issue where I had to upgrade black on my system beyond the pinned version in setup.py. Here is the upstream issue: https://github.com/psf/black/issues/2964. I didn't include this in the PR yet since I didn't look into the issue too far, but I can if you would like. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1757/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
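To make the idea in PR #1757 concrete, here is a minimal sketch of what a `_json` wildcard could mean: the listed columns have their string values decoded as JSON, and `"*"` means "try every column". The function `expand_json_columns` is hypothetical and does not reproduce the PR's actual code or Datasette's row-rendering pipeline; it just operates on plain dicts.

```python
import json


def expand_json_columns(rows, json_cols):
    """Hypothetical sketch: decode JSON text in the requested columns.

    `json_cols` mirrors the values of a `_json=` parameter; "*" means
    "attempt every column".
    """
    wildcard = "*" in json_cols
    expanded = []
    for row in rows:
        new_row = dict(row)  # leave the caller's rows untouched
        for column, value in row.items():
            if not (wildcard or column in json_cols):
                continue
            if not isinstance(value, str):
                continue  # only text values can hold JSON
            try:
                new_row[column] = json.loads(value)
            except ValueError:
                pass  # not valid JSON: keep the original string
        expanded.append(new_row)
    return expanded


rows = [{"id": 1, "tags": '["a", "b"]', "meta": '{"k": 1}', "name": "plain text"}]
print(expand_json_columns(rows, ["tags"]))
print(expand_json_columns(rows, ["*"]))
```

One design consequence of a wildcard is that any string which happens to be valid JSON gets converted: `expand_json_columns([{"code": "42"}], ["*"])` returns `[{"code": 42}]`, turning text into an integer. Trying `json.loads` on every value is also where the per-row cost the PR mentions would come from.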

| 1307359454 | PR_kwDOBm6k_c47iWbd | 1772 | Convert to setup.cfg | kfdm 89725 | open | 0 |  |  | 0 | 2022-07-18T03:39:53Z | 2022-07-18T03:39:53Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1772 | Recent versions of setuptools can run most things from setup.cfg so one can have a simpler version that does not require executing code on install. The bulk of the changes were automated by running https://pypi.org/project/setup-py-upgrade/ with a few minor edits for the bits that it can not auto convert (the initial | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1772/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1386917344 | PR_kwDOBm6k_c4_prjN | 1823 | Keyword-only arguments for a bunch of internal methods | simonw 9599 | open | 0 |  |  | 3 | 2022-09-27T00:44:59Z | 2022-10-05T04:37:54Z |  | OWNER | simonw/datasette/pulls/1823 | Refs #1822 :books: Documentation preview :books:: https://datasette--1823.org.readthedocs.build/en/1823/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1823/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1426379903 | PR_kwDOBm6k_c5BtJNn | 1870 | don't use immutable=1, only mode=ro | fgregg 536941 | open | 0 |  |  | 7 | 2022-10-27T23:33:04Z | 2023-10-03T19:12:37Z |  | CONTRIBUTOR | simonw/datasette/pulls/1870 | Opening db files in immutable mode sometimes leads to the file being mutated, which causes duplication in the docker image layers: see #1836, #1480. That this happens in "immutable" mode is surprising, because the SQLite docs say that setting this should open the database as read only. https://www.sqlite.org/c3ref/open.html Perhaps this is a bug in SQLite? :books: Documentation preview :books:: https://datasette--1870.org.readthedocs.build/en/1870/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1870/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
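For context on PR #1870: both options are SQLite URI query parameters. `mode=ro` opens the file without write access but keeps normal locking, so changes made by other processes are still noticed; `immutable=1` additionally tells SQLite the file can never change, so it opens read-only and disables locking and change detection. A minimal sketch with Python's standard `sqlite3` module; the `open_read_only` helper is hypothetical and is not how Datasette builds its connection strings.

```python
import sqlite3
import tempfile
from pathlib import Path


def open_read_only(path, immutable=False):
    """Hypothetical helper: open an SQLite file read-only via a URI filename."""
    uri = Path(path).resolve().as_uri() + "?mode=ro"
    if immutable:
        # Promise SQLite the file never changes: no locking, no change detection.
        uri += "&immutable=1"
    return sqlite3.connect(uri, uri=True)


with tempfile.TemporaryDirectory() as tmp:
    db_path = Path(tmp) / "demo.db"

    # Create the database with an ordinary read-write connection first.
    writer = sqlite3.connect(db_path)
    writer.execute("create table t (x integer)")
    writer.execute("insert into t values (1)")
    writer.commit()
    writer.close()

    reader = open_read_only(db_path)
    print(reader.execute("select x from t").fetchall())
    try:
        reader.execute("insert into t values (2)")
    except sqlite3.OperationalError as exc:
        print("write refused:", exc)
    reader.close()
```

Because `immutable=1` skips change detection, it is only safe when nothing else can modify the file while it is open; `mode=ro` is the more conservative of the two.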

| 1538342965 | PR_kwDOBm6k_c5HpNYo | 1996 | Document custom json encoder | eyeseast 25778 | open | 0 |  |  | 1 | 2023-01-18T16:54:14Z | 2023-01-19T12:55:57Z |  | CONTRIBUTOR | simonw/datasette/pulls/1996 | Closes #1983 All documentation here. Edits welcome. :books: Documentation preview :books:: https://datasette--1996.org.readthedocs.build/en/1996/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/1996/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1555701851 | PR_kwDOBm6k_c5IdsD7 | 2003 | Show referring tables and rows when the referring foreign key is compound | fgregg 536941 | open | 0 |  |  | 3 | 2023-01-24T21:31:31Z | 2023-01-25T18:44:42Z |  | CONTRIBUTOR | simonw/datasette/pulls/2003 | SQLite foreign keys can be compound, but that is not as well supported by Datasette as single-column foreign keys. In particular,
Both of these issues are discussed in #1099. This PR only fixes the second one, because it's not clear what the right UX is for the first issue.
Some things that might not be desirable about this approach. | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2003/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1556065335 | PR_kwDOBm6k_c5Ie5nA | 2004 | use single quotes for string literals, fixes #2001 | cldellow 193185 | open | 0 |  |  | 1 | 2023-01-25T05:08:46Z | 2023-02-01T06:37:18Z |  | CONTRIBUTOR | simonw/datasette/pulls/2004 | This modernizes some uses of double quotes for string literals to use only single quotes, fixes simonw/datasette#2001. While developing it, I manually enabled the stricter mode by using the code snippet at https://gist.github.com/cldellow/85bba507c314b127f85563869cd94820. I think that code snippet isn't generally safe/portable, so I haven't tried to automate it in the tests. :books: Documentation preview :books:: https://datasette--2004.org.readthedocs.build/en/2004/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2004/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
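Why PR #2004 matters: in SQLite, a double-quoted token is an identifier first and only falls back to being a string literal when no matching identifier exists (a legacy behaviour that stricter builds can switch off). The sketch below, using Python's standard `sqlite3` module and a made-up `people` table, shows the same comparison silently changing meaning once a column with that name exists.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("create table people (name text)")
conn.executemany(
    "insert into people values (?)", [("name",), ("alice",), ("bob",)]
)

# Single quotes always denote a string literal: only the row holding 'name'.
single = conn.execute(
    "select name from people where name = 'name' order by rowid"
).fetchall()

# Double quotes resolve to the column `name`, so the predicate compares the
# column with itself and is true for every non-null row.
double = conn.execute(
    'select name from people where name = "name" order by rowid'
).fetchall()

print(single)
print(double)
```

Using single quotes for every literal removes this dependence on which columns happen to exist, which is also what lets the SQL run under a build with double-quoted string literals disabled.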

| 1560982210 | PR_kwDOBm6k_c5IvYKw | 2008 | array facet: don't materialize unnecessary columns | cldellow 193185 | open | 0 |  |  | 8 | 2023-01-28T19:33:40Z | 2023-01-29T18:17:40Z |  | CONTRIBUTOR | simonw/datasette/pulls/2008 | The presence of Instead, we can select only the column we'll use. This lets SQLite's optimizer realize that the other columns in the CTE definition aren't needed. On a test table with 278K rows, 98K of which had an array, this speeds up the facet calculation from 4 sec to 1 sec. :books: Documentation preview :books:: https://datasette--2008.org.readthedocs.build/en/2008/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2008/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
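The optimisation in PR #2008 can be illustrated with a simplified array-facet query: the inner CTE selects only a row identifier and the faceted column, and `json_each()` expands each array so values can be counted. This is a sketch under stated assumptions, not Datasette's actual facet SQL: the `items` table and its columns are made up, and it assumes SQLite's JSON functions are available (built in since SQLite 3.38.0; older builds need the JSON1 extension compiled in).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "create table items (id integer primary key, title text, body text, tags text)"
)
wide_text = "lorem ipsum " * 100  # stand-in for bulky columns the facet ignores
conn.executemany(
    "insert into items (title, body, tags) values (?, ?, ?)",
    [
        ("one", wide_text, '["a", "b"]'),
        ("two", wide_text, '["a"]'),
        ("three", wide_text, '["b", "b", "c"]'),
        ("four", wide_text, None),
    ],
)

# The CTE carries only `id` and `tags`, so title/body never need to be
# materialized. count(distinct ...) counts each row once per value, even
# when an array repeats a value.
facet_sql = """
with inner_rows as (
    select id, tags from items
    where json_type(tags) = 'array'
)
select j.value as value, count(distinct inner_rows.id) as n
from inner_rows, json_each(inner_rows.tags) as j
group by j.value
order by n desc, value
"""

print(conn.execute(facet_sql).fetchall())
```

Rows whose `tags` is NULL drop out because `json_type(NULL)` is NULL, so the `where` clause is not satisfied.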

| 1581218043 | PR_kwDOBm6k_c5JyqPy | 2025 | Add database metadata to index.html template context | palewire 9993 | open | 0 |  |  | 0 | 2023-02-12T11:16:58Z | 2023-02-12T11:17:14Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2025 | Fixes #2016 :books: Documentation preview :books:: https://datasette--2025.org.readthedocs.build/en/2025/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2025/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1586980089 | PR_kwDOBm6k_c5KF-by | 2026 | Avoid repeating primary key columns if included in _col args | runderwood 8513 | open | 0 |  |  | 0 | 2023-02-16T04:16:25Z | 2023-02-16T04:16:41Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2026 | ...while maintaining given order. Fixes #1975 (if I'm understanding correctly). :books: Documentation preview :books:: https://datasette--2026.org.readthedocs.build/en/2026/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2026/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
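The fix described in PR #2026 amounts to an order-preserving de-duplication of the selected columns. A minimal sketch, assuming primary keys are always placed first and the requested `_col` order is kept for everything else; `columns_to_select` is a hypothetical name and the PR's real implementation may differ.

```python
def columns_to_select(primary_keys, requested):
    """Hypothetical helper: primary keys first, then requested columns,
    with no column repeated and first-seen order preserved."""
    seen = set()
    ordered = []
    for column in [*primary_keys, *requested]:
        if column not in seen:
            seen.add(column)
            ordered.append(column)
    return ordered


print(columns_to_select(["id"], ["name", "id", "age"]))
```

A `set` plus a list keeps the check O(1) per column while still preserving order; `list(dict.fromkeys(...))` would give the same result in one line.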

| 1605481359 | PR_kwDOBm6k_c5LDwrF | 2031 | Expand foreign key references in row view as well | tmcl-it 82332573 | open | 0 |  |  | 5 | 2023-03-01T18:43:09Z | 2023-03-24T18:35:25Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2031 | Unlike the table view, the single row view does not resolve foreign key references into labels. This patch extracts the foreign key reference expansion code from TableView.data() into a standalone function that is then called by both TableView.data() and RowView.data(). :books: Documentation preview :books:: https://datasette--2031.org.readthedocs.build/en/2031/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2031/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1613974869 | PR_kwDOBm6k_c5LgPS- | 2034 | remove an unused `app` var in cli.py | wenhoujx 4370201 | open | 0 |  |  | 2 | 2023-03-07T18:19:05Z | 2023-03-29T20:56:20Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2034 | this var Feel free to ignore this PR if the deleted line actually does something. :books: Documentation preview :books:: https://datasette--2034.org.readthedocs.build/en/2034/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2034/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1639873822 | PR_kwDOBm6k_c5M29tt | 2044 | Expand labels in row view as well (patch for 0.64.x branch) | tmcl-it 82332573 | open | 0 |  |  | 0 | 2023-03-24T18:44:44Z | 2023-03-24T18:44:57Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2044 | This is a version of #2031 for the 0.64.x branch. :books: Documentation preview :books:: https://datasette--2044.org.readthedocs.build/en/2044/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2044/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

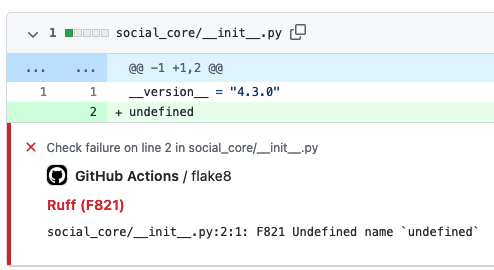

| 1661860507 | PR_kwDOBm6k_c5N_bMw | 2056 | GitHub Action to lint Python code with ruff | cclauss 3709715 | open | 0 |  |  | 6 | 2023-04-11T06:41:27Z | 2023-04-15T14:24:46Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2056 | Ruff supports over 500 lint rules and can be used to replace Flake8 (plus dozens of plugins), isort, pydocstyle, yesqa, eradicate, pyupgrade, and autoflake, all while executing (in Rust) tens or hundreds of times faster than any individual tool. The ruff Action uses minimal steps to run in ~5 seconds, rapidly providing intuitive GitHub Annotations to contributors. :books: Documentation preview :books:: https://datasette--2056.org.readthedocs.build/en/2056/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2056/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1674322631 | PR_kwDOBm6k_c5OpEz_ | 2061 | Add "Packaging a plugin using Poetry" section in docs | rclement 1238873 | open | 0 |  |  | 0 | 2023-04-19T07:23:28Z | 2023-04-19T07:27:18Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2061 | This PR adds a new section about packaging a plugin using :books: Documentation preview :books:: https://datasette--2061.org.readthedocs.build/en/2061/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2061/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1708981860 | PR_kwDOBm6k_c5QdMea | 2074 | sort files by mtime | abbbi 3919561 | open | 0 |  |  | 0 | 2023-05-14T15:25:15Z | 2023-05-14T15:25:29Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2074 | Serving multiple database files and getting tired of the default sort: this changes the sort order so the most recently changed databases are at the top of the list and I don't have to scroll down, lazy as I am ;) :books: Documentation preview :books:: https://datasette--2074.org.readthedocs.build/en/2074/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2074/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |
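The ordering PR #2074 asks for, newest database files first, can be expressed as a sort on each file's modification time. A minimal standard-library sketch; `newest_first` is a hypothetical helper and says nothing about where Datasette itself would apply the sort.

```python
import os


def newest_first(paths):
    """Hypothetical helper: most recently modified files first."""
    # sorted() is stable, so files with identical mtimes keep their input order.
    return sorted(paths, key=os.path.getmtime, reverse=True)
```

For example, given `["a.db", "b.db"]` where `b.db` was written more recently, `newest_first` returns `["b.db", "a.db"]`. Modification time reflects the last write to the main database file, so changes still sitting in a WAL file may not be reflected until a checkpoint.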

| 1715468032 | PR_kwDOBm6k_c5QzEAM | 2076 | Datsette gpt plugin | StudioCordillera 130708713 | open | 0 |  |  | 0 | 2023-05-18T11:22:30Z | 2023-05-18T11:22:45Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2076 | :books: Documentation preview :books:: https://datasette--2076.org.readthedocs.build/en/2076/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2076/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1734786661 | PR_kwDOBm6k_c5R0fcK | 2082 | Catch query interrupted on facet suggest row count | redraw 10843208 | open | 0 |  |  | 0 | 2023-05-31T18:42:46Z | 2023-05-31T18:45:26Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2082 | Just like facet's I've included :books: Documentation preview :books:: https://datasette--2082.org.readthedocs.build/en/2082/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2082/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1794604602 | PR_kwDOBm6k_c5U-akg | 2096 | Clarify docs for descriptions in metadata | garthk 15906 | open | 0 |  |  | 0 | 2023-07-08T01:57:58Z | 2023-07-08T01:58:13Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2096 | G'day! I got confused while debugging earlier today. That's on me, but it does strike me that a little repetition in the metadata documentation might help those flicking around it rather than reading it from top to bottom. No worries if you think otherwise. :books: Documentation preview :books:: https://datasette--2096.org.readthedocs.build/en/2096/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2096/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1802613340 | PR_kwDOBm6k_c5VZhfw | 2100 | Make primary key view accessible to render_cell hook | meowcat 1563881 | open | 0 |  |  | 0 | 2023-07-13T09:30:36Z | 2023-08-10T13:15:41Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2100 | :books: Documentation preview :books:: https://datasette--2100.org.readthedocs.build/en/2100/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2100/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1864112887 | PR_kwDOBm6k_c5Yo7bk | 2151 | Test Datasette on multiple SQLite versions | asg017 15178711 | open | 0 |  |  | 1 | 2023-08-23T22:42:51Z | 2023-08-23T22:58:13Z |  | CONTRIBUTOR | simonw/datasette/pulls/2151 | still testing, hope it works! :books: Documentation preview :books:: https://datasette--2151.org.readthedocs.build/en/2151/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2151/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 1 |  |

| 1865572575 | PR_kwDOBm6k_c5Yt2eO | 2155 | Fix hupper.start_reloader entry point | cadeef 79087 | open | 0 |  |  | 2 | 2023-08-24T17:14:08Z | 2023-09-27T18:44:02Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2155 | Update hupper's entry point so that click commands are processed properly. Fixes #2123 :books: Documentation preview :books:: https://datasette--2155.org.readthedocs.build/en/2155/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2155/reactions", "total_count": 2, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 2, "eyes": 0} | 0 |  |

| 1865983069 | PR_kwDOBm6k_c5YvQSi | 2158 | add brand option to metadata.json. | publicmatt 52261150 | open | 0 |  |  | 0 | 2023-08-24T22:37:41Z | 2023-08-24T22:37:57Z |  | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2158 | This adds a brand link to the top navbar if 'brand' key is populated in metadata.json. The link will be either '#' or use the contents of 'brand_url' in metadata.json for href. I was able to get this done on my own site by replacing :books: Documentation preview :books:: https://datasette--2158.org.readthedocs.build/en/2158/ | datasette 107914493 | pull |  |  | {"url": "https://api.github.com/repos/simonw/datasette/issues/2158/reactions", "total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | 0 |  |

| 1866815458 | PR_kwDOBm6k_c5YyF-C | 2159 | Implement Dark Mode colour scheme | jamietanna 3315059 | open | 0 | 0 | 2023-08-25T10:46:23Z | 2023-08-25T10:46:35Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2159 | Closes #2095. :books: Documentation preview :books:: https://datasette--2159.org.readthedocs.build/en/2159/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2159/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

1 | ||||||

| 1884330740 | PR_kwDOBm6k_c5ZszDF | 2174 | Use $DATASETTE_INTERNAL in absence of --internal | asg017 15178711 | open | 0 | 3 | 2023-09-06T16:07:15Z | 2023-09-08T00:46:13Z | CONTRIBUTOR | simonw/datasette/pulls/2174 | refs 2157, specifically this comment. Passing in This PR adds a new configurable env variable In draft mode for now, needs tests and documentation. Side note: Maybe we can have a section in the docs that lists all the "configuration environment variables" that Datasette respects? I did a quick grep and found:

:books: Documentation preview :books:: https://datasette--2174.org.readthedocs.build/en/2174/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2174/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1983600865 | PR_kwDOBm6k_c5e7WH7 | 2206 | Bump the python-packages group with 1 update | dependabot[bot] 49699333 | open | 0 | 1 | 2023-11-08T13:18:56Z | 2023-12-08T13:46:24Z | CONTRIBUTOR | simonw/datasette/pulls/2206 | Bumps the python-packages group with 1 update: black. Release notes: Sourced from black's releases.

... (truncated) Changelog: Sourced from black's changelog.

... (truncated) Commits

Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting

Dependabot commands and options: You can trigger Dependabot actions by commenting on this PR:
- `@dependabot rebase` will rebase this PR
- `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it
- `@dependabot merge` will merge this PR after your CI passes on it
- `@dependabot squash and merge` will squash and merge this PR after your CI passes on it
- `@dependabot cancel merge` will cancel a previously requested merge and block automerging
- `@dependabot reopen` will reopen this PR if it is closed
- `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually
- `@dependabot show <dependency name> ignore conditions` will show all of the ignore conditions of the specified dependency
- `@dependabot ignore <dependency name> major version` will close this group update PR and stop Dependabot creating any more for the specific dependency's major version (unless you unignore this specific dependency's major version or upgrade to it yourself)
- `@dependabot ignore <dependency name> minor version` will close this group update PR and stop Dependabot creating any more for the specific dependency's minor version (unless you unignore this specific dependency's minor version or upgrade to it yourself)
- `@dependabot ignore <dependency name>` will close this group update PR and stop Dependabot creating any more for the specific dependency (unless you unignore this specific dependency or upgrade to it yourself)
- `@dependabot unignore <dependency name>` will remove all of the ignore conditions of the specified dependency
- `@dependabot unignore <dependency name> <ignore condition>` will remove the ignore condition of the specified dependency and ignore conditions

:books: Documentation preview :books:: https://datasette--2206.org.readthedocs.build/en/2206/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2206/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

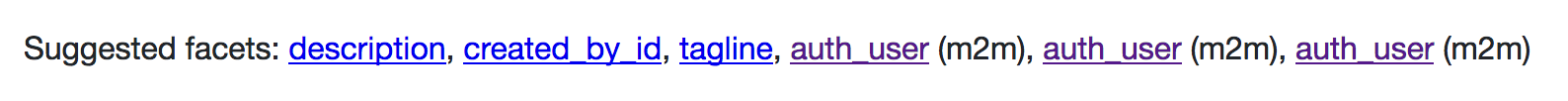

| 1994861266 | PR_kwDOBm6k_c5fhgOS | 2209 | Fix query for suggested facets with column named value | rgieseke 198537 | open | 0 | 3 | 2023-11-15T14:13:30Z | 2023-11-15T15:31:12Z | CONTRIBUTOR | simonw/datasette/pulls/2209 | See discussion in https://github.com/simonw/datasette/issues/2208 :books: Documentation preview :books:: https://datasette--2209.org.readthedocs.build/en/2209/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2209/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 267515678 | MDU6SXNzdWUyNjc1MTU2Nzg= | 3 | Make individual column valuables addressable, with smart content types | simonw 9599 | open | 0 | 1 | 2017-10-23T01:11:32Z | 2017-12-10T03:11:58Z | OWNER | Some SQLite databases embed images in columns. It would be cool if these had URLs. The one without an explicit file extension auto-detects the correct extension. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/3/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
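One way to handle the extension auto-detection described in #3 is to sniff the leading "magic number" bytes of each BLOB. The sketch below is a minimal, hypothetical helper. `sniff_blob` and its prefix table are assumptions, not Datasette code, and it covers only a handful of common formats:

```python
from __future__ import annotations

# Hypothetical prefix table: (leading bytes, file extension, content type).
MAGIC_PREFIXES = [
    (b"\x89PNG\r\n\x1a\n", "png", "image/png"),
    (b"\xff\xd8\xff", "jpg", "image/jpeg"),
    (b"GIF87a", "gif", "image/gif"),
    (b"GIF89a", "gif", "image/gif"),
    (b"%PDF-", "pdf", "application/pdf"),
]


def sniff_blob(value: bytes) -> tuple[str, str]:
    """Guess (extension, content_type) for a BLOB from its first few bytes."""
    for prefix, extension, content_type in MAGIC_PREFIXES:
        if value.startswith(prefix):
            return extension, content_type
    # Unknown formats fall back to a generic binary download.
    return "bin", "application/octet-stream"


print(sniff_blob(b"\x89PNG\r\n\x1a\n" + b"\x00" * 8))
```

With this approach, a URL without an extension could redirect to, or serve with, the sniffed extension and content type.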

| 268110769 | MDU6SXNzdWUyNjgxMTA3Njk= | 33 | Use locust for benchmarking and load tests | simonw 9599 | open | 0 | 0 | 2017-10-24T17:00:09Z | 2017-12-10T03:12:16Z | OWNER | https://github.com/locustio/locust Needed for #32 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/33/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 274615452 | MDU6SXNzdWUyNzQ2MTU0NTI= | 111 | Add “updated” to metadata | simonw 9599 | open | 0 | 12 | 2017-11-16T18:22:20Z | 2021-09-21T22:48:27Z | OWNER | To give an indication as to when the data was last updated. This should be a field in the metadata that is then shown on the index page and in the footer, if it is set. Also support setting it using an option to “datasette publish” and “datasette package” - which can either be a string or can be the magic string “today” to set it to today’s date: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/111/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
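Here is a minimal sketch of how the magic string "today" from #111 could be resolved before it is stored in metadata. `resolve_updated` and the `updated` key are illustrative assumptions, not an existing Datasette option:

```python
import datetime


def resolve_updated(value, today=None):
    """Resolve a value for a hypothetical "updated" metadata key.

    The magic string "today" becomes today's date in ISO 8601 form;
    any other string is passed through unchanged.
    """
    if value == "today":
        today = today or datetime.date.today()
        return today.isoformat()
    return value


metadata = {"title": "My data"}
# Passing an explicit date keeps the example deterministic.
metadata["updated"] = resolve_updated("today", today=datetime.date(2017, 11, 16))
print(metadata)
```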

| 275125561 | MDU6SXNzdWUyNzUxMjU1NjE= | 123 | Datasette serve should accept paths/URLs to CSVs and other file formats | simonw 9599 | open | 0 | 9 | 2017-11-19T02:05:48Z | 2021-07-19T00:04:32Z | OWNER | This would remove the csvs-to-sqlite step which I end up using for almost everything. I'm hesitant to introduce pandas as a required dependency though, since it requires compiling numpy. Could build it so this option is only available if you have pandas installed. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/123/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} |

||||||||

| 275159710 | MDU6SXNzdWUyNzUxNTk3MTA= | 128 | Every visualization should have an "embed" button | simonw 9599 | open | 0 | 0 | 2017-11-19T13:38:13Z | 2019-05-13T18:33:51Z | OWNER | At least for the first round of visualizations, any time you construct one using the UI the result should include an "embed this" button that returns source code to copy and paste. These examples should use unpkg.com (or similar) URLs with SRI hashes, e.g. https://www.srihash.org - and should load data from the datasette JSON API. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/128/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
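An SRI value, like the ones https://www.srihash.org generates, is the hash algorithm name, a dash, and the base64-encoded digest of the file. Here is a small sketch of computing one in Python for an embed snippet. The `sri_hash` helper and the unpkg.com URL are hypothetical:

```python
import base64
import hashlib


def sri_hash(content: bytes, algorithm: str = "sha384") -> str:
    """Compute a Subresource Integrity value for a script or stylesheet body."""
    digest = hashlib.new(algorithm, content).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


# Hypothetical library contents and URL, for illustration only.
script = b"console.log('hello');"
integrity = sri_hash(script)
print(
    '<script src="https://unpkg.com/example-chart-lib/dist/chart.js" '
    f'integrity="{integrity}" crossorigin="anonymous"></script>'
)
```

In practice, the hash must be computed over the exact bytes served from that URL, so a pinned package version is needed for the hash to stay valid.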

| 275415799 | MDU6SXNzdWUyNzU0MTU3OTk= | 137 | Ability to combine multiple SQL queries on a single graph | simonw 9599 | open | 0 | 1 | 2017-11-20T16:26:57Z | 2019-05-13T18:33:51Z | OWNER | This would make visualizations significantly more powerful. The interesting challenge will be around the URL design. It would be useful to be able to combine either multiple explicit SQL queries or multiple queries based on the filter string parameters passed to one or more table views. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/137/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 275755475 | MDU6SXNzdWUyNzU3NTU0NzU= | 140 | Heatmap visualization plugin | simonw 9599 | open | 0 | 2 | 2017-11-21T15:34:23Z | 2019-05-13T18:33:51Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/140/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 281110295 | MDU6SXNzdWUyODExMTAyOTU= | 173 | I18n and L10n support | janimo 50138 | open | 0 | 2 | 2017-12-11T17:49:58Z | 2021-04-26T12:10:01Z | NONE | It would be less geeky and more user-friendly if the display strings in the filter menu and possibly other parts could be localized. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/173/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 285168503 | MDU6SXNzdWUyODUxNjg1MDM= | 176 | Add GraphQL endpoint | yozlet 173848 | open | 0 | 8 | 2017-12-29T23:21:01Z | 2020-04-21T14:16:24Z | NONE | Would make it much easier to build React & similar frontends. Maybe with https://github.com/graphql-python/sanic-graphql ? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/176/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 288438570 | MDU6SXNzdWUyODg0Mzg1NzA= | 179 | More metadata options for template authors | simonw 9599 | open | 0 | 2 | 2018-01-14T20:51:04Z | 2019-05-13T18:33:33Z | OWNER | See this thread on Twitter: https://twitter.com/simonw/status/952637152797458432 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/179/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 299760684 | MDU6SXNzdWUyOTk3NjA2ODQ= | 185 | Metadata should be a nested arbitrary KV store | carlmjohnson 222245 | open | 0 | 12 | 2018-02-23T16:02:07Z | 2019-05-13T18:33:33Z | NONE | I started using the metadata feature and was surprised to find that values are not inherited from the root object down to specific databases and tables. This makes metadata much less useful and requires a lot of pointless duplication. Ideally, metadata should allow arbitrary key-value pairs, and there should be a way of accessing metadata either in an inherited or non-inherited manner. Something like |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/185/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 309047460 | MDU6SXNzdWUzMDkwNDc0NjA= | 188 | Ability to bundle metadata and templates inside the SQLite file | simonw 9599 | open | 0 | 4 | 2018-03-27T16:42:07Z | 2020-12-04T17:18:34Z | OWNER | One of the nicest qualities of SQLite as a data format is that you get a single file which you can then back up or share with other people. Datasette breaks this a little once you start including custom metadata.json or template files and CSS. It would be cool if there was an optional mechanism for baking that extra configuration into the SQLite file itself. That way entire datasette mini-applications (including canned queries and custom HTML and CSS) could be constructed as single .db files. Since datasette configuration is all file-based, one way to achieve that would be to support a "datasette_files" table which, if present, is used to search for file contents by path. This is in line with the philosophy described by https://www.sqlite.org/appfileformat.html |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/188/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 312395790 | MDU6SXNzdWUzMTIzOTU3OTA= | 197 | Ability to sort by more than one column | simonw 9599 | open | 0 | 0 | 2018-04-09T05:13:30Z | 2018-07-10T17:45:37Z | OWNER | Split off from #189. I'd like to support "sort by X descending, then by Y ascending if there are dupes for X" as well. Suggested syntax for that: we currently only allow one argument to be sent. We should allow as many arguments as there are columns, for example: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/197/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
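The URL syntax suggested in #197 isn't reproduced here. Whatever form it takes, the parsed sort options eventually have to become a multi-column ORDER BY clause. Here is a hypothetical sketch, assuming the options have already been parsed into `(column, descending)` pairs. Both helper names are illustrative:

```python
def quote_identifier(name: str) -> str:
    """Quote a SQLite identifier, doubling any embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def build_order_by(sorts):
    """Build an ORDER BY clause from a list of (column, descending) pairs."""
    if not sorts:
        return ""
    parts = [
        f"{quote_identifier(column)} {'desc' if descending else 'asc'}"
        for column, descending in sorts
    ]
    return "order by " + ", ".join(parts)


# "Sort by x descending, then by y ascending if there are dupes for x".
print(build_order_by([("x", True), ("y", False)]))
```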

| 312396095 | MDU6SXNzdWUzMTIzOTYwOTU= | 198 | Ability to sort with nulls last | simonw 9599 | open | 0 | 0 | 2018-04-09T05:15:40Z | 2018-07-10T17:45:37Z | OWNER | Split off from #189 Here's how to do that in SQL: https://fivethirtyeight.datasettes.com/fivethirtyeight-2628db9?sql=select+rowid%2C+*+from+%5Bnfl-wide-receivers%2Fadvanced-historical%5D%0D%0Aorder+by+case+when+career_ranypa+is+null+then+1+else+0+end%2C+career_ranypa%2C+rowid |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/198/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
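This reproduces the nulls-last trick from the linked query against a throwaway in-memory table, using Python's built-in `sqlite3` module. The `receivers` table and its rows are made up for illustration:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("create table receivers (name text, career_ranypa real)")
conn.executemany(
    "insert into receivers values (?, ?)",
    [("a", 9.1), ("b", None), ("c", 7.4), ("d", None), ("e", 8.0)],
)

# Sort first on "is this null?" (0 for values, 1 for nulls), then on the value
# itself, then on rowid as a stable tie-breaker: the same shape as the query above.
rows = conn.execute(
    """
    select rowid, name, career_ranypa from receivers
    order by case when career_ranypa is null then 1 else 0 end,
             career_ranypa, rowid
    """
).fetchall()
print(rows)
```

SQLite 3.30.0 and later also accept `order by career_ranypa nulls last` directly. The `case` expression has the advantage of working on older versions too.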

| 314771615 | MDU6SXNzdWUzMTQ3NzE2MTU= | 218 | Support custom unit display in order to handle "$10,000" | simonw 9599 | open | 0 | 0 | 2018-04-16T18:39:31Z | 2018-07-10T17:45:38Z | OWNER | I tried to get Datasette to display It would be neat if there was a mechanism for specifying a custom unit display - maybe something like this:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/218/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 314834783 | MDU6SXNzdWUzMTQ4MzQ3ODM= | 219 | Expose units in the JSON API? | russss 45057 | open | 0 | 0 | 2018-04-16T22:04:25Z | 2018-04-16T22:04:25Z | CONTRIBUTOR | From #203: it would be nice for the JSON API to (optionally) return columns rendered with units in them - if, for example, you're consuming the JSON to render the rows on a map. I'm not entirely sure how useful this will be though - at the moment my map queries are custom SQL queries (a few have joins in, the rest might be fetching large amounts of data so it makes sense to limit columns fetched). Perhaps the SQL function is a better approach in general. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/219/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 316621102 | MDU6SXNzdWUzMTY2MjExMDI= | 235 | Add limit on the size in KB of data returned from a single query | simonw 9599 | open | 0 | 2 | 2018-04-22T23:01:15Z | 2018-04-24T00:30:02Z | OWNER | Datasette limits the number of rows returned to 1,000 and limits the time spent executing a SQL query to 1000ms - and both of these limits can be customized. It does not have a limit on the size of the response returned. It's possible to compose maliciously large SQL responses in a small number of rows using mechanisms like the I think the easiest place to implement that is here: Currently we use The bigger challenge here is understanding how well this approach works and what impact it will have on overall Datasette performance. I think I need #33 for this. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/235/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
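Here is a rough sketch of the idea in #235: iterate over the cursor and keep a running estimate of the response size, bailing out once it passes a threshold. `fetch_limited`, `ResponseTooLarge` and the 1 MB default are assumptions for illustration, and the per-value size estimate is deliberately crude:

```python
import sqlite3


class ResponseTooLarge(Exception):
    """Raised when a query's estimated response size exceeds the limit."""


def value_size(value) -> int:
    """Crude size estimate: byte length for str/bytes, a flat 8 for anything else."""
    if isinstance(value, bytes):
        return len(value)
    if isinstance(value, str):
        return len(value.encode("utf-8"))
    return 8


def fetch_limited(conn, sql, max_rows=1000, max_bytes=1024 * 1024):
    """Fetch up to max_rows rows, aborting once the running size passes max_bytes."""
    rows, total = [], 0
    for row in conn.execute(sql):
        if len(rows) >= max_rows:
            break
        total += sum(value_size(v) for v in row)
        if total > max_bytes:
            raise ResponseTooLarge(f"Response exceeded {max_bytes} bytes")
        rows.append(row)
    return rows


conn = sqlite3.connect(":memory:")
print(fetch_limited(conn, "select 1, 'abc'"))
```

One caveat: SQLite still builds each individual row before Python sees it, so a single enormous value is only caught after it has been materialized. This is part of why the performance impact would need measuring.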

| 317001500 | MDU6SXNzdWUzMTcwMDE1MDA= | 236 | datasette publish lambda plugin | simonw 9599 | open | 0 | 11 | 2018-04-23T22:10:30Z | 2023-03-12T14:04:15Z | OWNER | Refs #217 - create a publish plugin that can deploy to AWS Lambda. https://docs.aws.amazon.com/lambda/latest/dg/limits.html says lambda packages can be up to 50 MB, so this would only work with smaller databases (the command can check the filesize before attempting to package and deploy it). Lambdas do get a 512 MB |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/236/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 318490133 | MDU6SXNzdWUzMTg0OTAxMzM= | 241 | Default datasette logging format should be JSON | simonw 9599 | open | 0 | 0 | 2018-04-27T17:32:48Z | 2018-07-10T17:45:40Z | OWNER | Structured logs are better. Datasette should default to outputting its HTTP access log lines as newline-delimited JSON instead of the Sanic default format it uses at the moment. For improved greppability these logs should have keys ordered in a consistent way. Python's JSON module can do this with ordered dictionaries. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/241/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
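Here is a minimal sketch of a newline-delimited JSON access log line with consistently ordered keys. Since Python 3.7, plain dicts preserve insertion order, so an explicit `OrderedDict` is no longer required. The field names are assumptions, not Datasette's actual log format:

```python
import json
import time


def access_log_line(method, path, status, duration_ms):
    """Return one newline-delimited JSON access-log line with a fixed key order."""
    # Building the dict in the same order every time keeps the keys consistently
    # ordered in the output, which is what makes the lines easy to grep.
    entry = {
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "method": method,
        "path": path,
        "status": status,
        "duration_ms": duration_ms,
    }
    return json.dumps(entry) + "\n"


print(access_log_line("GET", "/fixtures", 200, 12.5), end="")
```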

| 319449852 | MDU6SXNzdWUzMTk0NDk4NTI= | 247 | SQLite code decoupled from Datasette | jsancho-gpl 11912854 | open | 0 | 1 | 2018-05-02T08:03:28Z | 2018-05-21T15:29:31Z | NONE | I'm working on the possibility of using Datasette with other file formats that aren't SQLite, like files in PyTables format. In order to accomplish that, I've started a fork that decouples the SQLite-related code and puts it in an external connector, to allow future connectors for a lot of file formats. It'd be nice if you could look at it and suggest improvements for a possible PR. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/247/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 320132682 | MDU6SXNzdWUzMjAxMzI2ODI= | 250 | Setup some issue templates | simonw 9599 | open | 0 | 0 | 2018-05-04T01:49:07Z | 2018-05-04T01:49:07Z | OWNER | https://twitter.com/left_pad/status/99216385740464537 I like the idea of using these to help people understand some of the ways I want to use issues. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/250/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 323223872 | MDU6SXNzdWUzMjMyMjM4NzI= | 260 | Validate metadata.json on startup | simonw 9599 | open | 0 | 7 | 2018-05-15T13:42:56Z | 2023-06-21T12:51:22Z | OWNER | It's easy to misspell the name of a database or table and then be puzzled when the metadata settings silently fail. To avoid this, let's sanity check the provided metadata.json on startup and quit with a useful error message if we find any obvious mistakes. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/260/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
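Here is a sketch of the startup check from #260. It assumes the usual `databases` / `tables` nesting in metadata.json and a `schema` dict that maps each attached database name to its table names. Both the helper and `schema` are hypothetical:

```python
def validate_metadata(metadata, schema):
    """Return a list of problems found in a metadata dict.

    `schema` maps each database name to the set of table names it contains.
    """
    errors = []
    for db_name, db_meta in metadata.get("databases", {}).items():
        if db_name not in schema:
            errors.append(f"Unknown database: {db_name}")
            continue
        for table_name in db_meta.get("tables", {}):
            if table_name not in schema[db_name]:
                errors.append(f"Unknown table: {db_name}.{table_name}")
    return errors


schema = {"fixtures": {"facetable", "roadside_attractions"}}
metadata = {
    "databases": {
        "fixture": {},  # misspelled database name
        "fixtures": {"tables": {"facetable": {}, "facetabel": {}}},  # misspelled table
    }
}
for problem in validate_metadata(metadata, schema):
    print(problem)
```

At startup, a non-empty result could then be turned into an error exit with a useful message, for example by raising `SystemExit` with the joined error strings.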

| 323658641 | MDU6SXNzdWUzMjM2NTg2NDE= | 262 | Add ?_extra= mechanism for requesting extra properties in JSON | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 27 | 2018-05-16T14:55:42Z | 2023-03-29T06:22:22Z | OWNER | Datasette views currently work by creating a set of data that should be returned as JSON, then defining an additional, optional This Example of how that is used today: https://github.com/simonw/datasette/blob/2b79f2bdeb1efa86e0756e741292d625f91cb93d/datasette/views/table.py#L672-L704 With features like Facets in #255 I'm beginning to want to move more items into the But... as an API user, I want to still optionally be able to access that information. Solution: Add a Then redefine as many of the current This could allow the JSON representation to be slimmed down further (removing e.g. the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/262/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 323718842 | MDU6SXNzdWUzMjM3MTg4NDI= | 268 | Mechanism for ranking results from SQLite full-text search | simonw 9599 | open | 0 | 12 | 2018-05-16T17:36:40Z | 2022-01-13T22:21:28Z | OWNER | This isn't particularly straightforward - all the more reason for Datasette to implement it for you. This article is helpful: http://charlesleifer.com/blog/using-sqlite-full-text-search-with-python/ |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/268/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 326599525 | MDU6SXNzdWUzMjY1OTk1MjU= | 286 | Database hash should include current datasette version | simonw 9599 | open | 0 | 2 | 2018-05-25T17:03:42Z | 2018-05-25T17:07:36Z | OWNER | Right now deploying a new version of datasette doesn't invalidate existing URLs, so users may still see a cached copy of the old templates. We can fix this by including the current datasette version in the input to the hash function (which is currently just the database file contents). |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/286/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
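Here is a minimal sketch of the change proposed in #286: feed the Datasette version string into the same hash as the database file contents. A NUL byte separates the two so the inputs can't run together. `database_hash` is a hypothetical helper, and SHA-256 is an assumption about the hash function:

```python
import hashlib
import tempfile


def database_hash(path, datasette_version, chunk_size=1024 * 1024):
    """Hash the Datasette version string followed by the database file contents."""
    m = hashlib.sha256()
    m.update(datasette_version.encode("utf-8"))
    m.update(b"\0")  # separator between the version and the file bytes
    with open(path, "rb") as fp:
        # Read in chunks so large database files aren't loaded into memory at once.
        for chunk in iter(lambda: fp.read(chunk_size), b""):
            m.update(chunk)
    return m.hexdigest()


# Stand-in file: identical contents hash differently under different versions.
with tempfile.NamedTemporaryFile(suffix=".db", delete=False) as f:
    f.write(b"example database bytes")
print(database_hash(f.name, "0.22")[:7], database_hash(f.name, "0.23")[:7])
```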

| 326778161 | MDU6SXNzdWUzMjY3NzgxNjE= | 290 | Consider increasing the default for num_sql_threads (currently 3) | simonw 9599 | open | 0 | 0 | 2018-05-27T00:52:41Z | 2018-05-27T00:52:41Z | OWNER | I ran a very rough micro-benchmark on the new Then

| Number of threads | Requests/second |
|---|---|
| 1 | 4.57 |
| 3 | 9.77 |
| 10 | 13.53 |
| 20 | 15.24 |
| 50 | 8.21 |

This was on my early 2018 OS X laptop. Need to benchmark in other common environments before making a decision on changing the default. That said, the default of 3 was a number I plucked out of thin air. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/290/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 327365110 | MDU6SXNzdWUzMjczNjUxMTA= | 294 | inspect should record column types | simonw 9599 | open | 0 | 7 | 2018-05-29T15:10:41Z | 2019-06-28T16:45:28Z | OWNER | For each table we want to know the columns, their order and what type they are. I'm going to break with SQLite defaults a little on this one and allow datasette to define additional types - to start with just a Possible JSON design: Refs #276 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/294/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||
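For the basic column information #294 asks for (names, order, declared types), SQLite already exposes what's needed through `PRAGMA table_info`. Here is a sketch using the `pragma_table_info()` table-valued function, which needs SQLite 3.16 or later. The helper name is hypothetical, and the extra Datasette-specific types mentioned in the issue are not covered:

```python
import sqlite3


def table_columns(conn, table):
    """Return [(column_name, declared_type), ...] in the table's column order."""
    # cid is the column's position in the table; type is the declared type
    # string, or an empty string when no type was declared.
    return conn.execute(
        "select name, type from pragma_table_info(?) order by cid", [table]
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(
    "create table plants (id INTEGER PRIMARY KEY, name TEXT, height REAL, notes)"
)
print(table_columns(conn, "plants"))
```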

| 327395270 | MDU6SXNzdWUzMjczOTUyNzA= | 296 | Per-database and per-table /-/ URL namespace | simonw 9599 | open | 0 | 3 | 2018-05-29T16:23:13Z | 2019-06-28T16:46:34Z | OWNER | Initially this will be for subsets of To start:

This means we will no longer allow databases or tables to have the name We will continue to support rows with a primary key of

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/296/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 328155946 | MDU6SXNzdWUzMjgxNTU5NDY= | 301 | --spatialite option for "datasette publish heroku" | simonw 9599 | open | 0 | 1 | 2018-05-31T14:13:09Z | 2022-01-20T21:28:50Z | OWNER | Split off from #243. Need to figure out how to install and configure SpatiaLite on Heroku. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/301/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 330826972 | MDU6SXNzdWUzMzA4MjY5NzI= | 308 | Support extra Heroku apps:create options - region, space, team | annapowellsmith 78156 | open | 0 | 2 | 2018-06-08T23:08:33Z | 2018-09-21T14:09:28Z | NONE | It would be useful to document how to pass Heroku CLI options on |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/308/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 335200136 | MDU6SXNzdWUzMzUyMDAxMzY= | 327 | Explore if SquashFS can be used to shrink size of packaged Docker containers | simonw 9599 | open | 0 | 4 | 2018-06-24T18:15:16Z | 2022-02-17T23:37:24Z | OWNER | Inspired by this article: https://cldellow.com/2018/06/22/sqlite-parquet-vtable.html#sqlite-database-indexed--squashed https://en.wikipedia.org/wiki/SquashFS is "a compressed read-only file system for Linux" - which means it could be a really nice fit for Datasette and its read-only SQLite databases. It would be interesting to explore a Dockerfile recipe that used SquashFS to compress the SQLite database file that was bundled up by |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/327/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 341228846 | MDU6SXNzdWUzNDEyMjg4NDY= | 343 | Render boolean fields better by default | russss 45057 | open | 0 | 1 | 2018-07-14T11:10:29Z | 2018-07-14T14:17:14Z | CONTRIBUTOR | These show up as 0 or 1 because SQLite stores booleans as integers. I think Yes/No would be fine in most cases? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/343/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 344654623 | MDU6SXNzdWUzNDQ2NTQ2MjM= | 347 | Rename "datasette package" to "datasette publish docker" | simonw 9599 | open | 0 | 0 | 2018-07-26T00:42:46Z | 2018-07-26T00:42:46Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/347/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 346026869 | MDU6SXNzdWUzNDYwMjY4Njk= | 354 | Handle many-to-many relationships | simonw 9599 | open | 0 | 0 | 2018-07-31T04:03:13Z | 2020-11-24T19:51:18Z | OWNER | This is a master tracking ticket for various many-to-many features. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/354/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 346027040 | MDU6SXNzdWUzNDYwMjcwNDA= | 355 | Table view should support filtering via many-to-many relationships | simonw 9599 | open | 0 | 10 | 2018-07-31T04:04:16Z | 2019-05-23T06:04:03Z | OWNER | Parent: #354 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/355/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 348043884 | MDU6SXNzdWUzNDgwNDM4ODQ= | 357 | Plugin hook for loading metadata.json | simonw 9599 | open | 0 | 6 | 2018-08-06T19:00:01Z | 2020-06-21T22:19:58Z | OWNER | For https://github.com/simonw/russian-ira-facebook-ads-datasette/tree/af6d956995e14afd585c35a6a06bb01da32043ba I wrote a script to convert YAML to JSON because YAML is a better format for embedding multi-line HTML descriptions and canned SQL statements. Example yaml metadata file: https://github.com/simonw/russian-ira-facebook-ads-datasette/blob/af6d956995e14afd585c35a6a06bb01da32043ba/russian-ads-metadata.yaml It would be useful if Datasette could be fed a YAML file directly: Question is... should this be a native feature (hence adding a YAML dependency) or should it be handled by a |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/357/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 352768017 | MDU6SXNzdWUzNTI3NjgwMTc= | 362 | Add option to include/exclude columns in search filters | annapowellsmith 78156 | open | 0 | 1 | 2018-08-22T01:32:08Z | 2020-11-03T19:01:59Z | NONE | I have a dataset with many columns, of which only some are likely to be of interest for searching. It would be great for usability if the search filters in the UI could be configured to include/exclude columns. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/362/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 374953006 | MDU6SXNzdWUzNzQ5NTMwMDY= | 369 | Interface should show same JSON shape options for custom SQL queries | gfrmin 416374 | open | 0 | Datasette 1.0 3268330 | 2 | 2018-10-29T10:39:15Z | 2020-05-30T17:24:06Z | CONTRIBUTOR | At the moment the page returning a custom SQL query shows the JSON and CSV APIs, but not the multiple JSON shapes. However, adding the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/369/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 377155320 | MDU6SXNzdWUzNzcxNTUzMjA= | 370 | Integration with JupyterLab | psychemedia 82988 | open | 0 | 4 | 2018-11-04T13:57:13Z | 2022-09-29T08:17:47Z | CONTRIBUTOR | I just watched a demo video for the JupyterLab Chart Editor which wraps the plotly chart editor app in a JupyterLab panel and lets you open a plotly chart JSON file in that editor. Essentially, it pops an HTML app into a panel in JupyterLab, and I think registers the app as a file viewer for a particular file type. (I'm not completely taken by it, tbh, because it means you can do irreproducible things to the chart definition file, but that's another issue). JupyterLab extensions can also open files from a dialogue as the iframe/html previewer shows: https://github.com/timkpaine/jupyterlab_iframe. This made me wonder about what For example, by right-clicking on a CSV file (for which there is already a CSV table view) in the file browser, offer a View / Run as datasette file viewer option that will:

(? Create a new SQLite db for each CSV file and launch each datasette view on a new port? Or have a JupyterLab (session?) SQLite db that stores all As a freebie, the Related: |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/370/reactions",
"total_count": 0,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

||||||||

| 377166793 | MDU6SXNzdWUzNzcxNjY3OTM= | 372 | Docker build tools | psychemedia 82988 | open | 0 | 0 | 2018-11-04T16:02:35Z | 2018-11-04T16:02:35Z | CONTRIBUTOR | In terms of small pieces lightly joined, I note that there are several tools starting to appear for generating Dockerfiles and building Docker containers from simpler components such as If plugin/extension builders want to include additional packages, then things like incremental builds of composable builds that add additional items into a base Examples of Dockerfile generators / container builders: Discussions / threads (via Binderhub gitter) on:

- why Relates to things like: |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/372/reactions",
"total_count": 2,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 2,
"rocket": 0,
"eyes": 0
} |

||||||||

| 400340905 | MDU6SXNzdWU0MDAzNDA5MDU= | 402 | Use SQLITE_DBCONFIG_DEFENSIVE plus other recommendations from SQLite security docs | simonw 9599 | open | 0 | 3 | 2019-01-17T15:52:28Z | 2019-01-17T16:15:21Z | OWNER |

https://twitter.com/ignoredambience/status/1085926961413869568 |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/402/reactions",
"total_count": 1,
"+1": 1,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

||||||||
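A minimal sketch, using Python's `sqlite3` module, of opening a connection with a few of the SQLite security-document recommendations applied. The helper name `open_hardened` and the specific limit values are illustrative assumptions, not Datasette's code. `Connection.setconfig` needs Python 3.12+ and `Connection.setlimit` needs Python 3.11+, so both calls are guarded with `hasattr`.

```python
import sqlite3


def open_hardened(path: str) -> sqlite3.Connection:
    """Hypothetical helper: open a SQLite connection with some hardening applied."""
    conn = sqlite3.connect(path)

    # SQLITE_DBCONFIG_DEFENSIVE disables SQL features that can deliberately
    # corrupt the database file (e.g. PRAGMA writable_schema=ON).
    # Connection.setconfig() is Python 3.12+, and the constant only exists
    # when the bundled SQLite library supports it.
    if hasattr(conn, "setconfig") and hasattr(sqlite3, "SQLITE_DBCONFIG_DEFENSIVE"):
        conn.setconfig(sqlite3.SQLITE_DBCONFIG_DEFENSIVE, True)

    # Lower run-time limits (Python 3.11+). The values are assumptions for illustration.
    if hasattr(conn, "setlimit"):
        conn.setlimit(sqlite3.SQLITE_LIMIT_LENGTH, 1_000_000)  # max string/blob size
        conn.setlimit(sqlite3.SQLITE_LIMIT_ATTACH, 0)  # disallow ATTACH DATABASE

    # Pragmas; SQLite versions that do not recognise a pragma silently ignore it.
    conn.execute("PRAGMA cell_size_check = ON")
    conn.execute("PRAGMA mmap_size = 0")
    conn.execute("PRAGMA trusted_schema = OFF")
    return conn
```

With `SQLITE_LIMIT_ATTACH` set to 0, an `ATTACH DATABASE` statement on this connection fails with an error, which may or may not suit a server that attaches databases itself.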

| 411257981 | MDU6SXNzdWU0MTEyNTc5ODE= | 412 | Linked Data(sette) | sfkeller 43340 | open | 0 | 2 | 2019-02-18T00:38:14Z | 2019-03-19T10:09:46Z | NONE | I have a radical feature idea (possibly first as an extension, in order to experiment?): I'd like to link to a remote table from a remote database, e.g. with a function "linked_datasette()". So one could run the following query:

There's a foundation in the SQL Standard called SQL/MED (https://rhaas.blogspot.com/2011/01/why-sqlmed-is-cool.html ). And here's an implementation from me in Postgres FDW to connect another Postgres "endpoint": https://pastebin.com/Fz2v64Cz . |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/412/reactions",
"total_count": 1,
"+1": 1,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

||||||||

| 421546944 | MDU6SXNzdWU0MjE1NDY5NDQ= | 417 | Datasette Library | simonw 9599 | open | 0 | 12 | 2019-03-15T14:30:22Z | 2020-12-29T14:34:50Z | OWNER | The ability to run Datasette in a mode where it automatically picks up new (or modified) files in a directory tree without needing to restart the server. Suggested command: |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/417/reactions",
"total_count": 8,
"+1": 8,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

||||||||
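One possible building block, sketched in Python under the assumption that "picking up" new or modified files means periodically rescanning the directory tree: take a snapshot of database files and their modification times, then diff two snapshots to find added, removed and modified files. The function names and the `.db`/`.sqlite`/`.sqlite3` suffixes are hypothetical; a real implementation might use filesystem notifications instead of polling.

```python
import os


def snapshot(root, suffixes=(".db", ".sqlite", ".sqlite3")):
    """Map each database file under root to its modification time in nanoseconds."""
    found = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(suffixes):
                path = os.path.join(dirpath, name)
                found[path] = os.stat(path).st_mtime_ns
    return found


def diff_snapshots(old, new):
    """Return (added, removed, modified) sorted lists of paths between two snapshots."""
    added = sorted(p for p in new if p not in old)
    removed = sorted(p for p in old if p not in new)
    modified = sorted(p for p in new if p in old and new[p] != old[p])
    return added, removed, modified
```

A server loop could call `snapshot()` every few seconds and add, drop or reload databases according to the diff, without a restart.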

| 426722204 | MDU6SXNzdWU0MjY3MjIyMDQ= | 423 | ?_search_col=X not reflected correctly in the UI | simonw 9599 | open | 0 | 0 | 2019-03-28T21:48:19Z | 2020-11-03T19:01:59Z | OWNER | datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/423/reactions",
"total_count": 0,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

|||||||||

| 443021509 | MDU6SXNzdWU0NDMwMjE1MDk= | 461 | Paginate + search for databases/tables on the homepage | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 4 | 2019-05-11T18:05:34Z | 2020-12-17T22:14:46Z | OWNER | Split out from #460 - in order to support large numbers of connected databases the homepage needs to be paginated. |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/461/reactions",
"total_count": 0,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

|||||||
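A minimal sketch, in Python, of keyset-style pagination plus search over database or table names. It assumes names are unique and sorted alphabetically, and uses the last name on a page as the "next" token; the function name and default page size are hypothetical.

```python
def paginate_names(names, search="", after=None, size=20):
    """Return (page, next_token) for a case-insensitive substring search over names."""
    matches = sorted(n for n in names if search.lower() in n.lower())
    if after is not None:
        # Keyset pagination: resume strictly after the last name already shown
        matches = [n for n in matches if n > after]
    page = matches[:size]
    # Only hand out a token when more matches remain beyond this page
    next_token = page[-1] if len(matches) > size else None
    return page, next_token
```

Unlike offset-based paging, an `after` token does not shift to a different page when databases are added or removed between requests.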

| 447408527 | MDU6SXNzdWU0NDc0MDg1Mjc= | 483 | Option to facet by date using month or year | simonw 9599 | open | 0 | 5 | 2019-05-23T01:25:29Z | 2019-05-29T21:38:27Z | OWNER | Facet by date (from #481) can take datetimes and facet them by the day component. https://latest.datasette.io/fixtures/facetable?_facet_date=created I'd like to also be able to facet by month or year. I'm not sure what the best way to achieve this is. Could be two more Facet classes (YearFacet and MonthFacet) but I think it might be nicer if the existing DateFacet could take an optional argument that changed its behaviour. But... if I do that, do I expose it in the UI somewhere or is it only available to URL-hackers? |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/483/reactions",
"total_count": 1,
"+1": 1,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

||||||||
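The "optional argument" approach could look roughly like this Python sketch, which buckets date or datetime values with SQLite's `strftime()` at day, month or year granularity. The `date_facet` function and its `granularity` parameter are hypothetical illustrations, not Datasette's `DateFacet` API; the `facetable`/`created` names simply mirror the example URL in the issue.

```python
import sqlite3

# strftime() patterns for each hypothetical facet granularity
GRANULARITIES = {"day": "%Y-%m-%d", "month": "%Y-%m", "year": "%Y"}


def quote_identifier(name):
    """Quote a SQLite identifier by doubling any embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def date_facet(conn, table, column, granularity="day"):
    """Return [(bucket, count), ...] for the non-null values of a date column."""
    fmt = GRANULARITIES[granularity]
    col = quote_identifier(column)
    sql = (
        f"select strftime(?, {col}) as bucket, count(*) as n "
        f"from {quote_identifier(table)} "
        f"where {col} is not null "
        "group by bucket order by bucket"
    )
    return conn.execute(sql, (fmt,)).fetchall()
```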

| 447451492 | MDU6SXNzdWU0NDc0NTE0OTI= | 484 | Mechanism for displaying summary of m2m relationships in rows on table view | simonw 9599 | open | 0 | 1 | 2019-05-23T05:02:41Z | 2019-05-23T06:34:05Z | OWNER | Part of #354 (m2m support) It would be fantastic if rows that are part of an m2m relationship could display it in an additional column in the table view. It might look something like this: https://russian-ira-facebook-ads.datasettes.com/russian-ads-919cbfd/display_ads?_search=black+lives+matter

That example was achieved using a custom SQL query and datasette-json-html - but I'd like this to be a built-in feature instead. |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/484/reactions",
"total_count": 0,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

||||||||
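The example linked in the issue used a custom SQL query; the underlying idea can be sketched in Python with plain SQLite `group_concat()` over the join table. The `ads`/`topics`/`ads_topics` schema is a hypothetical stand-in, this is not how Datasette itself would render the column, and `group_concat()` does not guarantee the order of the concatenated values.

```python
import sqlite3


def create_demo_schema(conn):
    """Hypothetical schema: ads, topics, and an ads_topics m2m join table."""
    conn.executescript(
        """
        create table ads (id integer primary key, title text);
        create table topics (id integer primary key, name text);
        create table ads_topics (
            ad_id integer references ads(id),
            topic_id integer references topics(id)
        );
        """
    )


def rows_with_m2m_summary(conn):
    """Each ad plus a comma-separated list of its related topics (None if it has none)."""
    sql = """
        select ads.id, ads.title, group_concat(topics.name, ', ') as topics
        from ads
        left join ads_topics on ads_topics.ad_id = ads.id
        left join topics on topics.id = ads_topics.topic_id
        group by ads.id
        order by ads.id
    """
    return conn.execute(sql).fetchall()
```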

| 447469253 | MDU6SXNzdWU0NDc0NjkyNTM= | 485 | Improvements to table label detection | simonw 9599 | open | 0 | simonw 9599 | 10 | 2019-05-23T06:19:49Z | 2022-10-03T00:04:42Z | OWNER | Label detection doesn't work if the primary key is called pk rather than id, so this page doesn't work: https://latest.datasette.io/fixtures/roadside_attraction_characteristics Code is here: |

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/485/reactions",
"total_count": 0,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

|||||||
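A hypothetical sketch, in Python, of a label heuristic that reads the primary key from `PRAGMA table_info` rather than assuming a column called `id`. The code referenced in the issue is not reproduced here and may use different rules; the two-column rule below is an assumption chosen for illustration.

```python
import sqlite3


def quote_identifier(name):
    """Quote a SQLite identifier by doubling any embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def guess_label_column(conn, table):
    """Hypothetical rule: in a two-column table with a single primary key,
    treat the other column as the label, whatever the key column is named."""
    # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk)
    columns = conn.execute(f"PRAGMA table_info({quote_identifier(table)})").fetchall()
    pks = [c[1] for c in columns if c[5]]
    others = [c[1] for c in columns if not c[5]]
    if len(columns) == 2 and len(pks) == 1:
        return others[0]
    return None
```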

| 449445715 | MDU6SXNzdWU0NDk0NDU3MTU= | 491 | Figure out how to use Firebase with cloudrun to enable vanity URLs and CDN caching | simonw 9599 | open | 0 | 0 | 2019-05-28T19:48:06Z | 2019-05-28T19:48:35Z | OWNER | It looks like Firebase can solve a couple of problems with the existing

https://firebase.google.com/docs/hosting/cloud-run looks like it can help with both of these. Lots of interesting questions:

|

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/491/reactions",
"total_count": 0,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |

||||||||

| 450032134 | MDU6SXNzdWU0NTAwMzIxMzQ= | 495 | facet_m2m gets confused by multiple relationships | simonw 9599 | open | 0 | 2 | 2019-05-29T21:37:28Z | 2020-12-17T05:08:22Z | OWNER | I got this for a database I was playing with:

I think this is because of these three tables:

|

datasette 107914493 | issue | {
"url": "https://api.github.com/repos/simonw/datasette/issues/495/reactions",
"total_count": 0,
"+1": 0,
"-1": 0,
"laugh": 0,
"hooray": 0,
"confused": 0,
"heart": 0,
"rocket": 0,
"eyes": 0
} |