pull_requests

69 rows where author_association = "FIRST_TIME_CONTRIBUTOR"

This data as json, CSV (advanced)

Suggested facets: state, draft, created_at (date), updated_at (date), closed_at (date)

| id ▼ | node_id | number | state | locked | title | user | body | created_at | updated_at | closed_at | merged_at | merge_commit_sha | assignee | milestone | draft | head | base | author_association | repo | url | merged_by | auto_merge |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 190901429 | MDExOlB1bGxSZXF1ZXN0MTkwOTAxNDI5 | 293 | closed | 0 | Support for external database connectors | jsancho-gpl 11912854 | I think it would be nice that Datasette could work with other file formats that aren't SQLite, like files with PyTables format. I've tried to accomplish that using external connectors published with entry points. These external connectors must have a structure similar to the structure [PyTables Datasette connector](https://github.com/PyTables/datasette-pytables) has. | 2018-05-28T11:02:45Z | 2018-09-11T14:32:45Z | 2018-09-11T14:32:45Z | ad2cb12473025ffab738d4df6bb47cd8b2e27859 | 0 | 59c94be46f9ccd806dd352fa28a6dba142d5ab82 | b7257a21bf3dfa7353980f343c83a616da44daa7 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/293 | |||||

| 211860706 | MDExOlB1bGxSZXF1ZXN0MjExODYwNzA2 | 363 | open | 0 | Search all apps during heroku publish | kevboh 436032 | Adds the `-A` option to include apps from all organizations when searching app names for publish. | 2018-08-29T19:25:10Z | 2018-08-31T14:39:45Z | b684b04c30f6b8779a3d11f7599329092fb152f3 | 0 | 2dd363e01fa73b24ba72f539c0a854bc901d23a7 | b7257a21bf3dfa7353980f343c83a616da44daa7 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/363 | ||||||

| 214653641 | MDExOlB1bGxSZXF1ZXN0MjE0NjUzNjQx | 364 | open | 0 | Support for other types of databases using external connectors | jsancho-gpl 11912854 | This PR is related to #293, but now all commits have been merged. The purpose is to support other file formats that aren't SQLite, like files with PyTables format. I've tried to accomplish that using external connectors published with entry points. The modifications in the original datasette code are minimal and many are in a separated file. | 2018-09-11T14:31:47Z | 2018-09-11T14:31:47Z | d84f3b1f585cb52b58aed0401c34214de2e8b47b | 0 | 592fd05f685859b271f0305c2fc8cdb7da58ebfb | b7257a21bf3dfa7353980f343c83a616da44daa7 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/364 | ||||||

| 440735814 | MDExOlB1bGxSZXF1ZXN0NDQwNzM1ODE0 | 868 | open | 0 | initial windows ci setup | joshmgrant 702729 | Picking up the work done on #557 with a new PR. Seeing if I can get this working. | 2020-06-26T18:49:13Z | 2021-07-10T23:41:43Z | b99adb1720a0b53ff174db54d0e4a67357b47f33 | 0 | c99cabae638958ef057438a92cb9a182ba4f8188 | 180c7a5328457aefdf847ada366e296fef4744f1 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/868 | ||||||

| 448355680 | MDExOlB1bGxSZXF1ZXN0NDQ4MzU1Njgw | 30 | open | 0 | Handle empty bucket on first upload. Allow specifying the endpoint_url for services other than S3 (like b2 and digitalocean spaces) | scanner 110038 | Finally got around to trying dogsheep-photos but I want to use backblaze's b2 service instead of AWS S3. Had to add a way to optionally specify the endpoint_url to connect to. Then with the bucket being empty the initial key retrieval would fail. Probably a better way to see that the bucket is empty than doing a test inside the paginator loop. Also probably a better way to specify the endpoint_url as we get and test for it twice using the same code in two different places but did not want to spend too much time worrying about it. | 2020-07-13T16:15:26Z | 2020-07-13T16:15:26Z | 583b26f244166aadf2dcc680e39d1ca59765da37 | 0 | 647d4b42c6f4d1fba4b99f73fe163946cea6ee36 | 45ce3f8bfb8c70f57ca5d8d82f22368fea1eb391 | FIRST_TIME_CONTRIBUTOR | dogsheep-photos 256834907 | https://github.com/dogsheep/dogsheep-photos/pull/30 | ||||||

| 500798091 | MDExOlB1bGxSZXF1ZXN0NTAwNzk4MDkx | 1008 | open | 0 | Add json_loads and json_dumps jinja2 filters | mhalle 649467 | 2020-10-09T20:11:34Z | 2020-12-15T02:30:28Z | e33e91ca7c9b2fdeab9d8179ce0d603918b066aa | 0 | 40858989d47043743d6b1c9108528bec6a317e43 | 1bdbc8aa7f4fd7a768d456146e44da86cb1b36d1 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1008 | |||||||

| 505076418 | MDExOlB1bGxSZXF1ZXN0NTA1MDc2NDE4 | 5 | open | 0 | Add fitbit-to-sqlite | mrphil007 4632208 | 2020-10-16T20:04:05Z | 2020-10-16T20:04:05Z | 9b9a677a4fcb6a31be8c406b3050cfe1c6e7e398 | 0 | db64d60ee92448b1d2a7e190d9da20eb306326b0 | d0686ebed6f08e9b18b4b96c2b8170e043a69adb | FIRST_TIME_CONTRIBUTOR | dogsheep.github.io 214746582 | https://github.com/dogsheep/dogsheep.github.io/pull/5 | |||||||

| 505453900 | MDExOlB1bGxSZXF1ZXN0NTA1NDUzOTAw | 1030 | open | 0 | Make `package` command deal with a configuration directory argument | frankier 299380 | Currently if we run `datasette package` on a configuration directory we'll get an exception when we try to hard link to the directory. This PR copies the tree and makes the Dockerfile run inspect on all *.db files. | 2020-10-18T11:07:02Z | 2020-10-19T08:01:51Z | 124142e4d2710525b09ff2bd2a7a787cbed163a4 | 0 | e0825334692967fec195e104cb6aa11095807a8e | c37a0a93ecb847e66cfe7b6f9452ba210fcae91b | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1030 | ||||||

| 505769462 | MDExOlB1bGxSZXF1ZXN0NTA1NzY5NDYy | 1031 | closed | 0 | Fallback to databases in inspect-data.json when no -i options are passed | frankier 299380 | Currenlty `Datasette.__init__` checks immutables against None to decide whether to fallback to inspect-data.json. This patch modifies the serve command to pass None when no -i options are passed so this fallback works correctly. | 2020-10-19T07:51:06Z | 2021-03-29T01:46:45Z | 2021-03-29T00:23:41Z | 3ee6b39e96ef684e1ac393bb269d804e957fee1d | 0 | 7e7eaa4e712b01de0b5a8a1b90145bdc1c3cd731 | c37a0a93ecb847e66cfe7b6f9452ba210fcae91b | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1031 | |||||

| 521054612 | MDExOlB1bGxSZXF1ZXN0NTIxMDU0NjEy | 13 | open | 0 | SQLite does not have case sensitive columns | tomaskrehlik 1689944 | This solves a weird issue when there is record with metadata key that is only different in letter cases. See the test for details. | 2020-11-14T20:12:32Z | 2021-08-24T13:28:26Z | 38856acbc724ffdb8beb9e9f4ef0dbfa8ff51ad1 | 0 | 3e1b2945bc7c31be59e89c5fed86a5d2a59ebd5a | 71e36e1cf034b96de2a8e6652265d782d3fdf63b | FIRST_TIME_CONTRIBUTOR | healthkit-to-sqlite 197882382 | https://github.com/dogsheep/healthkit-to-sqlite/pull/13 | ||||||

| 521287994 | MDExOlB1bGxSZXF1ZXN0NTIxMjg3OTk0 | 203 | open | 0 | changes to allow for compound foreign keys | drkane 1049910 | Add support for compound foreign keys, as per issue #117 Not sure if this is the right approach. In particular I'm unsure about: - the new `ForeignKey` class, which replaces the namedtuple in order to ensure that `column` and `other_column` are forced into tuples. The class does the job, but doesn't feel very elegant. - I haven't rewritten `guess_foreign_table` to take account of multiple columns, so it just checks for the first column in the foreign key definition. This isn't ideal. - I haven't added any ability to the CLI to add compound foreign keys, it's only in the python API at the moment. The PR also contains a minor related change that columns and tables are always quoted in foreign key definitions. | 2020-11-16T00:30:10Z | 2023-01-25T18:47:18Z | 0507a9464314f84e9e58b1931c583df51d757d7c | 0 | 5e43e31c2b9bcf6b5d1460b0f848fed019ed42a6 | f1277f638f3a54a821db6e03cb980adad2f2fa35 | FIRST_TIME_CONTRIBUTOR | sqlite-utils 140912432 | https://github.com/simonw/sqlite-utils/pull/203 | ||||||

| 545264436 | MDExOlB1bGxSZXF1ZXN0NTQ1MjY0NDM2 | 1159 | open | 0 | Improve the display of facets information | lovasoa 552629 | This PR changes the display of facets to hopefully make them more readable. Before | After ---|---  |  | 2020-12-24T11:01:47Z | 2023-07-31T18:57:59Z | 0276c5609da34bfb660f65212e1a367e637979d7 | Datasette 1.0 3268330 | 0 | c820abd0bcb34d1ea5a03be64a2158ae7c42920c | a882d679626438ba0d809944f06f239bcba8ee96 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1159 | |||||

| 561512503 | MDExOlB1bGxSZXF1ZXN0NTYxNTEyNTAz | 15 | open | 0 | added try / except to write_records | ryancheley 9857779 | to keep the data write from failing if it came across an error during processing. In particular when trying to convert my HealthKit zip file (and that of my wife's) it would consistently error out with the following: ``` db.py 1709 insert_chunk result = self.db.execute(query, params) db.py 226 execute return self.conn.execute(sql, parameters) sqlite3.OperationalError: too many SQL variables --------------------------------------------------------------------------------------------------------------------------------------------------------------------- db.py 1709 insert_chunk result = self.db.execute(query, params) db.py 226 execute return self.conn.execute(sql, parameters) sqlite3.OperationalError: too many SQL variables --------------------------------------------------------------------------------------------------------------------------------------------------------------------- db.py 1709 insert_chunk result = self.db.execute(query, params) db.py 226 execute return self.conn.execute(sql, parameters) sqlite3.OperationalError: table rBodyMass has no column named metadata_HKWasUserEntered --------------------------------------------------------------------------------------------------------------------------------------------------------------------- healthkit-to-sqlite 8 <module> sys.exit(cli()) core.py 829 __call__ return self.main(*args, **kwargs) core.py 782 main rv = self.invoke(ctx) core.py 1066 invoke return ctx.invoke(self.callback, **ctx.params) core.py 610 invoke return callback(*args, **kwargs) cli.py 57 cli convert_xml_to_sqlite(fp, db, progress_callback=bar.update, zipfile=zf) utils.py 42 convert_xml_to_sqlite write_records(records, db) utils.py 143 write_records db[table].insert_all( db.py 1899 insert_all self.insert_chunk( db.py 1720 insert_chunk self.insert_chunk( db.py 1720 insert_chunk self.insert_chunk( db.py 1714 insert_chunk result = self.db.execute(query, params) db.py 226 execute return self.co… | 2021-01-26T03:56:21Z | 2021-01-26T03:56:21Z | 8527278a87e448f57c7c6bd76a2d85f12d0233dd | 0 | 7f1b168c752b5af7c1f9052dfa61e26afc83d574 | 71e36e1cf034b96de2a8e6652265d782d3fdf63b | FIRST_TIME_CONTRIBUTOR | healthkit-to-sqlite 197882382 | https://github.com/dogsheep/healthkit-to-sqlite/pull/15 | ||||||

| 577953727 | MDExOlB1bGxSZXF1ZXN0NTc3OTUzNzI3 | 5 | open | 0 | WIP: Add Gmail takeout mbox import | UtahDave 306240 | WIP This PR adds the ability to import emails from a Gmail mbox export from Google Takeout. This is my first PR to a datasette/dogsheep repo. I've tested this on my personal Google Takeout mbox with ~520,000 emails going back to 2004. This took around ~20 minutes to process. To provide some feedback on the progress of the import I added the "rich" python module. I'm happy to remove that if adding a dependency is discouraged. However, I think it makes a nice addition to give feedback on the progress of a long import. Do we want to log emails that have errors when trying to import them? Dealing with encodings with emails is a bit tricky. I'm very open to feedback on how to deal with those better. As well as any other feedback for improvements. | 2021-02-22T21:30:40Z | 2021-07-28T07:18:56Z | 65182811d59451299e75f09b4366bb221bc32b20 | 0 | a3de045eba0fae4b309da21aa3119102b0efc576 | e54e544427f1cc3ea8189f0e95f54046301a8645 | FIRST_TIME_CONTRIBUTOR | google-takeout-to-sqlite 206649770 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5 | ||||||

| 592364255 | MDExOlB1bGxSZXF1ZXN0NTkyMzY0MjU1 | 16 | open | 0 | Add a fallback ID, print if no ID found | n8henrie 1234956 | Fixes https://github.com/dogsheep/healthkit-to-sqlite/issues/14 | 2021-03-13T13:38:29Z | 2021-03-13T14:44:04Z | 16ab307b2138891f226a66e4954c5470de753a0f | 0 | 27b3d54ccfe7d861770a9d0b173f6503580fea4a | 71e36e1cf034b96de2a8e6652265d782d3fdf63b | FIRST_TIME_CONTRIBUTOR | healthkit-to-sqlite 197882382 | https://github.com/dogsheep/healthkit-to-sqlite/pull/16 | ||||||

| 596627780 | MDExOlB1bGxSZXF1ZXN0NTk2NjI3Nzgw | 18 | open | 0 | Add datetime parsing | n8henrie 1234956 | Parses the datetime columns so they are subsequently properly recognized as datetime. Fixes https://github.com/dogsheep/healthkit-to-sqlite/issues/17 | 2021-03-19T14:34:22Z | 2021-03-19T14:34:22Z | c87f4e8aa88ec277c6b5a000670c2cb42a10c03d | 0 | e0e7a0f99f844db33964b27c29b0b8d5f160202b | 71e36e1cf034b96de2a8e6652265d782d3fdf63b | FIRST_TIME_CONTRIBUTOR | healthkit-to-sqlite 197882382 | https://github.com/dogsheep/healthkit-to-sqlite/pull/18 | ||||||

| 613178968 | MDExOlB1bGxSZXF1ZXN0NjEzMTc4OTY4 | 1296 | open | 0 | Dockerfile: use Ubuntu 20.10 as base | tmcl-it 82332573 | This PR changes the main Dockerfile to use ubuntu:20.10 as base image instead of python:3.9.2-slim-buster (itself based on debian:buster-slim). The Dockerfile is essentially the one from https://github.com/simonw/datasette/issues/1249#issuecomment-803698983 with some additional cleanups to slim it down. This fixes a couple of issues: 1. The SQLite version in Debian Buster (2.6.0) doesn't support generated columns 2. Installing SpatiaLite from the Debian sid repositories has the side effect of also installing updates to libc and libstdc++ from sid. As a bonus, the Docker image becomes smaller: ``` $ docker image ls REPOSITORY TAG IMAGE ID CREATED SIZE datasette 0.56-ubuntu f7aca255140a 5 hours ago 212MB datasetteproject/datasette 0.56 efb3b282f390 13 days ago 258MB ``` ### Reproduction of the first issue ``` $ curl -O https://latest.datasette.io/fixtures.db % Total % Received % Xferd Average Speed Time Time Time Current Dload Upload Total Spent Left Speed 100 260k 0 260k 0 0 489k 0 --:--:-- --:--:-- --:--:-- 489k $ docker run -v `pwd`:/mnt datasetteproject/datasette:0.56 datasette /mnt/fixtures.db Traceback (most recent call last): File "/usr/local/bin/datasette", line 8, in <module> sys.exit(cli()) File "/usr/local/lib/python3.9/site-packages/click/core.py", line 829, in __call__ return self.main(*args, **kwargs) File "/usr/local/lib/python3.9/site-packages/click/core.py", line 782, in main rv = self.invoke(ctx) File "/usr/local/lib/python3.9/site-packages/click/core.py", line 1259, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "/usr/local/lib/python3.9/site-packages/click/core.py", line 1066, in invoke return ctx.invoke(self.callback, **ctx.params) File "/usr/local/lib/python3.9/site-packages/click/core.py", line 610, in invoke return callback(*args, … | 2021-04-12T00:23:32Z | 2021-07-20T08:52:13Z | 2ba522dbd7168a104a33621598c5a2460aae3e74 | 0 | 8f00c312f6b8ab5cecbb8a698ab4ad659aabf4ef | c73af5dd72305f6a01ea94a2c76d52e5e26de38b | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1296 | ||||||

| 645100848 | MDExOlB1bGxSZXF1ZXN0NjQ1MTAwODQ4 | 12 | open | 0 | Recovering of malformed ENEX file | engdan77 8431437 | Hey .. Awesome work developing this project, that I found very useful to me and saved me some work.. Thanks.. :) Some background to this PR... I've been searching around for a tool allowing me to transforming my personal collection of Evernote notes to a format easier to search and potentially easier import to future services. Now I discovered problem processing my large data ~5GB using the existing source using Pythons builtin xml-parser that unfortunately was unable to succeed without exception breaking the process. My first attempt I tried to adapt to more robust lxml package allowing huge data and with "recover", but even if it worked better it also failed processing the whole data. Even using the memory efficient etree.iterparse() it also unfortunately got into trouble. And with no luck finding any other libraries successfully parsing this enormous file I instead chose to build a "hugexmlparser" module that allows parsing this huge file using yield (on a byte-to-byte-level) and allows you to set a maximum size for <note> to cater for potential malformed or undesirable large attachments to export, should succeed covering potential exceptions. Some cases found where the parses discover malformed XML within <content> so also in those cases try to save as much as possible by escaping (to be dealt at a later stage, better than nothing), and if a missing end </note> before new (malformed?) it would add this after encounter a new start-tag. The code for the recovery process is a bit rough and for certain room for refactoring, but at the moment is seem to achieve what I wanted. Now with the above we pass this a minor changed version of save_note_recovery() assure the existing works. Also adding this as a new recover-enex command to click and kept the original options. A couple of new tests was added as well to check against using this command. Now this currently works to me, but thought I might share a PR in such as you find use for this yourself or found useful to others finding this rep… | 2021-05-15T07:49:31Z | 2021-05-15T19:57:50Z | 95f21ca163606db74babd036e6fa44b7d484d137 | 0 | a5839dadaa43694f208ad74a53670cebbe756956 | 0bc6ba503eecedb947d2624adbe1327dd849d7fe | FIRST_TIME_CONTRIBUTOR | evernote-to-sqlite 303218369 | https://github.com/dogsheep/evernote-to-sqlite/pull/12 | ||||||

| 672053811 | MDExOlB1bGxSZXF1ZXN0NjcyMDUzODEx | 65 | open | 0 | basic support for events | khimaros 231498 | a quick first pass at implementing the feature requested in https://github.com/dogsheep/github-to-sqlite/issues/64 testing instructions: ``` $ github-to-sqlite events events.db user/khimaros ``` if the specified user is the authenticated user, it will also include private events. caveat: pagination appears to be broken (i don't see `next` in the response JSON from GitHub) | 2021-06-17T00:51:30Z | 2022-10-03T22:35:03Z | 0a252a06a15e307c8a67b2e0aac0907e2566bf19 | 0 | 82da9f91deda81d92ec64c9eda960aa64340c169 | 0e45b72312a0756e5a562effbba08cb8de1e480b | FIRST_TIME_CONTRIBUTOR | github-to-sqlite 207052882 | https://github.com/dogsheep/github-to-sqlite/pull/65 | ||||||

| 673872974 | MDExOlB1bGxSZXF1ZXN0NjczODcyOTc0 | 7 | open | 0 | Add instagram-to-sqlite | gavindsouza 36654812 | The tool covers only chat imports at the time of opening this PR but I'm planning to import everything else that I feel inquisitive about ref: https://github.com/gavindsouza/instagram-to-sqlite | 2021-06-19T12:26:16Z | 2021-07-28T07:58:59Z | 66e9828db4a8ddc4049ab9932e1304288e571821 | 0 | 4e4c6baf41778071a960d288b0ef02bd01cb6376 | 92c6bb77629feeed661c7b8d9183a11367de39e0 | FIRST_TIME_CONTRIBUTOR | dogsheep.github.io 214746582 | https://github.com/dogsheep/dogsheep.github.io/pull/7 | ||||||

| 692557381 | MDExOlB1bGxSZXF1ZXN0NjkyNTU3Mzgx | 1399 | open | 0 | Multiple sort | jgryko5 87192257 | Closes #197. I have added support for sorting by multiple parameters as mentioned in the issue above, and together with that, a suggestion on how to implement such sorting in the user interface. | 2021-07-19T12:20:14Z | 2021-07-19T12:20:14Z | 3161cd1202824921054cf78d82c1d8c07b140451 | 0 | 739697660382e4d2974619b4a5605baef87d233a | c73af5dd72305f6a01ea94a2c76d52e5e26de38b | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1399 | ||||||

| 698423667 | MDExOlB1bGxSZXF1ZXN0Njk4NDIzNjY3 | 8 | open | 0 | Add Gmail takeout mbox import (v2) | maxhawkins 28565 | WIP This PR builds on #5 to continue implementing gmail import support. Building on @UtahDave's work, these commits add a few performance and bug fixes: * Decreased memory overhead for import by manually parsing mbox headers. * Fixed error where some messages in the mbox would yield a row with NULL in all columns. I will send more commits to fix any errors I encounter as I run the importer on my personal takeout data. | 2021-07-28T07:05:32Z | 2023-09-08T01:22:49Z | d2809fd3fd835358d01ad10401228a562539b29e | 0 | 8e6d487b697ce2e8ad885acf613a157bfba84c59 | e54e544427f1cc3ea8189f0e95f54046301a8645 | FIRST_TIME_CONTRIBUTOR | google-takeout-to-sqlite 206649770 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/8 | ||||||

| 716357982 | MDExOlB1bGxSZXF1ZXN0NzE2MzU3OTgy | 66 | open | 0 | Add --merged-by flag to pull-requests sub command | sarcasticadmin 30531572 | ## Description Proposing a solution to the API limitation for `merged_by` in pull_requests. Specifically the following called out in the readme: ``` Note that the merged_by column on the pull_requests table will only be populated for pull requests that are loaded using the --pull-request option - the GitHub API does not return this field for pull requests that are loaded in bulk. ``` This approach might cause larger repos to hit rate limits called out in https://github.com/dogsheep/github-to-sqlite/issues/51 but seems to work well in the repos I tested and included below. ## Old Behavior - Had to list out the pull-requests individually via multiple `--pull-request` flags ## New Behavior - `--merged-by` flag for getting 'merge_by' information out of pull-requests without having to specify individual PR numbers. # Testing Picking some repo that has more than one merger (datasette only has 1 😉 ) ``` $ github-to-sqlite pull-requests ./github.db opnsense/tools --merged-by $ echo "select id, url, merged_by from pull_requests;" | sqlite3 ./github.db 83533612|https://github.com/opnsense/tools/pull/39|1915288 102632885|https://github.com/opnsense/tools/pull/43|1915288 149114810|https://github.com/opnsense/tools/pull/57|1915288 160394495|https://github.com/opnsense/tools/pull/64|1915288 163308408|https://github.com/opnsense/tools/pull/67|1915288 169723264|https://github.com/opnsense/tools/pull/69|1915288 171381422|https://github.com/opnsense/tools/pull/72|1915288 179938195|https://github.com/opnsense/tools/pull/77|1915288 196233824|https://github.com/opnsense/tools/pull/82|1915288 215289964|https://github.com/opnsense/tools/pull/93| 219696100|https://github.com/opnsense/tools/pull/97|1915288 223664843|https://github.com/opnsense/tools/pull/99| 228446172|https://github.com/opnsense/tools/pull/103|1915288 238930434|https://github.com/opnsense/tools/pull/110|1915288 255507110|https://github.com/opnsense/tools/pull/119|1915288 255980675|https://github.com/opnsense/tools/pull/120… | 2021-08-20T00:57:55Z | 2021-09-28T21:50:31Z | 6b4276d9469e4579c81588ac9e3d128026d919a0 | 0 | a92a31d5d446022baeaf7f3c9ea107094637e64d | ed3752022e45b890af63996efec804725e95d0d4 | FIRST_TIME_CONTRIBUTOR | github-to-sqlite 207052882 | https://github.com/dogsheep/github-to-sqlite/pull/66 | ||||||

| 718734191 | MDExOlB1bGxSZXF1ZXN0NzE4NzM0MTkx | 22 | open | 0 | Make sure that case-insensitive column names are unique | FabianHertwig 32016596 | This closes #21. When there are metadata entries with the same case insensitive string, then there is an error when trying to create a new column for that metadata entry in the database table, because a column with that case insensitive name already exists. ```xml <Record type="HKQuantityTypeIdentifierDietaryCholesterol" sourceName="MyFitnessPal" sourceVersion="35120" unit="mg" creationDate="2021-07-04 20:55:27 +0200" startDate="2021-07-04 20:55:00 +0200" endDate="2021-07-04 20:55:00 +0200" value="124"> <MetadataEntry key="meal" value="Dinner"/> <MetadataEntry key="Meal" value="Dinner"/> </Record> ``` The code added in this PR checks if a key already exists in a record and if so adds a number at its end. The resulting column names look like the example below then. Interestingly, the column names viewed with Datasette are not case insensitive. ```text startDate, endDate, value, unit, sourceName, sourceVersion, creationDate, metadata_meal, metadata_Meal_2, metadata_Mahlzeit ``` | 2021-08-24T13:13:38Z | 2021-08-24T13:26:20Z | c757d372c10284cd6fa58d144549bc89691341c3 | 0 | b16fb556f84a0eed262a518ca7ec82a467155d23 | 9fe3cb17e03d6c73222b63e643638cf951567c4c | FIRST_TIME_CONTRIBUTOR | healthkit-to-sqlite 197882382 | https://github.com/dogsheep/healthkit-to-sqlite/pull/22 | ||||||

| 721686721 | MDExOlB1bGxSZXF1ZXN0NzIxNjg2NzIx | 67 | open | 0 | Replacing step ID key with step_id | jshcmpbll 16374374 | Workflows that have an `id` in any step result in the following error when running `workflows`: e.g.`github-to-sqlite workflows github.db nixos/nixpkgs` ```Traceback (most recent call last): File "/usr/local/bin/github-to-sqlite", line 8, in <module> sys.exit(cli()) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 1137, in __call__ return self.main(*args, **kwargs) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 1062, in main rv = self.invoke(ctx) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 1668, in invoke```Traceback (most recent call last): File "/usr/local/bin/github-to-sqlite", line 8, in <module> sys.exit(cli()) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 1137, in __call__ return self.main(*args, **kwargs) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 1062, in main rv = self.invoke(ctx) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 1668, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 1404, in invoke return ctx.invoke(self.callback, **ctx.params) File "/usr/local/lib/python3.8/dist-packages/click/core.py", line 763, in invoke return __callback(*args, **kwargs) File "/usr/local/lib/python3.8/dist-packages/github_to_sqlite/cli.py", line 601, in workflows utils.save_workflow(db, repo_id, filename, content) File "/usr/local/lib/python3.8/dist-packages/github_to_sqlite/utils.py", line 865, in save_workflow db["steps"].insert_all( File "/usr/local/lib/python3.8/dist-packages/sqlite_utils/db.py", line 2596, in insert_all self.insert_chunk( File "/usr/local/lib/python3.8/dist-packages/sqlite_utils/db.py", line 2378, in insert_chunk result = self.db.execute(query, params) File "/usr/local/lib/python3.8/dist-packages/sqlite_utils/db.py", line 419, in execute return self.conn.execute(sql, parameters) … | 2021-08-28T01:26:41Z | 2021-08-28T01:27:00Z | 9f73c9bf29dec9a1482d9af56b9fac271869585c | 0 | 9b5acceb25cf48b00e9c6c8293358b036440deb2 | ed3752022e45b890af63996efec804725e95d0d4 | FIRST_TIME_CONTRIBUTOR | github-to-sqlite 207052882 | https://github.com/dogsheep/github-to-sqlite/pull/67 | ||||||

| 726990680 | MDExOlB1bGxSZXF1ZXN0NzI2OTkwNjgw | 35 | open | 0 | Support for Datasette's --base-url setting | brandonrobertz 2670795 | This makes it so you can use Dogsheep if you're using Datasette with the `--base-url /some-path/` setting. | 2021-09-03T17:47:45Z | 2021-09-03T17:47:45Z | 0f5931da2099303111c49ec726b78bae814f755e | 0 | e6679d287b2e97fc94f50da64e1a7b91c1fbbf67 | a895bc360f2738c7af43deda35c847f1ee5bff51 | FIRST_TIME_CONTRIBUTOR | dogsheep-beta 197431109 | https://github.com/dogsheep/dogsheep-beta/pull/35 | ||||||

| 727390835 | MDExOlB1bGxSZXF1ZXN0NzI3MzkwODM1 | 36 | open | 0 | Correct naming of tool in readme | badboy 2129 | 2021-09-05T12:05:40Z | 2022-01-06T16:04:46Z | 358678c6b48072769f2985fe6be8fc5e54ed2e06 | 0 | bf26955c250e601a0d9e751311530940b704f81e | edc80a0d361006f478f2904a90bfe6c730ed6194 | FIRST_TIME_CONTRIBUTOR | dogsheep-photos 256834907 | https://github.com/dogsheep/dogsheep-photos/pull/36 | |||||||

| 737690951 | PR_kwDOBm6k_c4r-EVH | 1475 | open | 0 | feat: allow joins using _through in both directions | bram2000 5268174 | Currently the `_through` clause can only work if the FK relationship is defined in a specific direction. I don't think there is any reason for this limitation, as an FK allows joining in both directions. This is an admittedly hacky change to implement bidirectional joins using `_through`. It does work for our use-case, but I don't know if there are other implications that I haven't thought of. Also if this change is desirable we probably want to make the code a little nicer. | 2021-09-20T15:28:20Z | 2021-09-20T15:28:20Z | aa2f1c103730c0ede4ab67978288d91bbe1e00a6 | 0 | edf3c4c3271c8f13ab4c28ad88b817e115477e41 | b28b6cd2fe97f7e193a235877abeec2c8eb0a821 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1475 | ||||||

| 747742034 | PR_kwDODFdgUs4skaNS | 68 | open | 0 | Add support for retrieving teams / members | philwills 68329 | Adds a method for retrieving all the teams within an organisation and all the members in those teams. The latter is stored as a join table `team_members` beteween `teams` and `users`. | 2021-10-01T15:55:02Z | 2021-10-01T15:59:53Z | f46e276c356c893370d5893296f4b69f08baf02c | 0 | cc838e87b1eb19b299f277a07802923104f35ce2 | ed3752022e45b890af63996efec804725e95d0d4 | FIRST_TIME_CONTRIBUTOR | github-to-sqlite 207052882 | https://github.com/dogsheep/github-to-sqlite/pull/68 | ||||||

| 754942128 | PR_kwDOBm6k_c4s_4Cw | 1484 | closed | 0 | GitHub Actions: Add Python 3.10 to the tests | cclauss 3709715 | 2021-10-11T06:03:03Z | 2021-10-11T06:03:31Z | 2021-10-11T06:03:28Z | 69027b8c3e0e2236acd817a6fa5d32f762e3e9aa | 0 | 02c3218ca093df8b595d8ba7d88a32a0207b6385 | 0d5cc20aeffa3537cfc9296d01ec24b9c6e23dcf | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1484 | ||||||

| 771790589 | PR_kwDOEhK-wc4uAJb9 | 15 | open | 0 | include note tags in the export | d-rep 436138 | When parsing the Evernote `<note>` elements, the script will now also parse any nested `<tag>` elements, writing them out into a separate sqlite table. Here is an example of how to query the data after the script has run: ``` select notes.*, (select group_concat(tag) from notes_tags where notes_tags.note_id=notes.id) as tags from notes; ``` My .enex source file is 3+ years old so I am assuming the structure hasn't changed. Interestingly, my _notebook names_ show up in the _tags_ list where the tag name is prefixed with `notebook_`, so this could maybe help work around the first limitation mentioned in the [evernote-to-sqlite blog post](https://simonwillison.net/2020/Oct/16/building-evernote-sqlite-exporter/). | 2021-11-02T20:04:31Z | 2021-11-02T20:04:31Z | ee36aba995b0a5385bdf9a451851dcfc316ff7f6 | 0 | 8cc3aa49c6e61496b04015c14048c5dac58d6b42 | fff89772b4404995400e33fe1d269050717ff4cf | FIRST_TIME_CONTRIBUTOR | evernote-to-sqlite 303218369 | https://github.com/dogsheep/evernote-to-sqlite/pull/15 | ||||||

| 775078665 | PR_kwDODFE5qs4uMsMJ | 9 | open | 0 | Removed space from filename My Activity.json | widadmogral 91880982 | File name from google takeout has no space. The code only runs without error if filename is "MyActivity.json" and not "My Activity.json". Is it a new change by Google? | 2021-11-08T00:04:31Z | 2021-11-08T00:04:31Z | 236da5c8302c09a20fcd4164c563cd9fa5c9595c | 0 | 6d111f65687e13ffd8b39aa05f1f8f4a351e7788 | e54e544427f1cc3ea8189f0e95f54046301a8645 | FIRST_TIME_CONTRIBUTOR | google-takeout-to-sqlite 206649770 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/9 | ||||||

| 862538586 | PR_kwDODFdgUs4zaUta | 70 | open | 0 | scrape-dependents: enable paging through package menu option if present | stanbiryukov 36061055 | Some repos organize network dependents by a Package toggle. This PR adds the ability to page through those options and scrape underlying dependents. | 2022-02-24T15:07:25Z | 2022-02-24T15:07:25Z | 36cca3584a07d88d1e505111d1b23294d66ba73e | 0 | cc8f276a474525e55ed0bcacb0cd8cc560f89614 | 751bc900366ca52e662ea383b858cbf4365093d9 | FIRST_TIME_CONTRIBUTOR | github-to-sqlite 207052882 | https://github.com/dogsheep/github-to-sqlite/pull/70 | ||||||

| 872242672 | PR_kwDODEm0Qs4z_V3w | 65 | open | 0 | Update Twitter dev link, clarify apps vs projects | rixx 2657547 | Twitter pushes you heavily towards v2 projects instead of v1 apps – I know the README mentions v1 API compatibility at the top, but I still nearly got turned around here. | 2022-03-05T11:56:08Z | 2022-03-05T11:56:08Z | 765a450845ba26fac102d9154980cd936399546c | 0 | b7cfe9dcb7dbccc7ba8171cfe74f19227c4351ec | f09d611782a8372cfb002792dfa727325afb4db6 | FIRST_TIME_CONTRIBUTOR | twitter-to-sqlite 206156866 | https://github.com/dogsheep/twitter-to-sqlite/pull/65 | ||||||

| 943518450 | PR_kwDODEm0Qs44PPLy | 66 | open | 0 | Ageinfo workaround | ashanan 11887 | I'm not sure if this is due to a new format or just because my ageinfo file is blank, but trying to import an archive would crash when it got to that file. This PR adds a guard clause in the `ageinfo` transformer and sets a default value that doesn't throw an exception. Seems likely to be the same issue mentioned by danp in https://github.com/dogsheep/twitter-to-sqlite/issues/54, my ageinfo file looks the same. Added that same ageinfo file to the test archive as well to help confirm my workaround didn't break anything. Let me know if you want any changes! | 2022-05-21T21:08:29Z | 2022-05-21T21:09:16Z | c22e8eba634b70e914de9f72e452b1ebea55c6ef | 0 | 75ae7c94120d14083217bc76ebd603b396937104 | f09d611782a8372cfb002792dfa727325afb4db6 | FIRST_TIME_CONTRIBUTOR | twitter-to-sqlite 206156866 | https://github.com/dogsheep/twitter-to-sqlite/pull/66 | ||||||

| 948892757 | PR_kwDODFE5qs44jvRV | 11 | open | 0 | Update README.md | ashanan 11887 | Fix typo | 2022-05-27T03:13:59Z | 2022-05-27T03:13:59Z | 3d479a1052f2661de61b15c50b7a5b2daa20a33a | 0 | d4af1554a9b5ddedcd0b241450f7b935f38b9bf7 | e54e544427f1cc3ea8189f0e95f54046301a8645 | FIRST_TIME_CONTRIBUTOR | google-takeout-to-sqlite 206649770 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/11 | ||||||

| 964640654 | PR_kwDOBm6k_c45fz-O | 1757 | open | 0 | feat: add a wildcard for _json columns | ytjohn 163156 | This allows _json to accept a wildcard for when there are many JSON columns that the user wants to convert. I hope this is useful. I've tested it on our datasette and haven't ran into any issues. I imagine on a large set of results, there could be some performance issues, but it will probably be negligible for most use cases. On a side note, I ran into an issue where I had to upgrade black on my system beyond the pinned version in setup.py. Here is the upstream issue <<https://github.com/psf/black/issues/2964> . I didn't include this in the PR yet since I didn't look into the issue too far, but I can if you would like. | 2022-06-11T01:01:17Z | 2022-09-06T00:51:21Z | f302b919cb78f1e353fc14cb449cab4a93dcedc6 | 0 | 1cdcd8894ce2bb76cf29f8ffcdadedbb6fa0dac1 | 2e9751672d4fe329b3c359d5b7b1992283185820 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1757 | ||||||

| 986925985 | PR_kwDOD079W84600uh | 37 | open | 0 | Fix former command name in readme | DanLipsitt 578773 | Looks like a previous commit missed a `photo-to-sqlite`→ `dogsheep-photos` replacement. | 2022-07-05T02:09:13Z | 2022-07-05T02:09:13Z | 1fa5a3b9ddab2a954aea21ea4292b944e826866a | 0 | b0d256c5bc480450627d98d8c8a5e3d8c61dc2ae | 325aa38cb23d0757bb1335ee2ea94a082475a66e | FIRST_TIME_CONTRIBUTOR | dogsheep-photos 256834907 | https://github.com/dogsheep/dogsheep-photos/pull/37 | ||||||

| 998860509 | PR_kwDOBm6k_c47iWbd | 1772 | open | 0 | Convert to setup.cfg | kfdm 89725 | Recent versions of setuptools can run most things from setup.cfg so one can have a simpler version that does not require executing code on install. The bulk of the changes were automated by running https://pypi.org/project/setup-py-upgrade/ with a few minor edits for the bits that it can not auto convert (the initial `get_long_description()` and `get_version()` can not be automatically converted) | 2022-07-18T03:39:53Z | 2022-07-18T03:39:53Z | 3abb0780f97901ae39f8a206c7c6d376f8574ffc | 0 | c1b2f539c8d4cabe0a48d07bd8ce3fd1439a8f08 | 01369176b0a8943ab45292ffc6f9c929b80a00e8 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/1772 | ||||||

| 1038926741 | PR_kwDODtX3eM497MOV | 5 | open | 0 | The program fails when the user has no submissions | fernand0 2467 | Tested with: hacker-news-to-sqlite user hacker-news.db fernand0 Result: ` Traceback (most recent call last): File "/home/ftricas/.pyenv/versions/3.10.6/bin/hacker-news-to-sqlite", line 8, in <module> sys.exit(cli()) File "/home/ftricas/.pyenv/versions/3.10.6/lib/python3.10/site-packages/click/core.py", line 1130, in __call__ return self.main(*args, **kwargs) File "/home/ftricas/.pyenv/versions/3.10.6/lib/python3.10/site-packages/click/core.py", line 1055, in main rv = self.invoke(ctx) File "/home/ftricas/.pyenv/versions/3.10.6/lib/python3.10/site-packages/click/core.py", line 1657, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "/home/ftricas/.pyenv/versions/3.10.6/lib/python3.10/site-packages/click/core.py", line 1404, in invoke return ctx.invoke(self.callback, **ctx.params) File "/home/ftricas/.pyenv/versions/3.10.6/lib/python3.10/site-packages/click/core.py", line 760, in invoke return __callback(*args, **kwargs) File "/home/ftricas/.pyenv/versions/3.10.6/lib/python3.10/site-packages/hacker_news_to_sqlite/cli.py", line 27, in user submitted = user.pop("submitted", None) or [] AttributeError: 'NoneType' object has no attribute 'pop' ` There is a problem of style with the patch (but not sure what to do) because with the new inicialization ( submitted = []) the part or [] is not needed. Maybe there is a more adequate way of doing this. | 2022-08-28T17:25:45Z | 2022-08-28T17:25:45Z | f0d7414305fc6cba4bcb7506b76a94938ccc7886 | 0 | ea97e640ad7a24020821fde5c647240120bd7099 | c5585c103d124b23ba1e163f8857d4ba49fe452a | FIRST_TIME_CONTRIBUTOR | hacker-news-to-sqlite 248903544 | https://github.com/dogsheep/hacker-news-to-sqlite/pull/5 | ||||||

| 1047561919 | PR_kwDODFdgUs4-cIa_ | 76 | open | 0 | Add organization support to repos command | OverkillGuy 2757699 | New --organization flag to signify all given "usernames" are private orgs. Adapts API URL to the organization path instead. Not the best implementation, but a first draft to talk around Fixes #75 (badly, no tests, overly vague, untested) | 2022-09-06T13:21:42Z | 2022-09-06T13:59:08Z | 1514acfa87f57261547bc3d7fc4f161e34285d76 | 0 | bb959b46e8a7647755c14dee180fdd5209451954 | ace13ec3d98090d99bd71871c286a4a612c96a50 | FIRST_TIME_CONTRIBUTOR | github-to-sqlite 207052882 | https://github.com/dogsheep/github-to-sqlite/pull/76 | ||||||

| 1073492809 | PR_kwDODD6af84__DNJ | 14 | open | 0 | Photo links | redmanmale 6782721 | * add to `checkin_details` view new column for a calculated photo links * supported multiple links split by newline * create `events` table if there's no events in the history to avoid SQL errors Fixes #9. | 2022-10-01T09:44:15Z | 2022-11-18T17:10:49Z | 6ca283dc30a2713bd3fda0dc35df1c7186a5996e | 0 | 5541d9496bad73c9edce98f5562a3135359d57d6 | 719b6e96a016d0ca8b316d3bed9c2a7a0cb499ee | FIRST_TIME_CONTRIBUTOR | swarm-to-sqlite 205429375 | https://github.com/dogsheep/swarm-to-sqlite/pull/14 | ||||||

| 1179812287 | PR_kwDODEm0Qs5GUoG_ | 67 | open | 0 | Add support for app-only bearer tokens | sometimes-i-send-pull-requests 26161409 | Previously, twitter-to-sqlite only supported OAuth1 authentication, and the token must be on behalf of a user. However, Twitter also supports application-only bearer tokens, documented here: https://developer.twitter.com/en/docs/authentication/oauth-2-0/bearer-tokens This PR adds support to twitter-to-sqlite for using application-only bearer tokens. To use, the auth.json file just needs to contain a "bearer_token" key instead of "api_key", "api_secret_key", etc. | 2022-12-28T23:31:20Z | 2022-12-28T23:31:20Z | 7825cd68047088cbdc9666586f1af9b7e1fa88c2 | 0 | 52050d06eeb85f3183b086944b7b75ae758096cd | f09d611782a8372cfb002792dfa727325afb4db6 | FIRST_TIME_CONTRIBUTOR | twitter-to-sqlite 206156866 | https://github.com/dogsheep/twitter-to-sqlite/pull/67 | ||||||

| 1179812491 | PR_kwDODEm0Qs5GUoKL | 68 | open | 0 | Archive: Import mute table | sometimes-i-send-pull-requests 26161409 | 2022-12-28T23:32:06Z | 2022-12-28T23:32:06Z | 47d4d3bda6d4123f58d8dbd634f9f146d97b037e | 0 | e1cd68ea0244c4689a3c49799c6b24371cdc4978 | f09d611782a8372cfb002792dfa727325afb4db6 | FIRST_TIME_CONTRIBUTOR | twitter-to-sqlite 206156866 | https://github.com/dogsheep/twitter-to-sqlite/pull/68 | |||||||

| 1179812620 | PR_kwDODEm0Qs5GUoMM | 69 | open | 0 | Archive: Import new tweets table name | sometimes-i-send-pull-requests 26161409 | Given the code here, it seems like in the past this file was named "tweet.js". In recent exports, it's named "tweets.js". The archive importer needs to be modified to take this into account. Existing logic is reused for importing this table. (However, the resulting table name will be different, matching the different file name -- archive_tweets, rather than archive_tweet). | 2022-12-28T23:32:44Z | 2022-12-28T23:32:44Z | 1a8c02a8d349c8fd4074139a6a3eed552676bdf3 | 0 | 11e8fa64ca30cebde047a4268e65f376c42e2b60 | f09d611782a8372cfb002792dfa727325afb4db6 | FIRST_TIME_CONTRIBUTOR | twitter-to-sqlite 206156866 | https://github.com/dogsheep/twitter-to-sqlite/pull/69 | ||||||

| 1179812730 | PR_kwDODEm0Qs5GUoN6 | 70 | open | 0 | Archive: Import Twitter Circle data | sometimes-i-send-pull-requests 26161409 | 2022-12-28T23:33:09Z | 2022-12-28T23:33:09Z | 1d2683101571550adf4a3b7bdf8e9ffbd8b77b61 | 0 | cc80cb31a9afb9a50295d6202f509e5b500607a0 | f09d611782a8372cfb002792dfa727325afb4db6 | FIRST_TIME_CONTRIBUTOR | twitter-to-sqlite 206156866 | https://github.com/dogsheep/twitter-to-sqlite/pull/70 | |||||||

| 1179812838 | PR_kwDODEm0Qs5GUoPm | 71 | open | 0 | Archive: Fix "ni devices" typo in importer | sometimes-i-send-pull-requests 26161409 | 2022-12-28T23:33:31Z | 2022-12-28T23:33:31Z | 7905dbd6e36bcabcfd9106c70ebb36ecf9e38260 | 0 | 0d3c62e8ba6e545785069cc0ffc8dc1bad03db80 | f09d611782a8372cfb002792dfa727325afb4db6 | FIRST_TIME_CONTRIBUTOR | twitter-to-sqlite 206156866 | https://github.com/dogsheep/twitter-to-sqlite/pull/71 | |||||||

| 1182000455 | PR_kwDOC8tyDs5Gc-VH | 23 | open | 0 | Include workout statistics | badboy 2129 | Not sure when this changed (iOS 16 maybe?), but the `WorkoutStatistics` now has a whole bunch of information about workouts, e.g. for runs it contains the distance (as a `<WorkoutStatistics type="HKQuantityTypeIdentifierDistanceWalkingRunning ...>` element). Adding it as another column at leat allows me to pull these out (using SQLite's JSON support). I'm running with this patch on my own data now. | 2023-01-01T17:29:56Z | 2023-01-01T17:29:57Z | e0ad45055f363810085119f26df87a6804451056 | 0 | d5b9e3609961515cc52bcc5ef070e3b83b473339 | 9fe3cb17e03d6c73222b63e643638cf951567c4c | FIRST_TIME_CONTRIBUTOR | healthkit-to-sqlite 197882382 | https://github.com/dogsheep/healthkit-to-sqlite/pull/23 | ||||||

| 1238017010 | PR_kwDOBm6k_c5JyqPy | 2025 | open | 0 | Add database metadata to index.html template context | palewire 9993 | Fixes #2016 <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2025.org.readthedocs.build/en/2025/ <!-- readthedocs-preview datasette end --> | 2023-02-12T11:16:58Z | 2023-02-12T11:17:14Z | a2d3bb02cf2c9b8ed7c788910fdda606108cd584 | 0 | 912ed9de92d1bb9a28f50a2e08c5e7df2b827c15 | 0b4a28691468b5c758df74fa1d72a823813c96bf | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2025 | ||||||

| 1243080434 | PR_kwDOBm6k_c5KF-by | 2026 | open | 0 | Avoid repeating primary key columns if included in _col args | runderwood 8513 | ...while maintaining given order. Fixes #1975 (if I'm understanding correctly). <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2026.org.readthedocs.build/en/2026/ <!-- readthedocs-preview datasette end --> | 2023-02-16T04:16:25Z | 2023-02-16T04:16:41Z | ad2bfc72186e7af2244a6f27e02754f4c2f64910 | 0 | f15adf1d6211e05250e5492826dd3f8e8e328077 | 0b4a28691468b5c758df74fa1d72a823813c96bf | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2026 | ||||||

| 1259276997 | PR_kwDOBm6k_c5LDwrF | 2031 | open | 0 | Expand foreign key references in row view as well | tmcl-it 82332573 | Unlike the table view, the single row view does not resolve foreign key references into labels. This patch extracts the foreign key reference expansion code from TableView.data() into a standalone function that is then called by both TableView.data() and RowView.data(). <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2031.org.readthedocs.build/en/2031/ <!-- readthedocs-preview datasette end --> | 2023-03-01T18:43:09Z | 2023-03-24T18:35:25Z | ee7aec6175d0934c0420002529d21a767d2a527d | 0 | ef25867492ce6eb69492aa37fcde98936a95365c | 3feed1f66e2b746f349ee56970a62246a18bb164 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2031 | ||||||

| 1266742462 | PR_kwDOBm6k_c5LgPS- | 2034 | open | 0 | remove an unused `app` var in cli.py | wenhoujx 4370201 | this var `app` isn't actually used? unless init it does some side-effect outside of the event loop, idon't think it's necessary. Feel free to ignore this PR if the deleted line actually does something. <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2034.org.readthedocs.build/en/2034/ <!-- readthedocs-preview datasette end --> | 2023-03-07T18:19:05Z | 2023-03-29T20:56:20Z | 9bd2128399e6dff33f97b3aa7adbd8f3a36daad7 | 0 | 28239c5bed362f2b9ee9e780bf23e5f31b680b5d | 1ad92a1d87d79084ebe524ed186c900ff042328c | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2034 | ||||||

| 1289476973 | PR_kwDOBm6k_c5M29tt | 2044 | open | 0 | Expand labels in row view as well (patch for 0.64.x branch) | tmcl-it 82332573 | This is a version of #2031 for the 0.64.x branch. <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2044.org.readthedocs.build/en/2044/ <!-- readthedocs-preview datasette end --> | 2023-03-24T18:44:44Z | 2023-03-24T18:44:57Z | 175202f5238c23fc6b76a0119d3ce9917d1570d1 | 0 | c039d23a730448a7d82f9239cfb445aa1f7a4f16 | 2a0a94fe972e4b1556e73026dc381d297bc906bc | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2044 | ||||||

| 1290512937 | PR_kwDODtX3eM5M66op | 6 | open | 0 | Add permalink virtual field to items table | xavdid 1231935 | I added a virtual column (no storage overhead) to the output that easily links back to the source. It works nicely out of the box with datasette:  I got bit a bit by https://github.com/simonw/sqlite-utils/issues/411, so I went with a manual `table_xinfo` and creating the table via execute. Happy to adjust if that issue moves, but this seems like it works. I also added my best-guess instructions for local development on this package. I'm shooting in the dark, so feel free to replace with how you work on it locally. | 2023-03-26T22:22:38Z | 2023-03-29T18:38:52Z | 99bda9434e0adaa8459bc0abbe6262785cd4086c | 0 | b04d6c76c26820f2e0b04da58dd82789e83cbb42 | c5585c103d124b23ba1e163f8857d4ba49fe452a | FIRST_TIME_CONTRIBUTOR | hacker-news-to-sqlite 248903544 | https://github.com/dogsheep/hacker-news-to-sqlite/pull/6 | ||||||

| 1299129869 | PR_kwDOJHON9s5NbyYN | 13 | open | 0 | use universal command | amlestin 14314871 | 2023-04-02T15:10:54Z | 2023-04-02T15:37:34Z | b40fdee5efac03f10257f749ee7f69e4692ad6c5 | 0 | 8111718e747f59dddcb5bf7820ce922e0723c04a | e55a802d37a896475b6cf475c1ba947af63cca73 | FIRST_TIME_CONTRIBUTOR | apple-notes-to-sqlite 611552758 | https://github.com/dogsheep/apple-notes-to-sqlite/pull/13 | |||||||

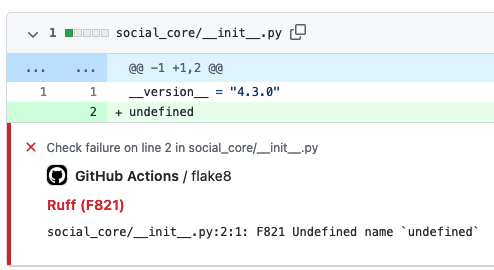

| 1308472112 | PR_kwDOBm6k_c5N_bMw | 2056 | open | 0 | GitHub Action to lint Python code with ruff | cclauss 3709715 | [Ruff](https://beta.ruff.rs/) supports [over 500 lint rules](https://beta.ruff.rs/docs/rules) and can be used to replace [Flake8](https://pypi.org/project/flake8/) (plus dozens of plugins), [isort](https://pypi.org/project/isort/), [pydocstyle](https://pypi.org/project/pydocstyle/), [yesqa](https://github.com/asottile/yesqa), [eradicate](https://pypi.org/project/eradicate/), [pyupgrade](https://pypi.org/project/pyupgrade/), and [autoflake](https://pypi.org/project/autoflake/), all while executing (in Rust) tens or hundreds of times faster than any individual tool. The ruff Action uses minimal steps to run in ~5 seconds, rapidly providing intuitive GitHub Annotations to contributors.  <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2056.org.readthedocs.build/en/2056/ <!-- readthedocs-preview datasette end --> | 2023-04-11T06:41:27Z | 2023-04-15T14:24:46Z | 608507fe15679221ff74d437339ee66e3d21a7ae | 0 | f53b78029321cd9bd5661accb5bd043d62e35a85 | 5890a20c374fb0812d88c9b0ef26a838bfa06c76 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2056 | ||||||

| 1319390463 | PR_kwDOBm6k_c5OpEz_ | 2061 | open | 0 | Add "Packaging a plugin using Poetry" section in docs | rclement 1238873 | This PR adds a new section about packaging a plugin using `poetry` within the "Writing plugins" page of the documentation. <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2061.org.readthedocs.build/en/2061/ <!-- readthedocs-preview datasette end --> | 2023-04-19T07:23:28Z | 2023-04-19T07:27:18Z | e777f394dc770714055e48c952e04c1620454e3e | 0 | 2650e3ca2c5ae4f21efe216f9959be31d9e58eed | 5890a20c374fb0812d88c9b0ef26a838bfa06c76 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2061 | ||||||

| 1349830554 | PR_kwDOBm6k_c5QdMea | 2074 | open | 0 | sort files by mtime | abbbi 3919561 | serving multiple database files and getting tired by the default sort, changes so the sort order puts the latest changed databases to be on top of the list so don't have to scroll down, lazy as i am ;) <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2074.org.readthedocs.build/en/2074/ <!-- readthedocs-preview datasette end --> | 2023-05-14T15:25:15Z | 2023-05-14T15:25:29Z | 4fe4822c999a3003a9e3093814274190ea05e522 | 0 | 689e3b0155612c766607feea10bc2e67e1c2a6da | 49184c569cd70efbda4f3f062afef3a34401d8d5 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2074 | ||||||

| 1355563020 | PR_kwDOBm6k_c5QzEAM | 2076 | open | 0 | Datsette gpt plugin | StudioCordillera 130708713 | <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2076.org.readthedocs.build/en/2076/ <!-- readthedocs-preview datasette end --> | 2023-05-18T11:22:30Z | 2023-05-18T11:22:45Z | 71658bfe72b75bdceb2b29e13fd610511c8b908c | 0 | 093693edd2a177a38cbc5570aaef769e5cbffac1 | 49184c569cd70efbda4f3f062afef3a34401d8d5 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2076 | ||||||

| 1372714762 | PR_kwDOBm6k_c5R0fcK | 2082 | open | 0 | Catch query interrupted on facet suggest row count | redraw 10843208 | Just like facet's `suggest()` is trapping `QueryInterrupted` for facet columns, we also need to trap `get_row_count()`, which can reach timeout if database tables are big enough. I've included `get_columns()` inside the block as that's just another query, despite it's a really cheap one and might never raise the exception. <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2082.org.readthedocs.build/en/2082/ <!-- readthedocs-preview datasette end --> | 2023-05-31T18:42:46Z | 2023-05-31T18:45:26Z | eadc26004d50f38f28b032056821ba319c9f2e87 | 0 | dfe99af36c11b88ffcb5ca602d72cee1b8acd8bc | dda99fc09fb0b5523948f6d481c6c051c1c7b5de | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2082 | ||||||

| 1425647904 | PR_kwDOBm6k_c5U-akg | 2096 | open | 0 | Clarify docs for descriptions in metadata | garthk 15906 | G'day! I got confused while debugging, earlier today. That's on me, but it does strike me a little repetition in the metadata documentation might help those flicking around it rather than reading it from top to bottom. No worries if you think otherwise. <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2096.org.readthedocs.build/en/2096/ <!-- readthedocs-preview datasette end --> | 2023-07-08T01:57:58Z | 2023-07-08T01:58:13Z | 88388864859b24707f4be9574e357910c368b0fe | 0 | 3b787aae74b683414f3546bc9979e9f2c0ae13e9 | 8cd60fd1d899952f1153460469b3175465f33f80 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2096 | ||||||

| 1432754160 | PR_kwDOBm6k_c5VZhfw | 2100 | open | 0 | Make primary key view accessible to render_cell hook | meowcat 1563881 | <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2100.org.readthedocs.build/en/2100/ <!-- readthedocs-preview datasette end --> | 2023-07-13T09:30:36Z | 2023-08-10T13:15:41Z | 37e72ded81cba41c7597a4287e73c47570dca2c7 | 0 | 5639f9d943e55d6990b40db726aa59790724899a | 33251d04e78d575cca62bb59069bb43a7d924746 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2100 | ||||||

| 1454719622 | PR_kwDOD079W85WtUKG | 38 | closed | 0 | photos-to-sql not found? | coldclimate 319473 | I wonder if `photos-to-sql` is an old name for `dogsheep-photos`, because I can't find it anywhere. I can't actually get this command to work (`sqlite3.OperationalError: no such table: attached.ZGENERICASSET` thrown) but I don't think that's related | 2023-07-29T09:59:42Z | 2023-07-29T10:01:27Z | 2023-07-29T10:01:23Z | 78898d8344bd37379b9ef44384629c26ed8c4558 | 0 | dbf0213a98cfb92f49a60695d0e0094b851b1cbb | 325aa38cb23d0757bb1335ee2ea94a082475a66e | FIRST_TIME_CONTRIBUTOR | dogsheep-photos 256834907 | https://github.com/dogsheep/dogsheep-photos/pull/38 | |||||

| 1454726308 | PR_kwDOD079W85WtVyk | 39 | open | 0 | Missing option in datasette instructions | coldclimate 319473 | Gotta tell it where to look | 2023-07-29T10:34:48Z | 2023-07-29T10:34:48Z | bd9c51b4e3e110122f921fc6ebf10b69d7fcbb7a | 0 | 17c0ddf5113d8587247d4736e1390fe07ec33b8c | 325aa38cb23d0757bb1335ee2ea94a082475a66e | FIRST_TIME_CONTRIBUTOR | dogsheep-photos 256834907 | https://github.com/dogsheep/dogsheep-photos/pull/39 | ||||||

| 1488414606 | PR_kwDOBm6k_c5Yt2eO | 2155 | open | 0 | Fix hupper.start_reloader entry point | cadeef 79087 | Update hupper's entry point so that click commands are processed properly. Fixes #2123 <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2155.org.readthedocs.build/en/2155/ <!-- readthedocs-preview datasette end --> | 2023-08-24T17:14:08Z | 2023-09-27T18:44:02Z | 8b993f48927cfb89f31251a94dc7b48b6b2d3021 | 0 | ba2c235a8ea7240549af38988b4cbff8d7ec55bd | bdf59eb7db42559e538a637bacfe86d39e5d17ca | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2155 | ||||||

| 1488782498 | PR_kwDOBm6k_c5YvQSi | 2158 | open | 0 | add brand option to metadata.json. | publicmatt 52261150 | This adds a brand link to the top navbar if 'brand' key is populated in metadata.json. The link will be either '#' or use the contents of 'brand_url' in metadata.json for href. I was able to get this done on my own site by replacing `templates/_crumbs.html` with a custom version, but I thought it would be nice to incorporate this in the tool directly.  <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2158.org.readthedocs.build/en/2158/ <!-- readthedocs-preview datasette end --> | 2023-08-24T22:37:41Z | 2023-08-24T22:37:57Z | 20fad9361ea7173a3543fa16e4b6913a3a0f4b9e | 0 | 0d89c4bd19732487232ab2637cf5e8632f48986b | 527cec66b0403e689c8fb71fc8b381a1d7a46516 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2158 | ||||||

| 1489526658 | PR_kwDOBm6k_c5YyF-C | 2159 | open | 0 | Implement Dark Mode colour scheme | jamietanna 3315059 | Closes #2095. <!-- readthedocs-preview datasette start --> ---- :books: Documentation preview :books:: https://datasette--2159.org.readthedocs.build/en/2159/ <!-- readthedocs-preview datasette end --> | 2023-08-25T10:46:23Z | 2023-08-25T10:46:35Z | 97687cea4bd9c94ae76a780311e044b3c77ef902 | 1 | 4b969945d106e48b50545fc029ec020593ee1db0 | 527cec66b0403e689c8fb71fc8b381a1d7a46516 | FIRST_TIME_CONTRIBUTOR | datasette 107914493 | https://github.com/simonw/datasette/pull/2159 | ||||||

| 1501923826 | PR_kwDOJHON9s5ZhYny | 14 | open | 0 | fix: fix the problem of Chinese character garbling | barretlee 2698003 | 1. The code uses two different ways of writing encoding formats, `mac_roman` and `macroman`. It is uncertain whether there are any typo errors. 2. When there are Chinese characters in the content, exporting it results in garbled code. Changing it to `utf8` can fix the issue. | 2023-09-04T23:48:28Z | 2023-09-04T23:48:28Z | 66b5b73948b1bedc275432956dda43cfe151c78c | 0 | 5febe19b8922aa818e7dc265bdee30bcc5004eb4 | e55a802d37a896475b6cf475c1ba947af63cca73 | FIRST_TIME_CONTRIBUTOR | apple-notes-to-sqlite 611552758 | https://github.com/dogsheep/apple-notes-to-sqlite/pull/14 | ||||||

| 1505067804 | PR_kwDODFE5qs5ZtYMc | 13 | open | 0 | use poetry for packages, asdf for versioning, and gh actions for ci | iloveitaly 150855 | - build: use poetry for package management, asdf for python version - build: cleanup poetry config, add keywords, ignore dist - ci: migrate circleci to gh actions - fix: dup method definition | 2023-09-06T17:59:16Z | 2023-09-06T17:59:16Z | cd4d8c4a7ecd231f6c5a8886245271934177f104 | 0 | b5f0ebe91755c46e01dc4aefb808f0292848fbed | e54e544427f1cc3ea8189f0e95f54046301a8645 | FIRST_TIME_CONTRIBUTOR | google-takeout-to-sqlite 206649770 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/13 |

Advanced export

JSON shape: default, array, newline-delimited, object

CREATE TABLE [pull_requests] (

[id] INTEGER PRIMARY KEY,

[node_id] TEXT,

[number] INTEGER,

[state] TEXT,

[locked] INTEGER,

[title] TEXT,

[user] INTEGER REFERENCES [users]([id]),

[body] TEXT,

[created_at] TEXT,

[updated_at] TEXT,

[closed_at] TEXT,

[merged_at] TEXT,

[merge_commit_sha] TEXT,

[assignee] INTEGER REFERENCES [users]([id]),

[milestone] INTEGER REFERENCES [milestones]([id]),

[draft] INTEGER,

[head] TEXT,

[base] TEXT,

[author_association] TEXT,

[repo] INTEGER REFERENCES [repos]([id]),

[url] TEXT,

[merged_by] INTEGER REFERENCES [users]([id])

, [auto_merge] TEXT);

CREATE INDEX [idx_pull_requests_merged_by]

ON [pull_requests] ([merged_by]);

CREATE INDEX [idx_pull_requests_repo]

ON [pull_requests] ([repo]);

CREATE INDEX [idx_pull_requests_milestone]

ON [pull_requests] ([milestone]);

CREATE INDEX [idx_pull_requests_assignee]

ON [pull_requests] ([assignee]);

CREATE INDEX [idx_pull_requests_user]

ON [pull_requests] ([user]);