github

| id | node_id | number | title | user | state | locked | assignee | milestone | comments | created_at | updated_at | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 408518024 | MDU6SXNzdWU0MDg1MTgwMjQ= | 410 | How to setup a multi database environment? | 30607 | closed | 0 | 1 | 2019-02-10T09:39:24Z | 2019-04-12T04:42:28Z | 2019-04-12T04:42:27Z | NONE | Hi, first of all I need to write that Simon Willison and datasette are really great. I have probably a stupid question, but it seems to me that I do not have the reply in the documentation. I have installed datasette and run it with `datasette mydb.db`, and I can reach it on `http://127.0.0.1:8001`. But how to work with more than one db? Imagine I have ten sqlite databases, and that I need to explore/query these via datasette, how to run datasette? Is it possible to create a sort of db index and then run `datasette serve myindex`? Thank you | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/410/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 431756352 | MDExOlB1bGxSZXF1ZXN0MjY5MzY0OTI0 | 426 | Upgrade to Jinja2==2.10.1 | 9599 | closed | 0 | 1 | 2019-04-10T23:03:08Z | 2019-04-22T21:23:22Z | 2019-04-10T23:13:31Z | OWNER | simonw/datasette/pulls/426 | https://nvd.nist.gov/vuln/detail/CVE-2019-10906 This is only a security issue of concern if evaluating templates from untrusted sources, which isn't something I would ever expect a Datasette user to do. | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/426/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 398559195 | MDU6SXNzdWUzOTg1NTkxOTU= | 400 | datasette publish cloudrun plugin | 10352819 | closed | 0 | 1 | 2019-01-12T14:35:11Z | 2019-05-03T16:57:35Z | 2019-05-03T16:57:35Z | CONTRIBUTOR | Google announced that they may launch a simple service for running Docker containers (previously serverless containers, now called "cloud run" -- link to alpha [here](https://services.google.com/fb/forms/serverlesscontainers/)). If/when this happens, it might be a good fit for publishing datasettes? (at least using the current version, manually publishing a datasette seems relatively painless). | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/400/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 440159137 | MDExOlB1bGxSZXF1ZXN0Mjc1ODAxNDYz | 447 | Use dist: xenial and python: 3.7 on Travis | 9599 | closed | 0 | 1 | 2019-05-03T18:07:07Z | 2019-05-03T18:17:05Z | 2019-05-03T18:16:53Z | OWNER | simonw/datasette/pulls/447 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/447/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 440332621 | MDU6SXNzdWU0NDAzMzI2MjE= | 453 | Error pages do not return CORS header with --cors | 9599 | closed | 0 | 1 | 2019-05-04T15:07:44Z | 2019-05-05T12:24:24Z | 2019-05-05T12:11:33Z | OWNER | This is very confusing. It means that if you send invalid SQL you will get back a CORS error, because the resulting 400 page cannot be accessed via JavaScript. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/453/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 275415799 | MDU6SXNzdWUyNzU0MTU3OTk= | 137 | Ability to combine multiple SQL queries on a single graph | 9599 | open | 0 | 1 | 2017-11-20T16:26:57Z | 2019-05-13T18:33:51Z | OWNER | This would make visualizations significantly more powerful. The interesting challenge will be around the URL design. It would be useful to be able to combine either multiple explicit SQL queries or multiple queries based on the filter string parameters passed to one or more table views. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/137/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 441858747 | MDU6SXNzdWU0NDE4NTg3NDc= | 455 | Hidden tables shown on the index page | 9599 | closed | 0 | 4305096 | 1 | 2019-05-08T18:02:13Z | 2019-05-14T15:49:29Z | 2019-05-14T15:48:08Z | OWNER | Minor bug in master right now. https://csvconf.now.sh/ <img width="521" alt="csv_conf_v4__csvconf" src="https://user-images.githubusercontent.com/9599/57397073-b2bd1700-7180-11e9-82b8-677338c50987.png"> | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/455/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 377266351 | MDU6SXNzdWUzNzcyNjYzNTE= | 373 | Views should be shown on root/index page along with tables | 416374 | closed | 0 | 4305096 | 1 | 2018-11-05T06:28:41Z | 2019-05-16T00:29:22Z | 2019-05-16T00:29:22Z | CONTRIBUTOR | At the moment the number of views is given on a datasette "homepage", but not links to any views themselves | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/373/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 443034218 | MDU6SXNzdWU0NDMwMzQyMTg= | 464 | Add Glitch to Getting Started docs section | 9599 | closed | 0 | 4305096 | 1 | 2019-05-11T20:39:39Z | 2019-05-16T05:04:35Z | 2019-05-16T05:03:46Z | OWNER | Glitch is by far the easiest way to start trying out Datasette. Add a section to https://datasette.readthedocs.io/en/latest/getting_started.html | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/464/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 445003029 | MDU6SXNzdWU0NDUwMDMwMjk= | 471 | ?_hash=1 and --config hash_urls:1 should only work for immutable databases | 9599 | closed | 0 | 4305096 | 1 | 2019-05-16T14:54:25Z | 2019-05-16T15:11:03Z | 2019-05-16T15:11:03Z | OWNER | Split from #419. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/471/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 445230077 | MDU6SXNzdWU0NDUyMzAwNzc= | 472 | Rename "publish now" to "publish nowv1" | 9599 | closed | 0 | 4305096 | 1 | 2019-05-17T01:58:52Z | 2019-05-19T18:07:39Z | 2019-05-19T18:07:39Z | OWNER | This will help clarify that you need a nowv1 account to use it. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/472/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 444997937 | MDU6SXNzdWU0NDQ5OTc5Mzc= | 470 | /-/databases showing currently attached database details | 9599 | closed | 0 | 4305096 | 1 | 2019-05-16T14:45:18Z | 2019-05-19T19:28:44Z | 2019-05-16T14:50:26Z | OWNER | Split from #419. Mainly useful to see what is connected as mutable v.s. immutable. Also helps fill the gap left by `/-/inspect` until #465 | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/470/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 445855789 | MDU6SXNzdWU0NDU4NTU3ODk= | 474 | Do not allow downloads of mutable databases | 9599 | closed | 0 | 4305096 | 1 | 2019-05-19T19:35:32Z | 2019-05-19T20:41:17Z | 2019-05-19T20:41:16Z | OWNER | If the file changes during download it will probably result in a corrupt download. Safer not to allow downloads at all of mutable databases. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/474/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 443034003 | MDU6SXNzdWU0NDMwMzQwMDM= | 463 | Write release notes for 0.28 | 9599 | closed | 0 | 4305096 | 1 | 2019-05-11T20:36:56Z | 2019-05-19T21:24:44Z | 2019-05-19T21:24:20Z | OWNER | So much new stuff! https://github.com/simonw/datasette/compare/0.27...master | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/463/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 440313209 | MDU6SXNzdWU0NDAzMTMyMDk= | 451 | Update README | 9599 | closed | 0 | 4305096 | 1 | 2019-05-04T11:26:07Z | 2019-05-19T22:23:43Z | 2019-05-19T22:23:43Z | OWNER | The README is quite out of date now. It includes out-dated copies of help files, promotes the old Zeit Now integration and duplicates a lot of material from the docs. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/451/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 444749373 | MDU6SXNzdWU0NDQ3NDkzNzM= | 469 | publish commands should use new -i option | 9599 | closed | 0 | 1 | 2019-05-16T04:31:40Z | 2019-05-19T22:53:41Z | 2019-05-19T22:53:41Z | OWNER | I can make this change only after releasing 0.28 - if I make the change earlier than that `publish heroku` etc will break because they will install the latest release of Datasette which will not understand the `-i` option. This is a one-line fix: replace this: https://github.com/simonw/datasette/blob/2ad9d15cd6901654e6801e2faa29e6fc08bae5fa/datasette/utils.py#L489 With this: (need to do it for other publishers too though) ``` quoted_files = " ".join( ["-i {}".format(shlex.quote(file_name)) for file_name in file_names] ) ``` | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/469/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 445873563 | MDExOlB1bGxSZXF1ZXN0MjgwMjA0Mjc2 | 479 | doc typo fix | 98555 | closed | 0 | 1 | 2019-05-19T22:54:25Z | 2019-05-20T16:42:29Z | 2019-05-20T16:42:29Z | CONTRIBUTOR | simonw/datasette/pulls/479 | Fix typo in performance doc page | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/479/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 447451492 | MDU6SXNzdWU0NDc0NTE0OTI= | 484 | Mechanism for displaying summary of m2m relationships in rows on table view | 9599 | open | 0 | 1 | 2019-05-23T05:02:41Z | 2019-05-23T06:34:05Z | OWNER | Part of #354 (m2m support) It would be fantastic if rows that are part of a m2m relationship could display it in an additional column in the table view. It might look something like this: https://russian-ira-facebook-ads.datasettes.com/russian-ads-919cbfd/display_ads?_search=black+lives+matter <img width="1475" alt="russian-ads__display_ads__50_rows_where_where_search_matches__black_lives_matter_" src="https://user-images.githubusercontent.com/9599/58226788-12690580-7cdd-11e9-9925-73153b27a413.png"> That example [was achieved](https://github.com/simonw/russian-ira-facebook-ads-datasette/blob/daf51a8c50a78e8bc7971c211005fd85e66ccf64/russian-ads-metadata.yaml#L72-L77) using a custom SQL query and [datasette-json-html](https://github.com/simonw/datasette-json-html) - but I'd like this to be a built-in feature instead. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/484/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 432727685 | MDU6SXNzdWU0MzI3Mjc2ODU= | 20 | JSON column values get extraneously quoted | 649467 | closed | 0 | 4348046 | 1 | 2019-04-12T20:15:30Z | 2019-05-25T00:57:19Z | 2019-05-25T00:57:19Z | NONE | If the input to `sqlite-utils insert` includes a column that is a JSON array or object, `sqlite-utils query` will introduce an extra level of quoting on output: ``` # echo '[{"key": ["one", "two", "three"]}]' | sqlite-utils insert t.db t - # sqlite-utils t.db 'select * from t' [{"key": "[\"one\", \"two\", \"three\"]"}] # sqlite3 t.db 'select * from t' ["one", "two", "three"] ``` This might require an imperfect solution, since sqlite3 doesn't have a JSON type. Perhaps fields that start with `["` or `{"` and end with `"]` or `"}` could be detected, with a flag to turn off that behavior for weird text fields (or vice versa). | 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/20/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 448664792 | MDU6SXNzdWU0NDg2NjQ3OTI= | 487 | Refactor database methods off Datasette class | 9599 | closed | 0 | 1 | 2019-05-27T04:52:41Z | 2019-05-27T20:05:34Z | 2019-05-27T05:08:01Z | OWNER | Methods like this one: https://github.com/simonw/datasette/blob/182a3017c24e3fa3af60e4ac0c91c7e48f8736fd/datasette/app.py#L497-L503 Should live on the `ConnectedDatabase` class instead. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/487/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 448978907 | MDU6SXNzdWU0NDg5Nzg5MDc= | 490 | Rename InterruptedError exception class | 9599 | closed | 0 | 1 | 2019-05-27T20:04:25Z | 2019-05-28T00:16:45Z | 2019-05-28T00:16:45Z | OWNER | https://github.com/simonw/datasette/blob/edb36629e7356f70f42b9d37fea5dfe9cc3c364a/datasette/utils.py#L49-L50 Python has a built-in exception called this, so we should call ours something else. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/490/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 448977444 | MDU6SXNzdWU0NDg5Nzc0NDQ= | 489 | Pagination breaks when combined with expanded foreign keys | 9599 | closed | 0 | 1 | 2019-05-27T19:56:56Z | 2019-05-28T02:48:57Z | 2019-05-28T02:23:27Z | OWNER | Consider https://edb3662.datasette.io/fixtures/roadside_attraction_characteristics?_sort=attraction_id&_size=2 The "Next page" link goes here, which returns 0 rows: https://edb3662.datasette.io/fixtures/roadside_attraction_characteristics?_size=2&_next=%257B%2527value%2527%253A%2B2%252C%2B%2527label%2527%253A%2B%2527Winchester%2BMystery%2BHouse%2527%257D%2C2&_sort=attraction_id That's because if you double-url-decode that `_next` link you get this: `_next={'value': 2, 'label': 'Winchester Mystery House'},2` It should be `_next=2,2` | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/489/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 334148669 | MDU6SXNzdWUzMzQxNDg2Njk= | 318 | Facets with value of 0 displayed incorrectly | 9599 | closed | 0 | 3439337 | 1 | 2018-06-20T16:06:46Z | 2019-05-29T21:39:12Z | 2018-06-21T04:30:45Z | OWNER | https://registry.datasette.io/registry-7d4f81f/tables?_facet=is_hidden#facet-is_hidden  Displays correctly if you select it: https://registry.datasette.io/registry-7d4f81f/tables?_facet=is_hidden&is_hidden=0  | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/318/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 446429421 | MDU6SXNzdWU0NDY0Mjk0MjE= | 481 | Facet by date | 9599 | closed | 0 | 1 | 2019-05-21T05:55:54Z | 2019-05-29T21:39:12Z | 2019-05-21T06:09:49Z | OWNER | Ability to facet on datetime fields by their date. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/481/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 450862577 | MDU6SXNzdWU0NTA4NjI1Nzc= | 496 | Additional options to gcloud build command in cloudrun - timeout | 1740337 | closed | 0 | 1 | 2019-05-31T15:43:55Z | 2019-05-31T23:05:05Z | 2019-05-31T23:05:05Z | NONE | I am trying to deploy a 3.1 GB dataset to cloudrun with datasette. Currently the docker build times out. Would be nice to have a timeout flag or additional gcloud commands that could be specified. Here is the line https://github.com/simonw/datasette/blob/f825e2012109247fa246e2b938f8174069e574f1/datasette/publish/cloudrun.py#L78 I would be happy to submit a PR to allow for a timeout option. What are your ideas of allowing the user additional build publishing flag options? | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/496/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 451705509 | MDExOlB1bGxSZXF1ZXN0Mjg0NzQzNzk0 | 500 | Fix typo in install step: should be install -e | 32314 | closed | 0 | 1 | 2019-06-03T21:50:51Z | 2019-06-11T18:48:43Z | 2019-06-11T18:48:40Z | CONTRIBUTOR | simonw/datasette/pulls/500 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/500/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 274315193 | MDU6SXNzdWUyNzQzMTUxOTM= | 106 | Document how pagination works | 9599 | closed | 0 | 1 | 2017-11-15T21:44:32Z | 2019-06-24T06:42:33Z | 2019-06-24T06:42:33Z | OWNER | I made a start at that in this comment: https://news.ycombinator.com/item?id=15691926 | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/106/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 340730961 | MDU6SXNzdWUzNDA3MzA5NjE= | 340 | Embrace black | 9599 | closed | 0 | 1 | 2018-07-12T17:32:29Z | 2019-06-24T06:50:27Z | 2019-06-24T06:50:26Z | OWNER | Run [black](https://github.com/ambv/black) against everything. Then set up CI to fail if code doesn't conform to black's style. Here's how Starlette does this: * https://github.com/encode/starlette/blob/e3d090b3597167f7b3a4f76e4bb3c0d3e94be61a/.travis.yml#L14 * https://github.com/encode/starlette/blob/e3d090b3597167f7b3a4f76e4bb3c0d3e94be61a/scripts/lint - essentially runs `black starlette tests --check` And here's an example of a test run that failed: https://travis-ci.org/encode/starlette/jobs/403172478 | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/340/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 460396952 | MDExOlB1bGxSZXF1ZXN0MjkxNTM0NTk2 | 529 | Use keyed rows - fixes #521 | 1383872 | closed | 0 | 1 | 2019-06-25T12:33:48Z | 2019-06-25T12:35:07Z | 2019-06-25T12:35:07Z | NONE | simonw/datasette/pulls/529 | Supports template syntax like this: ``` {% for row in display_rows %} <h2 class="scientist">{{ row["First_Name"] }} {{ row["Last_Name"] }}</h2> ... ``` | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/529/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 459622390 | MDU6SXNzdWU0NTk2MjIzOTA= | 522 | Handle case-insensitive headers in a nicer way | 9599 | open | 0 | 1 | 2019-06-23T21:56:34Z | 2019-06-26T18:48:53Z | OWNER | Spun out from https://github.com/simonw/datasette/pull/518#discussion_r296486289 | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/522/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 462094937 | MDExOlB1bGxSZXF1ZXN0MjkyODc5MjA0 | 32 | db.add_foreign_keys() method | 9599 | closed | 0 | 1 | 2019-06-28T15:40:33Z | 2019-06-29T06:27:39Z | 2019-06-29T06:27:39Z | OWNER | simonw/sqlite-utils/pulls/32 | Refs #31. Still TODO: - [x] Unit tests - [x] Documentation | 140912432 | pull | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/32/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 463492395 | MDExOlB1bGxSZXF1ZXN0MjkzOTYyNDA1 | 533 | Support cleaner custom templates for rows and tables, closes #521 | 9599 | closed | 0 | 1 | 2019-07-03T00:40:18Z | 2019-07-03T03:23:06Z | 2019-07-03T03:23:06Z | OWNER | simonw/datasette/pulls/533 | - [x] Rename `_rows_and_columns.html` to `_table.html` - [x] Unit test - [x] Documentation | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/533/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 445875242 | MDExOlB1bGxSZXF1ZXN0MjgwMjA1NTAy | 480 | Split pypi and docker travis tasks | 813732 | closed | 0 | 4471010 | 1 | 2019-05-19T23:14:37Z | 2019-07-07T20:03:20Z | 2019-07-07T20:03:20Z | CONTRIBUTOR | simonw/datasette/pulls/480 | Resolves #478 This *should* work, but because this is a change that'll only really be testable on a) this repo, b) master branch, this might fail fast if I didn't get the configurations right. Looking at #478 it should just be as simple as splitting out the docker and pypi processes into separate jobs, but it might end up being more complicated than that, depending on what pre-processes the pypi deployment needs, and how travisci treats deployment steps without scripts in general. | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/480/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 464905894 | MDU6SXNzdWU0NjQ5MDU4OTQ= | 545 | Fix header on 404 page | 9599 | closed | 0 | 4471010 | 1 | 2019-07-07T01:47:40Z | 2019-07-07T20:26:55Z | 2019-07-07T20:26:55Z | OWNER | <img width="707" alt="Error_404" src="https://user-images.githubusercontent.com/9599/60762932-83127a00-a01e-11e9-853a-6ea6aa5e8f6d.png"> | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/545/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 465002978 | MDU6SXNzdWU0NjUwMDI5Nzg= | 550 | Pull m2m faceting out of master so we can ship a release without it | 9599 | closed | 0 | 4471010 | 1 | 2019-07-07T23:10:48Z | 2019-07-07T23:21:22Z | 2019-07-07T23:21:22Z | OWNER | After spending some time with #495 I believe I need to make some pretty major changes to how m2m faceting works. I don't want it to block the release of ASGI Datasette so I'm going to revert it back out of master for the moment and merge it back in after the release has gone out. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/550/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 465019882 | MDU6SXNzdWU0NjUwMTk4ODI= | 552 | Add --plugin-secret support to "datasette package" | 9599 | open | 0 | 1 | 2019-07-08T01:46:47Z | 2019-07-08T01:47:30Z | OWNER | Split out from #544. I think I should combine this with #347 (renaming `datasette package` to `datasette publish docker`). | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/552/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 464894812 | MDExOlB1bGxSZXF1ZXN0Mjk1MDY1Nzk2 | 544 | --plugin-secret option | 9599 | closed | 0 | 4471010 | 1 | 2019-07-06T22:18:20Z | 2019-07-08T02:06:31Z | 2019-07-08T02:06:31Z | OWNER | simonw/datasette/pulls/544 | Refs #543 - [x] Zeit Now v1 support - [x] Solve escaping of ENV in Dockerfile - [x] Heroku support - [x] Unit tests - [x] Cloud Run support - [x] Documentation | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/544/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 465001185 | MDU6SXNzdWU0NjUwMDExODU= | 549 | Send pull request to the repo that the _table.html template will break | 9599 | closed | 0 | 4471010 | 1 | 2019-07-07T22:45:17Z | 2019-07-08T03:36:46Z | 2019-07-08T03:36:45Z | OWNER | Bump this to 0.29 https://github.com/simonw/salaries-datasette/blob/master/requirements/base.txt And rename https://github.com/simonw/salaries-datasette/blob/master/templates/_rows_and_columns.html to _table.html | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/549/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 462117311 | MDU6SXNzdWU0NjIxMTczMTE= | 531 | /database/-/inspect | 9599 | open | 0 | 1 | 2019-06-28T16:33:41Z | 2019-07-08T15:43:57Z | OWNER | Build `/database/-/inspect` which shows tables, columns, column types and foreign keys It won't show table counts. Or maybe it will include them optionally but only for `-i` databases, in a special area of the JSON reserved for immutable-only inspect details. _Originally posted by @simonw in https://github.com/simonw/datasette/issues/465#issuecomment-506797086_ | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/531/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 456569067 | MDU6SXNzdWU0NTY1NjkwNjc= | 510 | Ability to facet by delimiter (e.g. comma separated fields) | 9599 | open | 0 | 9599 | 1 | 2019-06-15T19:34:41Z | 2019-07-08T15:44:51Z | OWNER | E.g. if a field contains "Tags,With,Commas" be able to facet them in the same way as `_facet_array=` lets you facet `["Tags", "With", "Commas"]` | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/510/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

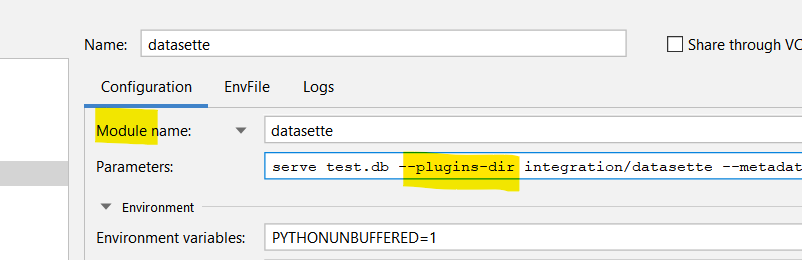

| 465773546 | MDExOlB1bGxSZXF1ZXN0Mjk1NzQ4MjY4 | 556 | Add support for running datasette as a module | 3243482 | closed | 0 | 1 | 2019-07-09T13:13:30Z | 2019-07-11T16:07:45Z | 2019-07-11T16:07:44Z | CONTRIBUTOR | simonw/datasette/pulls/556 | This PR allows running datasette using `python -m datasette` command in addition to just running the executable. This function is quite useful when debugging a plugin in a project because IDEs like PyCharm can easily start a debug session when datasette is run as a module in contrast to trying to attach a debugger to a running process.  | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/556/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 455996809 | MDU6SXNzdWU0NTU5OTY4MDk= | 28 | Rearrange the docs by area, not CLI vs Python | 9599 | closed | 0 | 1 | 2019-06-13T23:33:35Z | 2019-07-15T02:37:20Z | 2019-07-15T02:37:20Z | OWNER | The docs for eg inserting data should live on the same page, rather than being split across the API and CLI pages. | 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/28/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 467928674 | MDExOlB1bGxSZXF1ZXN0Mjk3NDU5Nzk3 | 40 | .get() method plus support for compound primary keys | 9599 | closed | 0 | 1 | 2019-07-15T03:43:13Z | 2019-07-15T04:28:57Z | 2019-07-15T04:28:52Z | OWNER | simonw/sqlite-utils/pulls/40 | - [x] Tests for the `NotFoundError` exception - [x] Documentation for `.get()` method - [x] Support `--pk` multiple times to define CLI compound primary keys - [x] Documentation for compound primary keys | 140912432 | pull | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/40/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 470637068 | MDU6SXNzdWU0NzA2MzcwNjg= | 1 | Use XML Analyser to figure out the structure of the export XML | 9599 | closed | 0 | 1 | 2019-07-20T05:19:02Z | 2019-07-20T05:20:09Z | 2019-07-20T05:20:09Z | MEMBER | https://github.com/simonw/xml_analyser | 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/1/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 470637152 | MDU6SXNzdWU0NzA2MzcxNTI= | 2 | Import workouts | 9599 | closed | 0 | 1 | 2019-07-20T05:20:21Z | 2019-07-20T06:21:41Z | 2019-07-20T06:21:41Z | MEMBER | From #1 | 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/2/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 470640505 | MDU6SXNzdWU0NzA2NDA1MDU= | 4 | Import Records | 9599 | closed | 0 | 1 | 2019-07-20T06:11:20Z | 2019-07-20T06:21:41Z | 2019-07-20T06:21:41Z | MEMBER | From #1: ```python 'Record': {'attr_counts': {'creationDate': 2672233, 'device': 2665111, 'endDate': 2672233, 'sourceName': 2672233, 'sourceVersion': 2671779, 'startDate': 2672233, 'type': 2672233, 'unit': 2650012, 'value': 2672232}, 'child_counts': {'HeartRateVariabilityMetadataList': 2318, 'MetadataEntry': 287974}, 'count': 2672233, 'parent_counts': {'Correlation': 2, 'HealthData': 2672231}}, ``` | 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/4/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 470856782 | MDU6SXNzdWU0NzA4NTY3ODI= | 6 | Break up records into different tables for each type | 9599 | closed | 0 | 1 | 2019-07-22T01:54:59Z | 2019-07-22T03:28:55Z | 2019-07-22T03:28:50Z | MEMBER | I don't think there's much benefit to having all of the different record types stored in the same enormous table. Here's what I get when I use `_facet=type`: <img width="358" alt="hello2__records__2_672_233_rows" src="https://user-images.githubusercontent.com/9599/61601118-e2f54d00-abe8-11e9-8bf6-3df2ef969112.png"> I'm going to try splitting these up into separate tables - so `HKQuantityTypeIdentifierBodyMassIndex` becomes a table called `rBodyMassIndex` - and see if that's nicer to work with. | 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 351845423 | MDU6SXNzdWUzNTE4NDU0MjM= | 3 | Experiment with contentless FTS tables | 9599 | closed | 0 | 1 | 2018-08-18T19:31:01Z | 2019-07-22T20:58:55Z | 2019-07-22T20:58:55Z | OWNER | Could greatly reduce size of resulting database for large datasets: http://cocoamine.net/blog/2015/09/07/contentless-fts4-for-large-immutable-documents/ | 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/3/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 473733752 | MDExOlB1bGxSZXF1ZXN0MzAxODI0MDk3 | 51 | Fix for too many SQL variables, closes #50 | 9599 | closed | 0 | 1 | 2019-07-28T11:30:30Z | 2019-07-28T11:59:32Z | 2019-07-28T11:59:32Z | OWNER | simonw/sqlite-utils/pulls/51 | 140912432 | pull | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/51/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 476437213 | MDU6SXNzdWU0NzY0MzcyMTM= | 566 | Unexpected keyword argument 'hidden' | 8330931 | closed | 0 | 1 | 2019-08-03T10:07:57Z | 2019-08-03T16:13:36Z | 2019-08-03T16:13:36Z | NONE | I couldn't get a test example running. I am running python 3.6.8 and tried both windows and windows subsystem for linux, getting the same error. My test.db was created by converting a five line csv file with csvs-to-sqlite. The csv file is: col1, col2, col3 1,2,3 4,5,6 7,8,9 10,11,12 Here is the error message: (myvenv) davido@DESKTOP-L29G79U:~/dot/datasette-eg$ datasette test.db Traceback (most recent call last): File "/home/davido/dot/datasette-eg/myvenv/bin/datasette", line 7, in <module> from datasette.cli import cli File "/home/davido/dot/datasette-eg/myvenv/lib/python3.6/site-packages/datasette/cli.py", line 2, in <module> import uvicorn File "/home/davido/dot/datasette-eg/myvenv/lib/python3.6/site-packages/uvicorn/__init__.py", line 2, in <module> from uvicorn.main import Server, main, run File "/home/davido/dot/datasette-eg/myvenv/lib/python3.6/site-packages/uvicorn/main.py", line 224, in <module> headers: typing.List[str], File "/home/davido/dot/datasette-eg/myvenv/lib/python3.6/site-packages/click/decorators.py", line 170, in decorator _param_memo(f, OptionClass(param_decls, **attrs)) File "/home/davido/dot/datasette-eg/myvenv/lib/python3.6/site-packages/click/core.py", line 1430, in __init__ Parameter.__init__(self, param_decls, type=type, **attrs) TypeError: __init__() got an unexpected keyword a… | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/566/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 481887482 | MDExOlB1bGxSZXF1ZXN0MzA4MjkyNDQ3 | 55 | Ability to introspect and run queries against views | 9599 | closed | 0 | 1 | 2019-08-17T13:40:56Z | 2019-08-23T12:19:42Z | 2019-08-23T12:19:42Z | OWNER | simonw/sqlite-utils/pulls/55 | See #54 | 140912432 | pull | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/55/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 487598468 | MDU6SXNzdWU0ODc1OTg0Njg= | 2 | --save option to dump checkins to a JSON file on disk | 9599 | closed | 0 | 1 | 2019-08-30T17:41:06Z | 2019-08-31T02:40:21Z | 2019-08-31T02:40:21Z | MEMBER | This is a complement to the `--load` option - mainly useful for development purposes. (I'll rename `--file` to `--load` as part of this issue). | 205429375 | issue | {

"url": "https://api.github.com/repos/dogsheep/swarm-to-sqlite/issues/2/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 487601121 | MDU6SXNzdWU0ODc2MDExMjE= | 4 | Online tool for getting a Foursquare OAuth token | 9599 | closed | 0 | 1 | 2019-08-30T17:48:14Z | 2019-08-31T18:07:26Z | 2019-08-31T18:07:26Z | MEMBER | I will link to this from the documentation. See also this conversation on Twitter: https://twitter.com/simonw/status/1166822603023011840 I've decided to go with "copy and paste in a token" rather than hooking up a local web server that can have tokens passed to it. | 205429375 | issue | {

"url": "https://api.github.com/repos/dogsheep/swarm-to-sqlite/issues/4/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 488874815 | MDU6SXNzdWU0ODg4NzQ4MTU= | 5 | Write tests that simulate the Twitter API | 9599 | open | 0 | 1 | 2019-09-03T23:55:35Z | 2019-09-03T23:56:28Z | MEMBER | I can use betamax for this: https://pypi.org/project/betamax/ | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/5/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 491791152 | MDU6SXNzdWU0OTE3OTExNTI= | 9 | followers-ids and friends-ids subcommands | 9599 | closed | 0 | 1 | 2019-09-10T16:58:15Z | 2019-09-10T17:36:55Z | 2019-09-10T17:36:55Z | MEMBER | These will import follower and friendship IDs into the following tables, using these APIs: https://developer.twitter.com/en/docs/accounts-and-users/follow-search-get-users/api-reference/get-followers-ids https://developer.twitter.com/en/docs/accounts-and-users/follow-search-get-users/api-reference/get-friends-ids | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/9/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 503045221 | MDU6SXNzdWU1MDMwNDUyMjE= | 11 | Commands for recording real-time tweets from the streaming API | 9599 | closed | 0 | 1 | 2019-10-06T03:09:30Z | 2019-10-06T04:54:17Z | 2019-10-06T04:48:31Z | MEMBER | https://developer.twitter.com/en/docs/tweets/filter-realtime/api-reference/post-statuses-filter We can support tracking keywords and following specific users. | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/11/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 503128914 | MDU6SXNzdWU1MDMxMjg5MTQ= | 583 | Enable "explain" and "explain query plan" for CTEs | 9599 | closed | 0 | 1 | 2019-10-06T17:00:10Z | 2019-10-06T17:24:07Z | 2019-10-06T17:24:07Z | OWNER | This currently throws an error: https://latest.datasette.io/fixtures?sql=explain+WITH+RECURSIVE%0D%0A++xaxis%28x%29+AS+%28VALUES%28-2.0%29+UNION+ALL+SELECT+x%2B0.05+FROM+xaxis+WHERE+x%3C1.2%29%2C%0D%0A++yaxis%28y%29+AS+%28VALUES%28-1.0%29+UNION+ALL+SELECT+y%2B0.1+FROM+yaxis+WHERE+y%3C1.0%29%2C%0D%0A++m%28iter%2C+cx%2C+cy%2C+x%2C+y%29+AS+%28%0D%0A++++SELECT+0%2C+x%2C+y%2C+0.0%2C+0.0+FROM+xaxis%2C+yaxis%0D%0A++++UNION+ALL%0D%0A++++SELECT+iter%2B1%2C+cx%2C+cy%2C+x*x-y*y+%2B+cx%2C+2.0*x*y+%2B+cy+FROM+m+%0D%0A+++++WHERE+%28x*x+%2B+y*y%29+%3C+4.0+AND+iter%3C28%0D%0A++%29%2C%0D%0A++m2%28iter%2C+cx%2C+cy%29+AS+%28%0D%0A++++SELECT+max%28iter%29%2C+cx%2C+cy+FROM+m+GROUP+BY+cx%2C+cy%0D%0A++%29%2C%0D%0A++a%28t%29+AS+%28%0D%0A++++SELECT+group_concat%28+substr%28%27+.%2B*%23%27%2C+1%2Bmin%28iter%2F7%2C4%29%2C+1%29%2C+%27%27%29+%0D%0A++++FROM+m2+GROUP+BY+cy%0D%0A++%29%0D%0ASELECT+group_concat%28rtrim%28t%29%2Cx%270a%27%29+FROM+a%3B | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/583/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 503085013 | MDU6SXNzdWU1MDMwODUwMTM= | 13 | statuses-lookup command | 9599 | closed | 0 | 1 | 2019-10-06T11:00:20Z | 2019-10-07T00:33:49Z | 2019-10-07T00:31:44Z | MEMBER | For bulk retrieving tweets by their ID. https://developer.twitter.com/en/docs/tweets/post-and-engage/api-reference/get-statuses-lookup Rate limit is 900/15 minutes (1 call per second) but each call can pull up to 100 IDs, so we can pull 6,000 per minute. Should support `--sql` and `--attach` #8 | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/13/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 503217375 | MDU6SXNzdWU1MDMyMTczNzU= | 585 | Databases on index page should display in order they were passed to "datasette serve"? | 9599 | closed | 0 | 1 | 2019-10-07T03:42:39Z | 2019-10-14T03:52:34Z | 2019-10-14T03:52:34Z | OWNER | If you run this: datasette serve -h 127.0.0.1 -p 8000 -m phone-locations.db healthkit.db locations.db genome.db Then the index page for that Datasette instance should show the databases in the order they were specified on the command-line. Mind you when we add pagination to that page in #468 we may want to do something different here. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/585/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 505950145 | MDExOlB1bGxSZXF1ZXN0MzI3Mjc5ODE4 | 592 | Offer SQL formatting | 2657547 | closed | 0 | 1 | 2019-10-11T16:35:49Z | 2019-10-14T08:57:12Z | 2019-10-14T03:46:13Z | CONTRIBUTOR | simonw/datasette/pulls/592 | SQL code will be formatted on page load, and can additionally be formatted by clicking the "Format SQL" button. Closes #136 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/592/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 505837199 | MDExOlB1bGxSZXF1ZXN0MzI3MTg4MDg3 | 591 | Sort databases on homepage by argument order | 2657547 | closed | 0 | 1 | 2019-10-11T12:57:38Z | 2019-10-14T08:57:50Z | 2019-10-14T03:52:34Z | CONTRIBUTOR | simonw/datasette/pulls/591 | Closes #585 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/591/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 506276893 | MDU6SXNzdWU1MDYyNzY4OTM= | 7 | issue-comments command for importing issue comments | 9599 | closed | 0 | 1 | 2019-10-13T05:23:58Z | 2019-10-14T14:44:12Z | 2019-10-13T05:24:30Z | MEMBER | Using this API: https://developer.github.com/v3/issues/comments/ | 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/7/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 506432572 | MDU6SXNzdWU1MDY0MzI1NzI= | 21 | Fix &amp; escapes in tweet text | 9599 | closed | 0 | 1 | 2019-10-14T03:37:28Z | 2019-10-15T18:48:16Z | 2019-10-15T18:48:16Z | MEMBER | <img width="1136" alt="twitter__tweets__21_773_rows_where_sorted_by_id_descending" src="https://user-images.githubusercontent.com/9599/66728360-38f91b80-edf9-11e9-95b5-ce6d097fe18e.png"> Shouldn't be storing `&amp;` here. | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/21/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 513074501 | MDU6SXNzdWU1MTMwNzQ1MDE= | 26 | Command for importing mentions timeline | 9599 | closed | 0 | 1 | 2019-10-28T03:14:27Z | 2019-10-30T02:36:13Z | 2019-10-30T02:20:47Z | MEMBER | https://developer.twitter.com/en/docs/tweets/timelines/api-reference/get-statuses-mentions_timeline Almost identical to home-timeline #18 but it uses `https://api.twitter.com/1.1/statuses/mentions_timeline.json` instead. | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/26/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 516874735 | MDU6SXNzdWU1MTY4NzQ3MzU= | 613 | Basic join support for table view | 9599 | open | 0 | 1 | 2019-11-03T19:12:53Z | 2019-11-03T19:14:01Z | OWNER | I think it would be possible to support basic foreign key joins on the table page. The user could specify columns that should result in a join (from a set of suggestions similar to how facets work right now) and they could then be passed as `?_join=city_id` arguments. This feature will make a lot of sense when combined with the ability to show / hide / customize columns, see #292 | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/613/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 516950748 | MDU6SXNzdWU1MTY5NTA3NDg= | 614 | Add "not in" filter - ?pk__notin=x,y,z | 9599 | closed | 0 | 1 | 2019-11-04T04:07:17Z | 2019-11-04T04:31:58Z | 2019-11-04T04:12:00Z | OWNER | We have a `__in` filter at the moment: https://latest.datasette.io/fixtures/facetable?pk__in=1,2,3 Today I found myself needing the inverse, a `?pk__notin=` filter, which isn't currently supported. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/614/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 517241040 | MDU6SXNzdWU1MTcyNDEwNDA= | 63 | ensure_index() method | 9599 | closed | 0 | 1 | 2019-11-04T15:51:22Z | 2019-11-04T16:20:36Z | 2019-11-04T16:20:35Z | OWNER | ```python db["table"].ensure_index(["col1", "col2"]) ``` This will do the following: - if the specified table or column does not exist, do nothing - if they exist and already have an index, do nothing - otherwise, create the index I want this for tools like [twitter-to-sqlite search](https://github.com/dogsheep/twitter-to-sqlite/blob/801c0c2daf17d8abce9dcb5d8d610410e7e25dbe/README.md#running-searches) where the `search_runs` table may or may not have been created yet but, if it IS created, I want to put an index on the `hash` column. | 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/63/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 453243459 | MDU6SXNzdWU0NTMyNDM0NTk= | 503 | Handle SQLite databases with spaces in their names? | 7936571 | closed | 0 | 9599 | 1 | 2019-06-06T21:20:59Z | 2019-11-04T23:16:30Z | 2019-11-04T23:16:30Z | NONE | I named my SQLite database "Government workers" and published it to Heroku. When I clicked the "Government workers" database online it led to a 404 page: `Database not found: Government%20workers`. I believe this is because the database name has a space. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/503/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 476413293 | MDU6SXNzdWU0NzY0MTMyOTM= | 52 | Throws error if .insert_all() / .upsert_all() called with empty list | 9599 | closed | 0 | 1 | 2019-08-03T04:09:00Z | 2019-11-07T04:32:39Z | 2019-11-07T04:32:39Z | OWNER | See also https://github.com/simonw/db-to-sqlite/issues/18 | 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/52/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 515658861 | MDU6SXNzdWU1MTU2NTg4NjE= | 28 | Add indexes to followers table | 9599 | closed | 0 | 1 | 2019-10-31T18:40:22Z | 2019-11-09T20:15:42Z | 2019-11-09T20:11:48Z | MEMBER | `select follower_id from following where followed_id = 12497` takes over a second for me at the moment. | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/28/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 521282013 | MDU6SXNzdWU1MjEyODIwMTM= | 626 | Unit tests should fail under Python 3.8 | 9599 | closed | 0 | 1 | 2019-11-12T01:54:11Z | 2019-11-12T04:31:26Z | 2019-11-12T04:31:13Z | OWNER | The unit tests currently pass under Python 3.8. But... when you actually attempt to run Datasette you get an error: ``` ~/Dropbox/Development/datasette $ venv-py3.8.0/bin/datasette --memory -p 8855 Serve! files=() (immutables=()) on port 8855 Traceback (most recent call last): File "venv-py3.8.0/bin/datasette", line 11, in <module> load_entry_point('datasette', 'console_scripts', 'datasette')() File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/click/core.py", line 764, in __call__ return self.main(*args, **kwargs) File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/click/core.py", line 717, in main rv = self.invoke(ctx) File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/click/core.py", line 1137, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/click/core.py", line 956, in invoke return ctx.invoke(self.callback, **ctx.params) File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/click/core.py", line 555, in invoke return callback(*args, **kwargs) File "/Users/simonw/Dropbox/Development/datasette/datasette/cli.py", line 365, in serve uvicorn.run(ds.app(), host=host, port=port, log_level="info") File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/uvicorn/main.py", line 279, in run server.run() File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/uvicorn/main.py", line 305, in run self.config.setup_event_loop() File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/uvicorn/config.py", line 218, in setup_event_loop loop_setup() File "/Users/simonw/Dropbox/Development/datasette/venv-py3.8.0/lib/python3.8/site-packages/uvicorn/loops/auto.py", line 3, in auto_l… | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/626/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 521335335 | MDU6SXNzdWU1MjEzMzUzMzU= | 629 | "datasette publish" commands should deploy with Python 3.8 | 9599 | closed | 0 | 1 | 2019-11-12T05:22:31Z | 2019-11-12T06:03:10Z | 2019-11-12T06:03:10Z | OWNER | Now that we support 3.8 (#627) `datasette publish` should always deploy using Python 3.8. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/629/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 521923131 | MDExOlB1bGxSZXF1ZXN0MzQwMjExMTQ5 | 631 | bugfix issue 572 | 3683993 | closed | 0 | 1 | 2019-11-13T02:46:50Z | 2019-11-13T04:28:43Z | 2019-11-13T04:28:42Z | CONTRIBUTOR | simonw/datasette/pulls/631 | closes bugfix issue #572 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/631/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 489429284 | MDU6SXNzdWU0ODk0MjkyODQ= | 572 | Error running datasette publish with just --source_url | 9599 | closed | 0 | 1 | 2019-09-04T22:19:22Z | 2019-11-13T04:28:44Z | 2019-11-13T04:28:44Z | OWNER | ``` datasette publish now cleo.db \ --source_url="https://twitter.com/cleopaws" \ ``` Gave me this error: <img width="338" alt="Error_500" src="https://user-images.githubusercontent.com/9599/64295924-74b1e300-cf27-11e9-9aed-c69e99c97030.png"> | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/572/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 501773982 | MDExOlB1bGxSZXF1ZXN0MzIzOTgzNzMy | 579 | New connection pooling | 9599 | open | 0 | 1 | 2019-10-02T23:22:19Z | 2019-11-15T22:57:21Z | OWNER | simonw/datasette/pulls/579 | See #569 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/579/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 528442126 | MDU6SXNzdWU1Mjg0NDIxMjY= | 641 | Better documentation for --static option | 9599 | closed | 0 | 1 | 2019-11-26T02:07:57Z | 2019-11-26T03:30:02Z | 2019-11-26T02:31:53Z | OWNER | This is misleading: https://github.com/simonw/datasette/blob/aca41618f8761f99c47c8ae8e81b07a6d4af4d7a/docs/datasette-serve-help.txt#L23 The correct format is e.g. `static:static/` Also it's not mentioned in the regular documentation at all. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/641/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 527710055 | MDU6SXNzdWU1Mjc3MTAwNTU= | 640 | Nicer error message for heroku publish name clash | 82988 | open | 0 | 1 | 2019-11-24T14:57:07Z | 2019-12-06T07:19:34Z | CONTRIBUTOR | If you try to publish to Heroku using no set name (i.e. the default `datasette` name) and a project already exists under that name, you get a meaningful error report on the first line followed by Py error messages that drown it out: ``` Creating datasette... ! ▸ Name datasette is already taken Traceback (most recent call last): File "/usr/local/bin/datasette", line 10, in <module> sys.exit(cli()) File "/usr/local/lib/python3.7/site-packages/click/core.py", line 764, in __call__ return self.main(*args, **kwargs) File "/usr/local/lib/python3.7/site-packages/click/core.py", line 717, in main rv = self.invoke(ctx) File "/usr/local/lib/python3.7/site-packages/click/core.py", line 1137, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "/usr/local/lib/python3.7/site-packages/click/core.py", line 1137, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "/usr/local/lib/python3.7/site-packages/click/core.py", line 956, in invoke return ctx.invoke(self.callback, **ctx.params) File "/usr/local/lib/python3.7/site-packages/click/core.py", line 555, in invoke return callback(*args, **kwargs) File "/Users/NNNNN/Library/Python/3.7/lib/python/site-packages/datasette/publish/heroku.py", line 124, in heroku create_output = check_output(cmd).decode("utf8") File "/usr/local/Cellar/python/3.7.5/Frameworks/Python.framework/Versions/3.7/lib/python3.7/subprocess.py", line 411, in check_output **kwargs).stdout File "/usr/local/Cellar/python/3.7.5/Frameworks/Python.framework/Versions/3.7/lib/python3.7/subprocess.py", line 512, in run output=stdout, stderr=stderr) subprocess.CalledProcessError: Command '['heroku', 'apps:create', 'datasette', '--json']' returned non-zero exit status 1. ``` It would be neater if: - the Py error message was caught; - the report suggested setting a project name using `-n` etc. It may also be useful to provide a command to list the current names that are being used, which… | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/640/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 386459810 | MDExOlB1bGxSZXF1ZXN0MjM1MTk0Mjg2 | 390 | tiny typo in customization docs | 418191 | closed | 0 | 1 | 2018-12-01T13:44:42Z | 2019-12-19T02:30:35Z | 2018-12-16T21:32:56Z | CONTRIBUTOR | simonw/datasette/pulls/390 | was looking to add some custom templates to my use of datasette and saw this small typo. | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/390/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 278190321 | MDU6SXNzdWUyNzgxOTAzMjE= | 157 | Teach "datasette publish" about custom template directories | 9599 | closed | 0 | 2949431 | 1 | 2017-11-30T16:44:57Z | 2020-01-15T16:05:13Z | 2017-12-09T18:28:54Z | OWNER | The following command should copy the custom templates into the deployment and ensure `datasette serve` correctly serves them: datasette publish now mydb.db --template-dir=custom-templates/ | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/157/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 546961357 | MDU6SXNzdWU1NDY5NjEzNTc= | 656 | Display of the column definitions | 6371750 | closed | 0 | 1 | 2020-01-08T16:16:53Z | 2020-01-20T14:17:11Z | 2020-01-20T14:14:33Z | CONTRIBUTOR | Hello, is the nice display of headers and definitions at the top of https://fivethirtyeight.datasettes.com/fivethirtyeight-ac35616/antiquities-act%2Factions_under_antiquities_act configured in the metadata.json file? Thank you, | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/656/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 552773632 | MDExOlB1bGxSZXF1ZXN0MzY1MjE4Mzkx | 660 | gcloud run is now GA, s/beta// | 813732 | closed | 0 | 1 | 2020-01-21T10:08:38Z | 2020-01-22T03:41:09Z | 2020-01-21T23:28:12Z | CONTRIBUTOR | simonw/datasette/pulls/660 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/660/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 557077945 | MDExOlB1bGxSZXF1ZXN0MzY4NzM0NTAw | 663 | -p argument for datasette package, plus tests - refs #661 | 9599 | closed | 0 | 1 | 2020-01-29T19:47:50Z | 2020-01-29T22:46:43Z | 2020-01-29T22:46:43Z | OWNER | simonw/datasette/pulls/663 | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/663/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 546078359 | MDExOlB1bGxSZXF1ZXN0MzU5ODIyNzcz | 75 | Explicitly include tests and docs in sdist | 15092 | closed | 0 | 1 | 2020-01-07T04:53:20Z | 2020-01-31T00:21:27Z | 2020-01-31T00:21:27Z | CONTRIBUTOR | simonw/sqlite-utils/pulls/75 | Also exclude 'tests' from runtime installation. | 140912432 | pull | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/75/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 559964149 | MDU6SXNzdWU1NTk5NjQxNDk= | 665 | Introduce a SQL statement parser in Python | 9599 | open | 0 | 1 | 2020-02-04T20:36:05Z | 2020-02-04T20:36:48Z | OWNER | #254 and #653 are both examples of problems that could be solved using a real SQL parser in Python. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/665/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 559374410 | MDU6SXNzdWU1NTkzNzQ0MTA= | 83 | Make db["table"].exists a documented API | 9599 | closed | 0 | 1 | 2020-02-03T22:31:44Z | 2020-02-08T23:58:35Z | 2020-02-08T23:56:23Z | OWNER | Right now it's a static thing which might get out-of-sync with the database. It should probably be a live check. Maybe call it `.exists()` instead? | 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/83/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 562911863 | MDU6SXNzdWU1NjI5MTE4NjM= | 85 | Create index doesn't work for columns containing spaces | 9599 | closed | 0 | 1 | 2020-02-11T00:34:46Z | 2020-02-11T05:13:20Z | 2020-02-11T05:13:20Z | OWNER | 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/85/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 565041624 | MDU6SXNzdWU1NjUwNDE2MjQ= | 671 | datasette.add_database(name, db) and datasette.remove_database(name) methods | 9599 | closed | 0 | 1 | 2020-02-14T01:05:48Z | 2020-02-14T01:30:35Z | 2020-02-14T01:30:30Z | OWNER | - `datasette.add_database(name, db)` - adds a new named database to the list of connected databases. `db` will be a `Database()` object, which may prove useful in the future for things like #670 and could also allow some plugins to provide in-memory SQLite databases. - `datasette.remove_database(name)` _Originally posted by @simonw in https://github.com/simonw/datasette/issues/417#issuecomment-586047995_ | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/671/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 563348959 | MDExOlB1bGxSZXF1ZXN0MzczNzc1Nzg4 | 669 | fix db-to-sqlite command in ecosystem doc page | 883348 | closed | 0 | 1 | 2020-02-11T17:05:41Z | 2020-02-22T02:32:18Z | 2020-02-22T02:32:17Z | CONTRIBUTOR | simonw/datasette/pulls/669 | the `--connection` parameter has become positional | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/669/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 449931899 | MDU6SXNzdWU0NDk5MzE4OTk= | 494 | --reload should only trigger for -i databases | 9599 | closed | 0 | 9599 | 1 | 2019-05-29T17:28:43Z | 2020-02-24T19:45:05Z | 2020-02-24T19:45:05Z | OWNER | Right now it's triggering any time a mutable database changes. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/494/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 574035432 | MDU6SXNzdWU1NzQwMzU0MzI= | 692 | is_hidden_table context variable on table.html page | 9599 | open | 0 | 1 | 2020-03-02T15:03:25Z | 2020-03-02T15:03:48Z | OWNER | It's useful to know if a table is hidden when rendering that page. `datasette-configure-fts` for example may want to disallow enabling search on hidden tables. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/692/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 582713554 | MDU6SXNzdWU1ODI3MTM1NTQ= | 700 | Request object utility for handling POST form data | 9599 | closed | 0 | 1 | 2020-03-17T02:44:59Z | 2020-03-17T02:47:50Z | 2020-03-17T02:47:50Z | OWNER | > This is also going to need me to handle POST form submissions which means I need to be able to parse the form body. I guess that will go in [datasette/utils/asgi.py](https://github.com/simonw/datasette/blob/master/datasette/utils/asgi.py). _Originally posted by @simonw in https://github.com/simonw/datasette/issues/698#issuecomment-599704264_ | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/700/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 561469252 | MDExOlB1bGxSZXF1ZXN0MzcyMjczNjA4 | 33 | Upgrade to sqlite-utils 2.2.1 | 9599 | closed | 0 | 1 | 2020-02-07T07:32:12Z | 2020-03-20T19:21:42Z | 2020-03-20T19:21:41Z | MEMBER | dogsheep/twitter-to-sqlite/pulls/33 | 206156866 | pull | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/33/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 585359363 | MDU6SXNzdWU1ODUzNTkzNjM= | 38 | Screen name display for user-timeline is uneven | 9599 | closed | 0 | 1 | 2020-03-20T22:30:23Z | 2020-03-20T22:37:17Z | 2020-03-20T22:37:17Z | MEMBER | ``` CDPHE [####################################] 67 CHFSKy [####################################] 3216 DHSWI [####################################] 41 DPHHSMT [####################################] 742 Delaware_DHSS [####################################] 3231 DhhsNevada [####################################] 639 ``` I could format them to match the length of the longest screen name instead. | 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/38/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 585526292 | MDU6SXNzdWU1ODU1MjYyOTI= | 1 | Set up full text search | 9599 | closed | 0 | 1 | 2020-03-21T15:57:35Z | 2020-03-21T19:47:46Z | 2020-03-21T19:45:52Z | MEMBER | Should run against `title` and `text` in `items`, and `about` and `id` in `users`. | 248903544 | issue | {

"url": "https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/1/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 569237568 | MDU6SXNzdWU1NjkyMzc1Njg= | 677 | The first time you click sort by ID it should show you results in reverse order | 9599 | closed | 0 | 1 | 2020-02-21T23:38:50Z | 2020-03-21T23:57:46Z | 2020-03-21T23:57:46Z | OWNER | e.g. on https://latest.datasette.io/fixtures/roadside_attractions Clicking the "pk" column header doesn't actually do anything - it sorts by pk asc but since the page was already sorted like that nothing useful changes. The first click on a primary key column that the page is already implicitly sorted by should instead enable sort descending on that column. | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/677/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 586595839 | MDU6SXNzdWU1ODY1OTU4Mzk= | 23 | Release 1.0 | 9599 | closed | 0 | 5225818 | 1 | 2020-03-24T00:03:55Z | 2020-03-24T00:15:50Z | 2020-03-24T00:15:50Z | MEMBER | Need to compile release notes. | 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/23/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 539985017 | MDExOlB1bGxSZXF1ZXN0MzU0ODY5Mzkx | 652 | Quick (and uninformed and perhaps misguided) attempt to add a <base> url for hosting datasette at a particular host/URI | 132978 | closed | 0 | 1 | 2019-12-18T23:37:16Z | 2020-03-24T22:14:50Z | 2020-03-24T22:14:50Z | NONE | simonw/datasette/pulls/652 | As usual, I don't really know what I'm doing... so this is just a suggested approach. I've not written tests, I've not run the tests, I don't know if I've missed some absolute URLs that would need to have the leading slash dropped. BUT, I tested it with `--config base_url:http://127.0.0.1:8001/` on the command line and from what little I know about datasette it's at least working in some obvious cases. My changes are based on what I saw in https://github.com/simonw/datasette/commit/8da2db4b71096b19e7a9ef1929369b8483d448bf (thanks!) I'm happy to be more thorough on this if you think it's worth pursuing. Fixes #394 (he said, optimistically). | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/652/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 587314002 | MDU6SXNzdWU1ODczMTQwMDI= | 709 | Each plugin hook should link to example plugins built with it | 9599 | closed | 0 | 5234079 | 1 | 2020-03-24T22:18:48Z | 2020-03-24T22:30:10Z | 2020-03-24T22:29:43Z | OWNER | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/709/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 585597329 | MDU6SXNzdWU1ODU1OTczMjk= | 704 | Add datasette-publish-fly to Datasette Publish documentation | 9599 | closed | 0 | 5234079 | 1 | 2020-03-21T22:25:10Z | 2020-03-24T22:39:09Z | 2020-03-24T22:39:09Z | OWNER | It's a cool example of a plugin that provides a new publish provider - worth mentioning on https://datasette.readthedocs.io/en/stable/publish.html | 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/704/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 543355051 | MDExOlB1bGxSZXF1ZXN0MzU3NjQwMTg2 | 6 | don't break if source is missing | 78035 | closed | 0 | 1 | 2019-12-29T10:46:47Z | 2020-03-28T02:28:11Z | 2020-03-28T02:28:11Z | CONTRIBUTOR | dogsheep/swarm-to-sqlite/pulls/6 | Broke for me: very old checkins from 2010 had no source set. | 205429375 | pull | {

"url": "https://api.github.com/repos/dogsheep/swarm-to-sqlite/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 592844348 | MDExOlB1bGxSZXF1ZXN0Mzk3NzQ5NjUz | 714 | --metadata accepts YAML as well as JSON | 9599 | closed | 0 | 1 | 2020-04-02T18:36:02Z | 2020-04-02T19:30:54Z | 2020-04-02T19:30:54Z | OWNER | simonw/datasette/pulls/714 | Refs #713. Still needs tests and documentation. | 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/714/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 |