issue_comments

10,495 rows sorted by updated_at descending


issue >30

- JavaScript plugin hooks mechanism similar to pluggy 47

- Redesign default .json format 46

- Updated Dockerfile with SpatiaLite version 5.0 45

- Port Datasette to ASGI 42

- Authentication (and permissions) as a core concept 40

- await datasette.client.get(path) mechanism for executing internal requests 33

- Maintain an in-memory SQLite table of connected databases and their tables 32

- Ability to sort (and paginate) by column 31

- link_or_copy_directory() error - Invalid cross-device link 28

- Export to CSV 27

- base_url configuration setting 27

- Documentation with recommendations on running Datasette in production without using Docker 27

- Support cross-database joins 26

- Ability for a canned query to write to the database 26

- table.transform() method for advanced alter table 26

- Proof of concept for Datasette on AWS Lambda with EFS 25

- WIP: Add Gmail takeout mbox import 25

- Redesign register_output_renderer callback 24

- "datasette insert" command and plugin hook 23

- Datasette Plugins 22

- .json and .csv exports fail to apply base_url 22

- Idea: import CSV to memory, run SQL, export in a single command 22

- table.extract(...) method and "sqlite-utils extract" command 21

- Handle spatialite geometry columns better 20

- "flash messages" mechanism 20

- Move CI to GitHub Issues 20

- load_template hook doesn't work for include/extends 20

- Mechanism for storing metadata in _metadata tables 20

- ?sort=colname~numeric to sort by column cast to real 19

- Better way of representing binary data in .csv output 19

- …

| id | html_url | issue_url | node_id | user | created_at | updated_at ▲ | author_association | body | reactions | issue | performed_via_github_app |

|---|---|---|---|---|---|---|---|---|---|---|---|

| 890390342 | https://github.com/simonw/datasette/issues/1406#issuecomment-890390342 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41EkdG | simonw 9599 | 2021-07-31T18:56:35Z | 2021-07-31T18:56:35Z | OWNER | But... I've lost enough time to this already, and removing |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 890390198 | https://github.com/simonw/datasette/issues/1406#issuecomment-890390198 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41Eka2 | simonw 9599 | 2021-07-31T18:55:33Z | 2021-07-31T18:55:33Z | OWNER | To clarify: the core problem here is that an error is thrown any time you call

Maybe there's a larger problem here that I play a bit fast and loose with |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 890388656 | https://github.com/simonw/datasette/issues/1407#issuecomment-890388656 | https://api.github.com/repos/simonw/datasette/issues/1407 | IC_kwDOBm6k_c41EkCw | simonw 9599 | 2021-07-31T18:42:41Z | 2021-07-31T18:42:41Z | OWNER | I'll try |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

OSError: AF_UNIX path too long in ds_unix_domain_socket_server 957298475 | |

| 890388200 | https://github.com/simonw/datasette/issues/1407#issuecomment-890388200 | https://api.github.com/repos/simonw/datasette/issues/1407 | IC_kwDOBm6k_c41Ej7o | simonw 9599 | 2021-07-31T18:38:41Z | 2021-07-31T18:38:41Z | OWNER | The

I think what's happening here is that So for this code to work with |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

OSError: AF_UNIX path too long in ds_unix_domain_socket_server 957298475 | |

| 890259755 | https://github.com/simonw/datasette/issues/1406#issuecomment-890259755 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41EEkr | simonw 9599 | 2021-07-31T00:04:54Z | 2021-07-31T00:04:54Z | OWNER | STILL failing. I'm going to try removing all instances of |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 889599513 | https://github.com/simonw/datasette/issues/1398#issuecomment-889599513 | https://api.github.com/repos/simonw/datasette/issues/1398 | IC_kwDOBm6k_c41BjYZ | aitoehigie 192984 | 2021-07-30T03:21:49Z | 2021-07-30T03:21:49Z | NONE | Does the library support this now? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Documentation on using Datasette as a library 947044667 | |

| 889555977 | https://github.com/simonw/datasette/issues/1406#issuecomment-889555977 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41BYwJ | simonw 9599 | 2021-07-30T01:06:57Z | 2021-07-30T01:06:57Z | OWNER | Looking at the source code in Click for

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 889553052 | https://github.com/simonw/datasette/issues/1406#issuecomment-889553052 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41BYCc | simonw 9599 | 2021-07-30T00:58:43Z | 2021-07-30T00:58:43Z | OWNER | Tests are still failing in the job that calculates coverage. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 889550391 | https://github.com/simonw/datasette/issues/1406#issuecomment-889550391 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41BXY3 | simonw 9599 | 2021-07-30T00:49:31Z | 2021-07-30T00:49:31Z | OWNER | That fixed it. My hunch is that Click's |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 889548536 | https://github.com/simonw/datasette/issues/1406#issuecomment-889548536 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41BW74 | simonw 9599 | 2021-07-30T00:43:47Z | 2021-07-30T00:43:47Z | OWNER | Still couldn't replicate on my laptop. On a hunch, I'm going to add |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 889547142 | https://github.com/simonw/datasette/issues/1406#issuecomment-889547142 | https://api.github.com/repos/simonw/datasette/issues/1406 | IC_kwDOBm6k_c41BWmG | simonw 9599 | 2021-07-30T00:39:49Z | 2021-07-30T00:39:49Z | OWNER | It happens in CI but not on my laptop. I think I need to run the tests on my laptop like this: https://github.com/simonw/datasette/blob/121e10c29c5b412fddf0326939f1fe46c3ad9d4a/.github/workflows/test.yml#L27-L30 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Tests failing with FileNotFoundError in runner.isolated_filesystem 956303470 | |

| 889539227 | https://github.com/simonw/datasette/issues/1241#issuecomment-889539227 | https://api.github.com/repos/simonw/datasette/issues/1241 | IC_kwDOBm6k_c41BUqb | simonw 9599 | 2021-07-30T00:15:26Z | 2021-07-30T00:15:26Z | OWNER | One possible treatment:

```html
<style>
a.action-button {
    display: inline-block;
    border: 1.5px solid #666;
    border-radius: 0.5em;
    background-color: #ffffff;
    color: #666;
    padding: 0.1em 0.8em;
    text-decoration: none;
    margin-right: 0.3em;
    font-size: 0.85em;
}
</style>
{% if query.sql and allow_execute_sql %}
View and edit SQL
{% endif %}
Copy and share link
```

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Share button for copying current URL 814595021 | |

| 889525741 | https://github.com/simonw/datasette/issues/1405#issuecomment-889525741 | https://api.github.com/repos/simonw/datasette/issues/1405 | IC_kwDOBm6k_c41BRXt | simonw 9599 | 2021-07-29T23:33:30Z | 2021-07-29T23:33:30Z | OWNER | New documentation section for |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

utils.parse_metadata() should be a documented internal function 955316250 | |

| 888694261 | https://github.com/simonw/datasette/issues/1405#issuecomment-888694261 | https://api.github.com/repos/simonw/datasette/issues/1405 | IC_kwDOBm6k_c40-GX1 | simonw 9599 | 2021-07-28T23:52:21Z | 2021-07-28T23:52:21Z | OWNER | Document that it can raise a |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

utils.parse_metadata() should be a documented internal function 955316250 | |

| 888694144 | https://github.com/simonw/datasette/issues/1405#issuecomment-888694144 | https://api.github.com/repos/simonw/datasette/issues/1405 | IC_kwDOBm6k_c40-GWA | simonw 9599 | 2021-07-28T23:51:59Z | 2021-07-28T23:51:59Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

utils.parse_metadata() should be a documented internal function 955316250 | ||

| 888075098 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-888075098 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | IC_kwDODFE5qs407vNa | maxhawkins 28565 | 2021-07-28T07:18:56Z | 2021-07-28T07:18:56Z | NONE |

I did some investigation into this issue and made a fix here. The problem was that some messages (like gchat logs) don't have a @simonw While looking into this I found something unexpected about how sqlite_utils handles upserts if the pkey column is |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 887095569 | https://github.com/simonw/datasette/issues/1404#issuecomment-887095569 | https://api.github.com/repos/simonw/datasette/issues/1404 | IC_kwDOBm6k_c404AER | simonw 9599 | 2021-07-26T23:27:07Z | 2021-07-26T23:27:07Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

`register_routes()` hook should take `datasette` argument 953352015 | ||

| 886969541 | https://github.com/simonw/datasette/issues/1402#issuecomment-886969541 | https://api.github.com/repos/simonw/datasette/issues/1402 | IC_kwDOBm6k_c403hTF | simonw 9599 | 2021-07-26T19:31:40Z | 2021-07-26T19:31:40Z | OWNER | Datasette could do a pretty good job of this by default, using I could also provide a mechanism to customize these - in particular to add images of some sort. It feels like something that should tie in to the metadata mechanism. |

{

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} |

feature request: social meta tags 951185411 | |

| 886968648 | https://github.com/simonw/datasette/issues/1402#issuecomment-886968648 | https://api.github.com/repos/simonw/datasette/issues/1402 | IC_kwDOBm6k_c403hFI | simonw 9599 | 2021-07-26T19:30:14Z | 2021-07-26T19:30:14Z | OWNER | I really like this idea. I was thinking it might make a good plugin, but there's not a great mechanism for plugins to inject extra |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

feature request: social meta tags 951185411 | |

| 886241674 | https://github.com/dogsheep/hacker-news-to-sqlite/issues/3#issuecomment-886241674 | https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/3 | IC_kwDODtX3eM400vmK | simonw 9599 | 2021-07-25T18:41:17Z | 2021-07-25T18:41:17Z | MEMBER | Got a TIL out of this: https://til.simonwillison.net/jq/extracting-objects-recursively |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Use HN algolia endpoint to retrieve trees 952189173 | |

| 886237834 | https://github.com/dogsheep/hacker-news-to-sqlite/issues/3#issuecomment-886237834 | https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/3 | IC_kwDODtX3eM400uqK | simonw 9599 | 2021-07-25T18:05:32Z | 2021-07-25T18:05:32Z | MEMBER | If you hit the endpoint for a comment that's part of a thread you get that comment and its recursive children: https://hn.algolia.com/api/v1/items/27941552 You can tell that it's not the top-level because the

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Use HN algolia endpoint to retrieve trees 952189173 | |
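The nested response described in the comment above can be flattened with a short recursive walk. This is a minimal sketch, assuming each item is a dict carrying `id`, `parent_id` and a `children` list; that shape is an assumption about the endpoint's output, and `flatten_tree` is a hypothetical helper name rather than anything from hacker-news-to-sqlite.

```python
def flatten_tree(item):
    """Yield an item and all of its descendants, depth first.

    Assumes each item is a dict with an optional "children" list
    (an assumed response shape, not confirmed by the comment above).
    """
    children = item.get("children") or []
    # Emit the item itself, without the nested children list
    yield {key: value for key, value in item.items() if key != "children"}
    for child in children:
        yield from flatten_tree(child)


# Hand-written sample shaped like the assumed response
sample = {
    "id": 1,
    "parent_id": None,
    "children": [
        {
            "id": 2,
            "parent_id": 1,
            "children": [{"id": 3, "parent_id": 2, "children": []}],
        },
        {"id": 4, "parent_id": 1, "children": []},
    ],
}
rows = list(flatten_tree(sample))
```

Each yielded row keeps its `parent_id`, so the tree can be rebuilt later from a single flat table.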

| 886142671 | https://github.com/dogsheep/hacker-news-to-sqlite/issues/3#issuecomment-886142671 | https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/3 | IC_kwDODtX3eM400XbP | simonw 9599 | 2021-07-25T03:51:05Z | 2021-07-25T03:51:05Z | MEMBER | Prototype: |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Use HN algolia endpoint to retrieve trees 952189173 | |

| 886140431 | https://github.com/dogsheep/hacker-news-to-sqlite/issues/2#issuecomment-886140431 | https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/2 | IC_kwDODtX3eM400W4P | simonw 9599 | 2021-07-25T03:12:57Z | 2021-07-25T03:12:57Z | MEMBER | I'm going to build a general-purpose |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Command for fetching Hacker News threads from the search API 952179830 | |

| 886136224 | https://github.com/dogsheep/hacker-news-to-sqlite/issues/2#issuecomment-886136224 | https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/2 | IC_kwDODtX3eM400V2g | simonw 9599 | 2021-07-25T02:08:29Z | 2021-07-25T02:08:29Z | MEMBER | Prototype: |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Command for fetching Hacker News threads from the search API 952179830 | |

| 886135922 | https://github.com/dogsheep/hacker-news-to-sqlite/issues/2#issuecomment-886135922 | https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/2 | IC_kwDODtX3eM400Vxy | simonw 9599 | 2021-07-25T02:06:20Z | 2021-07-25T02:06:20Z | MEMBER | https://hn.algolia.com/api/v1/search_by_date?query=simonwillison.net&restrictSearchableAttributes=url looks like it does what I want. https://hn.algolia.com/api/v1/search_by_date?query=simonwillison.net&restrictSearchableAttributes=url&hitsPerPage=1000 - returns 1000 at once. Otherwise you have to paginate using https://www.algolia.com/doc/api-reference/api-parameters/hitsPerPage/ says 1000 is the maximum. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Command for fetching Hacker News threads from the search API 952179830 | |
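The search URL quoted in the comment above can be built programmatically. This is a minimal sketch that only constructs the URL and makes no network request; `search_url` is a hypothetical helper name, and the 1000 cap reflects the maximum mentioned in the comment.

```python
from urllib.parse import urlencode

BASE_URL = "https://hn.algolia.com/api/v1/search_by_date"
MAX_HITS_PER_PAGE = 1000  # the maximum mentioned in the comment above


def search_url(domain, hits_per_page=MAX_HITS_PER_PAGE):
    """Build a search_by_date URL restricted to the url attribute."""
    if not 1 <= hits_per_page <= MAX_HITS_PER_PAGE:
        raise ValueError("hits_per_page must be between 1 and 1000")
    params = {
        "query": domain,
        "restrictSearchableAttributes": "url",
        "hitsPerPage": hits_per_page,
    }
    return BASE_URL + "?" + urlencode(params)


url = search_url("simonwillison.net")
```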

| 886135562 | https://github.com/dogsheep/hacker-news-to-sqlite/issues/2#issuecomment-886135562 | https://api.github.com/repos/dogsheep/hacker-news-to-sqlite/issues/2 | IC_kwDODtX3eM400VsK | simonw 9599 | 2021-07-25T02:01:11Z | 2021-07-25T02:01:11Z | MEMBER | That page doesn't have an API but does look easy to scrape. The other option here is the HN Search API powered by Algolia, documented at https://hn.algolia.com/api |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Command for fetching Hacker News threads from the search API 952179830 | |

| 886122696 | https://github.com/simonw/sqlite-utils/issues/251#issuecomment-886122696 | https://api.github.com/repos/simonw/sqlite-utils/issues/251 | IC_kwDOCGYnMM400SjI | simonw 9599 | 2021-07-24T23:21:32Z | 2021-07-24T23:21:32Z | OWNER |

This is a bit verbose - and having added New idea: ditch the sub-sub-commands and move the or: or |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"sqlite-utils convert" command to replace the separate "sqlite-transform" tool 841377702 | |

| 886117120 | https://github.com/simonw/sqlite-utils/issues/299#issuecomment-886117120 | https://api.github.com/repos/simonw/sqlite-utils/issues/299 | IC_kwDOCGYnMM400RMA | simonw 9599 | 2021-07-24T22:12:01Z | 2021-07-24T22:12:01Z | OWNER | Documentation here: https://sqlite-utils.datasette.io/en/latest/cli.html#showing-the-schema |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Ability to see just specific table schemas with `sqlite-utils schema` 952154468 | |

| 885964242 | https://github.com/dogsheep/github-to-sqlite/pull/65#issuecomment-885964242 | https://api.github.com/repos/dogsheep/github-to-sqlite/issues/65 | IC_kwDODFdgUs40zr3S | khimaros 231498 | 2021-07-23T23:45:35Z | 2021-07-23T23:45:35Z | NONE | @simonw is this PR of interest to you? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

basic support for events 923270900 | |

| 885098025 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-885098025 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | IC_kwDODFE5qs40wYYp | UtahDave 306240 | 2021-07-22T17:47:50Z | 2021-07-22T17:47:50Z | NONE | Hi @maxhawkins , I'm sorry, I haven't had any time to work on this. I'll have some time tomorrow to test your commits. I think they look great. I'm great with your commits superseding my initial attempt here. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 885094284 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-885094284 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | IC_kwDODFE5qs40wXeM | maxhawkins 28565 | 2021-07-22T17:41:32Z | 2021-07-22T17:41:32Z | NONE | I added a follow-up commit that deals with emails that don't have a |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 885022230 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-885022230 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | IC_kwDODFE5qs40wF4W | maxhawkins 28565 | 2021-07-22T15:51:46Z | 2021-07-22T15:51:46Z | NONE | One thing I noticed is this importer doesn't save attachments along with the body of the emails. It would be nice if those got stored as blobs in a separate attachments table so attachments can be included while fetching search results. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 884672647 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-884672647 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | IC_kwDODFE5qs40uwiH | maxhawkins 28565 | 2021-07-22T05:56:31Z | 2021-07-22T14:03:08Z | NONE | How does this commit look? https://github.com/maxhawkins/google-takeout-to-sqlite/commit/72802a83fee282eb5d02d388567731ba4301050d It seems that Takeout's mbox format is pretty simple, so we can get away with just splitting the file on lines beginning with I was able to load a 12GB takeout mbox without the program using more than a couple hundred MB of memory during the import process. It does make us lose the progress bar, but maybe I can add that back in a later commit. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |
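The streaming approach described in the comment above (split the file on delimiter lines instead of loading it whole) might look roughly like this. The delimiter is missing from the extracted comment, so the sketch assumes the classic mbox separator prefix `From `; `iter_mbox_messages` is a hypothetical name and this is not the code from the linked commit.

```python
import io


def iter_mbox_messages(lines, delimiter=b"From "):
    """Yield each message as bytes, holding only one message in memory.

    `delimiter` is an assumption: the classic mbox separator line prefix.
    """
    current = []
    for line in lines:
        # A delimiter line starts a new message, so flush the previous one
        if line.startswith(delimiter) and current:
            yield b"".join(current)
            current = []
        current.append(line)
    if current:
        yield b"".join(current)


# Tiny in-memory stand-in for a large mbox file opened in binary mode
sample = io.BytesIO(
    b"From 123@xxx Mon Jan 01 00:00:00 2021\n"
    b"Subject: first\n"
    b"\n"
    b"Hello\n"
    b"From 456@xxx Mon Jan 01 00:00:01 2021\n"
    b"Subject: second\n"
    b"\n"
    b"World\n"
)
messages = list(iter_mbox_messages(sample))
```

Because the generator iterates over the file object line by line, peak memory is bounded by the largest single message rather than by the size of the mbox. Body lines that themselves begin with `From ` would be mis-split unless the file escapes them, which this sketch does not check.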

| 884910320 | https://github.com/simonw/datasette/issues/1401#issuecomment-884910320 | https://api.github.com/repos/simonw/datasette/issues/1401 | IC_kwDOBm6k_c40vqjw | fgregg 536941 | 2021-07-22T13:26:01Z | 2021-07-22T13:26:01Z | CONTRIBUTOR | ordered lists didn't work either, btw |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

unordered list is not rendering bullet points in description_html on database page 950664971 | |

| 884688833 | https://github.com/dogsheep/dogsheep-photos/issues/32#issuecomment-884688833 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/32 | IC_kwDOD079W840u0fB | aaronyih1 10793464 | 2021-07-22T06:40:25Z | 2021-07-22T06:40:25Z | NONE | The solution here is to upload an image to the bucket first. It is caused because it does not properly handle the case when there are no images in the bucket. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

KeyError: 'Contents' on running upload 803333769 | |
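The failure described above can be avoided by not indexing `Contents` directly. This is a minimal sketch, assuming the listing is a dict in which the `Contents` key is simply absent for an empty bucket; `object_keys` is a hypothetical helper, not the dogsheep-photos code.

```python
def object_keys(response):
    """Return object keys from a list-objects style response dict.

    Uses .get() so an empty bucket (no "Contents" key) yields an empty
    list instead of raising KeyError.
    """
    return [obj["Key"] for obj in response.get("Contents", [])]


# Hand-written responses shaped like the assumed listing output
empty_bucket = {"KeyCount": 0, "IsTruncated": False}
populated_bucket = {"KeyCount": 1, "Contents": [{"Key": "photo.jpg"}]}

empty_keys = object_keys(empty_bucket)
populated_keys = object_keys(populated_bucket)
```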

| 817403642 | https://github.com/simonw/datasette/pull/1296#issuecomment-817403642 | https://api.github.com/repos/simonw/datasette/issues/1296 | MDEyOklzc3VlQ29tbWVudDgxNzQwMzY0Mg== | codecov[bot] 22429695 | 2021-04-12T00:29:05Z | 2021-07-20T08:52:12Z | NONE | Codecov Report

```diff
@@ Coverage Diff @@
main #1296 +/-
==========================================
- Coverage 91.62% 91.51% -0.12%
```

Impacted Files: datasette/tracer.py (Coverage Δ)

Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Dockerfile: use Ubuntu 20.10 as base 855446829 | |

| 882542519 | https://github.com/simonw/datasette/pull/1400#issuecomment-882542519 | https://api.github.com/repos/simonw/datasette/issues/1400 | IC_kwDOBm6k_c40moe3 | codecov[bot] 22429695 | 2021-07-19T13:20:52Z | 2021-07-19T13:20:52Z | NONE | Codecov Report

```diff
@@ Coverage Diff @@
main #1400 +/-
=======================================
Coverage 91.62% 91.62%
```

Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Bump black from 21.6b0 to 21.7b0 947640902 | |

| 882138084 | https://github.com/simonw/datasette/issues/123#issuecomment-882138084 | https://api.github.com/repos/simonw/datasette/issues/123 | IC_kwDOBm6k_c40lFvk | simonw 9599 | 2021-07-19T00:04:31Z | 2021-07-19T00:04:31Z | OWNER | I've been thinking more about this one today too. An extension of this (touched on in #417, Datasette Library) would be to support pointing Datasette at a directory and having it automatically load any CSV files it finds anywhere in that folder or its descendants - either loading them fully, or providing a UI that allows users to select a file to open it in Datasette. For larger files I think the right thing to do is import them into an on-disk SQLite database, which is limited only by available disk space. For smaller files loading them into an in-memory database should work fine. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Datasette serve should accept paths/URLs to CSVs and other file formats 275125561 | |
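The idea in the comment above (small CSV files into an in-memory database, larger ones into an on-disk file) can be sketched with the standard library alone. This is an illustration under assumptions, not Datasette's implementation: `load_csv` is a hypothetical helper, and every column is created without a declared type.

```python
import csv
import io
import sqlite3


def quote_identifier(name):
    """Quote a table or column name for use in SQLite DDL."""
    return '"{}"'.format(name.replace('"', '""'))


def load_csv(fp, table, db_path=":memory:"):
    """Load CSV rows from a text file object into a SQLite table.

    Pass a file path as db_path for large files; the default keeps
    everything in memory.
    """
    reader = csv.reader(fp)
    headers = next(reader)
    conn = sqlite3.connect(db_path)
    columns = ", ".join(quote_identifier(h) for h in headers)
    placeholders = ", ".join("?" for _ in headers)
    conn.execute("CREATE TABLE {} ({})".format(quote_identifier(table), columns))
    # executemany consumes the reader lazily, one row at a time
    conn.executemany(
        "INSERT INTO {} VALUES ({})".format(quote_identifier(table), placeholders),
        reader,
    )
    conn.commit()
    return conn


conn = load_csv(io.StringIO("id,name\n1,Cleo\n2,Pancakes\n"), "dogs")
rows = conn.execute("select id, name from dogs order by id").fetchall()
```

Switching between the two modes is only a matter of the `db_path` argument, so a caller could pick one based on file size.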

| 882096402 | https://github.com/simonw/datasette/issues/123#issuecomment-882096402 | https://api.github.com/repos/simonw/datasette/issues/123 | IC_kwDOBm6k_c40k7kS | RayBB 921217 | 2021-07-18T18:07:29Z | 2021-07-18T18:07:29Z | NONE | I also love the idea for this feature and wonder if it could work without having to download the whole database into memory at once if it's a rather large db. Obviously this could be slower but could have many use cases. My comment is partially inspired by this post about streaming sqlite dbs from github pages or such https://news.ycombinator.com/item?id=27016630 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Datasette serve should accept paths/URLs to CSVs and other file formats 275125561 | |

| 882091516 | https://github.com/dogsheep/dogsheep-photos/issues/32#issuecomment-882091516 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/32 | IC_kwDOD079W840k6X8 | aaronyih1 10793464 | 2021-07-18T17:29:39Z | 2021-07-18T17:33:02Z | NONE | Same here for US West (N. California) us-west-1. Running on Catalina. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

KeyError: 'Contents' on running upload 803333769 | |

| 882052852 | https://github.com/simonw/sqlite-utils/issues/297#issuecomment-882052852 | https://api.github.com/repos/simonw/sqlite-utils/issues/297 | IC_kwDOCGYnMM40kw70 | simonw 9599 | 2021-07-18T12:59:20Z | 2021-07-18T12:59:20Z | OWNER | I'm not too worried about |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Option for importing CSV data using the SQLite .import mechanism 944846776 | |

| 881932880 | https://github.com/simonw/datasette/issues/1199#issuecomment-881932880 | https://api.github.com/repos/simonw/datasette/issues/1199 | IC_kwDOBm6k_c40kTpQ | simonw 9599 | 2021-07-17T17:39:17Z | 2021-07-17T17:39:17Z | OWNER | I asked about optimizing performance on the SQLite forum and this came up as a suggestion: https://sqlite.org/forum/forumpost/9a6b9ae8e2048c8b?t=c I can start by trying this: |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Experiment with PRAGMA mmap_size=N 792652391 | |
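One way to run the experiment named in the issue title is to set the pragma on a connection and read back what SQLite reports. This is a minimal sketch; the 256 MB value and the `set_mmap_size` helper are illustrative assumptions, since the code from the original comment was not preserved.

```python
import os
import sqlite3
import tempfile


def set_mmap_size(conn, size_bytes):
    """Request memory-mapped I/O and return the value SQLite reports.

    Returns None if the pragma yields no row, which can happen on
    builds where memory-mapped I/O is unavailable.
    """
    row = conn.execute("PRAGMA mmap_size={}".format(int(size_bytes))).fetchone()
    return row[0] if row else None


# Memory mapping only applies to on-disk databases, so use a temp file
path = os.path.join(tempfile.mkdtemp(), "demo.db")
conn = sqlite3.connect(path)
conn.execute("create table t (id integer primary key)")
conn.commit()

effective = set_mmap_size(conn, 256 * 1024 * 1024)
```

The reported value may be lower than the requested one if the SQLite build caps it, so it is worth logging `effective` rather than assuming the request was honored.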

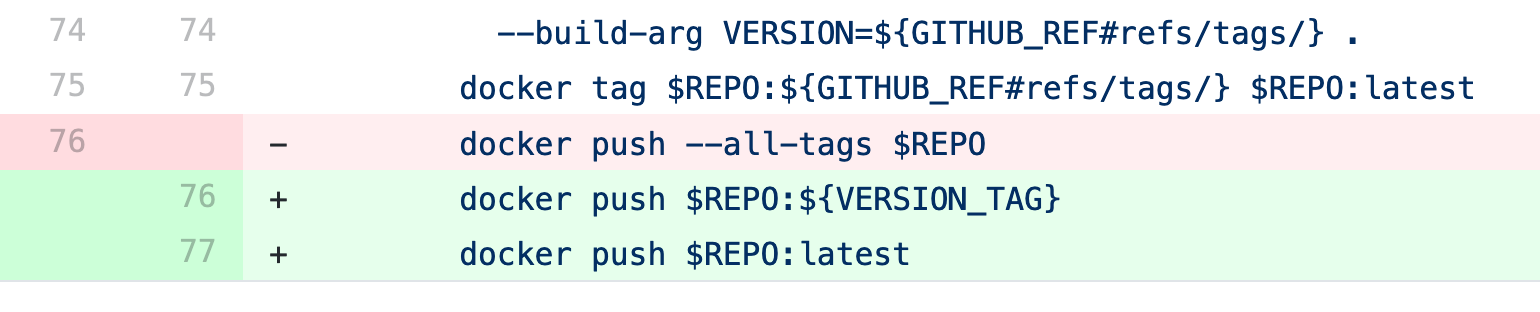

| 881686662 | https://github.com/simonw/datasette/issues/1396#issuecomment-881686662 | https://api.github.com/repos/simonw/datasette/issues/1396 | IC_kwDOBm6k_c40jXiG | simonw 9599 | 2021-07-16T20:02:44Z | 2021-07-16T20:02:44Z | OWNER | Confirmed fixed: 0.58.1 was successfully published to Docker Hub in https://github.com/simonw/datasette/runs/3089447346 and the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 881677620 | https://github.com/simonw/datasette/issues/1231#issuecomment-881677620 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40jVU0 | simonw 9599 | 2021-07-16T19:44:12Z | 2021-07-16T19:44:12Z | OWNER | That fixed the race condition in the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881674857 | https://github.com/simonw/datasette/issues/1231#issuecomment-881674857 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40jUpp | simonw 9599 | 2021-07-16T19:38:39Z | 2021-07-16T19:38:39Z | OWNER | I can't replicate the race condition locally with or without this patch. I'm going to push the commit and then test the CI run from |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881671706 | https://github.com/simonw/datasette/issues/1231#issuecomment-881671706 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40jT4a | simonw 9599 | 2021-07-16T19:32:05Z | 2021-07-16T19:32:05Z | OWNER | The test suite passes with that change. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881668759 | https://github.com/simonw/datasette/issues/1231#issuecomment-881668759 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40jTKX | simonw 9599 | 2021-07-16T19:27:46Z | 2021-07-16T19:27:46Z | OWNER | Second attempt at this:

```diff
diff --git a/datasette/app.py b/datasette/app.py
index 5976d8b..5f348cb 100644
--- a/datasette/app.py
+++ b/datasette/app.py
@@ -224,6 +224,7 @@ class Datasette:
         self.inspect_data = inspect_data
         self.immutables = set(immutables or [])
         self.databases = collections.OrderedDict()
+        self._refresh_schemas_lock = asyncio.Lock()
         self.crossdb = crossdb
         if memory or crossdb or not self.files:
             self.add_database(Database(self, is_memory=True), name="_memory")
@@ -332,6 +333,12 @@ class Datasette:
         self.client = DatasetteClient(self)
```

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881665383 | https://github.com/simonw/datasette/issues/1231#issuecomment-881665383 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40jSVn | simonw 9599 | 2021-07-16T19:21:35Z | 2021-07-16T19:21:35Z | OWNER | https://stackoverflow.com/a/25799871/6083 has a good example of using

```python
stuff_lock = asyncio.Lock()

async def get_stuff(url):
    async with stuff_lock:
        if url in cache:
            return cache[url]
        stuff = await aiohttp.request('GET', url)
        cache[url] = stuff
        return stuff
```

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881664408 | https://github.com/simonw/datasette/issues/1231#issuecomment-881664408 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40jSGY | simonw 9599 | 2021-07-16T19:19:35Z | 2021-07-16T19:19:35Z | OWNER | The only place that calls Ideally only one call to |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |
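The goal stated in the comment above, that only one caller should perform the initialization while concurrent callers wait, can be sketched with `asyncio.Lock` plus a flag checked inside the lock. `SchemaCache` and its attributes are hypothetical names for illustration; this is not the Datasette implementation.

```python
import asyncio


class SchemaCache:
    """Toy stand-in for an object with a one-time async setup step."""

    def __init__(self):
        self._lock = asyncio.Lock()
        self.initialized = False
        self.init_calls = 0

    async def refresh(self):
        # Only one coroutine at a time may enter this block
        async with self._lock:
            if not self.initialized:
                # Yield to the event loop, as real setup work would
                await asyncio.sleep(0)
                self.init_calls += 1
                self.initialized = True


async def main():
    cache = SchemaCache()
    # Ten concurrent callers, mimicking simultaneous requests
    await asyncio.gather(*(cache.refresh() for _ in range(10)))
    return cache.init_calls


init_calls = asyncio.run(main())
```

Checking the flag inside the lock matters: without the lock, several coroutines could see `initialized` as false across the `await` and each run the setup, which matches the repeated "table databases already exists" errors reported in this issue.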

| 881663968 | https://github.com/simonw/datasette/issues/1231#issuecomment-881663968 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40jR_g | simonw 9599 | 2021-07-16T19:18:42Z | 2021-07-16T19:18:42Z | OWNER | The race condition happens inside this method - initially with the call to |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881204782 | https://github.com/simonw/datasette/issues/1231#issuecomment-881204782 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40hh4u | simonw 9599 | 2021-07-16T06:14:12Z | 2021-07-16T06:14:12Z | OWNER | Here's the traceback I got from

```
tests/test_utils.py . [100%]

=================================== FAILURES ===================================
____ testgraphql_examples[path0] _____

ds = <datasette.app.Datasette object at 0x7f6b8b6f8fd0>
path = PosixPath('/home/runner/work/datasette-graphql/datasette-graphql/examples/filters.md')

tests/test_graphql.py:142: AssertionError
----------------------------- Captured stderr call -----------------------------
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
table databases already exists
Traceback (most recent call last):
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/app.py", line 1171, in route_path
    response = await view(request, send)
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/views/base.py", line 151, in view
    request, **request.scope["url_route"]["kwargs"]
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/views/base.py", line 123, in dispatch_request
    await self.ds.refresh_schemas()
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/app.py", line 338, in refresh_schemas
    await init_internal_db(internal_db)
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/utils/internal_db.py", line 16, in init_internal_db
    block=True,
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/database.py", line 102, in execute_write
    return await self.execute_write_fn(_inner, block=block)
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/database.py", line 118, in execute_write_fn
    raise result
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/database.py", line 139, in _execute_writes
    result = task.fn(conn)
  File "/opt/hostedtoolcache/Python/3.7.11/x64/lib/python3.7/site-packages/datasette/database.py", line 100, in _inner
    return conn.execute(sql, params or [])
sqlite3.OperationalError: table databases already exists
```

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881204343 | https://github.com/simonw/datasette/issues/1231#issuecomment-881204343 | https://api.github.com/repos/simonw/datasette/issues/1231 | IC_kwDOBm6k_c40hhx3 | simonw 9599 | 2021-07-16T06:13:11Z | 2021-07-16T06:13:11Z | OWNER | This just broke the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Race condition errors in new refresh_schemas() mechanism 811367257 | |

| 881129149 | https://github.com/simonw/datasette/issues/1394#issuecomment-881129149 | https://api.github.com/repos/simonw/datasette/issues/1394 | IC_kwDOBm6k_c40hPa9 | simonw 9599 | 2021-07-16T02:23:32Z | 2021-07-16T02:23:32Z | OWNER | Wrote about this in the annotated release notes for 0.58: https://simonwillison.net/2021/Jul/16/datasette-058/ |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Big performance boost on faceting: skip the inner order by 944870799 | |

| 881125124 | https://github.com/simonw/datasette/issues/759#issuecomment-881125124 | https://api.github.com/repos/simonw/datasette/issues/759 | IC_kwDOBm6k_c40hOcE | simonw 9599 | 2021-07-16T02:11:48Z | 2021-07-16T02:11:54Z | OWNER | I added |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

fts search on a column doesn't work anymore due to escape_fts 612673948 | |

| 880967052 | https://github.com/simonw/datasette/issues/1396#issuecomment-880967052 | https://api.github.com/repos/simonw/datasette/issues/1396 | MDEyOklzc3VlQ29tbWVudDg4MDk2NzA1Mg== | simonw 9599 | 2021-07-15T19:47:25Z | 2021-07-15T19:47:25Z | OWNER | Actually I'm going to close this now and re-open it if the problem occurs again in the future. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 880900534 | https://github.com/simonw/datasette/issues/1394#issuecomment-880900534 | https://api.github.com/repos/simonw/datasette/issues/1394 | MDEyOklzc3VlQ29tbWVudDg4MDkwMDUzNA== | simonw 9599 | 2021-07-15T17:58:03Z | 2021-07-15T17:58:03Z | OWNER | Started a conversation about this on the SQLite forum: https://sqlite.org/forum/forumpost/2d76f2bcf65d256a?t=h |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Big performance boost on faceting: skip the inner order by 944870799 | |

| 880374156 | https://github.com/simonw/datasette/issues/1396#issuecomment-880374156 | https://api.github.com/repos/simonw/datasette/issues/1396 | MDEyOklzc3VlQ29tbWVudDg4MDM3NDE1Ng== | simonw 9599 | 2021-07-15T04:03:18Z | 2021-07-15T04:03:18Z | OWNER | I fixed |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 880372149 | https://github.com/simonw/datasette/issues/1396#issuecomment-880372149 | https://api.github.com/repos/simonw/datasette/issues/1396 | MDEyOklzc3VlQ29tbWVudDg4MDM3MjE0OQ== | simonw 9599 | 2021-07-15T03:56:49Z | 2021-07-15T03:56:49Z | OWNER | I'm going to leave this open until I next successfully publish a new version. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 880326049 | https://github.com/simonw/datasette/issues/1396#issuecomment-880326049 | https://api.github.com/repos/simonw/datasette/issues/1396 | MDEyOklzc3VlQ29tbWVudDg4MDMyNjA0OQ== | simonw 9599 | 2021-07-15T01:50:05Z | 2021-07-15T01:50:05Z | OWNER | I think I made a mistake in this commit: https://github.com/simonw/datasette/commit/0486303b60ce2784fd2e2ecdbecf304b7d6e6659

It looks like I copied |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 880325362 | https://github.com/simonw/datasette/issues/1396#issuecomment-880325362 | https://api.github.com/repos/simonw/datasette/issues/1396 | MDEyOklzc3VlQ29tbWVudDg4MDMyNTM2Mg== | simonw 9599 | 2021-07-15T01:48:11Z | 2021-07-15T01:48:11Z | OWNER | In particular these three lines: https://github.com/simonw/datasette/blob/084cfe1e00e1a4c0515390a513aca286eeea20c2/.github/workflows/publish.yml#L117-L119 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 880325004 | https://github.com/simonw/datasette/issues/1396#issuecomment-880325004 | https://api.github.com/repos/simonw/datasette/issues/1396 | MDEyOklzc3VlQ29tbWVudDg4MDMyNTAwNA== | simonw 9599 | 2021-07-15T01:47:17Z | 2021-07-15T01:47:17Z | OWNER | This is the part of the publish workflow that failed and threw the "invalid reference format" error: https://github.com/simonw/datasette/blob/084cfe1e00e1a4c0515390a513aca286eeea20c2/.github/workflows/publish.yml#L100-L119 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 880324637 | https://github.com/simonw/datasette/issues/1396#issuecomment-880324637 | https://api.github.com/repos/simonw/datasette/issues/1396 | MDEyOklzc3VlQ29tbWVudDg4MDMyNDYzNw== | simonw 9599 | 2021-07-15T01:46:26Z | 2021-07-15T01:46:26Z | OWNER | I manually published the Docker image using https://github.com/simonw/datasette/actions/workflows/push_docker_tag.yml https://github.com/simonw/datasette/runs/3072505126 The 0.58 release shows up on https://hub.docker.com/r/datasetteproject/datasette/tags?page=1&ordering=last_updated now - BUT the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"invalid reference format" publishing Docker image 944903881 | |

| 880287483 | https://github.com/simonw/datasette/issues/1394#issuecomment-880287483 | https://api.github.com/repos/simonw/datasette/issues/1394 | MDEyOklzc3VlQ29tbWVudDg4MDI4NzQ4Mw== | simonw 9599 | 2021-07-15T00:01:47Z | 2021-07-15T00:01:47Z | OWNER | I wrote this code:

```python
import re

import pytest

# Match a trailing "order by column [desc]" and capture everything before it
_order_by_re = re.compile(r"(^.*) order by [a-zA-Z_][a-zA-Z0-9_]+( desc)?$", re.DOTALL)
# Same, for column names quoted in square brackets, e.g. [select]
_order_by_braces_re = re.compile(r"(^.*) order by \[[^\]]+\]( desc)?$", re.DOTALL)


def strip_order_by(sql):
    for regex in (_order_by_re, _order_by_braces_re):
        match = regex.match(sql)
        if match is not None:
            return match.group(1)
    return sql


@pytest.mark.parametrize(
    "sql,expected",
    [
        ("blah", "blah"),
        ("select * from foo", "select * from foo"),
        ("select * from foo order by bah", "select * from foo"),
        ("select * from foo order by bah desc", "select * from foo"),
        ("select * from foo order by [select]", "select * from foo"),
        ("select * from foo order by [select] desc", "select * from foo"),
    ],
)
def test_strip_order_by(sql, expected):
    assert strip_order_by(sql) == expected
```

But it turns out I don't need it! The SQL that is passed to the facet class is created by this code: https://github.com/simonw/datasette/blob/ba11ef27edd6981eeb26d7ecf5aa236707f5f8ce/datasette/views/table.py#L677-L684 And the only place that uses that So I can change that to |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Big performance boost on faceting: skip the inner order by 944870799 | |

| 880278256 | https://github.com/simonw/datasette/issues/1394#issuecomment-880278256 | https://api.github.com/repos/simonw/datasette/issues/1394 | MDEyOklzc3VlQ29tbWVudDg4MDI3ODI1Ng== | simonw 9599 | 2021-07-14T23:35:18Z | 2021-07-14T23:35:18Z | OWNER | The challenge here is that faceting doesn't currently modify the inner SQL at all - it wraps it so that it can work against any SQL statement (though Datasette itself does not yet take advantage of that ability, only offering faceting on table pages). So just removing the order by wouldn't be appropriate if the inner query looked something like this:

In SQLite the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Big performance boost on faceting: skip the inner order by 944870799 | |
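The comment above describes faceting as wrapping the inner SQL rather than modifying it. A minimal sketch of that wrapping pattern, using Python's `sqlite3` module, follows. The `facet_sql` helper, the `items` table and the exact shape of the wrapper query are assumptions made for illustration, not Datasette's actual implementation.

```python
import sqlite3


def facet_sql(inner_sql, column):
    # Hypothetical helper: wrap any inner query as a subquery and count
    # values of one column, without touching the inner SQL itself.
    return (
        "select {col} as value, count(*) as n from ({inner}) "
        "group by {col} order by n desc, value"
    ).format(col=column, inner=inner_sql)


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    create table items (id integer primary key, category text);
    insert into items (category) values ('a'), ('b'), ('a'), ('c'), ('a'), ('b');
    """
)

# The same facet computed over an ordered and an unordered inner query
rows_with_order = conn.execute(
    facet_sql("select * from items order by id desc", "category")
).fetchall()
rows_without_order = conn.execute(
    facet_sql("select * from items", "category")
).fetchall()

print(rows_with_order)
print(rows_without_order)
```

For an inner query with no `limit`, the inner `order by` changes only row order, so both calls produce the same counts. An inner query that combines `order by` with `limit` selects different rows depending on the ordering, so the clause cannot be dropped blindly there.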

| 880259255 | https://github.com/simonw/sqlite-utils/issues/297#issuecomment-880259255 | https://api.github.com/repos/simonw/sqlite-utils/issues/297 | MDEyOklzc3VlQ29tbWVudDg4MDI1OTI1NQ== | simonw 9599 | 2021-07-14T22:48:41Z | 2021-07-14T22:48:41Z | OWNER | Should also take advantage of |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Option for importing CSV data using the SQLite .import mechanism 944846776 | |

| 880257587 | https://github.com/simonw/sqlite-utils/issues/297#issuecomment-880257587 | https://api.github.com/repos/simonw/sqlite-utils/issues/297 | MDEyOklzc3VlQ29tbWVudDg4MDI1NzU4Nw== | simonw 9599 | 2021-07-14T22:44:05Z | 2021-07-14T22:44:05Z | OWNER | https://unix.stackexchange.com/a/642364 suggests you can also use this to import from stdin, like so: Here the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Option for importing CSV data using the SQLite .import mechanism 944846776 | |

| 880256865 | https://github.com/simonw/sqlite-utils/issues/297#issuecomment-880256865 | https://api.github.com/repos/simonw/sqlite-utils/issues/297 | MDEyOklzc3VlQ29tbWVudDg4MDI1Njg2NQ== | simonw 9599 | 2021-07-14T22:42:11Z | 2021-07-14T22:42:11Z | OWNER | Potential workaround for missing

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Option for importing CSV data using the SQLite .import mechanism 944846776 | |

| 880256058 | https://github.com/simonw/sqlite-utils/issues/297#issuecomment-880256058 | https://api.github.com/repos/simonw/sqlite-utils/issues/297 | MDEyOklzc3VlQ29tbWVudDg4MDI1NjA1OA== | simonw 9599 | 2021-07-14T22:40:01Z | 2021-07-14T22:40:47Z | OWNER | Full docs here: https://www.sqlite.org/draft/cli.html#csv One catch: how this works has changed in recent SQLite versions: https://www.sqlite.org/changes.html

The "skip" feature is particularly important to understand. https://www.sqlite.org/draft/cli.html#csv says:

But the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Option for importing CSV data using the SQLite .import mechanism 944846776 | |

| 880153069 | https://github.com/simonw/datasette/issues/268#issuecomment-880153069 | https://api.github.com/repos/simonw/datasette/issues/268 | MDEyOklzc3VlQ29tbWVudDg4MDE1MzA2OQ== | simonw 9599 | 2021-07-14T19:31:00Z | 2021-07-14T19:31:00Z | OWNER | ... though interestingly I can't replicate that error on That's running https://latest.datasette.io/-/versions SQLite 3.35.4 whereas https://www.niche-museums.com/-/versions is running 3.27.2 (the most recent version available with Vercel) - but there's nothing in the SQLite changelog between those two versions that suggests changes to how the FTS5 parser works. https://www.sqlite.org/changes.html |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Mechanism for ranking results from SQLite full-text search 323718842 | |

| 880150755 | https://github.com/simonw/datasette/issues/268#issuecomment-880150755 | https://api.github.com/repos/simonw/datasette/issues/268 | MDEyOklzc3VlQ29tbWVudDg4MDE1MDc1NQ== | simonw 9599 | 2021-07-14T19:26:47Z | 2021-07-14T19:29:08Z | OWNER |

Mainly that it's possible to generate SQL queries that crash with an error. This was the example that convinced me to default to escaping:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Mechanism for ranking results from SQLite full-text search 323718842 | |

| 579675357 | https://github.com/simonw/datasette/issues/651#issuecomment-579675357 | https://api.github.com/repos/simonw/datasette/issues/651 | MDEyOklzc3VlQ29tbWVudDU3OTY3NTM1Nw== | clausjuhl 2181410 | 2020-01-29T09:45:00Z | 2021-07-14T19:26:06Z | NONE | Hi Simon

Thank you for adding the escape_function, but it does not work on my datasette-installation (0.33). I've added the following file to my datasette-dir:

```python
from datasette import hookimpl


def escape_fts_query(query):
    # Wrap each whitespace-separated term in double quotes,
    # dropping any double quotes inside the term
    bits = query.split()
    return ' '.join('"{}"'.format(bit.replace('"', '')) for bit in bits)


@hookimpl
def prepare_connection(conn):
    conn.create_function("escape_fts_query", 1, escape_fts_query)
```

It has no effect on the standard queries to the tables though, as they still produce errors when including any characters like '-', '/', '+' or '?'.

Does the function only work when using custom queries, where I can include the escape_fts-function explicitly in the sql-query?

PS. I'm calling datasette with --plugins=plugins, and my other plugins work just fine.

PPS. The fts5 virtual table is created with 'sqlite3' like so:

Thanks! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

fts5 syntax error when using punctuation 539590148 | |
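The comment above asks whether the registered function only takes effect when it is called explicitly in SQL. A custom SQL function registered with `create_function` runs only where a query names it. The sketch below shows such an explicit call using plain `sqlite3` instead of a Datasette plugin. The sample search string is made up, and no FTS table is involved.

```python
import sqlite3


def escape_fts_query(query):
    # Same logic as the function in the comment: quote each term
    bits = query.split()
    return " ".join('"{}"'.format(bit.replace('"', "")) for bit in bits)


conn = sqlite3.connect(":memory:")
conn.create_function("escape_fts_query", 1, escape_fts_query)

# The function runs only because this SQL statement calls it by name
escaped = conn.execute(
    "select escape_fts_query(?)", ["hello-world foo/bar?"]
).fetchone()[0]
print(escaped)  # "hello-world" "foo/bar?"
```

In a custom query, the same call would presumably wrap the search parameter on the right-hand side of the `match` clause. That placement is an assumption here, since the comment does not show the query it used.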

| 879477586 | https://github.com/dogsheep/healthkit-to-sqlite/issues/12#issuecomment-879477586 | https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/12 | MDEyOklzc3VlQ29tbWVudDg3OTQ3NzU4Ng== | simonw 9599 | 2021-07-13T23:50:06Z | 2021-07-13T23:50:06Z | MEMBER | Unfortunately I don't think updating the database is practical, because the export doesn't include unique identifiers which can be used to update existing records and create new ones. Recreating from scratch works around that limitation. I've not explored workouts with SpatiaLite but that's a really good idea. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Some workout columns should be float, not text 727848625 | |

| 879309636 | https://github.com/simonw/datasette/pull/1393#issuecomment-879309636 | https://api.github.com/repos/simonw/datasette/issues/1393 | MDEyOklzc3VlQ29tbWVudDg3OTMwOTYzNg== | simonw 9599 | 2021-07-13T18:32:25Z | 2021-07-13T18:32:25Z | OWNER | Thanks |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update deploying.rst 941412189 | |

| 879277953 | https://github.com/simonw/datasette/pull/1392#issuecomment-879277953 | https://api.github.com/repos/simonw/datasette/issues/1392 | MDEyOklzc3VlQ29tbWVudDg3OTI3Nzk1Mw== | simonw 9599 | 2021-07-13T17:42:31Z | 2021-07-13T17:42:31Z | OWNER | Thanks! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update deploying.rst 941403676 | |

| 877874117 | https://github.com/dogsheep/healthkit-to-sqlite/issues/12#issuecomment-877874117 | https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/12 | MDEyOklzc3VlQ29tbWVudDg3Nzg3NDExNw== | Mjboothaus 956433 | 2021-07-11T23:03:37Z | 2021-07-11T23:03:37Z | NONE | P.s. wondering if you have explored using the spatialite functionality with the location data in workouts? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Some workout columns should be float, not text 727848625 | |

| 877835171 | https://github.com/simonw/datasette/issues/511#issuecomment-877835171 | https://api.github.com/repos/simonw/datasette/issues/511 | MDEyOklzc3VlQ29tbWVudDg3NzgzNTE3MQ== | simonw 9599 | 2021-07-11T17:23:05Z | 2021-07-11T17:23:05Z | OWNER | https://github.com/simonw/datasette/runs/3038188870?check_suite_focus=true Full copy of log here: https://gist.github.com/simonw/4b1fdd24496b989fca56bc757be345ad |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get Datasette tests passing on Windows in GitHub Actions 456578474 | |

| 877805513 | https://github.com/dogsheep/healthkit-to-sqlite/issues/12#issuecomment-877805513 | https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/12 | MDEyOklzc3VlQ29tbWVudDg3NzgwNTUxMw== | Mjboothaus 956433 | 2021-07-11T14:03:01Z | 2021-07-11T14:03:01Z | NONE | Hi Simon -- just experimenting with your excellent software! Up to this point in time I have been using the (paid) HealthFit App to export my workouts from my Apple Watch, one walk at a time, into either .GPX or .FIT format and then using another library to suck it into Python and eventually here to my "Emmaus Walking" app: https://share.streamlit.io/mjboothaus/emmaus_walking/emmaus_walking/app.py I just used I did notice the issue with various numeric fields being stored in the SQLite db as TEXT for now and just thought I'd flag it - but you've already self-reported this issue. Keep up the great work! I was curious if you have any thoughts about periodically exporting "export.zip" and how to just update the SQLite file instead of re-creating it each time. Hopefully Apple will give some thought to managing this data in a more sensible fashion as it grows over time. Ideally one could pull it from iCloud (where it is allegedly being backed up). |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Some workout columns should be float, not text 727848625 | |

| 877726495 | https://github.com/simonw/datasette/issues/511#issuecomment-877726495 | https://api.github.com/repos/simonw/datasette/issues/511 | MDEyOklzc3VlQ29tbWVudDg3NzcyNjQ5NQ== | simonw 9599 | 2021-07-11T01:32:27Z | 2021-07-11T01:32:27Z | OWNER | I'm using I'll try not using the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get Datasette tests passing on Windows in GitHub Actions 456578474 | |

| 877726288 | https://github.com/simonw/datasette/issues/511#issuecomment-877726288 | https://api.github.com/repos/simonw/datasette/issues/511 | MDEyOklzc3VlQ29tbWVudDg3NzcyNjI4OA== | simonw 9599 | 2021-07-11T01:29:41Z | 2021-07-11T01:29:41Z | OWNER | Lots of errors that look like this:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get Datasette tests passing on Windows in GitHub Actions 456578474 | |

| 877725742 | https://github.com/simonw/datasette/issues/511#issuecomment-877725742 | https://api.github.com/repos/simonw/datasette/issues/511 | MDEyOklzc3VlQ29tbWVudDg3NzcyNTc0Mg== | simonw 9599 | 2021-07-11T01:25:01Z | 2021-07-11T01:26:38Z | OWNER | That's weird. https://github.com/simonw/datasette/runs/3037862798 finished running and came up green - but actually a TON of the tests failed on Windows. Not sure why that didn't fail the whole test suite:

Also the test suite took 50 minutes on Windows! Here's a copy of the full log file for the tests on Python 3.8 on Windows: https://gist.github.com/simonw/2900ef33693c1bbda09188eb31c8212d |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get Datasette tests passing on Windows in GitHub Actions 456578474 | |

| 877725193 | https://github.com/simonw/datasette/issues/1388#issuecomment-877725193 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcyNTE5Mw== | simonw 9599 | 2021-07-11T01:18:38Z | 2021-07-11T01:18:38Z | OWNER | Wrote up a TIL: https://til.simonwillison.net/nginx/proxy-domain-sockets |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | |

| 877721003 | https://github.com/simonw/datasette/issues/1388#issuecomment-877721003 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcyMTAwMw== | simonw 9599 | 2021-07-11T00:21:19Z | 2021-07-11T00:21:19Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | ||

| 877718364 | https://github.com/simonw/datasette/issues/511#issuecomment-877718364 | https://api.github.com/repos/simonw/datasette/issues/511 | MDEyOklzc3VlQ29tbWVudDg3NzcxODM2NA== | simonw 9599 | 2021-07-10T23:54:37Z | 2021-07-10T23:54:37Z | OWNER | Looks like it's not even 10% of the way through, and already a bunch of errors:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get Datasette tests passing on Windows in GitHub Actions 456578474 | |

| 877718286 | https://github.com/simonw/datasette/issues/511#issuecomment-877718286 | https://api.github.com/repos/simonw/datasette/issues/511 | MDEyOklzc3VlQ29tbWVudDg3NzcxODI4Ng== | simonw 9599 | 2021-07-10T23:53:29Z | 2021-07-10T23:53:29Z | OWNER | Test suite on Windows seems to run a lot slower:

From https://github.com/simonw/datasette/actions/runs/1018938850 which is still going. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get Datasette tests passing on Windows in GitHub Actions 456578474 | |

| 877717791 | https://github.com/simonw/datasette/issues/511#issuecomment-877717791 | https://api.github.com/repos/simonw/datasette/issues/511 | MDEyOklzc3VlQ29tbWVudDg3NzcxNzc5MQ== | simonw 9599 | 2021-07-10T23:45:35Z | 2021-07-10T23:45:35Z | OWNER |

Good news: that line was removed in #1094. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get Datasette tests passing on Windows in GitHub Actions 456578474 | |

| 650340914 | https://github.com/simonw/datasette/pull/868#issuecomment-650340914 | https://api.github.com/repos/simonw/datasette/issues/868 | MDEyOklzc3VlQ29tbWVudDY1MDM0MDkxNA== | codecov[bot] 22429695 | 2020-06-26T18:53:02Z | 2021-07-10T23:41:42Z | NONE | Codecov Report

```diff
@@ Coverage Diff @@
           master    #868    +/-
==========================================
+ Coverage 82.91% 83.40% +0.49%
```

| Impacted Files | Coverage Δ | |
|---|---|---|
| datasette/plugins.py | | |

Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

initial windows ci setup 646448486 | |

| 877717392 | https://github.com/simonw/datasette/pull/557#issuecomment-877717392 | https://api.github.com/repos/simonw/datasette/issues/557 | MDEyOklzc3VlQ29tbWVudDg3NzcxNzM5Mg== | simonw 9599 | 2021-07-10T23:39:48Z | 2021-07-10T23:39:48Z | OWNER | Abandoning this - need to switch to using GitHub Actions for this instead. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Get tests running on Windows using Travis CI 466996584 | |

| 877717262 | https://github.com/simonw/datasette/issues/1388#issuecomment-877717262 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcxNzI2Mg== | simonw 9599 | 2021-07-10T23:37:54Z | 2021-07-10T23:37:54Z | OWNER |

I'm going to hold off on implementing this until someone asks for it. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | |

| 877716993 | https://github.com/simonw/datasette/issues/1388#issuecomment-877716993 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcxNjk5Mw== | simonw 9599 | 2021-07-10T23:34:02Z | 2021-07-10T23:34:02Z | OWNER | Figured out an example nginx configuration. This in Then run Then run nginx like this: Then hits to |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | |

| 877716359 | https://github.com/simonw/datasette/issues/1388#issuecomment-877716359 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcxNjM1OQ== | simonw 9599 | 2021-07-10T23:24:58Z | 2021-07-10T23:24:58Z | OWNER | Apparently Windows 10 has Unix domain socket support: https://bugs.python.org/issue33408

But it's not clear if this is going to work. That same issue thread (the issue is still open) suggests using |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | |

| 877716156 | https://github.com/simonw/datasette/issues/1388#issuecomment-877716156 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcxNjE1Ng== | simonw 9599 | 2021-07-10T23:22:21Z | 2021-07-10T23:22:21Z | OWNER | I don't have the Datasette test suite running on Windows yet, but I'd like it to run there some day - so ideally this test would be skipped if Unix domain sockets are not supported by the underlying operating system. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | |
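The comment above wants the test skipped when the operating system lacks Unix domain socket support. A minimal sketch of that check follows, using the standard library's `unittest` so it stays self-contained. The `UDS_SUPPORTED` flag and the test class are hypothetical names, and checking for `socket.AF_UNIX` is one assumed way to detect support.

```python
import os
import socket
import tempfile
import unittest

# Assumption: the platform supports Unix domain sockets if Python's
# socket module exposes the AF_UNIX address family.
UDS_SUPPORTED = hasattr(socket, "AF_UNIX")


@unittest.skipUnless(
    UDS_SUPPORTED, "Unix domain sockets are not supported on this platform"
)
class UnixDomainSocketTest(unittest.TestCase):
    def test_bind(self):
        # Bind a server socket to a temporary path and check the file appears
        with tempfile.TemporaryDirectory() as tmp:
            path = os.path.join(tmp, "test.sock")
            server = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
            try:
                server.bind(path)
                self.assertTrue(os.path.exists(path))
            finally:
                server.close()
```

In a pytest suite, the same condition could be passed to `pytest.mark.skipif(not UDS_SUPPORTED, reason=...)`.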

| 877715654 | https://github.com/simonw/datasette/issues/1388#issuecomment-877715654 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcxNTY1NA== | simonw 9599 | 2021-07-10T23:15:06Z | 2021-07-10T23:15:06Z | OWNER | I can run tests against it using

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | |

| 877714698 | https://github.com/simonw/datasette/issues/1388#issuecomment-877714698 | https://api.github.com/repos/simonw/datasette/issues/1388 | MDEyOklzc3VlQ29tbWVudDg3NzcxNDY5OA== | simonw 9599 | 2021-07-10T23:01:37Z | 2021-07-10T23:01:37Z | OWNER | Can test this with:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Serve using UNIX domain socket 939051549 | |

| 877691558 | https://github.com/simonw/datasette/issues/1391#issuecomment-877691558 | https://api.github.com/repos/simonw/datasette/issues/1391 | MDEyOklzc3VlQ29tbWVudDg3NzY5MTU1OA== | simonw 9599 | 2021-07-10T19:26:57Z | 2021-07-10T19:26:57Z | OWNER | The |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Stop using generated columns in fixtures.db 941300946 | |

| 877691427 | https://github.com/simonw/datasette/issues/1391#issuecomment-877691427 | https://api.github.com/repos/simonw/datasette/issues/1391 | MDEyOklzc3VlQ29tbWVudDg3NzY5MTQyNw== | simonw 9599 | 2021-07-10T19:26:00Z | 2021-07-10T19:26:00Z | OWNER | I had to run the tests locally on my macOS laptop using |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Stop using generated columns in fixtures.db 941300946 | |

| 877687196 | https://github.com/simonw/datasette/issues/1391#issuecomment-877687196 | https://api.github.com/repos/simonw/datasette/issues/1391 | MDEyOklzc3VlQ29tbWVudDg3NzY4NzE5Ng== | simonw 9599 | 2021-07-10T18:58:40Z | 2021-07-10T18:58:40Z | OWNER | I can use the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Stop using generated columns in fixtures.db 941300946 | |

| 877686784 | https://github.com/simonw/datasette/issues/1391#issuecomment-877686784 | https://api.github.com/repos/simonw/datasette/issues/1391 | MDEyOklzc3VlQ29tbWVudDg3NzY4Njc4NA== | simonw 9599 | 2021-07-10T18:56:03Z | 2021-07-10T18:56:03Z | OWNER | Here's the SQL used to generate the table for the test: https://github.com/simonw/datasette/blob/02b19c7a9afd328f22040ab33b5c1911cd904c7c/tests/fixtures.py#L723-L733 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Stop using generated columns in fixtures.db 941300946 | |

| 877682533 | https://github.com/simonw/datasette/issues/1391#issuecomment-877682533 | https://api.github.com/repos/simonw/datasette/issues/1391 | MDEyOklzc3VlQ29tbWVudDg3NzY4MjUzMw== | simonw 9599 | 2021-07-10T18:28:05Z | 2021-07-10T18:28:05Z | OWNER | Here's the test in question: https://github.com/simonw/datasette/blob/a6c55afe8c82ead8deb32f90c9324022fd422324/tests/test_api.py#L2033-L2046 Various other places in the test code also need changing - anything that calls |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Stop using generated columns in fixtures.db 941300946 | |

| 877681031 | https://github.com/simonw/datasette/issues/1389#issuecomment-877681031 | https://api.github.com/repos/simonw/datasette/issues/1389 | MDEyOklzc3VlQ29tbWVudDg3NzY4MTAzMQ== | simonw 9599 | 2021-07-10T18:17:29Z | 2021-07-10T18:17:29Z | OWNER | I don't like I'm going with |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

"searchmode": "raw" in table metadata 940077168 | |

| 877310125 | https://github.com/simonw/datasette/issues/1390#issuecomment-877310125 | https://api.github.com/repos/simonw/datasette/issues/1390 | MDEyOklzc3VlQ29tbWVudDg3NzMxMDEyNQ== | simonw 9599 | 2021-07-09T16:32:57Z | 2021-07-09T16:32:57Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Mention restarting systemd in documentation 940891698 |


CREATE TABLE [issue_comments] (

[html_url] TEXT,

[issue_url] TEXT,

[id] INTEGER PRIMARY KEY,

[node_id] TEXT,

[user] INTEGER REFERENCES [users]([id]),

[created_at] TEXT,

[updated_at] TEXT,

[author_association] TEXT,

[body] TEXT,

[reactions] TEXT,

[issue] INTEGER REFERENCES [issues]([id])

, [performed_via_github_app] TEXT);

CREATE INDEX [idx_issue_comments_issue]

ON [issue_comments] ([issue]);

CREATE INDEX [idx_issue_comments_user]

ON [issue_comments] ([user]);
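The schema above can be explored with a short script. The sketch below recreates the table in an in-memory SQLite database and runs two queries: the listing order used on this page (`updated_at` descending) and a per-issue comment count. To stay self-contained it leaves out the `REFERENCES` clauses, and it loads only three rows, whose ids, issue ids and timestamps are copied from the listing above; the `body` values are left empty.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE [issue_comments] (
        [html_url] TEXT,
        [issue_url] TEXT,
        [id] INTEGER PRIMARY KEY,
        [node_id] TEXT,
        [user] INTEGER,
        [created_at] TEXT,
        [updated_at] TEXT,
        [author_association] TEXT,
        [body] TEXT,
        [reactions] TEXT,
        [issue] INTEGER,
        [performed_via_github_app] TEXT
    );
    CREATE INDEX [idx_issue_comments_issue] ON [issue_comments] ([issue]);
    CREATE INDEX [idx_issue_comments_user] ON [issue_comments] ([user]);
    """
)

# (id, user, updated_at, issue) taken from three rows of the listing
sample = [
    (881204782, 9599, "2021-07-16T06:14:12Z", 811367257),
    (881204343, 9599, "2021-07-16T06:13:11Z", 811367257),
    (881129149, 9599, "2021-07-16T02:23:32Z", 944870799),
]
conn.executemany(
    "insert into issue_comments (id, user, updated_at, issue) values (?, ?, ?, ?)",
    sample,
)

# ISO 8601 timestamps stored as TEXT sort correctly as strings
latest_ids = [
    row[0]
    for row in conn.execute(
        "select id from issue_comments order by updated_at desc"
    )
]

# Comment counts per issue
per_issue = conn.execute(
    "select issue, count(*) as n from issue_comments "
    "group by issue order by n desc, issue"
).fetchall()

print(latest_ids)
print(per_issue)
```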

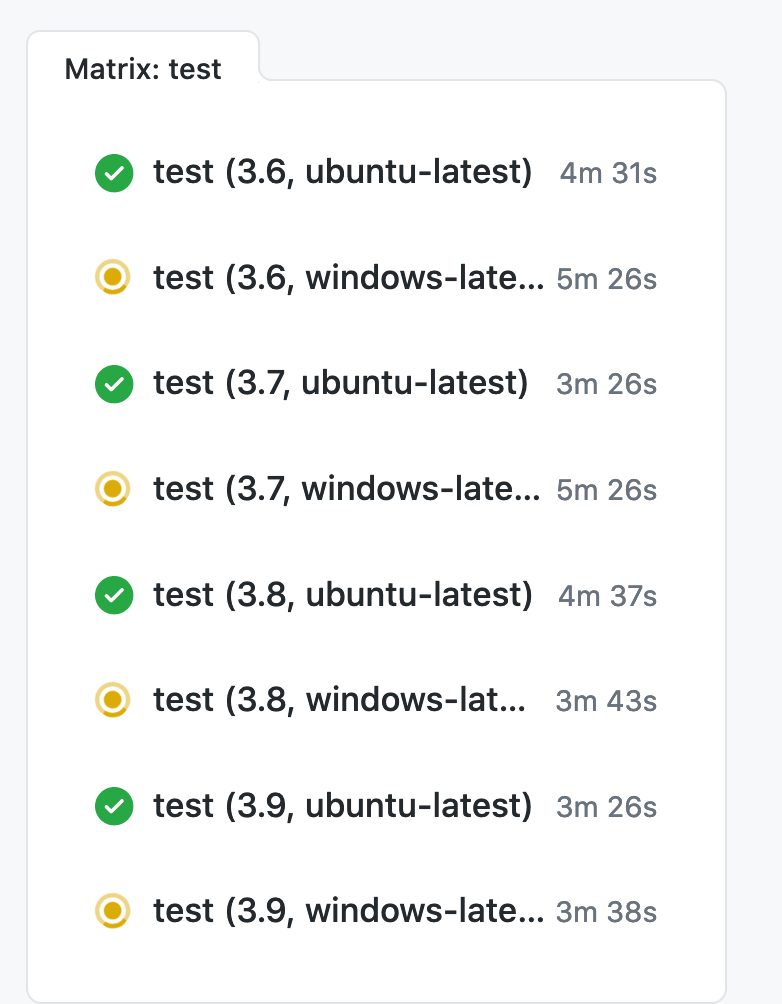

user >30