issues

477 rows where comments = 2 sorted by locked



| id | node_id | number | title | user | state | locked ▼ | assignee | milestone | comments | created_at | updated_at | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 503218205 | MDU6SXNzdWU1MDMyMTgyMDU= | 586 | Enable browser caching for plugin statics with datasette-auth | simonw 9599 | closed | 0 | 2 | 2019-10-07T03:47:14Z | 2019-10-07T15:46:04Z | 2019-10-07T15:46:03Z | OWNER | An authenticated Datasette I run is seeing delays on every page load. On looking at the network inspector it turns out it's because datasette-vega is nearly 1MB and a

This may well turn out to be a bug in |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/586/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 504238461 | MDU6SXNzdWU1MDQyMzg0NjE= | 6 | sqlite3.OperationalError: table users has no column named bio | dazzag24 1055831 | closed | 0 | 2 | 2019-10-08T19:39:52Z | 2019-10-13T05:31:28Z | 2019-10-13T05:30:19Z | NONE | ```
$ github-to-sqlite repos github.db
$ github-to-sqlite starred github.db dazzag24
Traceback (most recent call last):
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/bin/github-to-sqlite", line 10, in <module>
    sys.exit(cli())
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/click/core.py", line 764, in __call__
    return self.main(*args, **kwargs)
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/click/core.py", line 717, in main
    rv = self.invoke(ctx)
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/click/core.py", line 1137, in invoke
    return _process_result(sub_ctx.command.invoke(sub_ctx))
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/click/core.py", line 956, in invoke
    return ctx.invoke(self.callback, **ctx.params)
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/click/core.py", line 555, in invoke
    return callback(*args, **kwargs)
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/github_to_sqlite/cli.py", line 106, in starred
    utils.save_stars(db, user, stars)
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/github_to_sqlite/utils.py", line 177, in save_stars
    user_id = save_user(db, user)
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/github_to_sqlite/utils.py", line 61, in save_user
    return db["users"].upsert(to_save, pk="id").last_pk
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/sqlite_utils/db.py", line 1067, in upsert
    extracts=extracts,
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/sqlite_utils/db.py", line 916, in insert
    extracts=extracts,
  File "/home/darreng/.virtualenvs/dogsheep-d2PjdrD7/lib/python3.6/site-packages/sqlite_utils/db.py", line 1024, in insert_all
    result = self.db.conn.execute(sql, values)
sqlite3.OperationalError: table users has no column named bio
```
```
$ pipenv graph
github-to-sqlite==0.4
  - requests [required: Any, installed: 2.22.0]
    - certifi [required: >=2017.4.17, installed: 2019.9.11]
    - chardet [required: >=3.0.2,<3.1.0, installed: 3.0.4]
    - idna [required: >=2.5,<2.9, installed: 2.8]
    - urllib3 [required: >=1.21.1,<1.26,!=1.25.1,!=1.25.0, installed: 1.25.6]
  - sqlite-utils [required: ~=1.11, installed: 1.11]
    - click [required: Any, installed: 7.0]
    - click-default-group [required: Any, installed: 1.2.2]
      - click [required: Any, installed: 7.0]
    - tabulate [required: Any, installed: 0.8.5]
Python 3.6.8
``` |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 505674949 | MDU6SXNzdWU1MDU2NzQ5NDk= | 17 | import command should empty all archive-* tables first | simonw 9599 | closed | 0 | 2 | 2019-10-11T06:58:43Z | 2019-10-11T15:40:08Z | 2019-10-11T15:40:08Z | MEMBER | Can have a CLI option for NOT doing that. |

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/17/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 508190730 | MDU6SXNzdWU1MDgxOTA3MzA= | 23 | Extremely simple migration system | simonw 9599 | closed | 0 | 2 | 2019-10-17T02:13:57Z | 2019-10-17T16:57:17Z | 2019-10-17T16:57:17Z | MEMBER | Needed for #12. This is going to be an incredibly simple version of the Django migration system.

The function names will be detected and used as the names of the migrations. Every time you run the CLI tool it will call the Needs to take into account that there might be no tables at all. As such, migration functions should sanity check that the tables they are going to work on actually exist. |

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/23/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 508578780 | MDU6SXNzdWU1MDg1Nzg3ODA= | 25 | Ensure migrations don't accidentally create foreign key twice | simonw 9599 | closed | 0 | 2 | 2019-10-17T16:08:50Z | 2019-10-17T16:56:47Z | 2019-10-17T16:56:47Z | MEMBER | Is it possible for these lines to run against a database table that already has these foreign keys? |

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/25/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 509612217 | MDExOlB1bGxSZXF1ZXN0MzMwMTI5MzU4 | 603 | always pop as_format off args dict | chris48s 6025893 | closed | 0 | 2 | 2019-10-20T15:44:22Z | 2019-10-30T19:12:22Z | 2019-10-21T02:03:09Z | CONTRIBUTOR | simonw/datasette/pulls/603 | closes #563 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/603/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 510076368 | MDU6SXNzdWU1MTAwNzYzNjg= | 605 | Support queries at the table level | bsilverm 12617395 | open | 0 | 2 | 2019-10-21T15:58:30Z | 2019-10-30T18:55:37Z | NONE | Per the issue described in issue #588, it was determined queries are not supported at the table level. Per my last comment in the issue, I'd like to request support for this as it would help eliminate errors in the event certain tables are not present in the database. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/605/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 516748849 | MDU6SXNzdWU1MTY3NDg4NDk= | 612 | CSV export is broken for tables with null foreign keys | simonw 9599 | closed | 0 | 2 | 2019-11-02T22:52:47Z | 2021-06-17T18:13:20Z | 2019-11-02T23:12:53Z | OWNER | Following on from #406 - this CSV export appears to be broken: https://14da705.datasette.io/fixtures/foreign_key_references.csv?_labels=on&_size=max

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/612/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 518506242 | MDU6SXNzdWU1MTg1MDYyNDI= | 616 | Datasette FTS detection bug | null92 49656826 | closed | 0 | 2 | 2019-11-06T14:25:47Z | 2019-11-08T15:31:33Z | 2019-11-08T02:06:56Z | NONE | I'm having a trouble with datasette. I deployed EXACTLY the same project on two different apps on Heroku. Both have databases (not all) with FTS activated but only one detects and works fine. You can take a look here:

With search: http://teste-templates.herokuapp.com/amazonia_protege/car

Without search: http://bases.vortex.media/amazonia_protege/car

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/616/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 518739697 | MDU6SXNzdWU1MTg3Mzk2OTc= | 30 | `followers` fails because `transform_user` is called twice | jacobian 21148 | closed | 0 | 2 | 2019-11-06T20:44:52Z | 2019-11-09T20:15:28Z | 2019-11-09T19:55:52Z | CONTRIBUTOR | Trying to run

This appears to be because https://github.com/dogsheep/twitter-to-sqlite/blob/master/twitter_to_sqlite/cli.py#L111 calls I was able to work around this by commenting out https://github.com/dogsheep/twitter-to-sqlite/blob/master/twitter_to_sqlite/cli.py#L116. Shall I work up a patch for that, or is there a better approach? |

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/30/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 520507306 | MDU6SXNzdWU1MjA1MDczMDY= | 618 | Mechanism for seeing indexes on a specific table | simonw 9599 | closed | 0 | 2 | 2019-11-09T20:10:41Z | 2019-11-10T01:40:05Z | 2019-11-10T01:30:25Z | OWNER | The only way to see the indexes that apply to a specific table at the moment is to run the following SQL manually:

It would be good if this list of indexes was displayed in a neater way on the table page. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/618/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 520508502 | MDU6SXNzdWU1MjA1MDg1MDI= | 31 | "friends" command (similar to "followers") | simonw 9599 | closed | 0 | 2 | 2019-11-09T20:20:20Z | 2022-09-20T05:05:03Z | 2020-02-07T07:03:28Z | MEMBER | Current list of commands:

|

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/31/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 520718056 | MDExOlB1bGxSZXF1ZXN0MzM5MjM2NjQ3 | 623 | Test against Python 3.8 in Travis | simonw 9599 | closed | 0 | 2 | 2019-11-11T03:24:54Z | 2019-11-11T03:45:35Z | 2019-11-11T03:45:35Z | OWNER | simonw/datasette/pulls/623 | Needed for #622 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/623/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 521323012 | MDExOlB1bGxSZXF1ZXN0MzM5NzIyNzkw | 627 | Support Python 3.8, stop supporting Python 3.5 | simonw 9599 | closed | 0 | 2 | 2019-11-12T04:36:33Z | 2020-04-05T10:23:58Z | 2019-11-12T05:09:12Z | OWNER | simonw/datasette/pulls/627 | Refs #622 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/627/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 521329771 | MDU6SXNzdWU1MjEzMjk3NzE= | 628 | Render jinja2 templates in async mode | simonw 9599 | closed | 0 | 2 | 2019-11-12T05:01:55Z | 2019-11-14T23:28:09Z | 2019-11-14T23:14:24Z | OWNER | I started playing with this in #404 and got good results but it didn't work in Python 3.5. As of #627 I don't support 3.5 any more so this can go ahead. Rendering templates in async mode will mean that template plugins can include async code... which opens the door to custom template functions that execute SQL queries! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/628/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 521995039 | MDU6SXNzdWU1MjE5OTUwMzk= | 632 | Upgrade datasette publish Heroku runtime | simonw 9599 | closed | 0 | 2 | 2019-11-13T06:46:19Z | 2019-11-13T16:44:07Z | 2019-11-13T16:43:23Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/632/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 522352520 | MDU6SXNzdWU1MjIzNTI1MjA= | 634 | Don't run tests twice when releasing a tag | simonw 9599 | closed | 0 | 2 | 2019-11-13T17:02:42Z | 2020-09-15T20:37:58Z | 2020-09-15T20:37:58Z | OWNER | Shipping a release currently runs the tests twice: https://travis-ci.org/simonw/datasette/builds/611463728 It does a regular test run on Python 3.6/7/8 - then the "Release tagged version" step runs the tests again before publishing to PyPI! This second run is not necessary. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/634/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 529376481 | MDExOlB1bGxSZXF1ZXN0MzQ2MjY0OTI2 | 67 | Run tests against 3.5 too | simonw 9599 | closed | 0 | 2 | 2019-11-27T14:20:35Z | 2019-12-31T01:29:44Z | 2019-12-31T01:29:43Z | OWNER | simonw/sqlite-utils/pulls/67 | sqlite-utils 140912432 | pull | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/67/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 534530973 | MDU6SXNzdWU1MzQ1MzA5NzM= | 649 | Reduce table counts on index page with many databases | simonw 9599 | closed | 0 | 2 | 2019-12-08T11:56:37Z | 2020-02-29T01:08:29Z | 2020-02-29T01:08:29Z | OWNER | Since #467 the index page has attempted to optimistically count times. My personal Dogsheep has enough connected databases and tables that the page can still take way too long to load - sometimes more than twenty seconds. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/649/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 539204432 | MDU6SXNzdWU1MzkyMDQ0MzI= | 70 | Implement ON DELETE and ON UPDATE actions for foreign keys | LucasElArruda 26292069 | open | 0 | 2 | 2019-12-17T17:19:10Z | 2020-02-27T04:18:53Z | NONE | Hi! I did not find any mention on the library about ON DELETE and ON UPDATE actions for foreign keys. Are those expected to be implemented? If not, it would be a nice thing to include! |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/70/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 550293770 | MDU6SXNzdWU1NTAyOTM3NzA= | 658 | How do I use the app.css as style sheet? | null92 49656826 | open | 0 | 2 | 2020-01-15T16:27:57Z | 2020-02-07T00:29:50Z | NONE | Simon, I'm trying to use the app.css (in static folder) as style sheet but the datasette on Heroku simply ignore it! I read everything about customization here and on readthedocs but still can't. Is this possible? Thanks! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/658/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 557825032 | MDU6SXNzdWU1NTc4MjUwMzI= | 77 | Ability to insert data that is transformed by a SQL function | simonw 9599 | closed | 0 | 2 | 2020-01-30T23:45:55Z | 2022-02-05T00:04:25Z | 2020-01-31T00:24:32Z | OWNER | I want to be able to run the equivalent of this SQL insert:
```python
# Convert to "Well Known Text" format
wkt = shape(geojson['geometry']).wkt
# Insert and commit the record
conn.execute("INSERT INTO places (id, name, geom) VALUES(null, ?, GeomFromText(?, 4326))", (
    "Wales", wkt
))
conn.commit()
```
From the Datasette SpatiaLite docs: https://datasette.readthedocs.io/en/stable/spatialite.html To do this, I need a way of telling |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/77/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 562787785 | MDU6SXNzdWU1NjI3ODc3ODU= | 667 | Allow injecting configuration data from plugins | xrotwang 870184 | closed | 0 | 2 | 2020-02-10T19:50:15Z | 2020-02-12T16:18:22Z | 2020-02-12T09:21:22Z | NONE | I'm trying to customize datasette as explorer for CLDF datasets. Such datasets can be converted automatically to SQLite, which then can be fed to datasette, (e.g. https://github.com/cldf/cookbook/blob/master/recipes/datasette/README.md). Part of this customization would be support for the "special" data types described in the CLDF ontology. But while rendering of the values can be customized via the It would be nice to be able to programmatically inject config data from plugins as well. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/667/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 563347679 | MDU6SXNzdWU1NjMzNDc2Nzk= | 668 | Make it easier to load SpatiaLite | simonw 9599 | closed | 0 | 2 | 2020-02-11T17:03:43Z | 2022-01-20T21:29:41Z | 2021-01-04T20:18:39Z | OWNER | ```
$ datasette spatial.db
Serve! files=('spatial.db',) (immutables=()) on port 8001
ERROR: conn=<sqlite3.Connection object at 0x11e388f10>, sql = 'PRAGMA table_info(SpatialIndex);', params = None: no such module: VirtualSpatialIndex
Usage: datasette serve [OPTIONS] [FILES]...
Error: It looks like you're trying to load a SpatiaLite database without first loading the SpatiaLite module.
Read more: https://datasette.readthedocs.io/en/latest/spatialite.html

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/668/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 565837965 | MDU6SXNzdWU1NjU4Mzc5NjU= | 87 | Should detect collections.OrderedDict as a regular dictionary | simonw 9599 | closed | 0 | 2 | 2020-02-16T02:06:34Z | 2020-02-16T02:20:59Z | 2020-02-16T02:20:59Z | OWNER |

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/87/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 569268612 | MDU6SXNzdWU1NjkyNjg2MTI= | 679 | Release 0.36 | simonw 9599 | closed | 0 | 2 | 2020-02-22T02:41:01Z | 2020-02-22T03:52:13Z | 2020-02-22T03:52:13Z | OWNER | I think we have enough changes to warrant a release - and I want to take advantage of the changes to the Changes since 0.35 so far: https://github.com/simonw/datasette/compare/0.35...be2265b0e811d0ac2875c2f748125c17b0f9289e

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/679/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 576722115 | MDU6SXNzdWU1NzY3MjIxMTU= | 696 | Single failing unit test when run inside the Docker image | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 2 | 2020-03-06T06:16:36Z | 2021-03-29T17:04:19Z | 2021-03-07T07:41:18Z | OWNER |

It was for

Originally posted by @simonw in https://github.com/simonw/datasette/issues/695#issuecomment-595614469 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/696/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 578883725 | MDU6SXNzdWU1Nzg4ODM3MjU= | 17 | Command for importing commits | simonw 9599 | closed | 0 | 2 | 2020-03-10T21:55:12Z | 2020-03-11T02:47:37Z | 2020-03-11T02:47:37Z | MEMBER | Using this API: https://api.github.com/repos/dogsheep/github-to-sqlite/commits |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/17/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 585266763 | MDU6SXNzdWU1ODUyNjY3NjM= | 34 | IndexError running user-timeline command | simonw 9599 | closed | 0 | 2 | 2020-03-20T18:54:08Z | 2020-03-20T19:20:52Z | 2020-03-20T19:20:37Z | MEMBER |

|

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/34/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 585850715 | MDU6SXNzdWU1ODU4NTA3MTU= | 19 | Enable full-text search for more stuff (like commits, issues and issue_comments) | simonw 9599 | closed | 0 | 1.0 5225818 | 2 | 2020-03-23T00:19:56Z | 2020-03-23T19:06:39Z | 2020-03-23T19:06:39Z | MEMBER | Currently FTS is only enabled for repos and releases. |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/19/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 586561727 | MDU6SXNzdWU1ODY1NjE3Mjc= | 21 | Turn GitHub API errors into exceptions | simonw 9599 | closed | 0 | 1.0 5225818 | 2 | 2020-03-23T22:37:24Z | 2020-03-23T23:48:23Z | 2020-03-23T23:48:22Z | MEMBER | This would have really helped in debugging the mess in #13. Running with this |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/21/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 587302139 | MDExOlB1bGxSZXF1ZXN0MzkzMjc0NDMz | 708 | base_url configuration setting, refs #394 | simonw 9599 | closed | 0 | Datasette 0.39 5234079 | 2 | 2020-03-24T21:52:00Z | 2020-03-25T00:18:44Z | 2020-03-25T00:18:44Z | OWNER | simonw/datasette/pulls/708 | Pull request implementing #394 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/708/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 587322443 | MDU6SXNzdWU1ODczMjI0NDM= | 710 | Remove Zeit Now v1 support | simonw 9599 | closed | 0 | 2 | 2020-03-24T22:39:49Z | 2020-04-04T23:05:12Z | 2020-04-04T23:05:12Z | OWNER | It will remain supported as a plugin but since no-one can sign up for Docker hosting any more (for over a year now) there's no point including it in Datasette core. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/710/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 587398703 | MDU6SXNzdWU1ODczOTg3MDM= | 711 | Release notes for Datasette 0.39 | simonw 9599 | closed | 0 | Datasette 0.39 5234079 | 2 | 2020-03-25T02:31:13Z | 2020-03-25T04:06:55Z | 2020-03-25T04:06:55Z | OWNER | Then I can ship it. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/711/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 589801352 | MDExOlB1bGxSZXF1ZXN0Mzk1MjU4Njg3 | 96 | Add type conversion for Panda's Timestamp | b0b5h4rp13 32605365 | closed | 0 | 2 | 2020-03-29T14:13:09Z | 2020-03-31T04:40:49Z | 2020-03-31T04:40:48Z | CONTRIBUTOR | simonw/sqlite-utils/pulls/96 | Add type conversion for Panda's Timestamp, if Panda library is present in system (thanks for this project, I was about to do the same thing from scratch) |

sqlite-utils 140912432 | pull | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/96/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 598640234 | MDU6SXNzdWU1OTg2NDAyMzQ= | 99 | .upsert_all() should maybe error if dictionaries passed to it do not have the same keys | simonw 9599 | closed | 0 | 2 | 2020-04-13T03:02:25Z | 2020-04-13T03:05:20Z | 2020-04-13T03:05:04Z | OWNER | While investigating #98 I stumbled across this:
```
def test_upsert_compound_primary_key(fresh_db):
    table = fresh_db["table"]
    table.upsert_all(
        [
            {"species": "dog", "id": 1, "name": "Cleo", "age": 4},
            {"species": "cat", "id": 1, "name": "Catbag"},
        ],
        pk=("species", "id"),
    )
    table.upsert_all(
        [
            {"species": "dog", "id": 1, "age": 5},
            {"species": "dog", "id": 2, "name": "New Dog", "age": 1},
        ],
        pk=("species", "id"),
    )

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/99/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 601271612 | MDU6SXNzdWU2MDEyNzE2MTI= | 26 | Topics are missing from repositories | simonw 9599 | closed | 0 | 2 | 2020-04-16T17:36:32Z | 2020-04-16T17:41:11Z | 2020-04-16T17:41:11Z | MEMBER | I'm sure this used to work, but right now repositories are fetched without their topics. https://developer.github.com/v3/repos/ says you need to send a custom |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/26/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 602533481 | MDU6SXNzdWU2MDI1MzM0ODE= | 3 | Import EXIF data into SQLite - lens used, ISO, aperture etc | simonw 9599 | open | 0 | Apple Photos online and securely browsable 5324096 | 2 | 2020-04-18T19:24:31Z | 2021-10-05T12:38:24Z | MEMBER | dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/3/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 602575575 | MDU6SXNzdWU2MDI1NzU1NzU= | 6 | Add progress bar to upload command | simonw 9599 | closed | 0 | 2 | 2020-04-18T23:32:41Z | 2020-04-19T00:15:24Z | 2020-04-19T00:15:24Z | MEMBER | Upload was added in #4 |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 602585497 | MDU6SXNzdWU2MDI1ODU0OTc= | 7 | Integrate image content hashing | simonw 9599 | open | 0 | 2 | 2020-04-19T00:36:58Z | 2021-08-26T02:01:01Z | MEMBER | To spot duplicate images (where the file content differs such that the sha256 is no longer a match) it would be useful to calculate and store perceptual hashes of some sort. |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/7/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} |

||||||||

| 603618244 | MDU6SXNzdWU2MDM2MTgyNDQ= | 30 | Issues milestone column is the wrong type | simonw 9599 | closed | 0 | 2 | 2020-04-21T00:24:34Z | 2020-04-21T00:45:23Z | 2020-04-21T00:36:22Z | MEMBER | https://github-to-sqlite.dogsheep.net/github/issues?milestone=2857392

It is TEXT when it should be an INTEGER - which is why the foreign key label is not correctly displayed. |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/30/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 605147638 | MDU6SXNzdWU2MDUxNDc2Mzg= | 8 | Should I have used MD5 instead of SHA256? | simonw 9599 | closed | 0 | 2 | 2020-04-23T00:02:08Z | 2020-04-23T00:03:35Z | 2020-04-23T00:03:35Z | MEMBER | https://docs.aws.amazon.com/AmazonS3/latest/API/RESTCommonResponseHeaders.html

|

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/8/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 605806386 | MDU6SXNzdWU2MDU4MDYzODY= | 735 | Error when I click on "View and edit SQL" | aborruso 30607 | closed | 0 | 2 | 2020-04-23T19:31:32Z | 2020-04-28T06:10:20Z | 2020-04-27T19:00:30Z | NONE | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/735/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 605938063 | MDU6SXNzdWU2MDU5MzgwNjM= | 9 | upload command should be resumable, should only upload photos not already uploaded | simonw 9599 | closed | 0 | 2 | 2020-04-23T23:31:08Z | 2020-04-23T23:39:14Z | 2020-04-23T23:39:14Z | MEMBER | Follow on from #4. |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/9/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 607888367 | MDU6SXNzdWU2MDc4ODgzNjc= | 13 | Also upload movie files | simonw 9599 | open | 0 | 2 | 2020-04-27T22:11:25Z | 2020-04-28T00:39:45Z | MEMBER | The Need to cover movies taken by my phone and DSLR too. |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/13/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 608613033 | MDU6SXNzdWU2MDg2MTMwMzM= | 745 | Extract the hash-URL mechanism out into a plugin | simonw 9599 | closed | 0 | 2 | 2020-04-28T21:00:38Z | 2020-10-23T19:47:18Z | 2020-10-23T19:47:10Z | OWNER | 0.28 in May 2019 made this feature not-the-default: https://datasette.readthedocs.io/en/stable/changelog.html#v0-28 - see #418 I've not felt the need to use it myself since. I think I should move it into a plugin. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/745/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 610842926 | MDU6SXNzdWU2MTA4NDI5MjY= | 36 | Add view for better display of dependent repos | simonw 9599 | closed | 0 | 2 | 2020-05-01T16:33:44Z | 2020-05-02T16:50:31Z | 2020-05-02T16:30:11Z | MEMBER |

|

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/36/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 611222968 | MDU6SXNzdWU2MTEyMjI5Njg= | 107 | sqlite-utils create-view CLI command | simonw 9599 | closed | 0 | 2 | 2020-05-02T16:15:13Z | 2020-05-03T15:36:58Z | 2020-05-03T15:36:37Z | OWNER | Can go with #27 - |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/107/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 611326701 | MDU6SXNzdWU2MTEzMjY3MDE= | 108 | Documentation unit tests for CLI commands | simonw 9599 | closed | 0 | 2 | 2020-05-03T03:58:42Z | 2020-05-03T04:13:57Z | 2020-05-03T04:13:57Z | OWNER | Have a test that ensures all CLI commands are documented. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/108/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 611997130 | MDU6SXNzdWU2MTE5OTcxMzA= | 754 | Clean up aiofiles warnings on 3.8 | simonw 9599 | closed | 0 | 2 | 2020-05-04T16:14:59Z | 2020-05-04T16:22:30Z | 2020-05-04T16:22:30Z | OWNER | https://travis-ci.org/github/simonw/datasette/jobs/682624476 Lots of warnings like this: ``` /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/utils.py:33 /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/utils.py:33 /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/utils.py:33: DeprecationWarning: "@coroutine" decorator is deprecated since Python 3.8, use "async def" instead /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/init.py:27 /home/travis/virtualenv/python3.8.0/lib/python3.8/site-packages/aiofiles/threadpool/init.py:27: DeprecationWarning: "@coroutine" decorator is deprecated since Python 3.8, use "async def" instead ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/754/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 612089949 | MDU6SXNzdWU2MTIwODk5NDk= | 756 | Add pipx to installation documentation | simonw 9599 | closed | 0 | 2 | 2020-05-04T18:49:01Z | 2020-05-04T19:19:06Z | 2020-05-04T19:10:33Z | OWNER | Add to this page: https://datasette.readthedocs.io/en/stable/installation.html Here's how to install plugins: https://twitter.com/simonw/status/1257348687979778050 ``` $ datasette plugins [] $ pipx inject datasette datasette-json-html $ datasette plugins [ { "name": "datasette-json-html", "static": false, "templates": false, "version": "0.6" } ] ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/756/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 615477131 | MDU6SXNzdWU2MTU0NzcxMzE= | 111 | sqlite-utils drop-table and drop-view commands | simonw 9599 | closed | 0 | 2 | 2020-05-10T21:10:42Z | 2020-05-11T01:58:36Z | 2020-05-11T00:44:26Z | OWNER | Would be useful to be able to drop views and tables from the CLI. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/111/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 617323873 | MDU6SXNzdWU2MTczMjM4NzM= | 766 | Enable wildcard-searches by default | clausjuhl 2181410 | open | 0 | 2 | 2020-05-13T10:14:48Z | 2021-03-05T16:35:21Z | NONE | Hi Simon. It seems that datasette currently has wildcard-searches disabled by default (along with the boolean search-options, NEAR-queries and more, and despite the docs). If I try out the search-url provided in the docs (https://fara.datasettes.com/fara/FARA_All_ShortForms?_search=manafort), it does not handle wildcard-searches, and I'm unable to make it work on my datasette-instance. I would argue that wildcard-searches is such a standard query, that it should be enabled by default. Requiring "_searchmode=raw" when using prefix-searches seems unnecessary. Plus: What happens to non-ascii searches when using "_searchmode=raw"? Is the "escape_fts"-function from datasette.utils ignored? Thanks! /Claus |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/766/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 620969465 | MDU6SXNzdWU2MjA5Njk0NjU= | 767 | Allow to specify a URL fragment for canned queries | rixx 2657547 | closed | 0 | Datasette 0.43 5471110 | 2 | 2020-05-19T13:17:42Z | 2020-05-27T21:52:25Z | 2020-05-27T21:52:25Z | CONTRIBUTOR | Canned queries are very useful to direct users to prepared data and views. I like to use them with charts using datasette-vega a lot, because people get a direct impression at first glance. datasette-vega doesn't show up by default though, and users have to click through to it. Also, datasette-vega does not always guess the best way to render columns correctly, so it would be nice if I could specify a URL fragment in my canned queries to make sure people see what I want them to see. My current workaround is to include a fragment link in |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/767/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 621286870 | MDU6SXNzdWU2MjEyODY4NzA= | 113 | Syntactic sugar for ATTACH DATABASE | simonw 9599 | closed | 0 | 2 | 2020-05-19T21:10:00Z | 2021-02-19T05:09:12Z | 2021-02-19T04:56:36Z | OWNER | https://www.sqlite.org/lang_attach.html Maybe something like this:

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/113/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 626001501 | MDU6SXNzdWU2MjYwMDE1MDE= | 773 | All plugin hooks should have unit tests | simonw 9599 | closed | 0 | Datasette 0.43 5471110 | 2 | 2020-05-27T20:17:41Z | 2020-05-28T04:12:11Z | 2020-05-28T04:09:25Z | OWNER | Four hooks currently missing tests:

``` $ pytest -k test_plugin_hooks_have_tests -vv ====================================== test session starts ====================================== platform darwin -- Python 3.7.7, pytest-5.2.4, py-1.8.1, pluggy-0.13.1 -- /Users/simon/.local/share/virtualenvs/datasette-AWNrQs95/bin/python cachedir: .pytest_cache rootdir: /Users/simon/Dropbox/Development/datasette, inifile: pytest.ini plugins: asyncio-0.10.0 collected 486 items / 475 deselected / 11 selected tests/test_plugins.py::test_plugin_hooks_have_tests[asgi_wrapper] XPASS [ 9%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_body_script] XPASS [ 18%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_css_urls] XPASS [ 27%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_js_urls] XPASS [ 36%] tests/test_plugins.py::test_plugin_hooks_have_tests[extra_template_vars] XPASS [ 45%] tests/test_plugins.py::test_plugin_hooks_have_tests[prepare_connection] XPASS [ 54%] tests/test_plugins.py::test_plugin_hooks_have_tests[prepare_jinja2_environment] XFAIL [ 63%] tests/test_plugins.py::test_plugin_hooks_have_tests[publish_subcommand] XFAIL [ 72%] tests/test_plugins.py::test_plugin_hooks_have_tests[register_facet_classes] XFAIL [ 81%] tests/test_plugins.py::test_plugin_hooks_have_tests[register_output_renderer] XFAIL [ 90%] tests/test_plugins.py::test_plugin_hooks_have_tests[render_cell] XPASS [100%] ========================= 475 deselected, 4 xfailed, 7 xpassed in 1.70s ========================= Originally posted by @simonw in https://github.com/simonw/datasette/issues/771#issuecomment-634915104 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/773/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 626593402 | MDU6SXNzdWU2MjY1OTM0MDI= | 780 | Internals documentation for datasette.metadata() method | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 2 | 2020-05-28T15:14:22Z | 2022-03-15T20:50:34Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/780/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 628087971 | MDU6SXNzdWU2MjgwODc5NzE= | 786 | Documentation page describing Datasette's authentication system | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-01T01:10:06Z | 2020-06-06T19:40:20Z | 2020-06-06T19:40:20Z | OWNER | Originally posted by @simonw in https://github.com/simonw/datasette/issues/699#issuecomment-636562999 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/786/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 628121234 | MDU6SXNzdWU2MjgxMjEyMzQ= | 788 | /-/permissions debugging tool | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-01T03:13:47Z | 2020-06-06T00:43:40Z | 2020-06-01T05:01:01Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/699#issuecomment-636576603 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/788/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 628156527 | MDU6SXNzdWU2MjgxNTY1Mjc= | 789 | Mechanism for enabling pluggy tracing | simonw 9599 | open | 0 | 2 | 2020-06-01T05:10:14Z | 2020-06-01T05:11:03Z | OWNER | Could be useful for debugging plugins: https://pluggy.readthedocs.io/en/latest/#call-tracing I tried this out by adding these two lines in Added these:pm.trace.root.setwriter(print)

pm.enable_tracing()

finish actor_from_request --> [] [hook] extra_body_script [hook] template: show_json.html database: None table: None view_name: json_data datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_body_script --> [] [hook] extra_template_vars [hook] template: show_json.html database: None table: None view_name: json_data request: <datasette.utils.asgi.Request object at 0x1065504d0> datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_template_vars --> [] [hook] extra_css_urls [hook] template: show_json.html database: None table: None datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_css_urls --> [] [hook] extra_js_urls [hook] template: show_json.html database: None table: None datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_js_urls --> [] [hook] INFO: 127.0.0.1:52724 - "GET /-/actor HTTP/1.1" 200 OK actor_from_request [hook] datasette: <datasette.app.Datasette object at 0x106277ad0> request: <datasette.utils.asgi.Request object at 0x1065500d0> finish actor_from_request --> [] [hook] ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/789/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 628572716 | MDU6SXNzdWU2Mjg1NzI3MTY= | 791 | Tutorial: building a something-interesting with writable canned queries | simonw 9599 | open | 0 | 2 | 2020-06-01T16:32:05Z | 2020-10-10T23:34:42Z | OWNER | Initial idea: TODO list, as a tutorial for #698 writable canned queries. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/791/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 629535669 | MDU6SXNzdWU2Mjk1MzU2Njk= | 794 | Show hooks implemented by each plugin on /-/plugins | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 2 | 2020-06-02T21:44:38Z | 2020-06-02T22:30:17Z | 2020-06-02T21:50:10Z | OWNER | e.g.

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/794/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 629541395 | MDU6SXNzdWU2Mjk1NDEzOTU= | 795 | response.set_cookie() method | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-02T21:57:05Z | 2020-06-09T22:33:33Z | 2020-06-09T22:19:48Z | OWNER | Mainly to clean up this code: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/795/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 631789422 | MDU6SXNzdWU2MzE3ODk0MjI= | 799 | TestResponse needs to handle multiple set-cookie headers | simonw 9599 | closed | 0 | 2 | 2020-06-05T17:39:52Z | 2020-06-05T18:34:10Z | 2020-06-05T18:34:10Z | OWNER | Seeing this test failure on #798: ``` ___ test_auth_token ___ app_client = <tests.fixtures.TestClient object at 0x11285c910> def test_auth_token(app_client): "The /-/auth-token endpoint sets the correct cookie" assert app_client.ds._root_token is not None path = "/-/auth-token?token={}".format(app_client.ds._root_token) response = app_client.get(path, allow_redirects=False,) assert 302 == response.status assert "/" == response.headers["Location"]

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/799/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 632918799 | MDU6SXNzdWU2MzI5MTg3OTk= | 808 | Permission check for every view in Datasette (plus docs) | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-07T01:59:23Z | 2020-06-07T05:30:49Z | 2020-06-07T05:30:49Z | OWNER | Every view in Datasette should perform a permission check to see if the current user/actor is allowed to view that page. This permission check will default to allowed, but having this check will allow plugins to lock down access selectively or even to everything in a Datasette instance. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/808/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 634783573 | MDU6SXNzdWU2MzQ3ODM1NzM= | 816 | Come up with a new example for extra_template_vars plugin | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-08T16:57:59Z | 2020-06-08T19:06:44Z | 2020-06-08T19:06:11Z | OWNER | This example is obsolete, it's from a time before |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/816/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 635049296 | MDU6SXNzdWU2MzUwNDkyOTY= | 820 | Idea: Plugin hook for registering canned queries | simonw 9599 | closed | 0 | 2 | 2020-06-09T01:58:21Z | 2020-06-18T17:58:02Z | 2020-06-18T17:58:02Z | OWNER | Thought of this while thinking about possible permissions plugins (#818). Imagine an API key plugin which allows access for API keys. It could let users register new API keys by providing a writable canned query for writing to the To do this the plugin needs to register the query. At the moment queries have to be registered in One challenge: how does the plugin know which named database the query should be registered for? It could default to the first attached database and allow users to optionally tell the plugin "actually use this named database instead" in plugin configuration. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/820/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 635077656 | MDU6SXNzdWU2MzUwNzc2NTY= | 822 | request.url_vars helper property | simonw 9599 | closed | 0 | Datasette 0.44 5512395 | 2 | 2020-06-09T03:15:53Z | 2020-06-09T03:40:07Z | 2020-06-09T03:40:06Z | OWNER | This example: https://github.com/simonw/datasette/blob/f5e79adf26d0daa3831e3fba022f1b749a9efdee/docs/plugins.rst#register_routes ```python from datasette.utils.asgi import Response import html async def hello_from(scope): name = scope["url_route"]["kwargs"]["name"] return Response.html("Hello from {}".format( html.escape(name) )) @hookimpl

def register_routes():

return [

(r"^/hello-from/(?P<name>.*)$", hello_from)

]

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/822/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 637899539 | MDU6SXNzdWU2Mzc4OTk1Mzk= | 40 | Demo deploy is broken | simonw 9599 | closed | 0 | 2 | 2020-06-12T17:20:17Z | 2020-06-12T18:06:48Z | 2020-06-12T18:06:48Z | MEMBER | https://github.com/dogsheep/github-to-sqlite/runs/766180404?check_suite_focus=true ``` The following NEW packages will be installed: sqlite3 0 upgraded, 1 newly installed, 0 to remove and 11 not upgraded. Need to get 752 kB of archives. After this operation, 2482 kB of additional disk space will be used. Ign:1 http://azure.archive.ubuntu.com/ubuntu bionic-updates/main amd64 sqlite3 amd64 3.22.0-1ubuntu0.3 Err:1 http://security.ubuntu.com/ubuntu bionic-updates/main amd64 sqlite3 amd64 3.22.0-1ubuntu0.3 404 Not Found [IP: 52.177.174.250 80] E: Failed to fetch http://security.ubuntu.com/ubuntu/pool/main/s/sqlite3/sqlite3_3.22.0-1ubuntu0.3_amd64.deb 404 Not Found [IP: 52.177.174.250 80] E: Unable to fetch some archives, maybe run apt-get update or try with --fix-missing? [error]Process completed with exit code 100.``` |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/40/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 638229448 | MDU6SXNzdWU2MzgyMjk0NDg= | 843 | Configure codecov.io | simonw 9599 | closed | 0 | 2 | 2020-06-13T20:45:00Z | 2020-06-13T21:36:52Z | 2020-06-13T21:36:52Z | OWNER | Originally posted by @simonw in https://github.com/simonw/datasette/issues/841#issuecomment-643660757 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/843/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 642127307 | MDU6SXNzdWU2NDIxMjczMDc= | 855 | Add instructions for using cookiecutter plugin template to plugin docs | simonw 9599 | closed | 0 | Datasette 0.45 5533512 | 2 | 2020-06-19T17:33:25Z | 2020-06-22T02:51:38Z | 2020-06-22T02:51:38Z | OWNER | Once I ship the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/855/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 644122661 | MDU6SXNzdWU2NDQxMjI2NjE= | 116 | Documentation for table.pks introspection property | simonw 9599 | closed | 0 | 2 | 2020-06-23T20:27:24Z | 2020-06-23T21:21:33Z | 2020-06-23T21:03:14Z | OWNER | sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/116/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 648245071 | MDU6SXNzdWU2NDgyNDUwNzE= | 8 | Error thrown: table photos has no column named hasSticker | harperreed 18504 | closed | 0 | 2 | 2020-06-30T14:54:37Z | 2020-10-12T20:35:06Z | 2020-10-12T20:25:24Z | NONE | While running Where should I dig in? |

swarm-to-sqlite 205429375 | issue | {

"url": "https://api.github.com/repos/dogsheep/swarm-to-sqlite/issues/8/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 648673556 | MDU6SXNzdWU2NDg2NzM1NTY= | 882 | Release notes for 0.45 | simonw 9599 | closed | 0 | Datasette 0.45 5533512 | 2 | 2020-07-01T05:00:17Z | 2020-07-01T21:48:08Z | 2020-07-01T21:48:08Z | OWNER | These are mostly done thanks to the alphas, but I want to have more paragraphs of prose and fewer bullet points. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/882/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 649429772 | MDU6SXNzdWU2NDk0Mjk3NzI= | 886 | Reconsider how _actor_X magic parameter deals with missing values | simonw 9599 | open | 0 | 2 | 2020-07-02T00:00:38Z | 2020-09-11T21:35:26Z | OWNER | I had to build a custom @hookimpl

def register_magic_parameters():

return [

("actorornull", actorornull),

]

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/886/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 650305298 | MDExOlB1bGxSZXF1ZXN0NDQzODIzMDQw | 890 | Load only python files from plugins-dir. | amjith 49260 | closed | 0 | 2 | 2020-07-03T02:47:32Z | 2020-07-03T03:08:33Z | 2020-07-03T03:08:33Z | CONTRIBUTOR | simonw/datasette/pulls/890 | The current behavior for This PR restricts the module loading to only python files. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/890/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

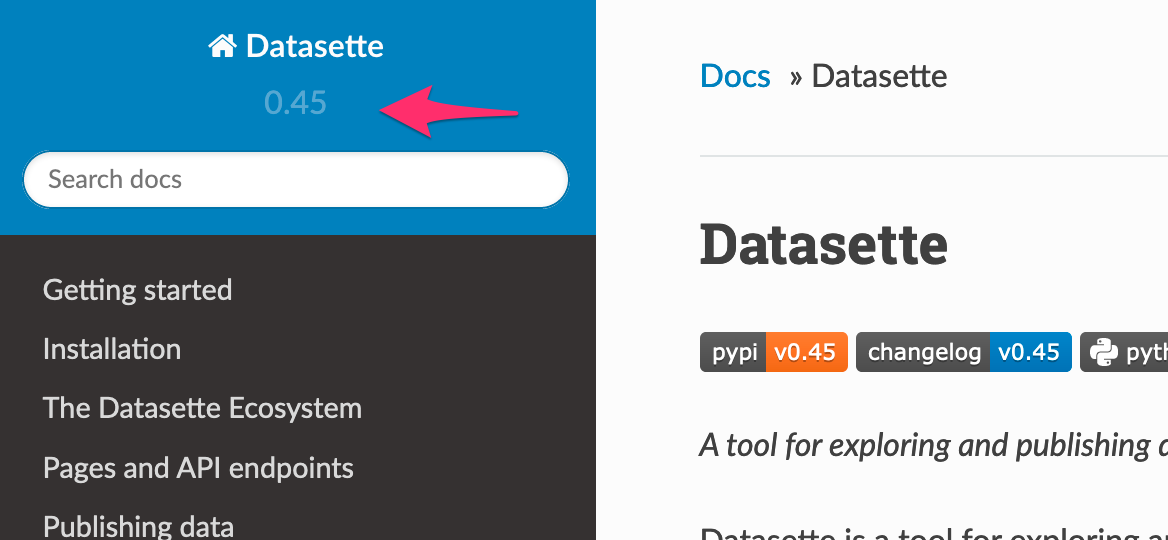

| 655465863 | MDU6SXNzdWU2NTU0NjU4NjM= | 892 | "latest" in new documentation navbar is invisible | simonw 9599 | closed | 0 | 2 | 2020-07-12T19:57:21Z | 2020-07-12T20:02:35Z | 2020-07-12T20:02:17Z | OWNER | On https://datasette.readthedocs.io/en/latest/

Compare with https://datasette.readthedocs.io/en/0.45/

Some custom CSS should fix it. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/892/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 659873662 | MDU6SXNzdWU2NTk4NzM2NjI= | 898 | datasette.utils.testing module | simonw 9599 | open | 0 | 2 | 2020-07-18T03:53:24Z | 2020-07-18T03:57:46Z | OWNER | The unit tests for plugins could benefit from reusing code from Datasette's own testing fixtures, e.g.:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/898/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 662439034 | MDExOlB1bGxSZXF1ZXN0NDUzOTk1MTc5 | 902 | Don't install tests package | abeyerpath 32467826 | closed | 0 | 2 | 2020-07-21T01:08:50Z | 2020-07-24T20:39:54Z | 2020-07-24T20:39:54Z | CONTRIBUTOR | simonw/datasette/pulls/902 | The Fixes: #456 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/902/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 663317875 | MDU6SXNzdWU2NjMzMTc4NzU= | 905 | /database.db download should include content-length header | simonw 9599 | closed | 0 | 2 | 2020-07-21T21:23:48Z | 2020-07-22T04:59:46Z | 2020-07-22T04:52:45Z | OWNER | I can do this by modifying this function: https://github.com/simonw/datasette/blob/02dc6298bdbfb1d63e0d2a39ff597b5fcc60e06b/datasette/utils/asgi.py#L248-L270 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/905/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 665403403 | MDU6SXNzdWU2NjU0MDM0MDM= | 907 | Allow documentation doesn't explain what happens with multiple allow keys | simonw 9599 | closed | 0 | Datasette 0.46 5607421 | 2 | 2020-07-24T20:34:40Z | 2020-07-24T22:53:07Z | 2020-07-24T22:53:07Z | OWNER | Documentation here: https://datasette.readthedocs.io/en/0.45/authentication.html#defining-permissions-with-allow-blocks Doesn't explain that with the following "allow" block:

The tests are missing this case too: https://github.com/simonw/datasette/blob/028f193dd6233fa116262ab4b07b13df7dcec9be/tests/test_utils.py#L504 Related to #906 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/907/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 665819048 | MDU6SXNzdWU2NjU4MTkwNDg= | 126 | Ability to insert binary data on the CLI using JSON | simonw 9599 | closed | 0 | 2 | 2020-07-26T16:54:14Z | 2020-07-27T04:00:33Z | 2020-07-27T03:59:45Z | OWNER |

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/126/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 667467128 | MDU6SXNzdWU2Njc0NjcxMjg= | 909 | AsgiFileDownload: filename not correctly passed | simonw 9599 | closed | 0 | 2 | 2020-07-29T00:41:43Z | 2020-07-30T00:56:17Z | 2020-07-29T21:34:48Z | OWNER | https://github.com/simonw/datasette/blob/3c33b421320c0be81a625ca7307b2e4416a9ed5b/datasette/utils/asgi.py#L396-L405

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/909/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 668308777 | MDU6SXNzdWU2NjgzMDg3Nzc= | 129 | "insert-files --sqlar" for creating SQLite archives | simonw 9599 | closed | 0 | 2 | 2020-07-30T02:28:29Z | 2020-07-30T22:41:01Z | 2020-07-30T22:40:55Z | OWNER | A https://www.sqlite.org/sqlar.html and https://sqlite.org/sqlar/doc/trunk/README.md |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/129/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 677250834 | MDU6SXNzdWU2NzcyNTA4MzQ= | 926 | datasette fixtures.db --get "/fixtures.json" | simonw 9599 | closed | 0 | 2 | 2020-08-11T22:55:36Z | 2020-08-12T00:26:17Z | 2020-08-12T00:24:42Z | OWNER | I can expose ALL of Datasette's functionality on the command-line (without even running a web server) by adding This would instantiate the Datasette ASGI app, run a fake request for A |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/926/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 679650632 | MDExOlB1bGxSZXF1ZXN0NDY4MzcwNjU4 | 936 | Don't hang in db.execute_write_fn() if connection fails | simonw 9599 | closed | 0 | 2 | 2020-08-15T22:20:12Z | 2020-08-15T22:35:33Z | 2020-08-15T22:35:32Z | OWNER | simonw/datasette/pulls/936 | Refs #935 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/936/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 681228542 | MDExOlB1bGxSZXF1ZXN0NDY5NjUxNzMy | 48 | Add pull requests | adamjonas 755825 | closed | 0 | 2 | 2020-08-18T17:58:44Z | 2020-11-29T23:51:09Z | 2020-11-29T23:51:09Z | CONTRIBUTOR | dogsheep/github-to-sqlite/pulls/48 | ref #46 Issues don't have merge information on them, which means that PRs need to be pulled separately. Did my best to mimic the API of issues. |

github-to-sqlite 207052882 | pull | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/48/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 684118950 | MDU6SXNzdWU2ODQxMTg5NTA= | 138 | extracts= doesn't configure foreign keys | simonw 9599 | closed | 0 | 2 | 2020-08-23T05:21:15Z | 2020-09-24T22:47:01Z | 2020-09-24T22:46:52Z | OWNER | In using

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/138/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 687711713 | MDU6SXNzdWU2ODc3MTE3MTM= | 955 | Release updated datasette-atom and datasette-ics | simonw 9599 | closed | 0 | Datasette 0.49 5818042 | 2 | 2020-08-28T04:55:21Z | 2020-09-14T22:19:46Z | 2020-09-14T22:19:46Z | OWNER | These should release straight after Datasette 0.49 with the change from #953. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/955/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 688395275 | MDU6SXNzdWU2ODgzOTUyNzU= | 144 | Run some tests against numpy | simonw 9599 | closed | 0 | 2 | 2020-08-28T22:53:00Z | 2020-08-28T22:57:05Z | 2020-08-28T22:57:04Z | OWNER | Accidentally removed in #143: |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/144/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 688659182 | MDU6SXNzdWU2ODg2NTkxODI= | 145 | Bug when first record contains fewer columns than subsequent records | simonwiles 96218 | closed | 0 | 2 | 2020-08-30T05:44:44Z | 2020-09-08T23:21:23Z | 2020-09-08T23:21:23Z | CONTRIBUTOR |

I suspect that this bug is masked somewhat by the fact that while:

it is common that it is increased at compile time. Debian-based systems, for example, seem to ship with a version of sqlite compiled with A test for this issue might look like this:

The best solution, I think, is simply to process all the records when determining columns, column types, and the batch size. In my tests this doesn't seem to be particularly costly at all, and cuts out a lot of complications (including obviating my implementation of #139 at #142). I'll raise a PR for your consideration. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/145/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 689809225 | MDU6SXNzdWU2ODk4MDkyMjU= | 2 | Apply porter stemming | simonw 9599 | closed | 0 | 2 | 2020-09-01T04:57:55Z | 2020-09-01T20:42:00Z | 2020-09-01T20:40:24Z | MEMBER | This can be on by default. You can turn it off for a table in the config file using |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/2/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 694500679 | MDU6SXNzdWU2OTQ1MDA2Nzk= | 17 | Rename "table" to "type" | simonw 9599 | closed | 0 | 2 | 2020-09-06T19:34:41Z | 2020-09-09T03:03:22Z | 2020-09-09T03:03:22Z | MEMBER | I think "table" is the wrong name for the concept I'm using it for here. Two reasons: firstly, |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/17/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 695376054 | MDU6SXNzdWU2OTUzNzYwNTQ= | 152 | Turn on recursive_triggers by default | simonw 9599 | closed | 0 | 2 | 2020-09-07T20:26:36Z | 2020-09-07T21:17:48Z | 2020-09-07T20:45:14Z | OWNER | https://www.sqlite.org/pragma.html#pragma_recursive_triggers says:

So I think the fix for the complex issue in #149 is to turn on Originally posted by @simonw in https://github.com/simonw/sqlite-utils/issues/149#issuecomment-688499924 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/152/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 695441530 | MDU6SXNzdWU2OTU0NDE1MzA= | 154 | OperationalError: cannot change into wal mode from within a transaction | simonw 9599 | open | 0 | 2 | 2020-09-07T23:42:44Z | 2020-09-07T23:47:10Z | OWNER | I'm getting this error when running:

I'm worried that maybe that's because of this new code from #152: |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/154/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 695553522 | MDU6SXNzdWU2OTU1NTM1MjI= | 18 | Deleted records stay in the search index | simonw 9599 | open | 0 | 2 | 2020-09-08T05:14:23Z | 2020-09-08T05:15:51Z | MEMBER | That should probably do |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/18/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 695556681 | MDU6SXNzdWU2OTU1NTY2ODE= | 19 | Figure out incremental re-indexing | simonw 9599 | open | 0 | 2 | 2020-09-08T05:23:31Z | 2020-09-08T05:27:07Z | MEMBER | As tables get bigger reindexing everything on a schedule (essentially recreating the entire index from scratch) will start to become a performance bottleneck. |

dogsheep-beta 197431109 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-beta/issues/19/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 696045581 | MDU6SXNzdWU2OTYwNDU1ODE= | 155 | rebuild-fts command and table.rebuild_fts() method | simonw 9599 | closed | 0 | 2 | 2020-09-08T17:19:26Z | 2020-09-24T20:35:46Z | 2020-09-08T23:16:10Z | OWNER | https://sqlite.org/forum/forumpost/fa777fff86

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/155/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 696908389 | MDU6SXNzdWU2OTY5MDgzODk= | 961 | Verification checks for metadata.json on startup | simonw 9599 | open | 0 | 2 | 2020-09-09T15:21:53Z | 2020-09-09T15:24:31Z | OWNER | I lost a bunch of time yesterday trying to figure out why a Datasette instance wasn't starting up - it turned out it was because I had a Catching these on startup would be good. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/961/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 697179806 | MDU6SXNzdWU2OTcxNzk4MDY= | 157 | sqlite-utils add-foreign-keys command | simonw 9599 | closed | 0 | 2.19 5896742 | 2 | 2020-09-09T21:44:30Z | 2020-09-24T20:34:50Z | 2020-09-20T20:14:30Z | OWNER | Like

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/157/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed |


CREATE TABLE [issues] (

[id] INTEGER PRIMARY KEY,

[node_id] TEXT,

[number] INTEGER,

[title] TEXT,

[user] INTEGER REFERENCES [users]([id]),

[state] TEXT,

[locked] INTEGER,

[assignee] INTEGER REFERENCES [users]([id]),

[milestone] INTEGER REFERENCES [milestones]([id]),

[comments] INTEGER,

[created_at] TEXT,

[updated_at] TEXT,

[closed_at] TEXT,

[author_association] TEXT,

[pull_request] TEXT,

[body] TEXT,

[repo] INTEGER REFERENCES [repos]([id]),

[type] TEXT,

[active_lock_reason] TEXT,

[performed_via_github_app] TEXT,

[reactions] TEXT,

[draft] INTEGER,

[state_reason] TEXT

);

CREATE INDEX [idx_issues_repo]

ON [issues] ([repo]);

CREATE INDEX [idx_issues_milestone]

ON [issues] ([milestone]);

CREATE INDEX [idx_issues_assignee]

ON [issues] ([assignee]);

CREATE INDEX [idx_issues_user]

ON [issues] ([user]);
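
The filtered view on this page (rows where comments = 2, sorted by locked) can probably be reproduced against a local copy of the table. The following is a minimal sketch using Python's standard `sqlite3` module. It assumes a trimmed-down version of the schema above (only a few columns, no foreign keys), an in-memory database, and a hypothetical helper named `issues_with_comment_count`; the third sample row is made up so the filter has something to exclude.

```python
import sqlite3

# In-memory database standing in for the real file (assumption for illustration).
conn = sqlite3.connect(":memory:")

# Trimmed-down subset of the [issues] schema shown above; the real table
# has more columns and foreign keys to [users], [milestones] and [repos].
conn.execute(
    """
    CREATE TABLE [issues] (
        [id] INTEGER PRIMARY KEY,
        [number] INTEGER,
        [title] TEXT,
        [state] TEXT,
        [locked] INTEGER,
        [comments] INTEGER,
        [repo] INTEGER
    )
    """
)
conn.execute("CREATE INDEX [idx_issues_repo] ON [issues] ([repo])")

# Two rows copied from the table above, plus one made-up row with a
# different comment count so the filter has something to exclude.
rows = [
    (688395275, 144, "Run some tests against numpy", "closed", 0, 2, 140912432),
    (695441530, 154,
     "OperationalError: cannot change into wal mode from within a transaction",
     "open", 0, 2, 140912432),
    (1, 1, "Made-up row with a different comment count", "open", 0, 1, 140912432),
]
conn.executemany("INSERT INTO issues VALUES (?, ?, ?, ?, ?, ?, ?)", rows)


def issues_with_comment_count(conn, n):
    """Hypothetical helper: issues with exactly n comments, sorted by locked.

    The secondary sort on id is an addition to make the order deterministic.
    """
    return conn.execute(
        "SELECT id, number, title, state FROM issues "
        "WHERE comments = ? ORDER BY locked, id",
        (n,),
    ).fetchall()


matching = issues_with_comment_count(conn, 2)
for issue_id, number, title, state in matching:
    print(issue_id, number, state, title)
```

The comment count is passed as a bound parameter rather than formatted into the SQL string, which keeps the query safe to reuse with other values.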
