issues

2,098 rows where repo = 107914493 sorted by updated_at descending

| id | node_id | number | title | user | state | locked | assignee | milestone | comments | created_at | updated_at ▲ | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 771208009 | MDU6SXNzdWU3NzEyMDgwMDk= | 1154 | Documentation for new _internal database and tables | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 2 | 2020-12-18T22:34:52Z | 2021-01-25T00:09:22Z | 2021-01-25T00:08:41Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1150#issuecomment-748352106 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1154/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 792931244 | MDU6SXNzdWU3OTI5MzEyNDQ= | 1202 | Documentation convention for marking unstable APIs. | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 2 | 2021-01-24T23:47:18Z | 2021-01-25T00:01:02Z | 2021-01-25T00:01:02Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1154#issuecomment-766462197 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1202/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 785588942 | MDU6SXNzdWU3ODU1ODg5NDI= | 1187 | extra_body_script() support for script type="module" | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2021-01-14T02:01:47Z | 2021-01-24T21:21:44Z | 2021-01-14T02:14:39Z | OWNER | Follows #1186. The Relevant docs: https://docs.datasette.io/en/stable/plugin_hooks.html#extra-body-script-template-database-table-columns-view-name-request-datasette |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1187/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 785573793 | MDU6SXNzdWU3ODU1NzM3OTM= | 1186 | script type="module" support | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2021-01-14T01:17:47Z | 2021-01-24T21:21:41Z | 2021-01-14T01:50:58Z | OWNER | Custom JavaScript can be loaded in |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1186/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 784628163 | MDU6SXNzdWU3ODQ2MjgxNjM= | 1185 | "Statement may not contain PRAGMA" error is not strictly true | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 3 | 2021-01-12T22:07:10Z | 2021-01-24T21:21:37Z | 2021-01-12T22:26:26Z | OWNER | It says "Statement may not contain PRAGMA" - but that's not actually true. Datasette has an allow-list of PRAGMA that are OK - in this case there was a typo in So the error message is misleading. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1185/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 783714076 | MDU6SXNzdWU3ODM3MTQwNzY= | 1184 | request.full_path property | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 0 | 2021-01-11T21:21:58Z | 2021-01-24T21:21:16Z | 2021-01-11T21:34:47Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1179#issuecomment-755495387 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1184/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 782692159 | MDU6SXNzdWU3ODI2OTIxNTk= | 1182 | Retire "Ecosystem" page in favour of datasette.io/plugins and /tools | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 3 | 2021-01-09T21:54:47Z | 2021-01-24T21:21:09Z | 2021-01-09T22:17:28Z | OWNER | https://docs.datasette.io/en/stable/ecosystem.html is no longer needed. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1182/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 780267857 | MDU6SXNzdWU3ODAyNjc4NTc= | 1178 | Use force_https_urls on when deploying with Cloud Run | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 9 | 2021-01-06T08:20:55Z | 2021-01-24T21:21:05Z | 2021-01-06T18:24:47Z | OWNER | Original title: datasette.absolute_url() should return https:// not http:// on Cloud Run https://latest-with-plugins.datasette.io/github/issue_comments.Notebook?_labels=on currently provides |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1178/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 778126516 | MDExOlB1bGxSZXF1ZXN0NTQ4MjcxNDcy | 1170 | Install Prettier via package.json | benpickles 3637 | closed | 0 | Datasette 0.54 6346396 | 3 | 2021-01-04T14:18:03Z | 2021-01-24T21:21:01Z | 2021-01-04T19:52:34Z | CONTRIBUTOR | simonw/datasette/pulls/1170 | This adds a package.json with Prettier and means that developers/CI will use the same version. It also ensures that NPM packages are cached on GitHub Actions which fixes #1169. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1170/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 773913793 | MDExOlB1bGxSZXF1ZXN0NTQ0OTIzNDM3 | 1158 | Modernize code to Python 3.6+ | eumiro 6774676 | closed | 0 | Datasette 0.54 6346396 | 4 | 2020-12-23T16:21:38Z | 2021-01-24T21:20:50Z | 2020-12-23T17:04:32Z | CONTRIBUTOR | simonw/datasette/pulls/1158 |

please feel free to accept/reject any of these independent commits |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1158/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 772438273 | MDU6SXNzdWU3NzI0MzgyNzM= | 1157 | Use time.perf_counter() instead of time.time() to measure performance | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2020-12-21T20:21:41Z | 2021-01-24T21:20:42Z | 2020-12-21T21:49:20Z | OWNER | I do that in a bunch of places: https://ripgrep.datasette.io/-/ripgrep?pattern=time%28%29&literal=on&glob=datasette%2F%2A%2A https://docs.python.org/3/library/time.html#time.perf_counter

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1157/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 772408750 | MDU6SXNzdWU3NzI0MDg3NTA= | 1156 | Rename _schemas to _internal | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2020-12-21T19:27:58Z | 2021-01-24T21:20:39Z | 2020-12-21T19:51:18Z | OWNER | I like Refs #1154 #1150 #1155 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1156/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 766494367 | MDExOlB1bGxSZXF1ZXN0NTM5NDg5NTI1 | 1145 | Update pytest requirement from <6.2.0,>=5.2.2 to >=5.2.2,<6.3.0 | dependabot-preview[bot] 27856297 | closed | 0 | Datasette 0.54 6346396 | 1 | 2020-12-14T14:22:16Z | 2021-01-24T21:20:29Z | 2020-12-16T21:44:39Z | CONTRIBUTOR | simonw/datasette/pulls/1145 | Updates the requirements on pytest to permit the latest version. Release notes: Sourced from pytest's releases.

Changelog: Sourced from pytest's changelog. Commits

Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting. Dependabot commands and options: You can trigger Dependabot actions by commenting on this PR: - `@dependabot rebase` will rebase this PR - `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it - `@dependabot merge` will merge this PR after your CI passes on it - `@dependabot squash and merge` will squash and merge this PR after your CI passes on it - `@dependabot cancel merge` will cancel a previously requested merge and block automerging - `@dependabot reopen` will reopen this PR if it is closed - `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually - `@dependabot ignore this major version` will close this PR and stop Dependabot creating any more for this major version (unless you reopen the PR or upgrade to it yourself) - `@dependabot ignore this minor version` will close this PR and stop Dependabot creating any more for this minor version (unless you reopen the PR or upgrade to it yourself) - `@dependabot ignore this dependency` will close this PR and stop Dependabot creating any more for this dependency (unless you reopen the PR or upgrade to it yourself) - `@dependabot use these labels` will set the current labels as the default for future PRs for this repo and language - `@dependabot use these reviewers` will set the current reviewers as the default for future PRs for this repo and language - `@dependabot use these assignees` will set the current assignees as the default for future PRs for this repo and language - `@dependabot use this milestone` will set the current milestone as the default for future PRs for this repo and language - `@dependabot badge me` will comment on this PR with code to add a "Dependabot enabled" badge to your readme Additionally, you can set the following in your Dependabot [dashboard](https://app.dependabot.com): - Update frequency (including time of day and day of week) - Pull request limits (per update run and/or open at any time) - Out-of-range updates (receive only lockfile updates, if desired) - Security updates (receive only security updates, if desired) |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1145/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 742011049 | MDU6SXNzdWU3NDIwMTEwNDk= | 1091 | .json and .csv exports fail to apply base_url | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 22 | 2020-11-12T23:45:16Z | 2021-01-24T21:20:24Z | 2021-01-09T22:19:29Z | OWNER |

Originally posted by @tballison in https://github.com/simonw/datasette/issues/865#issuecomment-726385422 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1091/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 760605882 | MDU6SXNzdWU3NjA2MDU4ODI= | 1135 | Feature: --create option to create database file if it does not yet exist | simonw 9599 | closed | 0 | 0 | 2020-12-09T19:23:58Z | 2021-01-24T21:19:39Z | 2020-12-09T19:45:52Z | OWNER | I'd like to be able to tell people to run the following in the Datasette documentation to get started: This would give them a local Datasette instance with the ability to drag-and-drop CSV files directly into it. Just one catch: I don't want to have to talk them through creating an empty SQLite database file. So I want to add a new |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1135/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 791381623 | MDU6SXNzdWU3OTEzODE2MjM= | 1197 | DB size limit for publishing with Heroku | mtdukes 1186275 | closed | 0 | 1 | 2021-01-21T18:08:43Z | 2021-01-24T20:53:44Z | 2021-01-24T20:53:44Z | NONE | Hello, I tried searching for this, but can't seem to get a great answer: Does anybody know the size limit for databases deploying to Heroku? The files I'm working with are pretty large, but I might be able to pare them down if I have a limit in mind. I'm getting the following error when running

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1197/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 792625812 | MDU6SXNzdWU3OTI2MjU4MTI= | 1198 | Plugin testing documentation on using pytest-httpx | simonw 9599 | closed | 0 | 1 | 2021-01-23T18:46:16Z | 2021-01-24T20:40:38Z | 2021-01-24T20:38:43Z | OWNER | I keep on having to figure this out: if you use the https://pypi.org/project/pytest-httpx/ fixture to write tests against mocked external APIs, they will fail because that module will break Datasette's own You can fix this using:

See https://github.com/simonw/datasette-indieauth/blob/1.2/tests/test_indieauth.py I can add this tip to the https://docs.datasette.io/en/stable/testing_plugins.html page. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1198/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 725996507 | MDU6SXNzdWU3MjU5OTY1MDc= | 1036 | Make it possible to download BLOB data from the Datasette UI | simonw 9599 | closed | 0 | 0.51 6026070 | 16 | 2020-10-20T22:47:56Z | 2021-01-18T17:45:00Z | 2020-10-25T00:14:52Z | OWNER | Currently you can only extract binary BLOB data as base64-encoded JSON, which is not user friendly at all. It should always be possible for end-users to get the binary data out. I'm worried about XSS vulnerabilities here, but hopefully sending |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1036/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 548591089 | MDU6SXNzdWU1NDg1OTEwODk= | 657 | Allow creation of virtual tables at startup | dazzag24 1055831 | open | 0 | 4 | 2020-01-12T16:10:55Z | 2021-01-15T20:24:35Z | NONE | Hi, I've been experimenting with SQLite reading from huge datasets using this excellent Parquet extension from @cldellow. https://cldellow.com/2018/06/22/sqlite-parquet-vtable.html https://github.com/cldellow/sqlite-parquet-vtable This works really well, but I was keen to see if I could combine datasette with this. Having previously experimented with the spatialite extension, I knew that datasette supports loading extensions in the underlying sqlite instance. However, I hit a blocker: the current design only allows SELECT statements to be executed, so I am unable to execute the crucial CREATE VIRTUAL TABLE ......... command that is required to load the data from the parquet file into the table. It seems like this would be a simple-ish change, but I don't know enough about the architecture of datasette to start implementing this myself. Could this be done as a datasette plugin? Or would this require more fundamental changes at initialisation time? My thoughts are that something at init time could detect that the user was loading a .parquet file and then switch to a mode where it loads that via the "CREATE VIRTUAL TABLE..." rather than loading the .db file as in the default case. I'm happy to contribute code and testing, I just need some pointers on the best approach. Thanks Darren |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/657/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 787104850 | MDU6SXNzdWU3ODcxMDQ4NTA= | 1192 | Form Plugin for in-depth Datasette Querying | tomershvueli 1024355 | open | 0 | 0 | 2021-01-15T18:24:50Z | 2021-01-15T18:24:50Z | NONE | I envision a sort of easy-to-build form plugin that would be able to map a user's inputs to different fields/columns in a Datasette database. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1192/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 782708469 | MDU6SXNzdWU3ODI3MDg0Njk= | 1183 | Take advantage of sqlite-utils cached table counts, if available | simonw 9599 | open | 0 | 2 | 2021-01-09T23:51:48Z | 2021-01-12T02:42:08Z | OWNER | sqlite-utils 3.2 now has a mechanism for creating a https://sqlite-utils.datasette.io/en/stable/python-api.html#cached-table-counts-using-triggers |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1183/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 778450486 | MDU6SXNzdWU3Nzg0NTA0ODY= | 1171 | GitHub Actions workflow to build and sign macOS binary executables | simonw 9599 | open | 0 | 8 | 2021-01-04T23:36:59Z | 2021-01-07T19:36:00Z | OWNER | Using PyInstaller, as explored in #93 and https://til.simonwillison.net/python/packaging-pyinstaller The bigger challenge will be the code signing bit. I'll need an Apple Developer account ($99/year) and some extensive CI fiddling. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1171/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 780767542 | MDU6SXNzdWU3ODA3Njc1NDI= | 1180 | Lazily evaluated arguments for call_with_supported_arguments | simonw 9599 | open | 0 | 2 | 2021-01-06T18:43:34Z | 2021-01-07T18:56:24Z | OWNER | While building https://github.com/simonw/datasette-export-notebook I thought it would be nice to be able to show a count of exported records on the page "This will stream 10,422 records to your notebook". None of the documented arguments on https://docs.datasette.io/en/0.53/plugin_hooks.html#register-output-renderer-datasette expose the count. The closest is So, idea: if your defined render function takes a To implement this I would need to teach the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1180/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 497170355 | MDU6SXNzdWU0OTcxNzAzNTU= | 576 | Documented internals API for use in plugins | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 10 | 2019-09-23T15:28:50Z | 2021-01-05T23:12:51Z | 2021-01-05T23:12:37Z | OWNER | Quite a few of the plugin hooks make a This means it should provide a documented, stable API so that plugin authors can rely on it. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/576/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 778682317 | MDU6SXNzdWU3Nzg2ODIzMTc= | 1173 | GitHub Actions workflow to build manylinux binary | simonw 9599 | open | 0 | 1 | 2021-01-05T07:41:11Z | 2021-01-05T07:41:43Z | OWNER | Refs #1171 and #93 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1173/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 778530523 | MDU6SXNzdWU3Nzg1MzA1MjM= | 1172 | /-/static should be excluded from auth and permission checks | simonw 9599 | open | 0 | 0 | 2021-01-05T02:53:41Z | 2021-01-05T02:53:41Z | OWNER | I want to set far future / immutable cache headers on everything served from This has security implications since it will be possible to see what plugins are installed by checking for known static URLs. I'm fine with that - performance is more important here. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1172/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 435819321 | MDU6SXNzdWU0MzU4MTkzMjE= | 436 | 400 Error when trying to register new user via https://publish.datasettes.com/ | nniiicc 317694 | closed | 0 | 1 | 2019-04-22T17:55:00Z | 2021-01-04T20:15:42Z | 2021-01-04T20:15:41Z | NONE | Behavior: When registering a new user via Zeit - confirmation is sent and screen acknowledges registered user... When clicking grant access the next screen is a white 400 error message. Replicated: Chrome and Firefox; 2 different email accounts |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/436/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 459537047 | MDU6SXNzdWU0NTk1MzcwNDc= | 517 | Add unit test for "static" mechanism in plugins | simonw 9599 | closed | 0 | 1 | 2019-06-23T05:03:31Z | 2021-01-04T20:15:19Z | 2021-01-04T20:15:19Z | OWNER | Split out from #272 - this is actually quite tricky. Here's the relevant code: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/517/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 377156339 | MDU6SXNzdWUzNzcxNTYzMzk= | 371 | datasette publish digitalocean plugin | psychemedia 82988 | closed | 0 | 3 | 2018-11-04T14:07:41Z | 2021-01-04T20:14:28Z | 2021-01-04T20:14:28Z | CONTRIBUTOR | Provide support for launching Example: Deploy Docker containers into Digital Ocean. Digital Ocean also has a preconfigured VM running Docker that can be launched from the command line via the Digital Ocean API: Docker One-Click Application. Related: - Launching containers in Digital Ocean servers running docker: How To Provision and Manage Remote Docker Hosts with Docker Machine on Ubuntu 16.04 - How To Use Doctl, the Official DigitalOcean Command-Line Client |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/371/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 274264175 | MDU6SXNzdWUyNzQyNjQxNzU= | 102 | datasette publish elasticbeanstalk | simonw 9599 | closed | 0 | 1 | 2017-11-15T18:48:31Z | 2021-01-04T20:13:20Z | 2021-01-04T20:13:19Z | OWNER | It looks like Elastic Beanstalk is the most convenient way to deploy a docker container to AWS without first deploying a cluster. https://aws.amazon.com/blogs/devops/dockerizing-a-python-web-app/ looks helpful. We would need to automate the deployment with Boto: http://boto3.readthedocs.io/en/latest/reference/services/elasticbeanstalk.html |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/102/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 315142414 | MDU6SXNzdWUzMTUxNDI0MTQ= | 221 | Allow plugins to add new cli sub commands | simonw 9599 | closed | 0 | 3 | 2018-04-17T16:40:13Z | 2021-01-04T20:12:14Z | 2021-01-04T20:12:14Z | OWNER | I could then test this out by having https://github.com/simonw/csvs-to-sqlite register itself as a plugin |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/221/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 267739593 | MDU6SXNzdWUyNjc3Mzk1OTM= | 18 | See if I can get a websockets interface working | simonw 9599 | closed | 0 | 1 | 2017-10-23T16:46:41Z | 2021-01-04T20:05:52Z | 2021-01-04T20:05:48Z | OWNER | Since I am already running on Sanic, how hard would it be to add a websocket endpoint that lets you talk to sqlite interactively? Could this be used to efficiently support streaming in answers to giant queries? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/18/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 274265878 | MDU6SXNzdWUyNzQyNjU4Nzg= | 103 | datasette publish appengine | simonw 9599 | closed | 0 | 1 | 2017-11-15T18:54:18Z | 2021-01-04T20:05:14Z | 2021-01-04T20:05:14Z | OWNER | Similar approach to Heroku, discussed in #90 Looks like this could be pretty easy: https://cloud.google.com/appengine/docs/flexible/python/quickstart |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/103/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 670209331 | MDU6SXNzdWU2NzAyMDkzMzE= | 913 | Mechanism for passing additional options to `datasette my.db` that affect plugins | simonw 9599 | open | 0 | 5 | 2020-07-31T20:38:26Z | 2021-01-04T20:04:11Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/913/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 751195017 | MDU6SXNzdWU3NTExOTUwMTc= | 1111 | Accessing a database's `.json` is slow for very large SQLite files | asg017 15178711 | open | 0 | 3 | 2020-11-26T00:27:27Z | 2021-01-04T19:57:53Z | CONTRIBUTOR | I have a SQLite DB that's pretty large, 23GB and something like 300 million rows. I expect that most queries I run on it will be slow, which is fine, but there are some things that Datasette does that makes working with the DB very slow. Specifically, when I access the

I suspect this is because a ```bash $ time sqlite3 out.db < <(echo "select count(*) from PageviewsHour;") 362794272 real 0m44.523s user 0m2.497s sys 0m6.703s ``` I'm using the

More than happy to debug further, or send a PR if you like one of the proposals above! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1111/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 777677671 | MDU6SXNzdWU3Nzc2Nzc2NzE= | 1169 | Prettier package not actually being cached | benpickles 3637 | closed | 0 | 4 | 2021-01-03T17:04:41Z | 2021-01-04T19:52:34Z | 2021-01-04T19:52:33Z | CONTRIBUTOR | With the current configuration Prettier seems to be installed on every run - which can be seen from the output:

Prettier isn't explicitly being installed (it's surprising that actually installing the dependencies isn't included in the actions/cache docs) but it turns out that

I think there are a couple of approaches to tackling this, you could manually install/cache Prettier within the action, or add a I've tested the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1169/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 776101101 | MDU6SXNzdWU3NzYxMDExMDE= | 1161 | Update a whole bunch of links to datasette.io instead of datasette.readthedocs.io | simonw 9599 | open | 0 | 1 | 2020-12-29T21:47:31Z | 2020-12-29T21:49:57Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1161/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 421546944 | MDU6SXNzdWU0MjE1NDY5NDQ= | 417 | Datasette Library | simonw 9599 | open | 0 | 12 | 2019-03-15T14:30:22Z | 2020-12-29T14:34:50Z | OWNER | The ability to run Datasette in a mode where it automatically picks up new (or modified) files in a directory tree without needing to restart the server. Suggested command: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/417/reactions",

"total_count": 8,

"+1": 8,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 567902704 | MDU6SXNzdWU1Njc5MDI3MDQ= | 675 | --cp option for datasette publish and datasette package for shipping additional files and directories | aviflax 141844 | open | 0 | 12 | 2020-02-19T22:55:56Z | 2020-12-28T18:49:21Z | NONE | I’m working on integrating Datasette into a documentation-oriented publishing workflow internally in my company, and in order to deploy the Docker image created by So it’d be excellent if there was an additional option for this command, something like, like, I’d envision it looking something like:

I’d be happy to help design, specify, implement, and test this feature, if you’d be interested. Thanks for the fantastic tools! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/675/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 770436876 | MDU6SXNzdWU3NzA0MzY4NzY= | 1150 | Maintain an in-memory SQLite table of connected databases and their tables | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 32 | 2020-12-17T23:02:13Z | 2020-12-27T14:51:39Z | 2020-12-18T22:34:12Z | OWNER | I want Datasette to have its own internal metadata about connected tables, to power features like a paginated searchable homepage in #461. I want this to be a SQLite table. This could also be part of the directory scanning mechanism prototyped in #672 - where Datasette can be set to continually scan a directory for new database files that it can serve. Also relevant to the Datasette Library concept in #417. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1150/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 456568880 | MDU6SXNzdWU0NTY1Njg4ODA= | 509 | Support opening multiple databases with the same stem | simonw 9599 | closed | 0 | simonw 9599 | Datasette 1.0 3268330 | 4 | 2019-06-15T19:32:00Z | 2020-12-22T20:04:35Z | 2020-12-22T20:04:35Z | OWNER | e.g. I should be able to do this: This currently errors because you can't have two databases taking the Instead, how about in this particular case assigning the second database |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/509/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||

| 771216293 | MDU6SXNzdWU3NzEyMTYyOTM= | 1155 | Better internal database_name for _internal database | simonw 9599 | closed | 0 | 9 | 2020-12-18T22:47:27Z | 2020-12-22T20:04:35Z | 2020-12-22T20:04:35Z | OWNER | https://latest.datasette.io/login-as-root then https://latest.datasette.io/_internal

That |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1155/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 443021509 | MDU6SXNzdWU0NDMwMjE1MDk= | 461 | Paginate + search for databases/tables on the homepage | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 4 | 2019-05-11T18:05:34Z | 2020-12-17T22:14:46Z | OWNER | Split out from #460 - in order to support large numbers of connected databases the homepage needs to be paginated. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/461/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 750089847 | MDU6SXNzdWU3NTAwODk4NDc= | 1109 | Deprecate --config in Datasette 1.0 (in favour of --setting) | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 0 | 2020-11-24T21:43:57Z | 2020-12-17T22:07:49Z | OWNER | I added a deprecation warning to this in #992. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1109/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 634663505 | MDU6SXNzdWU2MzQ2NjM1MDU= | 815 | Group permission checks by request on /-/permissions debug page | simonw 9599 | open | 0 | 8 | 2020-06-08T14:25:23Z | 2020-12-17T22:06:48Z | OWNER | Now that we're making a LOT more permission checks (on the DB index page we do a check for every listed table for example) the Can make this more readable by grouping permission checks by request. Have most recent request at the top of the page but the permission requests within that page sorted chronologically by most recent last. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/815/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 450032134 | MDU6SXNzdWU0NTAwMzIxMzQ= | 495 | facet_m2m gets confused by multiple relationships | simonw 9599 | open | 0 | 2 | 2019-05-29T21:37:28Z | 2020-12-17T05:08:22Z | OWNER | I got this for a database I was playing with:

I think this is because of these three tables:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/495/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 463492815 | MDU6SXNzdWU0NjM0OTI4MTU= | 534 | 500 error on m2m facet detection | simonw 9599 | open | 0 | 1 | 2019-07-03T00:42:42Z | 2020-12-17T05:08:22Z | OWNER | This may help debug:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/534/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 767561886 | MDU6SXNzdWU3Njc1NjE4ODY= | 1148 | Syntax error with + symbol when deployed to Vercel | kaihendry 765871 | closed | 0 | 2 | 2020-12-15T12:53:12Z | 2020-12-16T21:57:42Z | 2020-12-16T21:51:54Z | NONE | Works locally:

Copy the SQL into https://aws-partners-singapore.vercel.app/partners and get syntax error:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1148/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 718395987 | MDExOlB1bGxSZXF1ZXN0NTAwNzk4MDkx | 1008 | Add json_loads and json_dumps jinja2 filters | mhalle 649467 | open | 0 | 1 | 2020-10-09T20:11:34Z | 2020-12-15T02:30:28Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1008 | datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1008/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||||

| 761713079 | MDU6SXNzdWU3NjE3MTMwNzk= | 1138 | "Powered by Datasette" should link to new datasette.io site | simonw 9599 | closed | 0 | 0 | 2020-12-10T23:33:41Z | 2020-12-15T02:28:10Z | 2020-12-10T23:37:14Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1138/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 765637324 | MDU6SXNzdWU3NjU2MzczMjQ= | 1144 | JavaScript to help plugins interact with the fragment part of the URL | simonw 9599 | open | 0 | 1 | 2020-12-13T20:36:06Z | 2020-12-14T14:47:11Z | OWNER | Suggested by Markus Holtermann on Twitter, who is building https://github.com/MarkusH/datasette-chartjs

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1144/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 717699884 | MDU6SXNzdWU3MTc2OTk4ODQ= | 998 | Wide tables should scroll horizontally within the page | simonw 9599 | closed | 0 | 0.51 6026070 | 8 | 2020-10-08T22:13:27Z | 2020-12-11T09:25:09Z | 2020-10-22T01:12:26Z | OWNER | Wrap the main table in |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/998/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 551834842 | MDU6SXNzdWU1NTE4MzQ4NDI= | 659 | README information is obscured by feature history | labstersteve 55480210 | closed | 0 | 1 | 2020-01-18T22:34:51Z | 2020-12-10T23:28:51Z | 2020-12-10T23:28:51Z | NONE | While it's sometimes valuable to know how a project has developed, there is usually little justification for including this information in the README, and certainly not immediately after other key information such as "what does this package do, and who might want to use it?" Might I recommend that the feature history is migrated to an Appendix in the documentation? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/659/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 761706858 | MDU6SXNzdWU3NjE3MDY4NTg= | 1137 | Update README to reflect new datasette.io site | simonw 9599 | closed | 0 | 0 | 2020-12-10T23:22:06Z | 2020-12-10T23:28:50Z | 2020-12-10T23:28:50Z | OWNER | Can finally close #659. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1137/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 760312579 | MDU6SXNzdWU3NjAzMTI1Nzk= | 1134 | "_searchmode=raw" throws an index out of range error when combined with "_search_COLUMN" | clausjuhl 2181410 | closed | 0 | 4 | 2020-12-09T13:05:37Z | 2020-12-10T05:57:17Z | 2020-12-09T19:56:55Z | NONE | Hi Simon! Maybe it's just me, but when using _searchmode=raw (trying to enable wildcard-searching) in combination with the "_search_COLUMN"-table argument, I get a list index out of range error. When combining with the simpler "_search"-argument everything works, including wildcard-searches. Here's the traceback:
```
Traceback (most recent call last):
  File "/Users/cjk/.local/share/virtualenvs/minutes-jMDZ8Ssk/lib/python3.7/site-packages/datasette/utils/asgi.py", line 122, in route_path
    return await view(new_scope, receive, send)
  File "/Users/cjk/.local/share/virtualenvs/minutes-jMDZ8Ssk/lib/python3.7/site-packages/datasette/utils/asgi.py", line 196, in view
    request, scope["url_route"]["kwargs"]
  File "/Users/cjk/.local/share/virtualenvs/minutes-jMDZ8Ssk/lib/python3.7/site-packages/datasette/views/base.py", line 204, in get
    request, database, hash, correct_hash_provided, kwargs
  File "/Users/cjk/.local/share/virtualenvs/minutes-jMDZ8Ssk/lib/python3.7/site-packages/datasette/views/base.py", line 342, in view_get
    request, database, hash, **kwargs
  File "/Users/cjk/.local/share/virtualenvs/minutes-jMDZ8Ssk/lib/python3.7/site-packages/datasette/views/table.py", line 393, in data
    search_col = key.split("search", 1)[1]
IndexError: list index out of range
``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1134/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 760621356 | MDU6SXNzdWU3NjA2MjEzNTY= | 1136 | Establish pattern for release branches to support bug fixes | simonw 9599 | closed | 0 | 8 | 2020-12-09T19:48:18Z | 2020-12-09T20:17:02Z | 2020-12-09T20:14:41Z | OWNER | I want to fix the bug in #1134 and ship it as Datasette 0.52.5 - but the I'm not ready for a feature release, so instead I want to release 0.52.5 with just that bug fix. This is the first time I will have shipped a release from a branch. I need to establish that pattern and add it to the documentation in https://docs.datasette.io/en/stable/contributing.html |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1136/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 758899581 | MDU6SXNzdWU3NTg4OTk1ODE= | 1132 | New filter: array does not contain | simonw 9599 | closed | 0 | 1 | 2020-12-07T22:28:20Z | 2020-12-07T22:50:36Z | 2020-12-07T22:41:09Z | OWNER | I want to see all of my GitHub repos that are tagged |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1132/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 756867924 | MDExOlB1bGxSZXF1ZXN0NTMyMzQyMDI1 | 1128 | Fix startup error on windows | abdusco 3243482 | closed | 0 | 2 | 2020-12-04T07:12:26Z | 2020-12-06T08:41:45Z | 2020-12-05T19:35:04Z | CONTRIBUTOR | simonw/datasette/pulls/1128 | Fixes https://github.com/simonw/datasette/issues/1094 This import isn't used at all, and causes error on startup on Windows. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1128/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 757481949 | MDU6SXNzdWU3NTc0ODE5NDk= | 1131 | "datasette inspect" outputs invalid JSON if an error is logged | simonw 9599 | closed | 0 | 3 | 2020-12-05T00:00:45Z | 2020-12-05T20:48:34Z | 2020-12-05T05:21:19Z | OWNER | See https://github.com/simonw/register-of-members-interests/issues/6:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1131/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 398011658 | MDU6SXNzdWUzOTgwMTE2NTg= | 398 | Ensure downloading a 100+MB SQLite database file works | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 3 | 2019-01-10T20:57:52Z | 2020-12-05T19:36:27Z | 2020-12-05T19:36:27Z | OWNER | I've seen attempted downloads of large files fail after about ten seconds. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/398/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 743011397 | MDU6SXNzdWU3NDMwMTEzOTc= | 1094 | import EX_CANTCREAT means datasette fails to work on Windows | drkane 1049910 | closed | 0 | 1 | 2020-11-14T14:17:11Z | 2020-12-05T19:35:04Z | 2020-12-05T19:35:04Z | NONE | Trying to use datasette 0.51.1 gives the following error:

Looks like that code is only available on unix: https://docs.python.org/3/library/os.html#os.EX_CANTCREAT Removing the line makes it work fine ( |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1094/reactions",

"total_count": 3,

"+1": 3,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 309047460 | MDU6SXNzdWUzMDkwNDc0NjA= | 188 | Ability to bundle metadata and templates inside the SQLite file | simonw 9599 | open | 0 | 4 | 2018-03-27T16:42:07Z | 2020-12-04T17:18:34Z | OWNER | One of the nicest qualities of SQLite as a data format is that you get a single file which you can then backup or share with other people. Datasette breaks this a little once you start including custom metadata.json or template files and CSS. It would be cool if there was an optional mechanism for baking that extra configuration into the SQLite file itself. That way entire datasette mini-applications (including canned queries and custom HTML and CSS) could be constructed as single .db files. Since datasette configuration is all file-based, one way to achieve that would be to support a "datasette_files" table which, if present is used to search for file contents by path. This is inline with the philosophy described by https://www.sqlite.org/appfileformat.html |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/188/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 756875827 | MDU6SXNzdWU3NTY4NzU4Mjc= | 1129 | Fix footer to the bottom of the page | abdusco 3243482 | open | 0 | 0 | 2020-12-04T07:28:07Z | 2020-12-04T16:04:29Z | CONTRIBUTOR | Footer doesn't stick to the bottom if the body content isn't long enough to reach the end of viewport.

This can be fixed using flexbox.
```css
body {
  min-height: 100vh;
  display: flex;
  flex-direction: column;
}
.content {
  flex-grow: 1;
}
``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1129/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 756622648 | MDU6SXNzdWU3NTY2MjI2NDg= | 1125 | Show pysqlite3 version on /-/versions | simonw 9599 | closed | 0 | 5 | 2020-12-03T21:57:23Z | 2020-12-04T04:16:57Z | 2020-12-04T04:16:57Z | OWNER | This code can use |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1125/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 756761963 | MDU6SXNzdWU3NTY3NjE5NjM= | 1126 | Switch to google-github-actions/setup-gcloud for demo deploy | simonw 9599 | closed | 0 | 1 | 2020-12-04T03:11:24Z | 2020-12-04T03:51:38Z | 2020-12-04T03:51:38Z | OWNER | That workflow is showing warnings: https://github.com/simonw/datasette/actions/runs/399400077

https://github.com/google-github-actions/setup-gcloud has a Cloud Run example here: https://github.com/google-github-actions/setup-gcloud/blob/master/example-workflows/cloud-run/.github/workflows/cloud-run.yml |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1126/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 756439516 | MDU6SXNzdWU3NTY0Mzk1MTY= | 1124 | Datasette on Amazon Linux on ARM returns 404 for static assets | simonw 9599 | closed | 0 | 9 | 2020-12-03T18:20:37Z | 2020-12-03T21:42:02Z | 2020-12-03T21:10:54Z | OWNER | Very weird bug this one. Steps to reproduce:
```
I started a amzn2-ami-hvm-2.0.20201126.0-arm64-gp2 t4g.micro instance
ec2 % ssh -i simonw-ec2.pem ec2-user@ec2-18-219-238-192.us-east-2.compute.amazonaws.com
[ec2-user@ip-172-31-30-7 ~]$ sudo yum install python3
Loaded plugins: extras_suggestions, langpacks, priorities, update-motd
...
Is this ok [y/d/N]: y
Downloading packages:
(1/4): python3-3.7.9-1.amzn2.0.1.aarch64.rpm | 72 kB 00:00:00
Total 68 MB/s | 12 MB 00:00:00
Installed: python3.aarch64 0:3.7.9-1.amzn2.0.1
Dependency Installed: python3-libs.aarch64 0:3.7.9-1.amzn2.0.1 python3-pip.noarch 0:9.0.3-1.amzn2.0.2 python3-setuptools.noarch 0:38.4.0-3.amzn2.0.6
Complete!
[ec2-user@ip-172-31-30-7 ~]$ python3 -m pip install --user pipx
Collecting pipx
  Downloading https://files.pythonhosted.org/packages/42/8a/8447ec14562c5d97afbee54ec390864718bccce9dfb0506c8c77852489d3/pipx-0.15.6.0-py3-none-any.whl (43kB)
    100% |████████████████████████████████| 51kB 3.8MB/s
Collecting packaging>=20.0 (from pipx)
  Downloading https://files.pythonhosted.org/packages/28/87/8edcf555adaf60d053ead881bc056079e29319b643ca710339ce84413136/packaging-20.7-py2.py3-none-any.whl
Collecting argcomplete<2.0,>=1.9.4 (from pipx)
  Downloading https://files.pythonhosted.org/packages/e3/d0/ee7fc6ceac8957196def9bfa3b955d02163058defd3edd51ef7b1ff2769e/argcomplete-1.12.2-py2.py3-none-any.whl
Collecting userpath>=1.4.1 (from pipx)
  Downloading https://files.pythonhosted.org/packages/fb/f1/faca8a3cc86bd2223aaf72edd222bc31d6ae2eea5feaf17a144634a92e6d/userpath-1.4.1-py2.py3-none-any.whl
Collecting pyparsing>=2.0.2 (from packaging>=20.0->pipx)
  Downloading https://files.pythonhosted.org/packages/8a/bb/488841f56197b13700afd5658fc279a2025a39e22449b7cf29864669b15d/pyparsing-2.4.7-py2.py3-none-any.whl (67kB)
    100% |████████████████████████████████| 71kB 8.8MB/s
Collecting importlib-metadata<4,>=0.23; python_version == "3.7" (from argcomplete<2.0,>=1.9.4->pipx)
  Downloading https://files.pythonhosted.org/packages/99/c7/4ccf2baa455613aa9e61372365aba8594ff2806c82189c31a6c65e7c679e/importlib_metadata-3.1.1-py3-none-any.whl
Collecting click (from userpath>=1.4.1->pipx)
  Downloading https://files.pythonhosted.org/packages/d2/3d/fa76db83bf75c4f8d338c2fd15c8d33fdd7ad23a9b5e57eb6c5de26b430e/click-7.1.2-py2.py3-none-any.whl (82kB)
    100% |████████████████████████████████| 92kB 10.5MB/s
Collecting distro; platform_system == "Linux" (from userpath>=1.4.1->pipx)
  Downloading https://files.pythonhosted.org/packages/25/b7/b3c4270a11414cb22c6352ebc7a83aaa3712043be29daa05018fd5a5c956/distro-1.5.0-py2.py3-none-any.whl
Collecting zipp>=0.5 (from importlib-metadata<4,>=0.23; python_version == "3.7"->argcomplete<2.0,>=1.9.4->pipx)
  Downloading https://files.pythonhosted.org/packages/41/ad/6a4f1a124b325618a7fb758b885b68ff7b058eec47d9220a12ab38d90b1f/zipp-3.4.0-py3-none-any.whl
Installing collected packages: pyparsing, packaging, zipp, importlib-metadata, argcomplete, click, distro, userpath, pipx
Successfully installed argcomplete-1.12.2 click-7.1.2 distro-1.5.0 importlib-metadata-3.1.1 packaging-20.7 pipx-0.15.6.0 pyparsing-2.4.7 userpath-1.4.1 zipp-3.4.0
[ec2-user@ip-172-31-30-7 ~]$ python3 -m pipx ensurepath
/home/ec2-user/.local/bin is already in PATH.
/home/ec2-user/.local/bin is already in PATH.
All pipx binary directories have been added to PATH. If you are sure you want to proceed, try again with the '--force' flag.
Otherwise pipx is ready to go! ✨ 🌟 ✨
[ec2-user@ip-172-31-30-7 ~]$ pipx install datasette
  installed package datasette 0.52.2, Python 3.7.9
  These apps are now globally available
    - datasette
done! ✨ 🌟 ✨
[ec2-user@ip-172-31-30-7 ~]$ datasette --version
datasette, version 0.52.2
[ec2-user@ip-172-31-30-7 ~]$ datasette --get /-/static/app.css
404
``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1124/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 754179035 | MDExOlB1bGxSZXF1ZXN0NTMwMTI1Njk1 | 1122 | Fix misaligned table actions cog | abdusco 3243482 | closed | 0 | 2 | 2020-12-01T08:41:46Z | 2020-12-03T10:56:40Z | 2020-12-03T00:33:37Z | CONTRIBUTOR | simonw/datasette/pulls/1122 | datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1122/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 754178780 | MDU6SXNzdWU3NTQxNzg3ODA= | 1121 | Table actions cog is misaligned | abdusco 3243482 | closed | 0 | 1 | 2020-12-01T08:41:25Z | 2020-12-03T01:03:19Z | 2020-12-03T00:33:36Z | CONTRIBUTOR | At the moment it looks like this https://datasette-graphql-demo.datasette.io/github/repos

Adding a few flex statements fixes the alignment and centers |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1121/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 747702144 | MDU6SXNzdWU3NDc3MDIxNDQ= | 1100 | Error on OPTIONS request to database | akehrer 1319404 | closed | 0 | 2 | 2020-11-20T18:16:43Z | 2020-12-03T00:57:35Z | 2020-12-03T00:50:17Z | NONE | When I perform an OPTIONS request against a database or table datasette fails with an internal error. All these tests result in the traceback below.

Making the

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1100/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 755721275 | MDU6SXNzdWU3NTU3MjEyNzU= | 1123 | Table actions hook are order dependent, should not be | simonw 9599 | closed | 0 | 1 | 2020-12-03T00:47:12Z | 2020-12-03T00:50:17Z | 2020-12-03T00:50:17Z | OWNER | Got this error: https://github.com/simonw/datasette/runs/1489770800

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1123/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 610829227 | MDU6SXNzdWU2MTA4MjkyMjc= | 749 | Cloud Run fails to serve database files larger than 32MB | simonw 9599 | closed | 0 | 4 | 2020-05-01T16:06:46Z | 2020-12-03T00:31:15Z | 2020-12-03T00:31:14Z | OWNER | https://cloud.google.com/run/quotas lists the maximum response size as 32MB. I spotted a bug where attempting to download a database file larger than that from a Cloud Run deployment (in this case it was https://github-to-sqlite.dogsheep.net/github.db after I accidentally increased the size of that database) returned a 500 error because of this. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/749/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 753876808 | MDU6SXNzdWU3NTM4NzY4MDg= | 1119 | Include generated columns in fixtures.db, if SQLite version supports it | simonw 9599 | closed | 0 | 1 | 2020-11-30T23:25:31Z | 2020-12-01T00:41:14Z | 2020-12-01T00:28:04Z | OWNER | In #1117 I added a one-off test that creates a DB with generated columns in it: If this table was conditionally added to |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1119/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 753898359 | MDExOlB1bGxSZXF1ZXN0NTI5ODg3ODYx | 1120 | generated_columns table in fixtures.py | simonw 9599 | closed | 0 | 1 | 2020-12-01T00:17:19Z | 2020-12-01T00:28:03Z | 2020-12-01T00:28:02Z | OWNER | simonw/datasette/pulls/1120 | Refs #1119 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1120/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 753767911 | MDExOlB1bGxSZXF1ZXN0NTI5NzgzMjc1 | 1117 | Support for generated columns | simonw 9599 | closed | 0 | 6 | 2020-11-30T20:10:46Z | 2020-11-30T22:23:19Z | 2020-11-30T21:29:58Z | OWNER | simonw/datasette/pulls/1117 | Refs #1116. My first attempt at this worked on my laptop but broke in CI, so I'm going to iterate on it in a pull request instead. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1117/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 753668177 | MDU6SXNzdWU3NTM2NjgxNzc= | 1116 | GENERATED column support | nattaylor 2789593 | closed | 0 | 9 | 2020-11-30T17:33:47Z | 2020-11-30T21:29:59Z | 2020-11-30T21:29:59Z | NONE | I think this is a feature request... perhaps I should just try to contribute it myself, but thought I'd check in case support is planned already. For a table with the following schema, datasette 0.51.1 doesn't pick up the GENERATED columns and the column list only contains At first glance it appears that

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1116/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 753788261 | MDU6SXNzdWU3NTM3ODgyNjE= | 1118 | messagge_is_html typo | simonw 9599 | closed | 0 | 0 | 2020-11-30T20:43:22Z | 2020-11-30T21:24:28Z | 2020-11-30T21:24:28Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1118/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 743370900 | MDU6SXNzdWU3NDMzNzA5MDA= | 1098 | Foreign key links break for compound foreign keys | simonw 9599 | closed | 0 | 5 | 2020-11-15T23:22:14Z | 2020-11-29T19:50:31Z | 2020-11-29T19:30:23Z | OWNER | Reported on Twitter here: https://twitter.com/ZaneSelvans/status/1328093641395548161

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1098/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 749289611 | MDU6SXNzdWU3NDkyODk2MTE= | 1102 | Plugin testing docs should show datasette.client | simonw 9599 | closed | 0 | 1 | 2020-11-24T02:34:46Z | 2020-11-29T07:47:22Z | 2020-11-29T07:44:58Z | OWNER | https://docs.datasette.io/en/stable/testing_plugins.html currently shows how to use HTTPX directly. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1102/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

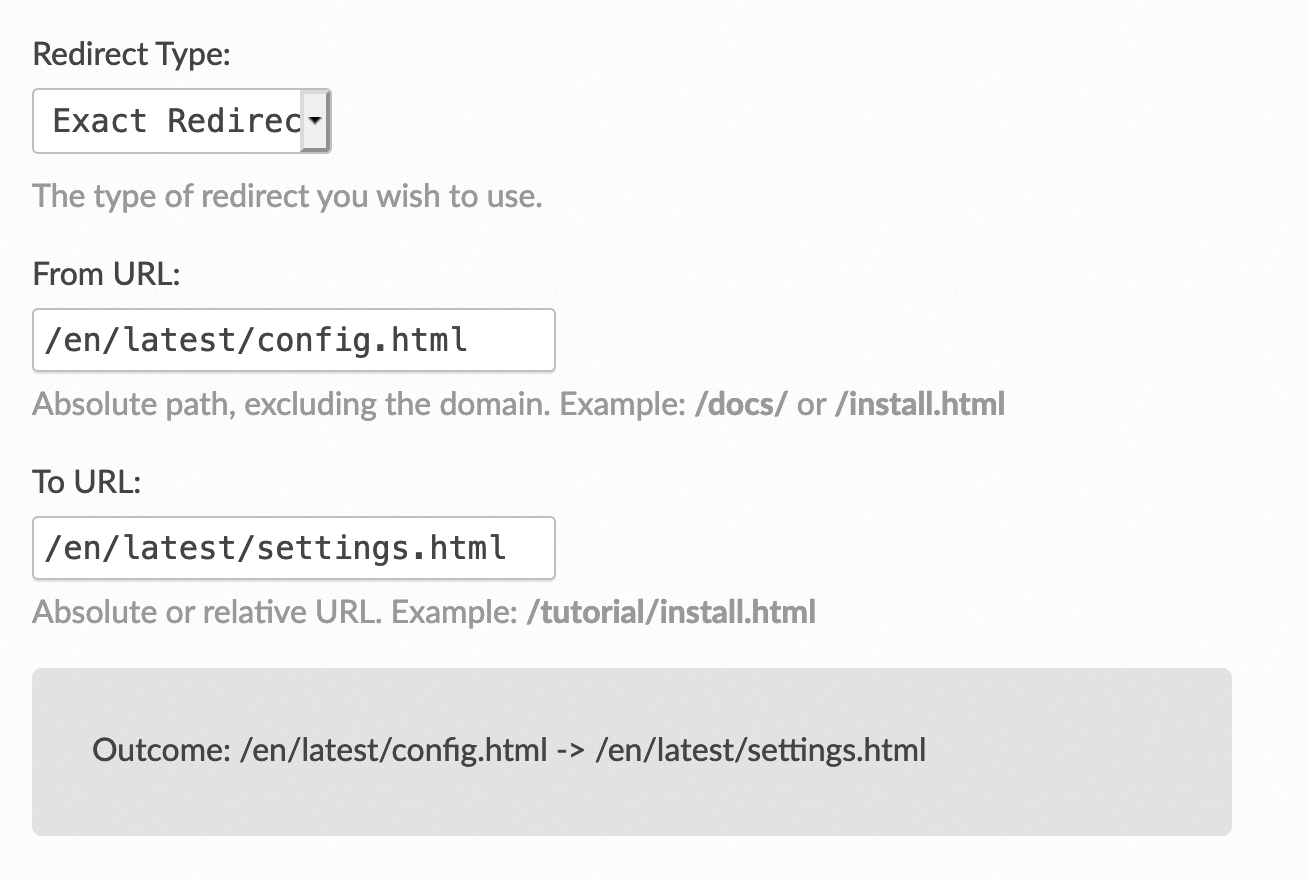

| 750087350 | MDU6SXNzdWU3NTAwODczNTA= | 1108 | Configure /en/stable/config.html redirect when I ship 0.52 | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 1 | 2020-11-24T21:39:19Z | 2020-11-29T02:42:42Z | 2020-11-29T02:42:42Z | OWNER | Like this:

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1106#issuecomment-733248437 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1108/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 752789159 | MDU6SXNzdWU3NTI3ODkxNTk= | 1113 | 500 error on row page if query against foreign keys hits time limit | simonw 9599 | closed | 0 | 1 | 2020-11-28T23:20:08Z | 2020-11-29T02:40:01Z | 2020-11-28T23:23:31Z | OWNER | This page exhibited the following error: https://data.catalyst.coop/ferc1/f1_respondent_id/145

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1113/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 750330029 | MDU6SXNzdWU3NTAzMzAwMjk= | 1110 | datasette publish option for installing extra apt-get packages | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 2 | 2020-11-25T03:03:43Z | 2020-11-28T23:28:56Z | 2020-11-25T03:05:41Z | OWNER | I ran into a need for this while playing with https://github.com/simonw/datasette-ripgrep - I need to install the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1110/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 741665726 | MDU6SXNzdWU3NDE2NjU3MjY= | 1089 | Sweep documentation for words that minimize involved difficulty | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 1 | 2020-11-12T14:53:05Z | 2020-11-28T23:28:43Z | 2020-11-12T20:07:26Z | OWNER | Inspired by https://github.com/django/django/pull/11482 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1089/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 741268956 | MDU6SXNzdWU3NDEyNjg5NTY= | 1088 | OperationalError('interrupted') can 500 on row page | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 3 | 2020-11-12T04:29:55Z | 2020-11-28T23:28:35Z | 2020-11-12T04:36:52Z | OWNER | I got this on my (private) https://dogsheep.simonwillison.net/twitter/tweets/1188612004572880896 page:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1088/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 741021342 | MDU6SXNzdWU3NDEwMjEzNDI= | 1086 | Foreign keys with blank titles result in non-clickable links | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 3 | 2020-11-11T19:41:09Z | 2020-11-28T23:28:29Z | 2020-11-11T23:46:20Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1086/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 752749485 | MDExOlB1bGxSZXF1ZXN0NTI4OTk3NjE0 | 1112 | Fix --metadata doc usage | jefftriplett 50527 | closed | 0 | Datasette 0.52 6055094 | 3 | 2020-11-28T19:19:51Z | 2020-11-28T23:28:21Z | 2020-11-28T19:53:48Z | CONTRIBUTOR | simonw/datasette/pulls/1112 | I stumbled on this while trying to figure out how to configure datasette-ripgrep via https://github.com/simonw/datasette-ripgrep/issues/15 You may not want to update the changelog (those are annoying) so I added two commits in case that's easier. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1112/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||

| 750079085 | MDU6SXNzdWU3NTAwNzkwODU= | 1107 | Rename datasette.config() method to datasette.setting() | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 5 | 2020-11-24T21:24:11Z | 2020-11-24T22:09:11Z | 2020-11-24T22:06:38Z | OWNER | Part of #1105. Thankfully this isn't yet part of the documented public API on https://docs.datasette.io/en/stable/internals.html |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1107/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 749982022 | MDU6SXNzdWU3NDk5ODIwMjI= | 1105 | Rebrand config as settings | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 2 | 2020-11-24T19:35:12Z | 2020-11-24T21:40:28Z | 2020-11-24T21:40:28Z | OWNER | I realized I need a tracking ticket for this. I want to start splitting things like plugin configuration and default facets / sort order out of |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1105/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 749983857 | MDU6SXNzdWU3NDk5ODM4NTc= | 1106 | Rebrand and redirect config.rst as settings.rst | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 4 | 2020-11-24T19:38:17Z | 2020-11-24T21:39:58Z | 2020-11-24T21:39:58Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1105#issuecomment-733190827 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1106/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 749981663 | MDU6SXNzdWU3NDk5ODE2NjM= | 1104 | config.json in directory config mode should be settings.json | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 2 | 2020-11-24T19:34:38Z | 2020-11-24T20:37:42Z | 2020-11-24T20:37:41Z | OWNER | Another knock-on effect of #992. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1104/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 749979454 | MDU6SXNzdWU3NDk5Nzk0NTQ= | 1103 | Rename /-/config to /-/settings | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 2 | 2020-11-24T19:31:00Z | 2020-11-24T20:19:20Z | 2020-11-24T20:19:19Z | OWNER | As part of rebranding config to settings, see also #992. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1103/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 714449879 | MDU6SXNzdWU3MTQ0NDk4Nzk= | 992 | Change "--config foo:bar" to "--setting foo bar" | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 6 | 2020-10-05T01:27:45Z | 2020-11-24T20:01:54Z | 2020-11-24T20:01:54Z | OWNER | I designed the config format before I had a good feel for CLI design using Click. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/992/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 315738696 | MDU6SXNzdWUzMTU3Mzg2OTY= | 226 | Unit tests for installable plugins | simonw 9599 | closed | 0 | 2 | 2018-04-19T06:05:32Z | 2020-11-24T19:52:51Z | 2020-11-24T19:52:46Z | OWNER | I'd like more thorough unit test coverage of the plugins mechanism - in particular for installable plugins. I think I can do this while still having the code live in the same repo, by creating a subdirectory in tests/example_plugin with its own setup.py and then running I imagine I will need to bump the version number every time I change the plugin in case someone runs the test again in the same virtual environment. If that doesn't work I can instead ship a datasette-plugins-tests two to PyPI and add that as a tests_require dependency. Refs #14 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/226/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 722673818 | MDU6SXNzdWU3MjI2NzM4MTg= | 1023 | Fix issues relating to base_url | simonw 9599 | closed | 0 | 0.51 6026070 | 3 | 2020-10-15T21:02:06Z | 2020-11-24T19:51:44Z | 2020-10-31T20:51:01Z | OWNER | Lots of |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1023/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 346026869 | MDU6SXNzdWUzNDYwMjY4Njk= | 354 | Handle many-to-many relationships | simonw 9599 | open | 0 | 0 | 2018-07-31T04:03:13Z | 2020-11-24T19:51:18Z | OWNER | This is a master tracking ticket for various many-2-many features. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/354/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 737394470 | MDU6SXNzdWU3MzczOTQ0NzA= | 1084 | Table/database action menu cut off if too short | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 4 | 2020-11-06T01:55:23Z | 2020-11-21T23:45:59Z | 2020-11-21T23:45:59Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1084/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 743369188 | MDExOlB1bGxSZXF1ZXN0NTIxMjc2Mjk2 | 1097 | Use f-strings | simonw 9599 | closed | 0 | 1 | 2020-11-15T23:12:36Z | 2020-11-15T23:24:24Z | 2020-11-15T23:24:23Z | OWNER | simonw/datasette/pulls/1097 | Since Datasette now requires Python 3.6, how about some f-strings? I ran this in the |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1097/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 644582921 | MDU6SXNzdWU2NDQ1ODI5MjE= | 865 | base_url doesn't seem to work when adding criteria and clicking "apply" | tballison 6739646 | closed | 0 | 0.51 6026070 | 11 | 2020-06-24T12:39:57Z | 2020-11-12T23:49:24Z | 2020-10-20T05:22:59Z | NONE | Over on Apache Tika, we're using datasette to allow users to make sense of the metadata for our file regression testing corpus. This could be user error in how I've set up the reverse proxy! I started datasette like so:

I then reverse proxied like so: ProxyPreserveHost On ProxyPass /datasette http://x.y.z.q:xxxx ProxyPassReverse /datasette http://x.y.z.q:xxx Regular SQL works perfectly: https://corpora.tika.apache.org/datasette/corpora-metadata?sql=select+mime_string%2C+count%281%29+as+cnt%0D%0Afrom+profiles+p%0D%0Ajoin+mimes+m+on+p.mime_id%3Dm.mime_id%0D%0Agroup+by+mime_string%0D%0Aorder+by+cnt+desc However, adding criteria and clicking 'Apply' https://corpora.tika.apache.org/datasette/corpora-metadata/tika_1_24_1_mimes?_sort=file&mime__exact=text%2Fplain bounces back to: https://corpora.tika.apache.org/corpora-metadata/tika_1_24_1_mimes?_sort=file&file__contains=bug&mime__exact=text%2Fplain |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/865/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 735644513 | MDU6SXNzdWU3MzU2NDQ1MTM= | 1081 | Fixtures should use FTS4 or FTS5, not FTS3 | simonw 9599 | closed | 0 | Datasette 0.52 6055094 | 0 | 2020-11-03T21:24:13Z | 2020-11-12T00:03:00Z | 2020-11-12T00:02:59Z | OWNER | Just spotted that |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1081/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 740512882 | MDExOlB1bGxSZXF1ZXN0NTE4OTg4ODc5 | 1085 | Use FTS4 in fixtures | simonw 9599 | closed | 0 | 1 | 2020-11-11T06:44:30Z | 2020-11-12T00:02:59Z | 2020-11-12T00:02:58Z | OWNER | simonw/datasette/pulls/1085 | Refs #1081 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1085/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 735852274 | MDU6SXNzdWU3MzU4NTIyNzQ= | 1082 | DigitalOcean buildpack memory errors for large sqlite db? | justmars 39538958 | open | 0 | 3 | 2020-11-04T06:35:32Z | 2020-11-04T19:35:44Z | NONE |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1082/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,