issue_comments

10,495 rows sorted by updated_at descending

issue >30

- JavaScript plugin hooks mechanism similar to pluggy 47

- Redesign default .json format 45

- Port Datasette to ASGI 42

- Authentication (and permissions) as a core concept 40

- await datasette.client.get(path) mechanism for executing internal requests 33

- Maintain an in-memory SQLite table of connected databases and their tables 32

- Ability to sort (and paginate) by column 31

- link_or_copy_directory() error - Invalid cross-device link 28

- Export to CSV 27

- base_url configuration setting 27

- Documentation with recommendations on running Datasette in production without using Docker 27

- Ability for a canned query to write to the database 26

- table.transform() method for advanced alter table 26

- Support cross-database joins 25

- Proof of concept for Datasette on AWS Lambda with EFS 25

- Redesign register_output_renderer callback 24

- "datasette insert" command and plugin hook 23

- Datasette Plugins 22

- .json and .csv exports fail to apply base_url 22

- table.extract(...) method and "sqlite-utils extract" command 21

- Handle spatialite geometry columns better 20

- "flash messages" mechanism 20

- Move CI to GitHub Issues 20

- load_template hook doesn't work for include/extends 20

- ?sort=colname~numeric to sort by by column cast to real 19

- Better way of representing binary data in .csv output 19

- Introspect if table is FTS4 or FTS5 19

- WIP: Add Gmail takeout mbox import 19

- Ability to ship alpha and beta releases 18

- Magic parameters for canned queries 18

- …

| id | html_url | issue_url | node_id | user | created_at | updated_at ▲ | author_association | body | reactions | issue | performed_via_github_app |

|---|---|---|---|---|---|---|---|---|---|---|---|

| 802032152 | https://github.com/simonw/sqlite-utils/issues/159#issuecomment-802032152 | https://api.github.com/repos/simonw/sqlite-utils/issues/159 | MDEyOklzc3VlQ29tbWVudDgwMjAzMjE1Mg== | limar 1025224 | 2021-03-18T15:42:52Z | 2021-03-18T15:42:52Z | NONE | I confirm the bug. Happens for me in version 3.6. I use the call to delete all the records:

I see that |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

.delete_where() does not auto-commit (unlike .insert() or .upsert()) 702386948 | |
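The symptom reported above (a delete that is not committed automatically) can be illustrated with a minimal sketch. It assumes only the standard-library `sqlite3` module rather than sqlite-utils itself; the table name `records` and the helper names are hypothetical. A second connection keeps seeing the rows until the writing connection commits.

```python
import os
import sqlite3
import tempfile


def count_rows(path):
    """Open a fresh connection and count rows, as another process would."""
    conn = sqlite3.connect(path)
    try:
        return conn.execute("select count(*) from records").fetchone()[0]
    finally:
        conn.close()


def demo_uncommitted_delete():
    """Return (rows seen before commit, rows seen after commit)."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "demo.db")
        writer = sqlite3.connect(path)
        writer.execute("create table records (id integer primary key)")
        writer.executemany(
            "insert into records (id) values (?)", [(1,), (2,), (3,)]
        )
        writer.commit()

        # The DELETE opens an implicit transaction that is not yet committed.
        writer.execute("delete from records")
        before = count_rows(path)

        # Committing makes the delete visible to other connections.
        writer.commit()
        after = count_rows(path)
        writer.close()
        return before, after
```

With sqlite-utils, one commonly used workaround is to wrap the call in a `with db.conn:` block so the transaction is committed on exit; treat that as a suggestion to verify against the version in use, not as the fix adopted in the issue.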

| 801816980 | https://github.com/simonw/sqlite-utils/issues/246#issuecomment-801816980 | https://api.github.com/repos/simonw/sqlite-utils/issues/246 | MDEyOklzc3VlQ29tbWVudDgwMTgxNjk4MA== | polyrand 37962604 | 2021-03-18T10:40:32Z | 2021-03-18T10:43:04Z | NONE | I have found a similar problem, but I only when using that type of query (with

I thought I could build a query like the first one using this function:

And then I use the output of that function as the query parameter for the standard However, my use case is different because I'm the one "deciding" when to use a prefix search, not the end user. I also haven't done many tests, but maybe you found that useful. One thing I could think of is checking if the query has an This is just for prefix queries, I think having the escaping function is still useful for other use cases. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Escaping FTS search strings 831751367 | |

| 799479175 | https://github.com/simonw/sqlite-utils/issues/246#issuecomment-799479175 | https://api.github.com/repos/simonw/sqlite-utils/issues/246 | MDEyOklzc3VlQ29tbWVudDc5OTQ3OTE3NQ== | simonw 9599 | 2021-03-15T14:47:31Z | 2021-03-15T14:47:31Z | OWNER | This is a smart feature. I have something that does this in Datasette, extracting it out to https://github.com/simonw/datasette/blob/8e18c7943181f228ce5ebcea48deb59ce50bee1f/datasette/utils/__init__.py#L818-L829 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Escaping FTS search strings 831751367 | |
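The escaping idea discussed in these comments can be sketched as follows. This is not the Datasette function linked above; it is a simplified illustration that assumes FTS5 query syntax, where a double-quoted string is treated as a literal term and `"term"*` requests a prefix match. The function name `escape_fts_terms` and its `prefix` flag are hypothetical.

```python
def escape_fts_terms(query, prefix=False):
    """Quote every whitespace-separated token so FTS operators are inert.

    Embedded double quotes are doubled, which is how a literal quote is
    written inside a quoted FTS string. With prefix=True the last token
    gets a trailing * so the caller (not the end user) opts in to a
    prefix search.
    """
    tokens = query.split()
    quoted = ['"' + token.replace('"', '""') + '"' for token in tokens]
    if prefix and quoted:
        quoted[-1] += "*"
    return " ".join(quoted)
```

The result would be passed as a bound parameter to a `MATCH` clause, never interpolated into the SQL string.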

| 799066252 | https://github.com/simonw/datasette/issues/236#issuecomment-799066252 | https://api.github.com/repos/simonw/datasette/issues/236 | MDEyOklzc3VlQ29tbWVudDc5OTA2NjI1Mg== | simonw 9599 | 2021-03-15T03:34:52Z | 2021-03-15T03:34:52Z | OWNER | Yeah the Lambda Docker stuff is pretty odd - you still don't get to speak HTTP, you have to speak their custom event protocol instead. https://github.com/glassechidna/serverlessish looks interesting here - it adds a proxy inside the container which allows your existing HTTP Docker image to run within Docker-on-Lambda. I've not tried it out yet though. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

datasette publish lambda plugin 317001500 | |

| 799003172 | https://github.com/simonw/datasette/issues/236#issuecomment-799003172 | https://api.github.com/repos/simonw/datasette/issues/236 | MDEyOklzc3VlQ29tbWVudDc5OTAwMzE3Mg== | jacobian 21148 | 2021-03-14T23:42:57Z | 2021-03-14T23:42:57Z | CONTRIBUTOR | Oh, and the container image can be up to 10GB, so the EFS step might not be needed except for pretty big stuff. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

datasette publish lambda plugin 317001500 | |

| 799002993 | https://github.com/simonw/datasette/issues/236#issuecomment-799002993 | https://api.github.com/repos/simonw/datasette/issues/236 | MDEyOklzc3VlQ29tbWVudDc5OTAwMjk5Mw== | jacobian 21148 | 2021-03-14T23:41:51Z | 2021-03-14T23:41:51Z | CONTRIBUTOR | Now that Lambda supports Docker, this probably is a bit easier and may be able to build on top of the existing package command. There are weirdnesses in how the command actually gets invoked; the aws-lambda-python image shows a bit of that. So Datasette would probably need some sort of Lambda-specific entry point to make this work. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

datasette publish lambda plugin 317001500 | |

| 798913090 | https://github.com/simonw/datasette/pull/1260#issuecomment-798913090 | https://api.github.com/repos/simonw/datasette/issues/1260 | MDEyOklzc3VlQ29tbWVudDc5ODkxMzA5MA== | codecov[bot] 22429695 | 2021-03-14T14:01:30Z | 2021-03-14T14:01:30Z | NONE | Codecov Report

Coverage diff, main vs. #1260: coverage 91.51% → 91.51%. Impacted files: datasette/inspect.py. Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Fix: code quality issues 831163537 | |

| 798468572 | https://github.com/dogsheep/healthkit-to-sqlite/issues/14#issuecomment-798468572 | https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/14 | MDEyOklzc3VlQ29tbWVudDc5ODQ2ODU3Mg== | n8henrie 1234956 | 2021-03-13T14:47:31Z | 2021-03-13T14:47:31Z | NONE | Ok, new PR works. I'm not I still end up with a lot of activities that are missing an

I also end up with some unhappy characters (in the skipped events), such as: But it's successfully making it through the file, and the resulting db opens in datasette, so I'd call that progress. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

UNIQUE constraint failed: workouts.id 771608692 | |

| 798436026 | https://github.com/dogsheep/healthkit-to-sqlite/issues/14#issuecomment-798436026 | https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/14 | MDEyOklzc3VlQ29tbWVudDc5ODQzNjAyNg== | n8henrie 1234956 | 2021-03-13T14:23:16Z | 2021-03-13T14:23:16Z | NONE | This PR allows my import to succeed. It looks like some events don't have an If a record has neither of these, I changed it to just print the record (for debugging) and For some odd reason this ran fine at first, and now (after removing the generated db and trying again) I'm getting a different error (duplicate column name). Looks like it may have run when I had two successive runs without remembering to delete the db in between. Will try to refactor. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

UNIQUE constraint failed: workouts.id 771608692 | |

| 797827038 | https://github.com/simonw/datasette/issues/1259#issuecomment-797827038 | https://api.github.com/repos/simonw/datasette/issues/1259 | MDEyOklzc3VlQ29tbWVudDc5NzgyNzAzOA== | simonw 9599 | 2021-03-13T00:15:40Z | 2021-03-13T00:15:40Z | OWNER | If all of the facets were being calculated in a single query, I'd be willing to bump the facet time limit up to something a lot higher, maybe even a full second. There's a chance that could work amazingly well with a materialized CTE. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research using CTEs for faster facet counts 830567275 | |

| 797804869 | https://github.com/simonw/datasette/issues/1259#issuecomment-797804869 | https://api.github.com/repos/simonw/datasette/issues/1259 | MDEyOklzc3VlQ29tbWVudDc5NzgwNDg2OQ== | simonw 9599 | 2021-03-12T23:05:05Z | 2021-03-12T23:05:05Z | OWNER | I wonder if I could optimize facet suggestion in the same way? One challenge: the query time limit will apply to the full CTE query, not to the individual columns. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research using CTEs for faster facet counts 830567275 | |

| 797801075 | https://github.com/simonw/datasette/issues/1259#issuecomment-797801075 | https://api.github.com/repos/simonw/datasette/issues/1259 | MDEyOklzc3VlQ29tbWVudDc5NzgwMTA3NQ== | simonw 9599 | 2021-03-12T22:53:56Z | 2021-03-12T22:55:16Z | OWNER | OK, a better comparison: https://global-power-plants.datasettes.com/global-power-plants?sql=WITH+data+as+%28%0D%0A++select%0D%0A++++%0D%0A++from%0D%0A++++%5Bglobal-power-plants%5D%0D%0A%29%2C%0D%0Acountry_long+as+%28select+%0D%0A++%27country_long%27+as+col%2C+country_long+as+value%2C+count%28%29+as+c+from+data+group+by+country_long%0D%0A++order+by+c+desc+limit+31%0D%0A%29%2C%0D%0Aprimary_fuel+as+%28%0D%0Aselect%0D%0A++%27primary_fuel%27+as+col%2C+primary_fuel+as+value%2C+count%28%29+as+c+from+data+group+by+primary_fuel%0D%0A++order+by+c+desc+limit+31%0D%0A%29%2C%0D%0Aowner+as+%28%0D%0Aselect%0D%0A++%27owner%27+as+col%2C+owner+as+value%2C+count%28%29+as+c+from+data+group+by+owner%0D%0A++order+by+c+desc+limit+31%0D%0A%29%0D%0Aselect++from+primary_fuel+union+select++from+country_long%0D%0Aunion+select++from+owner+order+by+col%2C+c+desc calculates facets against three columns. It takes 78.5ms (and 34.5ms when I refreshed it, presumably after warming some SQLite caches of some sort). https://global-power-plants.datasettes.com/global-power-plants/global-power-plants?_facet=country_long&_facet=primary_fuel&_trace=1&_size=0 shows those facets with size=0 on the SQL query - and shows a SQL trace at the bottom of the page. The country_long facet query takes 45.36ms, owner takes 38.45ms, primary_fuel takes 49.04ms - so a total of 132.85ms. That's against an instance where https://global-power-plants.datasettes.com/-/versions reports SQLite 3.27.3 - so even on a SQLite version that doesn't materialize the CTEs there's a significant performance boost to doing all three facets in a single CTE query. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research using CTEs for faster facet counts 830567275 | |

| 797790017 | https://github.com/simonw/datasette/issues/1259#issuecomment-797790017 | https://api.github.com/repos/simonw/datasette/issues/1259 | MDEyOklzc3VlQ29tbWVudDc5Nzc5MDAxNw== | simonw 9599 | 2021-03-12T22:22:12Z | 2021-03-12T22:22:12Z | OWNER | https://sqlite.org/lang_with.html

It looks like this optimization is completely unavailable on SQLite prior to 3.35.0 (released 12th March 2021). But I could still rewrite the faceting to work in this way, using the exact same SQL - it would just be significantly faster on 3.35.0+ (assuming it's actually faster in practice - would need to benchmark). |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research using CTEs for faster facet counts 830567275 | |
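The single-query approach explored in these comments can be sketched with the standard-library `sqlite3` module. The table `plants`, its columns, and the sample rows are assumptions made up for illustration; only the overall shape (one shared `data` CTE, one CTE per faceted column, results combined with a union) follows the comments above. Whether this is faster in practice would still need benchmarking, as the thread notes.

```python
import sqlite3

# One CTE per facet column, all reading from a shared "data" CTE.
FACET_SQL = """
with data as (
  select * from plants
),
country as (
  select 'country' as col, country as value, count(*) as c
  from data group by country order by c desc limit 31
),
fuel as (
  select 'fuel' as col, fuel as value, count(*) as c
  from data group by fuel order by c desc limit 31
)
select * from country
union all
select * from fuel
order by col, c desc, value
"""


def facet_counts(conn):
    """Return (column, value, count) tuples for every facet in one query."""
    return conn.execute(FACET_SQL).fetchall()


def build_demo_db():
    """Create a tiny in-memory table to run the facet query against."""
    conn = sqlite3.connect(":memory:")
    conn.execute("create table plants (country text, fuel text)")
    conn.executemany(
        "insert into plants values (?, ?)",
        [("France", "Nuclear"), ("France", "Hydro"), ("Spain", "Hydro")],
    )
    return conn
```

As one of the comments points out, a design consequence is that any query time limit applies to the whole statement rather than to each column's facet.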

| 797159434 | https://github.com/simonw/datasette/issues/1193#issuecomment-797159434 | https://api.github.com/repos/simonw/datasette/issues/1193 | MDEyOklzc3VlQ29tbWVudDc5NzE1OTQzNA== | simonw 9599 | 2021-03-12T01:01:54Z | 2021-03-12T01:01:54Z | OWNER | DuckDB has a read-only mechanism: https://duckdb.org/docs/api/python

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research plugin hook for alternative database backends 787173276 | |

| 797159221 | https://github.com/simonw/datasette/issues/1250#issuecomment-797159221 | https://api.github.com/repos/simonw/datasette/issues/1250 | MDEyOklzc3VlQ29tbWVudDc5NzE1OTIyMQ== | simonw 9599 | 2021-03-12T01:01:17Z | 2021-03-12T01:01:17Z | OWNER | This is a duplicate of #1193. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: Plugin hook for alternative database connections 824067604 | |

| 797158641 | https://github.com/simonw/datasette/issues/670#issuecomment-797158641 | https://api.github.com/repos/simonw/datasette/issues/670 | MDEyOklzc3VlQ29tbWVudDc5NzE1ODY0MQ== | simonw 9599 | 2021-03-12T00:59:49Z | 2021-03-12T00:59:49Z | OWNER |

It looks like the answer to this is yes - I'll need users to set up read-only credentials. Here's a TIL about that: https://til.simonwillison.net/postgresql/read-only-postgresql-user |

{

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} |

Prototoype for Datasette on PostgreSQL 564833696 | |

| 796854370 | https://github.com/simonw/datasette/pull/1211#issuecomment-796854370 | https://api.github.com/repos/simonw/datasette/issues/1211 | MDEyOklzc3VlQ29tbWVudDc5Njg1NDM3MA== | simonw 9599 | 2021-03-11T16:15:29Z | 2021-03-11T16:15:29Z | OWNER | Thanks very much for this - it's really comprehensive. I need to bake some of these patterns into my coding habits better! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Use context manager instead of plain open 797649915 | |

| 795950636 | https://github.com/simonw/datasette/issues/838#issuecomment-795950636 | https://api.github.com/repos/simonw/datasette/issues/838 | MDEyOklzc3VlQ29tbWVudDc5NTk1MDYzNg== | tsibley 79913 | 2021-03-10T19:24:13Z | 2021-03-10T19:24:13Z | NONE | I think this could be solved by one of:

Option 1 seems like the easiest to me, if you can get away with never having to generate an absolute URL. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Incorrect URLs when served behind a proxy with base_url set 637395097 | |

| 795939998 | https://github.com/simonw/datasette/issues/838#issuecomment-795939998 | https://api.github.com/repos/simonw/datasette/issues/838 | MDEyOklzc3VlQ29tbWVudDc5NTkzOTk5OA== | tsibley 79913 | 2021-03-10T19:16:55Z | 2021-03-10T19:16:55Z | NONE | Nod. The problem with the tests is that they're ignoring the origin (hostname, port) of links. In a reverse proxy situation, the frontend request origin is different than the backend request origin. The problem is Datasette generates links with the backend request origin. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Incorrect URLs when served behind a proxy with base_url set 637395097 | |
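The origin mismatch described above can be made concrete with a small sketch. The helper names and the example origin are hypothetical and are not Datasette's internal API; the point is only that a link built from the path plus `base_url` stays valid behind a reverse proxy, while a link that embeds the backend's own origin does not.

```python
def path_with_base_url(base_url, path):
    """Build an origin-free link: the base_url prefix plus the path."""
    return base_url.rstrip("/") + "/" + path.lstrip("/")


def absolute_from_backend(backend_origin, base_url, path):
    """Build an absolute link from the origin the backend sees.

    Behind a reverse proxy this origin is the backend's host and port,
    not the one the browser used, so the link points at the wrong place.
    """
    return backend_origin.rstrip("/") + path_with_base_url(base_url, path)
```

For example, with a backend listening on `http://127.0.0.1:8001` and `base_url` set to `/prefix/`, the first helper yields `/prefix/db/table.json`, which the browser resolves against the public origin, while the second leaks the backend address into the page.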

| 795918377 | https://github.com/simonw/datasette/issues/838#issuecomment-795918377 | https://api.github.com/repos/simonw/datasette/issues/838 | MDEyOklzc3VlQ29tbWVudDc5NTkxODM3Nw== | simonw 9599 | 2021-03-10T19:01:48Z | 2021-03-10T19:01:48Z | OWNER | The biggest challenge here, I think, is to replicate the exact situation where this happens in a Python unit test. The fix should be easy once we have a test in place. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Incorrect URLs when served behind a proxy with base_url set 637395097 | |

| 795895436 | https://github.com/simonw/datasette/issues/838#issuecomment-795895436 | https://api.github.com/repos/simonw/datasette/issues/838 | MDEyOklzc3VlQ29tbWVudDc5NTg5NTQzNg== | simonw 9599 | 2021-03-10T18:44:46Z | 2021-03-10T18:44:57Z | OWNER | Let's reopen this. |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Incorrect URLs when served behind a proxy with base_url set 637395097 | |

| 795893813 | https://github.com/simonw/datasette/issues/838#issuecomment-795893813 | https://api.github.com/repos/simonw/datasette/issues/838 | MDEyOklzc3VlQ29tbWVudDc5NTg5MzgxMw== | tsibley 79913 | 2021-03-10T18:43:39Z | 2021-03-10T18:43:39Z | NONE | @simonw Unfortunately this issue as I reported it is not actually solved in version 0.55. Every link which is returned by the What I wrote originally still stands:

Would you prefer to re-open this issue or have me create a new one? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Incorrect URLs when served behind a proxy with base_url set 637395097 | |

| 795870524 | https://github.com/simonw/datasette/pull/1254#issuecomment-795870524 | https://api.github.com/repos/simonw/datasette/issues/1254 | MDEyOklzc3VlQ29tbWVudDc5NTg3MDUyNA== | simonw 9599 | 2021-03-10T18:27:45Z | 2021-03-10T18:27:45Z | OWNER | What other breaks did you spot? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update Docker Spatialite version to 5.0.1 + add support for Spatialite topology functions 826613352 | |

| 795869144 | https://github.com/simonw/datasette/pull/1256#issuecomment-795869144 | https://api.github.com/repos/simonw/datasette/issues/1256 | MDEyOklzc3VlQ29tbWVudDc5NTg2OTE0NA== | simonw 9599 | 2021-03-10T18:26:46Z | 2021-03-10T18:26:46Z | OWNER | Thanks! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Minor type in IP adress 827341657 | |

| 795112935 | https://github.com/simonw/datasette/pull/1256#issuecomment-795112935 | https://api.github.com/repos/simonw/datasette/issues/1256 | MDEyOklzc3VlQ29tbWVudDc5NTExMjkzNQ== | JBPressac 6371750 | 2021-03-10T08:59:45Z | 2021-03-10T08:59:45Z | CONTRIBUTOR | Sorry, I meant "minor typo" not "minor type". |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Minor type in IP adress 827341657 | |

| 795085921 | https://github.com/simonw/datasette/pull/1256#issuecomment-795085921 | https://api.github.com/repos/simonw/datasette/issues/1256 | MDEyOklzc3VlQ29tbWVudDc5NTA4NTkyMQ== | codecov[bot] 22429695 | 2021-03-10T08:35:17Z | 2021-03-10T08:35:17Z | NONE | Codecov Report

Coverage diff, main vs. #1256: coverage 91.56% → 91.56%. Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Minor type in IP adress 827341657 | |

| 794518438 | https://github.com/simonw/datasette/pull/1254#issuecomment-794518438 | https://api.github.com/repos/simonw/datasette/issues/1254 | MDEyOklzc3VlQ29tbWVudDc5NDUxODQzOA== | durkie 3200608 | 2021-03-09T22:04:23Z | 2021-03-09T22:04:23Z | NONE | Dang, you're absolutely right. Spatialite 5.0 had been working fine for a plugin I was developing, but it apparently is broken in several other ways. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update Docker Spatialite version to 5.0.1 + add support for Spatialite topology functions 826613352 | |

| 794441034 | https://github.com/simonw/datasette/pull/1254#issuecomment-794441034 | https://api.github.com/repos/simonw/datasette/issues/1254 | MDEyOklzc3VlQ29tbWVudDc5NDQ0MTAzNA== | codecov[bot] 22429695 | 2021-03-09T20:54:18Z | 2021-03-09T21:12:15Z | NONE | Codecov Report

Coverage diff, main vs. #1254: coverage 91.56% → 91.51% (-0.05%). Impacted files: datasette/database.py. Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update Docker Spatialite version to 5.0.1 + add support for Spatialite topology functions 826613352 | |

| 794443710 | https://github.com/simonw/datasette/pull/1254#issuecomment-794443710 | https://api.github.com/repos/simonw/datasette/issues/1254 | MDEyOklzc3VlQ29tbWVudDc5NDQ0MzcxMA== | durkie 3200608 | 2021-03-09T20:56:45Z | 2021-03-09T20:56:45Z | NONE | Oh wow I didn't even see that you had opened an issue about this so recently. I'll check on |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update Docker Spatialite version to 5.0.1 + add support for Spatialite topology functions 826613352 | |

| 794439632 | https://github.com/simonw/datasette/pull/1254#issuecomment-794439632 | https://api.github.com/repos/simonw/datasette/issues/1254 | MDEyOklzc3VlQ29tbWVudDc5NDQzOTYzMg== | simonw 9599 | 2021-03-09T20:53:02Z | 2021-03-09T20:53:02Z | OWNER | Thanks for catching that documentation update! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update Docker Spatialite version to 5.0.1 + add support for Spatialite topology functions 826613352 | |

| 794437715 | https://github.com/simonw/datasette/pull/1254#issuecomment-794437715 | https://api.github.com/repos/simonw/datasette/issues/1254 | MDEyOklzc3VlQ29tbWVudDc5NDQzNzcxNQ== | simonw 9599 | 2021-03-09T20:51:19Z | 2021-03-09T20:51:19Z | OWNER | Did you see my note on https://github.com/simonw/datasette/issues/1249#issuecomment-792384382 about a weird issue I was having with the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Update Docker Spatialite version to 5.0.1 + add support for Spatialite topology functions 826613352 | |

| 793308483 | https://github.com/simonw/datasette/pull/1252#issuecomment-793308483 | https://api.github.com/repos/simonw/datasette/issues/1252 | MDEyOklzc3VlQ29tbWVudDc5MzMwODQ4Mw== | codecov[bot] 22429695 | 2021-03-09T03:06:10Z | 2021-03-09T03:06:10Z | NONE | Codecov Report

Coverage diff, main vs. #1252: coverage 91.56% → 91.51% (-0.05%). Impacted files: datasette/database.py. Continue to review full report at Codecov.

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Add back styling to lists within table cells (fixes #1141) 825217564 | |

| 792386484 | https://github.com/simonw/datasette/issues/1250#issuecomment-792386484 | https://api.github.com/repos/simonw/datasette/issues/1250 | MDEyOklzc3VlQ29tbWVudDc5MjM4NjQ4NA== | simonw 9599 | 2021-03-08T00:29:06Z | 2021-03-08T00:29:06Z | OWNER | DuckDB has a read-only mechanism: https://duckdb.org/docs/api/python

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Research: Plugin hook for alternative database connections 824067604 | |

| 792385274 | https://github.com/simonw/datasette/issues/1248#issuecomment-792385274 | https://api.github.com/repos/simonw/datasette/issues/1248 | MDEyOklzc3VlQ29tbWVudDc5MjM4NTI3NA== | simonw 9599 | 2021-03-08T00:25:10Z | 2021-03-08T00:25:10Z | OWNER | It's not possible yet, unfortunately. This came up on the forums recently: https://github.com/simonw/datasette/discussions/968 I'm leaning further towards making the database connection layer itself work via a plugin hook, which would open up the possibility of supporting DuckDB and other databases as well. I've not committed to doing this yet though. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

duckdb database (very low performance in SQLite) 823035080 | |

| 792384854 | https://github.com/simonw/datasette/issues/1249#issuecomment-792384854 | https://api.github.com/repos/simonw/datasette/issues/1249 | MDEyOklzc3VlQ29tbWVudDc5MjM4NDg1NA== | simonw 9599 | 2021-03-08T00:23:38Z | 2021-03-08T00:23:38Z | OWNER | One reason to prioritize this issue: Homebrew upgraded to SpatiaLite 5.0 recently https://formulae.brew.sh/formula/spatialite-tools and as a result SpatiaLite databases created on my laptop don't appear to be compatible with Datasette when published using |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Updated Dockerfile with SpatiaLite version 5.0 824064069 | |

| 792384382 | https://github.com/simonw/datasette/issues/1249#issuecomment-792384382 | https://api.github.com/repos/simonw/datasette/issues/1249 | MDEyOklzc3VlQ29tbWVudDc5MjM4NDM4Mg== | simonw 9599 | 2021-03-08T00:22:02Z | 2021-03-08T00:22:02Z | OWNER | I tried this patch against

```diff
 # Setup build dependencies
 RUN apt update \
-&& apt install -y python3-dev build-essential wget libxml2-dev libproj-dev libgeos-dev libsqlite3-dev zlib1g-dev pkg-config git \
- && apt clean
+    && apt install -y python3-dev build-essential wget libxml2-dev libproj-dev \
+    libminizip-dev libgeos-dev libsqlite3-dev zlib1g-dev pkg-config git \
+    && apt clean
-
-RUN wget "https://www.sqlite.org/2020/sqlite-autoconf-3310100.tar.gz" && tar xzf sqlite-autoconf-3310100.tar.gz \
-    && cd sqlite-autoconf-3310100 && ./configure --disable-static --enable-fts5 --enable-json1 CFLAGS="-g -O2 -DSQLITE_ENABLE_FTS3=1 -DSQLITE_ENABLE_FTS3_PARENTHESIS -DSQLITE_ENABLE_FTS4=1 -DSQLITE_ENABLE_RTREE=1 -DSQLITE_ENABLE_JSON1" \
+RUN wget "https://www.sqlite.org/2021/sqlite-autoconf-3340100.tar.gz" && tar xzf sqlite-autoconf-3340100.tar.gz \
+    && cd sqlite-autoconf-3340100 && ./configure --disable-static --enable-fts5 --enable-json1 \
+    CFLAGS="-g -O2 -DSQLITE_ENABLE_FTS3=1 -DSQLITE_ENABLE_FTS3_PARENTHESIS -DSQLITE_ENABLE_FTS4=1 -DSQLITE_ENABLE_RTREE=1 -DSQLITE_ENABLE_JSON1" \
     && make && make install
-RUN wget "http://www.gaia-gis.it/gaia-sins/freexl-sources/freexl-1.0.5.tar.gz" && tar zxf freexl-1.0.5.tar.gz \
-    && cd freexl-1.0.5 && ./configure && make && make install
+RUN wget "http://www.gaia-gis.it/gaia-sins/freexl-1.0.6.tar.gz" && tar zxf freexl-1.0.6.tar.gz \
+    && cd freexl-1.0.6 && ./configure && make && make install
-RUN wget "http://www.gaia-gis.it/gaia-sins/libspatialite-sources/libspatialite-4.4.0-RC0.tar.gz" && tar zxf libspatialite-4.4.0-RC0.tar.gz \
-    && cd libspatialite-4.4.0-RC0 && ./configure && make && make install
+RUN wget "http://www.gaia-gis.it/gaia-sins/libspatialite-5.0.1.tar.gz" && tar zxf libspatialite-5.0.1.tar.gz \
+    && cd libspatialite-5.0.1 && ./configure --disable-rttopo && make && make install
 RUN wget "http://www.gaia-gis.it/gaia-sins/readosm-sources/readosm-1.1.0.tar.gz" && tar zxf readosm-1.1.0.tar.gz && cd readosm-1.1.0 && ./configure && make && make install
-RUN wget "http://www.gaia-gis.it/gaia-sins/spatialite-tools-sources/spatialite-tools-4.4.0-RC0.tar.gz" && tar zxf spatialite-tools-4.4.0-RC0.tar.gz \
-    && cd spatialite-tools-4.4.0-RC0 && ./configure && make && make install
+RUN wget "http://www.gaia-gis.it/gaia-sins/spatialite-tools-5.0.0.tar.gz" && tar zxf spatialite-tools-5.0.0.tar.gz \
+    && cd spatialite-tools-5.0.0 && ./configure --disable-rttopo && make && make install
 # Add local code to the image instead of fetching from pypi.
@@ -27,7 +28,7 @@ COPY . /datasette
 RUN pip install /datasette
-FROM python:3.7.10-slim-stretch
+FROM python:3.9.2-slim-buster
 # Copy python dependencies and spatialite libraries
 COPY --from=build /usr/local/lib/ /usr/local/lib/
```

I had to use This works, sort of... I'm getting a weird issue where the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Updated Dockerfile with SpatiaLite version 5.0 824064069 | |

| 792383956 | https://github.com/simonw/datasette/issues/1249#issuecomment-792383956 | https://api.github.com/repos/simonw/datasette/issues/1249 | MDEyOklzc3VlQ29tbWVudDc5MjM4Mzk1Ng== | simonw 9599 | 2021-03-08T00:20:09Z | 2021-03-08T00:20:09Z | OWNER | Worth noting that the Docker image used by https://github.com/simonw/datasette/blob/d0fd833b8cdd97e1b91d0f97a69b494895d82bee/datasette/utils/__init__.py#L349-L353 Where the apt extras for SpatiaLite are: https://github.com/simonw/datasette/blob/d0fd833b8cdd97e1b91d0f97a69b494895d82bee/datasette/utils/__init__.py#L344-L345

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Updated Dockerfile with SpatiaLite version 5.0 824064069 | |

| 792308036 | https://github.com/simonw/datasette/issues/858#issuecomment-792308036 | https://api.github.com/repos/simonw/datasette/issues/858 | MDEyOklzc3VlQ29tbWVudDc5MjMwODAzNg== | robroc 1219001 | 2021-03-07T16:41:54Z | 2021-03-07T16:41:54Z | NONE | Apologies if I sound dense but I don't see where you would pass 'shell=True'. I'm using the CLI installed via pip. On Sun., Mar. 7, 2021, 2:15 a.m. David Smith, notifications@github.com wrote:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

publish heroku does not work on Windows 10 642388564 | |

| 792233255 | https://github.com/simonw/datasette/pull/1223#issuecomment-792233255 | https://api.github.com/repos/simonw/datasette/issues/1223 | MDEyOklzc3VlQ29tbWVudDc5MjIzMzI1NQ== | simonw 9599 | 2021-03-07T07:41:01Z | 2021-03-07T07:41:01Z | OWNER | This is fantastic, thanks so much for tracking this down. |

{

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} |

Add compile option to Dockerfile to fix failing test (fixes #696) 806918878 | |

| 792230560 | https://github.com/simonw/datasette/issues/858#issuecomment-792230560 | https://api.github.com/repos/simonw/datasette/issues/858 | MDEyOklzc3VlQ29tbWVudDc5MjIzMDU2MA== | smithdc1 39445562 | 2021-03-07T07:14:58Z | 2021-03-07T07:14:58Z | NONE | To get it to work I had to:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

publish heroku does not work on Windows 10 642388564 | |

| 792129022 | https://github.com/simonw/datasette/issues/858#issuecomment-792129022 | https://api.github.com/repos/simonw/datasette/issues/858 | MDEyOklzc3VlQ29tbWVudDc5MjEyOTAyMg== | robroc 1219001 | 2021-03-07T00:23:34Z | 2021-03-07T00:23:34Z | NONE | @smithdc1 Can you tell us what you did to get it to publish in Windows? What commands did you pass? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

publish heroku does not work on Windows 10 642388564 | |

| 791509910 | https://github.com/simonw/datasette/issues/766#issuecomment-791509910 | https://api.github.com/repos/simonw/datasette/issues/766 | MDEyOklzc3VlQ29tbWVudDc5MTUwOTkxMA== | JBPressac 6371750 | 2021-03-05T15:57:35Z | 2021-03-05T16:35:21Z | CONTRIBUTOR | Hello, I have the same wildcard search problem with an instance of Datasette. http://crbc-dataset.huma-num.fr/inventaires/fonds_auguste_dupouy_1872_1967?_search=gwerz&_sort=rowid is OK but http://crbc-dataset.huma-num.fr/inventaires/fonds_auguste_dupouy_1872_1967?_search=gwe* is not (FTS is activated on "Reference" "IntituleAnalyse" "NomDuProducteur" "PresentationDuContenu" "Notes"). Note that a SQL query like the one below, launched directly from SQLite in the server's shell, retrieves results.

Thanks, |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Enable wildcard-searches by default 617323873 | |

| 791530093 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-791530093 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MTUzMDA5Mw== | UtahDave 306240 | 2021-03-05T16:28:07Z | 2021-03-05T16:28:07Z | NONE |

@maxhawkins a limitation of the Python mbox module is that it loads the entire mbox into memory. I did find another approach to this problem that didn't use the builtin Python mbox module and created a generator so that it didn't have to load the whole mbox into memory. I was hoping to use standard library modules, but this might be a good reason to investigate that approach a bit more. My worry is making sure a custom processor handles all the ins and outs of the mbox format correctly. Hm. As I'm writing this, I thought of something. I think I can parse each message one at a time, and then use an mbox function to load each message using the Python mbox module. That way the mbox module can still deal with the specifics of the mbox format, but I can use a generator. I'll give that a try. Thanks for the feedback @maxhawkins and @simonw. @simonw can we hold off on merging this until I can test this new approach? |

{

"total_count": 3,

"+1": 3,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |
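
Editor's note: the idea in the comment above (split the file into messages one at a time, then hand each message to the standard library for parsing) could look roughly like the sketch below. This is not the code from the PR. The function name `iter_mbox_messages` is hypothetical, and the splitting rule is an assumption: it treats every line beginning with `From ` as the start of a new message, which only holds when body lines are escaped as `>From `, as mbox writers normally do.

```python
import mailbox
from typing import Iterator


def iter_mbox_messages(path: str) -> Iterator[mailbox.mboxMessage]:
    """Yield messages from an mbox file one at a time (hypothetical helper).

    Only the lines of the current message are held in memory.
    Assumption: a line starting with b"From " always begins a new message.
    """
    buffer = []
    with open(path, "rb") as f:
        for line in f:
            if line.startswith(b"From ") and buffer:
                # The stdlib parses the raw bytes, including the envelope line.
                yield mailbox.mboxMessage(b"".join(buffer))
                buffer = []
            buffer.append(line)
    if buffer:
        yield mailbox.mboxMessage(b"".join(buffer))
```

Because this is a generator, a caller can feed it straight into a batched insert without ever building a list of all messages.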

| 791089881 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-791089881 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MTA4OTg4MQ== | maxhawkins 28565 | 2021-03-05T02:03:19Z | 2021-03-05T02:03:19Z | NONE | I just tried to run this on a small VPS instance with 2GB of memory and it crashed out of memory while processing a 12GB mbox from Takeout. Is it possible to stream the emails to sqlite instead of loading it all into memory and upserting at once? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |
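
Editor's note: one way to "stream the emails to sqlite", as asked above, is to write rows in fixed-size batches rather than collecting everything first. The sketch below uses only the standard library `sqlite3` module. The table name `emails`, its columns, and the function name `upsert_in_batches` are illustrative assumptions, not the schema or API used by google-takeout-to-sqlite.

```python
import sqlite3
from itertools import islice
from typing import Iterable, Tuple


def upsert_in_batches(
    conn: sqlite3.Connection,
    rows: Iterable[Tuple[str, str]],
    batch_size: int = 1000,
) -> int:
    """Insert-or-replace (message_id, subject) rows in batches.

    Memory use is bounded by batch_size, not by the total number of rows.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS emails "
        "(message_id TEXT PRIMARY KEY, subject TEXT)"
    )
    iterator = iter(rows)
    total = 0
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            break
        with conn:  # one transaction per batch
            conn.executemany(
                "INSERT OR REPLACE INTO emails (message_id, subject) "
                "VALUES (?, ?)",
                batch,
            )
        total += len(batch)
    return total
```

Committing once per batch keeps transactions small while avoiding the cost of a commit per row.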

| 791053721 | https://github.com/dogsheep/dogsheep-photos/issues/32#issuecomment-791053721 | https://api.github.com/repos/dogsheep/dogsheep-photos/issues/32 | MDEyOklzc3VlQ29tbWVudDc5MTA1MzcyMQ== | dsisnero 6213 | 2021-03-05T00:31:27Z | 2021-03-05T00:31:27Z | NONE | I am getting the same thing for US West (N. California) us-west-1 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

KeyError: 'Contents' on running upload 803333769 | |

| 790934616 | https://github.com/dogsheep/google-takeout-to-sqlite/issues/4#issuecomment-790934616 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/4 | MDEyOklzc3VlQ29tbWVudDc5MDkzNDYxNg== | Btibert3 203343 | 2021-03-04T20:54:44Z | 2021-03-04T20:54:44Z | NONE | Sorry for the delay, I got sidetracked after class last night. I am getting the following error:

```
/content# google-takeout-to-sqlite mbox takeout.db Takeout/Mail/gmail.mbox
Usage: google-takeout-to-sqlite [OPTIONS] COMMAND [ARGS]...
Try 'google-takeout-to-sqlite --help' for help.
Error: No such command 'mbox'.
```

On the box, I installed with pip after cloning: https://github.com/UtahDave/google-takeout-to-sqlite.git |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Feature Request: Gmail 778380836 | |

| 790857004 | https://github.com/simonw/datasette/issues/1238#issuecomment-790857004 | https://api.github.com/repos/simonw/datasette/issues/1238 | MDEyOklzc3VlQ29tbWVudDc5MDg1NzAwNA== | tsibley 79913 | 2021-03-04T19:06:55Z | 2021-03-04T19:06:55Z | NONE | @rgieseke Ah, that's super helpful. Thank you for the workaround for now! |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Custom pages don't work with base_url setting 813899472 | |

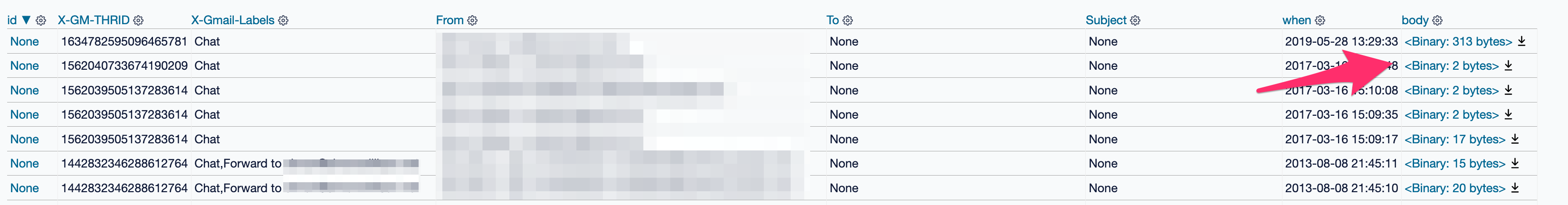

| 790695126 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790695126 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDY5NTEyNg== | simonw 9599 | 2021-03-04T15:20:42Z | 2021-03-04T15:20:42Z | MEMBER | I'm not sure why but my most recent import, when displayed in Datasette, looks like this:

Sorting by |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790693674 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790693674 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDY5MzY3NA== | simonw 9599 | 2021-03-04T15:18:36Z | 2021-03-04T15:18:36Z | MEMBER | I imported my 10GB mbox with 750,000 emails in it, ran this tool (with a hacked fix for the blob column problem) - and now a search that returns 92 results takes 25.37ms! This is fantastic. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790669767 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790669767 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDY2OTc2Nw== | simonw 9599 | 2021-03-04T14:46:06Z | 2021-03-04T14:46:06Z | MEMBER | Solution could be to pre-process that string by splitting on |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790668263 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790668263 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDY2ODI2Mw== | simonw 9599 | 2021-03-04T14:43:58Z | 2021-03-04T14:43:58Z | MEMBER | I added this code to output a message ID on errors:

This was for the following error:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790391711 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790391711 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM5MTcxMQ== | UtahDave 306240 | 2021-03-04T07:36:24Z | 2021-03-04T07:36:24Z | NONE |

Ah, that's good to know. I think explicitly creating the tables will be a great improvement. I'll add that. Also, I noticed after I opened this PR that the Thanks for the feedback. I should have time tomorrow to put together some improvements. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790389335 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790389335 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM4OTMzNQ== | UtahDave 306240 | 2021-03-04T07:32:04Z | 2021-03-04T07:32:04Z | NONE |

The wait is from python loading the mbox file. This happens regardless if you're getting the length of the mbox. The mbox module is on the slow side. It is possible to do one's own parsing of the mbox, but I kind of wanted to avoid doing that. |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790384087 | https://github.com/dogsheep/google-takeout-to-sqlite/issues/6#issuecomment-790384087 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/6 | MDEyOklzc3VlQ29tbWVudDc5MDM4NDA4Nw== | simonw 9599 | 2021-03-04T07:22:51Z | 2021-03-04T07:22:51Z | MEMBER | #3 also mentions the conflicting version with other tools. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Upgrade to latest sqlite-utils 821841046 | |

| 790380839 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790380839 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM4MDgzOQ== | simonw 9599 | 2021-03-04T07:17:05Z | 2021-03-04T07:17:05Z | MEMBER | Looks like you're doing this:

I imagine the reason the column is a |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790379629 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790379629 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM3OTYyOQ== | simonw 9599 | 2021-03-04T07:14:41Z | 2021-03-04T07:14:41Z | MEMBER | Confirmed: removing the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790378658 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790378658 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM3ODY1OA== | simonw 9599 | 2021-03-04T07:12:48Z | 2021-03-04T07:12:48Z | MEMBER | It looks like the

If I

It would be great if we could store the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790373024 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790373024 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM3MzAyNA== | simonw 9599 | 2021-03-04T07:01:58Z | 2021-03-04T07:04:06Z | MEMBER | I got 9 warnings that look like this:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790372621 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790372621 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM3MjYyMQ== | simonw 9599 | 2021-03-04T07:01:18Z | 2021-03-04T07:01:18Z | MEMBER | I'm not sure if it would work, but there is an alternative pattern for showing a progress bar against a really large file that I've used in https://github.com/dogsheep/healthkit-to-sqlite/blob/3eb2b06bfe3b4faaf10e9cf9dfcb28e3d16c14ff/healthkit_to_sqlite/cli.py#L24-L57 and https://github.com/dogsheep/healthkit-to-sqlite/blob/3eb2b06bfe3b4faaf10e9cf9dfcb28e3d16c14ff/healthkit_to_sqlite/utils.py#L4-L19 (the It can be a bit of a convoluted pattern, and I'm not at all sure it would work for |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |
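
Editor's note: the alternative progress pattern mentioned above avoids counting messages up front by measuring progress in bytes read against the file size, which is known immediately. The sketch below illustrates that general idea only. It is not a copy of the linked healthkit-to-sqlite code, and the class name `ProgressReader` and its callback signature are assumptions.

```python
import io
from typing import BinaryIO, Callable


class ProgressReader:
    """Wrap a binary file object and report how many bytes each read returned.

    A caller can use os.path.getsize(path) as the progress bar total and
    pass the bar's update method as on_progress.
    """

    def __init__(self, fileobj: BinaryIO, on_progress: Callable[[int], None]):
        self._fileobj = fileobj
        self._on_progress = on_progress

    def read(self, size: int = -1) -> bytes:
        data = self._fileobj.read(size)
        self._on_progress(len(data))
        return data


# Usage with an in-memory file standing in for a large export:
seen = []
reader = ProgressReader(io.BytesIO(b"x" * 10), seen.append)
while reader.read(4):
    pass
```

Whether this fits the `mailbox` module is a separate question, as the comment above says, since that module opens the file itself.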

| 790370485 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790370485 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM3MDQ4NQ== | simonw 9599 | 2021-03-04T06:57:25Z | 2021-03-04T06:57:48Z | MEMBER | The command takes quite a while to start running, presumably because this line causes it to have to scan the WHOLE file in order to generate a count: I'm fine with waiting though. It's not like this is a command people run every day - and without that count we can't show a progress bar, which seems pretty important for a process that takes this long. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790369076 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790369076 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDM2OTA3Ng== | simonw 9599 | 2021-03-04T06:54:46Z | 2021-03-04T06:54:46Z | MEMBER | The Rich-powered progress bar is pretty:

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790312268 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-790312268 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc5MDMxMjI2OA== | simonw 9599 | 2021-03-04T05:48:16Z | 2021-03-04T05:48:16Z | MEMBER | Wow, my mbox is a 10.35 GB download! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 790311215 | https://github.com/simonw/datasette/pull/1243#issuecomment-790311215 | https://api.github.com/repos/simonw/datasette/issues/1243 | MDEyOklzc3VlQ29tbWVudDc5MDMxMTIxNQ== | simonw 9599 | 2021-03-04T05:45:57Z | 2021-03-04T05:45:57Z | OWNER | Thanks! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

fix small typo 815955014 | |

| 790257263 | https://github.com/simonw/datasette/issues/268#issuecomment-790257263 | https://api.github.com/repos/simonw/datasette/issues/268 | MDEyOklzc3VlQ29tbWVudDc5MDI1NzI2Mw== | mhalle 649467 | 2021-03-04T03:20:23Z | 2021-03-04T03:20:23Z | NONE | It's kind of an ugly hack, but you can try out what using the fts5 table as an actual datasette-accessible table looks like without changing any datasette code by creating yet another view on top of the fts5 table:

That's now visible from datasette, just like any other view, but you can use This is only good as a proof of concept because you're inefficiently going from view -> fts5 external content table -> view -> data table. However, it does show it works. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Mechanism for ranking results from SQLite full-text search 323718842 | |

| 790198930 | https://github.com/dogsheep/google-takeout-to-sqlite/issues/4#issuecomment-790198930 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/4 | MDEyOklzc3VlQ29tbWVudDc5MDE5ODkzMA== | Btibert3 203343 | 2021-03-04T00:58:40Z | 2021-03-04T00:58:40Z | NONE | I am just seeing this sorry, yes! I will kick the tires later on tonight. My apologies for the delay. |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Feature Request: Gmail 778380836 | |

| 789680230 | https://github.com/simonw/datasette/issues/283#issuecomment-789680230 | https://api.github.com/repos/simonw/datasette/issues/283 | MDEyOklzc3VlQ29tbWVudDc4OTY4MDIzMA== | justinpinkney 605492 | 2021-03-03T12:28:42Z | 2021-03-03T12:28:42Z | NONE | One note on using this pragma: I got an error on starting datasette. I traced this to an older version of sqlite3 (3.14.2); upgrading to a newer version (3.34.2) fixed the issue. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Support cross-database joins 325958506 | |

| 789409126 | https://github.com/simonw/datasette/issues/268#issuecomment-789409126 | https://api.github.com/repos/simonw/datasette/issues/268 | MDEyOklzc3VlQ29tbWVudDc4OTQwOTEyNg== | mhalle 649467 | 2021-03-03T03:57:15Z | 2021-03-03T03:58:40Z | NONE | In FTS5, I think doing an FTS search is actually much easier than doing a join against the main table like datasette does now. In fact, FTS5 external content tables provide a transparent interface back to the original table or view. Here's what I'm currently doing:

* build a view that joins whatever tables I want and rename the columns to non-joiny names (e.g, Unfortunately, datasette doesn't currently seem happy being coerced into doing a real query on an fts5 table. This works:

But this doesn't work in the datasette SQL query interface:

For what datasette is doing right now, I think you could just use contentless fts5 tables ( I guess if you want to follow this suggestion, you'd need a somewhat different code path for fts5. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Mechanism for ranking results from SQLite full-text search 323718842 | |
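
Editor's note: the SQL from the comment above did not survive in this export, so the following is an independent, minimal sketch of an FTS5 external content table, not a reconstruction of the commenter's queries. It assumes the SQLite library behind Python's `sqlite3` module was compiled with FTS5. The table names `docs` and `docs_fts` and the sample rows are made up, and a plain table stands in for the view described in the comment.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE docs (id INTEGER PRIMARY KEY, title TEXT, body TEXT);
    INSERT INTO docs (title, body) VALUES
        ('Gwerz collection', 'Breton ballads'),
        ('Letters', 'Correspondence');
    -- External content: the index stores no copy of the text and reads
    -- column values back from docs using rowid = docs.id.
    CREATE VIRTUAL TABLE docs_fts USING fts5(
        title, body, content='docs', content_rowid='id'
    );
    INSERT INTO docs_fts (rowid, title, body)
        SELECT id, title, body FROM docs;
    """
)


def search(conn: sqlite3.Connection, query: str) -> list:
    """Run an FTS5 MATCH query, best-ranked rows first."""
    return conn.execute(
        "SELECT rowid, title FROM docs_fts WHERE docs_fts MATCH ? ORDER BY rank",
        (query,),
    ).fetchall()
```

A prefix query such as `search(conn, "gwe*")` goes through the same `MATCH` path, and `ORDER BY rank` uses FTS5's built-in relevance ordering.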

| 789186458 | https://github.com/simonw/datasette/issues/1238#issuecomment-789186458 | https://api.github.com/repos/simonw/datasette/issues/1238 | MDEyOklzc3VlQ29tbWVudDc4OTE4NjQ1OA== | rgieseke 198537 | 2021-03-02T20:19:30Z | 2021-03-02T20:19:30Z | CONTRIBUTOR | A custom |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Custom pages don't work with base_url setting 813899472 | |

| 787616446 | https://github.com/simonw/datasette/issues/1247#issuecomment-787616446 | https://api.github.com/repos/simonw/datasette/issues/1247 | MDEyOklzc3VlQ29tbWVudDc4NzYxNjQ0Ng== | simonw 9599 | 2021-03-01T03:50:37Z | 2021-03-01T03:50:37Z | OWNER | I like the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

datasette.add_memory_database() method 818430405 | |

| 787616158 | https://github.com/simonw/datasette/issues/1247#issuecomment-787616158 | https://api.github.com/repos/simonw/datasette/issues/1247 | MDEyOklzc3VlQ29tbWVudDc4NzYxNjE1OA== | simonw 9599 | 2021-03-01T03:49:27Z | 2021-03-01T03:49:27Z | OWNER | A couple of options:

```python
datasette.add_memory_database("test_json_array")
# or make that first argument to add_database() optional and support:
datasette.add_database(memory_name="test_json_array")
``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

datasette.add_memory_database() method 818430405 | |

| 787611153 | https://github.com/simonw/datasette/issues/1246#issuecomment-787611153 | https://api.github.com/repos/simonw/datasette/issues/1246 | MDEyOklzc3VlQ29tbWVudDc4NzYxMTE1Mw== | simonw 9599 | 2021-03-01T03:30:57Z | 2021-03-01T03:30:57Z | OWNER | I'm going to try a new pattern for testing this, enabled by #1151 - the test will create a new named in-memory database, write some records to it and then run some test facets against that. This will save me from having to add yet another fixtures table for this. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Suggest for ArrayFacet possibly confused by blank values 817597268 | |

| 787536267 | https://github.com/simonw/datasette/issues/1005#issuecomment-787536267 | https://api.github.com/repos/simonw/datasette/issues/1005 | MDEyOklzc3VlQ29tbWVudDc4NzUzNjI2Nw== | simonw 9599 | 2021-02-28T22:30:37Z | 2021-02-28T22:30:37Z | OWNER | {

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Remove xfail tests when new httpx is released 718259202 | ||

| 787532279 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787532279 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzUzMjI3OQ== | simonw 9599 | 2021-02-28T22:09:37Z | 2021-02-28T22:09:37Z | OWNER | Microsoft's playwright Python library solves this problem by code generating both their sync AND their async libraries https://github.com/microsoft/playwright-python/tree/master/scripts |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787198202 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787198202 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE5ODIwMg== | simonw 9599 | 2021-02-27T22:33:58Z | 2021-02-27T22:33:58Z | OWNER | Hah or use this trick, which genuinely rewrites the code at runtime using a class decorator! https://github.com/python-happybase/aiohappybase/blob/0990ef45cfdb720dc987afdb4957a0fac591cb99/aiohappybase/sync/_util.py#L19-L32 |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787195536 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787195536 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE5NTUzNg== | simonw 9599 | 2021-02-27T22:13:24Z | 2021-02-27T22:13:24Z | OWNER | Some other interesting background reading: https://docs.sqlalchemy.org/en/14/orm/extensions/asyncio.html - in particular see how SQLAlchemy has a |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787190562 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787190562 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE5MDU2Mg== | simonw 9599 | 2021-02-27T22:04:00Z | 2021-02-27T22:04:00Z | OWNER | From the poster here: https://github.com/sethmlarson/pycon-async-sync-poster/blob/master/poster.pdf

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787186826 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787186826 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE4NjgyNg== | simonw 9599 | 2021-02-27T22:01:54Z | 2021-02-27T22:01:54Z | OWNER |

|

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787175126 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787175126 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE3NTEyNg== | simonw 9599 | 2021-02-27T21:55:05Z | 2021-02-27T21:55:05Z | OWNER | "how to use some new tools to more easily maintain a codebase that supports both async and synchronous I/O and multiple async libraries" - yeah that's exactly what I need, thank you! |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787150276 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787150276 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE1MDI3Ng== | polyrand 37962604 | 2021-02-27T21:27:26Z | 2021-02-27T21:27:26Z | NONE | I had this resource by Seth Michael Larson saved https://github.com/sethmlarson/pycon-async-sync-poster I haven't had a look at it, but it may contain useful info. On twitter, I mentioned passing an aiosqlite connection during the |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787144523 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787144523 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE0NDUyMw== | simonw 9599 | 2021-02-27T21:18:46Z | 2021-02-27T21:18:46Z | OWNER | Here's a really wild idea: I wonder if it would be possible to run a source transformation against either the sync or the async versions of the code to produce the equivalent for the other paradigm? Could that even be as simple as a set of regular expressions against the If so... I could maintain just the async version, generate the sync version with a script and rely on robust unit testing to guarantee that this actually works. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |
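
Editor's note: as a rough illustration of the "set of regular expressions" idea floated above, the sketch below strips `async` and `await` keywords from source text. The function name `async_to_sync` is hypothetical, and this is a deliberately naive transformation: it would also rewrite matching text inside strings and comments, and it does nothing about swapping an async driver for a sync one. That fragility is why the comment above pairs the idea with robust unit testing.

```python
import re

# "async def", "async with", "async for" -> "def", "with", "for"
_ASYNC_KEYWORD = re.compile(r"\basync\s+(def|with|for)\b")
# "await expr" -> "expr"
_AWAIT = re.compile(r"\bawait\s+")


def async_to_sync(source: str) -> str:
    """Naively turn async Python source into its sync equivalent."""
    source = _ASYNC_KEYWORD.sub(r"\1", source)
    return _AWAIT.sub("", source)


ASYNC_SOURCE = (
    "async def get(db):\n"
    "    async with db.lock:\n"
    "        return await db.execute('select 1')\n"
)
SYNC_SOURCE = async_to_sync(ASYNC_SOURCE)
```

Compiling the generated text with `compile(SYNC_SOURCE, "<generated>", "exec")` is a cheap first check before running the shared test suite against it.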

| 787142066 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787142066 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzE0MjA2Ng== | simonw 9599 | 2021-02-27T21:17:10Z | 2021-02-27T21:17:10Z | OWNER | I have a hunch this is actually going to be quite difficult, due to the internal complexity of some of the Consider Writing this method twice - looking similar but with One thing that would help a LOT is figuring out how to share the majority of the test code. If the exact same tests could run against both the sync and async versions with a bit of test trickery, maintaining parallel implementations would at least be a bit more feasible. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787121933 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787121933 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzEyMTkzMw== | eyeseast 25778 | 2021-02-27T19:18:57Z | 2021-02-27T19:18:57Z | CONTRIBUTOR | I think HTTPX gets it exactly right, with a clear separation between sync and async clients, each with a basically identical API. (I'm about to switch feed-to-sqlite over to it, from Requests, to eventually make way for async support.) |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787120136 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787120136 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzEyMDEzNg== | simonw 9599 | 2021-02-27T19:04:47Z | 2021-02-27T19:04:47Z | OWNER | Another option here would be to add https://github.com/omnilib/aiosqlite/blob/main/aiosqlite/core.py as a dependency - it's four years old now and actively maintained, and the code is pretty small so it looks like a solid, stable, reliable dependency. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 787118691 | https://github.com/simonw/sqlite-utils/issues/242#issuecomment-787118691 | https://api.github.com/repos/simonw/sqlite-utils/issues/242 | MDEyOklzc3VlQ29tbWVudDc4NzExODY5MQ== | simonw 9599 | 2021-02-27T18:53:23Z | 2021-02-27T18:53:23Z | OWNER | Datasette has its own implementation of a write queue for exactly this purpose - and there's no reason at all that should stay in Datasette rather than being extracted out and moved over here to One small concern I have is around the API design. I'd want to keep supporting the existing synchronous API while also providing a similar API with await-based methods. What are some good examples of libraries that do this? I like how https://www.python-httpx.org/ handles it, maybe that's a good example to imitate? |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Async support 817989436 | |

| 786925280 | https://github.com/dogsheep/google-takeout-to-sqlite/pull/5#issuecomment-786925280 | https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/5 | MDEyOklzc3VlQ29tbWVudDc4NjkyNTI4MA== | simonw 9599 | 2021-02-26T22:23:10Z | 2021-02-26T22:23:10Z | MEMBER | Thanks! I requested my Gmail export from takeout - once that arrives I'll test it against this and then merge the PR. |

{

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

WIP: Add Gmail takeout mbox import 813880401 | |

| 786849095 | https://github.com/simonw/datasette/issues/1238#issuecomment-786849095 | https://api.github.com/repos/simonw/datasette/issues/1238 | MDEyOklzc3VlQ29tbWVudDc4Njg0OTA5NQ== | simonw 9599 | 2021-02-26T19:29:38Z | 2021-02-26T19:29:38Z | OWNER | Here's the test I wrote:

```diff
git diff tests/test_custom_pages.py
diff --git a/tests/test_custom_pages.py b/tests/test_custom_pages.py
index 6a23192..5a71f56 100644
--- a/tests/test_custom_pages.py
+++ b/tests/test_custom_pages.py
@@ -2,11 +2,19 @@ import pathlib
 import pytest
 from .fixtures import make_app_client
 
+TEST_TEMPLATE_DIRS = str(pathlib.Path(__file__).parent / "test_templates")
+
 
 @pytest.fixture(scope="session")
 def custom_pages_client():
+    with make_app_client(template_dir=TEST_TEMPLATE_DIRS) as client:
+        yield client
+
+
+@pytest.fixture(scope="session")
+def custom_pages_client_with_base_url():
     with make_app_client(
-        template_dir=str(pathlib.Path(__file__).parent / "test_templates")
+        template_dir=TEST_TEMPLATE_DIRS, config={"base_url": "/prefix/"}
     ) as client:
         yield client
 
@@ -23,6 +31,12 @@ def test_request_is_available(custom_pages_client):
     assert "path:/request" == response.text
 
 
+def test_custom_pages_with_base_url(custom_pages_client_with_base_url):
+    response = custom_pages_client_with_base_url.get("/prefix/request")
+    assert 200 == response.status
+    assert "path:/prefix/request" == response.text
+
+
 def test_custom_pages_nested(custom_pages_client):
     response = custom_pages_client.get("/nested/nest")
     assert 200 == response.status
``` |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Custom pages don't work with base_url setting 813899472 | |

| 786848654 | https://github.com/simonw/datasette/issues/1238#issuecomment-786848654 | https://api.github.com/repos/simonw/datasette/issues/1238 | MDEyOklzc3VlQ29tbWVudDc4Njg0ODY1NA== | simonw 9599 | 2021-02-26T19:28:48Z | 2021-02-26T19:28:48Z | OWNER | I added a debug line just before And it showed that for some reason |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Custom pages don't work with base_url setting 813899472 | |

| 786841261 | https://github.com/simonw/datasette/issues/1238#issuecomment-786841261 | https://api.github.com/repos/simonw/datasette/issues/1238 | MDEyOklzc3VlQ29tbWVudDc4Njg0MTI2MQ== | simonw 9599 | 2021-02-26T19:13:44Z | 2021-02-26T19:13:44Z | OWNER | Sounds like a bug - thanks for reporting this. |

{

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Custom pages don't work with base_url setting 813899472 | |

| 786840734 | https://github.com/simonw/datasette/issues/1246#issuecomment-786840734 | https://api.github.com/repos/simonw/datasette/issues/1246 | MDEyOklzc3VlQ29tbWVudDc4Njg0MDczNA== | simonw 9599 | 2021-02-26T19:12:39Z | 2021-02-26T19:12:47Z | OWNER | Could I take this part:

|

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Suggest for ArrayFacet possibly confused by blank values 817597268 | |

| 786840425 | https://github.com/simonw/datasette/issues/1246#issuecomment-786840425 | https://api.github.com/repos/simonw/datasette/issues/1246 | MDEyOklzc3VlQ29tbWVudDc4Njg0MDQyNQ== | simonw 9599 | 2021-02-26T19:11:56Z | 2021-02-26T19:11:56Z | OWNER | | {"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Suggest for ArrayFacet possibly confused by blank values 817597268 | |

| 786830832 | https://github.com/simonw/sqlite-utils/issues/239#issuecomment-786830832 | https://api.github.com/repos/simonw/sqlite-utils/issues/239 | MDEyOklzc3VlQ29tbWVudDc4NjgzMDgzMg== | simonw 9599 | 2021-02-26T18:52:40Z | 2021-02-26T18:52:40Z | OWNER | Could this handle lists of objects too? That would be pretty amazing - if the column has a |

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | sqlite-utils extract could handle nested objects 816526538 | |

| 786813506 | https://github.com/simonw/datasette/issues/1240#issuecomment-786813506 | https://api.github.com/repos/simonw/datasette/issues/1240 | MDEyOklzc3VlQ29tbWVudDc4NjgxMzUwNg== | simonw 9599 | 2021-02-26T18:19:46Z | 2021-02-26T18:19:46Z | OWNER | Linking to rows from custom queries is a lot harder - because given an arbitrary string of SQL it's difficult to analyze it and figure out which (if any) of the returned columns represent a primary key. It's possible to manually write a SQL query that returns a column that will be treated as a link to another page using this plugin, but it's not particularly straight-forward: https://datasette.io/plugins/datasette-json-html |

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Allow facetting on custom queries 814591962 | |

| 786812716 | https://github.com/simonw/datasette/issues/1240#issuecomment-786812716 | https://api.github.com/repos/simonw/datasette/issues/1240 | MDEyOklzc3VlQ29tbWVudDc4NjgxMjcxNg== | simonw 9599 | 2021-02-26T18:18:18Z | 2021-02-26T18:18:18Z | OWNER | Agreed, this would be extremely useful. I'd love to be able to facet against custom queries. It's a fair bit of work to implement but it's not impossible. Closing this as a duplicate of #972. |

{"total_count": 1, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 1, "rocket": 0, "eyes": 0} | Allow facetting on custom queries 814591962 | |

| 786795132 | https://github.com/simonw/sqlite-utils/issues/239#issuecomment-786795132 | https://api.github.com/repos/simonw/sqlite-utils/issues/239 | MDEyOklzc3VlQ29tbWVudDc4Njc5NTEzMg== | simonw 9599 | 2021-02-26T17:45:53Z | 2021-02-26T17:45:53Z | OWNER | If there's no primary key in the JSON could use the |

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | sqlite-utils extract could handle nested objects 816526538 | |

| 786794435 | https://github.com/simonw/sqlite-utils/issues/239#issuecomment-786794435 | https://api.github.com/repos/simonw/sqlite-utils/issues/239 | MDEyOklzc3VlQ29tbWVudDc4Njc5NDQzNQ== | simonw 9599 | 2021-02-26T17:44:38Z | 2021-02-26T17:44:38Z | OWNER | This came up in office hours! |

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | sqlite-utils extract could handle nested objects 816526538 | |

| 786786645 | https://github.com/simonw/datasette/issues/1244#issuecomment-786786645 | https://api.github.com/repos/simonw/datasette/issues/1244 | MDEyOklzc3VlQ29tbWVudDc4Njc4NjY0NQ== | simonw 9599 | 2021-02-26T17:30:38Z | 2021-02-26T17:30:38Z | OWNER | New paragraph at the top of https://docs.datasette.io/en/latest/writing_plugins.html

|

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | Plugin tip: look at the examples linked from the hooks page 817528452 | |

| 786050562 | https://github.com/simonw/sqlite-utils/issues/237#issuecomment-786050562 | https://api.github.com/repos/simonw/sqlite-utils/issues/237 | MDEyOklzc3VlQ29tbWVudDc4NjA1MDU2Mg== | simonw 9599 | 2021-02-25T16:57:56Z | 2021-02-25T16:57:56Z | OWNER |

|

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | db["my_table"].drop(ignore=True) parameter, plus sqlite-utils drop-table --ignore and drop-view --ignore 815554385 | |

| 786049686 | https://github.com/simonw/sqlite-utils/issues/237#issuecomment-786049686 | https://api.github.com/repos/simonw/sqlite-utils/issues/237 | MDEyOklzc3VlQ29tbWVudDc4NjA0OTY4Ng== | simonw 9599 | 2021-02-25T16:56:42Z | 2021-02-25T16:56:42Z | OWNER | So:

|

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | db["my_table"].drop(ignore=True) parameter, plus sqlite-utils drop-table --ignore and drop-view --ignore 815554385 | |

| 786049394 | https://github.com/simonw/sqlite-utils/issues/237#issuecomment-786049394 | https://api.github.com/repos/simonw/sqlite-utils/issues/237 | MDEyOklzc3VlQ29tbWVudDc4NjA0OTM5NA== | simonw 9599 | 2021-02-25T16:56:14Z | 2021-02-25T16:56:14Z | OWNER | Other methods ( I don't like using it as the default partly because that would be a very minor breaking API change, but mainly because I don't want to hide mistakes people make - e.g. if you mistype the name of the table you are trying to drop. |

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | db["my_table"].drop(ignore=True) parameter, plus sqlite-utils drop-table --ignore and drop-view --ignore 815554385 | |

| 786037219 | https://github.com/simonw/sqlite-utils/issues/240#issuecomment-786037219 | https://api.github.com/repos/simonw/sqlite-utils/issues/240 | MDEyOklzc3VlQ29tbWVudDc4NjAzNzIxOQ== | simonw 9599 | 2021-02-25T16:39:23Z | 2021-02-25T16:39:23Z | OWNER | Example from the docs: ```pycon

|

{"total_count": 0, "+1": 0, "-1": 0, "laugh": 0, "hooray": 0, "confused": 0, "heart": 0, "rocket": 0, "eyes": 0} | table.pks_and_rows_where() method returning primary keys along with the rows 816560819 |


CREATE TABLE [issue_comments] (
   [html_url] TEXT,
   [issue_url] TEXT,
   [id] INTEGER PRIMARY KEY,
   [node_id] TEXT,
   [user] INTEGER REFERENCES [users]([id]),
   [created_at] TEXT,
   [updated_at] TEXT,
   [author_association] TEXT,
   [body] TEXT,
   [reactions] TEXT,
   [issue] INTEGER REFERENCES [issues]([id])
, [performed_via_github_app] TEXT);
CREATE INDEX [idx_issue_comments_issue]
    ON [issue_comments] ([issue]);
CREATE INDEX [idx_issue_comments_user]
    ON [issue_comments] ([user]);
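
As a rough illustration of how this table can be queried, the sketch below rebuilds the schema in an in-memory SQLite database and reproduces the page's ordering (most recently updated first), decoding the `reactions` column from its JSON text. The helper names `build_demo_db` and `recent_comments` are hypothetical, the foreign-key clauses are dropped so the example does not need the `users` and `issues` tables, and only a few columns of two rows from the listing above are loaded.

```python
import json
import sqlite3

# Schema as shown above, minus the REFERENCES clauses (an assumption made
# so this sketch runs without the users and issues tables).
SCHEMA = """
CREATE TABLE [issue_comments] (
   [html_url] TEXT,
   [issue_url] TEXT,
   [id] INTEGER PRIMARY KEY,
   [node_id] TEXT,
   [user] INTEGER,
   [created_at] TEXT,
   [updated_at] TEXT,
   [author_association] TEXT,
   [body] TEXT,
   [reactions] TEXT,
   [issue] INTEGER,
   [performed_via_github_app] TEXT
);
CREATE INDEX [idx_issue_comments_issue] ON [issue_comments] ([issue]);
CREATE INDEX [idx_issue_comments_user] ON [issue_comments] ([user]);
"""


def build_demo_db():
    """Hypothetical helper: in-memory database holding two rows from the listing."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    no_reactions = {"total_count": 0, "heart": 0}
    one_heart = {"total_count": 1, "heart": 1}
    conn.executemany(
        "INSERT INTO issue_comments (id, user, updated_at, reactions, issue) "
        "VALUES (?, ?, ?, ?, ?)",
        [
            (786812716, 9599, "2021-02-26T18:18:18Z", json.dumps(one_heart), 814591962),
            (786841261, 9599, "2021-02-26T19:13:44Z", json.dumps(no_reactions), 813899472),
        ],
    )
    return conn


def recent_comments(conn, limit=10):
    """Return comments newest-first, with reactions decoded from JSON text."""
    rows = conn.execute(
        "SELECT id, issue, updated_at, reactions FROM issue_comments "
        "ORDER BY updated_at DESC LIMIT ?",
        (limit,),
    ).fetchall()
    return [
        {"id": r[0], "issue": r[1], "updated_at": r[2], "reactions": json.loads(r[3])}
        for r in rows
    ]


if __name__ == "__main__":
    for comment in recent_comments(build_demo_db()):
        print(comment["id"], comment["updated_at"], comment["reactions"]["total_count"])
```

Sorting on `updated_at` as plain text works here because every timestamp uses the same ISO 8601 format, so lexicographic order matches chronological order.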

user >30