issues

555 rows where repo = 107914493 and state = "open" sorted by reactions descending

This data as json, CSV (advanced)

Suggested facets: milestone, comments, draft, created_at (date), updated_at (date)

| id | node_id | number | title | user | state | locked | assignee | milestone | comments | created_at | updated_at | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions ▲ | draft | state_reason |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

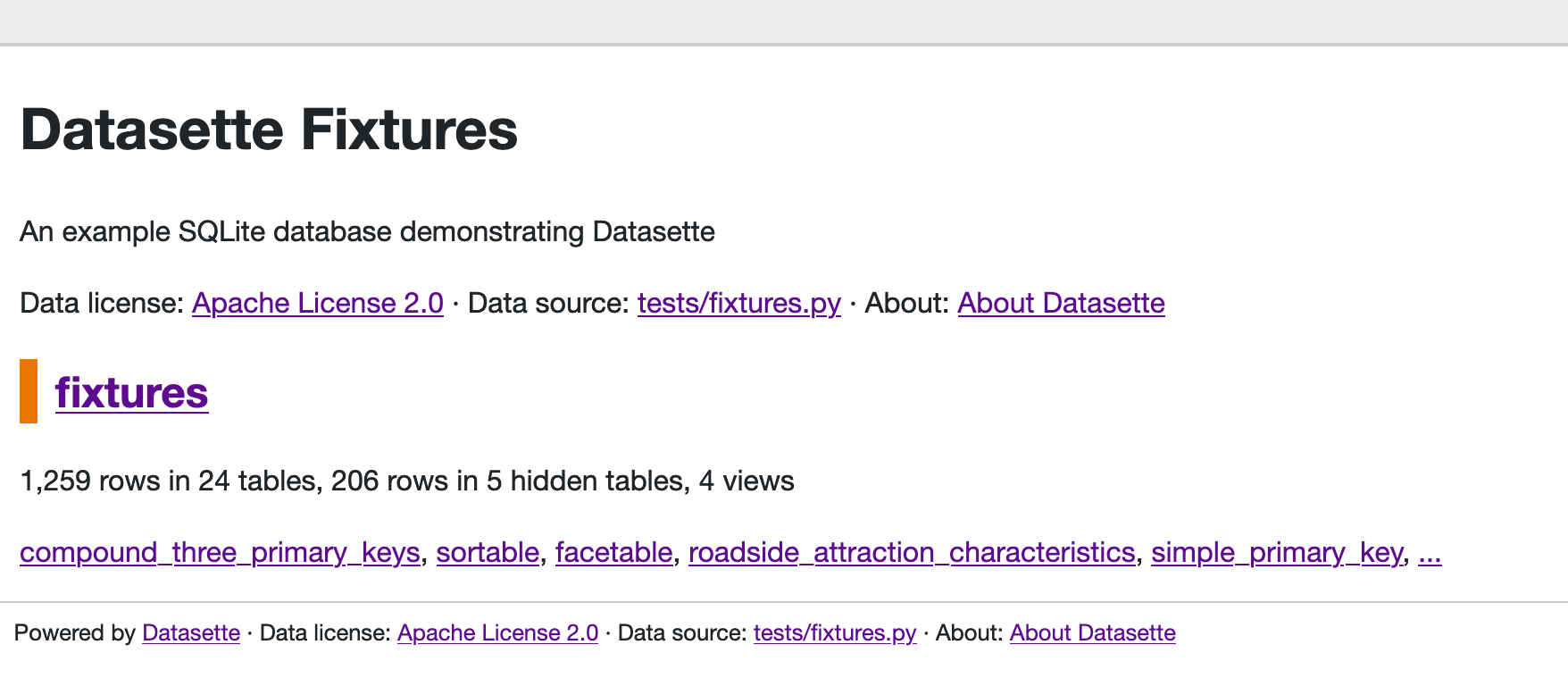

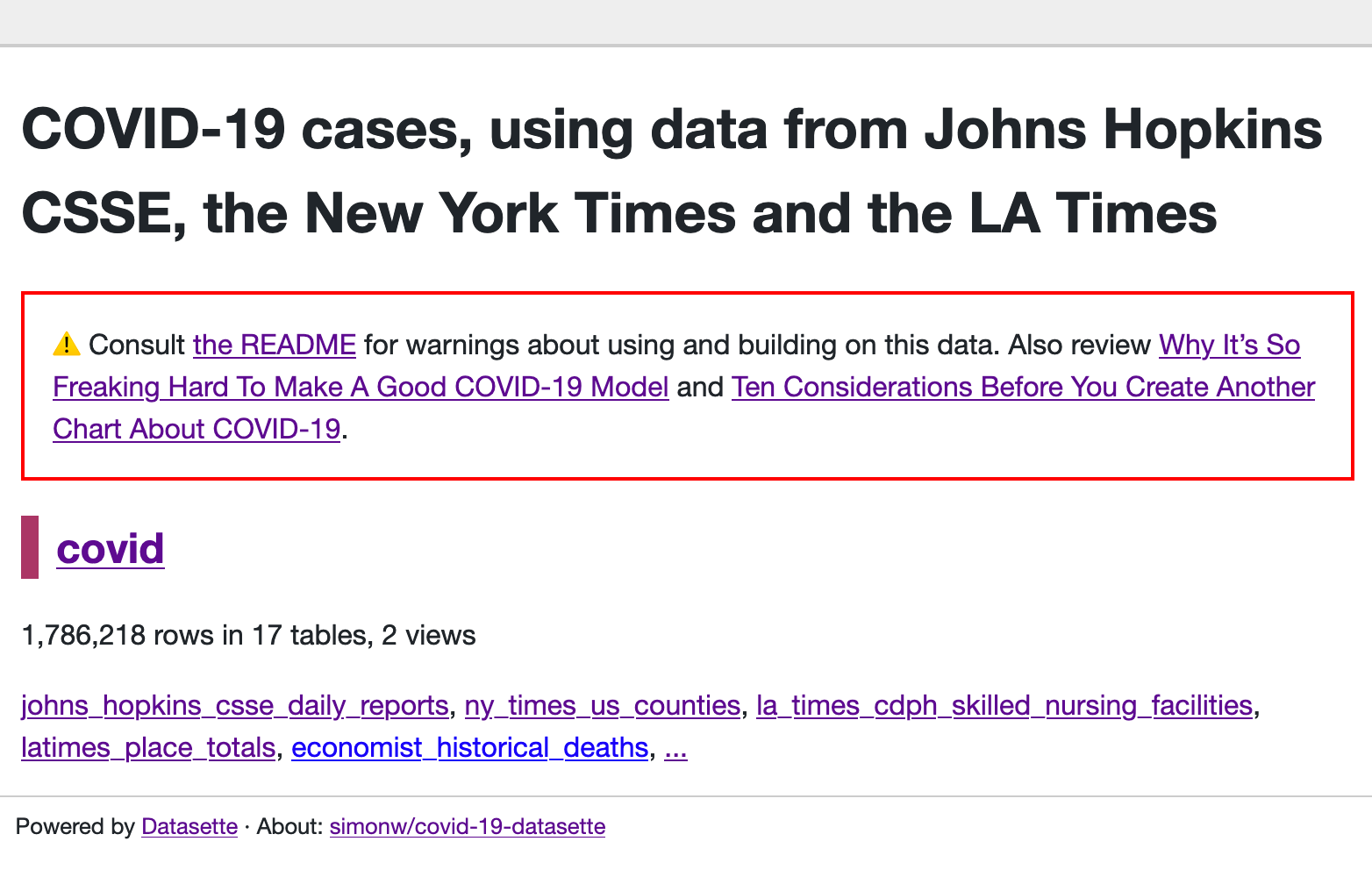

| 714377268 | MDU6SXNzdWU3MTQzNzcyNjg= | 991 | Redesign application homepage | simonw 9599 | open | 0 | 7 | 2020-10-04T18:48:45Z | 2021-01-26T19:06:36Z | OWNER | Most Datasette instances only host a single database, but the current homepage design assumes that it should leave plenty of space for multiple databases:

Reconsider this design - should the default show more information? The Covid-19 Datasette homepage looks particularly sparse I think: https://covid-19.datasettes.com/

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/991/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 712984738 | MDU6SXNzdWU3MTI5ODQ3Mzg= | 987 | Documented HTML hooks for JavaScript plugin authors | simonw 9599 | open | 0 | 7 | 2020-10-01T16:10:14Z | 2021-01-25T04:00:03Z | OWNER | In #981 I added |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/987/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 712368432 | MDU6SXNzdWU3MTIzNjg0MzI= | 984 | Review accessibility of new column action menus | simonw 9599 | open | 0 | 1 | 2020-09-30T23:56:44Z | 2020-10-01T00:01:36Z | OWNER | Feature added in #981 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/984/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 712260429 | MDU6SXNzdWU3MTIyNjA0Mjk= | 983 | JavaScript plugin hooks mechanism similar to pluggy | simonw 9599 | open | 0 | 47 | 2020-09-30T20:32:43Z | 2021-01-25T04:43:58Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/981#issuecomment-701616922 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/983/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 712202333 | MDU6SXNzdWU3MTIyMDIzMzM= | 982 | SQL editor should allow execution of write queries, if you have permission | simonw 9599 | open | 0 | 2 | 2020-09-30T19:04:35Z | 2022-01-13T22:21:29Z | OWNER | The UI concept: if you have write permission then the existing SQL editor gets an "execute write" checkbox underneath it. JavaScript can spot if you appear to be trying to execute an UPDATE or INSERT or DELETE query and check that checkbox for you. If you link to a query page with a non-SELECT then that query will be displayed in the box ready for you to POST submit it. The page will also then get "cannot be embedded" headers to protect against clickjacking. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/982/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 707849175 | MDU6SXNzdWU3MDc4NDkxNzU= | 974 | static assets and favicon aren't cached by the browser | obra 45416 | open | 0 | 1 | 2020-09-24T04:44:55Z | 2022-01-13T22:21:28Z | NONE | Using datasette to solve some frustrating problems with our fulfillment provider today, I was surprised to see repeated requests for assets under /-/static and the favicon. While it won't likely be a huge performance bottleneck, I bet datasette would feel a bit zippier if you had Uvicorn serving up some caching-related headers telling the browser it was safe to cache static assets. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/974/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 705840673 | MDU6SXNzdWU3MDU4NDA2NzM= | 972 | Support faceting against arbitrary SQL queries | simonw 9599 | open | 0 | 1 | 2020-09-21T19:00:43Z | 2021-12-15T18:02:20Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/972/reactions",

"total_count": 3,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 3,

"rocket": 0,

"eyes": 0

} |

||||||||

| 696908389 | MDU6SXNzdWU2OTY5MDgzODk= | 961 | Verification checks for metadata.json on startup | simonw 9599 | open | 0 | 2 | 2020-09-09T15:21:53Z | 2020-09-09T15:24:31Z | OWNER | I lost a bunch of time yesterday trying to figure out why a Datasette instance wasn't starting up - it turned out it was because I had a Catching these on startup would be good. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/961/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 691537426 | MDU6SXNzdWU2OTE1Mzc0MjY= | 959 | Internals API idea: results.dicts in addition to results.rows | simonw 9599 | open | 0 | 0 | 2020-09-03T00:50:17Z | 2020-09-03T00:50:17Z | OWNER | I just wrote this code:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/959/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 687694947 | MDU6SXNzdWU2ODc2OTQ5NDc= | 954 | Remove old register_output_renderer dict mechanism in Datasette 1.0 | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 1 | 2020-08-28T04:04:23Z | 2020-08-28T04:56:31Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/954/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 678760988 | MDU6SXNzdWU2Nzg3NjA5ODg= | 932 | End-user documentation | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 6 | 2020-08-13T22:04:39Z | 2022-03-08T15:20:48Z | OWNER | Datasette's documentation is aimed at people who install and configure it. What about end users of preconfigured and deployed Datasette instances? Something that can be linked to from the Datasette UI would be really useful. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/932/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 675594325 | MDU6SXNzdWU2NzU1OTQzMjU= | 917 | Idea: "datasette publish" option for "only if the data has changed | simonw 9599 | open | 0 | 0 | 2020-08-08T21:58:27Z | 2020-08-08T21:58:27Z | OWNER | This is a pattern I often find myself needing. I usually implement this in GitHub Actions like this:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/917/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 672421411 | MDU6SXNzdWU2NzI0MjE0MTE= | 916 | Support reverse pagination (previous page, has-previous-items) | simonw 9599 | open | 0 | 7 | 2020-08-04T00:32:06Z | 2021-04-03T23:43:11Z | OWNER | I need this for

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/916/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 670209331 | MDU6SXNzdWU2NzAyMDkzMzE= | 913 | Mechanism for passing additional options to `datasette my.db` that affect plugins | simonw 9599 | open | 0 | 5 | 2020-07-31T20:38:26Z | 2021-01-04T20:04:11Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/913/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 668064026 | MDU6SXNzdWU2NjgwNjQwMjY= | 911 | Rethink the --name option to "datasette publish" | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 0 | 2020-07-29T18:49:49Z | 2020-07-29T18:49:49Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/911/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 659873662 | MDU6SXNzdWU2NTk4NzM2NjI= | 898 | datasette.utils.testing module | simonw 9599 | open | 0 | 2 | 2020-07-18T03:53:24Z | 2020-07-18T03:57:46Z | OWNER | The unit tests for plugins could benefit from reusing code from Datasette's own testing fixtures, e.g.:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/898/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 657572753 | MDU6SXNzdWU2NTc1NzI3NTM= | 894 | ?sort=colname~numeric to sort by by column cast to real | simonw 9599 | open | 0 | 21 | 2020-07-15T18:47:48Z | 2021-08-20T02:07:53Z | OWNER | If a text column actually contains numbers, being able to "sort by column, treated as numeric" would be really useful. Probably depends on column actions enabled by #690 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/894/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 649429772 | MDU6SXNzdWU2NDk0Mjk3NzI= | 886 | Reconsider how _actor_X magic parameter deals with missing values | simonw 9599 | open | 0 | 2 | 2020-07-02T00:00:38Z | 2020-09-11T21:35:26Z | OWNER | I had to build a custom @hookimpl

def register_magic_parameters():

return [

("actorornull", actorornull),

]

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/886/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 648749062 | MDExOlB1bGxSZXF1ZXN0NDQyNTA1MDg4 | 883 | Skip counting hidden tables | abdusco 3243482 | open | 0 | 4 | 2020-07-01T07:38:08Z | 2020-07-02T00:25:44Z | CONTRIBUTOR | simonw/datasette/pulls/883 | Potential fix for https://github.com/simonw/datasette/issues/859. Disabling table counts for hidden tables speeds up database page quite a bit. In my setup it reduced load time by 2/3 (~300 -> ~90ms) |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/883/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 648659536 | MDU6SXNzdWU2NDg2NTk1MzY= | 881 | Figure out why restore_working_directory is needed in some places | simonw 9599 | open | 0 | 0 | 2020-07-01T04:19:25Z | 2020-07-01T04:19:25Z | OWNER | This is a frustrating workaround. I have a /usr/local/opt/python/Frameworks/Python.framework/Versions/3.7/lib/python3.7/contextlib.py:112: in enter return next(self.gen) self = <click.testing.CliRunner object at 0x1135ad110>

I'd like to not have to do this. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/881/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 648435885 | MDU6SXNzdWU2NDg0MzU4ODU= | 878 | New pattern for views that return either JSON or HTML, available for plugins | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 26 | 2020-06-30T19:26:13Z | 2022-03-19T16:19:30Z | OWNER | Can be part of #870 - refactoring existing views to use

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/878/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 647095487 | MDU6SXNzdWU2NDcwOTU0ODc= | 873 | "datasette -p 0 --root" gives the wrong URL | simonw 9599 | open | 0 | 14 | 2020-06-29T04:03:06Z | 2020-08-18T17:26:10Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/873/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 646737558 | MDU6SXNzdWU2NDY3Mzc1NTg= | 870 | Refactor default views to use register_routes | simonw 9599 | open | 0 | 10 | 2020-06-27T18:53:12Z | 2022-03-15T20:07:18Z | OWNER | It would be much cleaner if Datasette's default views were all registered using the new

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/870/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 646448486 | MDExOlB1bGxSZXF1ZXN0NDQwNzM1ODE0 | 868 | initial windows ci setup | joshmgrant 702729 | open | 0 | 3 | 2020-06-26T18:49:13Z | 2021-07-10T23:41:43Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/868 | Picking up the work done on #557 with a new PR. Seeing if I can get this working. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/868/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 643510821 | MDU6SXNzdWU2NDM1MTA4MjE= | 862 | Set an upper limit on total facet suggestion time for a page | simonw 9599 | open | 0 | 1 | 2020-06-23T03:57:55Z | 2020-06-23T03:58:48Z | OWNER | If a table has 100 columns the facet suggestion code will currently run 100 times, taking a max of So for 100 columns, that's 100 * 50ms = 5s total time that might be spent attempting to calculate facets on a large table! I should implement a hard upper limit on the total amount of time taken suggesting facets - probably of around 500ms. If it takes longer than that the remaining columns will not be considered. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/862/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 642572841 | MDU6SXNzdWU2NDI1NzI4NDE= | 859 | Database page loads too slowly with many large tables (due to table counts) | abdusco 3243482 | open | 0 | 21 | 2020-06-21T14:23:17Z | 2021-08-25T21:59:55Z | CONTRIBUTOR | Hey, I have a database that I save in HTML from couple of web scrapers. There are around 200k+, 50+ rows in a couple of tables, with sqlite file weighing around 600MB. The app runs on a VPS with 2 core CPU, 4GB RAM and refreshing database page regularly takes more than 10 seconds. I was suspecting that counting tables was the culprit, but manually running I've looked at the source code. There's a check for index page for mutable databases larger than 100MB https://github.com/simonw/datasette/blob/799c5d53570d773203527f19530cf772dc2eeb24/datasette/views/index.py#L15 but this check is not performed for database page.

I've manually crippled now the page loads in <100ms. Is it possible to apply size check on database page too? /-/versions output{

"python": {

"version": "3.8.0",

"full": "3.8.0 (default, Oct 28 2019, 16:14:01) \n[GCC 8.3.0]"

},

"datasette": {

"version": "0.44"

},

"asgi": "3.0",

"uvicorn": "0.11.5",

"sqlite": {

"version": "3.22.0",

"fts_versions": [

"FTS5",

"FTS4",

"FTS3"

],

"extensions": {

"json1": null

},

"compile_options": [

"COMPILER=gcc-7.4.0",

"ENABLE_COLUMN_METADATA",

"ENABLE_DBSTAT_VTAB",

"ENABLE_FTS3",

"ENABLE_FTS3_PARENTHESIS",

"ENABLE_FTS3_TOKENIZER",

"ENABLE_FTS4",

"ENABLE_FTS5",

"ENABLE_JSON1",

"ENABLE_LOAD_EXTENSION",

"ENABLE_PREUPDATE_HOOK",

"ENABLE_RTREE",

"ENABLE_SESSION",

"ENABLE_STMTVTAB",

"ENABLE_UNLOCK_NOTIFY",

"ENABLE_UPDATE_DELETE_LIMIT",

"HAVE_ISNAN",

"LIKE_DOESNT_MATCH_BLOBS",

"MAX_SCHEMA_RETRY=25",

"MAX_VARIABLE_NUMBER=250000",

"OMIT_LOOKASIDE",

"SECURE_DELETE",

"SOUNDEX",

"TEMP_STORE=1",

"THREADSAFE=1"

]

}

}

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/859/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 642388564 | MDU6SXNzdWU2NDIzODg1NjQ= | 858 | publish heroku does not work on Windows 10 | simonlau 870912 | open | 0 | 7 | 2020-06-20T14:40:28Z | 2021-06-10T17:44:09Z | NONE | When executing "datasette publish heroku schools.db" on Windows 10, I get the following error

to

as well as the other |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/858/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 642296989 | MDU6SXNzdWU2NDIyOTY5ODk= | 856 | Consider pagination of canned queries | simonw 9599 | open | 0 | 3 | 2020-06-20T03:15:59Z | 2021-05-21T14:22:41Z | OWNER | The new |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/856/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 639993467 | MDU6SXNzdWU2Mzk5OTM0Njc= | 850 | Proof of concept for Datasette on AWS Lambda with EFS | simonw 9599 | open | 0 | 25 | 2020-06-16T21:48:31Z | 2020-06-16T23:52:16Z | OWNER | If Datasette can run on Lambda with access to EFS it could both read AND write large databases there. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/850/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 638238548 | MDU6SXNzdWU2MzgyMzg1NDg= | 845 | Code coverage should ignore files in .coveragerc | simonw 9599 | open | 0 | 0 | 2020-06-13T21:45:42Z | 2020-06-13T21:46:03Z | OWNER | I'm not sure why this is, but the code coverage I have running in a GitHub Action doesn't take my Here's the bit that's ignored: https://github.com/simonw/datasette/blob/cf7a2bdb404734910ec07abc7571351a2d934828/.coveragerc#L1-L2 As a result my coverage score is 84%, when it should be 92%:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/845/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 636511683 | MDU6SXNzdWU2MzY1MTE2ODM= | 830 | Redesign register_facet_classes plugin hook | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 3 | 2020-06-10T20:03:27Z | 2021-12-16T19:58:22Z | OWNER | Nothing uses this plugin hook yet, so the design is not yet proven. I'm going to build a real plugin against it and use that process to inform any design changes that may need to be made. I'll add a warning about this to the documentation. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/830/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 634663505 | MDU6SXNzdWU2MzQ2NjM1MDU= | 815 | Group permission checks by request on /-/permissions debug page | simonw 9599 | open | 0 | 8 | 2020-06-08T14:25:23Z | 2020-12-17T22:06:48Z | OWNER | Now that we're making a LOT more permission checks (on the DB index page we do a check for every listed table for example) the Can make this more readable by grouping permission checks by request. Have most recent request at the top of the page but the permission requests within that page sorted chronologically by most recent last. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/815/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 632724154 | MDU6SXNzdWU2MzI3MjQxNTQ= | 805 | Writable canned queries live demo on Glitch | simonw 9599 | open | 0 | 11 | 2020-06-06T20:52:13Z | 2020-07-01T22:44:01Z | OWNER | Needs to run somewhere with a mutable disk drive, so not Cloud Run or Heroku or Vercel. I think I'll put it on Glitch. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/805/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 628572716 | MDU6SXNzdWU2Mjg1NzI3MTY= | 791 | Tutorial: building a something-interesting with writable canned queries | simonw 9599 | open | 0 | 2 | 2020-06-01T16:32:05Z | 2020-10-10T23:34:42Z | OWNER | Initial idea: TODO list, as a tutorial for #698 writable canned queries. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/791/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 628156527 | MDU6SXNzdWU2MjgxNTY1Mjc= | 789 | Mechanism for enabling pluggy tracing | simonw 9599 | open | 0 | 2 | 2020-06-01T05:10:14Z | 2020-06-01T05:11:03Z | OWNER | Could be useful for debugging plugins: https://pluggy.readthedocs.io/en/latest/#call-tracing I tried this out by adding these two lines in Added these:pm.trace.root.setwriter(print)

pm.enable_tracing()

finish actor_from_request --> [] [hook] extra_body_script [hook] template: show_json.html database: None table: None view_name: json_data datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_body_script --> [] [hook] extra_template_vars [hook] template: show_json.html database: None table: None view_name: json_data request: <datasette.utils.asgi.Request object at 0x1065504d0> datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_template_vars --> [] [hook] extra_css_urls [hook] template: show_json.html database: None table: None datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_css_urls --> [] [hook] extra_js_urls [hook] template: show_json.html database: None table: None datasette: <datasette.app.Datasette object at 0x106277ad0> finish extra_js_urls --> [] [hook] INFO: 127.0.0.1:52724 - "GET /-/actor HTTP/1.1" 200 OK actor_from_request [hook] datasette: <datasette.app.Datasette object at 0x106277ad0> request: <datasette.utils.asgi.Request object at 0x1065500d0> finish actor_from_request --> [] [hook] ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/789/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 626593402 | MDU6SXNzdWU2MjY1OTM0MDI= | 780 | Internals documentation for datasette.metadata() method | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 2 | 2020-05-28T15:14:22Z | 2022-03-15T20:50:34Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/780/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 626582657 | MDU6SXNzdWU2MjY1ODI2NTc= | 779 | Make human_description_en explicitly available to output renderers | simonw 9599 | open | 0 | 0 | 2020-05-28T14:59:54Z | 2020-05-28T14:59:54Z | OWNER |

https://github.com/simonw/datasette-atom/blob/df98a6c43a443224b6cd232f84703ec297ef046b/datasette_atom/init.py#L36-L37

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/779/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 626211658 | MDU6SXNzdWU2MjYyMTE2NTg= | 778 | Ability to configure keyset pagination for views and queries | simonw 9599 | open | 0 | 1 | 2020-05-28T04:48:56Z | 2020-10-02T02:26:25Z | OWNER | Currently views offer pagination, but it uses offset/limit - e.g. https://latest.datasette.io/fixtures/paginated_view?_next=100 This means pagination will perform poorly on deeper pages. If a view is based on a table that has a primary key it should be possible to configure efficient keyset pagination that works the same way that table pagination works. This may be as simple as configuring a column that can be treated as a "primary key" for the purpose of pagination using |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/778/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 617323873 | MDU6SXNzdWU2MTczMjM4NzM= | 766 | Enable wildcard-searches by default | clausjuhl 2181410 | open | 0 | 2 | 2020-05-13T10:14:48Z | 2021-03-05T16:35:21Z | NONE | Hi Simon. It seems that datasette currently has wildcard-searches disabled by default (along with the boolean search-options, NEAR-queries and more, and despite the docs). If I try out the search-url provided in the docs (https://fara.datasettes.com/fara/FARA_All_ShortForms?_search=manafort), it does not handle wildcard-searches, and I'm unable to make it work on my datasette-instance. I would argue that wildcard-searches is such a standard query, that it should be enabled by default. Requiring "_searchmode=raw" when using prefix-searches seems unnecessary. Plus: What happens to non-ascii searches when using "_searchmode=raw"? Is the "escape_fts"-function from datasette.utils ignored? Thanks! /Claus |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/766/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 616087149 | MDU6SXNzdWU2MTYwODcxNDk= | 765 | publish heroku should default to currently tagged version | simonw 9599 | open | 0 | 1 | 2020-05-11T18:24:06Z | 2020-05-11T18:25:43Z | OWNER | Had a report that deploying to Heroku was using the previously installed version of Datasette, not the latest. Could be because of this: Heroku documentation recommends pinning to specific versions https://devcenter.heroku.com/articles/python-pip So... we could ensure we default to an install value of |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/765/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 613491342 | MDU6SXNzdWU2MTM0OTEzNDI= | 762 | Experiment with PRAGMA hard_heap_limit | simonw 9599 | open | 0 | 0 | 2020-05-06T17:33:23Z | 2020-05-07T03:08:44Z | OWNER | This was added in SQLite 2020-01-22 (3.31.0): https://www.sqlite.org/changes.html#version_3_31_0

This sounds like it could be a nice extra safety measure. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/762/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 613422636 | MDU6SXNzdWU2MTM0MjI2MzY= | 760 | Way of seeing full schema for a database | simonw 9599 | open | 0 | 3 | 2020-05-06T15:46:08Z | 2020-05-06T23:49:06Z | OWNER | I find myself wanting to quickly figure out all of the BLOB columns in a database. A It would need to be carefully constructed from various queries against |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/760/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 612382643 | MDU6SXNzdWU2MTIzODI2NDM= | 758 | Question: Access to immutable database-path | clausjuhl 2181410 | open | 0 | 6 | 2020-05-05T07:01:18Z | 2020-05-28T08:23:27Z | NONE | Hi Simon Is there anywhere in the app-context where one can access the hashed urlpath of the database? Currently it's included in the template-context ( |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/758/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 607223136 | MDU6SXNzdWU2MDcyMjMxMzY= | 741 | Replace "datasette publish --extra-options" with "--setting" | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 9 | 2020-04-27T04:29:04Z | 2022-05-12T19:21:16Z | OWNER | See https://github.com/simonw/datasette-publish-now/issues/9#issuecomment-618155764 - the A neater design would be to support |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/741/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 594237015 | MDU6SXNzdWU1OTQyMzcwMTU= | 718 | Plugin idea: datasette-redirects | simonw 9599 | open | 0 | 0 | 2020-04-05T03:41:38Z | 2023-08-30T22:17:31Z | OWNER | I just had to write a one-off custom plugin to redirect niche-musems.com to www.niche-museums.com (https://github.com/simonw/museums/issues/21) - it would be great if this kind of thing could be handled by a configurable plugin. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/718/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

reopened | |||||||

| 593006814 | MDU6SXNzdWU1OTMwMDY4MTQ= | 715 | Refactor duplicate cell display logic | simonw 9599 | open | 0 | 0 | 2020-04-03T00:58:11Z | 2020-04-03T00:58:11Z | OWNER | The logic for rendering cells in table view and in database (or canned query) view is currently very similar: Compared with: I'll be changing this a bit in #698 but I should still try to clean this up more further in the future. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/715/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 574035432 | MDU6SXNzdWU1NzQwMzU0MzI= | 692 | is_hidden_table context variable on table.html page | simonw 9599 | open | 0 | 1 | 2020-03-02T15:03:25Z | 2020-03-02T15:03:48Z | OWNER | It's useful to know if a table is hidden when rendering that page. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/692/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 574021194 | MDU6SXNzdWU1NzQwMjExOTQ= | 691 | --reload sould reload server if code in --plugins-dir changes | simonw 9599 | open | 0 | 1 | 2020-03-02T14:42:21Z | 2020-06-14T02:35:17Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/691/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 567902704 | MDU6SXNzdWU1Njc5MDI3MDQ= | 675 | --cp option for datasette publish and datasette package for shipping additional files and directories | aviflax 141844 | open | 0 | 12 | 2020-02-19T22:55:56Z | 2020-12-28T18:49:21Z | NONE | I’m working on integrating Datasette into a documentation-oriented publishing workflow internally in my company, and in order to deploy the Docker image created by So it’d be excellent if there was an additional option for this command, something like, like, I’d envision it looking something like:

I’d be happy to help design, specify, implement, and test this feature, if you’d be interested. Thanks for the fantastic tools! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/675/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 565064079 | MDExOlB1bGxSZXF1ZXN0Mzc1MTgwODMy | 672 | --dirs option for scanning directories for SQLite databases | simonw 9599 | open | 0 | 15 | 2020-02-14T02:25:52Z | 2020-03-27T01:03:53Z | OWNER | simonw/datasette/pulls/672 | Refs #417. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/672/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 564833696 | MDU6SXNzdWU1NjQ4MzM2OTY= | 670 | Prototoype for Datasette on PostgreSQL | simonw 9599 | open | 0 | 15 | 2020-02-13T17:17:55Z | 2023-11-17T15:32:21Z | OWNER | I thought this would never happen, but now that I'm deep in the weeds of running SQLite in production for Datasette Cloud I'm starting to reconsider my policy of only supporting SQLite. Some of the factors making me think PostgreSQL support could be worth the effort:

- Serverless. I'm getting increasingly excited about writable-database use-cases for Datasette. If it could talk to PostgreSQL then users could easily deploy it on Heroku or other serverless providers that can talk to a managed RDS-style PostgreSQL.

- Existing databases. Plenty of organizations have PostgreSQL databases. They can export to SQLite using db-to-sqlite but that's a pretty big barrier to getting started - being able to run The above reasons feel strong enough to justify a prototype. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/670/reactions",

"total_count": 19,

"+1": 14,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 5,

"rocket": 0,

"eyes": 0

} |

||||||||

| 559964149 | MDU6SXNzdWU1NTk5NjQxNDk= | 665 | Introduce a SQL statement parser in Python | simonw 9599 | open | 0 | 1 | 2020-02-04T20:36:05Z | 2020-02-04T20:36:48Z | OWNER | 254 and #653 are both examples of problems that could be solved using a real SQL parser in Python. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/665/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 550293770 | MDU6SXNzdWU1NTAyOTM3NzA= | 658 | How do I use the app.css as style sheet? | null92 49656826 | open | 0 | 2 | 2020-01-15T16:27:57Z | 2020-02-07T00:29:50Z | NONE | Simon, I'm trying to use the app.css (in static folder) as style sheet but the datasette on Heroku simply ignore it! I read everything about customization here and on readthedocs but still can't. Is this possible? Thanks! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/658/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 548591089 | MDU6SXNzdWU1NDg1OTEwODk= | 657 | Allow creation of virtual tables at startup | dazzag24 1055831 | open | 0 | 4 | 2020-01-12T16:10:55Z | 2021-01-15T20:24:35Z | NONE | Hi, I've been experimenting with SQLite reading from huge datasets using this excellent Parquet extension from @cldellow. https://cldellow.com/2018/06/22/sqlite-parquet-vtable.html https://github.com/cldellow/sqlite-parquet-vtable This works really well, but I was keen to see if I could combine datasette with this. Having previously experimented with the spatialite extension I knew that datasette supports loading extensions in the underlying sqlite instance. However I hit a blocker as the current design only allows SELECT statements to be executed and so I am unable to execute the crucial CREATE VIRTUAL TABLE ......... command that is required to load the data from the parquet file into the table. It seems like this would be a simple-ish change, but I don't know enough about the architecture of datasette to start implementing this myself? Could this be done as a datasette plugin? or would this require more fundamental changes at initialisation time? My thoughts are that something at init time could detect that the user was loading a .parquet file and then switch to a mode were it loads that via the "CREATE VIRTUAL TABLE..." rather than loading the .db file in the default case?? I'm happy to contribute code and testing, I just need some pointers on the best approach. Thanks Darren |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/657/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 534629631 | MDU6SXNzdWU1MzQ2Mjk2MzE= | 650 | Add a glossary to the documentation | simonw 9599 | open | 0 | 3 | 2019-12-09T00:23:45Z | 2022-01-13T22:04:56Z | OWNER | Call it GlossaryTerm A definition of the term. Another term Another definition. ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/650/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 531502365 | MDU6SXNzdWU1MzE1MDIzNjU= | 646 | Make database level information from metadata.json available in the index.html template | lagolucas 18017473 | open | 0 | Datasette 1.0 3268330 | 3 | 2019-12-02T19:55:10Z | 2022-03-15T20:50:34Z | NONE | Did a search on the issues here and didn't find anything related to what I want. I want to have information that is on the database level of the JSON like title, source and source_url, and use it on the index page. I tried some small tweaks on the python and html files, but failed to get that result. Is there a way? Thanks! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/646/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 530468212 | MDU6SXNzdWU1MzA0NjgyMTI= | 643 | Set up some basic benchmarks as part of the unit tests | simonw 9599 | open | 0 | 0 | 2019-11-29T19:24:19Z | 2019-11-29T19:24:19Z | OWNER | https://pypi.org/project/pytest-benchmark/ looks great for this. Here's how to run it as a github action: https://github.com/rhysd/github-action-benchmark/blob/master/examples/pytest/README.md |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/643/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 527710055 | MDU6SXNzdWU1Mjc3MTAwNTU= | 640 | Nicer error message for heroku publish name clash | psychemedia 82988 | open | 0 | 1 | 2019-11-24T14:57:07Z | 2019-12-06T07:19:34Z | CONTRIBUTOR | If you try to publish to Heroku using no set name (i.e. the default

It would be neater if:

It may also be useful to provide a command to list the current names that are being used, which I assume is available via a Heroku call? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/640/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 527670799 | MDU6SXNzdWU1Mjc2NzA3OTk= | 639 | updating metadata.json without recreating the app | pkoppstein 172847 | open | 0 | 6 | 2019-11-24T09:19:53Z | 2019-11-30T06:08:50Z | NONE | I've sucessfully "uploaded" an SQLite database (with a metadata.json file) to heroku using: The question is: how can I modify the (small) metadata.json file without having to upload the (large) SQLite database. The directions on heroku indicate I should run: But this just results in an empty directory with a warning: warning: You appear to have cloned an empty repository. I've been able to "clone" the heroku "app" using the command: but this is not a git repository.... Ideally, it seems to me, there'd be an option of the (p.s. I ran (p.p.s. Thanks for Datasette!) |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/639/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 520681725 | MDU6SXNzdWU1MjA2ODE3MjU= | 621 | Syntax for ?_through= that works as a form field | simonw 9599 | open | 0 | 7 | 2019-11-11T00:19:03Z | 2021-12-18T01:42:33Z | OWNER | The current syntax for This means you can't target a form field at it. We should be able to support both - |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/621/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 520667773 | MDU6SXNzdWU1MjA2Njc3NzM= | 620 | Mechanism for indicating foreign key relationships in the table and query page URLs | simonw 9599 | open | 0 | 6 | 2019-11-10T22:26:27Z | 2021-04-05T03:57:22Z | OWNER | Datasette currently only inflates foreign keys (into names hyperlinks) if it detects them as foreign key constraints in the underlying database. It would be useful if you could specify additional "foreign keys" using both |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/620/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 1

} |

||||||||

| 516874735 | MDU6SXNzdWU1MTY4NzQ3MzU= | 613 | Basic join support for table view | simonw 9599 | open | 0 | 1 | 2019-11-03T19:12:53Z | 2019-11-03T19:14:01Z | OWNER | I think it would be possible to support basic foreign key joins on the table page. The user could specify columns that should result in a join (from a set of suggestions similar to how facets work right now) and they could then be passed as This feature will make a lot of sense when combined with the ability to show / hide / customize columns, see #292 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/613/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 510076368 | MDU6SXNzdWU1MTAwNzYzNjg= | 605 | Support queries at the table level | bsilverm 12617395 | open | 0 | 2 | 2019-10-21T15:58:30Z | 2019-10-30T18:55:37Z | NONE | Per the issue described in issue #588, it was determined queries are not supported at the table level. Per my last comment in the issue, I'd like to request support for this as it would help eliminate errors in the event certain tables are not present in the database. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/605/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 507454958 | MDU6SXNzdWU1MDc0NTQ5NTg= | 596 | Handle really wide tables better | simonw 9599 | open | 0 | 9 | 2019-10-15T20:05:46Z | 2022-09-07T00:58:41Z | OWNER | If a table has hundreds of columns the Datasette UI starts getting unwieldy. Addressing this would be neat. One option would be to only select the first 30 columns by default and provide a UI for selecting more. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/596/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 503053243 | MDU6SXNzdWU1MDMwNTMyNDM= | 582 | Datasette should not completely crash if one SQLite database is malformed | simonw 9599 | open | 0 | 0 | 2019-10-06T05:11:43Z | 2019-10-06T05:11:43Z | OWNER | If you run Datasette against a number of database files and one of them is malformed, you get this 500 error on the index page:

It would be better if Datasette still worked and listed the databases that were NOT malformed, then showed an inline error message just for the one that could not be accessed. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/582/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 501773982 | MDExOlB1bGxSZXF1ZXN0MzIzOTgzNzMy | 579 | New connection pooling | simonw 9599 | open | 0 | 1 | 2019-10-02T23:22:19Z | 2019-11-15T22:57:21Z | OWNER | simonw/datasette/pulls/579 | See #569 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/579/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 481885279 | MDU6SXNzdWU0ODE4ODUyNzk= | 569 | More advanced connection pooling | simonw 9599 | open | 0 | 4 | 2019-08-17T13:20:41Z | 2019-10-02T22:44:37Z | OWNER | We need a much smarter way of handling database connections. Today, connections are simple: Datasette runs a number of threads (defaults to 3) and each thread gets a threadlocal read-only (or immutable) connection to each attached database - opened on demand. For Datasette Library (#417) I want to support potentially hundreds of attached databases. Datasette Edit (#567) is going to introduce a need for writable connections too. I'd also like to be able to run joins across multiple databases (#283) which further complicates things. Supporting thousands of open SQLite connections at once feels like it won't provide good enough performance (though I should benchmark that to be sure). Some kind of connection pooling is likely to be necessary. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/569/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 476852861 | MDU6SXNzdWU0NzY4NTI4NjE= | 568 | Add database_color as a configurable option | LBHELewis 50906992 | open | 0 | 1 | 2019-08-05T13:14:45Z | 2023-08-11T05:19:42Z | NONE | This would be really useful as it would allow us to tie in with colour schemes. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/568/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 473288428 | MDExOlB1bGxSZXF1ZXN0MzAxNDgzNjEz | 564 | First proof-of-concept of Datasette Library | simonw 9599 | open | 0 | 1 | 2019-07-26T10:22:26Z | 2023-02-07T15:14:11Z | OWNER | simonw/datasette/pulls/564 | Refs #417. Run it like this: Uses a new plugin hook - available_databases() |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/564/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

1 | ||||||

| 465327844 | MDU6SXNzdWU0NjUzMjc4NDQ= | 553 | Potential improvements to facet-by-date | simonw 9599 | open | 0 | 3 | 2019-07-08T15:37:53Z | 2019-07-08T15:41:55Z | OWNER | In addition to #483 Tobias had some useful suggestions on Twitter: https://twitter.com/rixxtr/status/1148253926476701696

Screenshot of that link:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/553/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 465019882 | MDU6SXNzdWU0NjUwMTk4ODI= | 552 | Add --plugin-secret support to "datasette package" | simonw 9599 | open | 0 | 1 | 2019-07-08T01:46:47Z | 2019-07-08T01:47:30Z | OWNER | Split out from #544. I think I should combine this with #347 (renaming |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/552/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 465003070 | MDU6SXNzdWU0NjUwMDMwNzA= | 551 | Ship many-to-many faceting support (and facet-by-delimiter) | simonw 9599 | open | 0 | 2 | 2019-07-07T23:11:45Z | 2019-07-08T15:45:23Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/551/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 464987783 | MDExOlB1bGxSZXF1ZXN0Mjk1MTI3MjEz | 546 | Facet by delimiter | simonw 9599 | open | 0 | 2 | 2019-07-07T20:06:05Z | 2019-11-18T23:46:01Z | OWNER | simonw/datasette/pulls/546 | Refs #510 |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/546/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 463544206 | MDU6SXNzdWU0NjM1NDQyMDY= | 537 | Populate "endpoint" key in ASGI scope | simonw 9599 | open | 0 | 12 | 2019-07-03T04:54:47Z | 2019-07-22T06:03:18Z | OWNER | This is a trick used by Starlette so that other layers of ASGI middleware can see which route was selected. They added it here: https://github.com/encode/starlette/commit/34d0097feb6f057bd050d5057df5a2f96b97384e If Datasette supports it as well we can benefit from it if we integrate this sentry_asgi middleware (probably as a |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/537/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 463492815 | MDU6SXNzdWU0NjM0OTI4MTU= | 534 | 500 error on m2m facet detection | simonw 9599 | open | 0 | 1 | 2019-07-03T00:42:42Z | 2020-12-17T05:08:22Z | OWNER | This may help debug:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/534/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 462117311 | MDU6SXNzdWU0NjIxMTczMTE= | 531 | /database/-/inspect | simonw 9599 | open | 0 | 1 | 2019-06-28T16:33:41Z | 2019-07-08T15:43:57Z | OWNER | Build It won't show table counts. Or maybe it will include them optionally but only for Originally posted by @simonw in https://github.com/simonw/datasette/issues/465#issuecomment-506797086 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/531/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 460095928 | MDU6SXNzdWU0NjAwOTU5Mjg= | 528 | Establish a pattern for Datasette plugins built on top of Pandas | simonw 9599 | open | 0 | 0 | 2019-06-24T21:05:52Z | 2019-06-24T21:05:52Z | OWNER | The Pandas ecosystem is huge, varied and full of tools that are really good at doing interesting analysis on top of tabular data. Pandas should not be a dependency of Datasette core, but I think there is a lot of potential in having plugins which use Pandas to apply interesting analysis to data sucked out of Datasette's SQLite tables. One example (thanks, Tony): https://github.com/ResidentMario/missingno could form the basis of a fantastic plugin for getting a high-level overview of how complete each column in a table is. Some thought is needed here about what shape these kind of plugins might take, and what plugin hooks they would use. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/528/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 459882902 | MDU6SXNzdWU0NTk4ODI5MDI= | 526 | Stream all results for arbitrary SQL and canned queries | matej-fr 50578294 | open | 0 | 23 | 2019-06-24T13:09:45Z | 2022-09-28T04:01:25Z | NONE | I think that there is a difficulty with canned queries. When I want to stream all results of a canned query TwoDays I get only first 1.000 records. Example:

returns only first 1.000 records. If I do the same with the whole database i.e.

I get correctly all records. Any ideas? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/526/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 459622390 | MDU6SXNzdWU0NTk2MjIzOTA= | 522 | Handle case-insensitive headers in a nicer way | simonw 9599 | open | 0 | 1 | 2019-06-23T21:56:34Z | 2019-06-26T18:48:53Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/522/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 459509126 | MDU6SXNzdWU0NTk1MDkxMjY= | 516 | Enforce import sort order with isort | simonw 9599 | open | 0 | 8 | 2019-06-22T20:35:50Z | 2023-08-23T02:15:36Z | OWNER | I want to use isort to order imports. A few steps here:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/516/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 459469278 | MDU6SXNzdWU0NTk0NjkyNzg= | 515 | Try shrinking official image with docker-slim | simonw 9599 | open | 0 | 0 | 2019-06-22T12:25:37Z | 2019-06-22T12:25:37Z | OWNER | This looks really promising: https://github.com/docker-slim/docker-slim If it can shave substantial size from our official container reliably we could add it to the automated build process. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/515/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 457147936 | MDU6SXNzdWU0NTcxNDc5MzY= | 512 | "about" parameter in metadata does not appear when alone | chrismp 7936571 | open | 0 | 3 | 2019-06-17T21:04:20Z | 2019-10-11T15:49:13Z | NONE | Here's an example of metadata I have for one database on datasette.

The text in Is this intended? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/512/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 456578474 | MDU6SXNzdWU0NTY1Nzg0NzQ= | 511 | Get Datasette tests passing on Windows in GitHub Actions | simonw 9599 | open | 0 | 13 | 2019-06-15T21:41:58Z | 2021-07-11T17:23:05Z | OWNER | This should almost happen as a side-effect or moving from Sanic to Uvicorn during the port to ASGI: #272 Additional steps:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/511/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 456569067 | MDU6SXNzdWU0NTY1NjkwNjc= | 510 | Ability to facet by delimiter (e.g. comma separated fields) | simonw 9599 | open | 0 | simonw 9599 | 1 | 2019-06-15T19:34:41Z | 2019-07-08T15:44:51Z | OWNER | E.g. if a field contains "Tags,With,Commas" be able to facet them in the same way as |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/510/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 455852801 | MDU6SXNzdWU0NTU4NTI4MDE= | 507 | Every datasette plugin on the ecosystem page should have a screenshot | simonw 9599 | open | 0 | 4 | 2019-06-13T17:02:51Z | 2020-09-17T02:47:35Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/507/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 451585764 | MDU6SXNzdWU0NTE1ODU3NjQ= | 499 | Accessibility for non-techie newsies? | chrismp 7936571 | open | 0 | 3 | 2019-06-03T16:49:37Z | 2019-06-05T21:22:55Z | NONE | Hi again, I'm having fun uploading datasets to Heroku via datasette. I'd like to set up datasette so that it's easy for other newsroom workers, who don't use Linux and aren't programmers, to upload datasets. Does datsette provide this out-of-the-box, or as a plugin? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/499/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

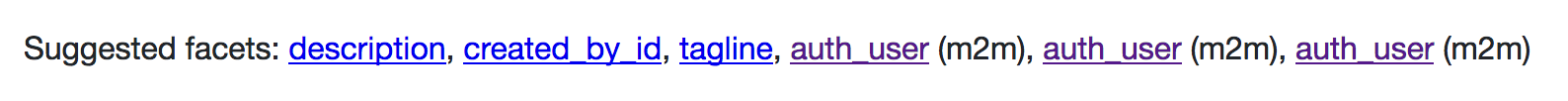

| 450032134 | MDU6SXNzdWU0NTAwMzIxMzQ= | 495 | facet_m2m gets confused by multiple relationships | simonw 9599 | open | 0 | 2 | 2019-05-29T21:37:28Z | 2020-12-17T05:08:22Z | OWNER | I got this for a database I was playing with:

I think this is because of these three tables:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/495/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 449445715 | MDU6SXNzdWU0NDk0NDU3MTU= | 491 | Figure out how to use Firebase with cloudrun to enable vanity URLs and CDN caching | simonw 9599 | open | 0 | 0 | 2019-05-28T19:48:06Z | 2019-05-28T19:48:35Z | OWNER | It looks like Firebase can solve a couple of problems with the existing

https://firebase.google.com/docs/hosting/cloud-run looks like it can help with both of these. Lots of interesting questions:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/491/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 447469253 | MDU6SXNzdWU0NDc0NjkyNTM= | 485 | Improvements to table label detection | simonw 9599 | open | 0 | simonw 9599 | 10 | 2019-05-23T06:19:49Z | 2022-10-03T00:04:42Z | OWNER | Label detection doesn't work if the primary key is called pk rather than id, so this page doesn't work: https://latest.datasette.io/fixtures/roadside_attraction_characteristics Code is here: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/485/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 447451492 | MDU6SXNzdWU0NDc0NTE0OTI= | 484 | Mechanism for displaying summary of m2m relationships in rows on table view | simonw 9599 | open | 0 | 1 | 2019-05-23T05:02:41Z | 2019-05-23T06:34:05Z | OWNER | Part of #354 (m2m support) It would be fantastic if rows that are part of a m2m relationship could display it in an additional column in the table view. It might look something like this: https://russian-ira-facebook-ads.datasettes.com/russian-ads-919cbfd/display_ads?_search=black+lives+matter

That example was achieved using a custom SQL query and datasette-json-html - but I'd like this to be a built-in feature instead. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/484/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 447408527 | MDU6SXNzdWU0NDc0MDg1Mjc= | 483 | Option to facet by date using month or year | simonw 9599 | open | 0 | 5 | 2019-05-23T01:25:29Z | 2019-05-29T21:38:27Z | OWNER | Facet by date (from #481) can take datetimes and facet them by the day component. https://latest.datasette.io/fixtures/facetable?_facet_date=created I'd like to also be able to facet by month or year. I'm not sure what the best way to achieve this is. Could be two more Facet classes (YearFacet and MonthFacet) but I think it might be nicer if the existing DateFacet could take an optional argument that changed its behaviour. But... if I do that, do I expose it in the UI somewhere or is it only available to URL-hackers? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/483/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 443021509 | MDU6SXNzdWU0NDMwMjE1MDk= | 461 | Paginate + search for databases/tables on the homepage | simonw 9599 | open | 0 | Datasette 1.0 3268330 | 4 | 2019-05-11T18:05:34Z | 2020-12-17T22:14:46Z | OWNER | Split out from #460 - in order to support large numbers of connected databases the homepage needs to be paginated. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/461/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 440325850 | MDExOlB1bGxSZXF1ZXN0Mjc1OTIzMDY2 | 452 | SQL builder utility classes | russss 45057 | open | 0 | 0 | 2019-05-04T13:57:47Z | 2019-05-04T14:03:04Z | CONTRIBUTOR | simonw/datasette/pulls/452 | This adds a straightforward set of classes to aid in the construction of SQL queries. My plan for this was to allow plugins to manipulate the

Datasette-generated SQL in a more structured way. I'm not sure that's

going to work, but I feel like this is still a step forward - it

reduces the number of intermediate variables in There are a fair number of minor structure changes in here too as I've

tried to make the ordering of |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/452/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 426722204 | MDU6SXNzdWU0MjY3MjIyMDQ= | 423 | ?_search_col=X not reflected correctly in the UI | simonw 9599 | open | 0 | 0 | 2019-03-28T21:48:19Z | 2020-11-03T19:01:59Z | OWNER | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/423/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 421546944 | MDU6SXNzdWU0MjE1NDY5NDQ= | 417 | Datasette Library | simonw 9599 | open | 0 | 12 | 2019-03-15T14:30:22Z | 2020-12-29T14:34:50Z | OWNER | The ability to run Datasette in a mode where it automatically picks up new (or modified) files in a directory tree without needing to restart the server. Suggested command: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/417/reactions",

"total_count": 8,

"+1": 8,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 411257981 | MDU6SXNzdWU0MTEyNTc5ODE= | 412 | Linked Data(sette) | sfkeller 43340 | open | 0 | 2 | 2019-02-18T00:38:14Z | 2019-03-19T10:09:46Z | NONE | I've a radical feature idea (possible first as an extension in order to experiment?): I'd like to link to a remote table from a remote database, e.g. with a function "linked_datasette()". So one could do following query:

There's a foundation in the SQL Standard called SQL/MED (https://rhaas.blogspot.com/2011/01/why-sqlmed-is-cool.html ). And here's an implementation from me in Postgres FDW to connect another Postgres "endpoint": https://pastebin.com/Fz2v64Cz . |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/412/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 400340905 | MDU6SXNzdWU0MDAzNDA5MDU= | 402 | Use SQLITE_DBCONFIG_DEFENSIVE plus other recommendations from SQLite security docs | simonw 9599 | open | 0 | 3 | 2019-01-17T15:52:28Z | 2019-01-17T16:15:21Z | OWNER |

https://twitter.com/ignoredambience/status/1085926961413869568 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/402/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 377166793 | MDU6SXNzdWUzNzcxNjY3OTM= | 372 | Docker build tools | psychemedia 82988 | open | 0 | 0 | 2018-11-04T16:02:35Z | 2018-11-04T16:02:35Z | CONTRIBUTOR | In terms of small pieces lightly joined, I note that there are several tools starting to appear for building generating Dockerfiles and building Docker containers from simpler components such as If plugin/extensions builders want to include additional packages, then things like incremental builds of composable builds that add additional items into a base Examples of Dockerfile generators / container builders: Discussions / threads (via Binderhub gitter) on:

- why Relates to things like: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/372/reactions",

"total_count": 2,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 2,

"rocket": 0,

"eyes": 0

} |

||||||||

| 377155320 | MDU6SXNzdWUzNzcxNTUzMjA= | 370 | Integration with JupyterLab | psychemedia 82988 | open | 0 | 4 | 2018-11-04T13:57:13Z | 2022-09-29T08:17:47Z | CONTRIBUTOR | I just watched a demo video for the JupyterLab Chart Editor which wraps the plotly chart editor app in a JupyterLab panel and lets you open a plotly chart JSON file in that editor. Essentially, it pops an HTML app into a panel in JupyterLab, and I think registers the app as a file viewer for a particular file type. (I'm not completely taken by it, tbh, because it means you can do irreproducible things to the chart definition file, but that's another issue). JupyterLab extensions can also open files from a dialogue as the iframe/html previewer shows: https://github.com/timkpaine/jupyterlab_iframe. This made me wonder about what For example, by right-clicking on a CSV file (for which there is already a CSV table view) in the file browser, offer a View / Run as datasette file viewer option that will:

(? Create a new SQLite db for each CSV file and launch each datasette view on a new port? Or have a JupyterLab (session?) SQLite db that stores all As a freebie, the Related: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/370/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 374953006 | MDU6SXNzdWUzNzQ5NTMwMDY= | 369 | Interface should show same JSON shape options for custom SQL queries | gfrmin 416374 | open | 0 | Datasette 1.0 3268330 | 2 | 2018-10-29T10:39:15Z | 2020-05-30T17:24:06Z | CONTRIBUTOR | At the moment the page returning a custom SQL query shows the JSON and CSV APIs, but not the multiple JSON shapes. However, adding the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/369/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

Advanced export

JSON shape: default, array, newline-delimited, object

CREATE TABLE [issues] (

[id] INTEGER PRIMARY KEY,

[node_id] TEXT,

[number] INTEGER,

[title] TEXT,

[user] INTEGER REFERENCES [users]([id]),

[state] TEXT,

[locked] INTEGER,

[assignee] INTEGER REFERENCES [users]([id]),

[milestone] INTEGER REFERENCES [milestones]([id]),

[comments] INTEGER,

[created_at] TEXT,

[updated_at] TEXT,

[closed_at] TEXT,

[author_association] TEXT,

[pull_request] TEXT,

[body] TEXT,

[repo] INTEGER REFERENCES [repos]([id]),

[type] TEXT

, [active_lock_reason] TEXT, [performed_via_github_app] TEXT, [reactions] TEXT, [draft] INTEGER, [state_reason] TEXT);

CREATE INDEX [idx_issues_repo]

ON [issues] ([repo]);

CREATE INDEX [idx_issues_milestone]

ON [issues] ([milestone]);

CREATE INDEX [idx_issues_assignee]

ON [issues] ([assignee]);

CREATE INDEX [idx_issues_user]

ON [issues] ([user]);