issues

555 rows where repo = 107914493 and state = "open" sorted by author_association

| id | node_id | number | title | user | state | locked | assignee | milestone | comments | created_at | updated_at | closed_at | author_association ▼ | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 723982480 | MDExOlB1bGxSZXF1ZXN0NTA1NDUzOTAw | 1030 | Make `package` command deal with a configuration directory argument | frankier 299380 | open | 0 | 1 | 2020-10-18T11:07:02Z | 2020-10-19T08:01:51Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1030 | Currently if we run |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1030/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 774332247 | MDExOlB1bGxSZXF1ZXN0NTQ1MjY0NDM2 | 1159 | Improve the display of facets information | lovasoa 552629 | open | 0 | Datasette 1.0 3268330 | 9 | 2020-12-24T11:01:47Z | 2023-07-31T18:57:59Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1159 | This PR changes the display of facets to hopefully make them more readable. Before | After

---|---

|

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1159/reactions",

"total_count": 4,

"+1": 4,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | |||||

| 855446829 | MDExOlB1bGxSZXF1ZXN0NjEzMTc4OTY4 | 1296 | Dockerfile: use Ubuntu 20.10 as base | tmcl-it 82332573 | open | 0 | 4 | 2021-04-12T00:23:32Z | 2021-07-20T08:52:13Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1296 | This PR changes the main Dockerfile to use ubuntu:20.10 as base image instead of python:3.9.2-slim-buster (itself based on debian:buster-slim). The Dockerfile is essentially the one from https://github.com/simonw/datasette/issues/1249#issuecomment-803698983 with some additional cleanups to slim it down. This fixes a couple of issues: 1. The SQLite version in Debian Buster (2.6.0) doesn't support generated columns 2. Installing SpatiaLite from the Debian sid repositories has the side effect of also installing updates to libc and libstdc++ from sid. As a bonus, the Docker image becomes smaller:

Reproduction of the first issue``` $ curl -O https://latest.datasette.io/fixtures.db % Total % Received % Xferd Average Speed Time Time Time Current Dload Upload Total Spent Left Speed 100 260k 0 260k 0 0 489k 0 --:--:-- --:--:-- --:--:-- 489k $ docker run -v Here is the SQLite version:

Reproduction of the second issue

Both libc and libstdc++ are backwards compatible, so the image still works, but it will result in a combination of libraries and Python versions that exists only in the Datasette image, so it's likely untested. In addition, since Debian sid is an always-changing rolling-release, the versions of libc, libstdc++, Spatialite, and their dependencies change frequently, so the library versions in the Datasette image will depend on the day when it was built. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1296/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 947596222 | MDExOlB1bGxSZXF1ZXN0NjkyNTU3Mzgx | 1399 | Multiple sort | jgryko5 87192257 | open | 0 | 0 | 2021-07-19T12:20:14Z | 2021-07-19T12:20:14Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1399 | Closes #197. I have added support for sorting by multiple parameters as mentioned in the issue above, and together with that, a suggestion on how to implement such sorting in the user interface. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1399/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1001104942 | PR_kwDOBm6k_c4r-EVH | 1475 | feat: allow joins using _through in both directions | bram2000 5268174 | open | 0 | 0 | 2021-09-20T15:28:20Z | 2021-09-20T15:28:20Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1475 | Currently the This is an admittedly hacky change to implement bidirectional joins using |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1475/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1268121674 | PR_kwDOBm6k_c45fz-O | 1757 | feat: add a wildcard for _json columns | ytjohn 163156 | open | 0 | 1 | 2022-06-11T01:01:17Z | 2022-09-06T00:51:21Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1757 | This allows _json to accept a wildcard for when there are many JSON columns that the user wants to convert. I hope this is useful. I've tested it on our datasette and haven't run into any issues. I imagine on a large set of results, there could be some performance issues, but it will probably be negligible for most use cases. On a side note, I ran into an issue where I had to upgrade black on my system beyond the pinned version in setup.py. Here is the upstream issue: https://github.com/psf/black/issues/2964 . I didn't include this in the PR yet since I didn't look into the issue too far, but I can if you would like. |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1757/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1307359454 | PR_kwDOBm6k_c47iWbd | 1772 | Convert to setup.cfg | kfdm 89725 | open | 0 | 0 | 2022-07-18T03:39:53Z | 2022-07-18T03:39:53Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/1772 | Recent versions of setuptools can run most things from setup.cfg so one can have a simpler version that does not require executing code on install. The bulk of the changes were automated by running https://pypi.org/project/setup-py-upgrade/ with a few minor edits for the bits that it can not auto convert (the initial |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1772/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1581218043 | PR_kwDOBm6k_c5JyqPy | 2025 | Add database metadata to index.html template context | palewire 9993 | open | 0 | 0 | 2023-02-12T11:16:58Z | 2023-02-12T11:17:14Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2025 | Fixes #2016 :books: Documentation preview :books:: https://datasette--2025.org.readthedocs.build/en/2025/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2025/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1586980089 | PR_kwDOBm6k_c5KF-by | 2026 | Avoid repeating primary key columns if included in _col args | runderwood 8513 | open | 0 | 0 | 2023-02-16T04:16:25Z | 2023-02-16T04:16:41Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2026 | ...while maintaining given order. Fixes #1975 (if I'm understanding correctly). :books: Documentation preview :books:: https://datasette--2026.org.readthedocs.build/en/2026/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2026/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1605481359 | PR_kwDOBm6k_c5LDwrF | 2031 | Expand foreign key references in row view as well | tmcl-it 82332573 | open | 0 | 5 | 2023-03-01T18:43:09Z | 2023-03-24T18:35:25Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2031 | Unlike the table view, the single row view does not resolve foreign key references into labels. This patch extracts the foreign key reference expansion code from TableView.data() into a standalone function that is then called by both TableView.data() and RowView.data(). :books: Documentation preview :books:: https://datasette--2031.org.readthedocs.build/en/2031/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2031/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1613974869 | PR_kwDOBm6k_c5LgPS- | 2034 | remove an unused `app` var in cli.py | wenhoujx 4370201 | open | 0 | 2 | 2023-03-07T18:19:05Z | 2023-03-29T20:56:20Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2034 | this var Feel free to ignore this PR if the deleted line actually does something. :books: Documentation preview :books:: https://datasette--2034.org.readthedocs.build/en/2034/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2034/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1639873822 | PR_kwDOBm6k_c5M29tt | 2044 | Expand labels in row view as well (patch for 0.64.x branch) | tmcl-it 82332573 | open | 0 | 0 | 2023-03-24T18:44:44Z | 2023-03-24T18:44:57Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2044 | This is a version of #2031 for the 0.64.x branch. :books: Documentation preview :books:: https://datasette--2044.org.readthedocs.build/en/2044/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2044/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

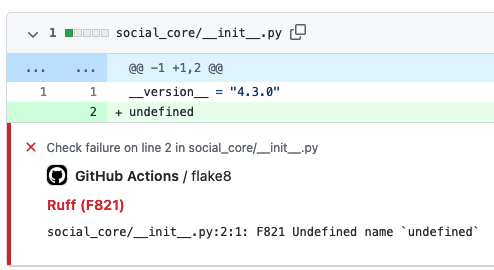

| 1661860507 | PR_kwDOBm6k_c5N_bMw | 2056 | GitHub Action to lint Python code with ruff | cclauss 3709715 | open | 0 | 6 | 2023-04-11T06:41:27Z | 2023-04-15T14:24:46Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2056 | Ruff supports over 500 lint rules and can be used to replace Flake8 (plus dozens of plugins), isort, pydocstyle, yesqa, eradicate, pyupgrade, and autoflake, all while executing (in Rust) tens or hundreds of times faster than any individual tool. The ruff Action uses minimal steps to run in ~5 seconds, rapidly providing intuitive GitHub Annotations to contributors.

:books: Documentation preview :books:: https://datasette--2056.org.readthedocs.build/en/2056/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2056/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1674322631 | PR_kwDOBm6k_c5OpEz_ | 2061 | Add "Packaging a plugin using Poetry" section in docs | rclement 1238873 | open | 0 | 0 | 2023-04-19T07:23:28Z | 2023-04-19T07:27:18Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2061 | This PR adds a new section about packaging a plugin using :books: Documentation preview :books:: https://datasette--2061.org.readthedocs.build/en/2061/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2061/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1708981860 | PR_kwDOBm6k_c5QdMea | 2074 | sort files by mtime | abbbi 3919561 | open | 0 | 0 | 2023-05-14T15:25:15Z | 2023-05-14T15:25:29Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2074 | serving multiple database files and getting tired by the default sort, changes so the sort order puts the latest changed databases to be on top of the list so don't have to scroll down, lazy as i am ;) :books: Documentation preview :books:: https://datasette--2074.org.readthedocs.build/en/2074/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2074/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1715468032 | PR_kwDOBm6k_c5QzEAM | 2076 | Datsette gpt plugin | StudioCordillera 130708713 | open | 0 | 0 | 2023-05-18T11:22:30Z | 2023-05-18T11:22:45Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2076 | :books: Documentation preview :books:: https://datasette--2076.org.readthedocs.build/en/2076/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2076/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1734786661 | PR_kwDOBm6k_c5R0fcK | 2082 | Catch query interrupted on facet suggest row count | redraw 10843208 | open | 0 | 0 | 2023-05-31T18:42:46Z | 2023-05-31T18:45:26Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2082 | Just like facet's I've included :books: Documentation preview :books:: https://datasette--2082.org.readthedocs.build/en/2082/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2082/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1794604602 | PR_kwDOBm6k_c5U-akg | 2096 | Clarify docs for descriptions in metadata | garthk 15906 | open | 0 | 0 | 2023-07-08T01:57:58Z | 2023-07-08T01:58:13Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2096 | G'day! I got confused while debugging, earlier today. That's on me, but it does strike me a little repetition in the metadata documentation might help those flicking around it rather than reading it from top to bottom. No worries if you think otherwise. :books: Documentation preview :books:: https://datasette--2096.org.readthedocs.build/en/2096/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2096/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1802613340 | PR_kwDOBm6k_c5VZhfw | 2100 | Make primary key view accessible to render_cell hook | meowcat 1563881 | open | 0 | 0 | 2023-07-13T09:30:36Z | 2023-08-10T13:15:41Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2100 | :books: Documentation preview :books:: https://datasette--2100.org.readthedocs.build/en/2100/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2100/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1865572575 | PR_kwDOBm6k_c5Yt2eO | 2155 | Fix hupper.start_reloader entry point | cadeef 79087 | open | 0 | 2 | 2023-08-24T17:14:08Z | 2023-09-27T18:44:02Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2155 | Update hupper's entry point so that click commands are processed properly. Fixes #2123 :books: Documentation preview :books:: https://datasette--2155.org.readthedocs.build/en/2155/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2155/reactions",

"total_count": 2,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 2,

"eyes": 0

} |

0 | ||||||

| 1865983069 | PR_kwDOBm6k_c5YvQSi | 2158 | add brand option to metadata.json. | publicmatt 52261150 | open | 0 | 0 | 2023-08-24T22:37:41Z | 2023-08-24T22:37:57Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2158 | This adds a brand link to the top navbar if 'brand' key is populated in metadata.json. The link will be either '#' or use the contents of 'brand_url' in metadata.json for href. I was able to get this done on my own site by replacing :books: Documentation preview :books:: https://datasette--2158.org.readthedocs.build/en/2158/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2158/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

0 | ||||||

| 1866815458 | PR_kwDOBm6k_c5YyF-C | 2159 | Implement Dark Mode colour scheme | jamietanna 3315059 | open | 0 | 0 | 2023-08-25T10:46:23Z | 2023-08-25T10:46:35Z | FIRST_TIME_CONTRIBUTOR | simonw/datasette/pulls/2159 | Closes #2095. :books: Documentation preview :books:: https://datasette--2159.org.readthedocs.build/en/2159/ |

datasette 107914493 | pull | {

"url": "https://api.github.com/repos/simonw/datasette/issues/2159/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

1 | ||||||

| 281110295 | MDU6SXNzdWUyODExMTAyOTU= | 173 | I18n and L10n support | janimo 50138 | open | 0 | 2 | 2017-12-11T17:49:58Z | 2021-04-26T12:10:01Z | NONE | It would be less geeky and more user friendly if the display strings in the filter menu and possibly other parts could be localized. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/173/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 285168503 | MDU6SXNzdWUyODUxNjg1MDM= | 176 | Add GraphQL endpoint | yozlet 173848 | open | 0 | 8 | 2017-12-29T23:21:01Z | 2020-04-21T14:16:24Z | NONE | Would make it much easier to build React & similar frontends. Maybe with https://github.com/graphql-python/sanic-graphql ? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/176/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 299760684 | MDU6SXNzdWUyOTk3NjA2ODQ= | 185 | Metadata should be a nested arbitrary KV store | carlmjohnson 222245 | open | 0 | 12 | 2018-02-23T16:02:07Z | 2019-05-13T18:33:33Z | NONE | I started using the metadata feature and was surprised to find that values are not inherited from the root object down to specific databases and tables. This makes metadata much less useful and requires a lot of pointless duplication. Ideally, metadata should allow arbitrary key-value pairs, and there should be a way of accessing metadata either in an inherited or non-inherited manner. Something like |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/185/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 319449852 | MDU6SXNzdWUzMTk0NDk4NTI= | 247 | SQLite code decoupled from Datasette | jsancho-gpl 11912854 | open | 0 | 1 | 2018-05-02T08:03:28Z | 2018-05-21T15:29:31Z | NONE | I'm working on the possibility of using Datasette with other file formats that aren't SQLite, like files in PyTables format. In order to accomplish that, I've started a fork for decoupling the code related to SQLite and putting it in an external connector to allow future connectors for a lot of file formats. It'd be nice if you could look at it and suggest improvements for a possible PR. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/247/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 330826972 | MDU6SXNzdWUzMzA4MjY5NzI= | 308 | Support extra Heroku apps:create options - region, space, team | annapowellsmith 78156 | open | 0 | 2 | 2018-06-08T23:08:33Z | 2018-09-21T14:09:28Z | NONE | It would be useful to document how to pass Heroku CLI options on |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/308/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 352768017 | MDU6SXNzdWUzNTI3NjgwMTc= | 362 | Add option to include/exclude columns in search filters | annapowellsmith 78156 | open | 0 | 1 | 2018-08-22T01:32:08Z | 2020-11-03T19:01:59Z | NONE | I have a dataset with many columns, of which only some are likely to be of interest for searching. It would be great for usability if the search filters in the UI could be configured to include/exclude columns. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/362/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 411257981 | MDU6SXNzdWU0MTEyNTc5ODE= | 412 | Linked Data(sette) | sfkeller 43340 | open | 0 | 2 | 2019-02-18T00:38:14Z | 2019-03-19T10:09:46Z | NONE | I've a radical feature idea (possible first as an extension in order to experiment?): I'd like to link to a remote table from a remote database, e.g. with a function "linked_datasette()". So one could do following query:

There's a foundation in the SQL Standard called SQL/MED (https://rhaas.blogspot.com/2011/01/why-sqlmed-is-cool.html ). And here's an implementation from me in Postgres FDW to connect another Postgres "endpoint": https://pastebin.com/Fz2v64Cz . |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/412/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 451585764 | MDU6SXNzdWU0NTE1ODU3NjQ= | 499 | Accessibility for non-techie newsies? | chrismp 7936571 | open | 0 | 3 | 2019-06-03T16:49:37Z | 2019-06-05T21:22:55Z | NONE | Hi again, I'm having fun uploading datasets to Heroku via datasette. I'd like to set up datasette so that it's easy for other newsroom workers, who don't use Linux and aren't programmers, to upload datasets. Does datasette provide this out-of-the-box, or as a plugin? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/499/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 457147936 | MDU6SXNzdWU0NTcxNDc5MzY= | 512 | "about" parameter in metadata does not appear when alone | chrismp 7936571 | open | 0 | 3 | 2019-06-17T21:04:20Z | 2019-10-11T15:49:13Z | NONE | Here's an example of metadata I have for one database on datasette.

The text in Is this intended? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/512/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 459882902 | MDU6SXNzdWU0NTk4ODI5MDI= | 526 | Stream all results for arbitrary SQL and canned queries | matej-fr 50578294 | open | 0 | 23 | 2019-06-24T13:09:45Z | 2022-09-28T04:01:25Z | NONE | I think that there is a difficulty with canned queries. When I want to stream all results of a canned query TwoDays I get only first 1.000 records. Example:

returns only first 1.000 records. If I do the same with the whole database i.e.

I get correctly all records. Any ideas? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/526/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 476852861 | MDU6SXNzdWU0NzY4NTI4NjE= | 568 | Add database_color as a configurable option | LBHELewis 50906992 | open | 0 | 1 | 2019-08-05T13:14:45Z | 2023-08-11T05:19:42Z | NONE | This would be really useful as it would allow us to tie in with colour schemes. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/568/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 510076368 | MDU6SXNzdWU1MTAwNzYzNjg= | 605 | Support queries at the table level | bsilverm 12617395 | open | 0 | 2 | 2019-10-21T15:58:30Z | 2019-10-30T18:55:37Z | NONE | Per the issue described in issue #588, it was determined queries are not supported at the table level. Per my last comment in the issue, I'd like to request support for this as it would help eliminate errors in the event certain tables are not present in the database. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/605/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 527670799 | MDU6SXNzdWU1Mjc2NzA3OTk= | 639 | updating metadata.json without recreating the app | pkoppstein 172847 | open | 0 | 6 | 2019-11-24T09:19:53Z | 2019-11-30T06:08:50Z | NONE | I've successfully "uploaded" an SQLite database (with a metadata.json file) to heroku using: The question is: how can I modify the (small) metadata.json file without having to upload the (large) SQLite database. The directions on heroku indicate I should run: But this just results in an empty directory with a warning: warning: You appear to have cloned an empty repository. I've been able to "clone" the heroku "app" using the command: but this is not a git repository.... Ideally, it seems to me, there'd be an option of the (p.s. I ran (p.p.s. Thanks for Datasette!) |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/639/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 531502365 | MDU6SXNzdWU1MzE1MDIzNjU= | 646 | Make database level information from metadata.json available in the index.html template | lagolucas 18017473 | open | 0 | Datasette 1.0 3268330 | 3 | 2019-12-02T19:55:10Z | 2022-03-15T20:50:34Z | NONE | Did a search on the issues here and didn't find anything related to what I want. I want to have information that is on the database level of the JSON like title, source and source_url, and use it on the index page. I tried some small tweaks on the python and html files, but failed to get that result. Is there a way? Thanks! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/646/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 548591089 | MDU6SXNzdWU1NDg1OTEwODk= | 657 | Allow creation of virtual tables at startup | dazzag24 1055831 | open | 0 | 4 | 2020-01-12T16:10:55Z | 2021-01-15T20:24:35Z | NONE | Hi, I've been experimenting with SQLite reading from huge datasets using this excellent Parquet extension from @cldellow. https://cldellow.com/2018/06/22/sqlite-parquet-vtable.html https://github.com/cldellow/sqlite-parquet-vtable This works really well, but I was keen to see if I could combine datasette with this. Having previously experimented with the spatialite extension I knew that datasette supports loading extensions in the underlying sqlite instance. However I hit a blocker as the current design only allows SELECT statements to be executed and so I am unable to execute the crucial CREATE VIRTUAL TABLE ......... command that is required to load the data from the parquet file into the table. It seems like this would be a simple-ish change, but I don't know enough about the architecture of datasette to start implementing this myself. Could this be done as a datasette plugin, or would this require more fundamental changes at initialisation time? My thoughts are that something at init time could detect that the user was loading a .parquet file and then switch to a mode where it loads that via the "CREATE VIRTUAL TABLE..." rather than loading the .db file in the default case? I'm happy to contribute code and testing, I just need some pointers on the best approach. Thanks Darren |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/657/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 550293770 | MDU6SXNzdWU1NTAyOTM3NzA= | 658 | How do I use the app.css as style sheet? | null92 49656826 | open | 0 | 2 | 2020-01-15T16:27:57Z | 2020-02-07T00:29:50Z | NONE | Simon, I'm trying to use the app.css (in static folder) as style sheet but the datasette on Heroku simply ignores it! I read everything about customization here and on readthedocs but still can't. Is this possible? Thanks! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/658/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 567902704 | MDU6SXNzdWU1Njc5MDI3MDQ= | 675 | --cp option for datasette publish and datasette package for shipping additional files and directories | aviflax 141844 | open | 0 | 12 | 2020-02-19T22:55:56Z | 2020-12-28T18:49:21Z | NONE | I’m working on integrating Datasette into a documentation-oriented publishing workflow internally in my company, and in order to deploy the Docker image created by So it’d be excellent if there was an additional option for this command, something like, like, I’d envision it looking something like:

I’d be happy to help design, specify, implement, and test this feature, if you’d be interested. Thanks for the fantastic tools! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/675/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 612382643 | MDU6SXNzdWU2MTIzODI2NDM= | 758 | Question: Access to immutable database-path | clausjuhl 2181410 | open | 0 | 6 | 2020-05-05T07:01:18Z | 2020-05-28T08:23:27Z | NONE | Hi Simon Is there anywhere in the app-context where one can access the hashed urlpath of the database? Currently it's included in the template-context ( |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/758/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 617323873 | MDU6SXNzdWU2MTczMjM4NzM= | 766 | Enable wildcard-searches by default | clausjuhl 2181410 | open | 0 | 2 | 2020-05-13T10:14:48Z | 2021-03-05T16:35:21Z | NONE | Hi Simon. It seems that datasette currently has wildcard-searches disabled by default (along with the boolean search-options, NEAR-queries and more, and despite the docs). If I try out the search-url provided in the docs (https://fara.datasettes.com/fara/FARA_All_ShortForms?_search=manafort), it does not handle wildcard-searches, and I'm unable to make it work on my datasette-instance. I would argue that a wildcard-search is such a standard query that it should be enabled by default. Requiring "_searchmode=raw" when using prefix-searches seems unnecessary. Plus: What happens to non-ascii searches when using "_searchmode=raw"? Is the "escape_fts"-function from datasette.utils ignored? Thanks! /Claus |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/766/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 642388564 | MDU6SXNzdWU2NDIzODg1NjQ= | 858 | publish heroku does not work on Windows 10 | simonlau 870912 | open | 0 | 7 | 2020-06-20T14:40:28Z | 2021-06-10T17:44:09Z | NONE | When executing "datasette publish heroku schools.db" on Windows 10, I get the following error

to

as well as the other |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/858/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 707849175 | MDU6SXNzdWU3MDc4NDkxNzU= | 974 | static assets and favicon aren't cached by the browser | obra 45416 | open | 0 | 1 | 2020-09-24T04:44:55Z | 2022-01-13T22:21:28Z | NONE | Using datasette to solve some frustrating problems with our fulfillment provider today, I was surprised to see repeated requests for assets under /-/static and the favicon. While it won't likely be a huge performance bottleneck, I bet datasette would feel a bit zippier if you had Uvicorn serving up some caching-related headers telling the browser it was safe to cache static assets. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/974/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 718238967 | MDU6SXNzdWU3MTgyMzg5Njc= | 1003 | from_json jinja2 filter | mhalle 649467 | open | 0 | 4 | 2020-10-09T15:30:58Z | 2020-10-09T17:17:07Z | NONE | When JSON fields are rendered in a jinja2 template, it is handy to be able to manipulate them as data (e.g., iterate over an array of values). Ansible has a "from_json" function, which just calls json.loads. It's trivial as a datasette plugin, but it seems generally useful. Does it make sense to add it directly into the app? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1003/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 735852274 | MDU6SXNzdWU3MzU4NTIyNzQ= | 1082 | DigitalOcean buildpack memory errors for large sqlite db? | justmars 39538958 | open | 0 | 3 | 2020-11-04T06:35:32Z | 2020-11-04T19:35:44Z | NONE |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1082/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 764059235 | MDU6SXNzdWU3NjQwNTkyMzU= | 1143 | More flexible CORS support in core, to encourage good security practices | yurivish 114388 | open | 0 | Datasette 1.0 3268330 | 6 | 2020-12-12T17:06:35Z | 2022-02-13T17:41:17Z | NONE | It would be nice if the As an example, Observable notebooks namespace every user's notebooks by their username and user content is served from username.observableusercontent.com, so you would set Thank you for all of your work on Datasette! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1143/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 787104850 | MDU6SXNzdWU3ODcxMDQ4NTA= | 1192 | Form Plugin for in-depth Datasette Querying | tomershvueli 1024355 | open | 0 | 0 | 2021-01-15T18:24:50Z | 2021-01-15T18:24:50Z | NONE | I envision a sort of easy-to-build form plugin that would be able to map a user's inputs to different fields/columns in a Datasette database. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1192/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 791237799 | MDU6SXNzdWU3OTEyMzc3OTk= | 1196 | Access Denied Error in Windows | QAInsights 2826376 | open | 0 | 2 | 2021-01-21T15:40:40Z | 2021-04-14T19:28:38Z | NONE | I am trying to publish a db to vercel. But while issuing the below command throwing I am using PyCharm and Python 3.9. I have reinstalled both and launched PyCharm as Admin in Windows 10. But still the issue persists. Issued command PS: localhost is working fine. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1196/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 795367402 | MDU6SXNzdWU3OTUzNjc0MDI= | 1209 | v0.54 500 error from sql query in custom template; code worked in v0.53; found a workaround | jrdmb 11788561 | open | 0 | 1 | 2021-01-27T19:08:13Z | 2021-01-28T23:00:27Z | NONE | v0.54 500 error in sql query template; code worked in v0.53; found a workaround schema: Live example of correctly rendered template in v.053: https://cosmotalks-cy6xkkbezq-uw.a.run.app/cosmotalks/talks/1 Description of problem: I needed 'sql select' code in a custom row-mydatabase-mytable.html template to lookup the series name for a foreign key integer value in the talks table. So The code below worked perfectly in v0.53 (just the relevant sql statement part is shown; full code is here):

In v0.54, that code resulted in a 500 error with a 'no such table series' message. A second query in that template also did not work but the above is fully illustrative of the problem. All templates were up-to-date along with datasette v0.54. Workaround: After fiddling around with trying different things, what worked was the syntax from Querying a different database from the datasette-template-sql github repo to add the database name to the sql statement:

Though this was found to work, it should not be necessary to add |

datasette 107914493 | issue | |||||||||

| 802513359 | MDU6SXNzdWU4MDI1MTMzNTk= | 1217 | Possible to deploy as a python app (for Rstudio connect server)? | plpxsk 6165713 | open | 0 | 4 | 2021-02-05T22:21:24Z | 2022-11-04T11:37:52Z | NONE | Is it possible to deploy a In my enterprise, I have option to deploy python apps via Rstudio Connect, and I would like to publish a I welcome any pointers to converting |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1217/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 803356942 | MDU6SXNzdWU4MDMzNTY5NDI= | 1218 | /usr/local/opt/python3/bin/python3.6: bad interpreter: No such file or directory | robmarkcole 11855322 | open | 0 | 1 | 2021-02-08T09:07:00Z | 2021-02-23T12:12:17Z | NONE | Error as above, however I do have python3.8 and the readme indicates this is supported. ``` (venv) (base) Robins-MacBook:datasette robin$ ls /usr/local/opt/python3/bin/ .. pip3 python3 python3.8 ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1218/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 808771690 | MDU6SXNzdWU4MDg3NzE2OTA= | 1225 | More flexible formatting of records with CSS grid | mhalle 649467 | open | 0 | 0 | 2021-02-15T19:28:17Z | 2021-02-15T19:28:35Z | NONE | In several applications I've been experimenting with alternate formatting of datasette query results. Lately I've found that CSS grids work very well and seem quite general for formatting rows. In CSS I use grid templates to define the layout of each record and the regions for each field, hiding the fields I don't want. It's pretty flexible and looks good. It's also a great basis for highly responsive layout. I initially thought I'd only use this feature for record detail views, but now I use it for index views as well. However, there are some limitations:

* With the existing table templates, it seems that you can change the It would be helpful to at least have an official example or test that used a grid layout for records to make sure nothing in datasette breaks with it. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1225/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 811054000 | MDU6SXNzdWU4MTEwNTQwMDA= | 1230 | Vega charts are plotted only for rows on the visible page, cluster maps only for rows in the remaining pages | Kabouik 7107523 | open | 0 | 1 | 2021-02-18T12:27:02Z | 2021-02-18T15:22:15Z | NONE | I filtered a data set on some criteria and obtained 265 results, split over three pages (100, 100, 65), and realized that Vega plots are only applied to the results displayed on the current page, instead of the whole filtered data, e.g., 100 on page 1, 100 on page 2, 65 on page 3. Is there a way to force the graphs to consider all results instead of just the page, considering that pages rarely represent sensible information? Likewise, while the cluster map does show all results on the first page, if you go to next pages, it will show all remaining results except the previous page(s), e.g., 265 on page 1, 165 on page 2, 65 on page 3. In both cases, I don't see many situations where one would like to represent the data this way, and it might even lead to interpretation errors when viewing the data. Am I missing some cases where this would be best? Perhaps a clickable option to subset visual representations according to visible pages vs. display all search results would do? [Edit] Oh, I just saw the "Load all" button under the cluster map as well as the setting to alter the max number of results. So I guess this issue is only about the Vega charts. |

datasette 107914493 | issue | |||||||||

| 814595021 | MDU6SXNzdWU4MTQ1OTUwMjE= | 1241 | Share button for copying current URL | Kabouik 7107523 | open | 0 | 6 | 2021-02-23T15:55:40Z | 2023-08-24T20:09:52Z | NONE | I use datasette in an This particular use prevents users from accessing the full URLs of their datasette views and queries, which is a shame because the way datasette handles URLs to make every view or query easy to share is awesome. I know how to get the URL from the context menu of my browser, but I don't think many visitors would do it or even notice that datasette uses permalinks for pretty much every action they do. Would it be possible to add a "Share link" button to the interface, either in datasette itself or in a plugin? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1241/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 824750134 | MDU6SXNzdWU4MjQ3NTAxMzQ= | 1251 | facet option not appearing when table is big | verajosemanuel 15836677 | open | 0 | 0 | 2021-03-08T16:54:04Z | 2021-03-08T16:54:16Z | NONE | I have a big table with more than 500.000 rows. Trying to facet by one of my columns, the options are not available as for the other smaller tables. I have tried to set it in URL as:

to no avail. Is there any limit? How can I force the option "facet" to appear for big tables? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1251/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 826064552 | MDU6SXNzdWU4MjYwNjQ1NTI= | 1253 | Capture "Ctrl + Enter" or "⌘ + Enter" to send SQL query? | rayvoelker 9308268 | open | 0 | 1 | 2021-03-09T15:00:50Z | 2021-10-30T16:00:42Z | NONE | It appears as though "Shift + Enter" triggers the form submit action to submit SQL, but could that action be bound to the "Ctrl + Enter" or "⌘ + Enter" action? I feel like that pattern already exists in a number of similar tools and could improve usability of the editor. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1253/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 826700095 | MDU6SXNzdWU4MjY3MDAwOTU= | 1255 | Facets timing out but work when filtering | robroc 1219001 | open | 0 | 2 | 2021-03-09T22:01:39Z | 2021-04-02T20:50:08Z | NONE | System info: Windows 10 Datasette 0.55 installed via pip Python 3.8.5 in a conda environment I'm getting the message |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1255/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 828858421 | MDU6SXNzdWU4Mjg4NTg0MjE= | 1258 | Allow canned query params to specify default values | wdccdw 1385831 | open | 0 | 5 | 2021-03-11T07:19:02Z | 2023-02-20T23:39:58Z | NONE | If I call a canned query that includes named parameters, without passing any parameters, datasette runs the query anyway, resulting in an HTTP status code 400, and a visible error in the browser, with only a link back to home. This means that one of the default links on https://site/database/ will lead to a broken page with no apparent way out.

Is there any way to skip performing the query when parameters aren't supplied, but otherwise render the usual canned query page? Alternatively, can I supply default values for my parameters, either when defining my canned queries or when linking to the canned query page from the default database template. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1258/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 834602299 | MDU6SXNzdWU4MzQ2MDIyOTk= | 1262 | Plugin hook that could support 'order by random()' for table view | henry501 19328961 | open | 0 | 3 | 2021-03-18T10:02:01Z | 2021-03-18T17:55:01Z | NONE | I am frequently using Datasette to quickly get a visual impression for a table without reviewing it in its entirety. Because I have some groups of similar records, the default sorting options mean that each page is very similar and not representative of the full dataset. The current interface allows sorting by columns, but random sorting is only available via custom SQL. Maybe this could be a button or link. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1262/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 849512840 | MDU6SXNzdWU4NDk1MTI4NDA= | 1288 | Facets: show counts for null | jungle-boogie 1111743 | open | 0 | 0 | 2021-04-02T22:33:44Z | 2021-04-02T22:33:44Z | NONE | Hi, Thank you for Datasette and being a fan of SQLite! Not all rows in a record will always contain data. So when using a facet on a column where some records have data and others don't, you don't get an accurate count of the results. Please consider also counting and showing null records with facets. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1288/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 863884805 | MDU6SXNzdWU4NjM4ODQ4MDU= | 1304 | Document how to send multiple values for "Named parameters" | rayvoelker 9308268 | open | 0 | 4 | 2021-04-21T13:19:06Z | 2021-12-08T03:23:14Z | NONE | https://docs.datasette.io/en/stable/sql_queries.html#named-parameters I thought that I had seen an example of how to do this example below, but I can't seem to find it

Or, maybe this isn't a fully supported feature. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1304/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 870946764 | MDU6SXNzdWU4NzA5NDY3NjQ= | 1312 | how to query many-to-many relationship via json API? | bram2000 5268174 | open | 0 | 0 | 2021-04-29T12:09:49Z | 2021-04-29T12:09:49Z | NONE | Hi, Firstly thanks for Datasette, it's great! I'm trying to use the JSON API to query data from a Datasette instance. I have a simple 3 table many-to-many relationship, like so:

the Now I want to return "all documents within category X" but I cannot see a way to do this without executing two queries; the first to lookup the row_id of category X, and the second to join I could easily write this in SQL, but this makes programmatic handling of pagination much more difficult (we'd have to dynamically modify the SQL to select the row_id and include the correct where and limit clauses). Is there a way to achieve this using the JSON API? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1312/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 919822817 | MDU6SXNzdWU5MTk4MjI4MTc= | 1376 | Official Datasette Docker image should use SQLite >= 3.31.0 (for generated columns) | jcgregorio 1726460 | open | 0 | 3 | 2021-06-13T15:25:51Z | 2021-06-13T15:39:37Z | NONE | Trying to run datasette via the Docker container doesn't seem to work:

I have confirmed that the downloaded ``` [skia-public] jcgregorio@jcgregorio840 ~/Downloads $ sqlite3 fixtures.db SQLite version 3.34.1 2021-01-20 14:10:07 Enter ".help" for usage hints. sqlite> pragma integrity_check; ok sqlite> ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1376/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 924748955 | MDU6SXNzdWU5MjQ3NDg5NTU= | 1380 | Serve all db files in a folder | stratosgear 193463 | open | 0 | 5 | 2021-06-18T10:03:32Z | 2021-11-13T08:09:11Z | NONE | I tried to get the In more detail:

Is there an option/setting that I overlooked, or is this something missing? BTW, the Thanks! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1380/reactions",

"total_count": 2,

"+1": 2,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 982803408 | MDU6SXNzdWU5ODI4MDM0MDg= | 1454 | Feature Request: Publish to IPFS | blitmap 1560788 | open | 0 | 0 | 2021-08-30T13:36:18Z | 2021-08-30T13:36:18Z | NONE | Hello, I am a huge fan of this being used for exploring data. I think it has a lot of flexibility not found in other tools. I'm not sure if what I'm asking for is possible: Can this be extended to publish to IPFS? IPFS is an attractive hosting option for decentralized journalism. Food for thought ~ |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1454/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1010112818 | I_kwDOBm6k_c48NRky | 1479 | Win32 "used by another process" error with datasette publish | kirajano 76450761 | open | 0 | 7 | 2021-09-28T19:12:00Z | 2023-09-07T02:14:16Z | NONE | Unfortunately, I was not able to deploy to fly.io. Please see the details above of the three scenarios that I tried. I am also new to datasette. Failed to deploy. Attaching logs:

1. Tried with an app created via Error error connecting to docker: An unknown error occured. Traceback (most recent call last): File "c:\users\grott\anaconda3\lib\runpy.py", line 193, in _run_module_as_main "main", mod_spec) File "c:\users\grott\anaconda3\lib\runpy.py", line 85, in _run_code exec(code, run_globals) File "C:\Users\grott\Anaconda3\Scripts\datasette.exe__main__.py", line 7, in <module> File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 829, in call return self.main(args, kwargs) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 782, in main rv = self.invoke(ctx) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 1259, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 1259, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 1066, in invoke return ctx.invoke(self.callback, ctx.params) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 610, in invoke return callback(args, **kwargs) File "c:\users\grott\anaconda3\lib\site-packages\datasette_publish_fly__init__.py", line 156, in fly "--remote-only", File "c:\users\grott\anaconda3\lib\contextlib.py", line 119, in exit next(self.gen) File "c:\users\grott\anaconda3\lib\site-packages\datasette\utils__init__.py", line 451, in temporary_docker_directory tmp.cleanup() File "c:\users\grott\anaconda3\lib\tempfile.py", line 811, in cleanup _shutil.rmtree(self.name) File "c:\users\grott\anaconda3\lib\shutil.py", line 516, in rmtree return _rmtree_unsafe(path, onerror) File "c:\users\grott\anaconda3\lib\shutil.py", line 395, in _rmtree_unsafe _rmtree_unsafe(fullname, onerror) File "c:\users\grott\anaconda3\lib\shutil.py", line 404, in _rmtree_unsafe onerror(os.rmdir, path, sys.exc_info()) File "c:\users\grott\anaconda3\lib\shutil.py", line 402, in _rmtree_unsafe 
os.rmdir(path) PermissionError: [WinError 32] The process cannot access the file because it is being used by another process: 'C:\Users\grott\AppData\Local\Temp\tmpgcm8cz66\frosty-fog-8565' ```

Error not possible to validate configuration: server returned Post "https://api.fly.io/graphql": unexpected EOF Traceback (most recent call last):

File "c:\users\grott\anaconda3\lib\runpy.py", line 193, in _run_module_as_main These are also the contents of the generated .toml file in 2 scenario: ``` fly.toml file generated for dark-feather-168 on 2021-09-28T20:35:44+02:00app = "dark-feather-168" kill_signal = "SIGINT" kill_timeout = 5 processes = [] [env] [experimental] allowed_public_ports = [] auto_rollback = true [[services]] http_checks = [] internal_port = 8080 processes = ["app"] protocol = "tcp" script_checks = [] [services.concurrency] hard_limit = 25 soft_limit = 20 type = "connections" [[services.ports]] handlers = ["http"] port = 80 [[services.ports]] handlers = ["tls", "http"] port = 443 [[services.tcp_checks]] grace_period = "1s" interval = "15s" restart_limit = 6 timeout = "2s" ```

```[+] Building 147.3s (11/11) FINISHED => [internal] load build definition from Dockerfile 0.2s => => transferring dockerfile: 396B 0.0s => [internal] load .dockerignore 0.1s => => transferring context: 2B 0.0s => [internal] load metadata for docker.io/library/python:3.8 4.7s => [auth] library/python:pull token for registry-1.docker.io 0.0s => [internal] load build context 0.1s => => transferring context: 82.37kB 0.0s => [1/5] FROM docker.io/library/python:3.8@sha256:530de807b46a11734e2587a784573c12c5034f2f14025f838589e6c0e3 108.3s => => resolve docker.io/library/python:3.8@sha256:530de807b46a11734e2587a784573c12c5034f2f14025f838589e6c0e3b5 0.0s => => sha256:56182bcdf4d4283aa1f46944b4ef7ac881e28b4d5526720a4e9ba03a4730846a 2.22kB / 2.22kB 0.0s => => sha256:955615a668ce169f8a1443fc6b6e6215f43fe0babfb4790712a2d3171f34d366 54.93MB / 54.93MB 21.6s => => sha256:911ea9f2bd51e53a455297e0631e18a72a86d7e2c8e1807176e80f991bde5d64 10.87MB / 10.87MB 15.5s => => sha256:530de807b46a11734e2587a784573c12c5034f2f14025f838589e6c0e3b5c5b6 1.86kB / 1.86kB 0.0s => => sha256:ff08f08727e50193dcf499afc30594c47e70cc96f6fcfd1a01240524624264d0 8.65kB / 8.65kB 0.0s => => sha256:2756ef5f69a5190f4308619e0f446d95f5515eef4a814dbad0bcebbbbc7b25a8 5.15MB / 5.15MB 6.4s => => sha256:27b0a22ee906271a6ce9ddd1754fdd7d3b59078e0b57b6cc054c7ed7ac301587 54.57MB / 54.57MB 37.7s => => sha256:8584d51a9262f9a3a436dea09ba40fa50f85802018f9bd299eee1bf538481077 196.45MB / 196.45MB 82.3s => => sha256:524774b7d3638702fe9ae0ea3fcfb81b027dfd75cc2fc14f0119e764b9543d58 6.29MB / 6.29MB 26.6s => => extracting sha256:955615a668ce169f8a1443fc6b6e6215f43fe0babfb4790712a2d3171f34d366 5.4s => => sha256:9460f6b75036e38367e2f27bb15e85777c5d6cd52ad168741c9566186415aa26 16.81MB / 16.81MB 40.5s => => extracting sha256:2756ef5f69a5190f4308619e0f446d95f5515eef4a814dbad0bcebbbbc7b25a8 0.6s => => extracting sha256:911ea9f2bd51e53a455297e0631e18a72a86d7e2c8e1807176e80f991bde5d64 0.6s => => 
sha256:9bc548096c181514aa1253966a330134d939496027f92f57ab376cd236eb280b 232B / 232B 40.1s => => extracting sha256:27b0a22ee906271a6ce9ddd1754fdd7d3b59078e0b57b6cc054c7ed7ac301587 5.8s => => sha256:1d87379b86b89fd3b8bb1621128f00c8f962756e6aaaed264ec38db733273543 2.35MB / 2.35MB 41.8s => => extracting sha256:8584d51a9262f9a3a436dea09ba40fa50f85802018f9bd299eee1bf538481077 18.8s => => extracting sha256:524774b7d3638702fe9ae0ea3fcfb81b027dfd75cc2fc14f0119e764b9543d58 1.2s => => extracting sha256:9460f6b75036e38367e2f27bb15e85777c5d6cd52ad168741c9566186415aa26 2.9s => => extracting sha256:9bc548096c181514aa1253966a330134d939496027f92f57ab376cd236eb280b 0.0s => => extracting sha256:1d87379b86b89fd3b8bb1621128f00c8f962756e6aaaed264ec38db733273543 0.8s => [2/5] COPY . /app 2.3s => [3/5] WORKDIR /app 0.2s => [4/5] RUN pip install -U datasette 26.9s => [5/5] RUN datasette inspect covid.db --inspect-file inspect-data.json 3.1s => exporting to image 1.2s => => exporting layers 1.2s => => writing image sha256:b5db0c205cd3454c21fbb00ecf6043f261540bcf91c2dfc36d418f1a23a75d7a 0.0s Use 'docker scan' to run Snyk tests against images to find vulnerabilities and learn how to fix them Traceback (most recent call last): "main", mod_spec) File "c:\users\grott\anaconda3\lib\runpy.py", line 85, in _run_code exec(code, run_globals) File "C:\Users\grott\Anaconda3\Scripts\datasette.exe__main__.py", line 7, in <module> File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 829, in call return self.main(args, kwargs) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 782, in main rv = self.invoke(ctx) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 1259, in invoke return _process_result(sub_ctx.command.invoke(sub_ctx)) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 1066, in invoke return ctx.invoke(self.callback, ctx.params) File "c:\users\grott\anaconda3\lib\site-packages\click\core.py", line 610, in invoke return 
callback(args, **kwargs) File "c:\users\grott\anaconda3\lib\site-packages\datasette\cli.py", line 283, in package call(args) File "c:\users\grott\anaconda3\lib\contextlib.py", line 119, in exit next(self.gen) File "c:\users\grott\anaconda3\lib\site-packages\datasette\utils__init__.py", line 451, in temporary_docker_directory tmp.cleanup() File "c:\users\grott\anaconda3\lib\tempfile.py", line 811, in cleanup _shutil.rmtree(self.name) File "c:\users\grott\anaconda3\lib\shutil.py", line 516, in rmtree return _rmtree_unsafe(path, onerror) File "c:\users\grott\anaconda3\lib\shutil.py", line 395, in _rmtree_unsafe _rmtree_unsafe(fullname, onerror) File "c:\users\grott\anaconda3\lib\shutil.py", line 404, in _rmtree_unsafe onerror(os.rmdir, path, sys.exc_info()) File "c:\users\grott\anaconda3\lib\shutil.py", line 402, in _rmtree_unsafe os.rmdir(path) PermissionError: [WinError 32] The process cannot access the file because it is being used by another process: 'C:\Users\grott\AppData\Local\Temp\tmpkb27qid3\datasette'``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1479/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1049946823 | I_kwDOBm6k_c4-lOrH | 1502 | Full-text search: No support to unary "-" operator | gustavorps 516827 | open | 0 | 0 | 2021-11-10T15:11:19Z | 2021-11-10T15:11:19Z | NONE | datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1502/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||||

| 1072106103 | I_kwDOBm6k_c4_5wp3 | 1542 | feature request: order and dependency of plugins (that use js) | fs111 33631 | open | 0 | 1 | 2021-12-06T12:40:45Z | 2021-12-15T17:47:08Z | NONE | I have been playing with datasette for the last couple of weeks and it is great! I am a big fan of Basically what I would like to have is a way to say: load my plugin after the plugins I depend on have been loaded and rendered. There seems to be no prior art where plugins have these dependencies on the js level, so I was wondering if that could be added or, if it exists, how to do it. Basically what I want to do is: my-awesome-plugin has a dependency on datasette-cluster-map. Whenever datasette-cluster-map has finished rendering on page load, call my plugin, but no earlier. To make that work, datasette probably needs some total order in which plugins are loaded and initialized. Since I am new to datasette, I may be missing something obvious, so please let me know if the above makes no sense. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1542/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1091257796 | I_kwDOBm6k_c5BC0XE | 1584 | give error with recursive sql | tunguyenatwork 58088336 | open | 0 | 0 | 2021-12-30T18:53:16Z | 2021-12-30T18:53:16Z | NONE | I got an error "near "WITH": syntax error" after I upgraded to version 0.59 from 0.52.4. This error is related to recursive sql. It worked well on the previous version but fails after the upgrade. Below is an example of the sql: WITH RECURSIVE manager_of(position, super_position) AS (SELECT position, case ifnull(INDIRECT_SUPER_POSITION,'') when '' then super_position else INDIRECT_SUPER_POSITION end as SUPER_POSITION FROM position where super_position<>'SGV000000001' and super_position!='' and position <> super_position),chain_manager_of_position(position, level) AS (SELECT super_position, 1 as level FROM manager_of WHERE super_position!='' and (position=:pos or position in (Select position from employee where employee=:ein)) UNION ALL SELECT super_position, level+1 as level FROM manager_of JOIN chain_manager_of_position USING(position)) SELECT * FROM chain_manager_of_position left join employee using(position) where employee is not NULL order by level limit 1 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1584/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1121121305 | I_kwDOBm6k_c5C0vQZ | 1618 | Reconsider policy on blocking queries containing the string "pragma" | strada 770231 | open | 0 | 6 | 2022-02-01T19:39:46Z | 2022-02-02T19:42:03Z | NONE | First of all, thanks for creating this cool project, and also supporting publishing to various hosting services out of the box. While testing out, I noticed legitimate queries such as

Example as seen from a Datasette instance: https://fivethirtyeight.datasettes.com/polls?sql=select+*+from+books+where+title+like+%27Pragmatic%25%27%0D%0A I'd propose a regular expression like

I can create a pull request with this change, unless the maintainers think it would allow unwanted queries to be executed. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1618/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1129052172 | I_kwDOBm6k_c5DS_gM | 1633 | base_url or prefix does not work with _exact match | henrikek 6613091 | open | 0 | 2 | 2022-02-09T21:45:07Z | 2022-04-28T09:12:56Z | NONE | When I hit the "Apply" button to search with the "_exact" syntax for a column, the URL prefix is removed from the URL.

And the result is:

If I add the marked row to url_builder.py it seems to work:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1633/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1148725876 | I_kwDOBm6k_c5EeCp0 | 1640 | Support static assets where file length may change, e.g. logs | broccolihighkicks 57859326 | open | 0 | 2 | 2022-02-24T00:34:42Z | 2022-03-05T01:19:25Z | NONE | This is a bit of an oxymoron. I am serving a log.txt file for a background process using the Datasette --static CLI. This is useful as I can observe a background process from the web UI to see any errors that occur (instead of spelunking the logs via docker exec/ssh etc). I get this error, which I think is because Datasette assumes that the size of the content does not change (but appending new log lines means the content length changes).

Thanks, I am finding Datasette very useful. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1640/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1154399841 | I_kwDOBm6k_c5Ezr5h | 1645 | Sensible `cache-control` headers for static assets, including those served by plugins | curiousleo 697092 | open | 0 | Datasette 1.0 3268330 | 4 | 2022-02-28T18:12:03Z | 2022-03-08T02:59:29Z | NONE | What I'm seeing

With A table view returns

A static asset returns no

What I expected to see

I expected the static asset to return a

Why this matters

I'm productionising a Datasette deployment right now and was looking into putting it behind a Varnish instance. I was surprised to see requests for static assets being served from Datasette rather than Varnish, this is what led me to look more closely at the response headers. While Datasette serves those static assets pretty quickly, I don't see why Datasette should serve them. By their nature, static assets like images and JS files are very cacheable, so it should be easy to serve them from a cache like Varnish. (Note that Varnish can easily be configured to override this header, enabling caching for static assets. But it would be better if this override was not necessary.)

Discussion

It seems clear to me that serving static assets without a I see two options here: A. Static assets use the same logic as table / SQL views to set the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1645/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

|||||||

| 1174655187 | I_kwDOBm6k_c5GA9DT | 1671 | Filters fail to work correctly against calculated numeric columns returned by SQL views because type affinity rules do not apply | rayvoelker 9308268 | open | 0 | 8 | 2022-03-20T19:17:24Z | 2022-03-22T17:43:12Z | NONE | I found a strange behavior, and I'm not sure if it's related to views and boolean values perhaps, or if there's something else weird going on here, but I'll provide an example that may help show what I'm seeing happen.

```bash
#!/bin/bash
echo "\"id\",\"expiration_date\"
0,2018-01-04
1,2019-01-05
2,2020-01-06
3,2021-01-07
4,2022-01-08
5,2023-01-09
6,2024-01-10
7,2025-01-11
8,2026-01-12
9,2027-01-13
" > test.csv

csvs-to-sqlite test.csv test.db

sqlite-utils create-view --replace test.db test_view "select id, expiration_date, case when julianday('NOW') >= julianday(expiration_date) then 1 else 0 end as has_expired FROM test"
```

Thanks again and let me know if you want me to provide anything else! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1671/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1181037277 | I_kwDOBm6k_c5GZTLd | 1686 | heroku bails if app name specified in datasette publish is the same as existing app | tlongers 2115933 | open | 0 | 0 | 2022-03-25T17:10:34Z | 2022-03-25T17:10:34Z | NONE | Seems that

The resulting error has the below traceback:

It's a solid failsafe, but does |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1686/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1221849746 | I_kwDOBm6k_c5I0_KS | 1732 | Custom page variables aren't decoded | tannewt 52649 | open | 0 | 2 | 2022-04-30T14:55:46Z | 2022-05-03T01:50:45Z | NONE | I have a page Datasette should unescape the URL components before passing them into the template. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1732/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1247315144 | I_kwDOBm6k_c5KWITI | 1749 | LDAP auth plugin | benswift 380241 | open | 0 | 0 | 2022-05-25T01:35:12Z | 2022-05-25T01:35:12Z | NONE | A search of the plugins directory doesn't turn up anything, but is it possible to set up a Datasette app which uses my organisation's LDAP for auth? If not, how much work would it be to write one? (I may have some spare cycles on my team to do this, but we haven't written a Datasette plugin before.) |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1749/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1251700382 | I_kwDOBm6k_c5Km26e | 1750 | Allow `label_column` to specify array of columns | knutwannheden 408765 | open | 0 | 0 | 2022-05-28T18:45:48Z | 2022-05-28T18:45:48Z | NONE | I think it would be great if the Datasette metadata would allow the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1750/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1266207143 | I_kwDOBm6k_c5LeMmn | 1755 | Gunicorn | ar-jan 1176293 | open | 0 | 0 | 2022-06-09T14:18:46Z | 2022-06-09T14:18:46Z | NONE | I've read issue #514, which resulted in running Datasette via systemd as the recommended approach. We've also adopted this (for now), but I notice that Uvicorn says the following:

We usually deploy Python applications via Gunicorn for these process management features (e.g. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1755/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1280799259 | I_kwDOBm6k_c5MV3Ib | 1761 | ensure_ascii=False | mustafa0x 1473102 | open | 0 | 0 | 2022-06-22T19:58:13Z | 2022-06-22T19:58:30Z | NONE | Hi, thanks for the project! For the JSON output, I would consider defaulting to |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1761/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1323332006 | I_kwDOBm6k_c5O4HGm | 1774 | Request of feature for mongo | johnfelipe 428820 | open | 0 | 0 | 2022-07-31T01:00:05Z | 2022-07-31T01:00:05Z | NONE | I would love it if we could use Datasette for Mongo and all pipelines and workflows |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1774/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1323346408 | I_kwDOBm6k_c5O4Kno | 1775 | i18n support | johnfelipe 428820 | open | 0 | 9 | 2022-07-31T02:51:04Z | 2023-02-10T18:04:40Z | NONE | I want to contribute by translating the UI into es, de, and it if you share the strings |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1775/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1337541526 | I_kwDOBm6k_c5PuUOW | 1780 | `facet_time_limit_ms` and `sql_time_limit_ms` overlap? | davepeck 53165 | open | 0 | 1 | 2022-08-12T17:55:37Z | 2022-08-15T23:50:08Z | NONE | I needed more than the default 200ms to facet a specific column in a database I was working with, so I ran But it still didn't work; it took a moment to realize I also needed to up my I'm happy to submit a PR that documents this behavior if it's helpful. Or, if there's a code change we'd like to make (like making sure Apologies if I missed this somewhere in the docs. And: thanks. I'm really enjoying the simple, effective tooling datasette gives me out of the box for exploring my databases! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1780/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 1347717749 | I_kwDOBm6k_c5QVIp1 | 1791 | Updating metadata.json on Datasette for MacOS | ment4list 1780782 | open | 0 | 1 | 2022-08-23T10:41:16Z | 2022-08-23T13:29:51Z | NONE | I've installed Datasette for Mac as per the documentation and it's working great! However, I'm not sure how to go about adding something like "Canned Queries" or utilising other advanced features or settings by manipulating the I can view these files from the Datasette App from the top right "burger" menu but it only shows the contents of the file with no way to edit or change it. Am I missing something? Where can I update the PS: This is a fantastic tool! Thanks so much for all the effort and especially adding a bunch of different ways to get started quickly! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1791/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,