issues

2,436 rows where type = "issue" sorted by updated_at descending


| id | node_id | number | title | user | state | locked | assignee | milestone | comments | created_at | updated_at ▲ | closed_at | author_association | pull_request | body | repo | type | active_lock_reason | performed_via_github_app | reactions | draft | state_reason |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 836273891 | MDU6SXNzdWU4MzYyNzM4OTE= | 1266 | Documentation for Response.asgi_send(send) method | simonw 9599 | closed | 0 | 1 | 2021-03-19T18:52:49Z | 2021-03-20T21:35:00Z | 2021-03-20T21:32:28Z | OWNER | I found myself wanting to use this method for https://github.com/simonw/datasette-auth-passwords/issues/15 - but it's not documented. It should be documented. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1266/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 836123030 | MDU6SXNzdWU4MzYxMjMwMzA= | 1265 | Support for HTTP Basic Authentication | yunzheng 468612 | closed | 0 | 3 | 2021-03-19T15:31:09Z | 2021-03-19T22:05:12Z | 2021-03-19T21:03:09Z | NONE | It would be nice if datasette could support HTTP Basic Authentication. For now I could of course leverage Nginx for basic authentication, but it would be nice to have support for this in datasette by default or via a plugin like datasette-auth-github. My main use case is to put the whole datasette instance behind a username/password prompt via Basic Auth, not just specific URLs. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1265/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 836063389 | MDU6SXNzdWU4MzYwNjMzODk= | 17 | Datetime columns are not properly formatted to be recognizes as datetime | n8henrie 1234956 | open | 0 | 0 | 2021-03-19T14:33:04Z | 2021-03-19T14:33:04Z | NONE | Currently, the datetimes are formatted in a way that is not recognized by datasette-vega for plotting with a For example, if you have datasette running locally with

The plot is blank unless you choose The If instead the format for this column is changed slightly: I have a PR that addresses this issue, will submit shortly. |

healthkit-to-sqlite 197882382 | issue | {

"url": "https://api.github.com/repos/dogsheep/healthkit-to-sqlite/issues/17/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 834602299 | MDU6SXNzdWU4MzQ2MDIyOTk= | 1262 | Plugin hook that could support 'order by random()' for table view | henry501 19328961 | open | 0 | 3 | 2021-03-18T10:02:01Z | 2021-03-18T17:55:01Z | NONE | I am frequently using Datasette to quickly get a visual impression for a table without reviewing it in its entirety. Because I have some groups of similar records, the default sorting options mean that each page is very similar and not representative of the full dataset. The current interface allows sorting by columns, but random sorting is only available via custom SQL. Maybe this could be a button or link. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1262/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 830283447 | MDU6SXNzdWU4MzAyODM0NDc= | 34 | bucket name | dsisnero 6213 | open | 0 | 0 | 2021-03-12T16:40:57Z | 2021-03-12T16:40:57Z | NONE | I followed the instructions to set up credentials but I am getting an invalid bucket name. Can you put a sample auth.json file in the base that shows the correct format for this? Thanks |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/34/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 787173276 | MDU6SXNzdWU3ODcxNzMyNzY= | 1193 | Research plugin hook for alternative database backends | simonw 9599 | open | 0 | 1 | 2021-01-15T20:27:50Z | 2021-03-12T01:01:54Z | OWNER | I started exploring what Datasette would look like running against PostgreSQL in #670 and @dazzag24 did some work on Parquet described in #657. I had initially thought this was WAY too much additional complexity, but I'm beginning to think that the A bigger issue is SQL generation, but I realized that most of Datasette's SQL generation code exists just in the Very unlikely for this to make it into Datasette 1.0, but maybe this would be the defining feature of Datasette 2.0? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1193/reactions",

"total_count": 3,

"+1": 3,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 824067604 | MDU6SXNzdWU4MjQwNjc2MDQ= | 1250 | Research: Plugin hook for alternative database connections | simonw 9599 | closed | 0 | 2 | 2021-03-08T00:28:15Z | 2021-03-12T01:01:25Z | 2021-03-12T01:01:17Z | OWNER | The The real win would be if this could lead to running Datasette against PostgreSQL. I made some initial explorations in that direction a while ago in #670. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1250/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 794554881 | MDU6SXNzdWU3OTQ1NTQ4ODE= | 1208 | A lot of open(file) functions are used without a context manager thus producing ResourceWarning: unclosed file <_io.TextIOWrapper | kbaikov 4488943 | closed | 0 | 2 | 2021-01-26T20:56:28Z | 2021-03-11T16:15:49Z | 2021-03-11T16:15:49Z | CONTRIBUTOR | Your code is full of open files that are never closed, especially when you deal with reading/writing json/yaml files. If you run python with warnings enabled this problem becomes evident. This probably contributes to some memory leaks in long running datasettes if the GC will not 'collect' those resources properly. This is easily fixed by using a context manager instead of just using open:

In some newer parts of the code you use the Path object's 'read_text' and 'write_text' functions, which close the file properly and are preferred in some cases. If you want, I can create a PR for all the places I found this pattern in. Below is a fraction of the places where I found a ResourceWarning:

```python
update-docs-help.py:
  20      actual = actual.replace("Usage: cli ", "Usage: datasette ")
  21:     open(docs_path / filename, "w").write(actual)
  22

datasette\app.py:
  210          ):
  211:             inspect_data = json.load((config_dir / "inspect-data.json").open())
  212          if immutables is None:

  266          if config_dir and (config_dir / "settings.json").exists() and not config:
  267:             config = json.load((config_dir / "settings.json").open())
  268          self._settings = dict(DEFAULT_SETTINGS, **(config or {}))

  445          self._app_css_hash = hashlib.sha1(
  446:             open(os.path.join(str(app_root), "datasette/static/app.css"))
  447              .read()

datasette\cli.py:
  130      else:
  131:         out = open(inspect_file, "w")
  132      loop = asyncio.get_event_loop()

  459      if inspect_file:
  460:         inspect_data = json.load(open(inspect_file))
  461
```

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1208/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||
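For issue 1208 above, the pattern the reporter recommends can be sketched roughly as follows: wrap `open()` in a `with` block so the file handle is closed as soon as the block exits, or use the `Path.read_text` / `Path.write_text` helpers. The helper name `load_json`, the file name, and the sample setting are illustrative assumptions, not Datasette code.

```python
import json
import tempfile
from pathlib import Path


def load_json(path):
    """Read a JSON file, closing the handle as soon as the block exits."""
    # Hypothetical helper for illustration; not part of Datasette.
    with open(path) as fp:
        return json.load(fp)


# Small demonstration using a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    settings_path = Path(tmp) / "settings.json"
    # Path.write_text / Path.read_text open and close the file for you.
    settings_path.write_text(json.dumps({"default_page_size": 50}))
    settings = load_json(settings_path)
    same_settings = json.loads(settings_path.read_text())
```

Running Python with `-W default` (or `-W error::ResourceWarning`) is one way to surface any remaining unclosed files.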

| 824750134 | MDU6SXNzdWU4MjQ3NTAxMzQ= | 1251 | facet option not appearing when table is big | verajosemanuel 15836677 | open | 0 | 0 | 2021-03-08T16:54:04Z | 2021-03-08T16:54:16Z | NONE | I have a big table with more than 500.000 rows. Trying to facet by one of my columns, the options are not available as for the other smaller tables. I have tried to set it in URL as:

to no avail. Is there any limit? How can I force the "facet" option to appear for big tables? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1251/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 823035080 | MDU6SXNzdWU4MjMwMzUwODA= | 1248 | duckdb database (very low performance in SQLite) | verajosemanuel 15836677 | closed | 0 | 1 | 2021-03-05T12:20:29Z | 2021-03-08T00:25:27Z | 2021-03-08T00:25:27Z | NONE | My sqlite is getting too big to be processed by datasette (more than 10 minutes waiting to load) so I am working with duckdb and is waaaaay faster. I think the fastest embeddable database actually. Taking into account DuckDb is SQLite based it would be GREAT to use it with datasette. is that possible? Regards and thanks for a superb job |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1248/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 617323873 | MDU6SXNzdWU2MTczMjM4NzM= | 766 | Enable wildcard-searches by default | clausjuhl 2181410 | open | 0 | 2 | 2020-05-13T10:14:48Z | 2021-03-05T16:35:21Z | NONE | Hi Simon. It seems that datasette currently has wildcard-searches disabled by default (along with the boolean search-options, NEAR-queries and more, and despite the docs). If I try out the search-url provided in the docs (https://fara.datasettes.com/fara/FARA_All_ShortForms?_search=manafort), it does not handle wildcard-searches, and I'm unable to make it work on my datasette-instance. I would argue that wildcard-searches is such a standard query, that it should be enabled by default. Requiring "_searchmode=raw" when using prefix-searches seems unnecessary. Plus: What happens to non-ascii searches when using "_searchmode=raw"? Is the "escape_fts"-function from datasette.utils ignored? Thanks! /Claus |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/766/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 778380836 | MDU6SXNzdWU3NzgzODA4MzY= | 4 | Feature Request: Gmail | Btibert3 203343 | open | 0 | 5 | 2021-01-04T21:31:09Z | 2021-03-04T20:54:44Z | NONE | From takeout, I only exported my Gmail account. Ideally I could parse this into sqlite via this tool. |

google-takeout-to-sqlite 206649770 | issue | {

"url": "https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/4/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 821841046 | MDU6SXNzdWU4MjE4NDEwNDY= | 6 | Upgrade to latest sqlite-utils | simonw 9599 | open | 0 | 1 | 2021-03-04T07:21:54Z | 2021-03-04T07:22:51Z | MEMBER | This is pinned to v1 at the moment. |

google-takeout-to-sqlite 206649770 | issue | {

"url": "https://api.github.com/repos/dogsheep/google-takeout-to-sqlite/issues/6/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 818684978 | MDU6SXNzdWU4MTg2ODQ5Nzg= | 243 | How can i use this utils to deal with fts on column meta of tables ? | svjack 27874014 | open | 0 | 0 | 2021-03-01T09:45:05Z | 2021-03-01T09:45:05Z | NONE | Thank you for releasing this great project. When I use it on a multi-table database, I want to implement convenient search on column names across different tables. I want to build a meta table that stores metadata about the columns of the different tables, and search that meta table to get rows from the data tables it describes. Does this project provide a simple function for this? You can think of it as a knowledge graph about the tables in the database, which I save into the database with FTS enabled. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/243/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 818430405 | MDU6SXNzdWU4MTg0MzA0MDU= | 1247 | datasette.add_memory_database() method | simonw 9599 | closed | 0 | 2 | 2021-03-01T03:48:38Z | 2021-03-01T04:02:26Z | 2021-03-01T04:02:26Z | OWNER | I just wrote this code: It would be nice if you didn't have to separately instantiate a database object here. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1247/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 817597268 | MDU6SXNzdWU4MTc1OTcyNjg= | 1246 | Suggest for ArrayFacet possibly confused by blank values | simonw 9599 | closed | 0 | 3 | 2021-02-26T19:11:52Z | 2021-03-01T03:46:11Z | 2021-03-01T03:46:11Z | OWNER | I sometimes don't get the suggestion for facet-by-array for columns that contain arrays. I think it may be because they have empty spaces in them - or perhaps it's because the null detection doesn't actually work. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1246/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 718259202 | MDU6SXNzdWU3MTgyNTkyMDI= | 1005 | Remove xfail tests when new httpx is released | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 3 | 2020-10-09T16:00:19Z | 2021-02-28T22:41:08Z | 2021-02-28T22:41:08Z | OWNER |

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1005/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 814591962 | MDU6SXNzdWU4MTQ1OTE5NjI= | 1240 | Allow facetting on custom queries | Kabouik 7107523 | closed | 0 | 3 | 2021-02-23T15:52:19Z | 2021-02-26T18:19:46Z | 2021-02-26T18:18:18Z | NONE | Facets are a tremendously useful feature, especially for people peeking at the database for the first time and still having little knowledge about the details of the data. It is of great assistance to discover interesting features to explore further in advanced queries. Yet, it seems it's impossible to use facets when running a custom SQL query, be it from the little gear icons in column names, the facet suggestions at the top (hidden when performing a custom query), or by appending a facet code to the URL. Is there a technical limitation, or is this something that could be unlocked easily? |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1240/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 817528452 | MDU6SXNzdWU4MTc1Mjg0NTI= | 1244 | Plugin tip: look at the examples linked from the hooks page | simonw 9599 | closed | 0 | 1 | 2021-02-26T17:18:27Z | 2021-02-26T17:30:38Z | 2021-02-26T17:27:15Z | OWNER | Someone asked "what are good example plugins I can look at?" and I realized that the answer is to look through the example links on https://docs.datasette.io/en/stable/plugin_hooks.html - but that tip should be written down somewhere on the https://docs.datasette.io/en/stable/writing_plugins.html page. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1244/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 815554385 | MDU6SXNzdWU4MTU1NTQzODU= | 237 | db["my_table"].drop(ignore=True) parameter, plus sqlite-utils drop-table --ignore and drop-view --ignore | mhalle 649467 | closed | 0 | 3 | 2021-02-24T14:55:06Z | 2021-02-25T17:11:41Z | 2021-02-25T17:11:41Z | NONE | When I'm generating a derived table in python, I often drop the table and create it from scratch. However, the first time I generate the table, it doesn't exist, so the drop raises an exception. That means more boilerplate. I was going to submit a pull request that adds an "if_exists" option to the However, for a utility like sqlite_utils, perhaps the "IF EXISTS" SQL semantics is what you want most of the time, and thus should be the default. What do you think? |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/237/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||
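The "IF EXISTS" semantics discussed in issue 237 above can be sketched with plain SQL. This example uses the standard-library `sqlite3` module rather than sqlite-utils, and the table name `derived` and the function name `rebuild_derived_table` are made up for illustration; it only shows why the first run no longer needs exception-handling boilerplate.

```python
import sqlite3

conn = sqlite3.connect(":memory:")


def rebuild_derived_table(conn):
    """Drop and recreate a derived table from scratch."""
    # IF EXISTS makes the drop a no-op on the first run, when the table is absent.
    conn.execute("DROP TABLE IF EXISTS derived")
    conn.execute("CREATE TABLE derived (id INTEGER PRIMARY KEY, total INTEGER)")
    conn.execute("INSERT INTO derived (total) VALUES (42)")
    conn.commit()


rebuild_derived_table(conn)  # first run: nothing to drop, no exception
rebuild_derived_table(conn)  # second run: drops the old table and recreates it
row_count = conn.execute("SELECT count(*) FROM derived").fetchone()[0]
```

The issue title suggests the sqlite-utils equivalent is an `ignore=True` argument to `.drop()`, so ignoring a missing table is opt-in rather than the default.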

| 816523763 | MDU6SXNzdWU4MTY1MjM3NjM= | 238 | .add_foreign_key() corrupts database if column contains a space | simonw 9599 | closed | 0 | 1 | 2021-02-25T15:07:20Z | 2021-02-25T16:54:02Z | 2021-02-25T16:54:02Z | OWNER | I ran this: And got this:

```
~/jupyter-venv/lib/python3.9/site-packages/sqlite_utils/db.py in add_foreign_keys(self, foreign_keys)
    616         # Have to VACUUM outside the transaction to ensure .foreign_keys property
    617         # can see the newly created foreign key.
--> 618         self.vacuum()
    619
    620     def index_foreign_keys(self):

~/jupyter-venv/lib/python3.9/site-packages/sqlite_utils/db.py in vacuum(self)
    629
    630     def vacuum(self):
--> 631         self.execute("VACUUM;")
    632
    633

~/jupyter-venv/lib/python3.9/site-packages/sqlite_utils/db.py in execute(self, sql, parameters)
    234             return self.conn.execute(sql, parameters)
    235         else:
--> 236             return self.conn.execute(sql)
    237
    238     def executescript(self, sql):

DatabaseError: database disk image is malformed
```

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/238/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 816560819 | MDU6SXNzdWU4MTY1NjA4MTk= | 240 | table.pks_and_rows_where() method returning primary keys along with the rows | simonw 9599 | closed | 0 | 7 | 2021-02-25T15:49:28Z | 2021-02-25T16:39:23Z | 2021-02-25T16:28:23Z | OWNER | Original title: Easier way to update a row returned from .rows Here's a surprisingly hard problem I ran into while trying to implement #239 - given a row returned by The problem is that the Instead, currently, you need to introspect the table and, if A utility mechanism to make this easier would be very welcome. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/240/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 803356942 | MDU6SXNzdWU4MDMzNTY5NDI= | 1218 | /usr/local/opt/python3/bin/python3.6: bad interpreter: No such file or directory | robmarkcole 11855322 | open | 0 | 1 | 2021-02-08T09:07:00Z | 2021-02-23T12:12:17Z | NONE | Error as above, however I do have python3.8 and the readme indicates this is supported. ``` (venv) (base) Robins-MacBook:datasette robin$ ls /usr/local/opt/python3/bin/ .. pip3 python3 python3.8 ``` |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1218/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 813978858 | MDU6SXNzdWU4MTM5Nzg4NTg= | 1239 | JSON filter fails if column contains spaces | simonw 9599 | closed | 0 | 1 | 2021-02-23T00:18:07Z | 2021-02-23T00:22:53Z | 2021-02-23T00:22:53Z | OWNER | Got this exception:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1239/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 811505638 | MDU6SXNzdWU4MTE1MDU2Mzg= | 1234 | Runtime support for ATTACHing multiple databases | simonw 9599 | open | 0 | 1 | 2021-02-18T22:06:47Z | 2021-02-22T21:06:28Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/283#issuecomment-781665560 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1234/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 783778672 | MDU6SXNzdWU3ODM3Nzg2NzI= | 220 | Better error message for *_fts methods against views | mhalle 649467 | closed | 0 | 3 | 2021-01-11T23:24:00Z | 2021-02-22T20:44:51Z | 2021-02-14T22:34:26Z | NONE | enable_fts and its related methods only work on tables, not views. Could those methods and possibly others move up to the Queryable superclass? |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/220/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 797651831 | MDU6SXNzdWU3OTc2NTE4MzE= | 1212 | Tests are very slow. | kbaikov 4488943 | closed | 0 | 4 | 2021-01-31T08:06:16Z | 2021-02-19T22:54:13Z | 2021-02-19T22:54:13Z | CONTRIBUTOR | Working on my PR I noticed that tests are very slow. The plain pytest run took about 37 minutes for me.

However, I could shave off about 10 minutes from that if I used pytest-xdist to parallelize execution.

I can create a PR to mention that in your documentation. This will be a simple change to add pytest-xdist to requirements and change the command to run pytest in the documentation. Does that make sense to you? After a bit more investigation it looks like pytest-xdist is not the answer. It creates a race condition for tests that try to clean the temp dir before they run. Profiling shows that most time is spent on conn.executescript(TABLES) in the make_app_client function, which makes sense. Perhaps the better approach would be to look at the app_client fixture, which is already session scoped but not used by all test cases. And/or use conn = sqlite3.connect(":memory:"), which is much faster. And/or truncate tables after each test case instead of deleting the file and re-creating them. I can take a look at which is the best approach if you give the go-ahead. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1212/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 811680502 | MDU6SXNzdWU4MTE2ODA1MDI= | 236 | --attach command line option for attaching extra databases | simonw 9599 | closed | 0 | 1 | 2021-02-19T04:38:30Z | 2021-02-19T05:10:41Z | 2021-02-19T05:08:43Z | OWNER | This will enable cross-database joins, as seen in https://github.com/simonw/datasette/issues/283 Also refs #113 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/236/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 621286870 | MDU6SXNzdWU2MjEyODY4NzA= | 113 | Syntactic sugar for ATTACH DATABASE | simonw 9599 | closed | 0 | 2 | 2020-05-19T21:10:00Z | 2021-02-19T05:09:12Z | 2021-02-19T04:56:36Z | OWNER | https://www.sqlite.org/lang_attach.html Maybe something like this:

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/113/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||
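As background for issue 113 above, here is a minimal sketch of what `ATTACH DATABASE` does, written against the standard-library `sqlite3` module rather than sqlite-utils. The alias `other`, the file name, and the `books` / `authors` tables are illustrative assumptions; the syntactic sugar the issue proposes is not shown.

```python
import os
import sqlite3
import tempfile

with tempfile.TemporaryDirectory() as tmp:
    other_path = os.path.join(tmp, "other.db")

    # Create a second database file containing one table.
    other = sqlite3.connect(other_path)
    other.execute("CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT)")
    other.execute("INSERT INTO authors VALUES (1, 'Ada')")
    other.commit()
    other.close()

    main = sqlite3.connect(":memory:")
    main.execute("CREATE TABLE books (title TEXT, author_id INTEGER)")
    main.execute("INSERT INTO books VALUES ('Notes', 1)")
    # Commit first so the ATTACH runs outside any open transaction.
    main.commit()

    # ATTACH makes the file's tables reachable under the alias "other".
    main.execute("ATTACH DATABASE ? AS other", (other_path,))
    rows = main.execute(
        "SELECT books.title, other.authors.name "
        "FROM books JOIN other.authors ON other.authors.id = books.author_id"
    ).fetchall()
    main.close()
```

Once attached, tables in the second file are addressed as `alias.table`, which is what makes cross-database joins possible.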

| 811589344 | MDU6SXNzdWU4MTE1ODkzNDQ= | 1235 | Upgrade Python version used by official Datasette Docker image | simonw 9599 | closed | 0 | 2 | 2021-02-19T00:47:40Z | 2021-02-19T01:48:31Z | 2021-02-19T01:48:30Z | OWNER | Currently uses 3.7.2: https://github.com/simonw/datasette/blob/73bed175631a79e13a521eee82f8451dd0477eb3/Dockerfile#L1 There's a security fix for Python which it would be good to ship in this image (even though I'm reasonably confident it doesn't affect Datasette): https://bugs.python.org/issue42938 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1235/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 811458446 | MDU6SXNzdWU4MTE0NTg0NDY= | 1233 | "datasette publish cloudrun" cannot publish files with spaces in their name | simonw 9599 | open | 0 | 1 | 2021-02-18T21:08:31Z | 2021-02-18T21:10:08Z | OWNER | Got this error:

```
Step 6/9 : RUN datasette inspect fixtures.db extra database.db --inspect-file inspect-data.json
 ---> Running in db9da0068592
Usage: datasette inspect [OPTIONS] [FILES]...
Try 'datasette inspect --help' for help.

Error: Invalid value for '[FILES]...': Path 'extra' does not exist.
The command '/bin/sh -c datasette inspect fixtures.db extra database.db --inspect-file inspect-data.json' returned a non-zero code: 2
ERROR
ERROR: build step 0 "gcr.io/cloud-builders/docker" failed: step exited with non-zero status: 2
```

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1233/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 808843401 | MDU6SXNzdWU4MDg4NDM0MDE= | 1226 | --port option should validate port is between 0 and 65535 | simonw 9599 | closed | 0 | 4 | 2021-02-15T22:01:33Z | 2021-02-18T18:41:27Z | 2021-02-18T18:41:27Z | OWNER | Currently throws an ugly error message:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1226/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||
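The check requested in issue 1226 above amounts to a simple range test. The sketch below is a standalone illustration; the function name `validate_port` and the error wording are assumptions, and Datasette's actual option handling may differ.

```python
def validate_port(value):
    """Return value as an int if it is a valid TCP port, else raise ValueError."""
    # Hypothetical helper for illustration; not Datasette's implementation.
    port = int(value)
    if not 0 <= port <= 65535:
        raise ValueError(f"Port must be between 0 and 65535, got {port}")
    return port


port = validate_port("8001")
```

In a Click-based command line tool, `click.IntRange(0, 65535)` is one way to express the same bound as an option type, so that the user sees a usage error instead of a traceback.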

| 811054000 | MDU6SXNzdWU4MTEwNTQwMDA= | 1230 | Vega charts are plotted only for rows on the visible page, cluster maps only for rows in the remaining pages | Kabouik 7107523 | open | 0 | 1 | 2021-02-18T12:27:02Z | 2021-02-18T15:22:15Z | NONE | I filtered a data set on some criteria and obtained 265 results, split over three pages (100, 100, 65), and realized that Vega plots are only applied to the results displayed on the current page, instead of the whole filtered data, e.g., 100 on page 1, 100 on page 2, 65 on page 3. Is there a way to force the graphs to consider all results instead of just the page, considering that pages rarely represent sensible information? Likewise, while the cluster map does show all results on the first page, if you go to next pages, it will show all remaining results except the previous page(s), e.g., 265 on page 1, 165 on page 2, 65 on page 3. In both cases, I don't see many situations where one would like to represent the data this way, and it might even lead to interpretation errors when viewing the data. Am I missing some cases where this would be best? Perhaps a clickable option to subset visual representations according to visible pages vs. display all search results would do? [Edit] Oh, I just saw the "Load all" button under the cluster map as well as the setting to alter the max number of results. So I guess this issue is only about the Vega charts. |

datasette 107914493 | issue | |||||||||

| 807174161 | MDU6SXNzdWU4MDcxNzQxNjE= | 227 | Error reading csv files with large column data | camallen 295329 | closed | 0 | 4 | 2021-02-12T11:51:47Z | 2021-02-16T11:48:03Z | 2021-02-14T21:17:19Z | NONE | Feel free to close this issue - I mostly added it for reference for future folks that run into this :) I have a CSV file with one column that has very long strings. When I try to import this file via the

```
Traceback (most recent call last):
  File "/usr/local/bin/sqlite-utils", line 10, in <module>
    sys.exit(cli())
  File "/usr/local/lib/python3.7/site-packages/click/core.py", line 829, in __call__
    return self.main(*args, **kwargs)
  File "/usr/local/lib/python3.7/site-packages/click/core.py", line 782, in main
    rv = self.invoke(ctx)
  File "/usr/local/lib/python3.7/site-packages/click/core.py", line 1259, in invoke
    return _process_result(sub_ctx.command.invoke(sub_ctx))
  File "/usr/local/lib/python3.7/site-packages/click/core.py", line 1066, in invoke
    return ctx.invoke(self.callback, **ctx.params)
  File "/usr/local/lib/python3.7/site-packages/click/core.py", line 610, in invoke
    return callback(*args, **kwargs)
  File "/usr/local/lib/python3.7/site-packages/sqlite_utils/cli.py", line 774, in insert
    default=default,
  File "/usr/local/lib/python3.7/site-packages/sqlite_utils/cli.py", line 705, in insert_upsert_implementation
    docs, pk=pk, batch_size=batch_size, alter=alter, **extra_kwargs
  File "/usr/local/lib/python3.7/site-packages/sqlite_utils/db.py", line 1852, in insert_all
    first_record = next(records)
  File "/usr/local/lib/python3.7/site-packages/sqlite_utils/cli.py", line 703, in <genexpr>
    docs = (decode_base64_values(doc) for doc in docs)
  File "/usr/local/lib/python3.7/site-packages/sqlite_utils/cli.py", line 681, in <genexpr>
    docs = (dict(zip(headers, row)) for row in reader)
_csv.Error: field larger than field limit (131072)

sqlite-utils --version
sqlite-utils, version 3.4.1
datasette --version
datasette, version 0.54
```

It appears this is a known issue reading in csv files in python and doesn't look to be modifiable through system / env vars (I may be very wrong on this). Noting that using sqlite3 Finally, I'm loving https://datasette.io/ thank you very much for an amazing tool and data ecosystem 🙇♀️ |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/227/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 688670158 | MDU6SXNzdWU2ODg2NzAxNTg= | 147 | SQLITE_MAX_VARS maybe hard-coded too low | simonwiles 96218 | open | 0 | 7 | 2020-08-30T07:26:45Z | 2021-02-15T21:27:55Z | CONTRIBUTOR | I came across this while about to open an issue and PR against the documentation for As mentioned in #145, while:

it is common that it is increased at compile time. Debian-based systems, for example, seem to ship with a version of sqlite compiled with SQLITE_MAX_VARIABLE_NUMBER set to 250,000, and I believe this is the case for homebrew installations too. In working to understand what Unfortunately, it seems that Obviously this couldn't be relied upon in |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/147/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 808771690 | MDU6SXNzdWU4MDg3NzE2OTA= | 1225 | More flexible formatting of records with CSS grid | mhalle 649467 | open | 0 | 0 | 2021-02-15T19:28:17Z | 2021-02-15T19:28:35Z | NONE | In several applications I've been experimenting with alternate formatting of datasette query results. Lately I've found that CSS grids work very well and seem quite general for formatting rows. In CSS I use grid templates to define the layout of each record and the regions for each field, hiding the fields I don't want. It's pretty flexible and looks good. It's also a great basis for highly responsive layout. I initially thought I'd only use this feature for record detail views, but now I use it for index views as well. However, there are some limitations:

* With the existing table templates, it seems that you can change the It would be helpful to at least have an official example or test that used a grid layout for records to make sure nothing in datasette breaks with it. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1225/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 807817197 | MDU6SXNzdWU4MDc4MTcxOTc= | 229 | Hitting `_csv.Error: field larger than field limit (131072)` | frosencrantz 631242 | closed | 0 | 3 | 2021-02-13T19:52:44Z | 2021-02-14T21:33:33Z | 2021-02-14T21:33:33Z | NONE | I have a csv file where one of the fields is so large it is throwing an exception with this error and stops loading:

The stack trace occurs here: https://github.com/simonw/sqlite-utils/blob/3.1/sqlite_utils/cli.py#L633 There is a way to handle this that helps: https://stackoverflow.com/questions/15063936/csv-error-field-larger-than-field-limit-131072 One issue I had with this problem was sqlite-utils only provides limited context as to where the problem line is. There is the progress bar, but that is by percent rather than by line number. It would have been helpful if it could have provided a line number. Also, it would have been useful if it had allowed the loading to continue with later lines. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/229/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 808008305 | MDU6SXNzdWU4MDgwMDgzMDU= | 230 | --sniff option for sniffing delimiters | simonw 9599 | closed | 0 | 8 | 2021-02-14T17:43:54Z | 2021-02-14T21:15:33Z | 2021-02-14T19:24:32Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/sqlite-utils/issues/228#issuecomment-778812050 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/230/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 808046597 | MDU6SXNzdWU4MDgwNDY1OTc= | 234 | .insert_all() fails if subsequent chunks contain additional columns | simonw 9599 | closed | 0 | 1 | 2021-02-14T21:01:51Z | 2021-02-14T21:03:40Z | 2021-02-14T21:03:40Z | OWNER | Reported by @nieuwenhoven in #225 along with a proposed fix. |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/234/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 808036774 | MDU6SXNzdWU4MDgwMzY3NzQ= | 232 | Run tests against Windows in GitHub Actions | simonw 9599 | closed | 0 | 0 | 2021-02-14T20:09:45Z | 2021-02-14T20:39:55Z | 2021-02-14T20:39:55Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/sqlite-utils/issues/225#issuecomment-778834504 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/232/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 808028757 | MDU6SXNzdWU4MDgwMjg3NTc= | 231 | limit=X, offset=Y parameters for more Python methods | simonw 9599 | closed | 0 | 2 | 2021-02-14T19:31:23Z | 2021-02-14T20:03:08Z | 2021-02-14T20:03:08Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/sqlite-utils/issues/224#issuecomment-778828495 |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/231/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 806743116 | MDU6SXNzdWU4MDY3NDMxMTY= | 1220 | Installing datasette via docker: Path 'fixtures.db' does not exist | aborruso 30607 | closed | 0 | 4 | 2021-02-11T21:09:14Z | 2021-02-12T21:35:17Z | 2021-02-12T21:35:17Z | NONE | Hi, If I run

I have

If I run What's my error? Thank you |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1220/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 797097140 | MDU6SXNzdWU3OTcwOTcxNDA= | 60 | Use Data from SQLite in other commands | daniel-butler 22578954 | open | 0 | 3 | 2021-01-29T18:35:52Z | 2021-02-12T18:29:43Z | CONTRIBUTOR | As a total beginner here, how could you access data from the SQLite table to run other commands? What I am thinking is I want to get all the repos in an organization, then, using the repo list, pull all the commit messages for each repo. I love this project by the way! |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/60/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 803338729 | MDU6SXNzdWU4MDMzMzg3Mjk= | 33 | photo-to-sqlite: command not found | robmarkcole 11855322 | open | 0 | 4 | 2021-02-08T08:42:57Z | 2021-02-12T15:00:44Z | NONE | Having installed in a venv I get: ``` (venv) (base) Robins-MacBook:datasette robin$ photo-to-sqlite apple-photos photos.db -bash: photo-to-sqlite: command not found ``` |

dogsheep-photos 256834907 | issue | {

"url": "https://api.github.com/repos/dogsheep/dogsheep-photos/issues/33/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 743297582 | MDU6SXNzdWU3NDMyOTc1ODI= | 7 | evernote-to-sqlite on windows 10 give this error: TypeError: insert() got an unexpected keyword argument 'replace' | martinvanwieringen 42387931 | closed | 0 | 1 | 2020-11-15T16:57:28Z | 2021-02-11T22:13:17Z | 2021-02-11T22:13:17Z | NONE | Running evernote-to-sqlite 0.2 on Windows 10. Command: evernote-to-sqlite enex evernote.db MyNotes.enex I get the following error: File "C:\Users\marti\AppData\Roaming\Python\Python38\site-packages\evernote_to_sqlite\utils.py", line 46, in save_note note_id = db["notes"].insert(row, hash_id="id", replace=True, alter=True).last_pk TypeError: insert() got an unexpected keyword argument 'replace' Removing replace=True leads to the error below: note_id = db["notes"].insert(row, hash_id="id", alter=True).last_pk File "C:\Users\marti\AppData\Roaming\Python\Python38\site-packages\sqlite_utils\db.py", line 924, in insert return self.insert_all( File "C:\Users\marti\AppData\Roaming\Python\Python38\site-packages\sqlite_utils\db.py", line 1046, in insert_all result = self.db.conn.execute(sql, values) sqlite3.IntegrityError: UNIQUE constraint failed: notes.id |

evernote-to-sqlite 303218369 | issue | {

"url": "https://api.github.com/repos/dogsheep/evernote-to-sqlite/issues/7/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 748372469 | MDU6SXNzdWU3NDgzNzI0Njk= | 9 | ParseError: undefined entity š | mkorosec 4028322 | closed | 0 | 1 | 2020-11-22T23:04:35Z | 2021-02-11T22:10:55Z | 2021-02-11T22:10:55Z | CONTRIBUTOR | I encountered a parse error if the enex file contained š or Run command: evernote-to-sqlite enex evernote.db evernote.enex

Workaround:

|

evernote-to-sqlite 303218369 | issue | {

"url": "https://api.github.com/repos/dogsheep/evernote-to-sqlite/issues/9/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 792851444 | MDU6SXNzdWU3OTI4NTE0NDQ= | 11 | XML parse error | dskrad 3613583 | closed | 0 | 2 | 2021-01-24T17:38:54Z | 2021-02-11T21:18:58Z | 2021-02-11T21:18:48Z | NONE | I am on Windows 10 using Windows Subsystem for Linux, Python 3.8. I installed evernote-to-sqlite via pipx (in a venv). I tried using enex files from the latest version of Evernote for Windows (10.6.9 which only lets you export 50 notes at a time) and from Legacy Evernote (6.25.2.9198 which lets you export all your notes at once). The enex file from latest evernote gives this error: The enex file from Legacy Evernote gives this error: |

evernote-to-sqlite 303218369 | issue | {

"url": "https://api.github.com/repos/dogsheep/evernote-to-sqlite/issues/11/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 792890765 | MDU6SXNzdWU3OTI4OTA3NjU= | 1200 | ?_size=10 option for the arbitrary query page would be useful | simonw 9599 | open | 0 | 2 | 2021-01-24T20:55:35Z | 2021-02-11T03:13:59Z | OWNER | https://latest.datasette.io/fixtures?sql=select+*+from+compound_three_primary_keys&_size=10 - Would also be good if it persisted in a hidden form field. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1200/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 803929694 | MDU6SXNzdWU4MDM5Mjk2OTQ= | 1219 | Try profiling Datasette using scalene | simonw 9599 | open | 0 | 2 | 2021-02-08T20:37:06Z | 2021-02-08T22:13:00Z | OWNER | https://github.com/emeryberger/scalene looks like an interesting profiling tool. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1219/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 801780625 | MDU6SXNzdWU4MDE3ODA2MjU= | 9 | SSL Error | jfeiwell 12669260 | open | 0 | 2 | 2021-02-05T02:12:56Z | 2021-02-07T18:45:04Z | NONE | Here's the error I get when running

Does this require python 3? |

pocket-to-sqlite 213286752 | issue | {

"url": "https://api.github.com/repos/dogsheep/pocket-to-sqlite/issues/9/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 802583450 | MDU6SXNzdWU4MDI1ODM0NTA= | 226 | 3.4 release is broken - includes a rogue line | simonw 9599 | closed | 0 | 0 | 2021-02-06T02:08:01Z | 2021-02-06T02:10:26Z | 2021-02-06T02:10:26Z | OWNER | I started seeing weird errors, caused by this line: https://github.com/simonw/sqlite-utils/blob/f8010ca78fed8c5fca6cde19658ec09fdd468420/sqlite_utils/cli.py#L1-L3 That was added by accident in 1b666f9315d4ea6bb332b2e75e48480c26100199 I'm surprised the tests didn't catch this! |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/226/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 788527932 | MDU6SXNzdWU3ODg1Mjc5MzI= | 223 | --delimiter option for CSV import | simonw 9599 | closed | 0 | 2 | 2021-01-18T20:25:03Z | 2021-02-06T01:39:47Z | 2021-02-06T01:34:54Z | OWNER |

Would be useful to be able to do this:

|

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/223/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 796234313 | MDU6SXNzdWU3OTYyMzQzMTM= | 1210 | Immutable Database w/ Canned Queries | heyarne 525780 | closed | 0 | 2 | 2021-01-28T18:08:29Z | 2021-02-05T11:30:34Z | 2021-02-05T11:30:34Z | NONE | I have a database that I only want to read from; when instructing datasette to treat the database as immutable my defined canned queries disappear. Are these two features incompatible or have I hit an unintended bug? Thanks for datasette in any way, it's a joy to use! |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1210/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 796736607 | MDU6SXNzdWU3OTY3MzY2MDc= | 56 | Not all quoted statuses get fetched? | gsajko 42315895 | closed | 0 | 3 | 2021-01-29T09:48:44Z | 2021-02-03T10:36:36Z | 2021-02-03T10:36:36Z | NONE |

In my database I have 13300 quote tweets, but eta 3600 have I fetched some of them using |

twitter-to-sqlite 206156866 | issue | {

"url": "https://api.github.com/repos/dogsheep/twitter-to-sqlite/issues/56/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 799693777 | MDU6SXNzdWU3OTk2OTM3Nzc= | 1214 | Re-submitting filter form duplicates _x querystring arguments | simonw 9599 | closed | 0 | 3 | 2021-02-02T21:13:35Z | 2021-02-02T21:28:53Z | 2021-02-02T21:21:13Z | OWNER | Really nasty bug, caused by #1194 fix in 07e163561592c743e4117f72102fcd350a600909 Navigate to this page: https://github-to-sqlite.dogsheep.net/github/labels?_search=help&_sort=id Click "Apply" to submit the form and the resulting URL is https://github-to-sqlite.dogsheep.net/github/labels?_search=help&_sort=id&_search=help&_sort=id That's because the (truncated) HTML for the form looks like this:

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1214/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 799663959 | MDU6SXNzdWU3OTk2NjM5NTk= | 1213 | gzip support for HTML (and JSON) responses | simonw 9599 | open | 0 | 3 | 2021-02-02T20:36:28Z | 2021-02-02T20:41:55Z | OWNER | This page https://datasette-tiles-demo.datasette.io/San_Francisco/tiles is 2MB because of all of the base64 images. Gzipped it's 1.5MB. Since Datasette is usually deployed without a frontend gzipping proxy, Datasette itself needs to solve for this. Gzipping everything won't work because some endpoints - the all-rows CSV endpoint and the download-database endpoint - are streaming and hence can't be buffered-and-gzipped. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1213/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 797784080 | MDU6SXNzdWU3OTc3ODQwODA= | 62 | Stargazers and workflows commands always require an auth file when using GITHUB_TOKEN | frosencrantz 631242 | open | 0 | 0 | 2021-01-31T18:56:05Z | 2021-01-31T18:56:05Z | CONTRIBUTOR | Requested fix in https://github.com/dogsheep/github-to-sqlite/pull/59 The stargazers and workflows commands always require an auth file, even when using a |

github-to-sqlite 207052882 | issue | {

"url": "https://api.github.com/repos/dogsheep/github-to-sqlite/issues/62/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 797728929 | MDU6SXNzdWU3OTc3Mjg5Mjk= | 8 | QUESTION: extract full text | darribas 417363 | open | 0 | 0 | 2021-01-31T14:50:10Z | 2021-01-31T14:50:10Z | NONE | This may be solved or a feature already, but I couldn't figure it out, is it possible to extract and store also full text from the saved pages? The same way that Pocket parses the text, it'd be amazing to be able to store (and thus make searchable later) the text. Thank you very much for the project, it's such an amazing idea! |

pocket-to-sqlite 213286752 | issue | {

"url": "https://api.github.com/repos/dogsheep/pocket-to-sqlite/issues/8/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 793881756 | MDU6SXNzdWU3OTM4ODE3NTY= | 1207 | Document the Datasette(..., pdb=True) testing pattern | simonw 9599 | closed | 0 | 1 | 2021-01-26T02:48:10Z | 2021-01-29T02:37:19Z | 2021-01-29T02:12:34Z | OWNER | If you're writing tests for a Datasette plugin and you get a 500 error from inside Datasette, you can cause Datasette to open a PDB session within the application server code by doing this:

You'll need to run |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1207/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 795367402 | MDU6SXNzdWU3OTUzNjc0MDI= | 1209 | v0.54 500 error from sql query in custom template; code worked in v0.53; found a workaround | jrdmb 11788561 | open | 0 | 1 | 2021-01-27T19:08:13Z | 2021-01-28T23:00:27Z | NONE | v0.54 500 error in sql query template; code worked in v0.53; found a workaround schema: Live example of correctly rendered template in v0.53: https://cosmotalks-cy6xkkbezq-uw.a.run.app/cosmotalks/talks/1 Description of problem: I needed 'sql select' code in a custom row-mydatabase-mytable.html template to look up the series name for a foreign key integer value in the talks table. So The code below worked perfectly in v0.53 (just the relevant sql statement part is shown; full code is here):

In v0.54, that code resulted in a 500 error with a 'no such table series' message. A second query in that template also did not work but the above is fully illustrative of the problem. All templates were up-to-date along with datasette v0.54. Workaround: After fiddling around with trying different things, what worked was the syntax from Querying a different database from the datasette-template-sql github repo to add the database name to the sql statement:

Though this was found to work, it should not be necessary to add |

datasette 107914493 | issue | |||||||||

| 793027837 | MDU6SXNzdWU3OTMwMjc4Mzc= | 1205 | Rename /:memory: to /_memory | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 3 | 2021-01-25T05:04:56Z | 2021-01-28T22:55:02Z | 2021-01-28T22:51:42Z | OWNER | For consistency with This change would need to be in before Datasette 1.0. I could land it earlier and set up redirects from the old URLs though. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1205/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 770448622 | MDU6SXNzdWU3NzA0NDg2MjI= | 1151 | Database class mechanism for cross-connection in-memory databases | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 11 | 2020-12-17T23:25:43Z | 2021-01-26T19:07:44Z | 2020-12-18T01:01:26Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1150#issuecomment-747768112 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1151/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

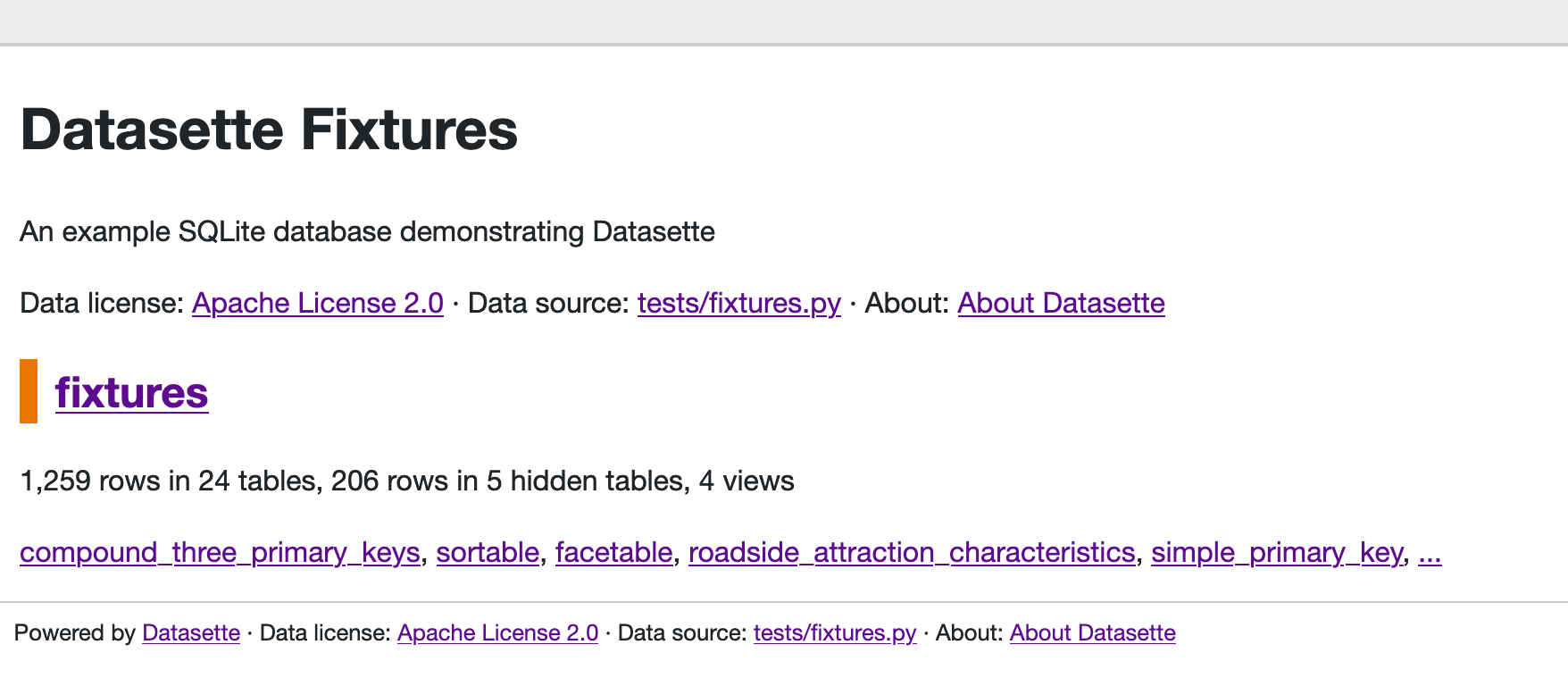

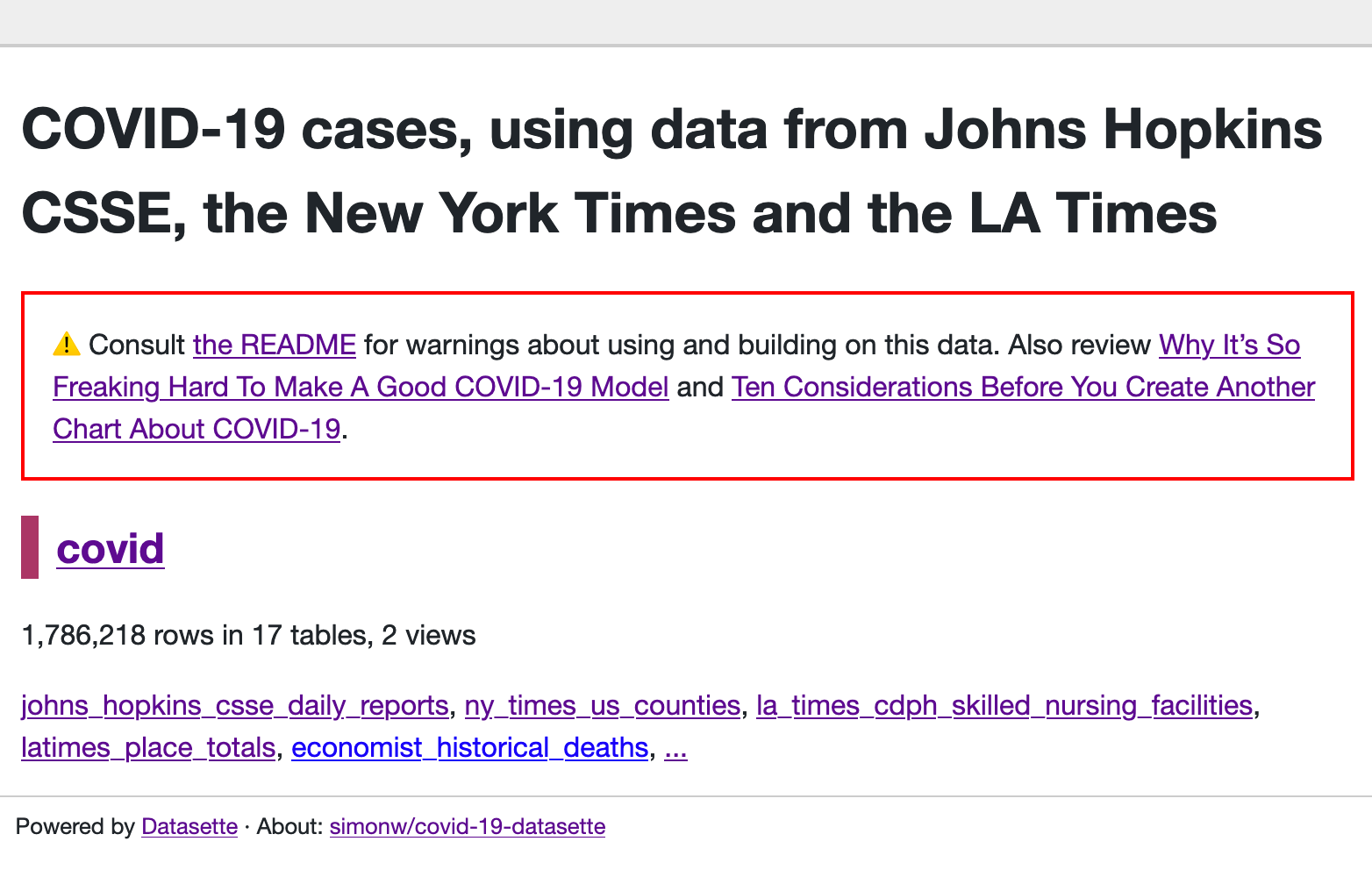

| 714377268 | MDU6SXNzdWU3MTQzNzcyNjg= | 991 | Redesign application homepage | simonw 9599 | open | 0 | 7 | 2020-10-04T18:48:45Z | 2021-01-26T19:06:36Z | OWNER | Most Datasette instances only host a single database, but the current homepage design assumes that it should leave plenty of space for multiple databases:

Reconsider this design - should the default show more information? The Covid-19 Datasette homepage looks particularly sparse I think: https://covid-19.datasettes.com/

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/991/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 792904595 | MDU6SXNzdWU3OTI5MDQ1OTU= | 1201 | Release notes for Datasette 0.54 | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 5 | 2021-01-24T21:22:28Z | 2021-01-25T17:42:21Z | 2021-01-25T17:42:21Z | OWNER | These will incorporate the release notes from the alpha, much expanded: https://github.com/simonw/datasette/releases/tag/0.54a0 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1201/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 780278550 | MDU6SXNzdWU3ODAyNzg1NTA= | 1179 | Make original path available to render hooks | simonw 9599 | open | 0 | 8 | 2021-01-06T08:31:45Z | 2021-01-25T04:44:33Z | OWNER | https://github.com/simonw/datasette-export-notebook/blob/0.1/datasette_export_notebook/init.py

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1179/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 712260429 | MDU6SXNzdWU3MTIyNjA0Mjk= | 983 | JavaScript plugin hooks mechanism similar to pluggy | simonw 9599 | open | 0 | 47 | 2020-09-30T20:32:43Z | 2021-01-25T04:43:58Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/981#issuecomment-701616922 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/983/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 789336592 | MDU6SXNzdWU3ODkzMzY1OTI= | 1195 | view_name = "query" for the query page | simonw 9599 | open | 0 | 4 | 2021-01-19T20:21:36Z | 2021-01-25T04:40:08Z | OWNER | It uses |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1195/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 712984738 | MDU6SXNzdWU3MTI5ODQ3Mzg= | 987 | Documented HTML hooks for JavaScript plugin authors | simonw 9599 | open | 0 | 7 | 2020-10-01T16:10:14Z | 2021-01-25T04:00:03Z | OWNER | In #981 I added |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/987/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 788447787 | MDU6SXNzdWU3ODg0NDc3ODc= | 1194 | ?_size= argument is not persisted by hidden form fields in the table filters | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 3 | 2021-01-18T17:41:52Z | 2021-01-25T03:10:23Z | 2021-01-25T03:10:23Z | OWNER | Click "Apply" on https://covid-19.datasettes.com/covid/ny_times_us_counties?_size=1000&county__exact=San+Francisco&state__exact=California&_sort_desc=date#g.mark=line&g.x_column=date&g.x_type=temporal&g.y_column=cases&g.y_type=quantitative and the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1194/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 777145954 | MDU6SXNzdWU3NzcxNDU5NTQ= | 1167 | Add Prettier to contributing documentation | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 3 | 2020-12-31T22:00:55Z | 2021-01-25T02:01:19Z | 2021-01-25T01:58:28Z | OWNER | Following #1166 - the docs at https://docs.datasette.io/en/stable/contributing.html should include a section about JavaScript, and it should document how to run Prettier. I run it in VS Code but it can be run on the command-line too: |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1167/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 771208009 | MDU6SXNzdWU3NzEyMDgwMDk= | 1154 | Documentation for new _internal database and tables | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 2 | 2020-12-18T22:34:52Z | 2021-01-25T00:09:22Z | 2021-01-25T00:08:41Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1150#issuecomment-748352106 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1154/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 792931244 | MDU6SXNzdWU3OTI5MzEyNDQ= | 1202 | Documentation convention for marking unstable APIs. | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 2 | 2021-01-24T23:47:18Z | 2021-01-25T00:01:02Z | 2021-01-25T00:01:02Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1154#issuecomment-766462197 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1202/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 785588942 | MDU6SXNzdWU3ODU1ODg5NDI= | 1187 | extra_body_script() support for script type="module" | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2021-01-14T02:01:47Z | 2021-01-24T21:21:44Z | 2021-01-14T02:14:39Z | OWNER | Follows #1186. The Relevant docs: https://docs.datasette.io/en/stable/plugin_hooks.html#extra-body-script-template-database-table-columns-view-name-request-datasette |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1187/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 785573793 | MDU6SXNzdWU3ODU1NzM3OTM= | 1186 | script type="module" support | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2021-01-14T01:17:47Z | 2021-01-24T21:21:41Z | 2021-01-14T01:50:58Z | OWNER | Custom JavaScript can be loaded in |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1186/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 784628163 | MDU6SXNzdWU3ODQ2MjgxNjM= | 1185 | "Statement may not contain PRAGMA" error is not strictly true | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 3 | 2021-01-12T22:07:10Z | 2021-01-24T21:21:37Z | 2021-01-12T22:26:26Z | OWNER | It says "Statement may not contain PRAGMA" - but that's not actually true. Datasette has an allow-list of PRAGMA that are OK - in this case there was a typo in So the error message is misleading. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1185/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 783714076 | MDU6SXNzdWU3ODM3MTQwNzY= | 1184 | request.full_path property | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 0 | 2021-01-11T21:21:58Z | 2021-01-24T21:21:16Z | 2021-01-11T21:34:47Z | OWNER |

Originally posted by @simonw in https://github.com/simonw/datasette/issues/1179#issuecomment-755495387 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1184/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 782692159 | MDU6SXNzdWU3ODI2OTIxNTk= | 1182 | Retire "Ecosystem" page in favour of datasette.io/plugins and /tools | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 3 | 2021-01-09T21:54:47Z | 2021-01-24T21:21:09Z | 2021-01-09T22:17:28Z | OWNER | https://docs.datasette.io/en/stable/ecosystem.html is no longer needed. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1182/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 780267857 | MDU6SXNzdWU3ODAyNjc4NTc= | 1178 | Use force_https_urls on when deploying with Cloud Run | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 9 | 2021-01-06T08:20:55Z | 2021-01-24T21:21:05Z | 2021-01-06T18:24:47Z | OWNER | Original title: datasette.absolute_url() should return https:// not http:// on Cloud Run https://latest-with-plugins.datasette.io/github/issue_comments.Notebook?_labels=on currently provides |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1178/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 772438273 | MDU6SXNzdWU3NzI0MzgyNzM= | 1157 | Use time.perf_counter() instead of time.time() to measure performance | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2020-12-21T20:21:41Z | 2021-01-24T21:20:42Z | 2020-12-21T21:49:20Z | OWNER | I do that in a bunch of places: https://ripgrep.datasette.io/-/ripgrep?pattern=time%28%29&literal=on&glob=datasette%2F%2A%2A https://docs.python.org/3/library/time.html#time.perf_counter

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1157/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 772408750 | MDU6SXNzdWU3NzI0MDg3NTA= | 1156 | Rename _schemas to _internal | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 1 | 2020-12-21T19:27:58Z | 2021-01-24T21:20:39Z | 2020-12-21T19:51:18Z | OWNER | I like Refs #1154 #1150 #1155 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1156/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 742011049 | MDU6SXNzdWU3NDIwMTEwNDk= | 1091 | .json and .csv exports fail to apply base_url | simonw 9599 | closed | 0 | Datasette 0.54 6346396 | 22 | 2020-11-12T23:45:16Z | 2021-01-24T21:20:24Z | 2021-01-09T22:19:29Z | OWNER |

Originally posted by @tballison in https://github.com/simonw/datasette/issues/865#issuecomment-726385422 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1091/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 760605882 | MDU6SXNzdWU3NjA2MDU4ODI= | 1135 | Feature: --create option to create database file if it does not yet exist | simonw 9599 | closed | 0 | 0 | 2020-12-09T19:23:58Z | 2021-01-24T21:19:39Z | 2020-12-09T19:45:52Z | OWNER | I'd like to be able to tell people to run the following in the Datasette documentation to get started: This would give them a local Datasette instance with the ability to drag-and-drop CSV files directly into it. Just one catch: I don't want to have to talk them through creating an empty SQLite database file. So I want to add a new |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1135/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 791381623 | MDU6SXNzdWU3OTEzODE2MjM= | 1197 | DB size limit for publishing with Heroku | mtdukes 1186275 | closed | 0 | 1 | 2021-01-21T18:08:43Z | 2021-01-24T20:53:44Z | 2021-01-24T20:53:44Z | NONE | Hello, I tried searching for this, but can't seem to get a great answer: Does anybody know the size limit for databases deploying to Heroku? The files I'm working with are pretty large, but I might be able to pare them down if I have a limit in mind. I'm getting the following error when running

|

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1197/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 792625812 | MDU6SXNzdWU3OTI2MjU4MTI= | 1198 | Plugin testing documentation on using pytest-httpx | simonw 9599 | closed | 0 | 1 | 2021-01-23T18:46:16Z | 2021-01-24T20:40:38Z | 2021-01-24T20:38:43Z | OWNER | I keep on having to figure this out: if you use the https://pypi.org/project/pytest-httpx/ fixture to write tests against mocked external APIs, they will fail because that module will break Datasette's own You can fix this using:

See https://github.com/simonw/datasette-indieauth/blob/1.2/tests/test_indieauth.py I can add this tip to the https://docs.datasette.io/en/stable/testing_plugins.html page. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1198/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 725996507 | MDU6SXNzdWU3MjU5OTY1MDc= | 1036 | Make it possible to download BLOB data from the Datasette UI | simonw 9599 | closed | 0 | 0.51 6026070 | 16 | 2020-10-20T22:47:56Z | 2021-01-18T17:45:00Z | 2020-10-25T00:14:52Z | OWNER | Currently you can only extract binary BLOB data as base64-encoded JSON, which is not user friendly at all. It should always be possible for end-users to get the binary data out. I'm worried about XSS vulnerabilities here, but hopefully sending |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1036/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 743400216 | MDU6SXNzdWU3NDM0MDAyMTY= | 11 | Error thrown: sqlite3.OperationalError: table users has no column named lastName | beaugunderson 61791 | closed | 0 | 2 | 2020-11-16T01:21:18Z | 2021-01-18T04:35:22Z | 2021-01-18T04:35:22Z | NONE | Just installed

|

swarm-to-sqlite 205429375 | issue | {

"url": "https://api.github.com/repos/dogsheep/swarm-to-sqlite/issues/11/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 787900412 | MDU6SXNzdWU3ODc5MDA0MTI= | 222 | .m2m() should accept alter=True parameter | simonw 9599 | closed | 0 | 0 | 2021-01-18T04:15:43Z | 2021-01-18T04:26:10Z | 2021-01-18T04:26:10Z | OWNER | sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/222/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||||

| 548591089 | MDU6SXNzdWU1NDg1OTEwODk= | 657 | Allow creation of virtual tables at startup | dazzag24 1055831 | open | 0 | 4 | 2020-01-12T16:10:55Z | 2021-01-15T20:24:35Z | NONE | Hi, I've been experimenting with SQLite reading from huge datasets using this excellent Parquet extension from @cldellow. https://cldellow.com/2018/06/22/sqlite-parquet-vtable.html https://github.com/cldellow/sqlite-parquet-vtable This works really well, but I was keen to see if I could combine datasette with this. Having previously experimented with the spatialite extension I knew that datasette supports loading extensions in the underlying sqlite instance. However I hit a blocker as the current design only allows SELECT statements to be executed and so I am unable to execute the crucial CREATE VIRTUAL TABLE ......... command that is required to load the data from the parquet file into the table. It seems like this would be a simple-ish change, but I don't know enough about the architecture of datasette to start implementing this myself. Could this be done as a datasette plugin, or would this require more fundamental changes at initialisation time? My thoughts are that something at init time could detect that the user was loading a .parquet file and then switch to a mode where it loads that via the "CREATE VIRTUAL TABLE..." rather than loading the .db file in the default case? I'm happy to contribute code and testing, I just need some pointers on the best approach. Thanks Darren |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/657/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 787104850 | MDU6SXNzdWU3ODcxMDQ4NTA= | 1192 | Form Plugin for in-depth Datasette Querying | tomershvueli 1024355 | open | 0 | 0 | 2021-01-15T18:24:50Z | 2021-01-15T18:24:50Z | NONE | I envision a sort of easy-to-build form plugin that would be able to map a user's inputs to different fields/columns in a Datasette database. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1192/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 783910901 | MDU6SXNzdWU3ODM5MTA5MDE= | 221 | .add_missing_columns() does not take case insensitivity into account | simonw 9599 | closed | 0 | 0 | 2021-01-12T05:01:00Z | 2021-01-12T23:17:33Z | 2021-01-12T23:17:33Z | OWNER | SQLite columns are case insensitive - but the |

sqlite-utils 140912432 | issue | {

"url": "https://api.github.com/repos/simonw/sqlite-utils/issues/221/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | ||||||

| 782708469 | MDU6SXNzdWU3ODI3MDg0Njk= | 1183 | Take advantage of sqlite-utils cached table counts, if available | simonw 9599 | open | 0 | 2 | 2021-01-09T23:51:48Z | 2021-01-12T02:42:08Z | OWNER | sqlite-utils 3.2 now has a mechanism for creating a https://sqlite-utils.datasette.io/en/stable/python-api.html#cached-table-counts-using-triggers |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1183/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 778450486 | MDU6SXNzdWU3Nzg0NTA0ODY= | 1171 | GitHub Actions workflow to build and sign macOS binary executables | simonw 9599 | open | 0 | 8 | 2021-01-04T23:36:59Z | 2021-01-07T19:36:00Z | OWNER | Using PyInstaller, as explored in #93 and https://til.simonwillison.net/python/packaging-pyinstaller The bigger challenge will be the code signing bit. I'll need an Apple Developer account ($99/year) and some extensive CI fiddling. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1171/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 780767542 | MDU6SXNzdWU3ODA3Njc1NDI= | 1180 | Lazily evaluated arguments for call_with_supported_arguments | simonw 9599 | open | 0 | 2 | 2021-01-06T18:43:34Z | 2021-01-07T18:56:24Z | OWNER | While building https://github.com/simonw/datasette-export-notebook I thought it would be nice to be able to show a count of exported records on the page "This will stream 10,422 records to your notebook". None of the documented arguments on https://docs.datasette.io/en/0.53/plugin_hooks.html#register-output-renderer-datasette expose the count. The closest is So, idea: if your defined render function takes a To implement this I would need to teach the |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1180/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||

| 497170355 | MDU6SXNzdWU0OTcxNzAzNTU= | 576 | Documented internals API for use in plugins | simonw 9599 | closed | 0 | Datasette 1.0 3268330 | 10 | 2019-09-23T15:28:50Z | 2021-01-05T23:12:51Z | 2021-01-05T23:12:37Z | OWNER | Quite a few of the plugin hooks make a This means it should provide a documented, stable API so that plugin authors can rely on it. |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/576/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

completed | |||||

| 778682317 | MDU6SXNzdWU3Nzg2ODIzMTc= | 1173 | GitHub Actions workflow to build manylinux binary | simonw 9599 | open | 0 | 1 | 2021-01-05T07:41:11Z | 2021-01-05T07:41:43Z | OWNER | Refs #1171 and #93 |

datasette 107914493 | issue | {

"url": "https://api.github.com/repos/simonw/datasette/issues/1173/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} |

||||||||